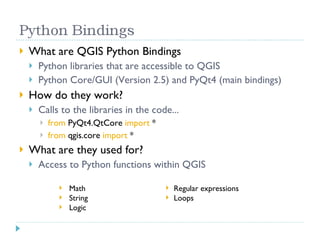

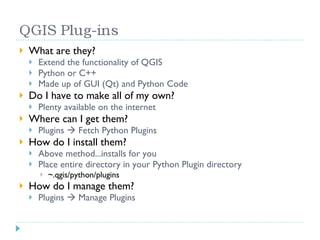

This presentation introduces Quantum GIS (QGIS) and highlights how it can be customized through several programming languages and plugins. It emphasizes the benefits of using Python to extend QGIS functionality and showcases available plugins. It closes with training opportunities and links to further QGIS and Python resources.

![How to get more training](https://image.slidesharecdn.com/leveragingosgiswithpythonqgis-12543516495891-phpapp03/85/Leveraging-Open-Source-GIS-with-Python-A-QGIS-Approach-14-320.jpg)

How to get more training:

- Contact Carteryx @ [email_address] or 778.668.5025
- More training classes to come (watch http://www.carteryx.com)
- Pre-defined and personalized training

Links:

- http://www.qgis.org
- http://forum.qgis.org
- http://blog.qgis.org/
- http://wiki.qgis.org/qgiswiki
- http://www.python.org
- http://www.diveintopython.org/