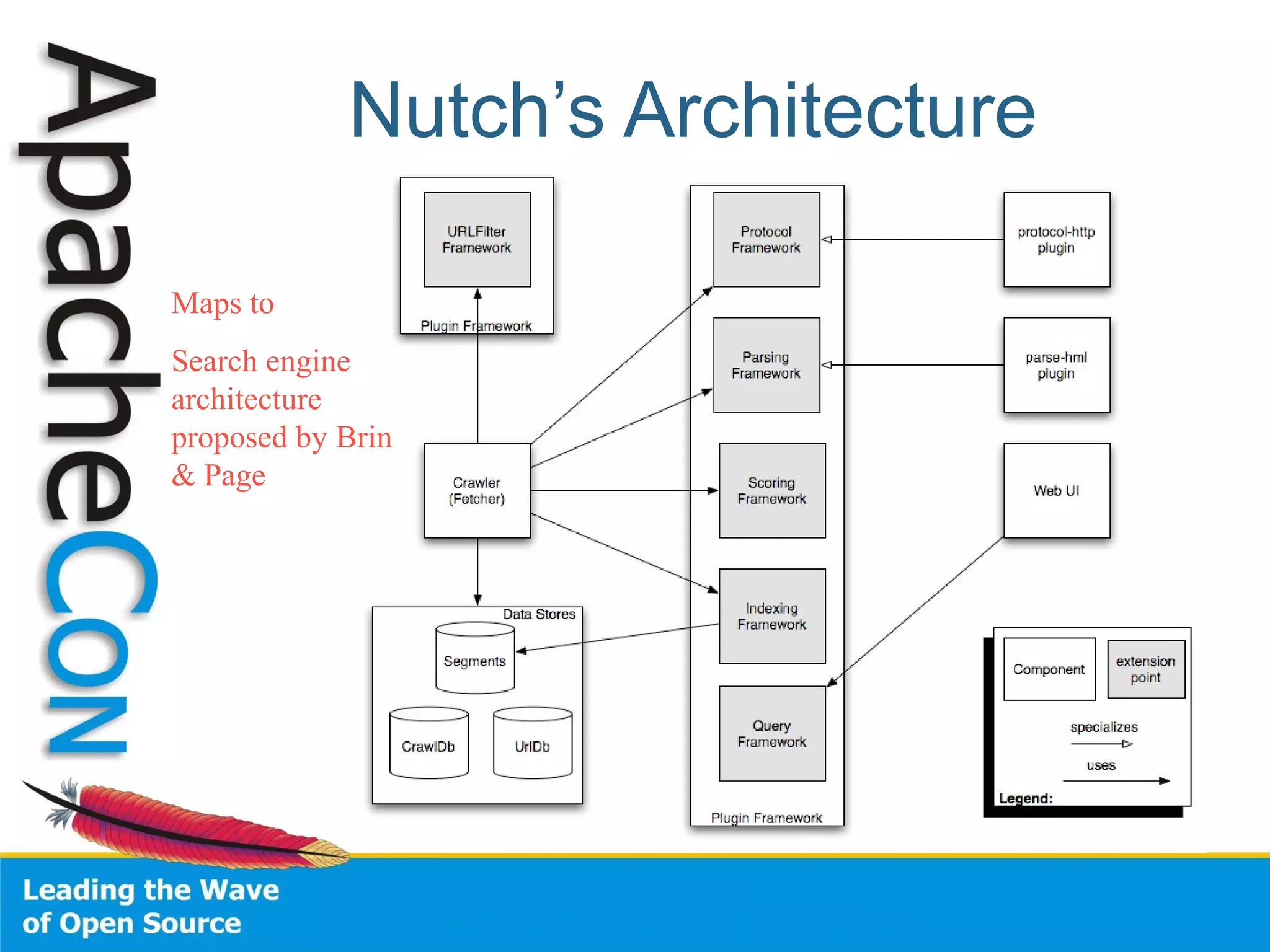

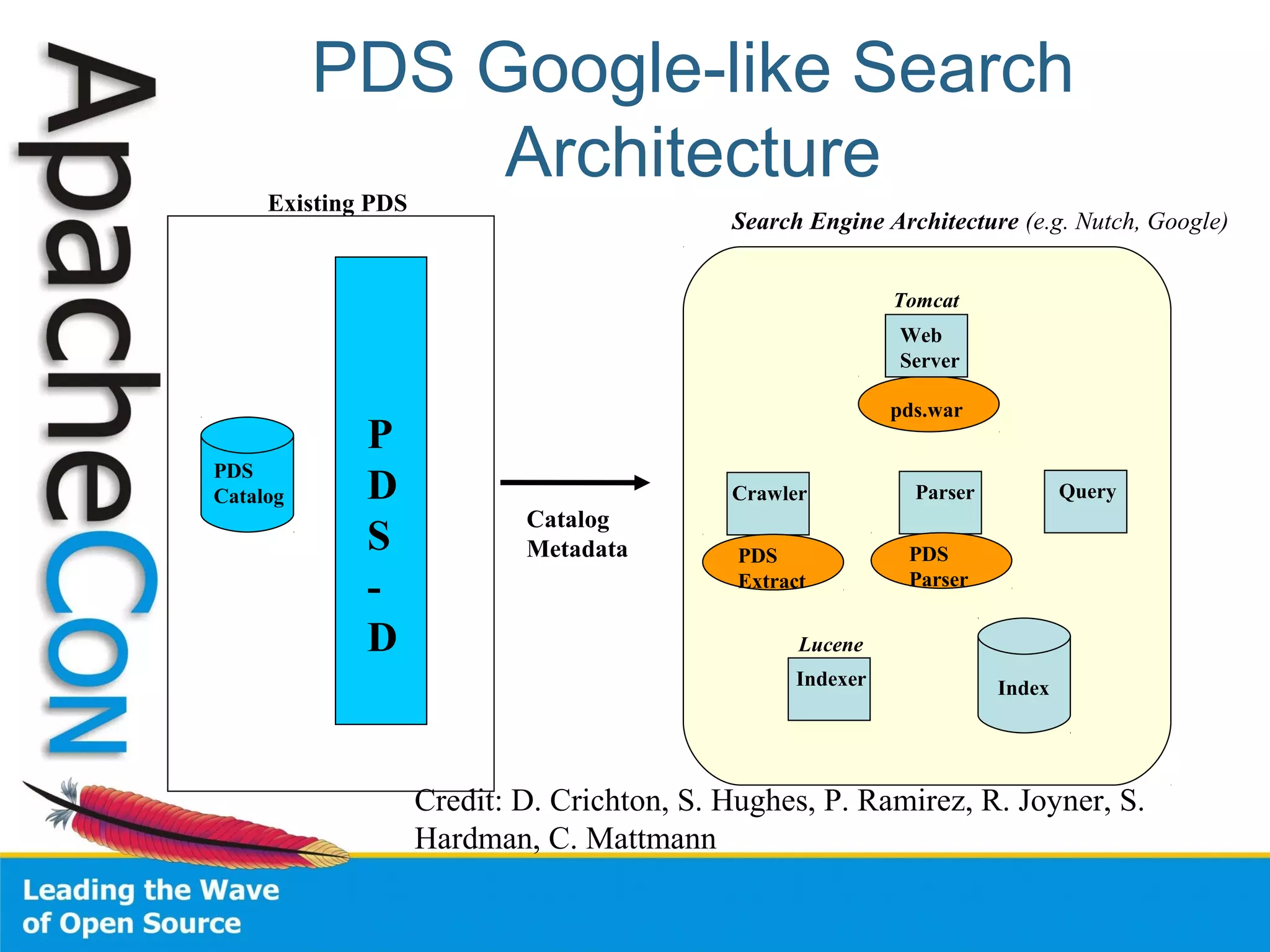

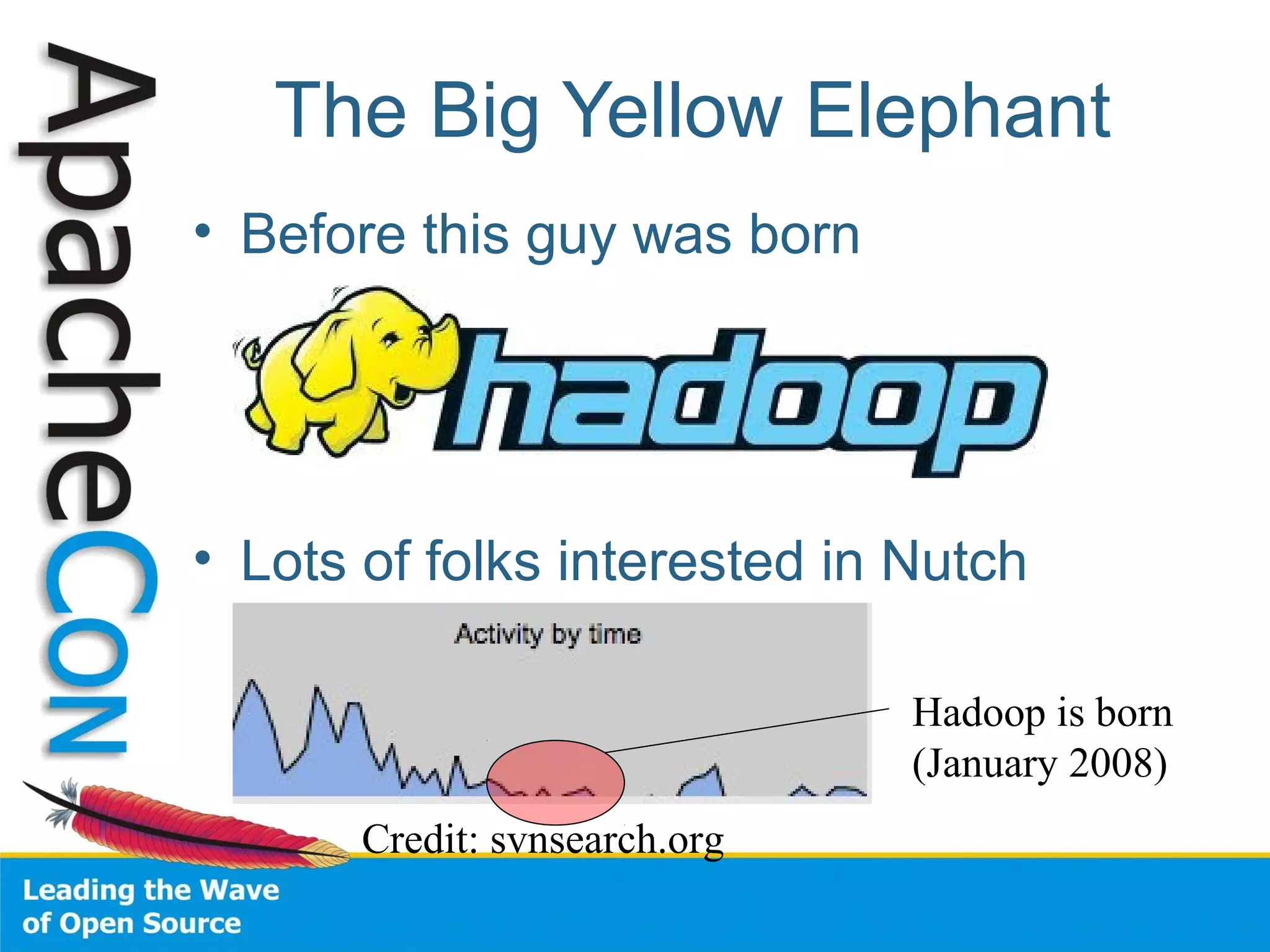

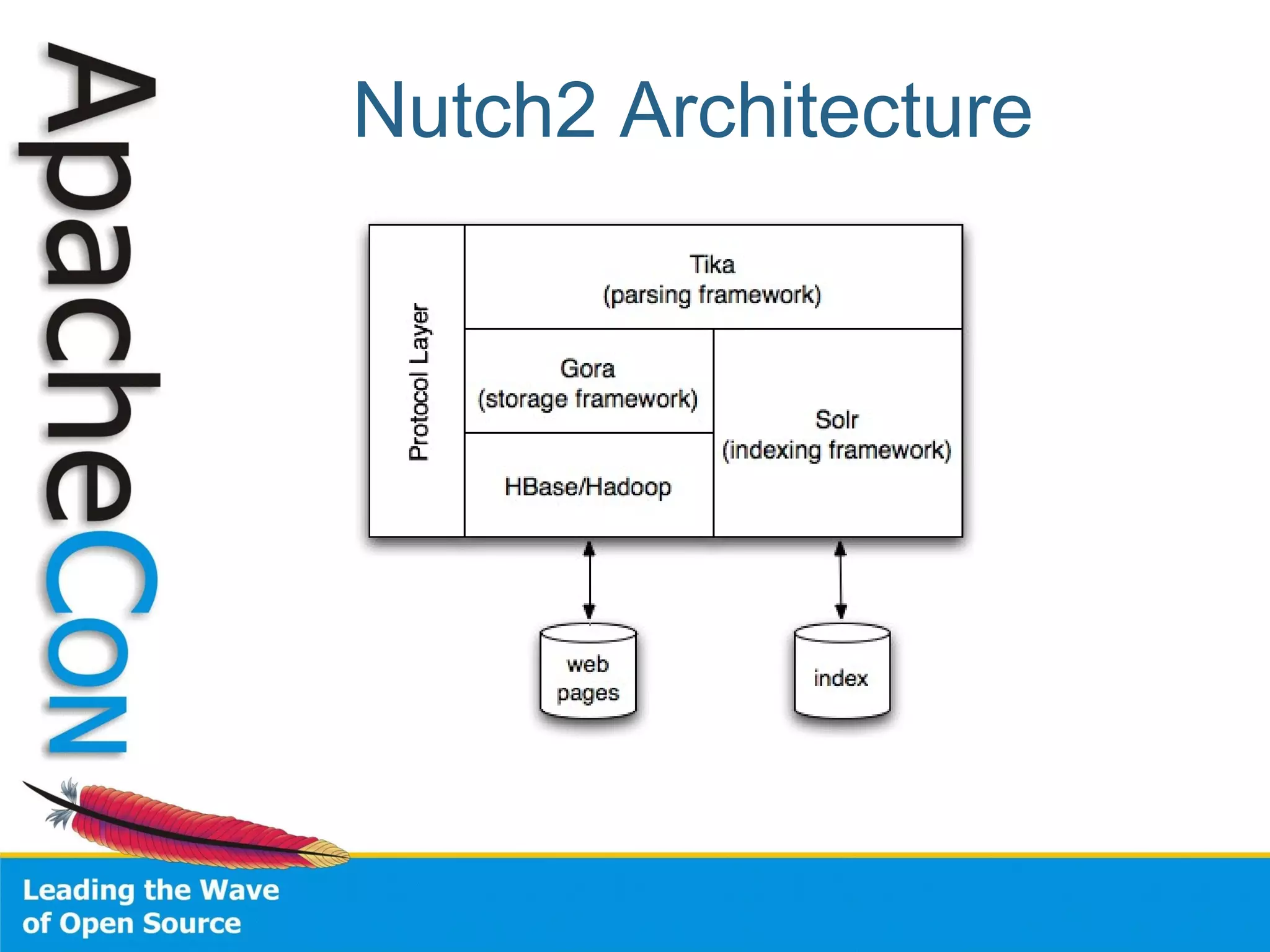

This document summarizes the history and development of the Nutch web search engine project. It traces how Nutch evolved from its original version into one built on Hadoop, and how it became more modular by delegating functions such as indexing and parsing to other Apache projects, namely Solr and Tika. The current version, Nutch 2.0, aims for a slimmed-down architecture in which Nutch acts as a delegator to these frameworks rather than implementing those functions itself. The document also reflects on lessons learned from earlier stages of the project regarding community engagement, maintenance, and configuration challenges.