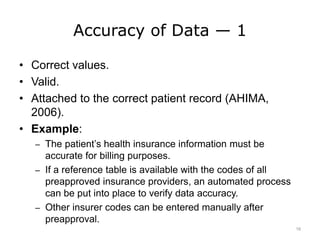

This lecture discusses how to assess data quality and identifies 10 key attributes of data quality: definition, accuracy, accessibility, comprehensiveness, consistency, currency, timeliness, granularity, precision, and relevancy. Poor data quality can threaten patient safety and quality of care, reduce the effectiveness of decision-making, and increase costs. The lecture provides examples and recommendations for ensuring each of the 10 attributes.
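The lecture's own examples are not reproduced here, but several of these attributes lend themselves to automated, record-level checks. Below is a minimal Python sketch, assuming a patient record stored as a dictionary. The function `check_record`, the field names, the set of valid codes, and the 365-day currency window are all hypothetical choices for illustration, not recommendations from the lecture. The sketch covers only four attributes (comprehensiveness, accuracy, consistency, and currency). Attributes such as definition, relevancy, and accessibility depend on governance and context and are harder to check mechanically.

```python
from datetime import date, timedelta

# Hypothetical required fields for a patient record (illustrative assumption).
REQUIRED_FIELDS = ("patient_id", "birth_date", "sex", "last_updated")
# Hypothetical set of valid codes for the "sex" field (illustrative assumption).
VALID_SEX_CODES = {"F", "M", "U"}


def check_record(record: dict, other_system: dict, today: date,
                 max_age_days: int = 365) -> list[str]:
    """Return a list of data quality problems found in one record."""
    problems = []

    # Comprehensiveness: every required data item is present and non-empty.
    missing = [f for f in REQUIRED_FIELDS if record.get(f) in (None, "")]
    if missing:
        problems.append(f"comprehensiveness: missing {', '.join(missing)}")

    # Accuracy: values must be valid (allowed code, birth date not in the future).
    sex = record.get("sex")
    if sex and sex not in VALID_SEX_CODES:
        problems.append(f"accuracy: invalid sex code {sex!r}")
    birth = record.get("birth_date")
    if birth and birth > today:
        problems.append("accuracy: birth_date is in the future")

    # Consistency: the same data item should match the copy held in another system.
    for field in ("birth_date", "sex"):
        if field in other_system and record.get(field) != other_system[field]:
            problems.append(f"consistency: {field} differs from other system")

    # Currency: the record should have been updated within the allowed window.
    updated = record.get("last_updated")
    if updated and today - updated > timedelta(days=max_age_days):
        problems.append(f"currency: last updated more than {max_age_days} days ago")

    return problems


# Example: a record with an invalid code, a mismatch, and a stale update date.
record = {"patient_id": "P001", "birth_date": date(1980, 5, 1),
          "sex": "X", "last_updated": date(2020, 1, 1)}
other = {"birth_date": date(1980, 5, 2), "sex": "F"}
for problem in check_record(record, other, today=date(2024, 1, 1)):
    print(problem)
```

Returning a list of labeled problems, rather than a single pass/fail flag, keeps each finding tied to the attribute it violates, which makes it easier to route issues to whoever is responsible for that aspect of quality.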