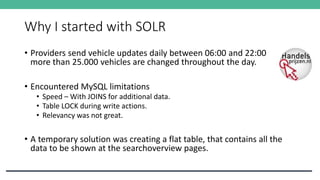

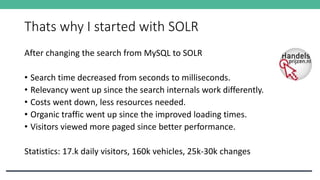

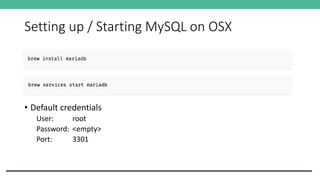

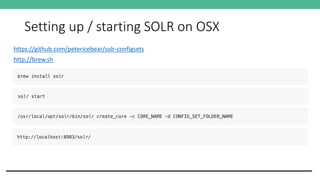

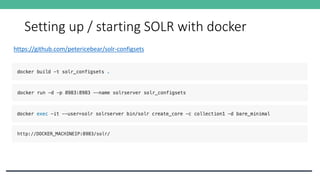

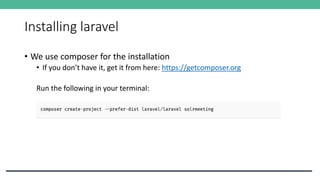

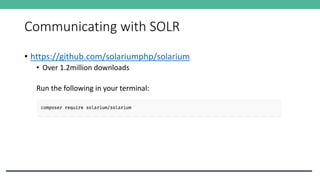

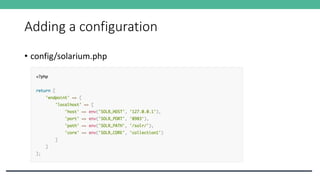

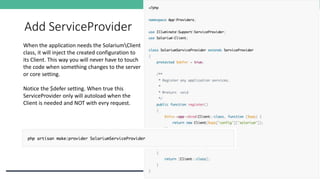

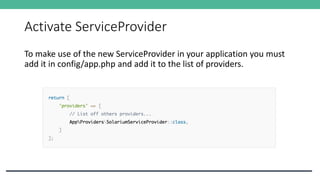

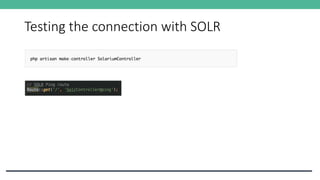

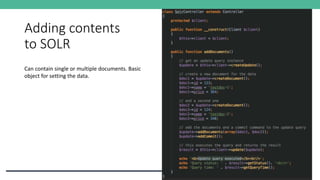

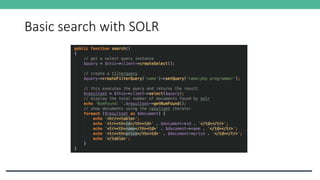

This document discusses integrating Solr with Laravel to build a search solution. It highlights the limitations of MySQL for search tasks and the benefits of switching to Solr: improved speed, better relevancy, and lower costs. It covers setting up and configuring both MySQL and Solr, using Solarium to communicate with Solr, and the essential steps to integrate and test Solr within a Laravel application.

![Facets query: inStock possible values are True [200] and False [100]](https://image.slidesharecdn.com/presentatiegroningenphp-170222114606/85/Laravel-and-SOLR-31-320.jpg)
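
The facet slide can be made concrete with a small, language-neutral sketch of the HTTP request behind a facet query and of how the counts come back. This is not the presentation's code: in a Laravel application Solarium would normally build the request for you. The host, the core name `products`, and the helper names `build_facet_url` and `parse_facet_counts` are assumptions for illustration, and the sample response simply reuses the counts shown on the slide. Python is used only to keep the sketch self-contained.

```python
from urllib.parse import urlencode

# Hypothetical Solr core URL; replace host and core name with your own.
SOLR_SELECT_URL = "http://localhost:8983/solr/products/select"


def build_facet_url(field, base_url=SOLR_SELECT_URL):
    """Build a select URL that asks Solr for facet counts on one field."""
    params = {
        "q": "*:*",            # match all documents
        "rows": 0,             # only the facet counts are needed, not the documents
        "facet": "true",       # switch faceting on
        "facet.field": field,  # field to count distinct values for
        "wt": "json",          # ask for a JSON response
    }
    return base_url + "?" + urlencode(params)


def parse_facet_counts(response, field):
    """Turn Solr's flat [value, count, value, count, ...] list into a dict."""
    flat = response["facet_counts"]["facet_fields"][field]
    return dict(zip(flat[0::2], flat[1::2]))


# Illustrative response shaped like Solr's default JSON facet output,
# using the counts from the slide (not real query results).
sample_response = {
    "facet_counts": {
        "facet_fields": {"inStock": ["true", 200, "false", 100]}
    }
}

counts = parse_facet_counts(sample_response, "inStock")
print(build_facet_url("inStock"))
print(counts)
```

Setting `rows` to 0 keeps the response small when only the counts are needed, for example to render filter checkboxes next to search results.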