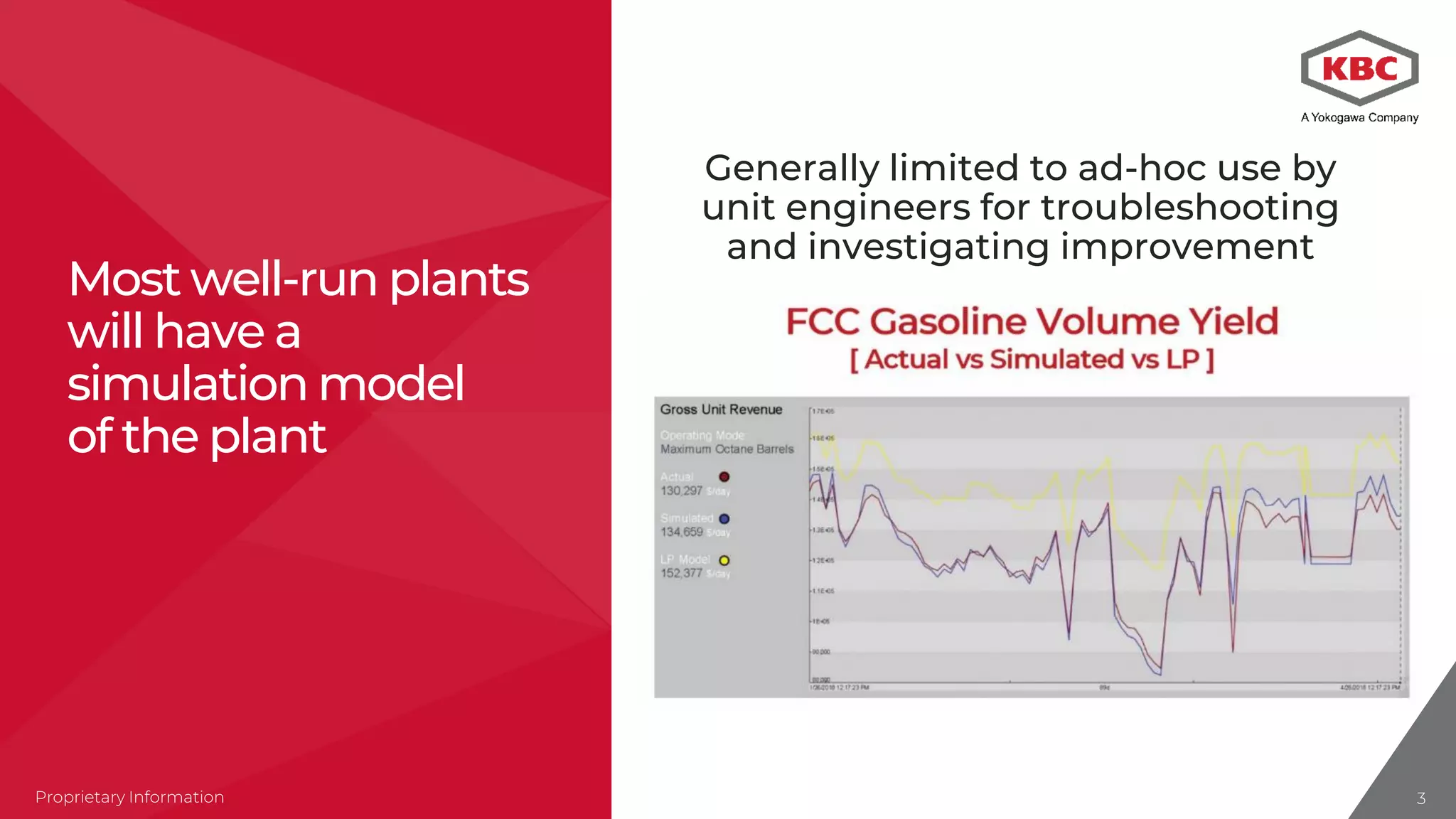

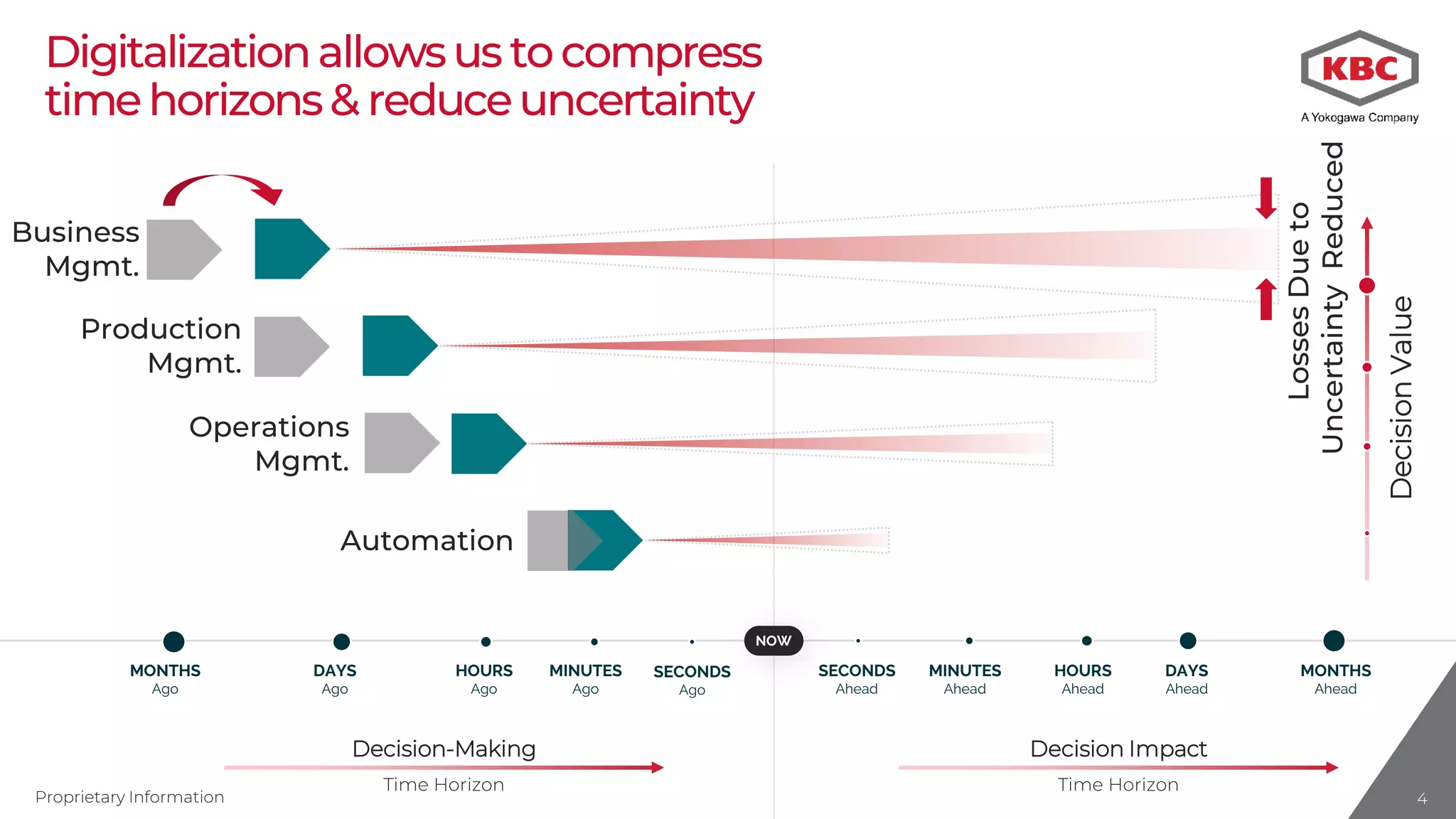

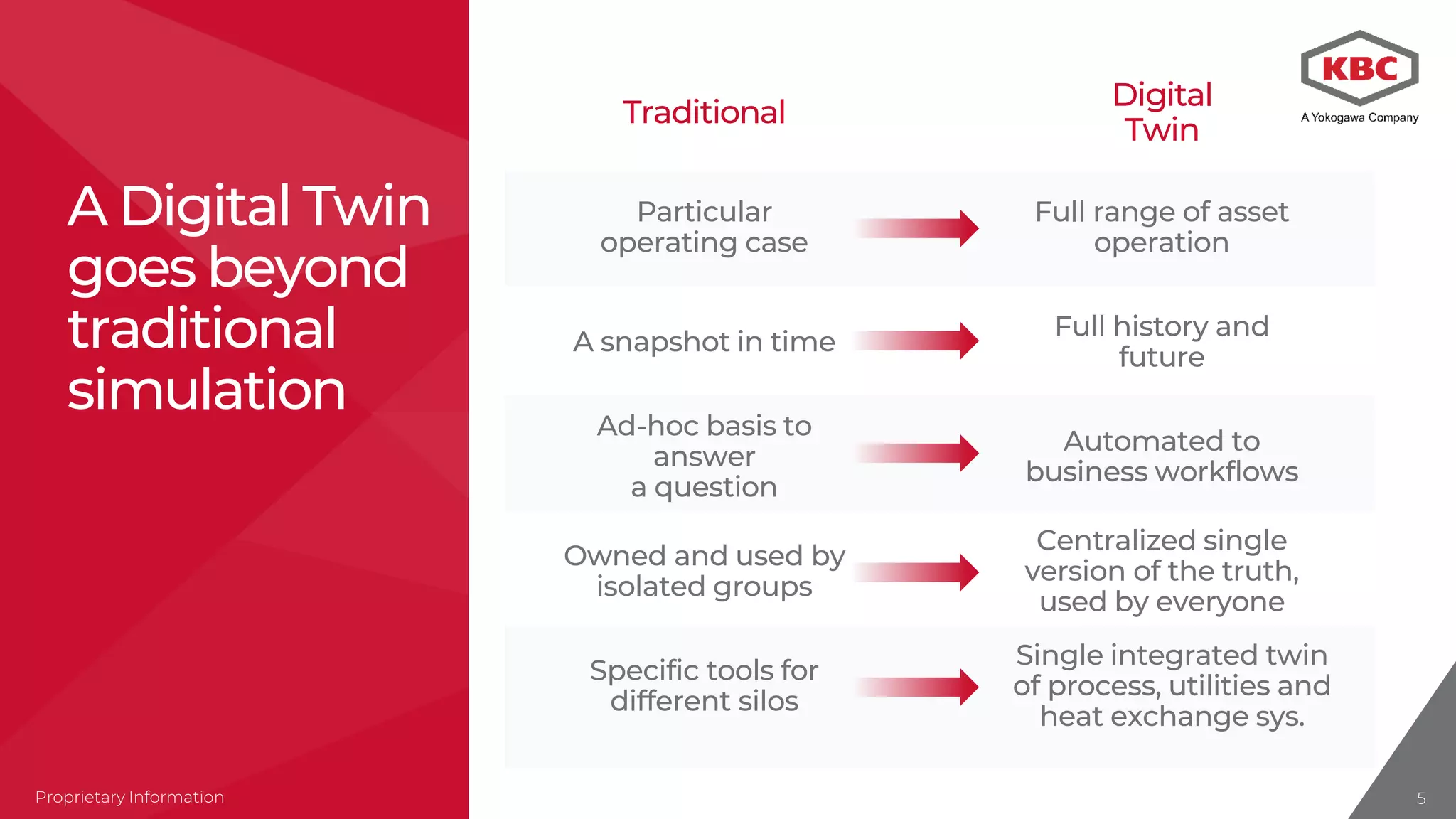

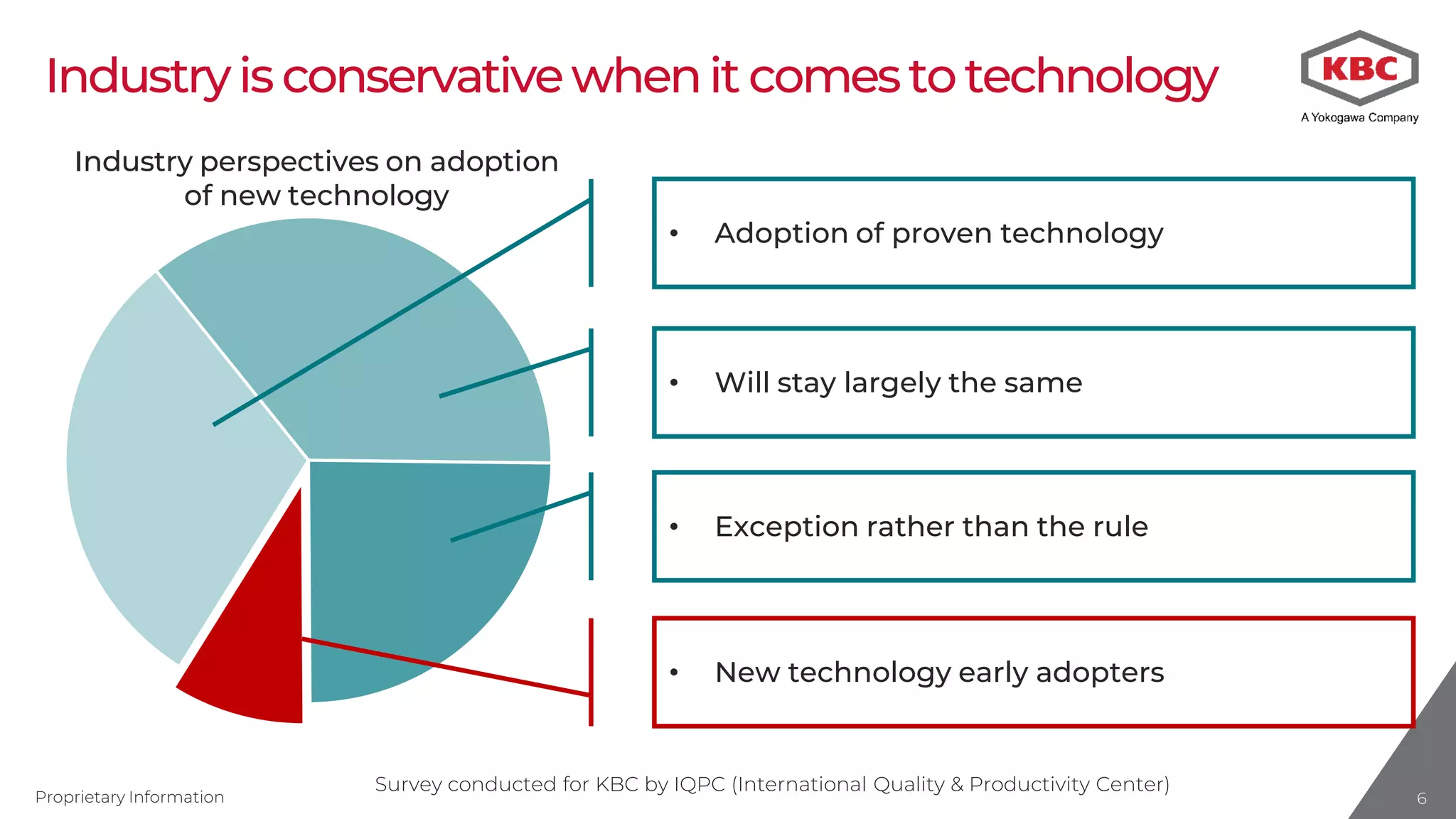

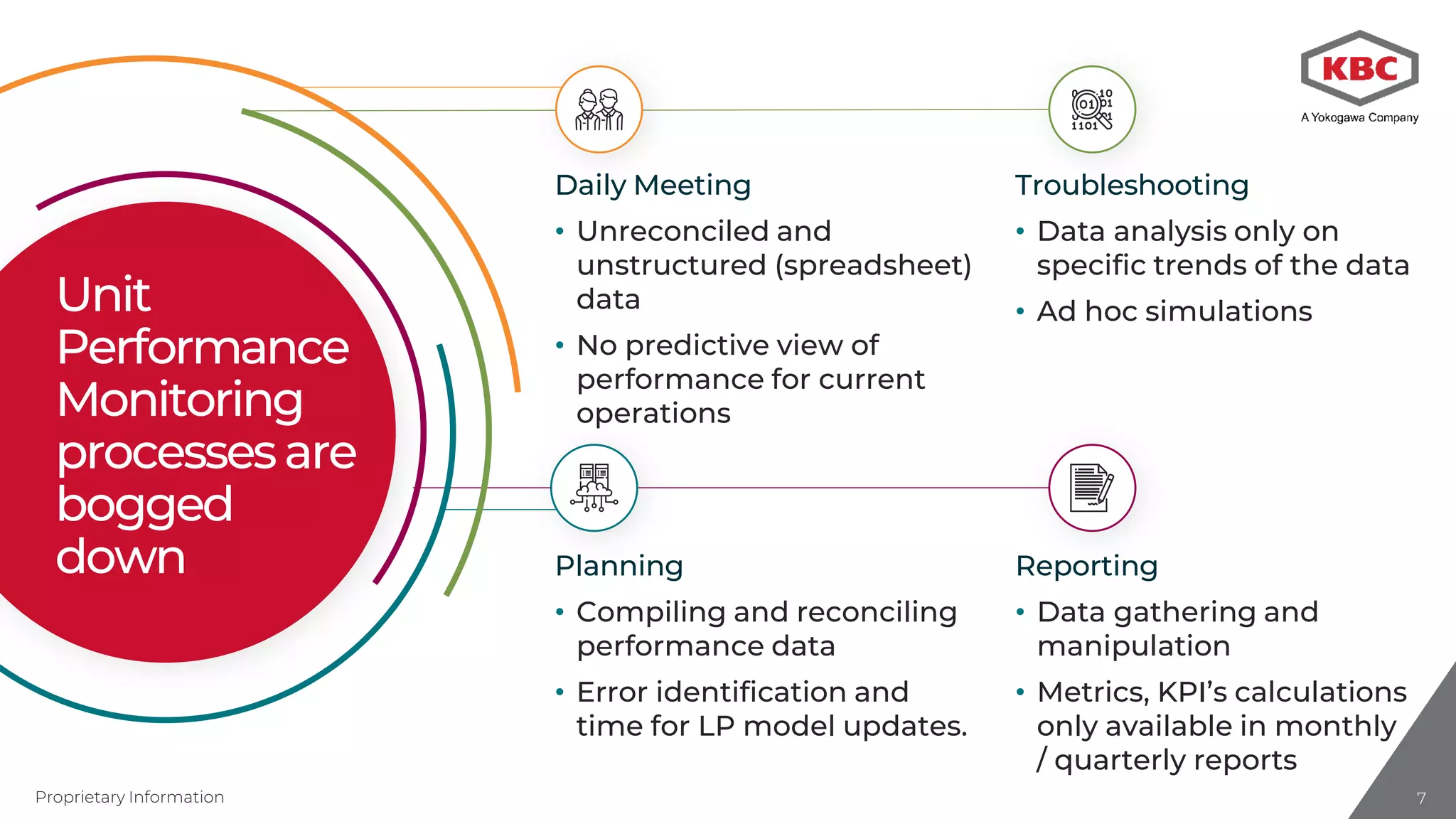

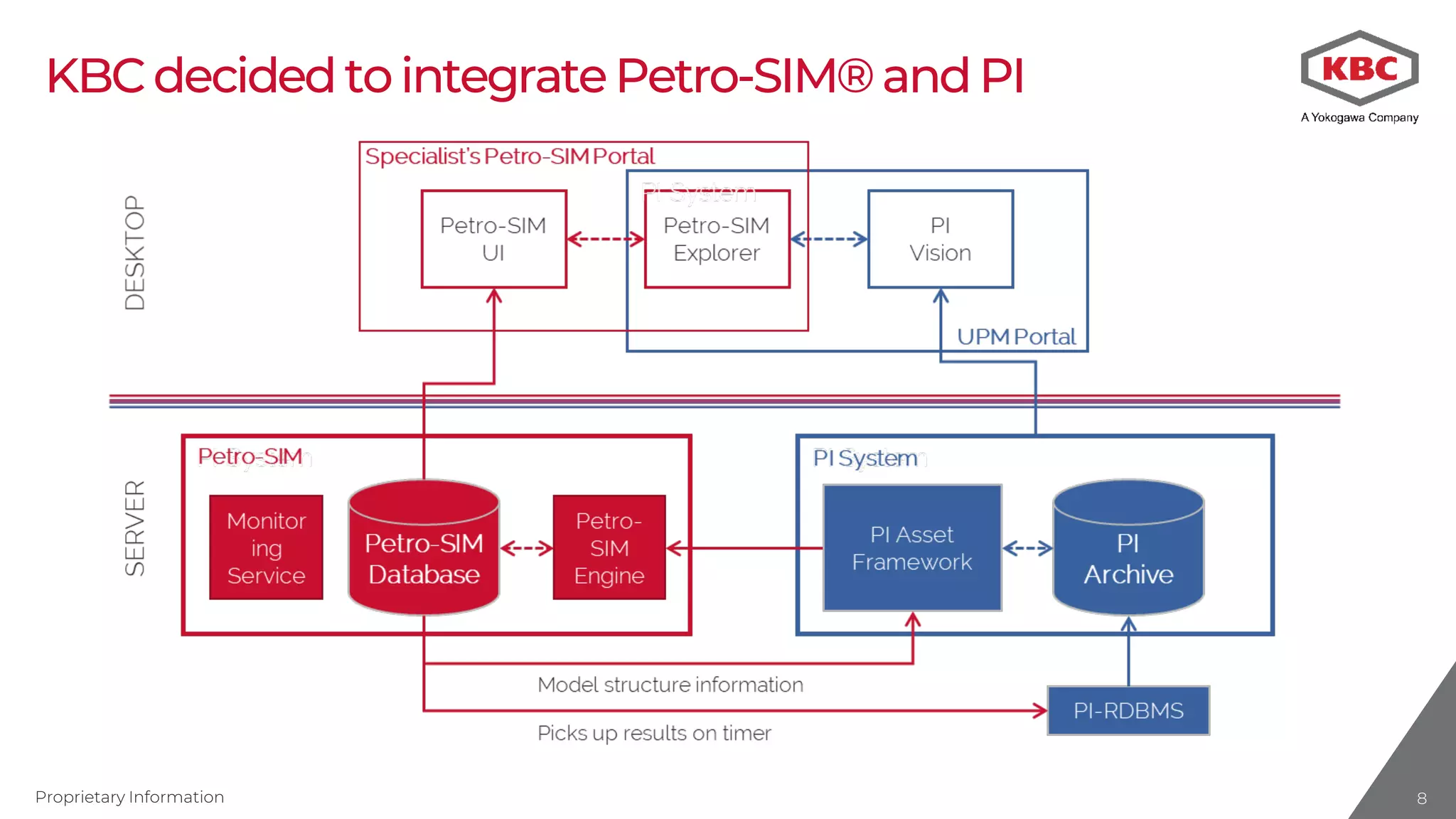

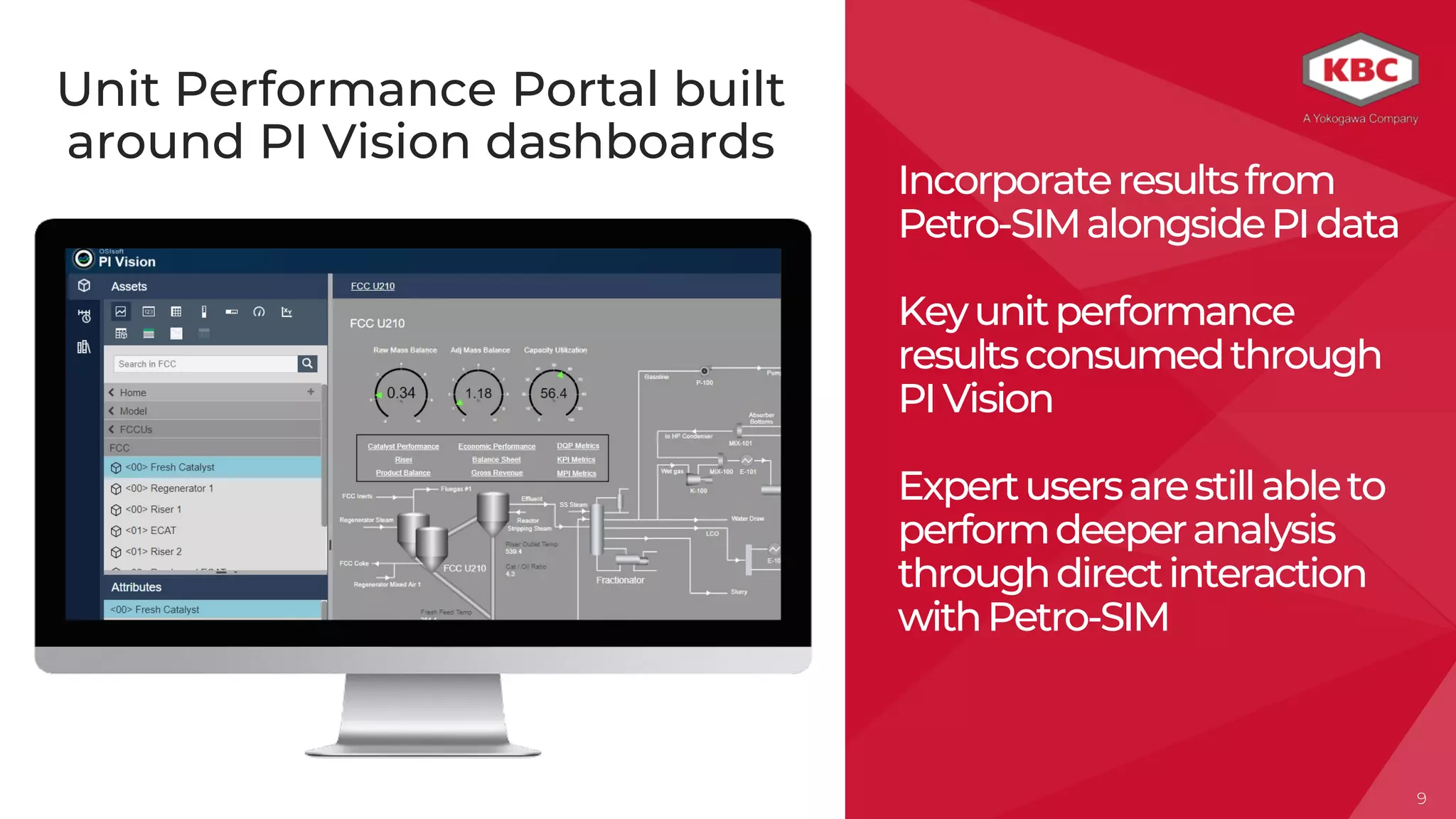

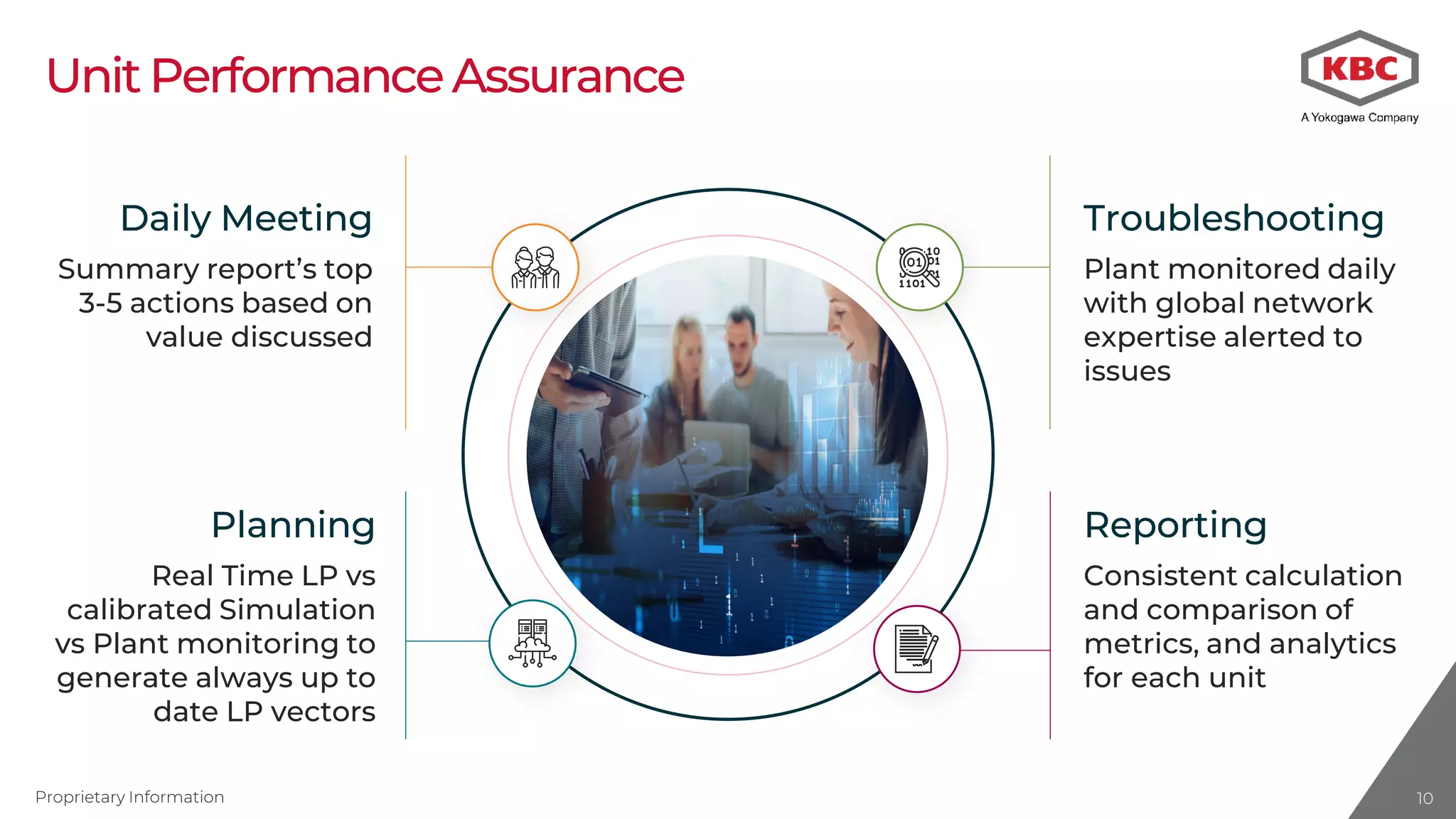

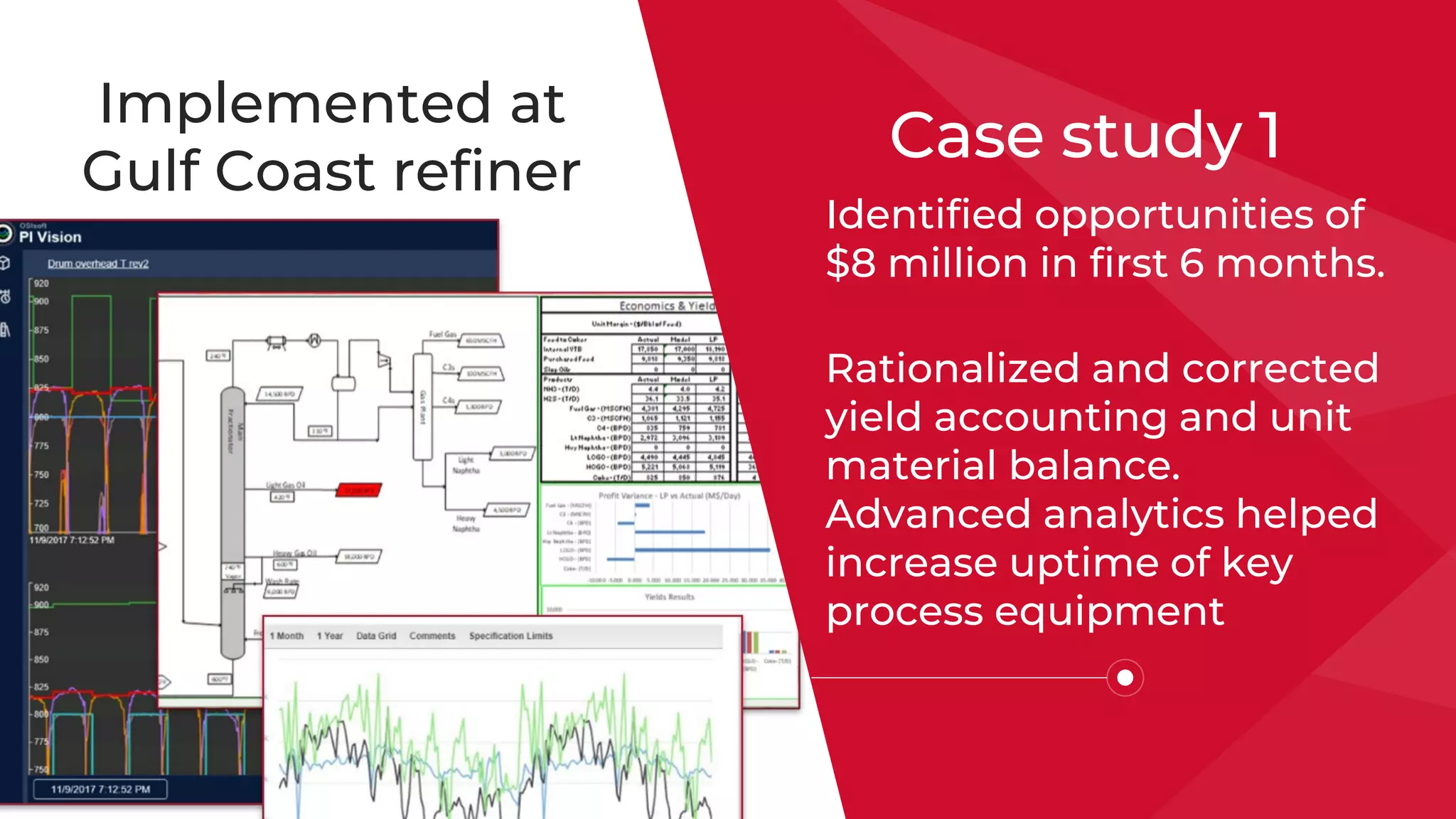

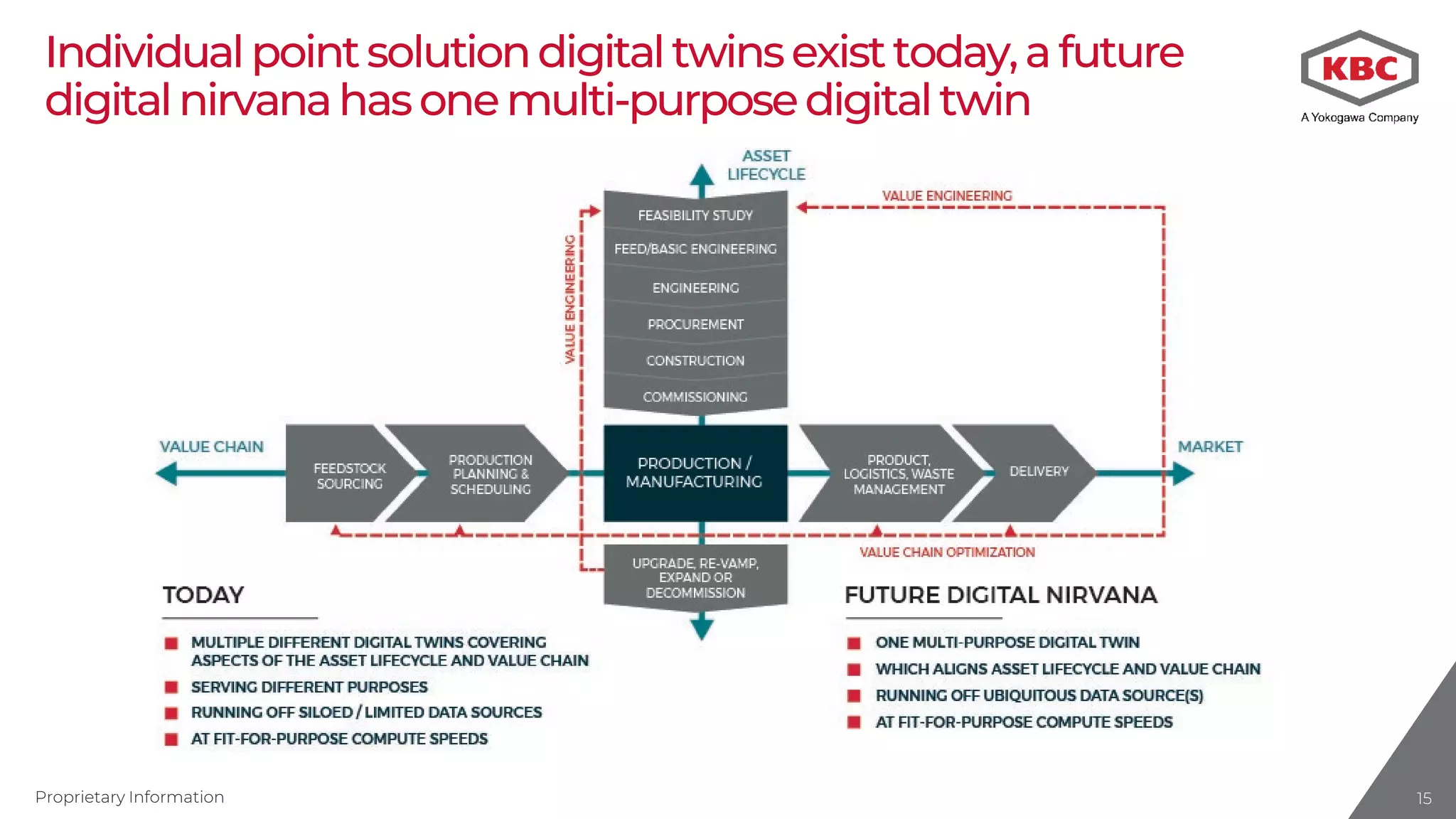

The document explores how digital twins can be applied in practice to minimize lost profit by integrating simulations into daily operations. It emphasizes the advantages of a centralized digital twin that maintains a complete history alongside future projections, which improves decision-making and operational efficiency. Case studies demonstrate significant financial benefits from real-time monitoring and model validation in refining processes.