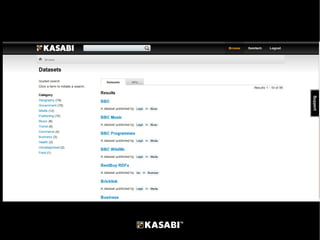

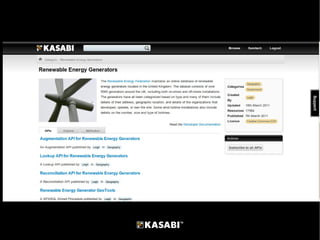

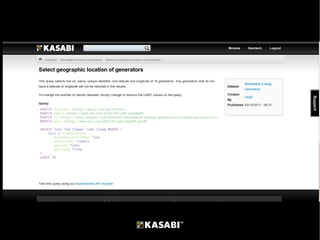

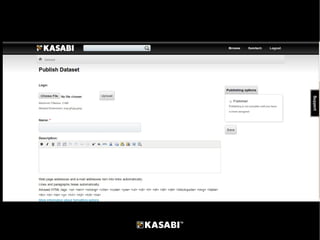

The presentation discusses Kasabi, a linked data marketplace that aims to make data easy to publish and use, and to help people get paid for their data. It does this through cloud-based RDF storage, linked data publishing tools, search and browse capabilities for datasets, and standard and custom APIs that give instant access to datasets. The presentation demonstrates Kasabi and outlines future features such as usage statistics, dataset analysis, and commercial features. Kasabi's revenue model combines fees for high-volume API usage with revenue sharing on commercial data. In summary, Kasabi is a platform for discovering, consuming, publishing, and monetizing linked data.