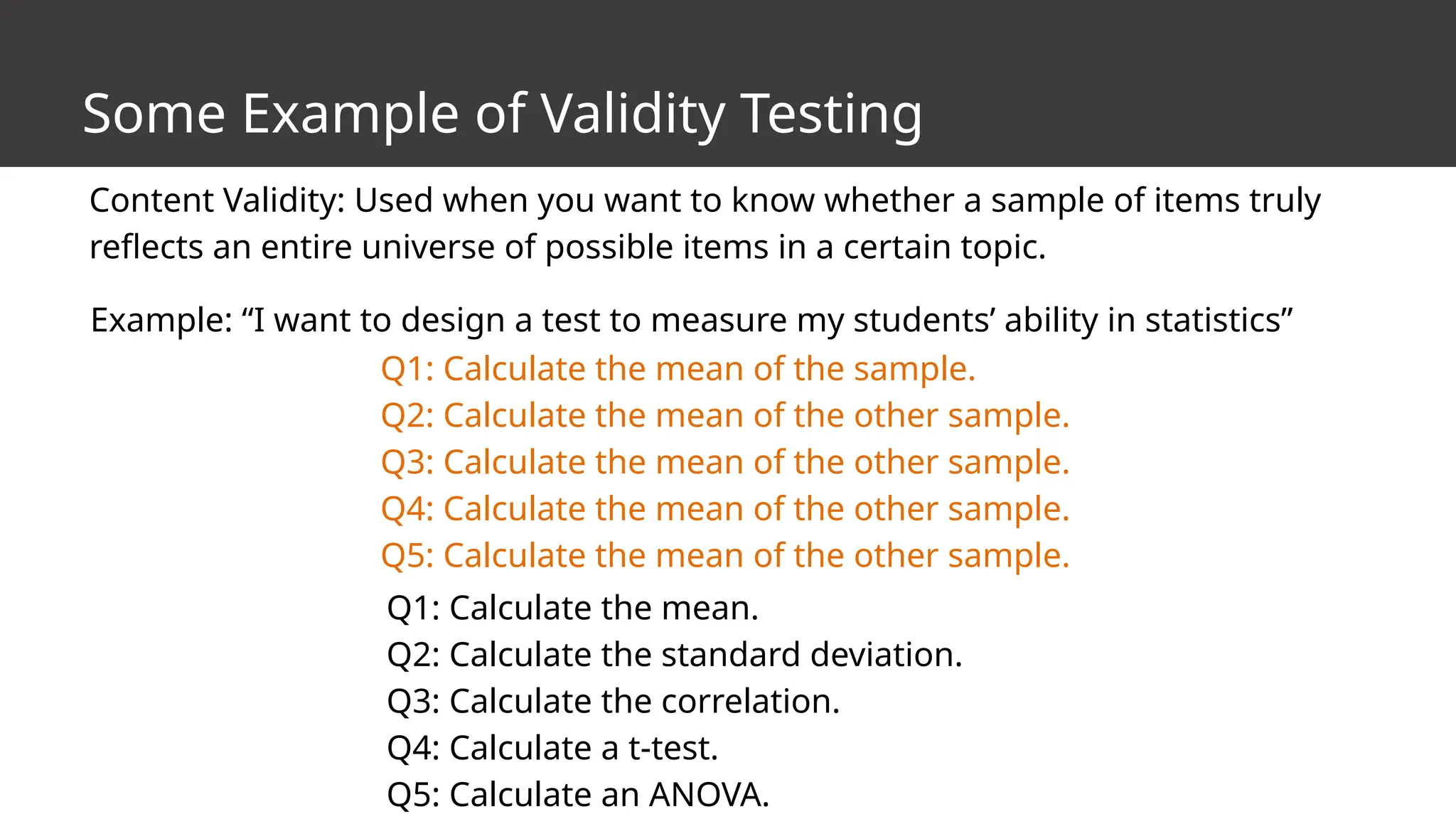

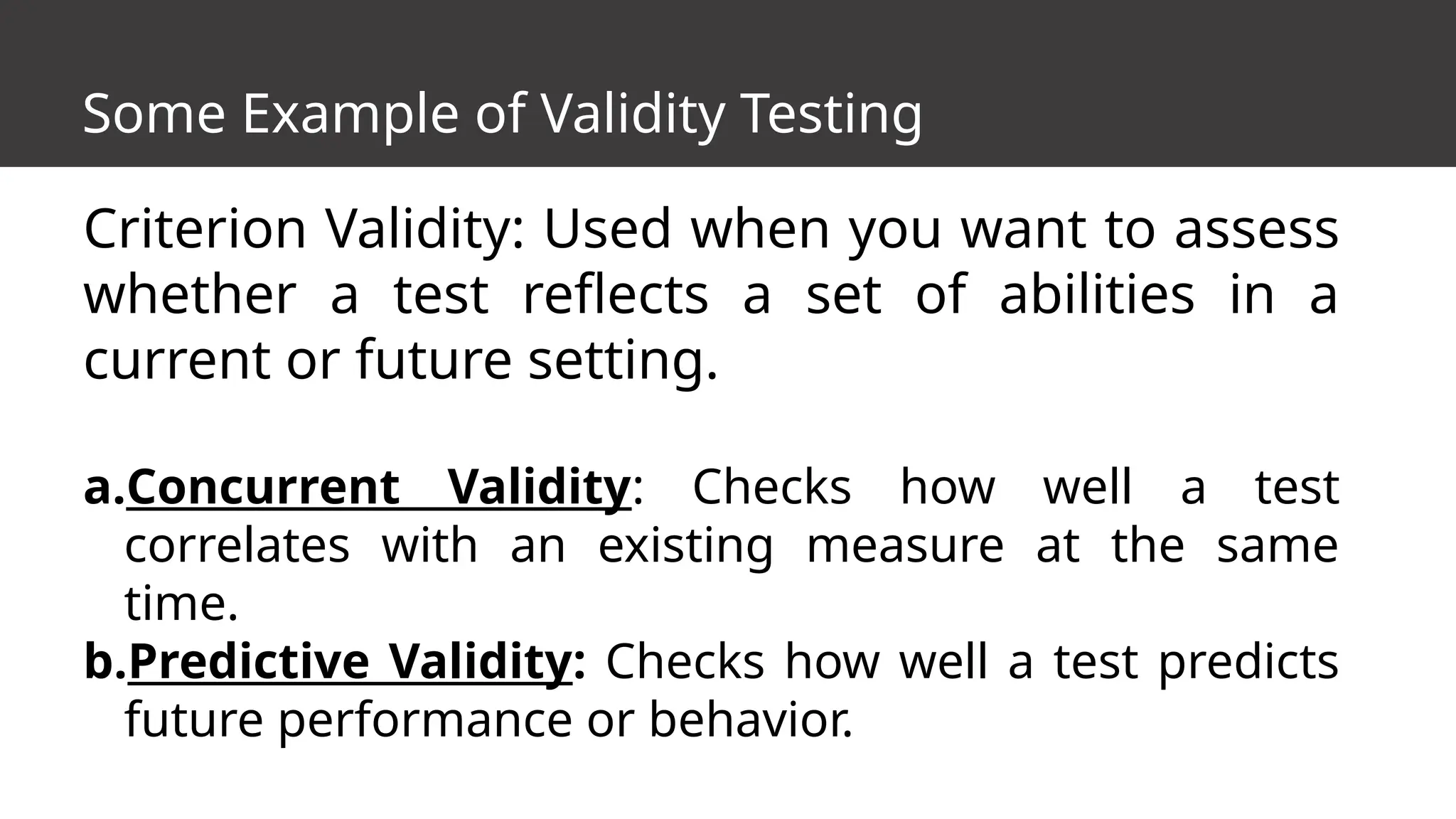

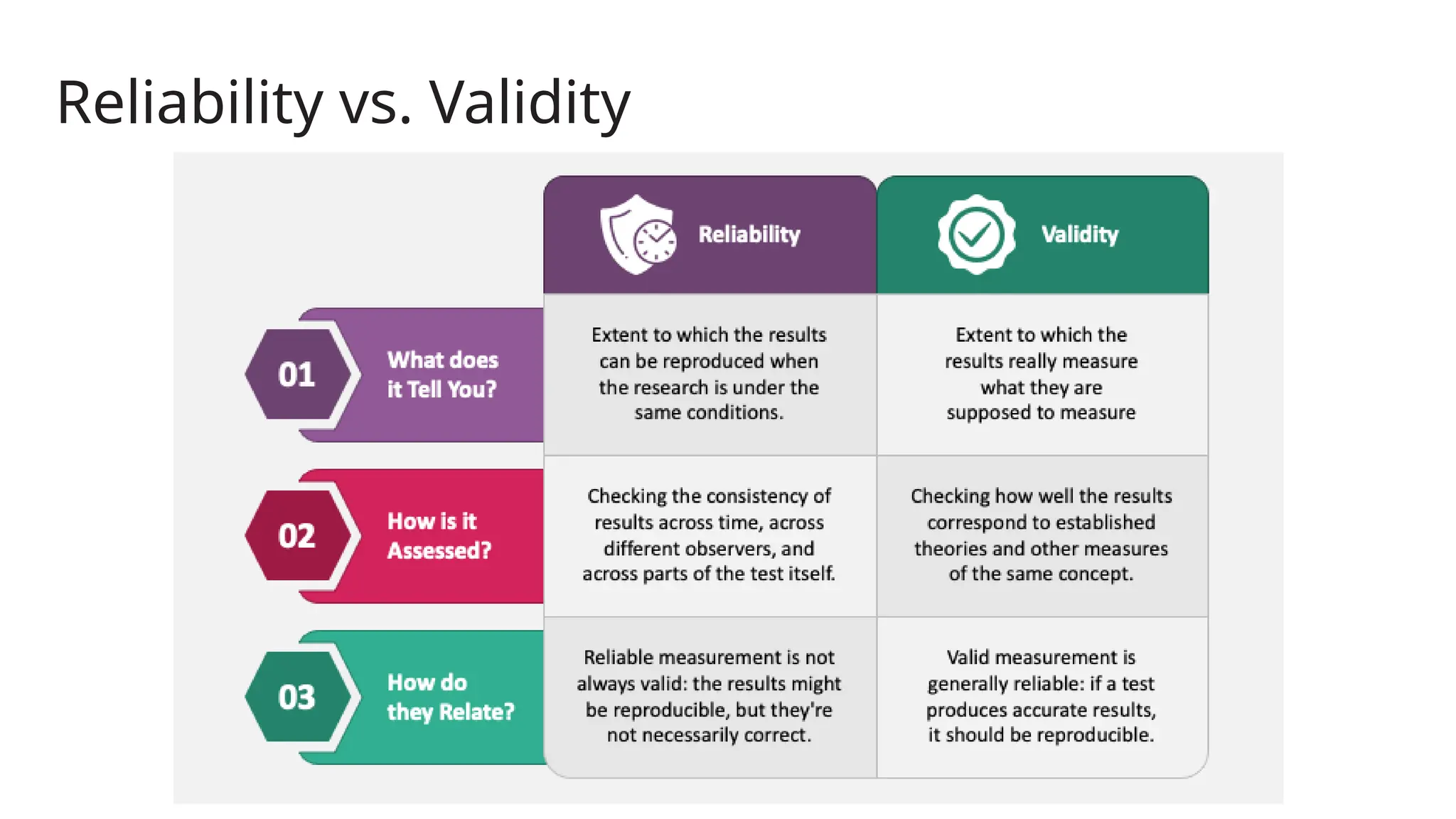

The document explains reliability and validity in research measurement. It covers four types of reliability: test-retest (consistency over time), inter-rater (consistency across different observers), parallel forms (consistency across equivalent versions of a test), and internal consistency (consistency among the items of a test). Validity, by contrast, concerns whether an instrument accurately measures what it is intended to measure. The document also compares researcher-made, standardized, and modified-standardized instruments in terms of how each is validated and checked for reliability.
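As a rough illustration of how two of these reliability types are commonly quantified, the sketch below computes a test-retest correlation and an internal-consistency coefficient. The summary does not say which statistics the document uses, so the choice of Pearson's r and Cronbach's alpha is an assumption, and the function names `pearson_r` and `cronbach_alpha` and the sample scores are hypothetical.

```python
from statistics import mean, variance


def pearson_r(x, y):
    """Pearson correlation between two equal-length score lists.

    Assumed here as a test-retest statistic: x holds scores from the
    first administration, y holds scores from the second.
    """
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    ss_x = sum((a - mx) ** 2 for a in x)
    ss_y = sum((b - my) ** 2 for b in y)
    return cov / (ss_x * ss_y) ** 0.5


def cronbach_alpha(responses):
    """Cronbach's alpha, assumed here as an internal-consistency statistic.

    responses: one row per respondent, one column per test item.
    alpha = k / (k - 1) * (1 - sum(item variances) / variance(total scores))
    """
    k = len(responses[0])                      # number of items
    items = list(zip(*responses))              # transpose to per-item columns
    item_var_sum = sum(variance(col) for col in items)
    total_var = variance([sum(row) for row in responses])
    return k / (k - 1) * (1 - item_var_sum / total_var)


# Hypothetical data: five respondents, measured twice on the same test.
first_administration = [12, 15, 9, 20, 17]
second_administration = [13, 14, 10, 19, 18]
print("test-retest r:", round(pearson_r(first_administration, second_administration), 3))

# Hypothetical data: five respondents answering a four-item scale.
item_scores = [
    [4, 5, 4, 5],
    [2, 3, 2, 2],
    [3, 3, 4, 3],
    [5, 5, 5, 4],
    [1, 2, 1, 2],
]
print("Cronbach's alpha:", round(cronbach_alpha(item_scores), 3))
```

Values closer to 1 indicate more consistent scores in both cases. Acceptable thresholds depend on the field and the purpose of the instrument, so none is assumed here.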