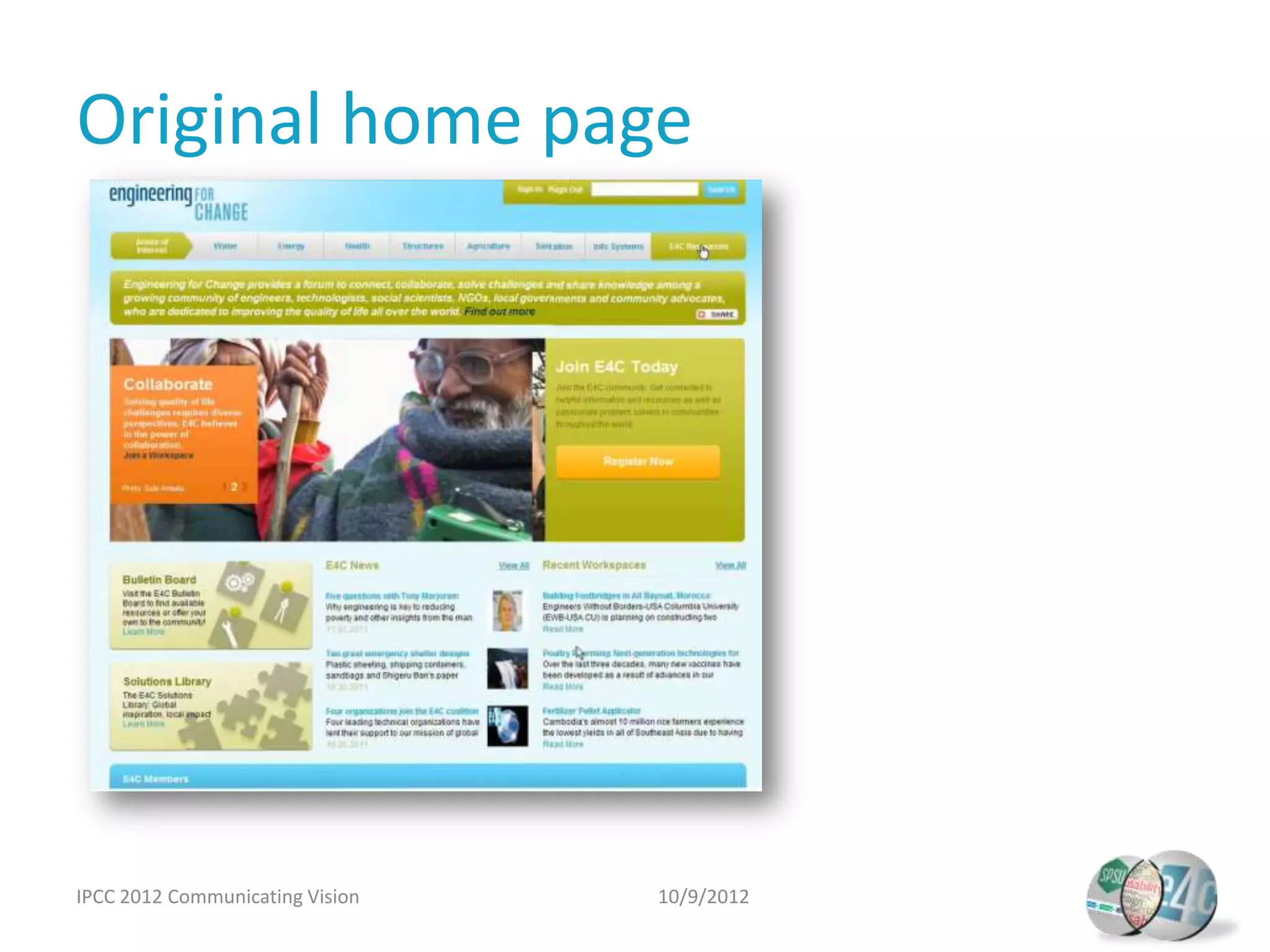

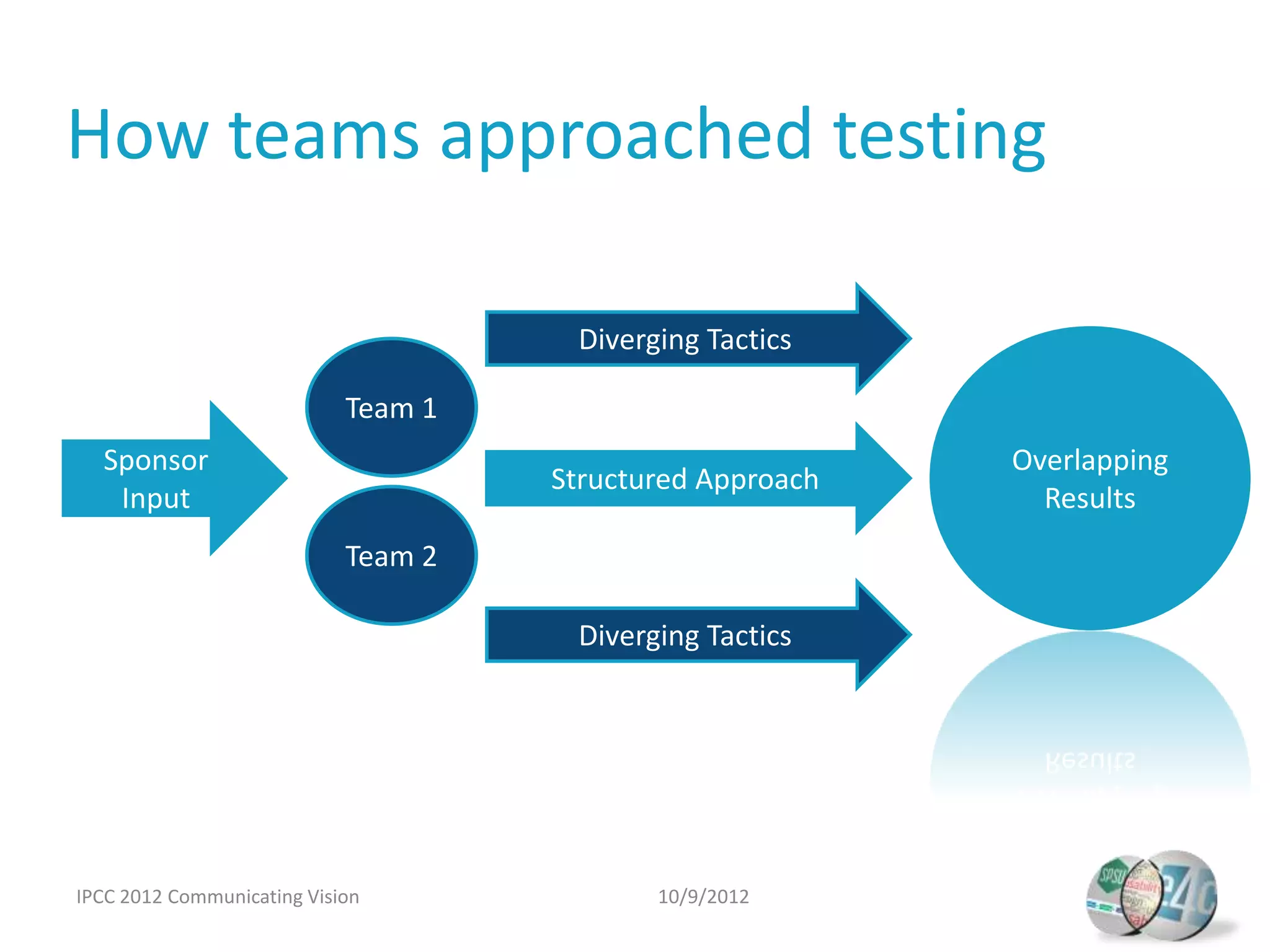

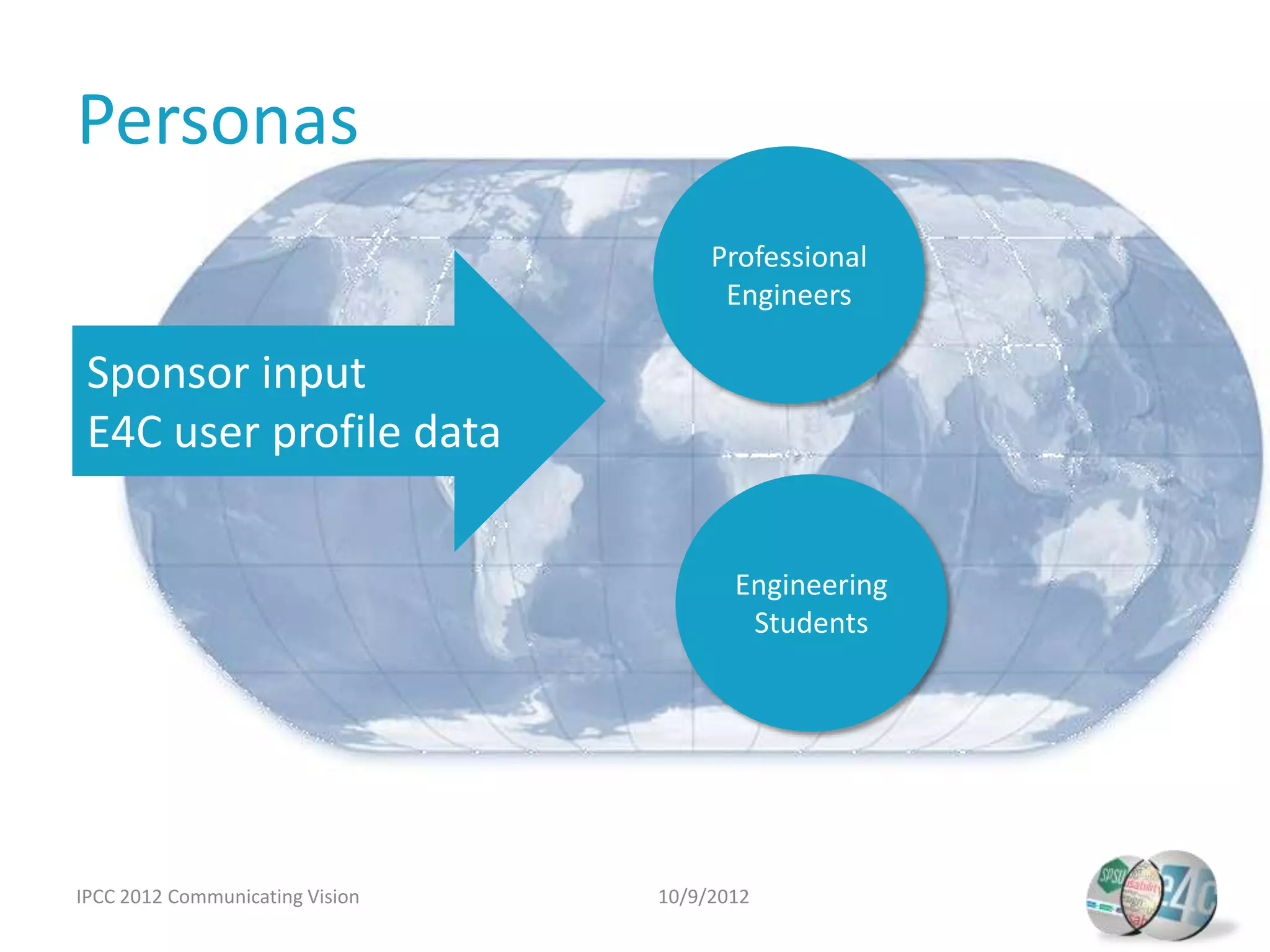

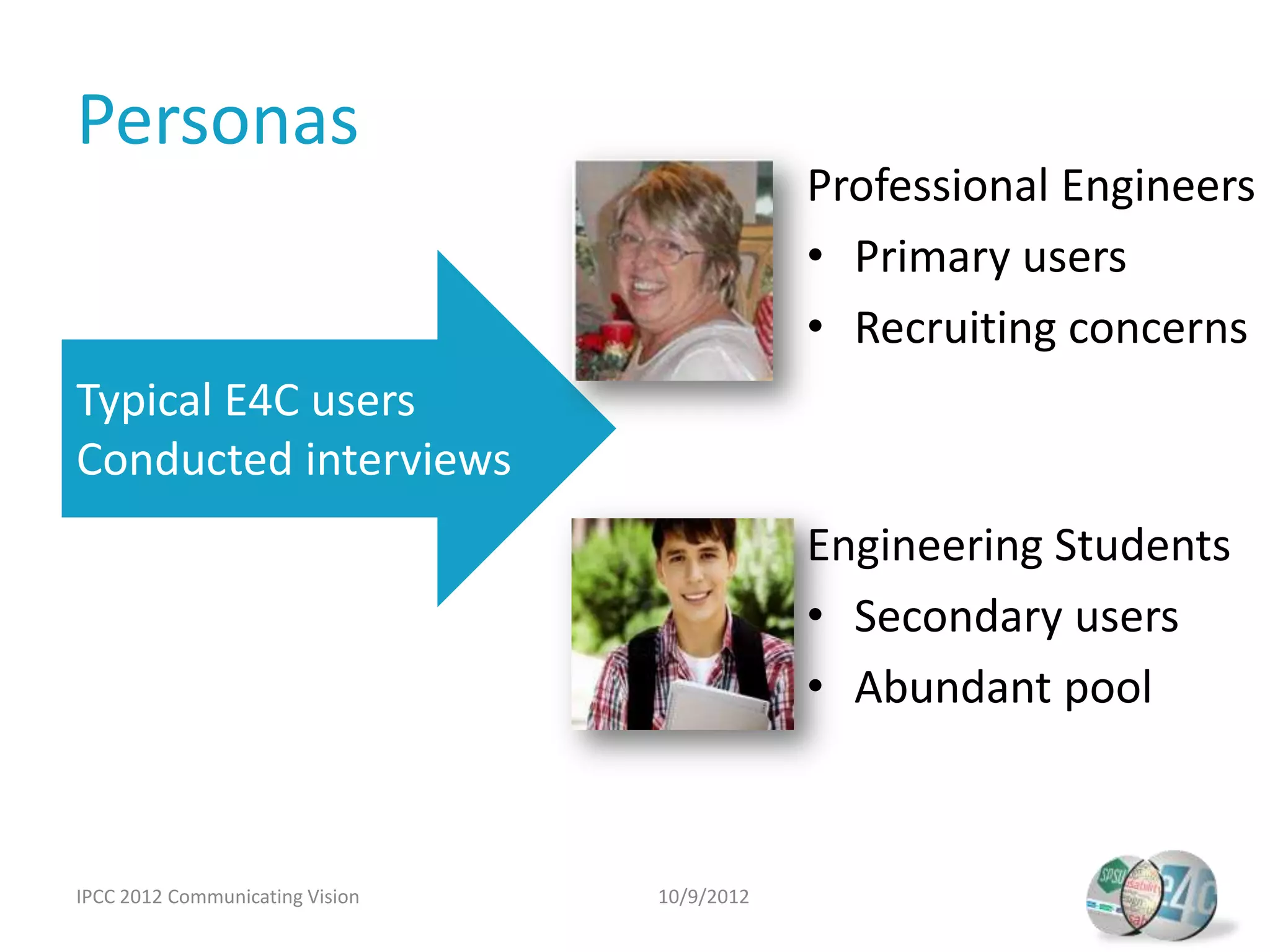

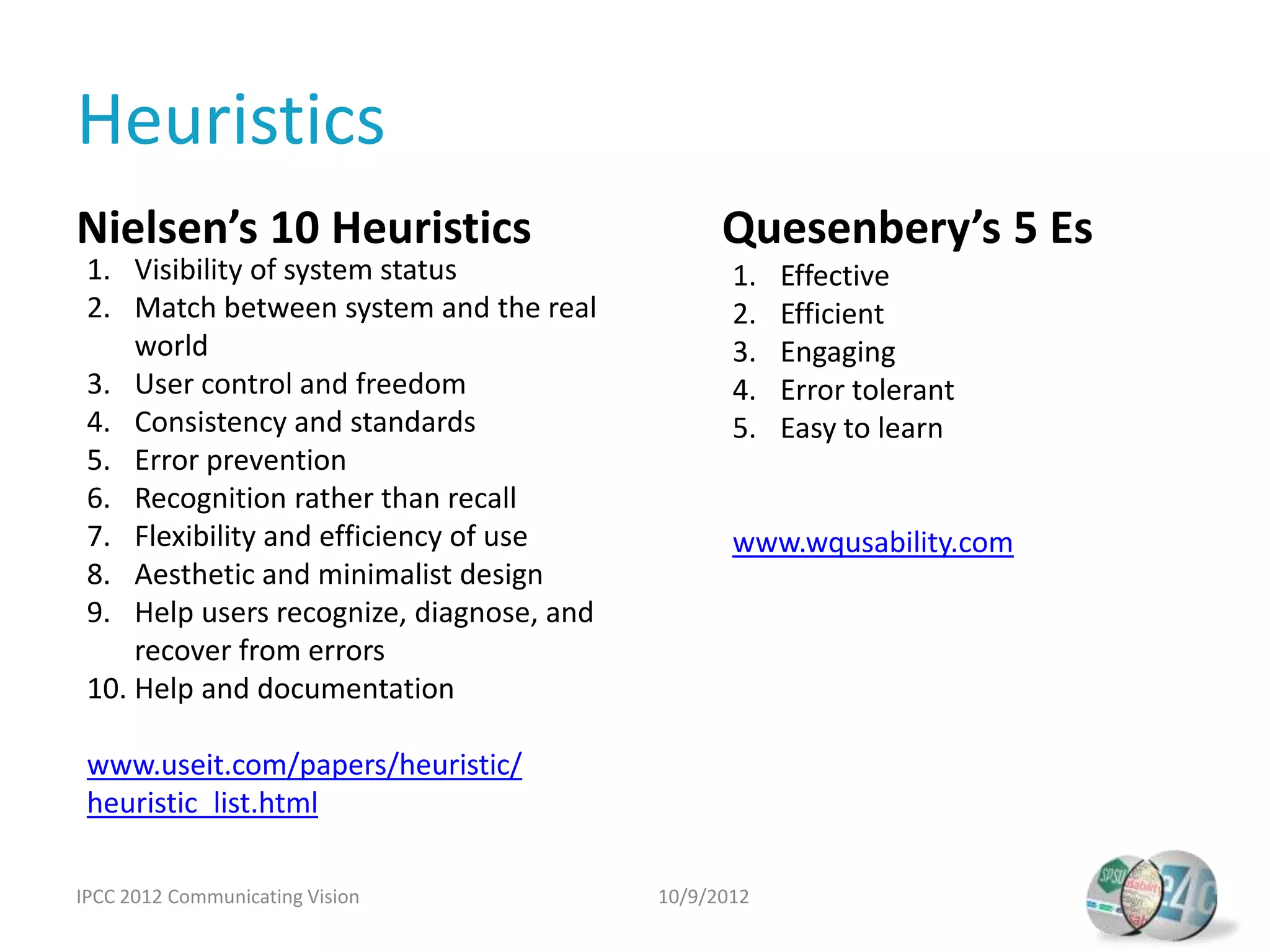

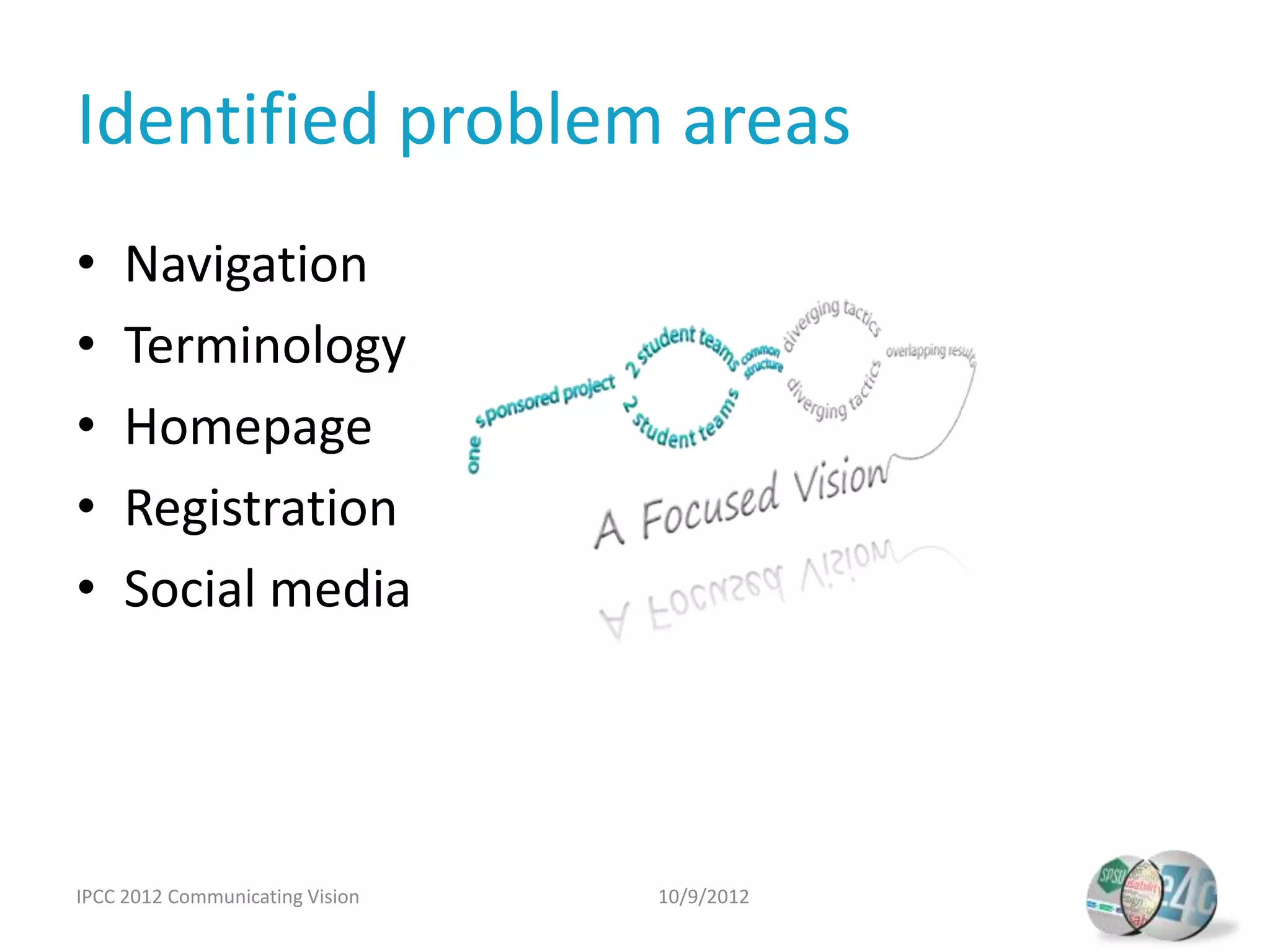

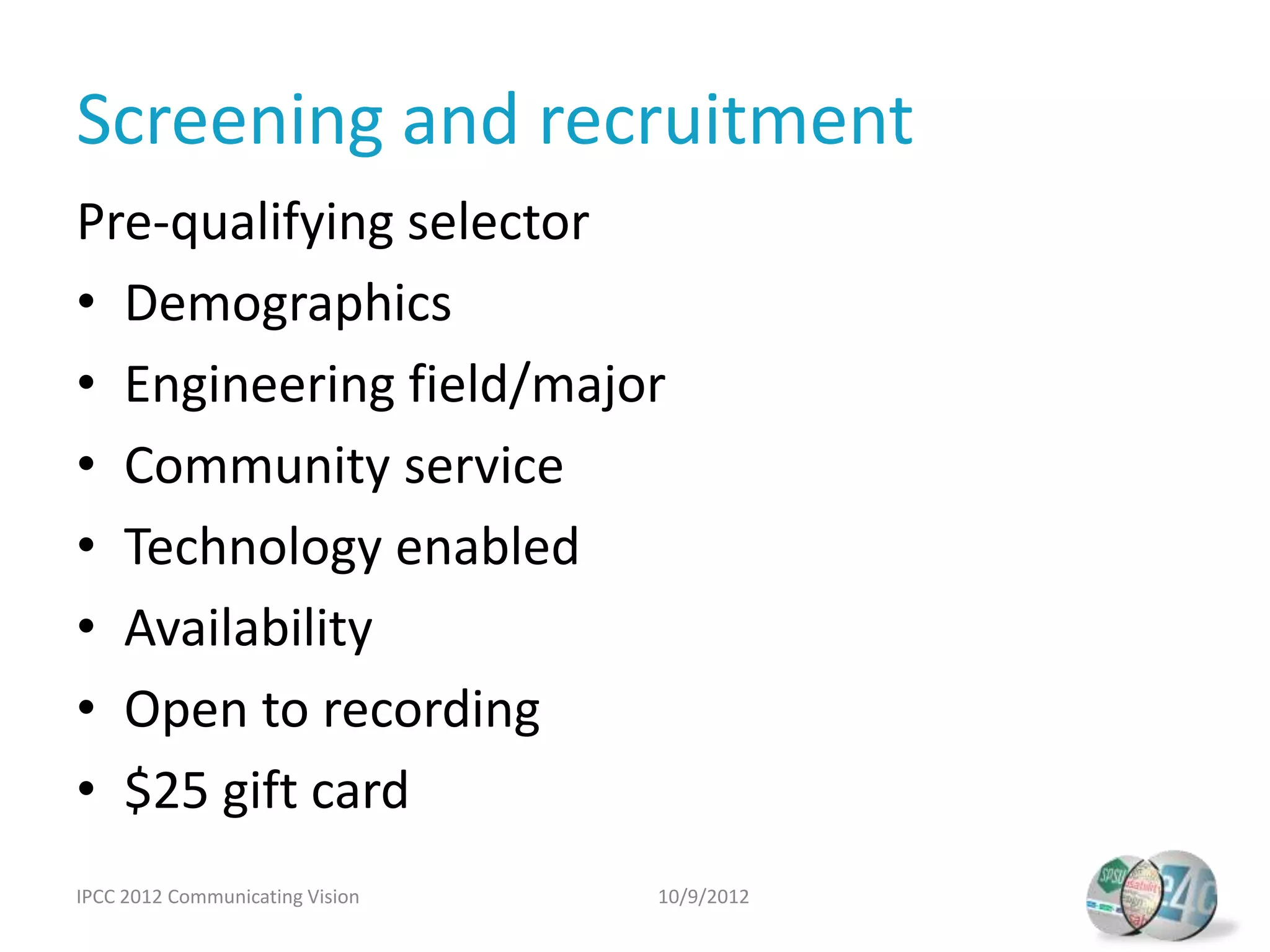

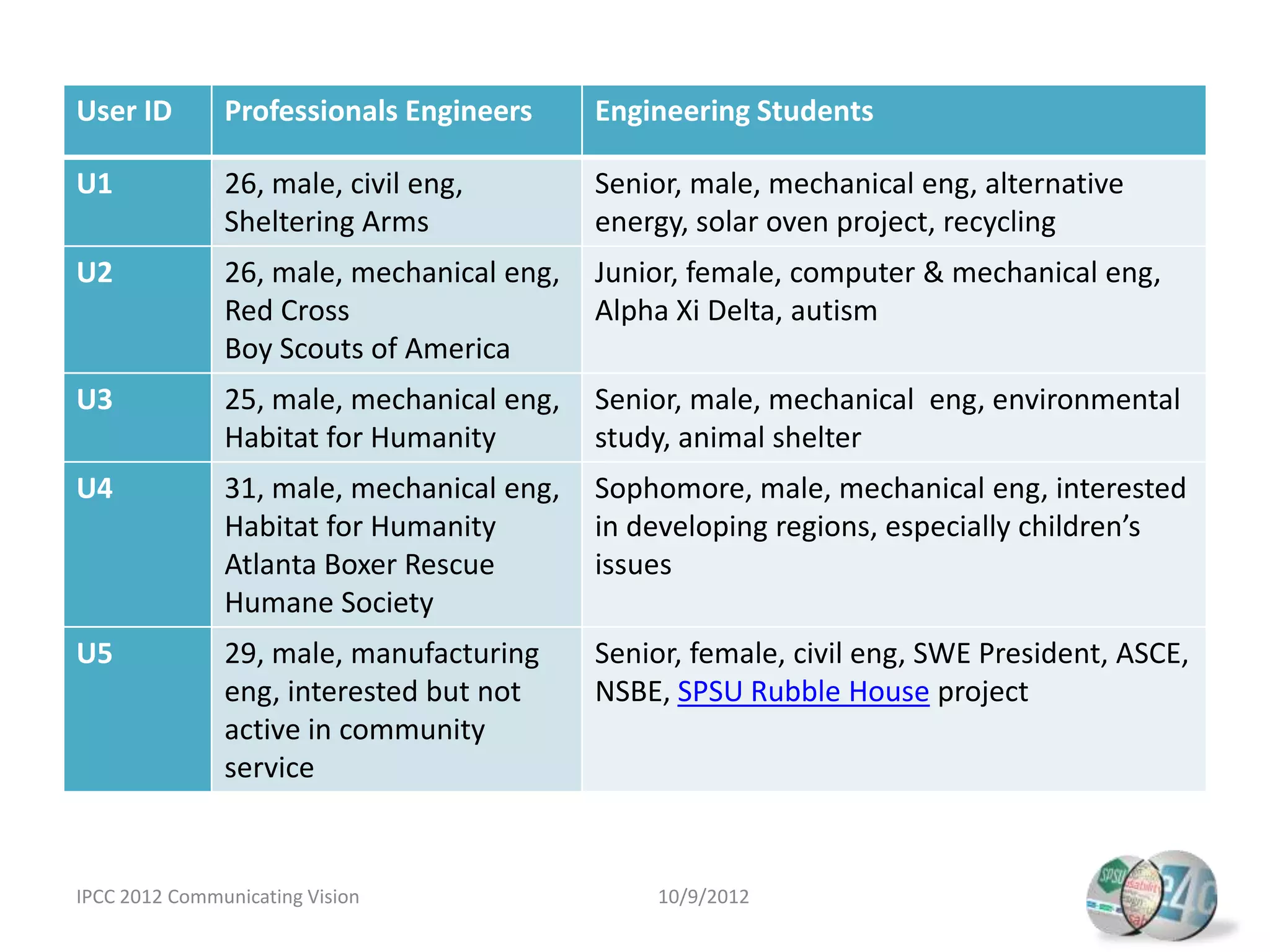

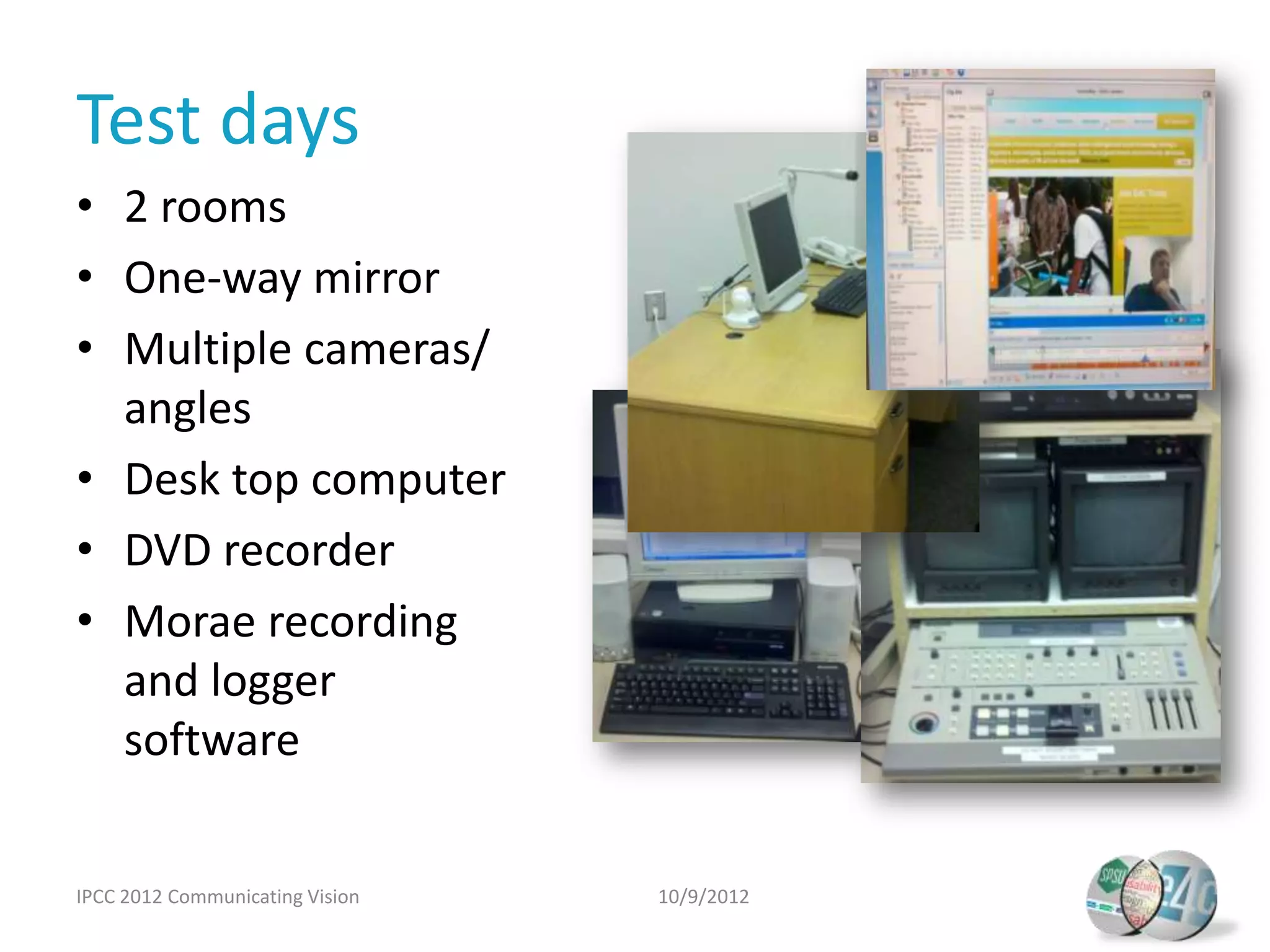

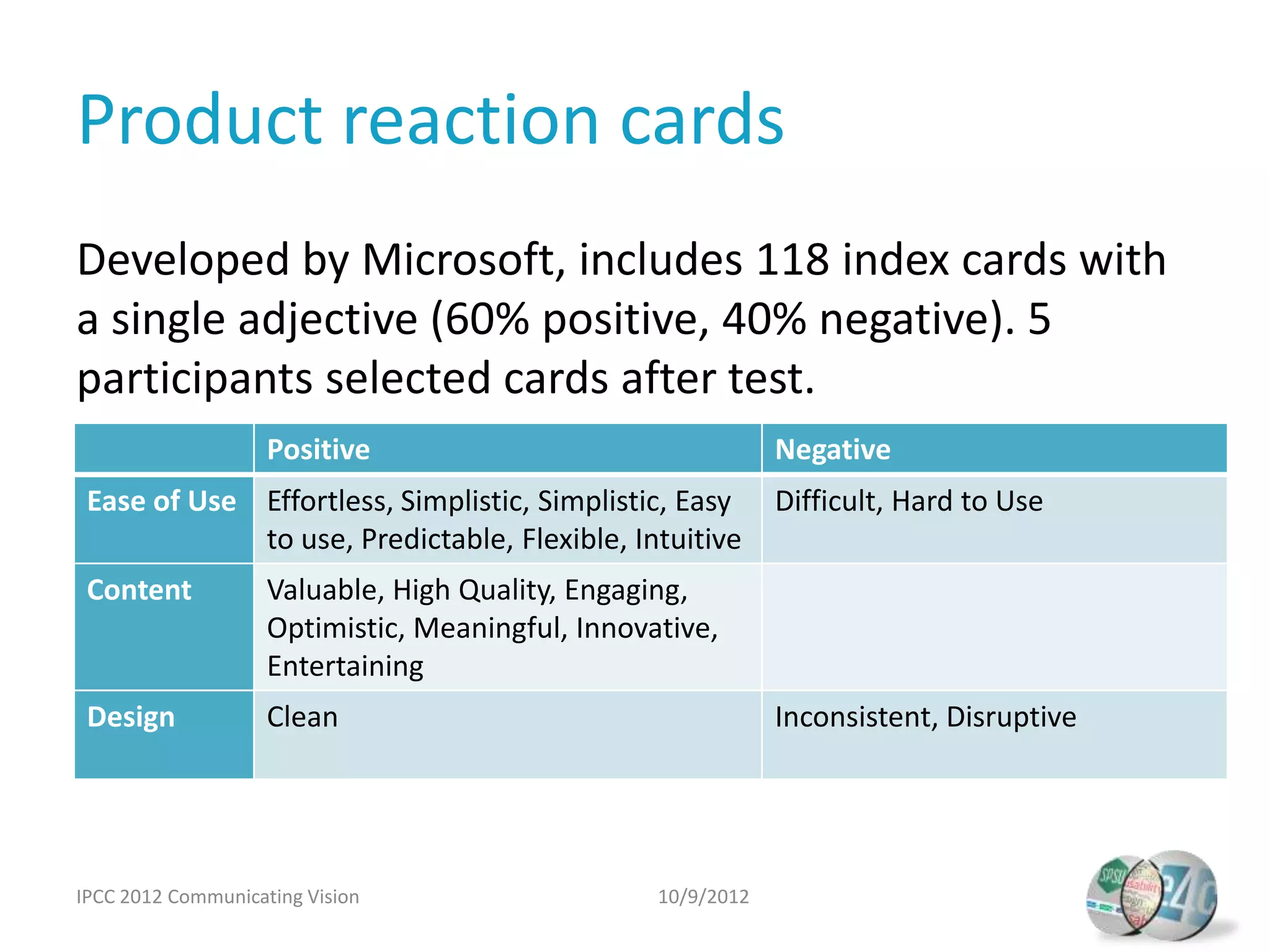

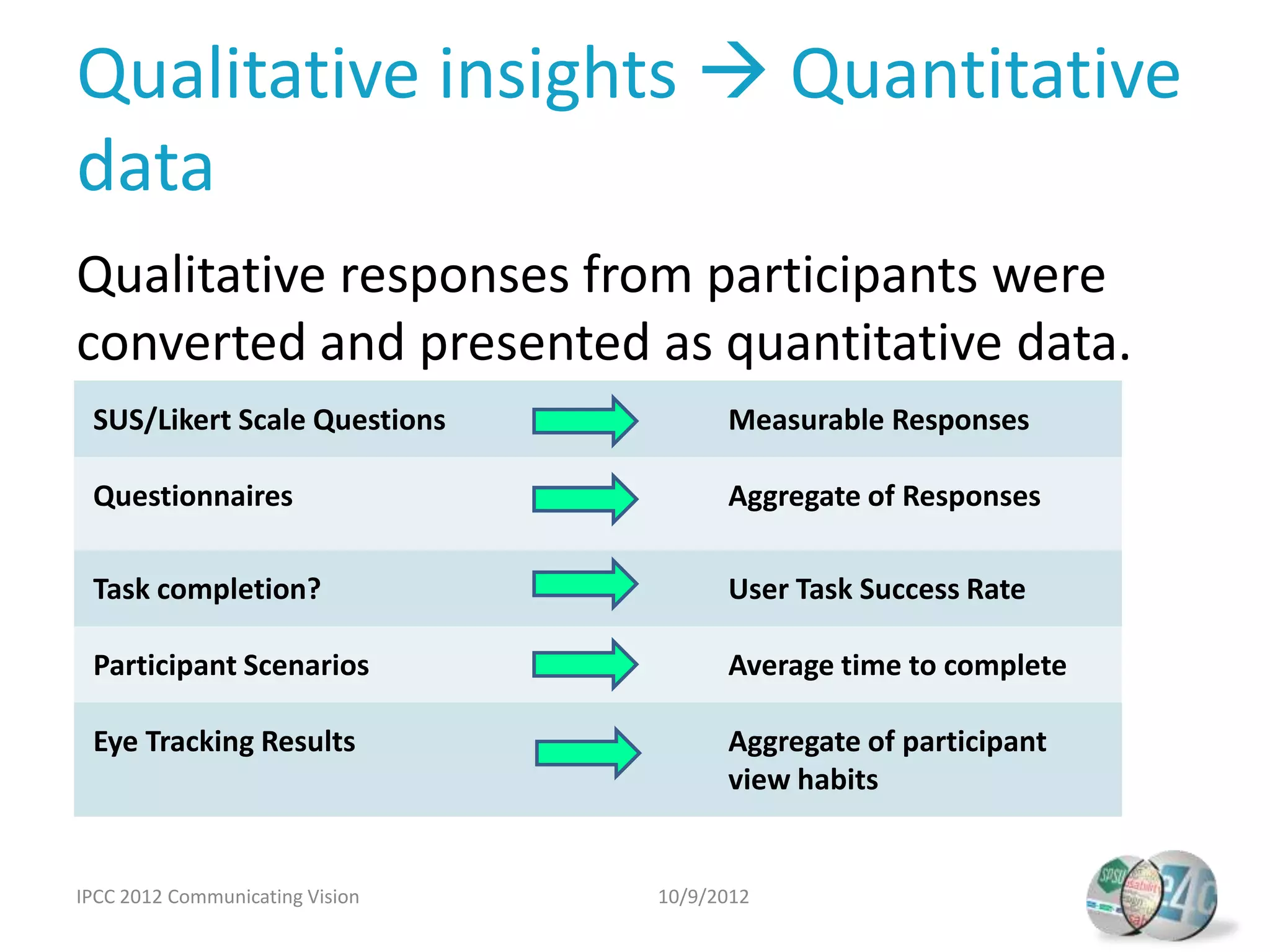

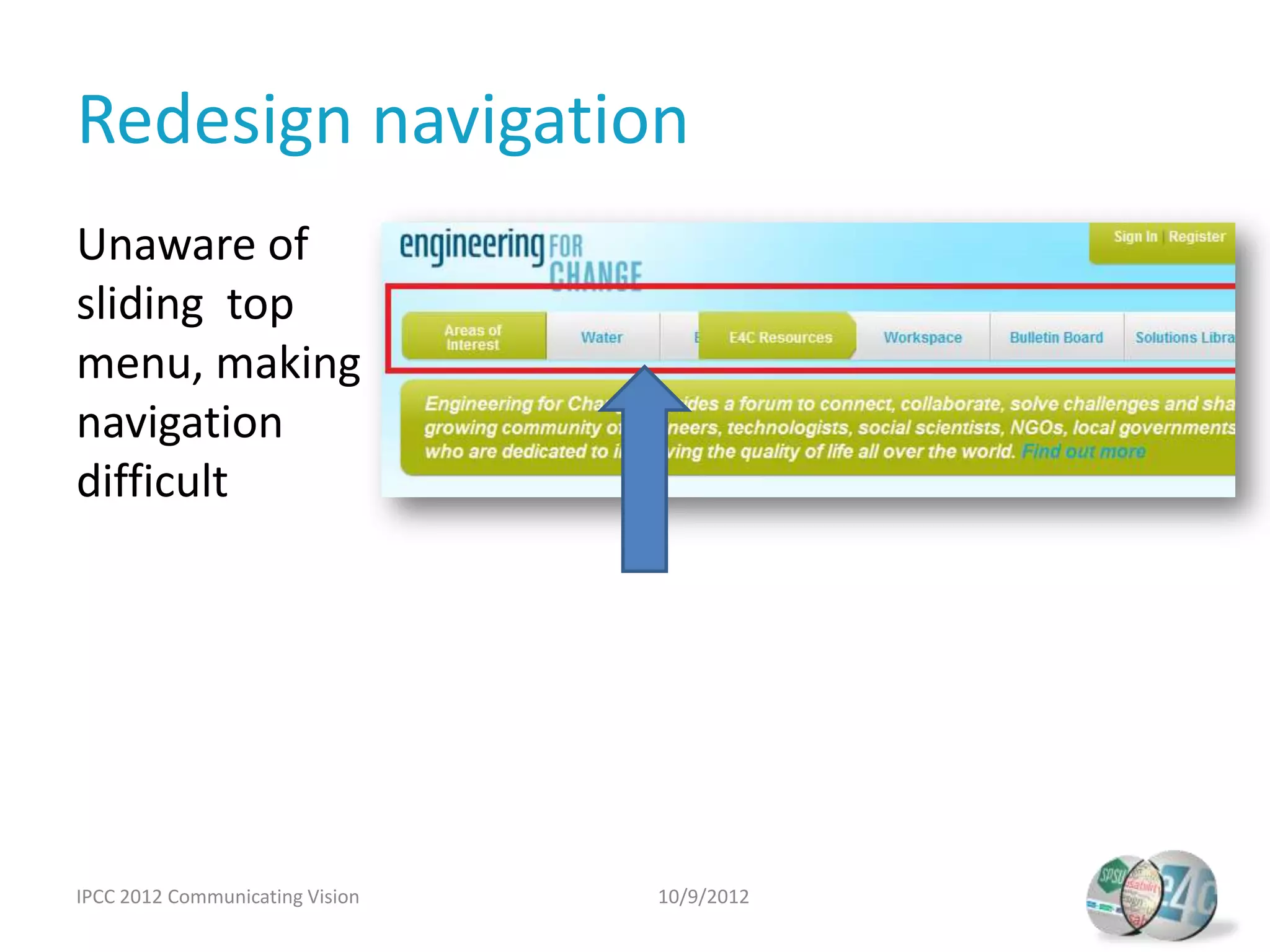

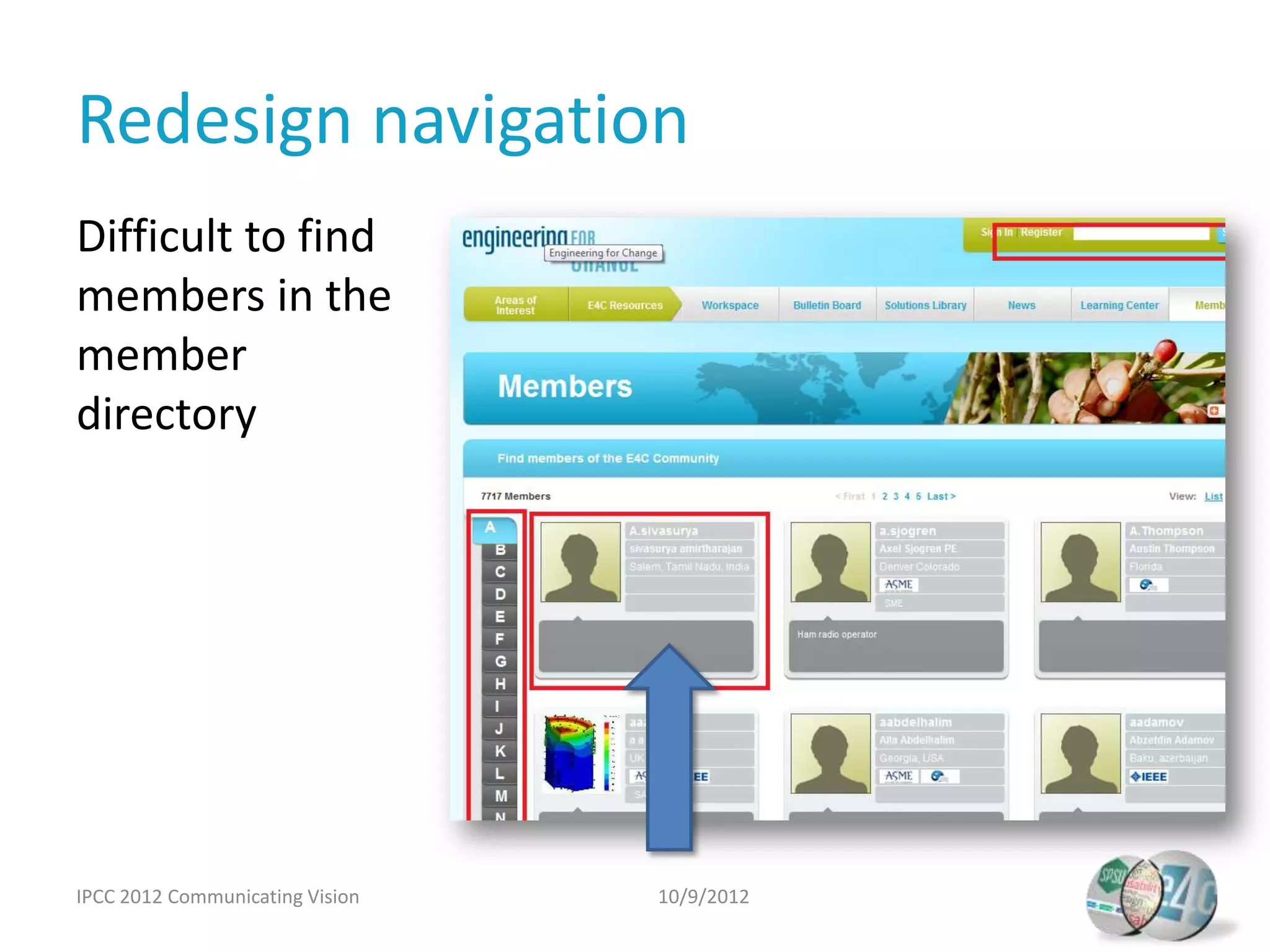

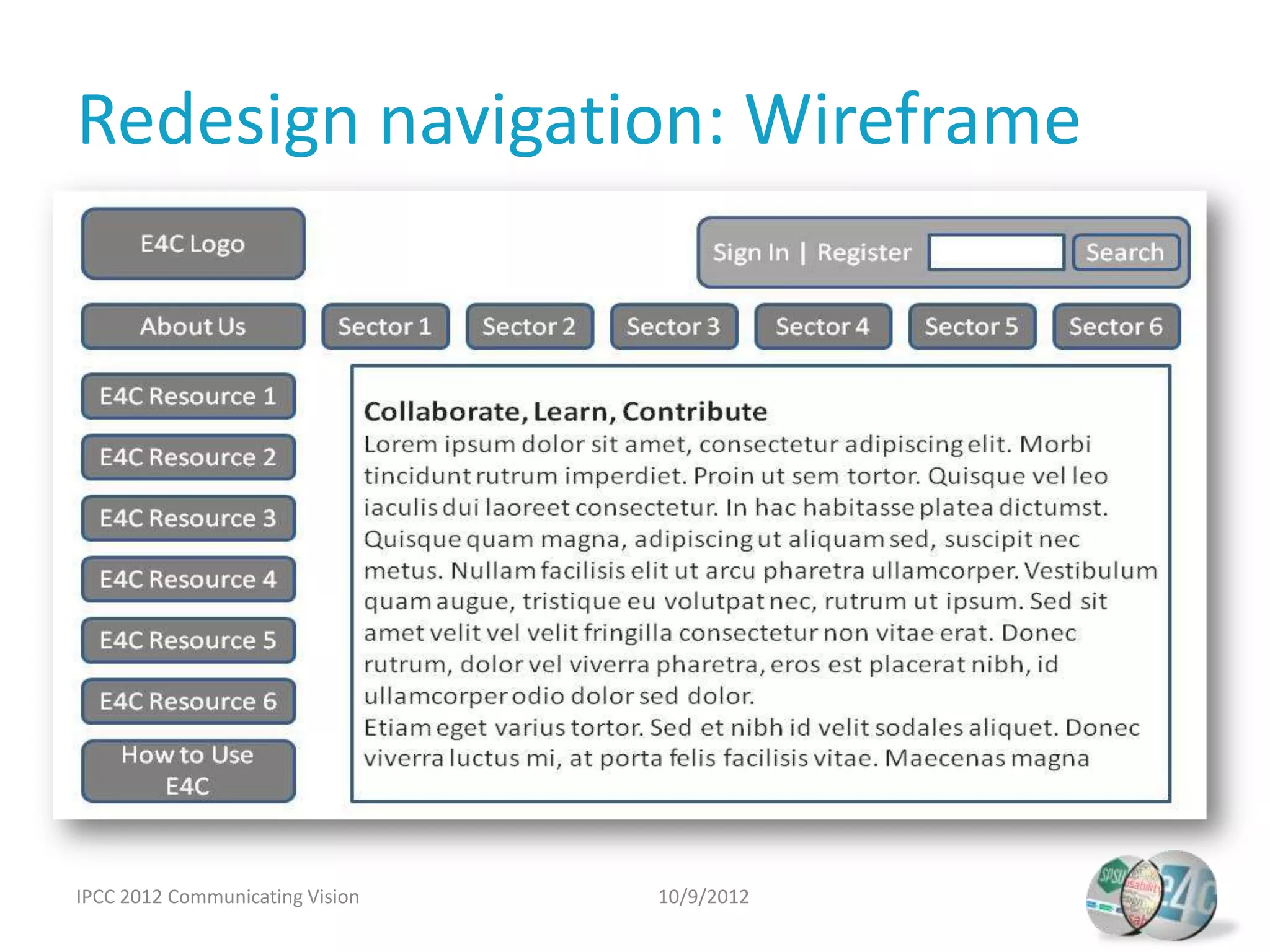

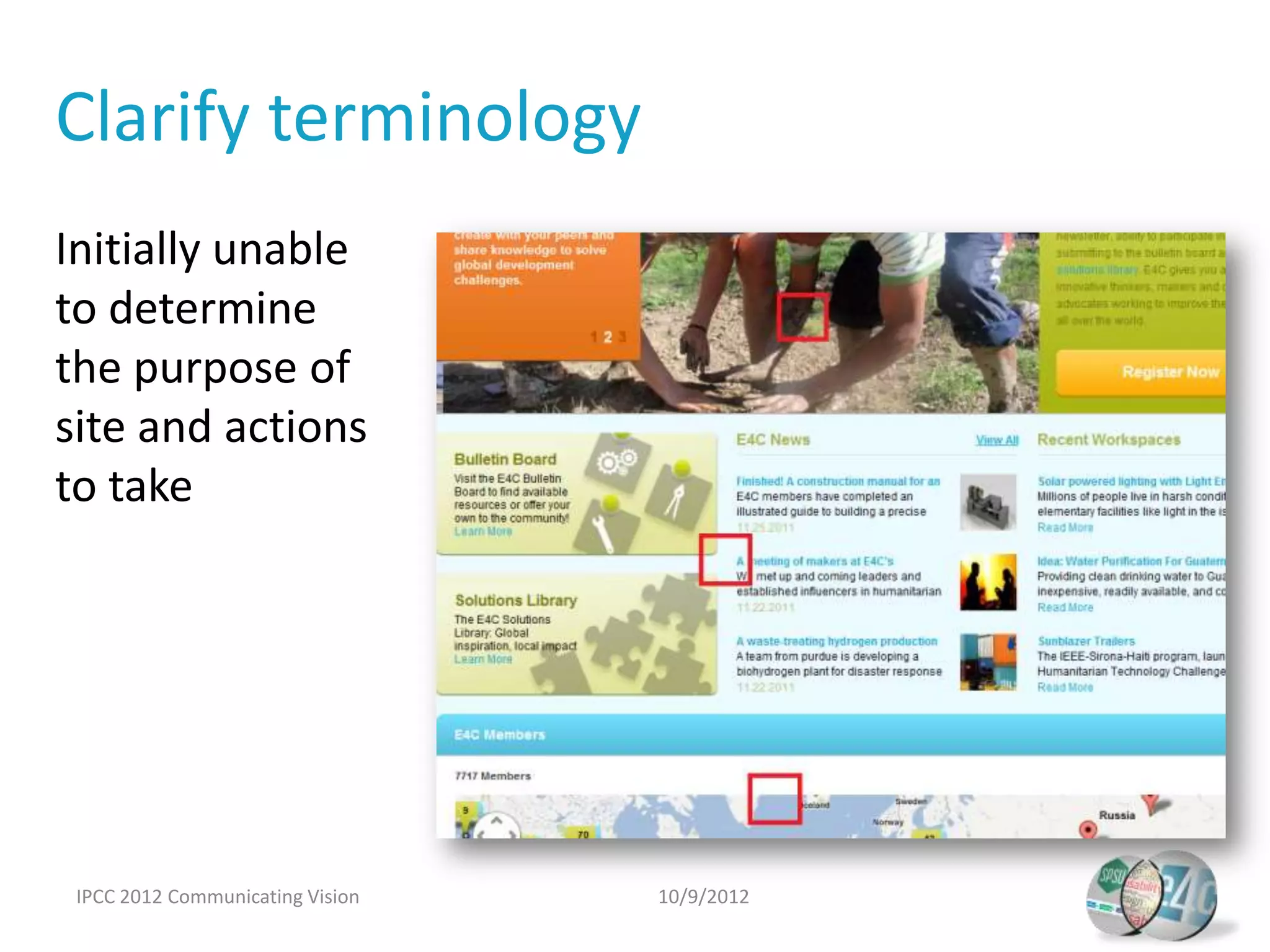

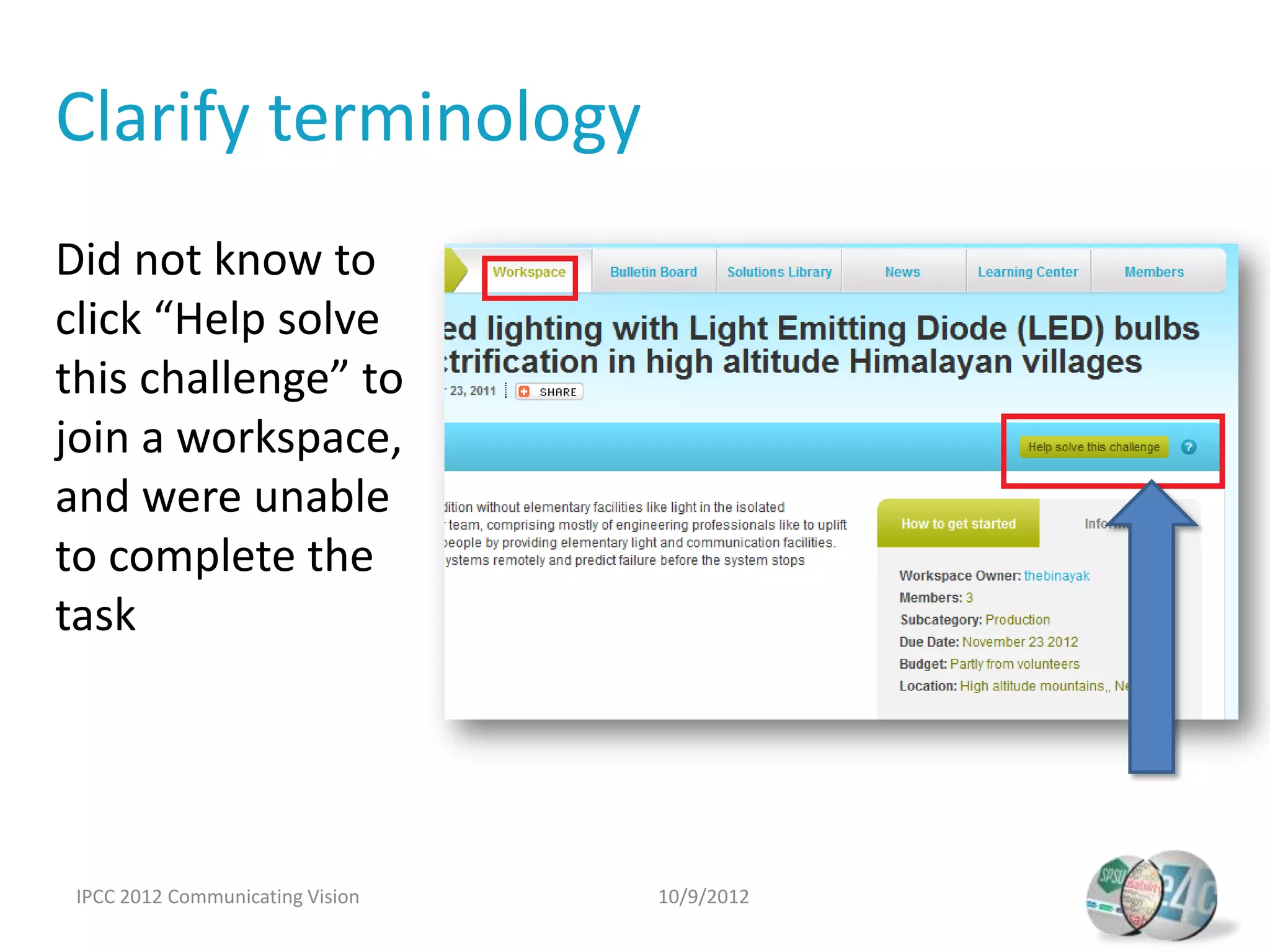

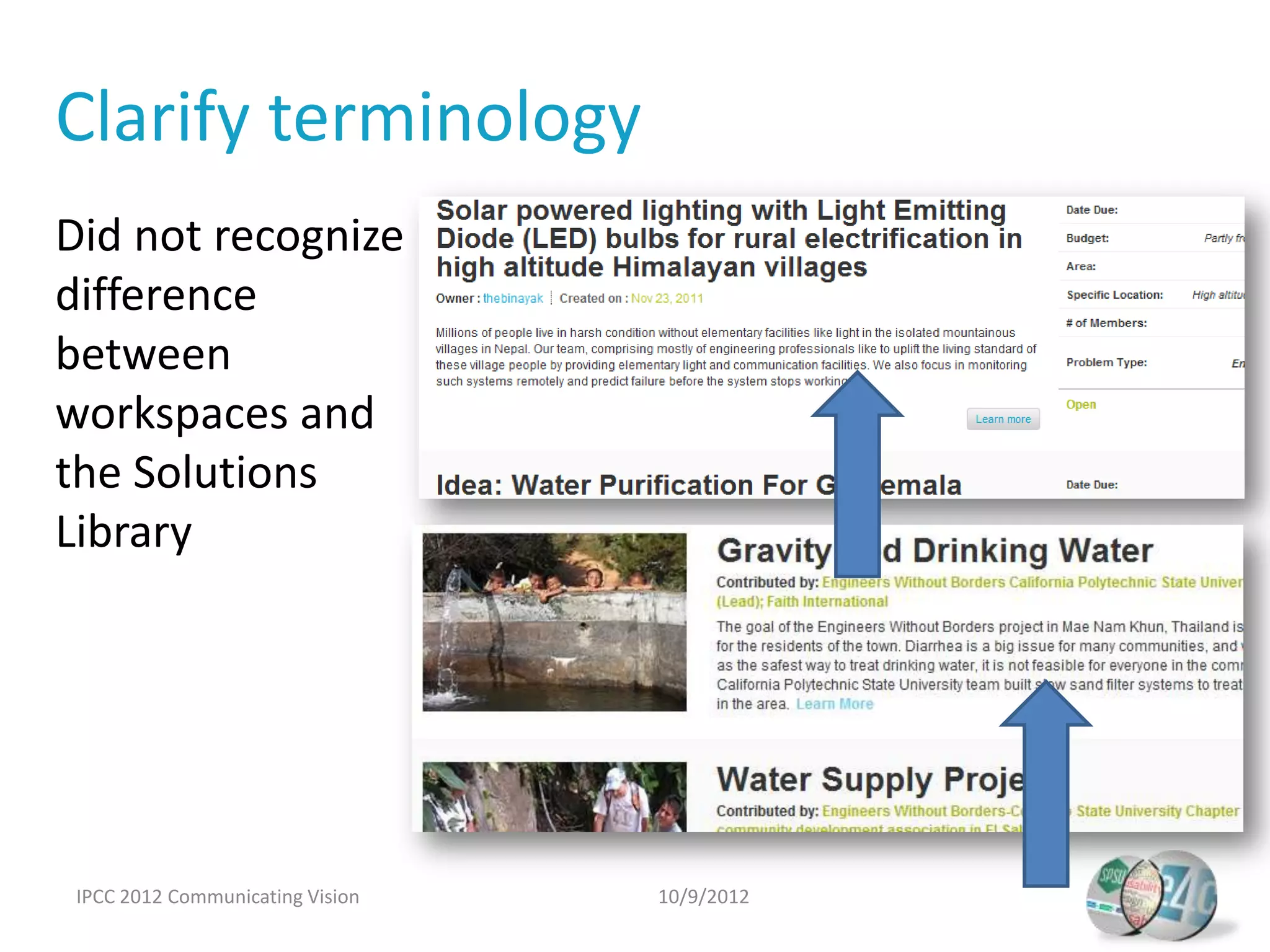

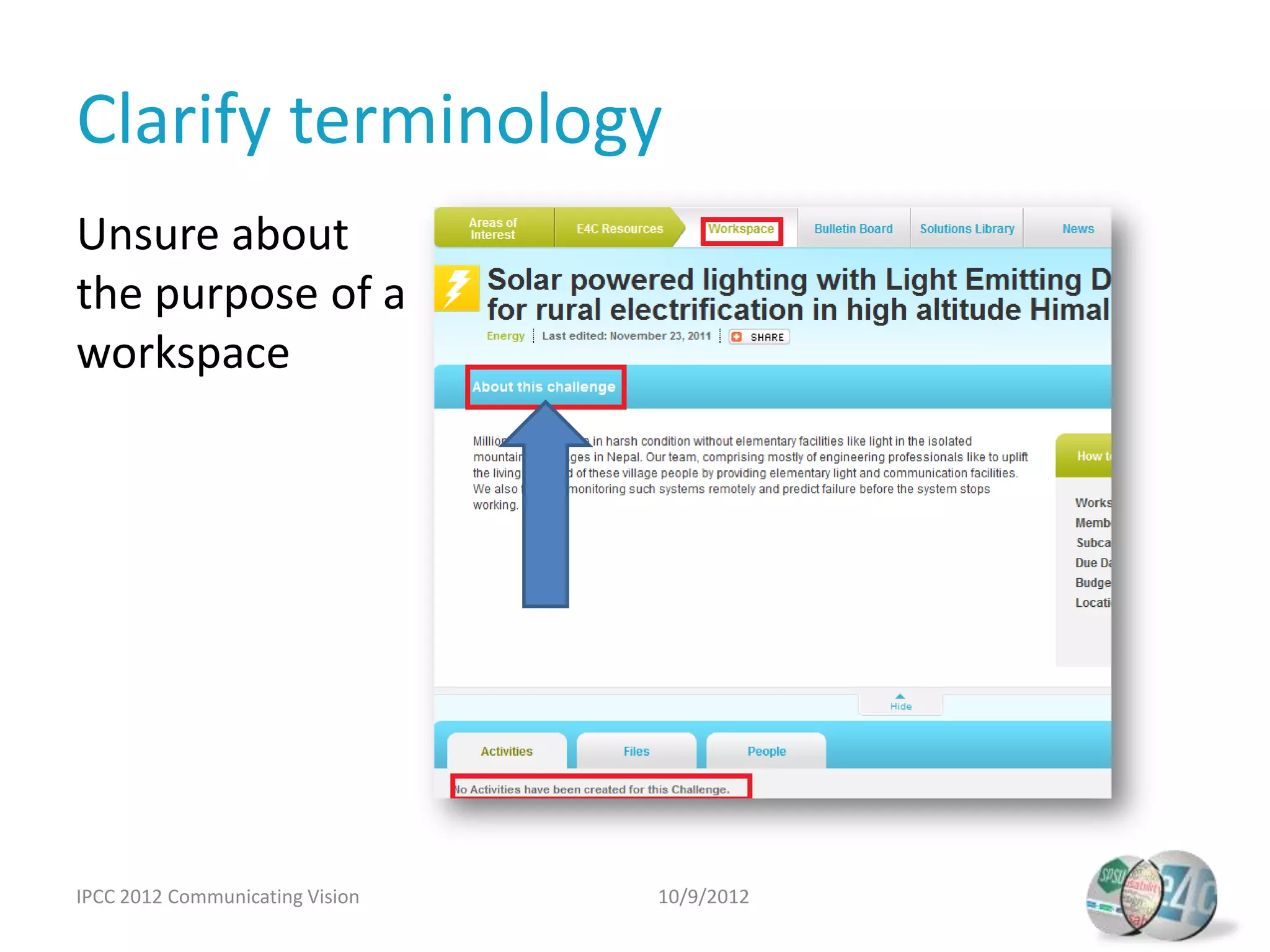

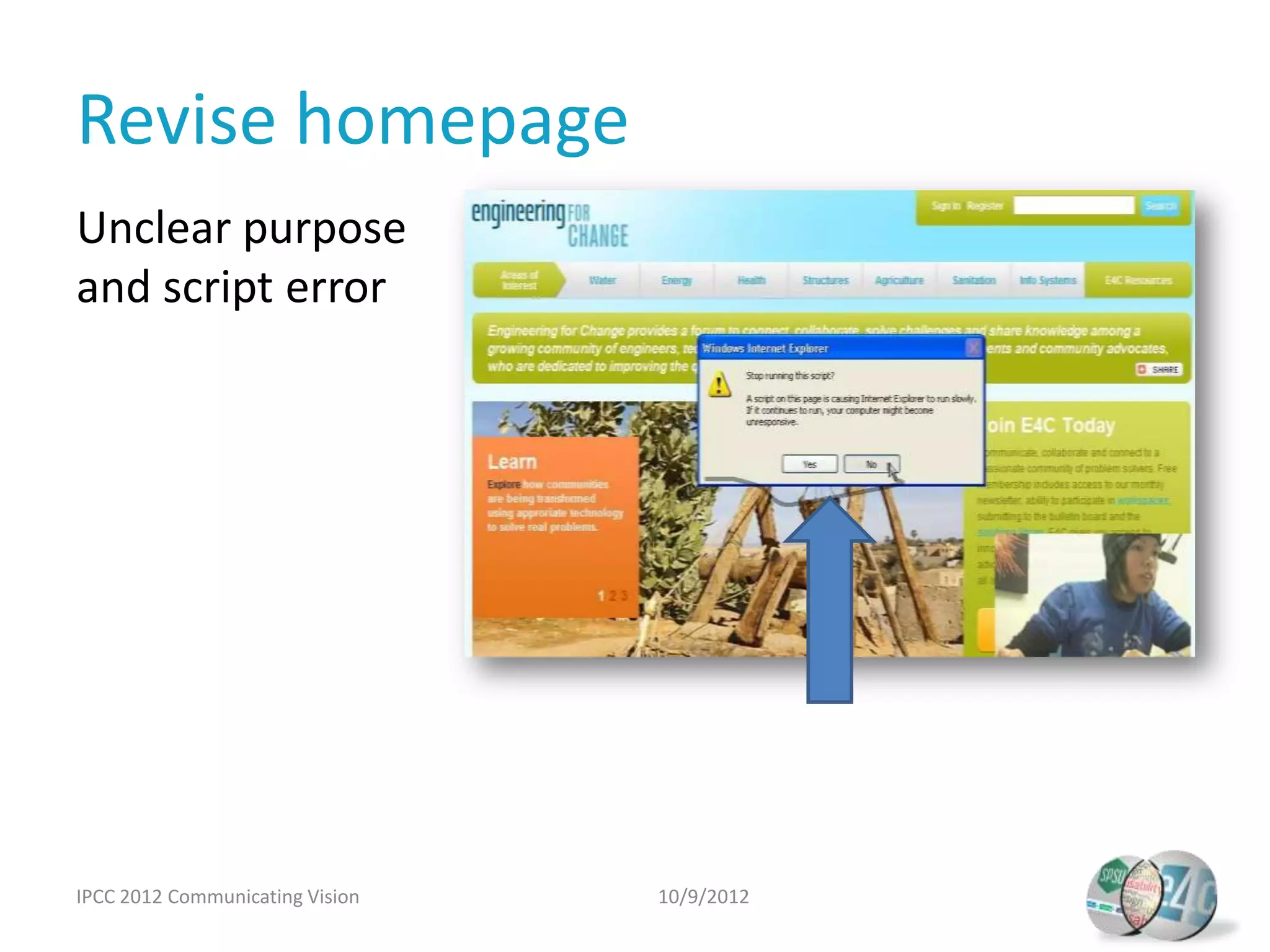

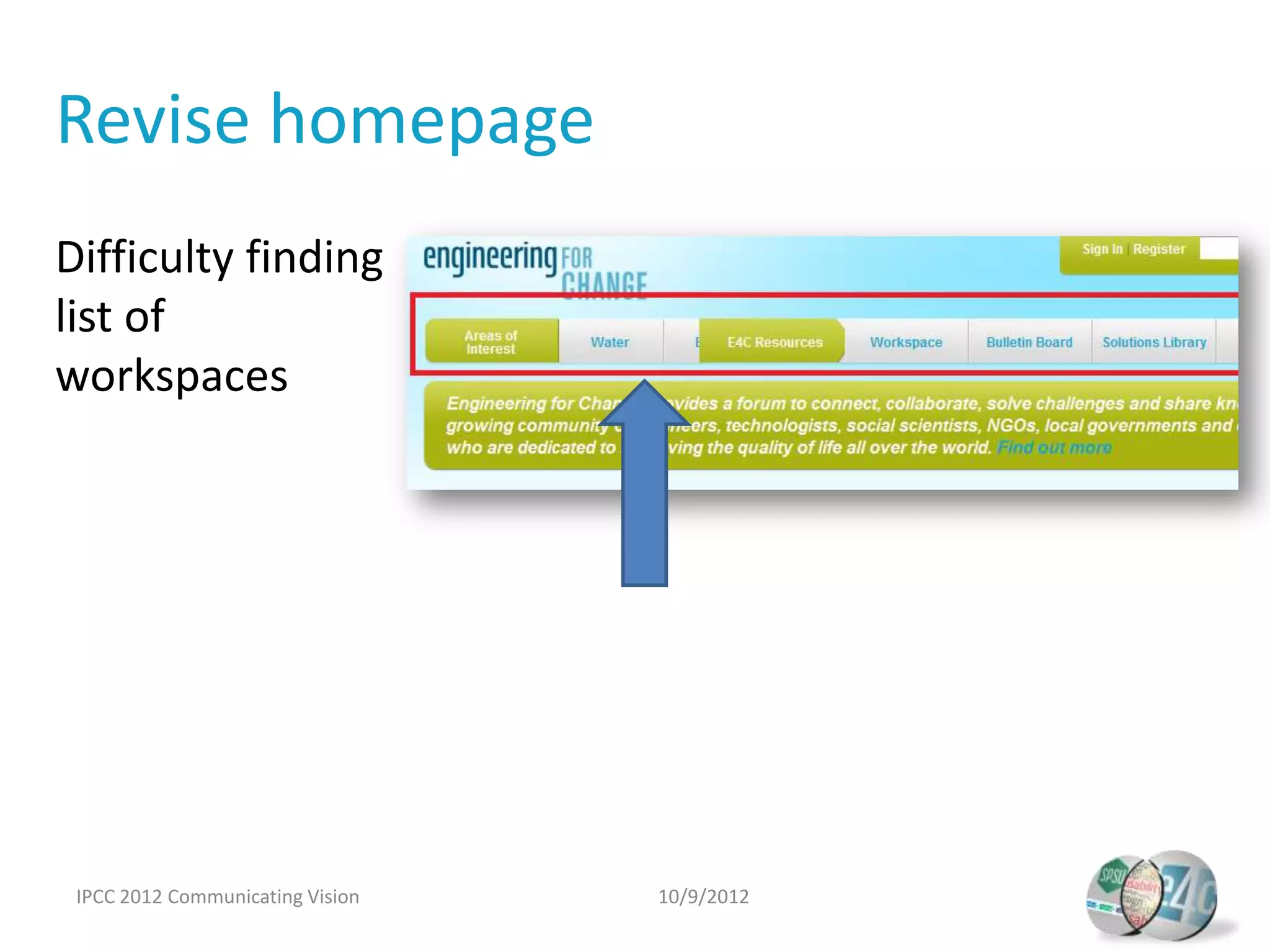

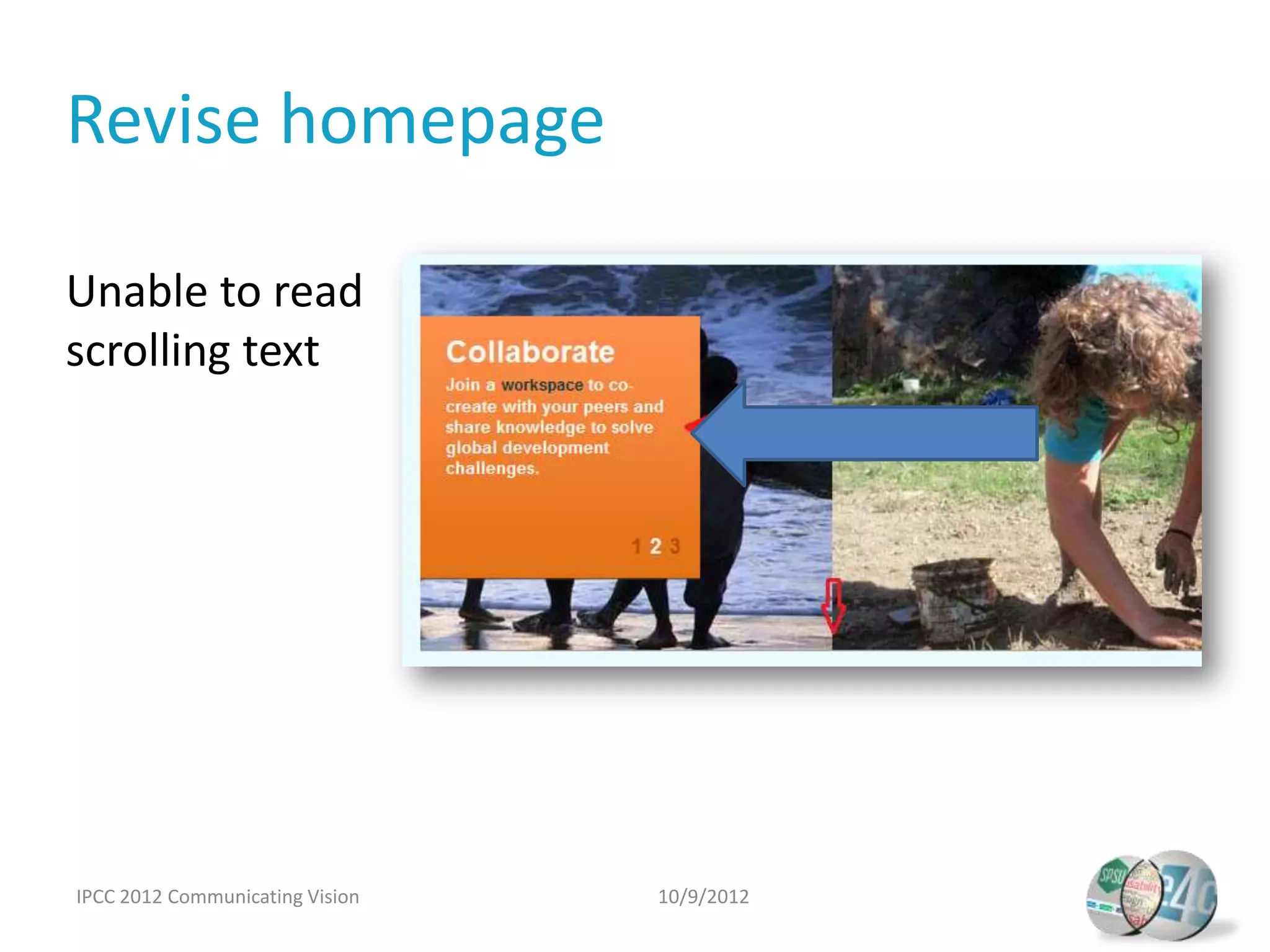

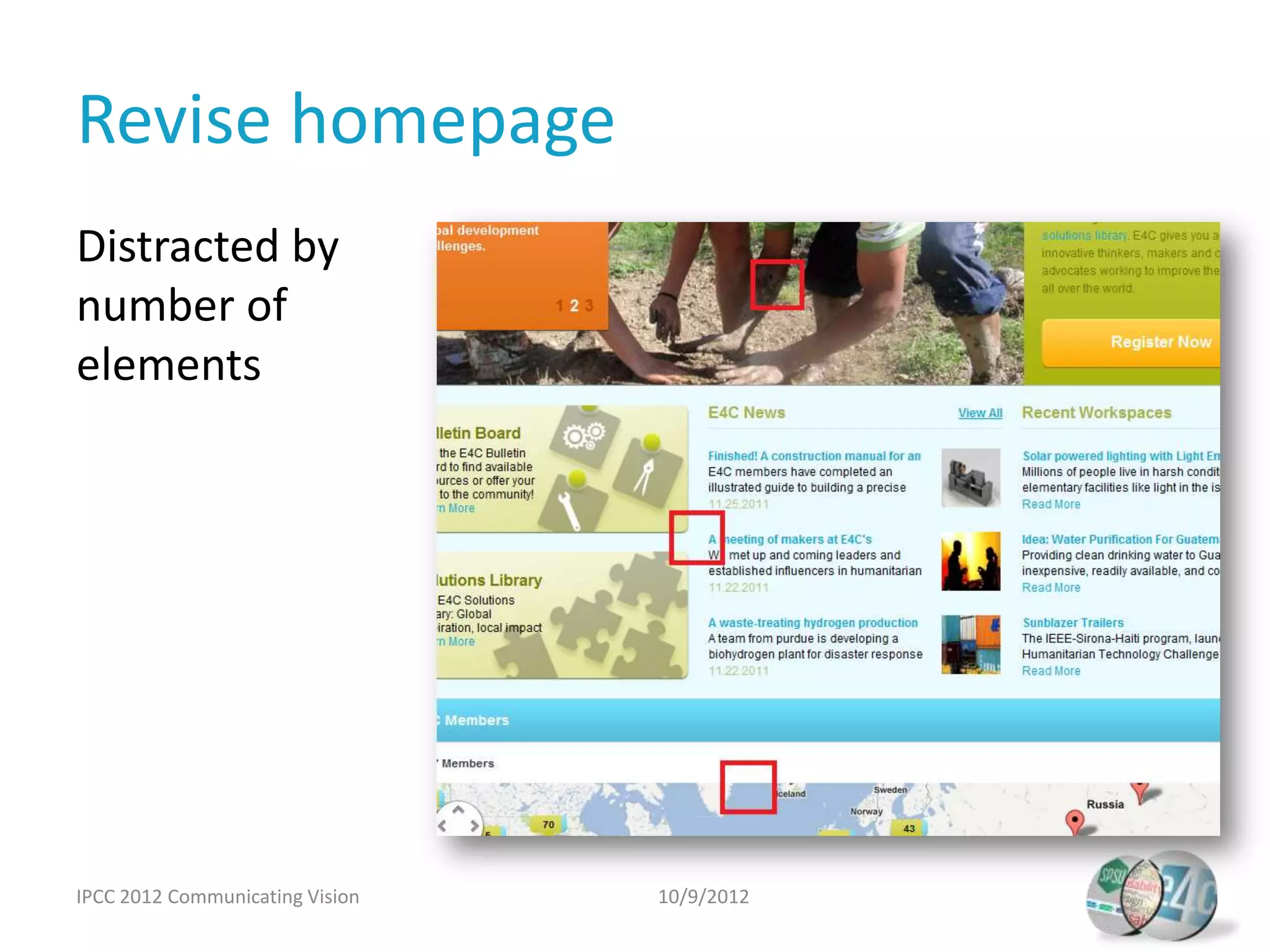

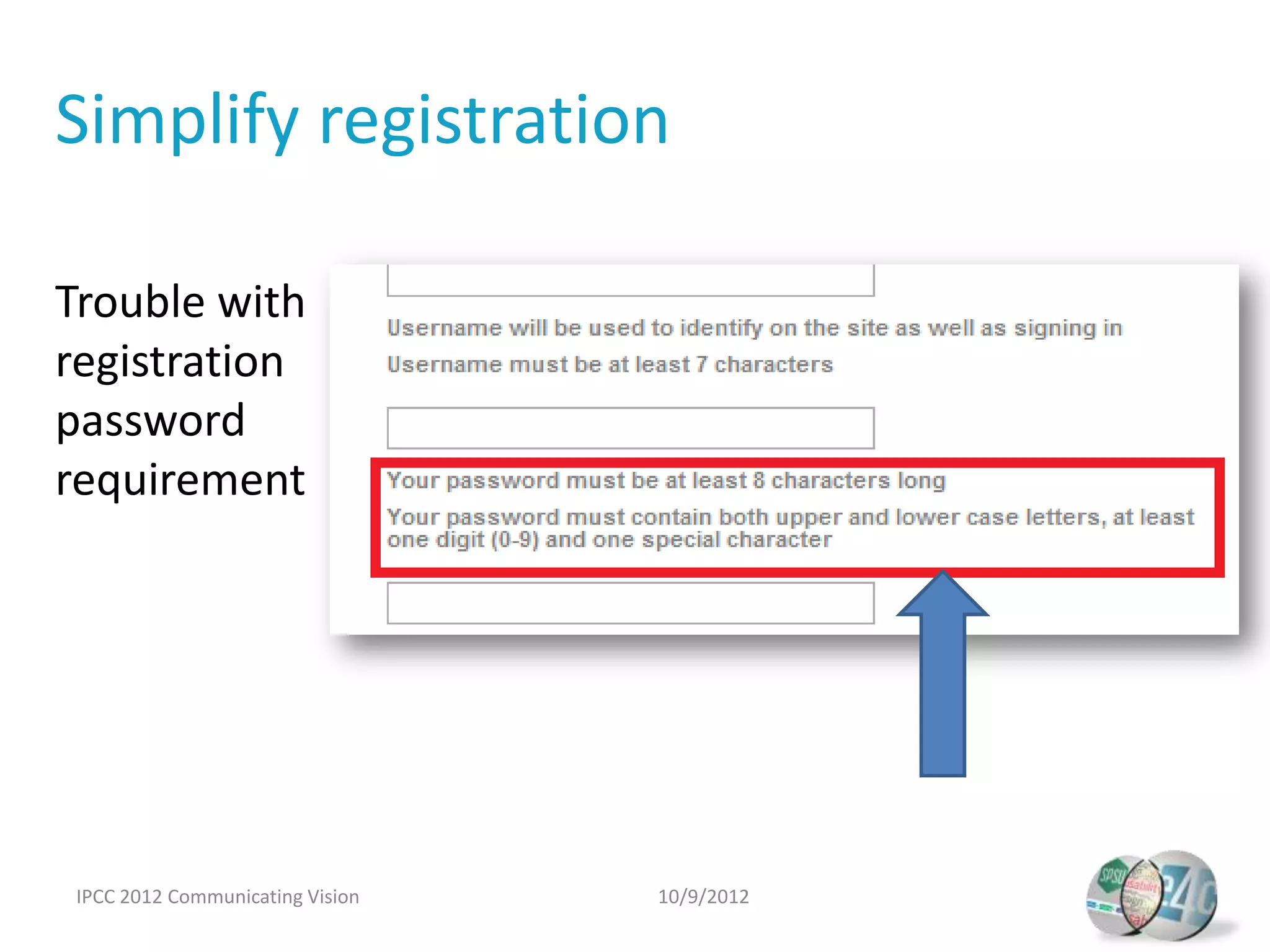

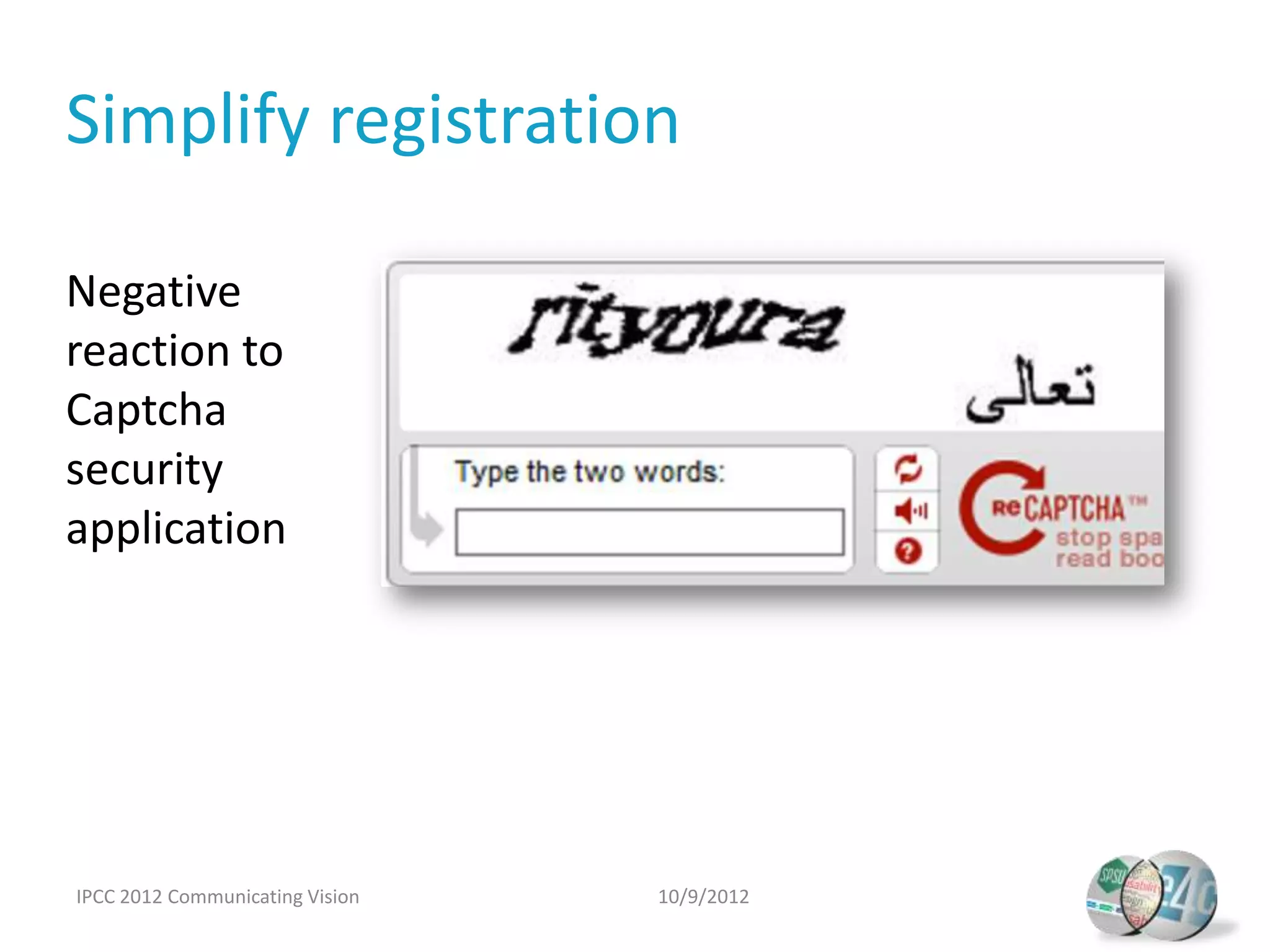

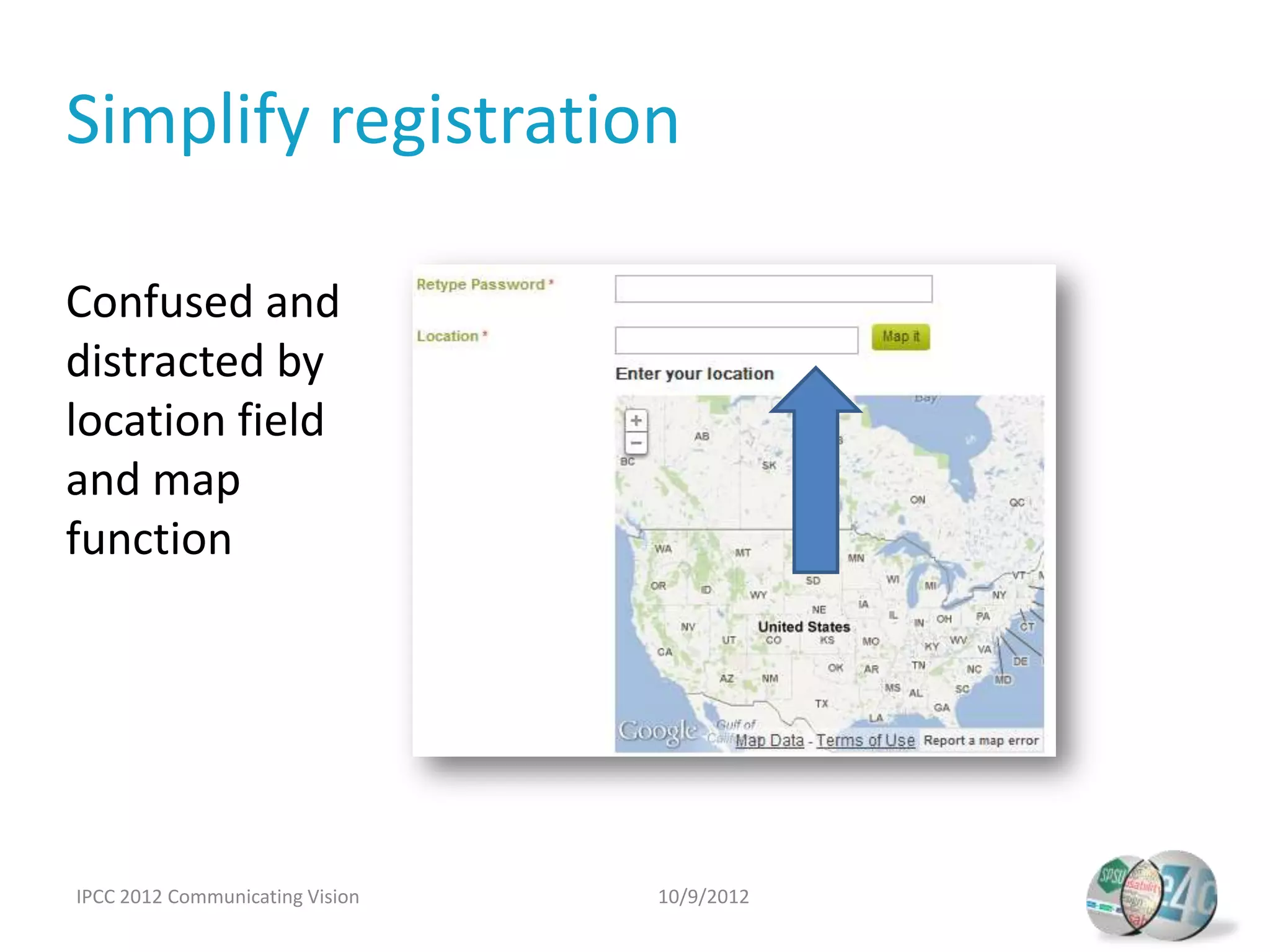

The document presents a usability study, conducted by Dr. Carol Barnum and her team, of how well the Engineering for Change website meets the needs of its community. It outlines the project's setup, user research methods, testing approaches, and key findings; the findings highlighted problems with navigation, terminology, and the registration process. Recommendations include redesigning the navigation, clarifying terminology, revising the homepage, simplifying registration, and integrating social media.