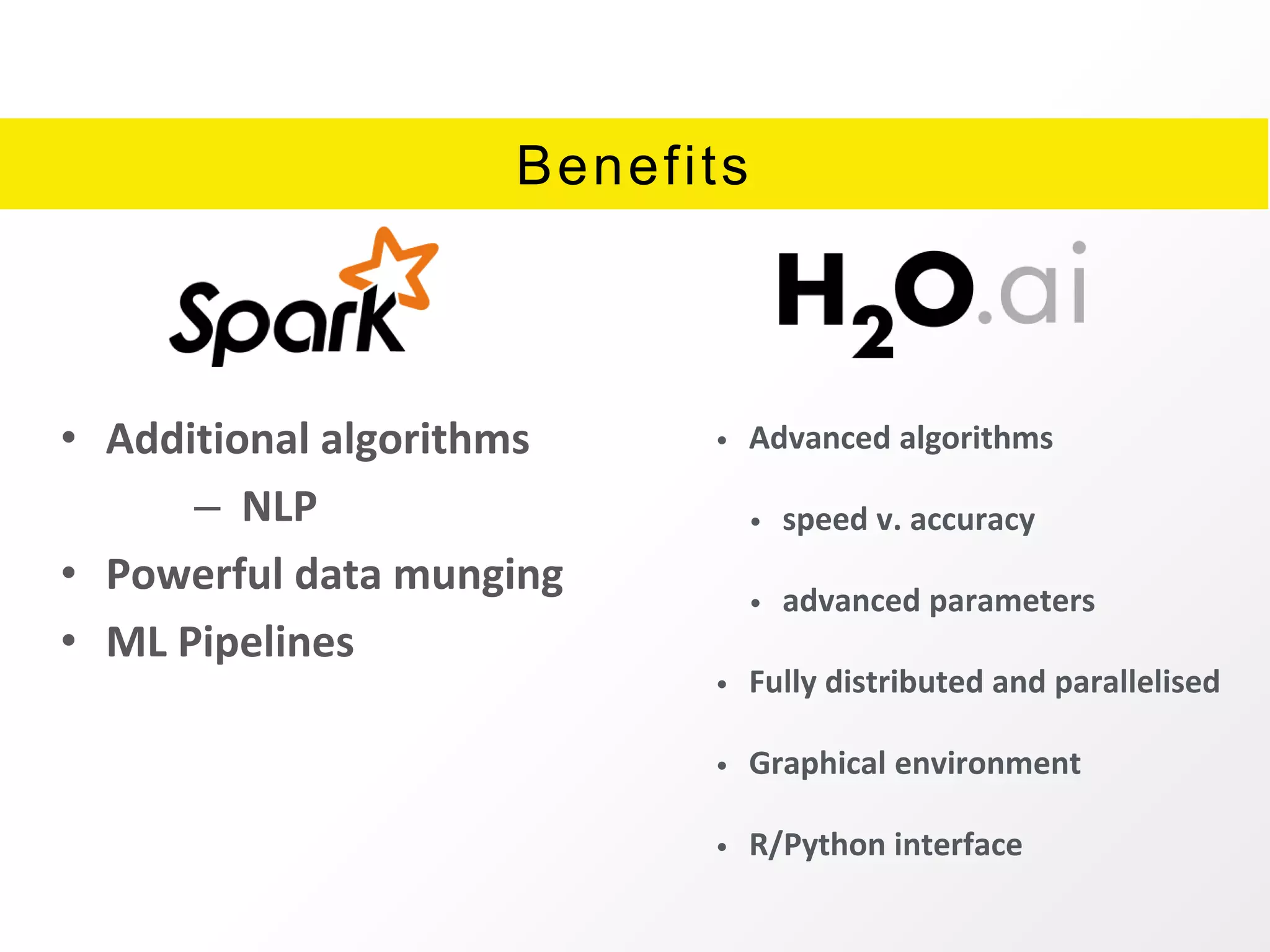

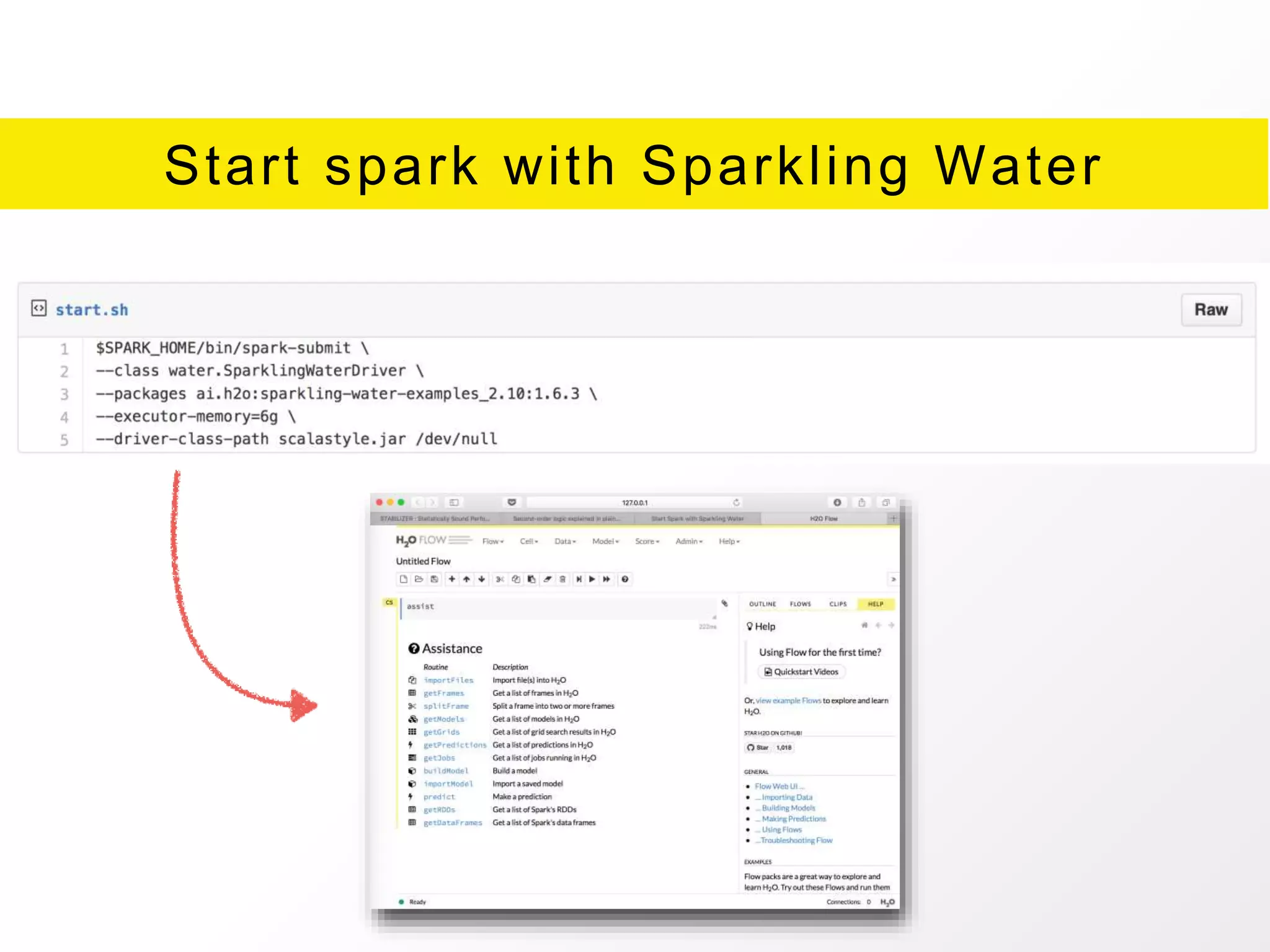

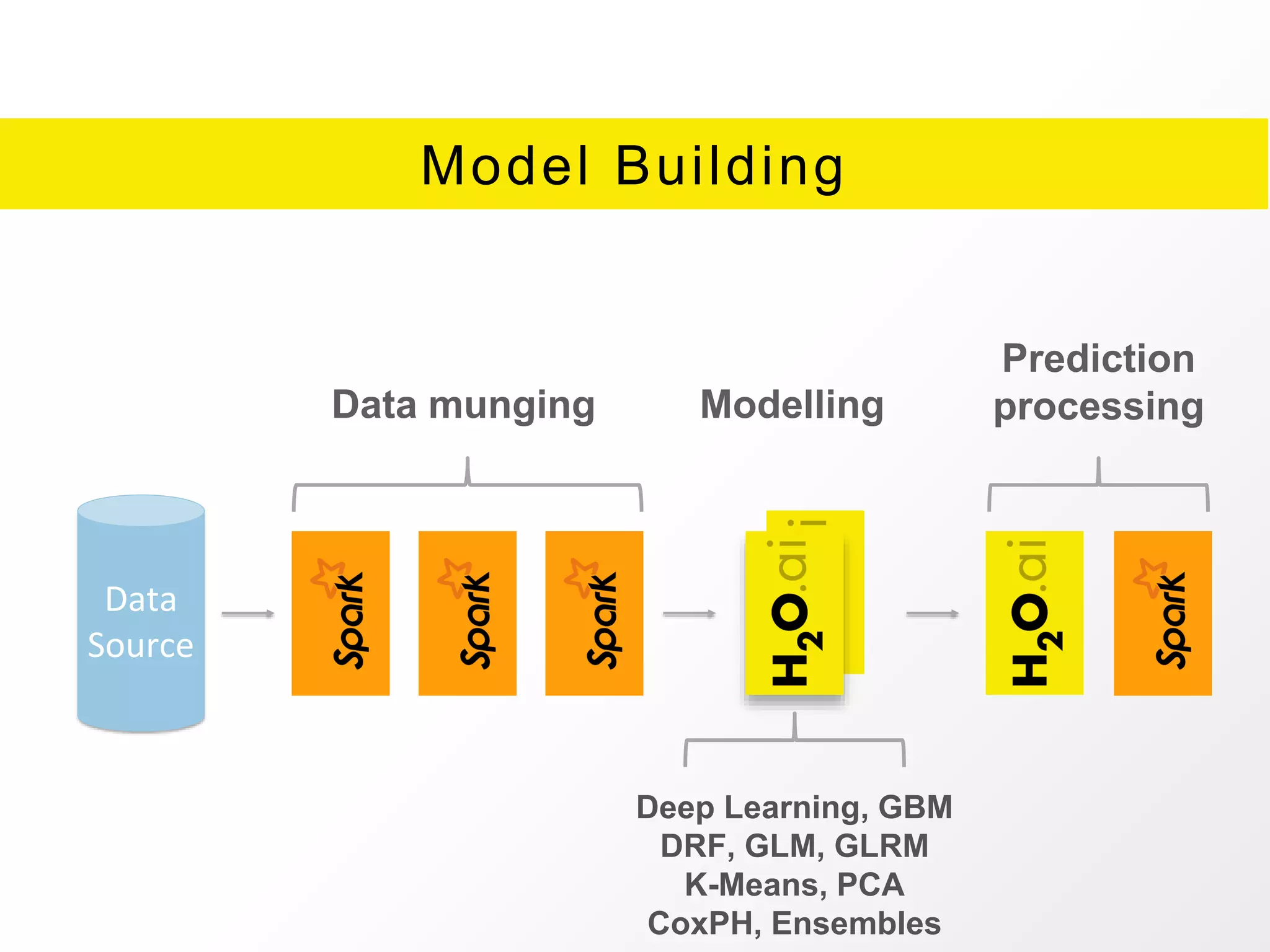

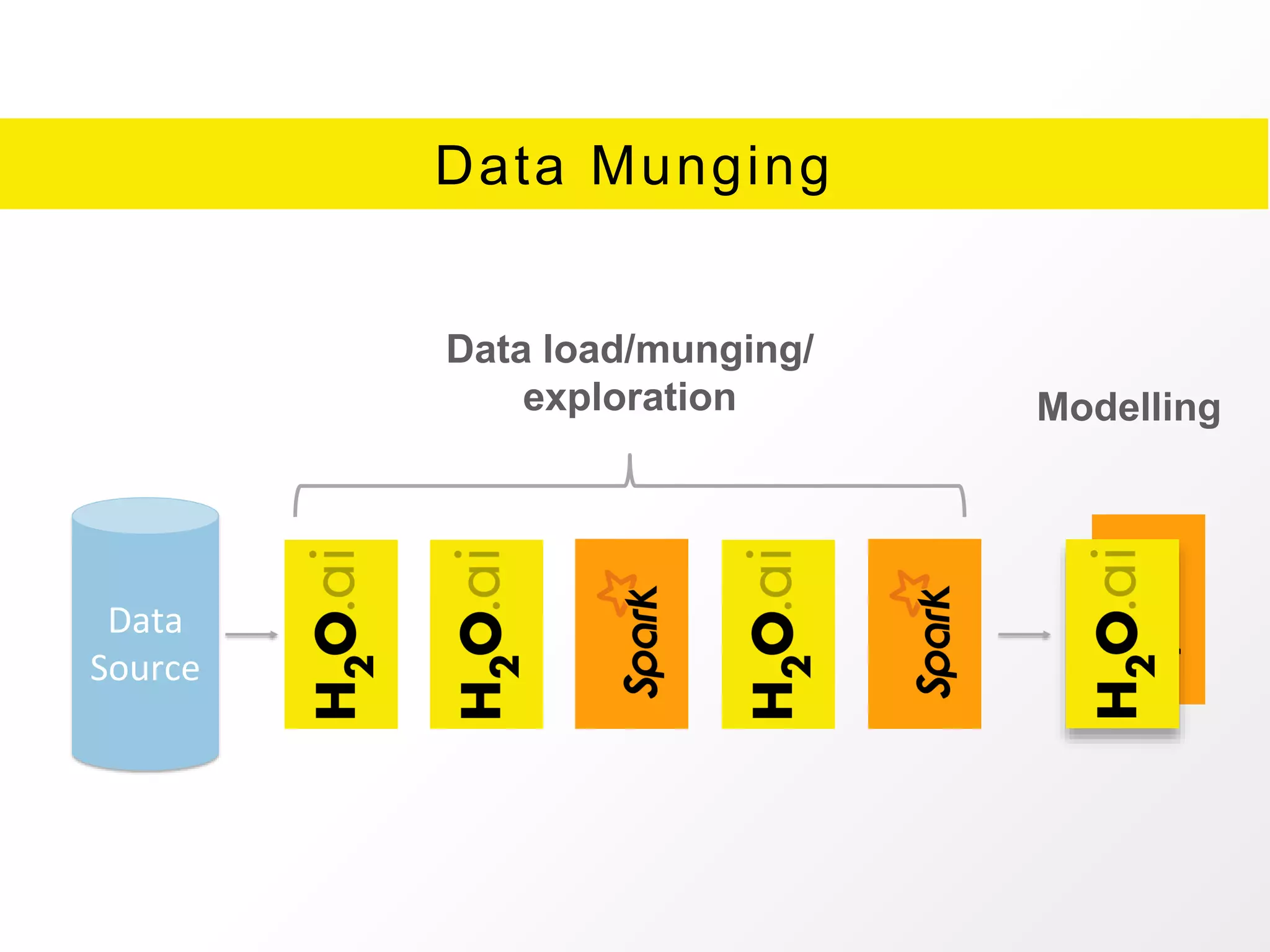

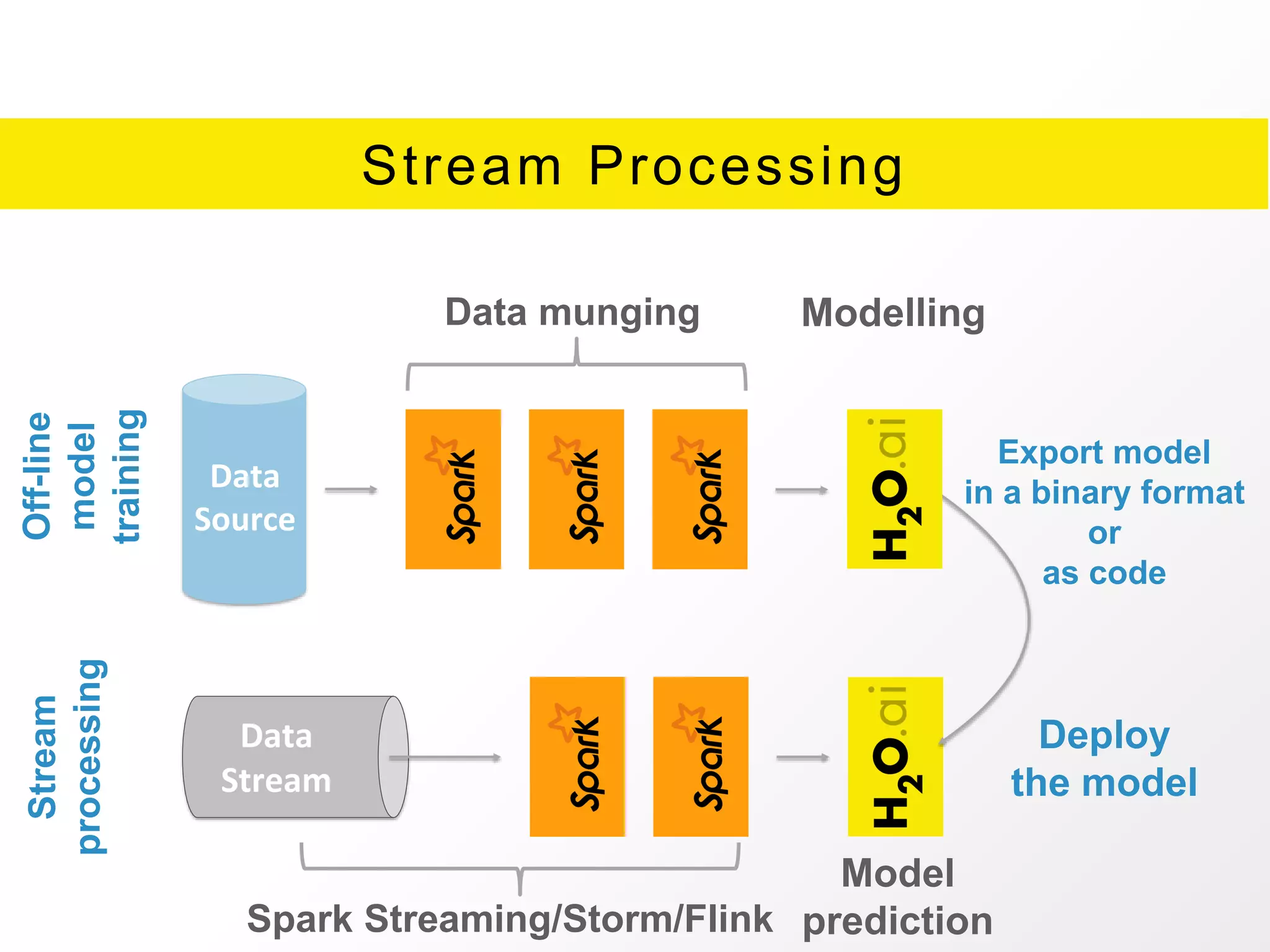

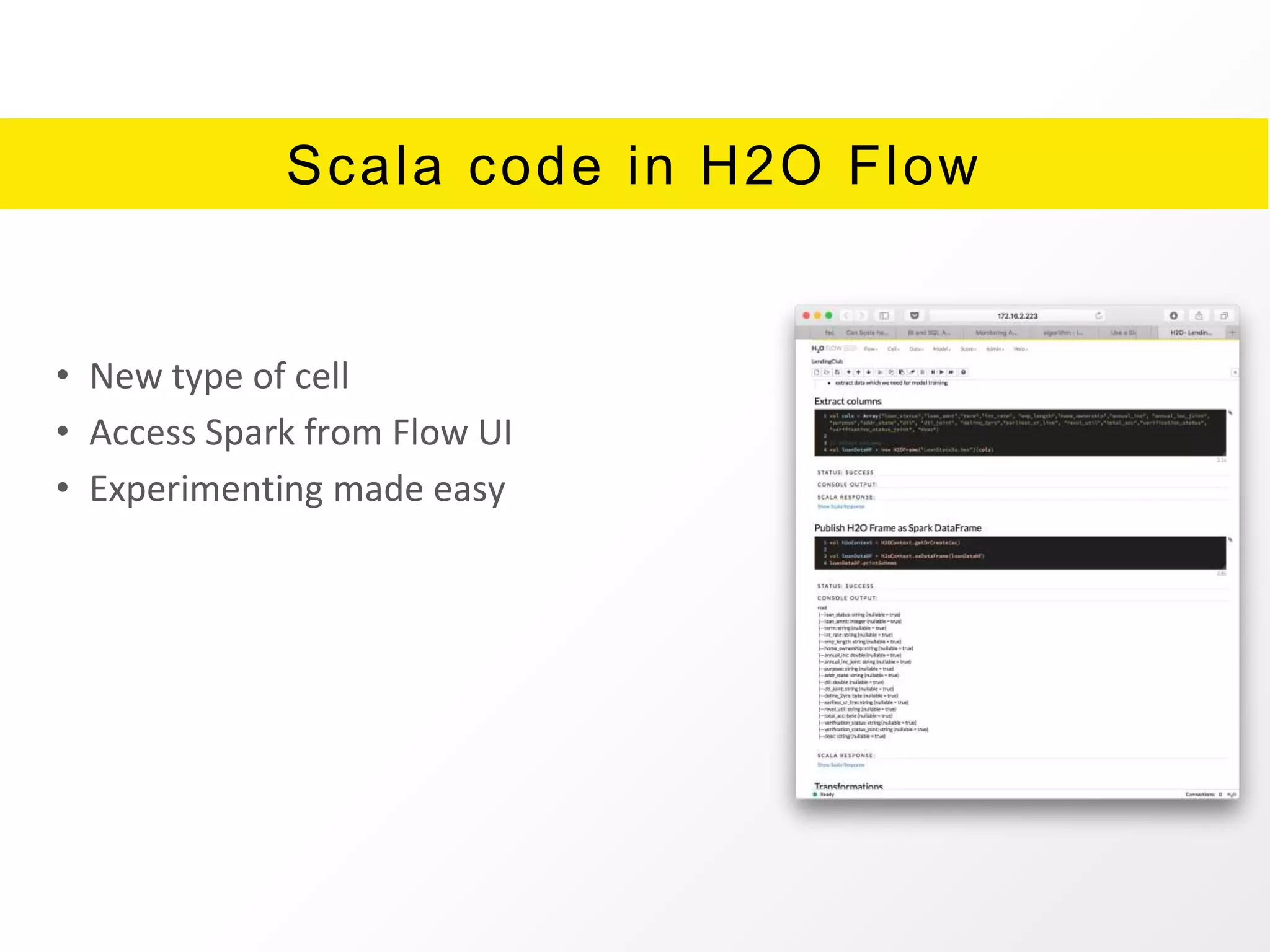

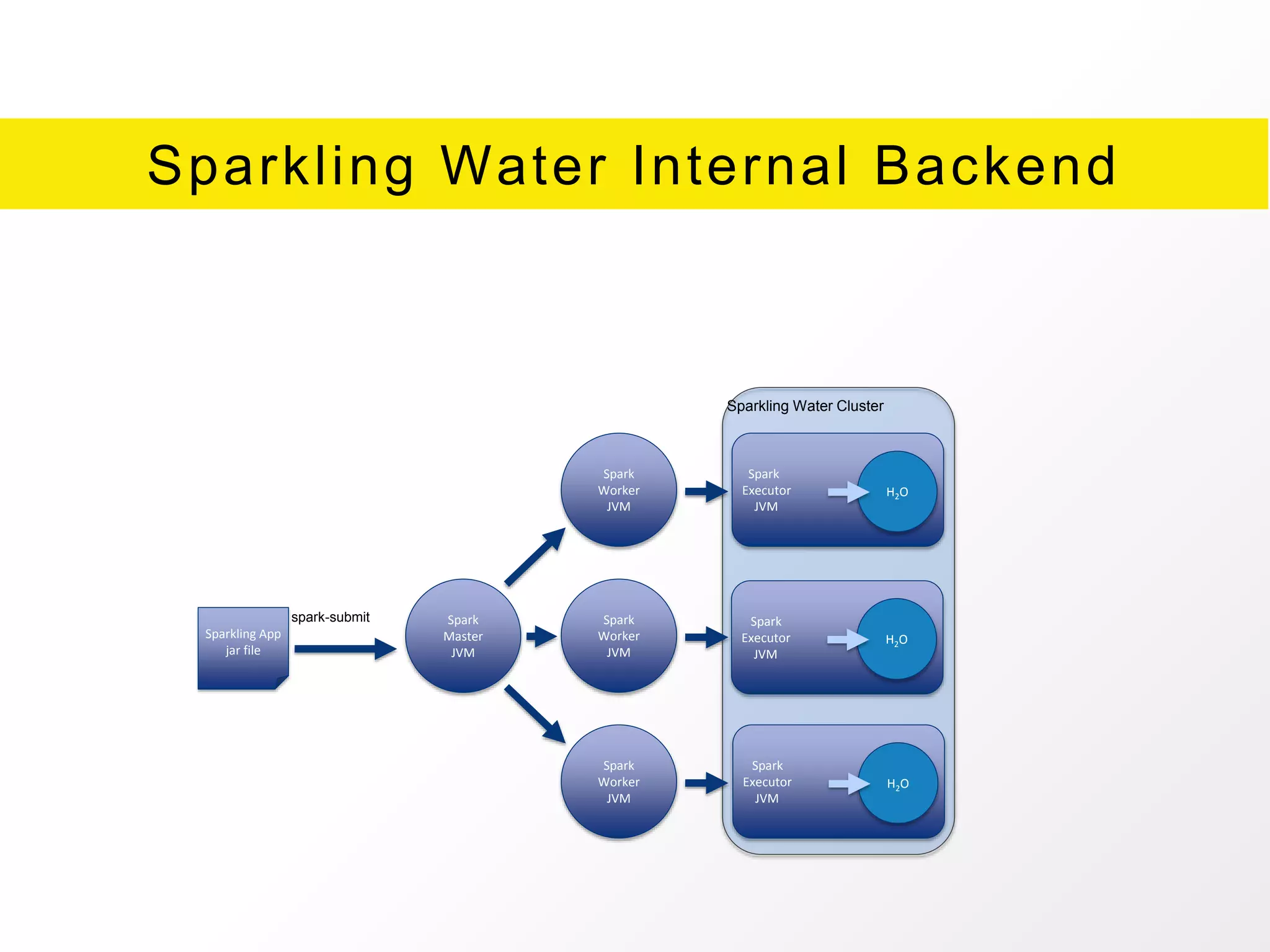

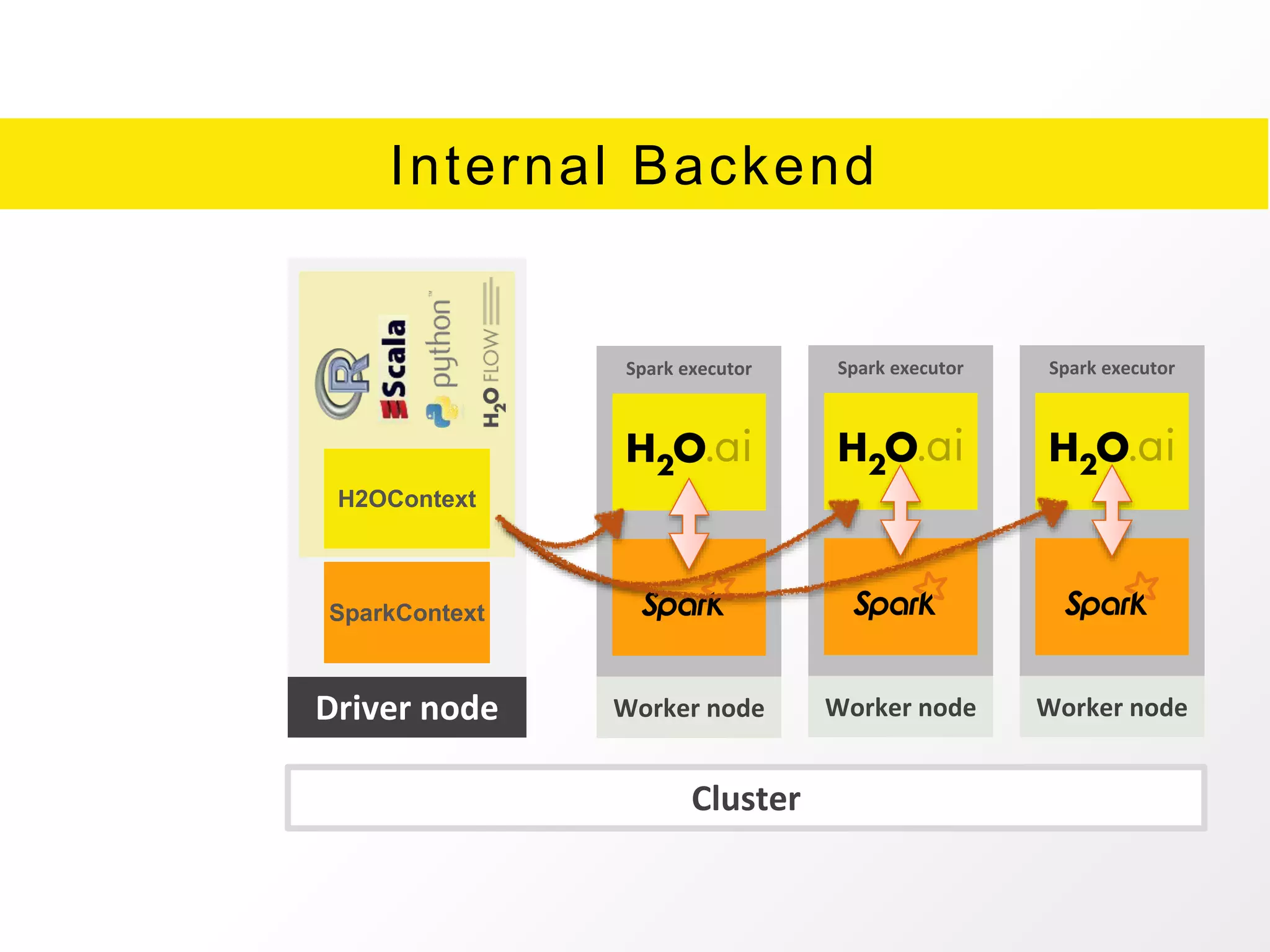

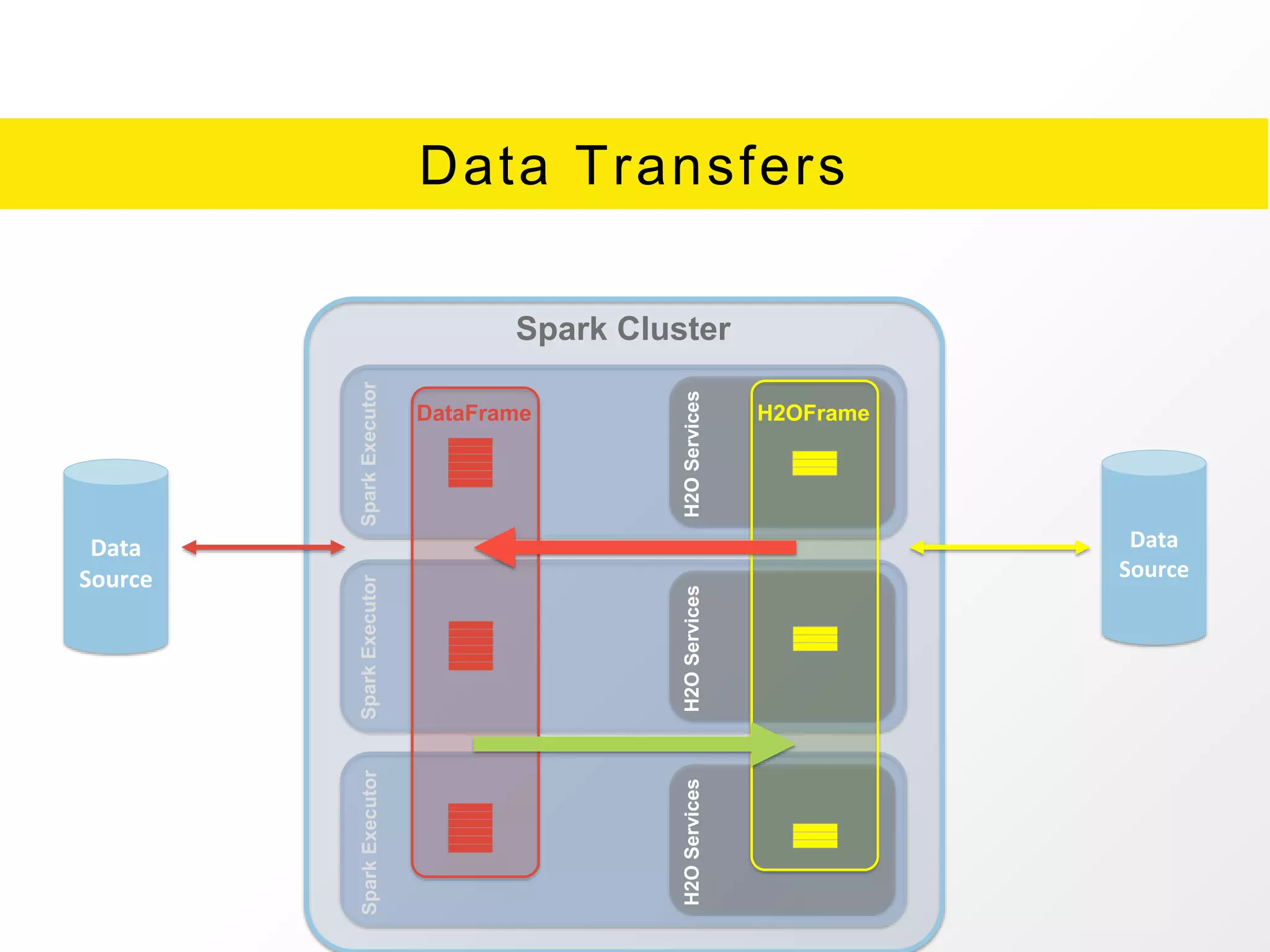

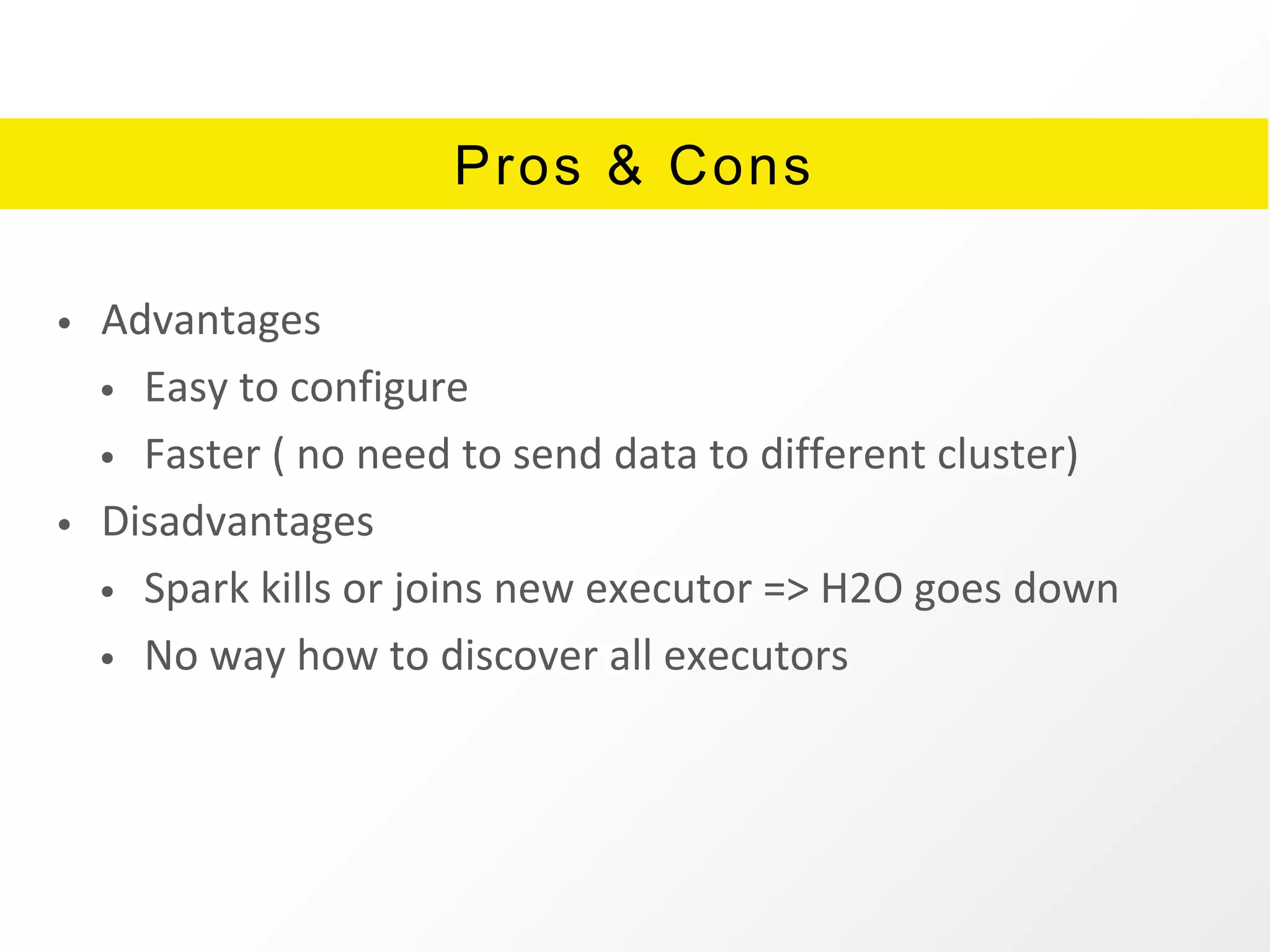

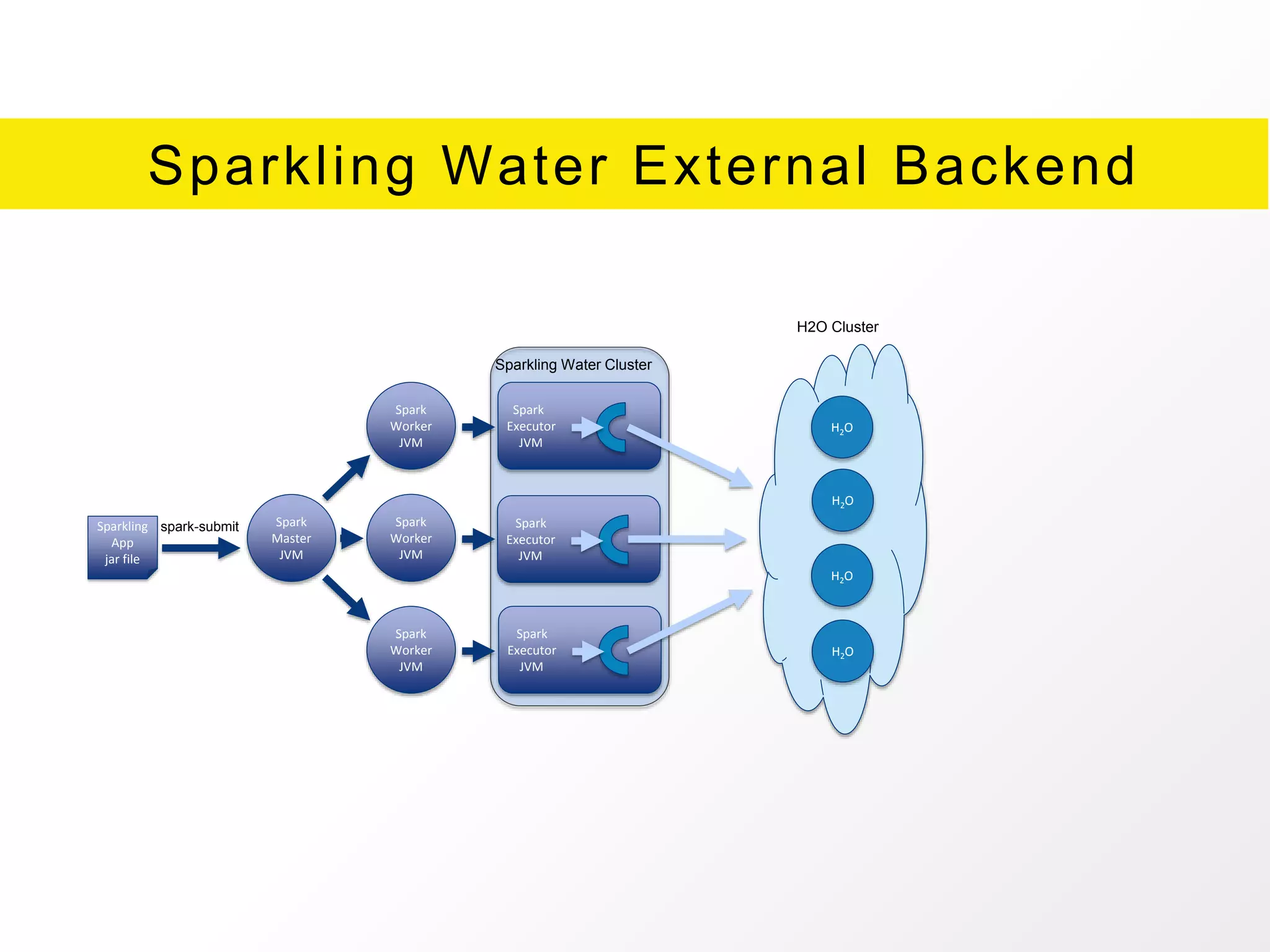

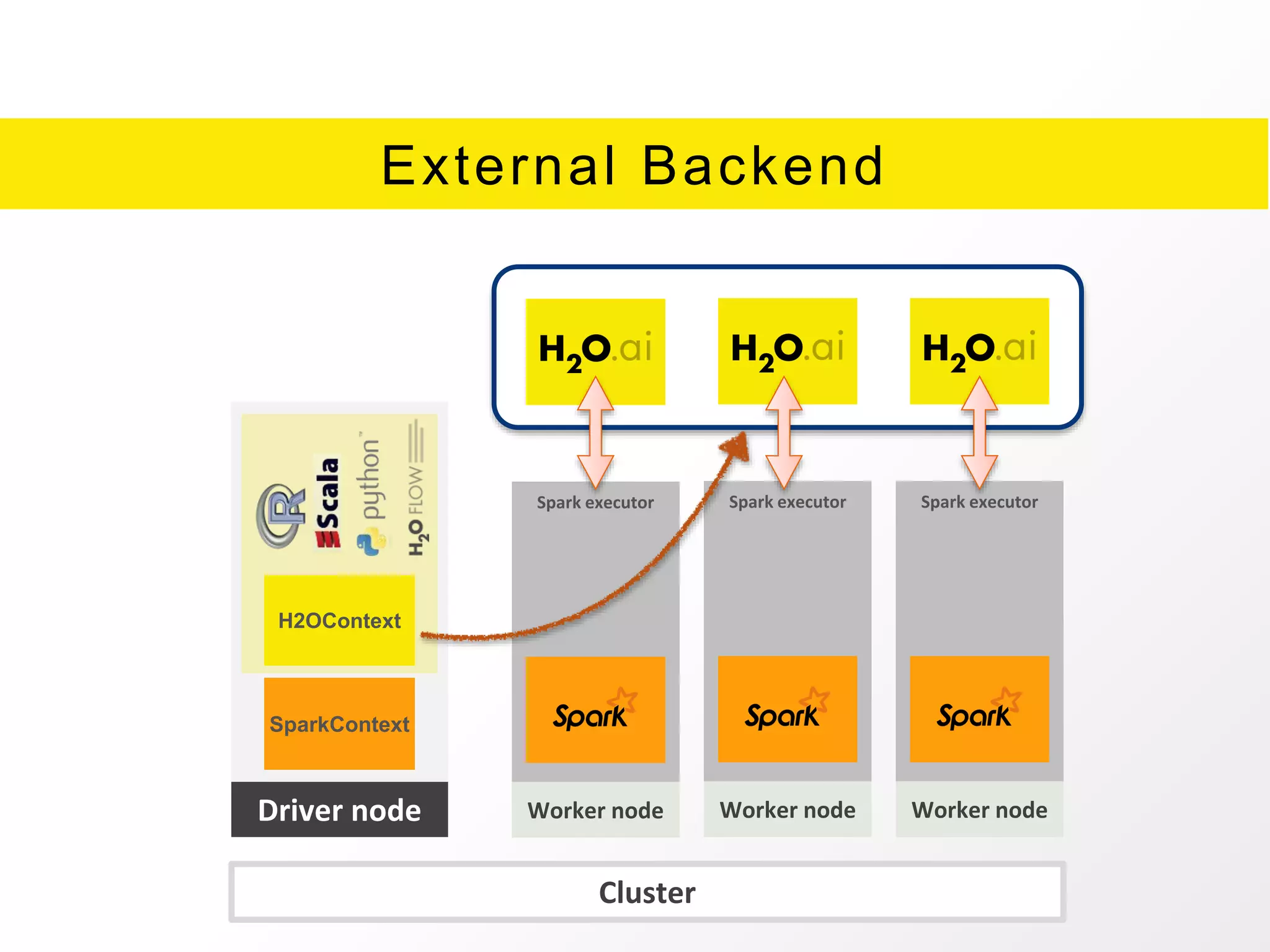

The document presents an overview of H2O.ai's Sparkling Water, which integrates H2O's machine learning algorithms with Apache Spark. It highlights seamless integration between the two platforms, advanced modeling capabilities, and support for multiple programming languages, and it describes the operational modes of the H2O cluster. It also lists resources for documentation, tutorials, and community engagement.