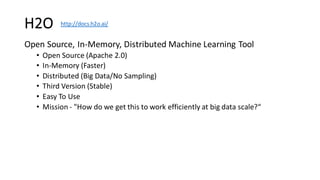

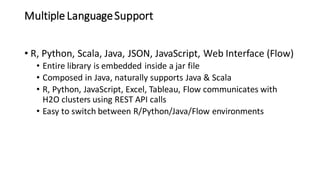

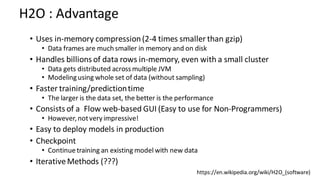

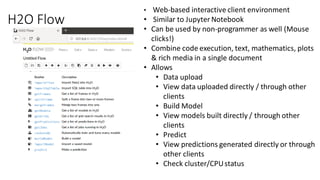

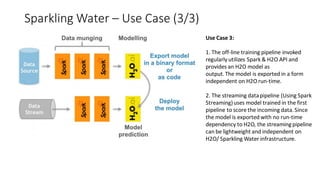

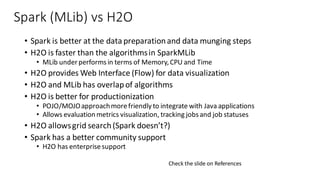

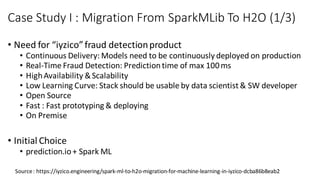

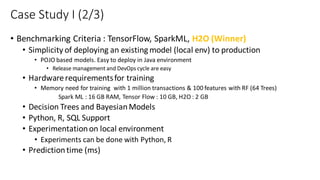

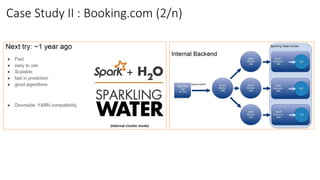

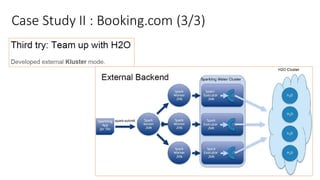

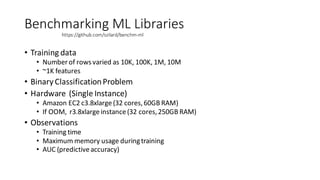

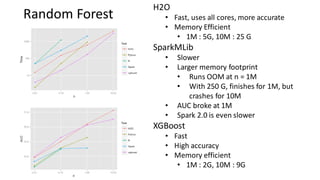

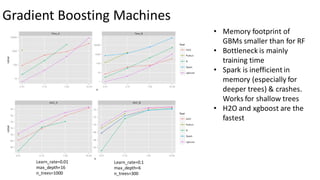

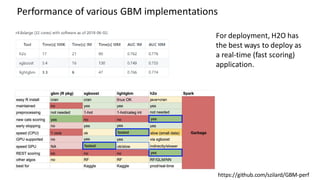

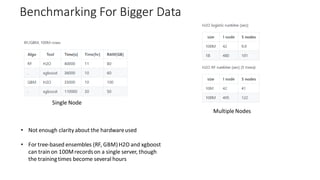

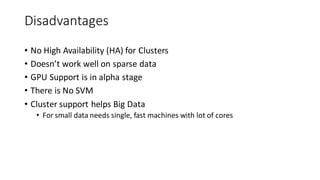

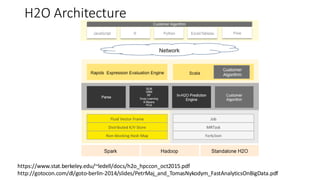

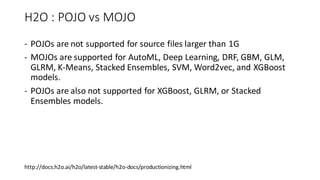

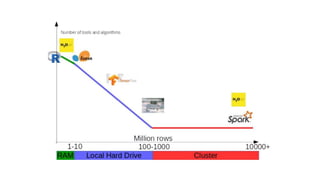

The document compares H2O, an open-source distributed machine learning tool, with Spark ML. It presents H2O as faster than Spark ML and easier to use thanks to its web interface, while crediting Spark with stronger data preparation. It also notes that H2O moves models into production efficiently, and it cites several case studies highlighting H2O's advantages over Spark in real-world applications.