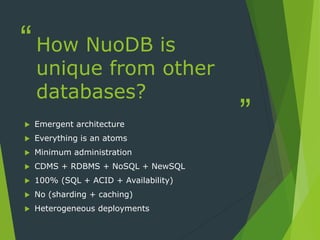

NuoDB is an elastic SQL database built on an emergent architecture in which everything is represented as autonomous atoms. Atoms replicate themselves across nodes, which provides scalability without compromising ACID transactions or requiring additional administration. Unlike traditional SQL databases, NuoDB's distributed model lets it scale elastically in the cloud while providing full SQL functionality and remaining highly available when nodes fail.
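
To make the idea of replicated atoms concrete, here is a minimal toy sketch in Python. It is not NuoDB's implementation or API: the `Atom` class, its methods, and the node names are all hypothetical, chosen only to illustrate how a unit of data that is copied to several nodes can stay available after one of those nodes fails.

```python
from dataclasses import dataclass, field


@dataclass
class Atom:
    """Toy stand-in for an atom (hypothetical, not NuoDB's data structure)."""
    atom_id: str
    payload: dict
    # Names of the nodes that currently hold a copy of this atom.
    holders: set = field(default_factory=set)

    def replicate_to(self, node: str) -> None:
        """Record that a copy of this atom now lives on `node`."""
        self.holders.add(node)

    def node_failed(self, node: str) -> None:
        """Simulate a node failure: the copy on that node is lost."""
        self.holders.discard(node)

    def is_available(self) -> bool:
        """The atom is reachable as long as at least one copy survives."""
        return bool(self.holders)


# Assumed scenario: one atom replicated to two nodes, then one node fails.
atom = Atom("table:users", {"rows": 3})
atom.replicate_to("node-a")
atom.replicate_to("node-b")
atom.node_failed("node-a")
available_after_failure = atom.is_available()
```

In this sketch the atom remains available after `node-a` fails because `node-b` still holds a copy. A real system additionally has to keep the copies consistent under concurrent transactions, which this toy model does not attempt.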