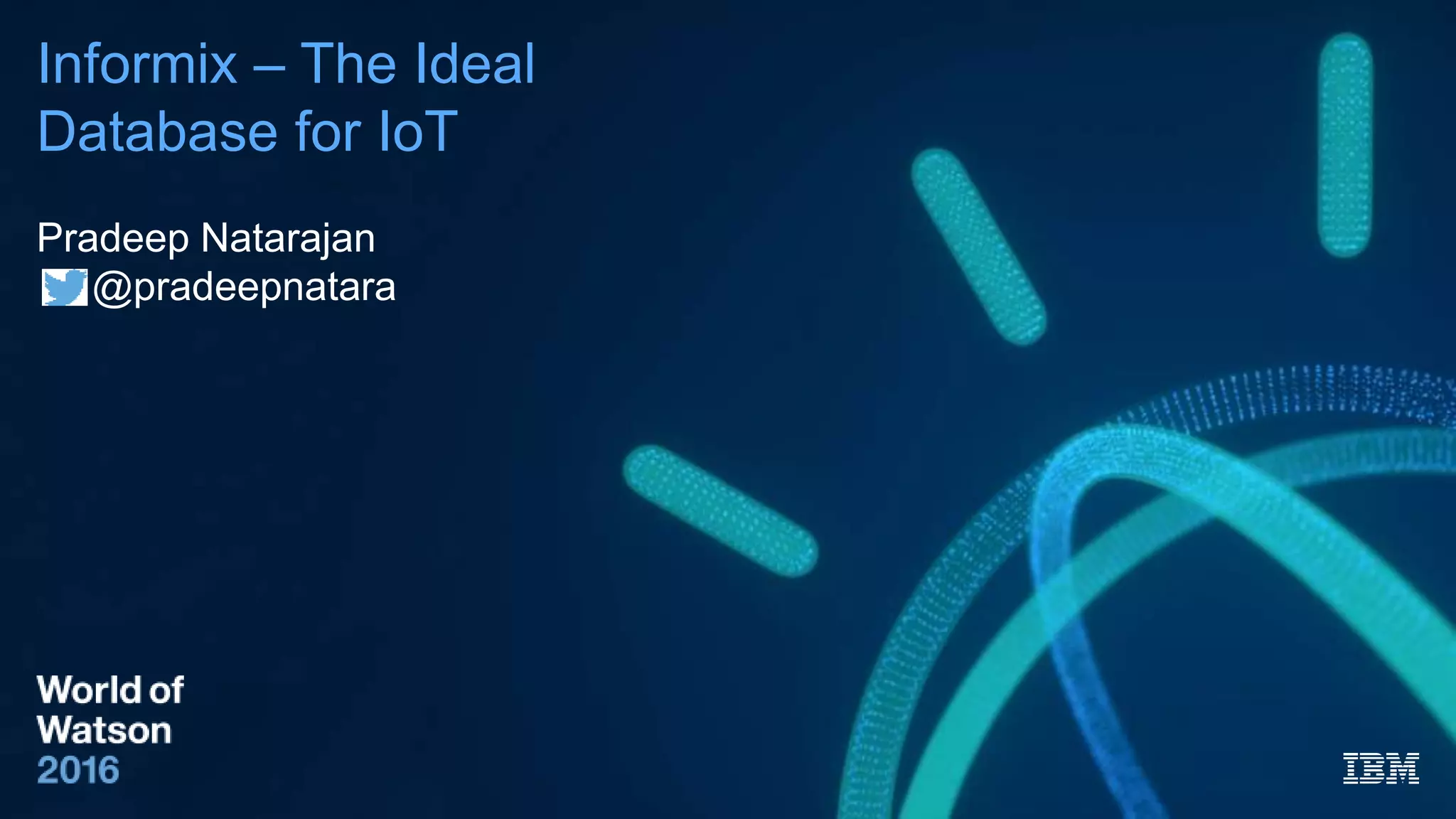

The presentation positions IBM Informix as the ideal database for IoT solutions, citing features suited to IoT applications: support for time series and spatial data, ease of use, and low memory requirements. It also outlines the advantages of using gateways in IoT deployments, including reduced backend costs and real-time data processing. Finally, it emphasizes Informix's ability to handle both structured and unstructured data efficiently, which makes it a robust option for cloud and operational deployments.

![World of Watson 2016

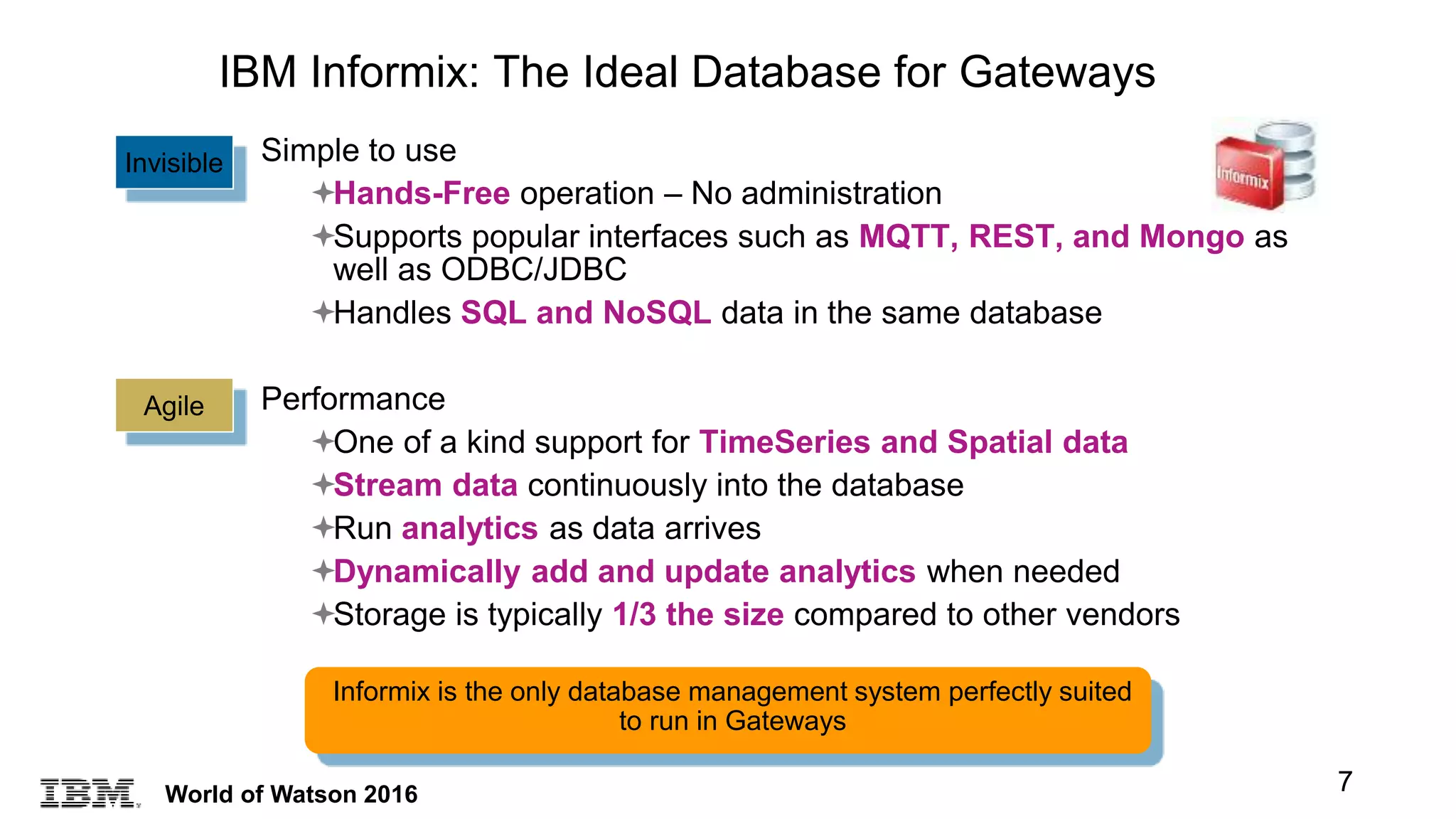

Traditional Table Approach

Informix TimeSeries Approach

Device_ID Time Sensor1 Sensor2 ColN

1 1-1-11 12:00 Value 1 Value 2 ……… Value N

2 1-1-11 12:00 Value 1 Value 2 ……… Value N

3 1-1-11 12:00 Value 1 Value 2 ……… Value N

… … … … ……… …

1 1-1-11 12:15 Value 1 Value 2 ……… Value N

2 1-1-11 12:15 Value 1 Value 2 ……… Value N

3 1-1-11 12:15 Value 1 Value 2 ……… Value N

… … … … ……… …

Device_ID Series

1 [(1-1-11 12:00, value 1, value 2,…, value N), (1-1-11 12:15, value 1, value 2, …, value N), …]

2 [(1-1-11 12:00, value 1, value 2,…, value N), (1-1-11 12:15, value 1, value 2, …, value N), …]

3 [(1-1-11 12:00, value 1, value 2,…, value N), (1-1-11 12:15, value 1, value 2, …, value N), …]

4 [(1-1-11 12:00, value 1, value 2,…, value N), (1-1-11 12:15, value 1, value 2, …, value N), …]

…

Traditional Sensor data storage vs Informix TimeSeries Storage

9](https://image.slidesharecdn.com/informixiotluncheon-161025204728/75/Informix-The-Ideal-Database-for-IoT-9-2048.jpg)
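The difference between the two layouts on this slide can be sketched as a plain data-structure transformation. The Python below is a conceptual model only. It is not Informix's TimeSeries implementation or its SQL API. The `Reading` type, the `to_series` and `slice_series` helpers, and the sample sensor values are all hypothetical. The sketch regroups row-per-reading data (the traditional table, one row per device per timestamp) into one time-ordered list per device (the TimeSeries layout). A time-range query for a single device then becomes two binary searches over one contiguous list.

```python
import bisect
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime


# Hypothetical model of one row in the "traditional table" layout:
# a device ID, a timestamp, and N sensor columns.
@dataclass(frozen=True)
class Reading:
    device_id: int
    time: datetime
    values: tuple[float, ...]


def to_series(rows):
    """Regroup row-per-reading data into one time-ordered series per device.

    Each element is (time, value1, ..., valueN), mirroring the slide's
    Device_ID -> [(time, v1, ..., vN), ...] layout. In this model the device
    ID is stored once per series instead of once per reading.
    """
    series = defaultdict(list)
    for r in rows:
        series[r.device_id].append((r.time, *r.values))
    for elems in series.values():
        elems.sort(key=lambda e: e[0])  # keep each series in time order
    return dict(series)


def slice_series(series, device_id, start, end):
    """Return one device's elements with start <= time < end.

    Because each series is sorted by time, the range is found with two
    binary searches. A 1-tuple (t,) compares less than any (t, v1, ...)
    element, so bisect_left lands on the first element whose time is >= t.
    """
    elems = series.get(device_id, [])
    lo = bisect.bisect_left(elems, (start,))
    hi = bisect.bisect_left(elems, (end,))
    return elems[lo:hi]


if __name__ == "__main__":
    # Illustrative readings in traditional-table order (all devices at 12:00,
    # then all devices at 12:15). The sensor values are made up.
    rows = [
        Reading(d, datetime(2011, 1, 1, 12, m), (20.0 + d, 0.5 * d))
        for m in (0, 15)
        for d in (1, 2, 3)
    ]
    series = to_series(rows)
    print(len(rows), "rows ->", len(series), "series")  # 6 rows -> 3 series
    print(slice_series(series, 2, datetime(2011, 1, 1, 12, 10),
                       datetime(2011, 1, 1, 13, 0)))
```

In this sketch, the per-device sorted list is what makes a single-device range query cheap. In the row layout, the same query relies on an index over (Device_ID, Time), and the device ID is repeated in every row.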