This document is a best practices guide for Information Lifecycle Management (ILM) published by IBM. It provides an overview of ILM concepts and strategies. The guide contains three parts that cover ILM basics, key building blocks, and ILM strategies and solutions. It is intended to help organizations effectively manage the lifecycles of their information assets through the use of automated policies and tiered storage systems.

![

| Regulation | Intention | Applicability |
| --- | --- | --- |
| Basel II aka The New Accord | Promote greater consistency in the way banks and banking regulators approach risk management across national borders. | Financial industry |
| 21 CFR 11 | Approval accountability. | FDA regulation of pharmaceutical and biotechnology companies |

For example, in Table 1-2, we list some requirements found in SEC 17a-4 with which financial institutions and broker-dealers must comply. Information produced by these institutions regarding the solicitation and execution of trades, and so on, is referred to as compliance data, a subset of retention-managed data.

Table 1-2 Some SEC/NASD requirements

| Requirement | Met by |
| --- | --- |
| Capture all correspondence (unmodified) [17a-4(f)(3)(v)]. | Capture incoming and outgoing e-mail before reaching users. |
| Store in non-rewritable, non-erasable format [17a-4(f)(2)(ii)(A)]. | Write Once Read Many (WORM) storage of all e-mail, all documents. |
| Verify automatically recording integrity and accuracy [17a-4(f)(2)(ii)(B)]. | Validated storage to magnetic, WORM. |
| Duplicate data and index storage [17a-4(f)(3)(iii)]. | Mirrored or duplicate storage servers (copy pools). |
| Enforce retention periods on all stored data and indexes [17a-4(f)(3)(iv)(c)]. | Structured records management. |
| Search/retrieve all stored data and indexes [17a-4(f)(2)(ii)(D)]. | High-performance search retrieval. |

IBM ILM data retention strategy

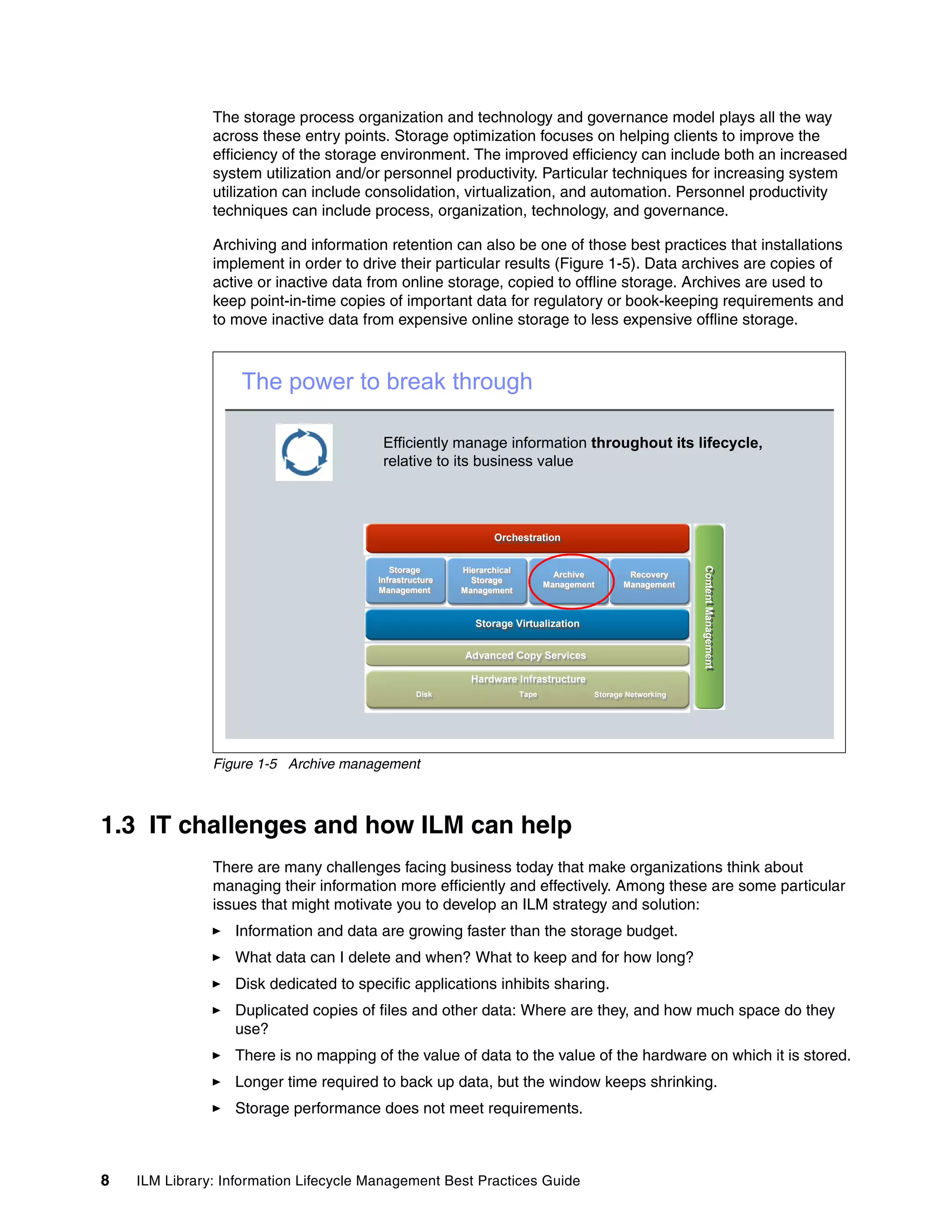

Regulations and other business imperatives, as we just briefly discussed, stress the requirement for an Information Lifecycle Management process and tools to be in place. The unique experience of IBM with the broad range of ILM technologies, and its broad portfolio of offerings and solutions, can help businesses address this particular requirement and provide them with the best solutions to manage their information throughout its lifecycle. IBM provides a comprehensive and open set of solutions to help.

IBM has products that provide content management, data retention management, and sophisticated storage management, along with the storage systems to house the data. To specifically help companies with their risk and compliance efforts, the IBM Risk and Compliance framework is another tool designed to illustrate the infrastructure capabilities required to help address the myriad of compliance requirements. Using the framework, organizations can standardize the use of common technologies to design and deploy a compliance architecture that might help them deal more effectively with compliance initiatives.

For more details about the IBM Risk and Compliance framework, visit:

http://www-306.ibm.com/software/info/openenvironment/rcf/

14 ILM Library: Information Lifecycle Management Best Practices Guide](https://image.slidesharecdn.com/ilmlibraryinformationlifecyclemanagementbestpracticesguidesg247251-120524205609-phpapp01/75/Ilm-library-information-lifecycle-management-best-practices-guide-sg247251-32-2048.jpg)

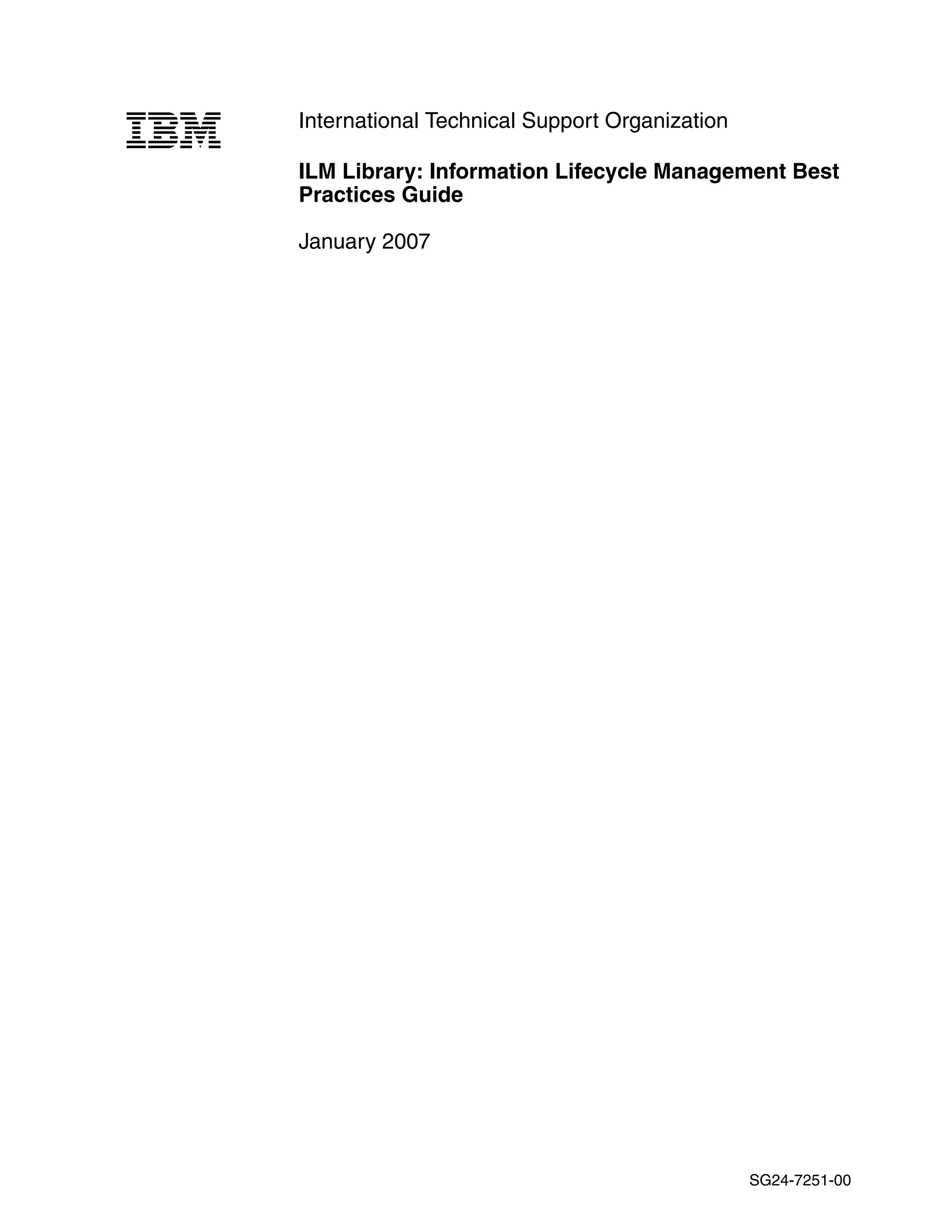

![2.3.1 Regulation examples

It is not within the scope of this book to enumerate and explain the regulations in existence today. For illustration purposes only, we list some of the major regulations and accords in Table 2-1, summarizing their intent and applicability.

Table 2-1 Some regulations and accords affecting companies

| Regulation | Intention | Applicability |
| --- | --- | --- |
| SEC/NASD | Prevent securities fraud. | All financial institutions and companies regulated by the SEC |
| Sarbanes-Oxley Act | Ensure accountability for public firms. | All public companies trading on a U.S. exchange |
| HIPAA | Privacy and accountability for health care providers and insurers. | Health care providers and insurers, both human and veterinary |
| Basel II aka The New Accord | Promote greater consistency in the way banks and banking regulators approach risk management across national borders. | Financial industry |
| 21 CFR 11 | Approval accountability. | FDA regulation of pharmaceutical and biotechnology companies |

For example, in Table 2-2, we list some requirements found in SEC 17a-4 with which financial institutions and broker-dealers must comply. Information produced by these institutions regarding the solicitation and execution of trades, and so on, is referred to as compliance data, a subset of retention-managed data.

Table 2-2 Some SEC/NASD requirements

| Requirement | Met by |
| --- | --- |
| Capture all correspondence (unmodified) [17a-4(f)(3)(v)]. | Capture incoming and outgoing e-mail before reaching users. |
| Store in non-rewritable, non-erasable format [17a-4(f)(2)(ii)(A)]. | Write Once Read Many (WORM) storage of all e-mail, all documents. |
| Verify automatically recording integrity and accuracy [17a-4(f)(2)(ii)(B)]. | Validated storage to magnetic, WORM. |
| Duplicate data and index storage [17a-4(f)(3)(iii)]. | Mirrored or duplicate storage servers (copy pools). |
| Enforce retention periods on all stored data and indexes [17a-4(f)(3)(iv)(c)]. | Structured records management. |
| Search/retrieve all stored data and indexes [17a-4(f)(2)(ii)(D)]. | High-performance search retrieval. |

](https://image.slidesharecdn.com/ilmlibraryinformationlifecyclemanagementbestpracticesguidesg247251-120524205609-phpapp01/75/Ilm-library-information-lifecycle-management-best-practices-guide-sg247251-56-2048.jpg)
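Two of the requirements in Table 2-2, the non-rewritable, non-erasable format and the enforcement of retention periods, can be illustrated with a toy in-memory model. This is only a sketch under stated assumptions: the names `WormStore`, `Record`, and `RetentionError` are hypothetical, the retention period is supplied by the caller rather than taken from any regulation, and real WORM storage enforces these properties in the storage system itself, not in application code.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


class RetentionError(Exception):
    """Raised when an operation would violate write-once or retention rules."""


@dataclass(frozen=True)
class Record:
    # frozen=True makes a stored record immutable once created
    key: str
    payload: bytes
    stored_at: datetime
    retain_until: datetime


class WormStore:
    """Hypothetical toy store: write once, no deletion before retention expires."""

    def __init__(self, retention):
        self._retention = retention  # a timedelta chosen by the caller
        self._records = {}

    def put(self, key, payload, now):
        # Non-rewritable: a second write to the same key is refused.
        if key in self._records:
            raise RetentionError(f"{key} already stored; overwrite refused")
        record = Record(key, payload, now, now + self._retention)
        self._records[key] = record
        return record

    def get(self, key):
        return self._records[key].payload

    def delete(self, key, now):
        # Non-erasable until the retention period has elapsed.
        record = self._records[key]
        if now < record.retain_until:
            raise RetentionError(f"{key} is retained until {record.retain_until}")
        del self._records[key]
```

Passing the current time in as `now` keeps the sketch deterministic, so the retention check can be exercised without waiting for real time to pass.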

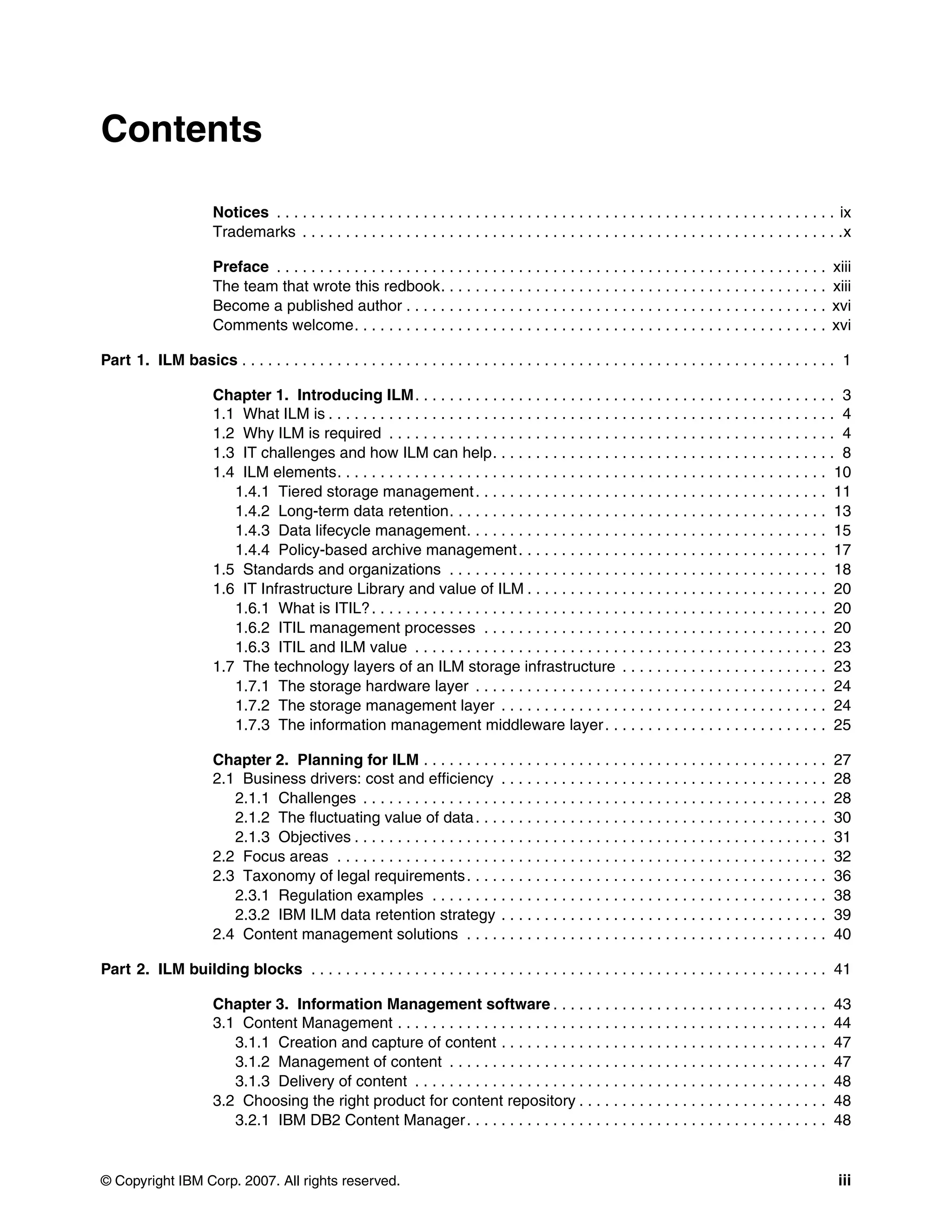

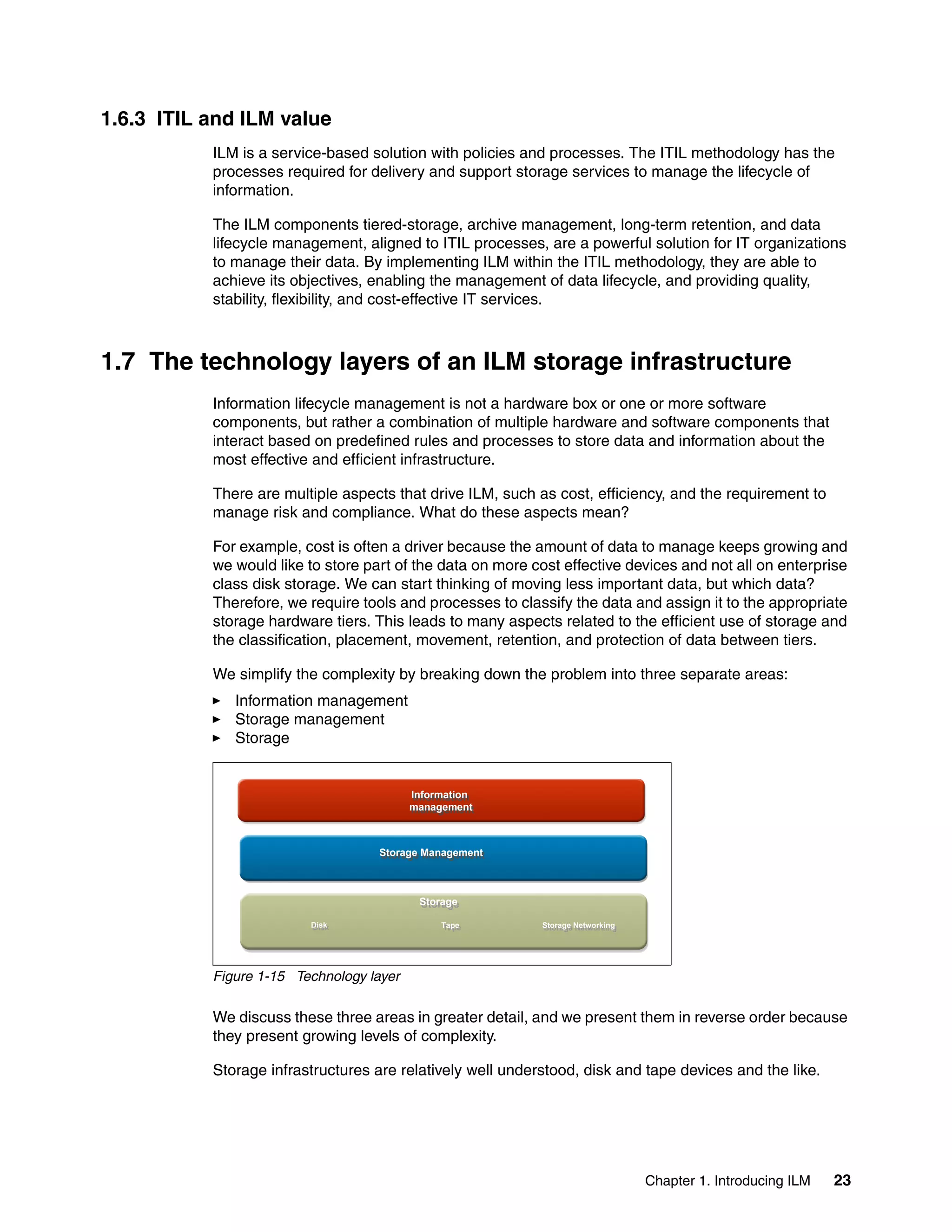

![

Figure 10-10 GPFS storage pools (diagram: metadata and data laid out across the system pool, intermediate pool, and slow pool, each backed by disks)

Files can be moved between storage pools by changing the file's storage pool assignment with commands, as shown in Figure 10-11. The file name remains unchanged. You can also choose to move files immediately or defer movement to a later time for a batch-like process called rebalancing. Rebalancing moves files to their correct storage pool, as defined by the pool assignment commands.
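The difference between an immediate move and a deferred move followed by rebalancing can be modeled in a few lines. This is a minimal sketch, not GPFS code: the class `PoolFileSystem` and its methods are hypothetical names, and the model tracks only which pool a file is assigned to and which pool currently holds its data.

```python
class PoolFileSystem:
    """Hypothetical model of pool assignment versus physical residence."""

    def __init__(self, pools):
        self.pools = set(pools)
        self.assigned = {}  # path -> pool the file is assigned to
        self.resident = {}  # path -> pool that currently holds the data

    def _check(self, pool):
        if pool not in self.pools:
            raise ValueError(f"unknown storage pool: {pool}")

    def create(self, path, pool):
        self._check(pool)
        self.assigned[path] = pool
        self.resident[path] = pool

    def change_pool(self, path, pool, defer=False):
        # The path (file name) is never touched; only the assignment changes.
        self._check(pool)
        self.assigned[path] = pool
        if not defer:
            self.resident[path] = pool  # immediate move

    def rebalance(self):
        # Batch step: move every file whose data is not yet in its assigned pool.
        moved = [p for p in sorted(self.assigned)
                 if self.resident[p] != self.assigned[p]]
        for path in moved:
            self.resident[path] = self.assigned[path]
        return moved
```

With `defer=True`, the assignment changes at once but the data stays where it is until `rebalance()` runs, which mirrors the batch-like process described above.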

Figure 10-11 GPFS file movement between pools (diagram: files /dir1/f1, /dir1/f2, and /dir1/d2/f3 stored across the system, intermediate, and slow pools, with f3 being migrated between pools)

GPFS filesets

Filesets provide a means of partitioning the namespace of a file system, allowing administrative operations at a finer granularity than the entire file system.

In most file systems, a typical file hierarchy is represented as a series of directories that form a tree-like structure. Each directory contains other directories, files, or other file-system objects such as symbolic links and hard links. Every file system object has a name associated with it, and is represented in the namespace as a node of the tree. GPFS also utilizes a file system object called a fileset. A fileset is a subtree of a file system namespace that in many respects behaves as an independent file system. Filesets provide a means of partitioning the file system to allow administrative operations at a finer granularity than the entire file system:

- You can define per-fileset quotas on data blocks and inodes. These are analogous to per user and per group quotas.
- Filesets can be specified in the policy rules used for placement and migration of file data.

Filesets are not specifically related to storage pools, although each file in a fileset physically resides in blocks in a storage pool. This relationship is many-to-many; each file in the fileset can be stored in a different user storage pool. A storage pool can contain files from many filesets. However, all of the data for a particular file is wholly contained within one storage pool.

](https://image.slidesharecdn.com/ilmlibraryinformationlifecyclemanagementbestpracticesguidesg247251-120524205609-phpapp01/75/Ilm-library-information-lifecycle-management-best-practices-guide-sg247251-292-2048.jpg)
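The many-to-many relationship between filesets and storage pools, together with the rule that each file lives in exactly one pool, can be expressed as a small data model. The sketch below is illustrative only: `FileEntry`, `pools_by_fileset`, and `filesets_by_pool` are hypothetical names, and the fileset names used in any example data are invented.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class FileEntry:
    path: str
    fileset: str
    pool: str  # a single field: all data of a file sits in exactly one pool


def pools_by_fileset(files):
    """Map each fileset to the set of pools that hold its files."""
    result = defaultdict(set)
    for entry in files:
        result[entry.fileset].add(entry.pool)
    return dict(result)


def filesets_by_pool(files):
    """Map each pool to the set of filesets that have files in it."""
    result = defaultdict(set)
    for entry in files:
        result[entry.pool].add(entry.fileset)
    return dict(result)
```

Because `pool` is a single attribute of a file while both mappings return sets, the model allows a fileset to span several pools and a pool to serve several filesets, but never splits one file across pools.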

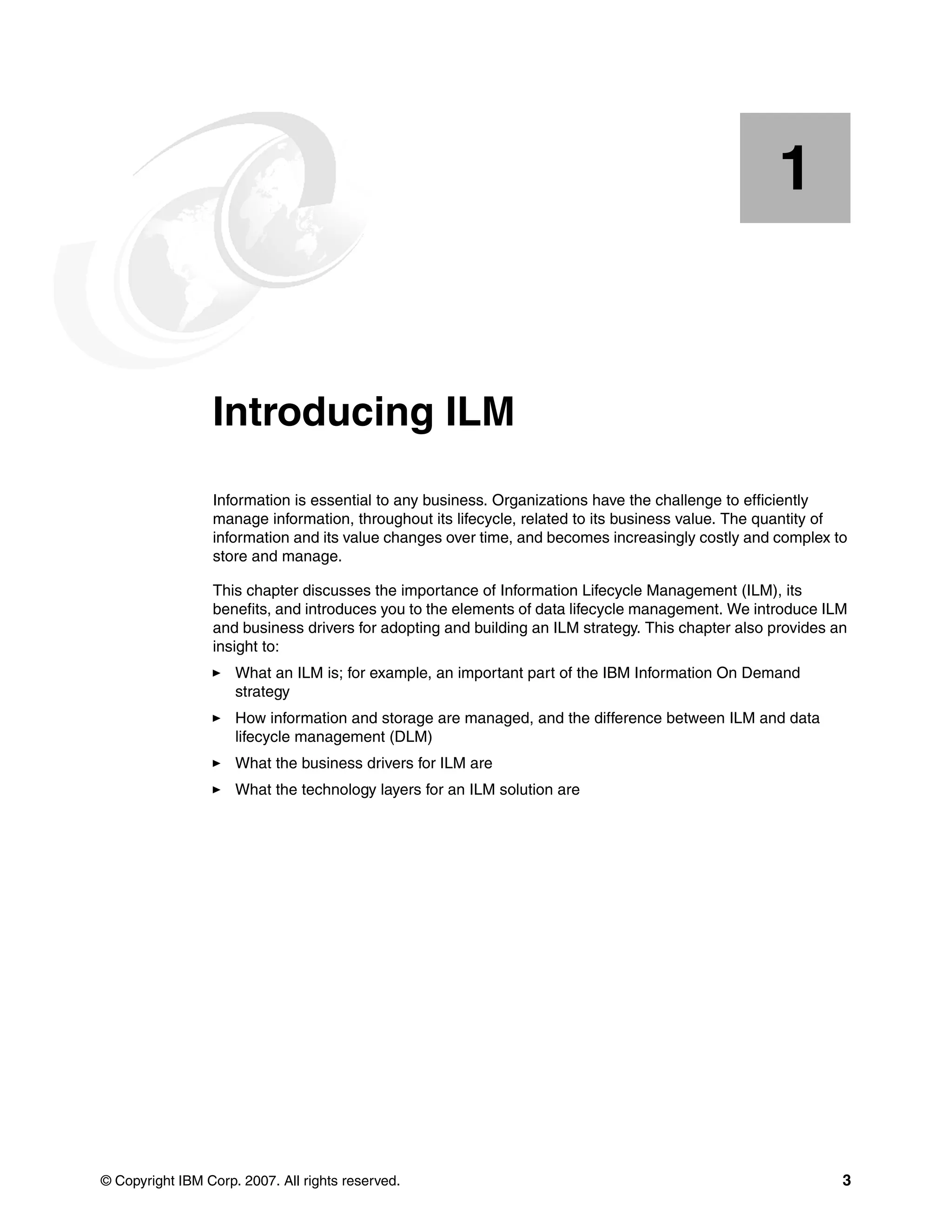

![File placement rule

A file placement rule, for newly created files, has the format:

    RULE ['rule_name'] SET POOL 'pool_name'
      [ REPLICATE(data-replication) ]
      [ FOR FILESET( 'fileset_name1', 'fileset_name2', ... )]
      [ WHERE SQL_expression ]
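To make the optional clauses concrete, the following sketch renders placement rule text from the format shown above. The helper `placement_rule` is a hypothetical convenience function, not part of GPFS; it performs no validation or quote escaping, and any pool name, fileset name, or WHERE expression passed to it is the caller's own illustrative input.

```python
def placement_rule(pool, name=None, replicate=None, filesets=(), where=None):
    """Render a placement rule string that follows the format in the text.

    Optional clauses are emitted only when a value is supplied, in the same
    order as in the documented format.
    """
    parts = ["RULE"]
    if name:
        parts.append(f"'{name}'")
    parts.append(f"SET POOL '{pool}'")
    if replicate is not None:
        parts.append(f"REPLICATE({replicate})")
    if filesets:
        quoted = ", ".join(f"'{fs}'" for fs in filesets)
        parts.append(f"FOR FILESET({quoted})")
    if where:
        parts.append(f"WHERE {where}")
    return " ".join(parts)
```

Calling `placement_rule("system")` yields the shortest legal form, a rule with no name and no optional clauses; supplying `filesets` or `where` narrows the rule to the files that match.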

File migration rule

A file migration rule, to move data between storage pools, has the format:

    RULE ['rule_name'] [ WHEN time-boolean-expression]
      MIGRATE
      [ FROM POOL 'pool_name_from'
        [THRESHOLD(high-occupancy-percentage[,low-occupancy-percentage])]]
      [ WEIGHT(weight_expression)]
      TO POOL 'pool_name'
      [ LIMIT(occupancy-percentage) ]
      [ REPLICATE(data-replication) ]
      [ FOR FILESET( 'fileset_name1', 'fileset_name2', ... )]
      [ WHERE SQL_expression]

Attention: Before you begin using file migration, test your rules thoroughly.
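One way to test the effect of a THRESHOLD and WEIGHT combination before applying it is to simulate the selection on a list of file sizes. The sketch below rests on an assumption about semantics that the format above does not spell out: migration starts only when the source pool's occupancy exceeds the high percentage, candidates are taken in descending weight order, and selection stops once occupancy is at or below the low percentage. The function `plan_migration` and its parameters are hypothetical.

```python
def plan_migration(files, capacity_kb, high_pct, low_pct, weight):
    """Pick files to migrate out of a pool (assumed threshold semantics).

    files:       dict mapping path -> size in KB, all in the source pool
    capacity_kb: total capacity of the source pool in KB
    weight:      function (path, size_kb) -> number; higher goes first
    """
    used = sum(files.values())
    # Nothing happens unless occupancy is above the high threshold.
    if used * 100 <= high_pct * capacity_kb:
        return []
    plan = []
    ordered = sorted(files, key=lambda p: weight(p, files[p]), reverse=True)
    for path in ordered:
        # Stop as soon as occupancy has dropped to the low threshold.
        if used * 100 <= low_pct * capacity_kb:
            break
        plan.append(path)
        used -= files[path]
    return plan
```

Weighting by size, for example `weight=lambda path, size: size`, moves the largest files first, so the low threshold is reached with the fewest file moves.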

File deletion rule

A file deletion rule has the format:

    RULE ['rule_name'] [ WHEN time-boolean-expr]
      DELETE
      [ FROM POOL 'pool_name_from'
        [THRESHOLD(high-occupancy-percentage,low-occupancy-percentage)]]
      [ WEIGHT(weight_expression)]
      [ FOR FILESET( 'fileset_name1', 'fileset_name2', ... )]
      [ WHERE SQL_expression ]

File exclusion rule

A file exclusion rule has the format:

    RULE ['rule_name'] [ WHEN time-boolean-expr]
      EXCLUDE
      [ FROM POOL 'pool_name_from' ]
      [ FOR FILESET( 'fileset_name1', 'fileset_name2', ... )]
      [ WHERE SQL_expression ]
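How an exclusion rule interacts with a deletion rule depends on rule order. The sketch below assumes first-match semantics, meaning each file is handled by the first rule whose condition it satisfies, so an EXCLUDE rule listed earlier shields matching files from a later DELETE rule. That ordering behavior is an assumption of this sketch, and `apply_rules` with its dictionary-based file records is a hypothetical stand-in for the policy engine.

```python
def apply_rules(files, rules):
    """Return paths selected for deletion under assumed first-match semantics.

    files: list of dicts describing files (hypothetical attributes)
    rules: ordered list of (action, predicate) pairs, where action is
           "EXCLUDE" or "DELETE" and predicate is a function file -> bool
    """
    deleted = []
    for entry in files:
        for action, predicate in rules:
            if predicate(entry):
                if action == "DELETE":
                    deleted.append(entry["path"])
                # The first matching rule decides; later rules are skipped.
                break
    return deleted
```

Reversing the order of an EXCLUDE rule and a DELETE rule changes the outcome under this model, which is one reason to test a rule set on sample data before applying it.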

Additional details on these features are provided in the manual General Parallel File System: Advanced Administration Guide, SA23-2221.

10.5.3 GPFS typical deployments

GPFS is deployed in a number of areas today. The most prominent environments involve high-performance computing (HPC), digital media, data mining and BI, and seismic and engineering applications. There are other deployments, but these illustrate the capabilities of the product.

](https://image.slidesharecdn.com/ilmlibraryinformationlifecyclemanagementbestpracticesguidesg247251-120524205609-phpapp01/75/Ilm-library-information-lifecycle-management-best-practices-guide-sg247251-294-2048.jpg)
