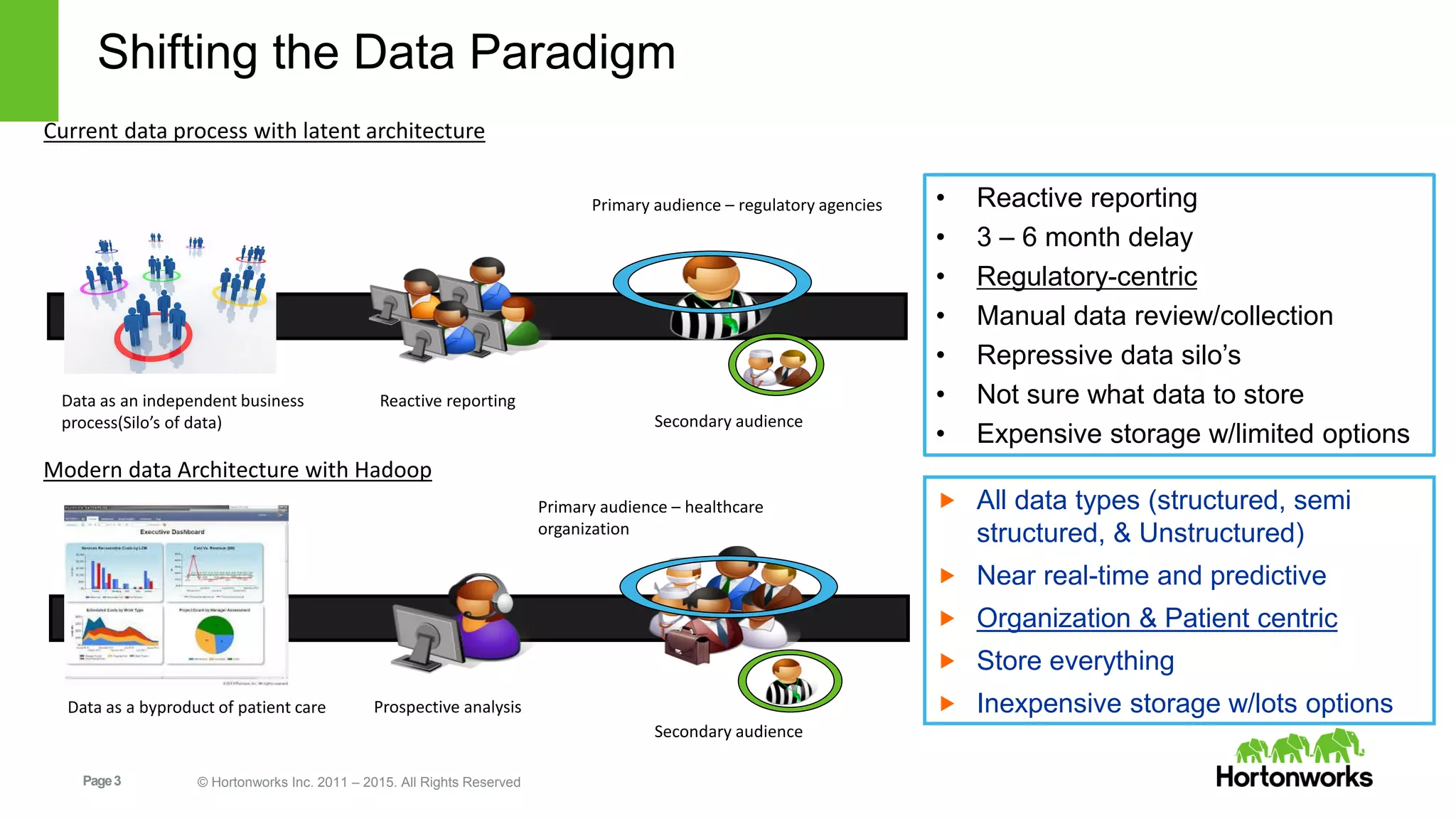

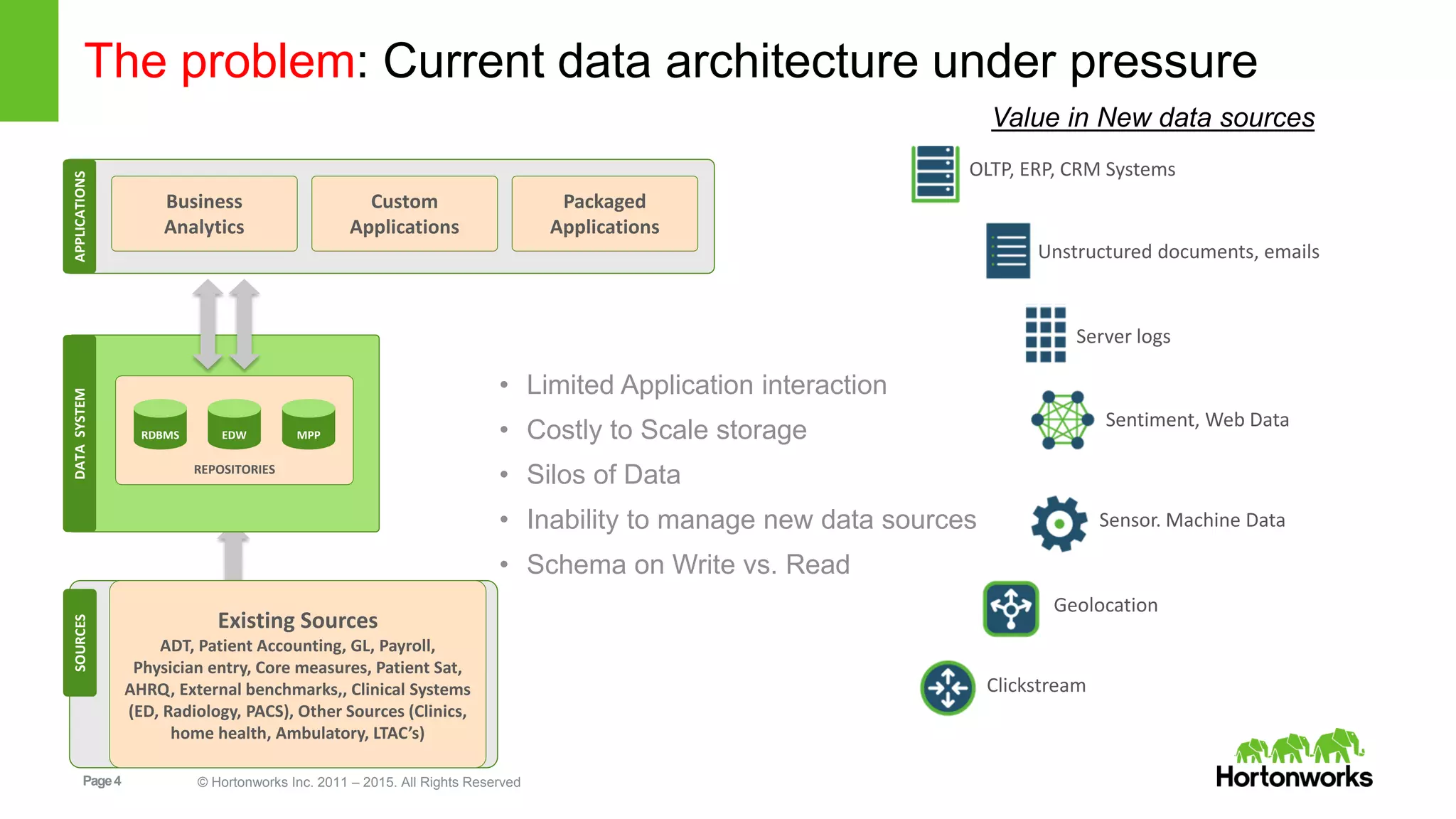

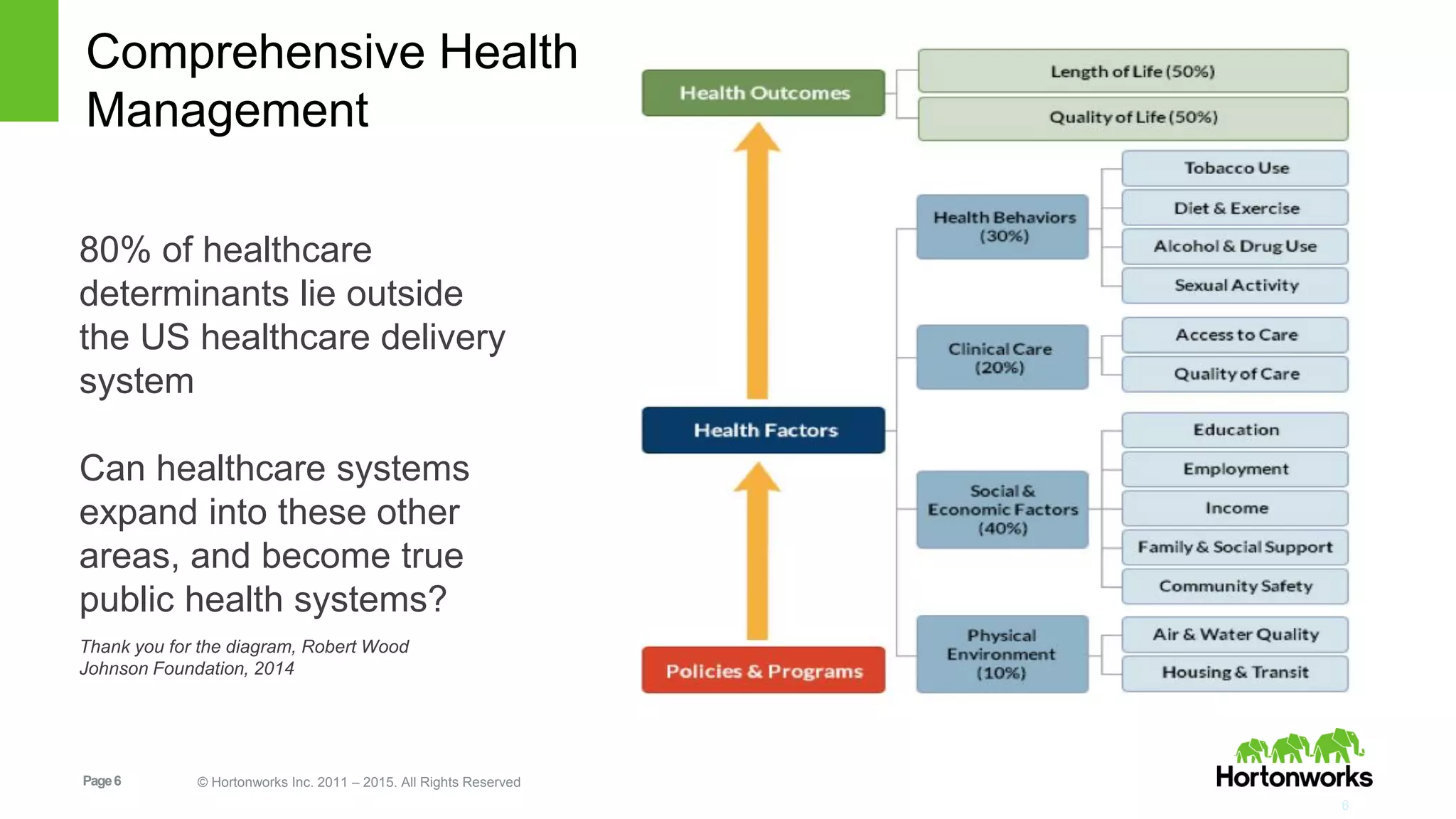

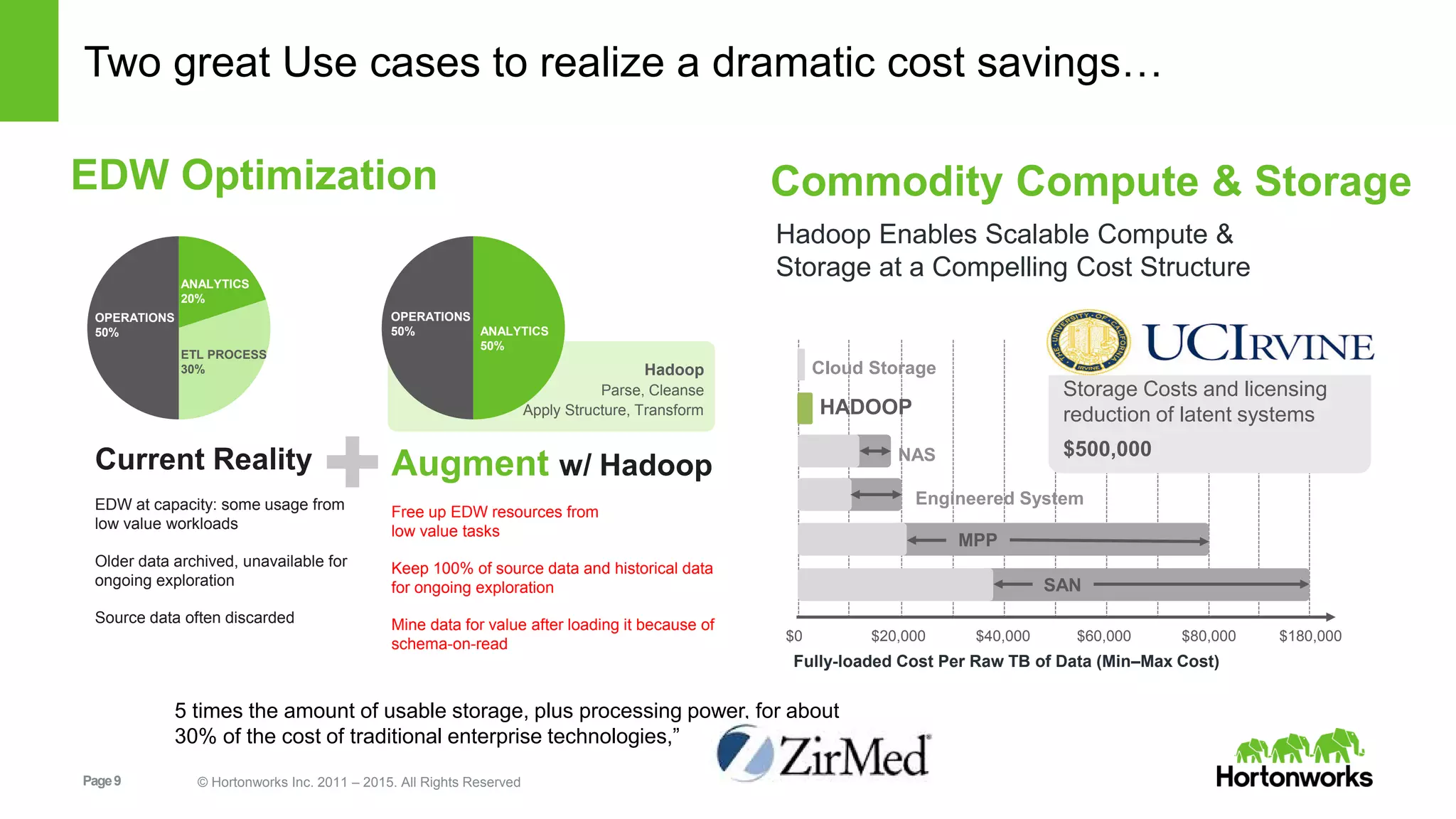

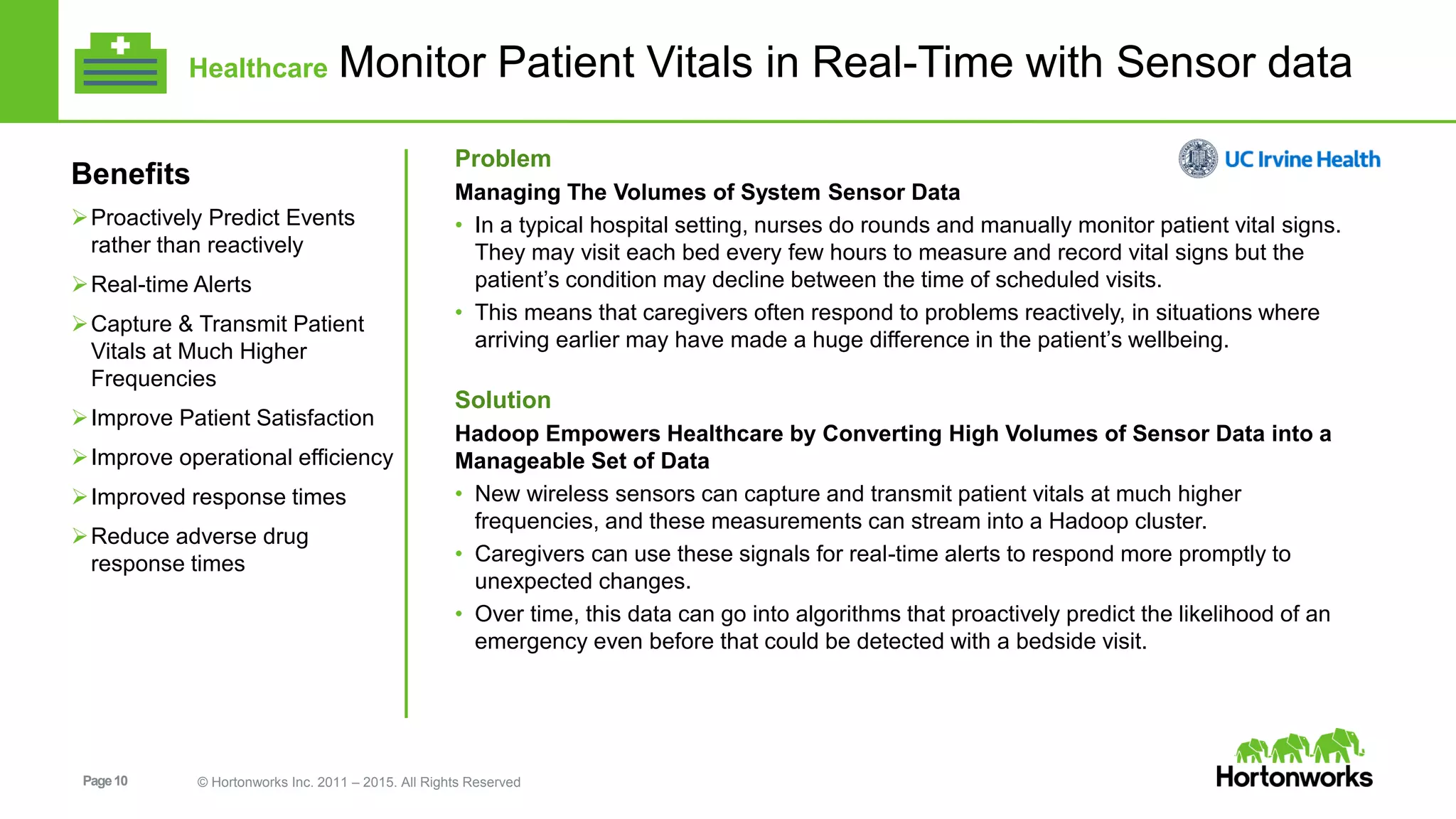

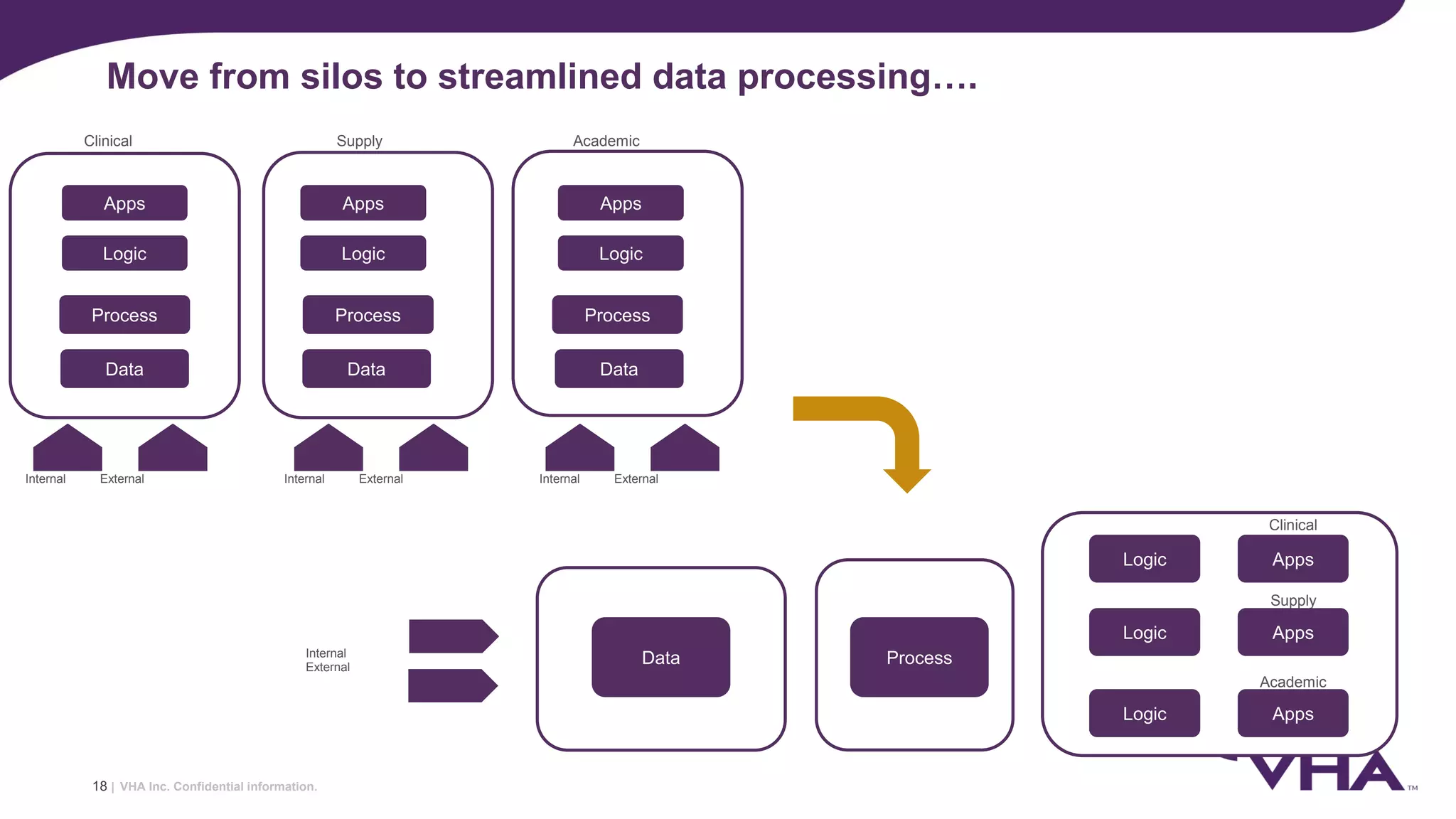

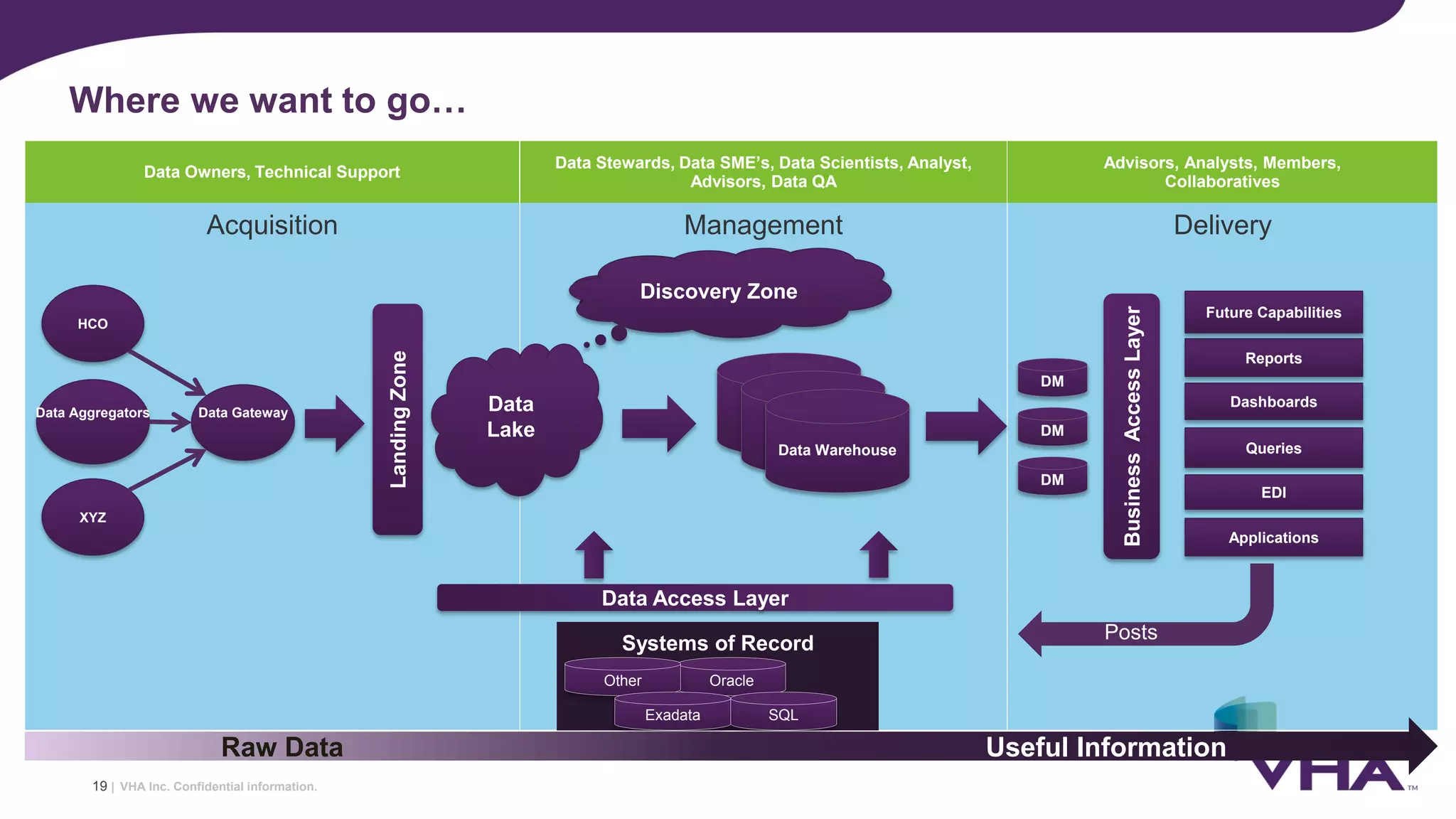

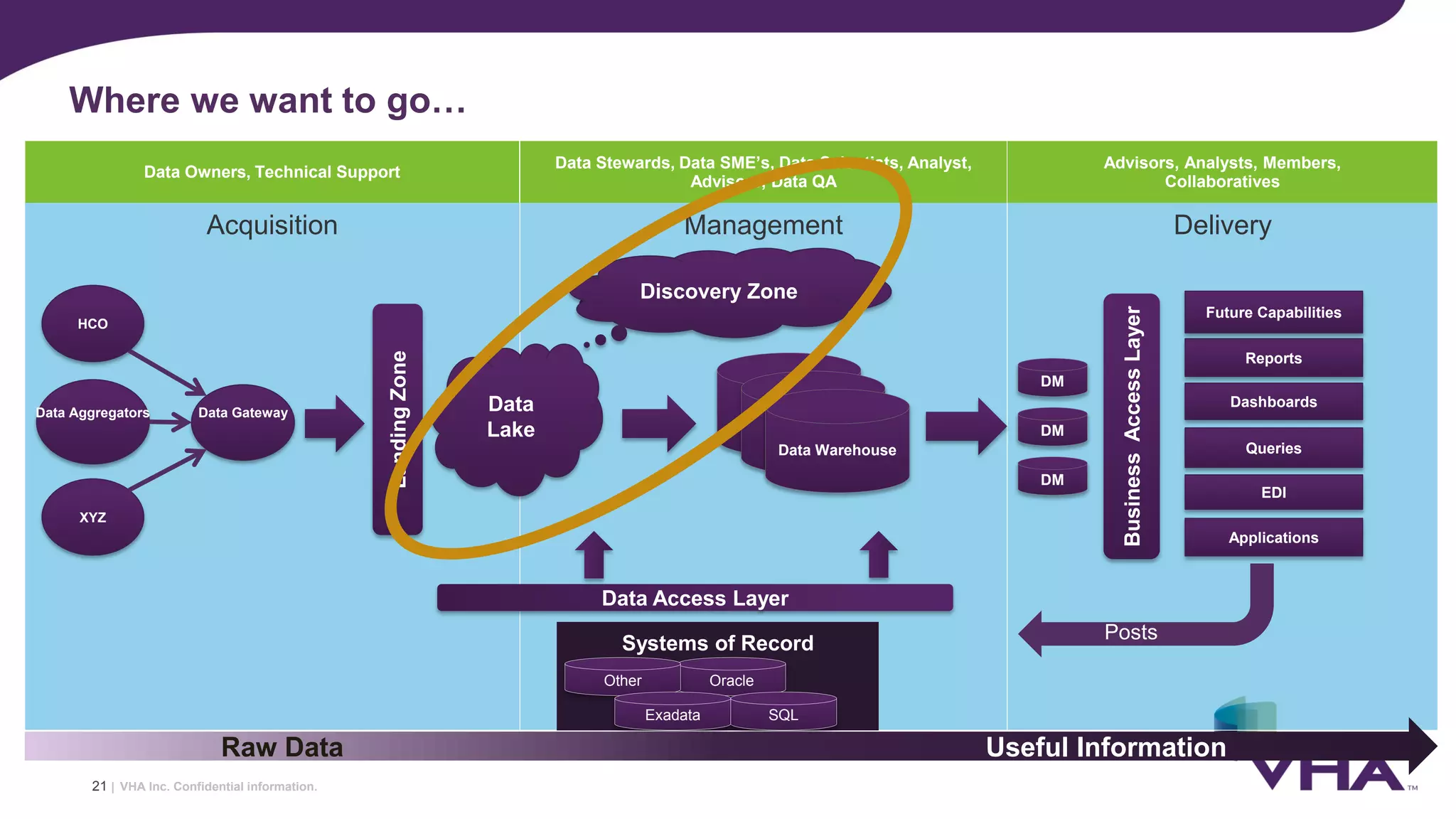

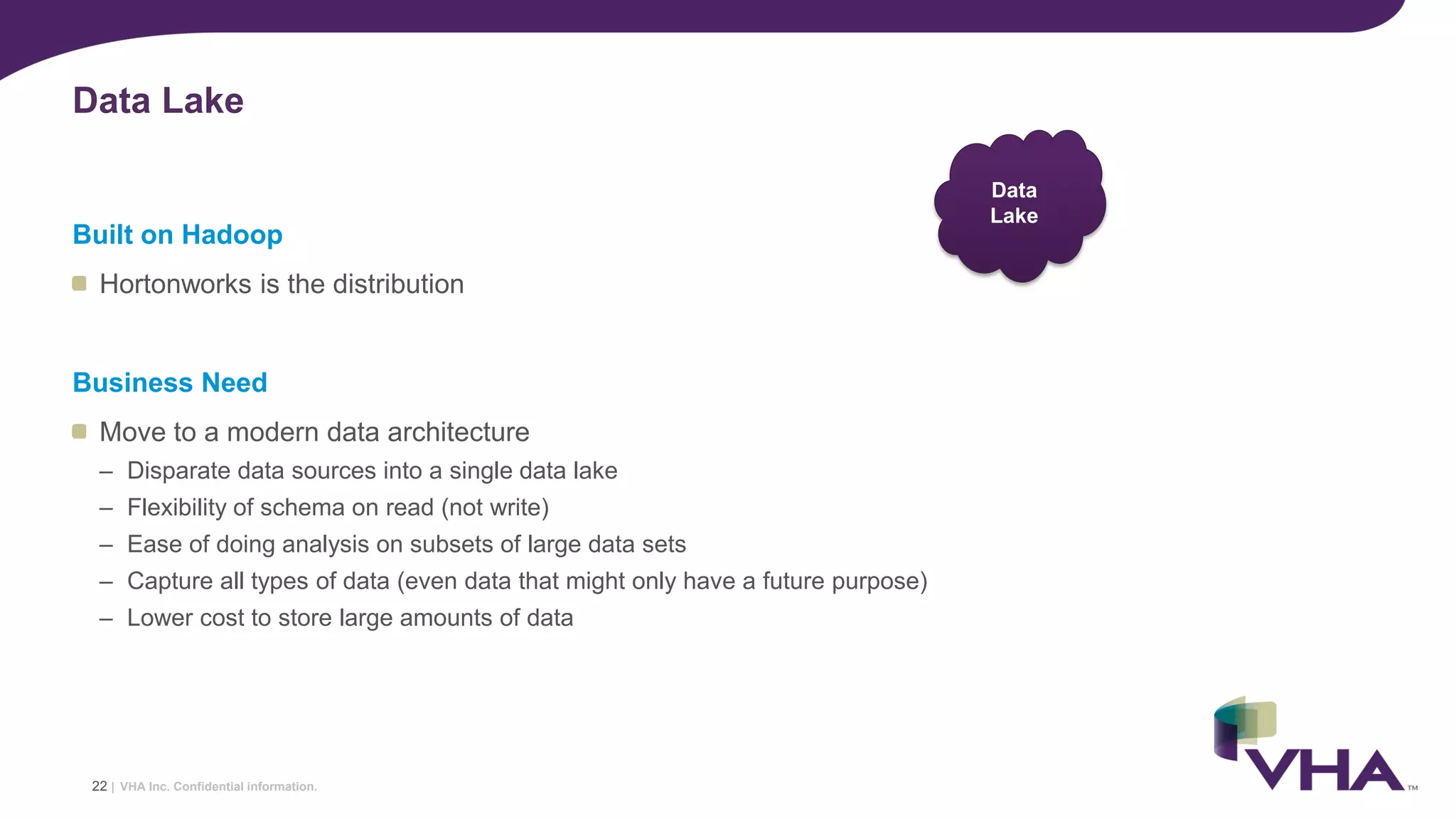

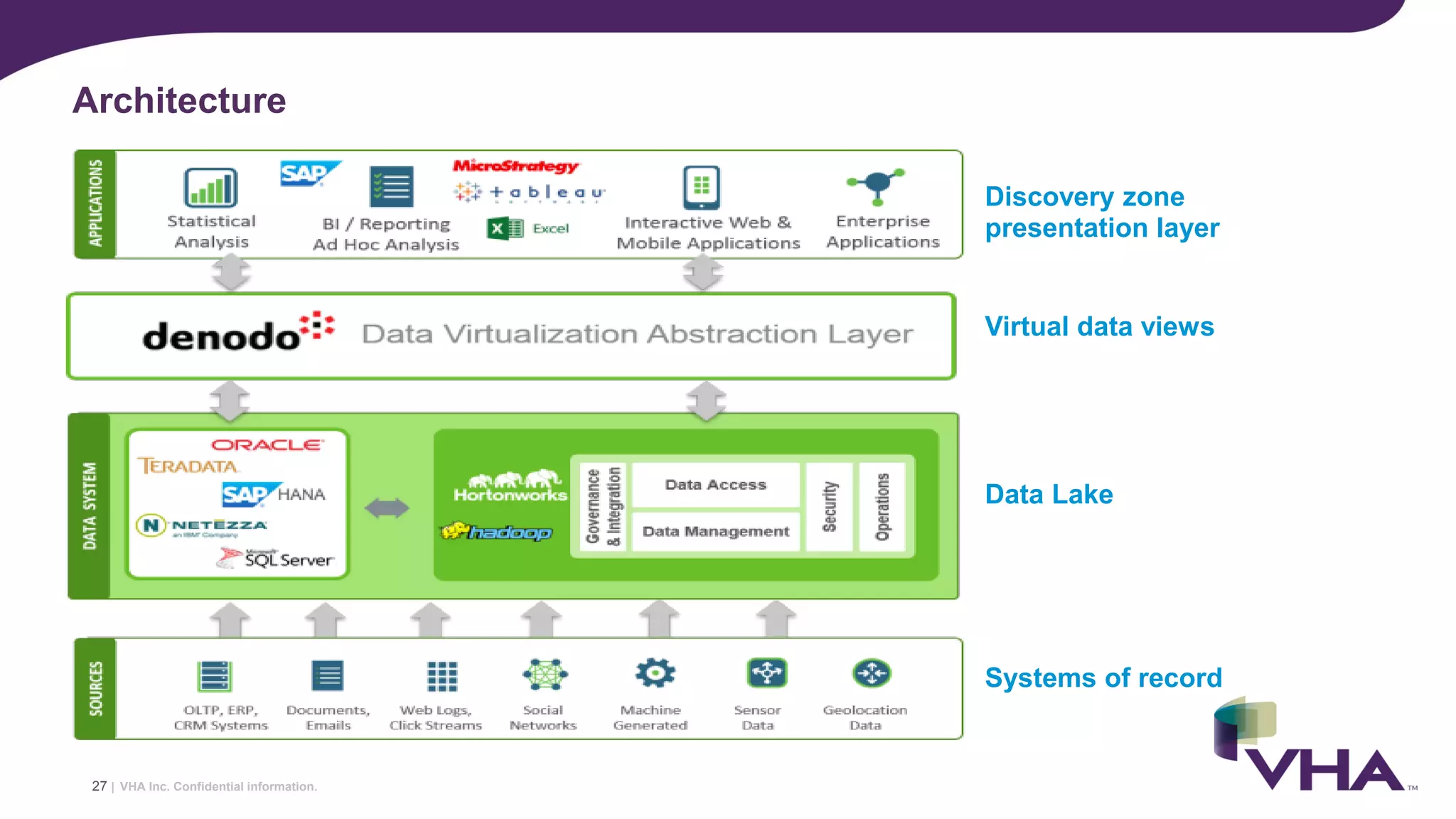

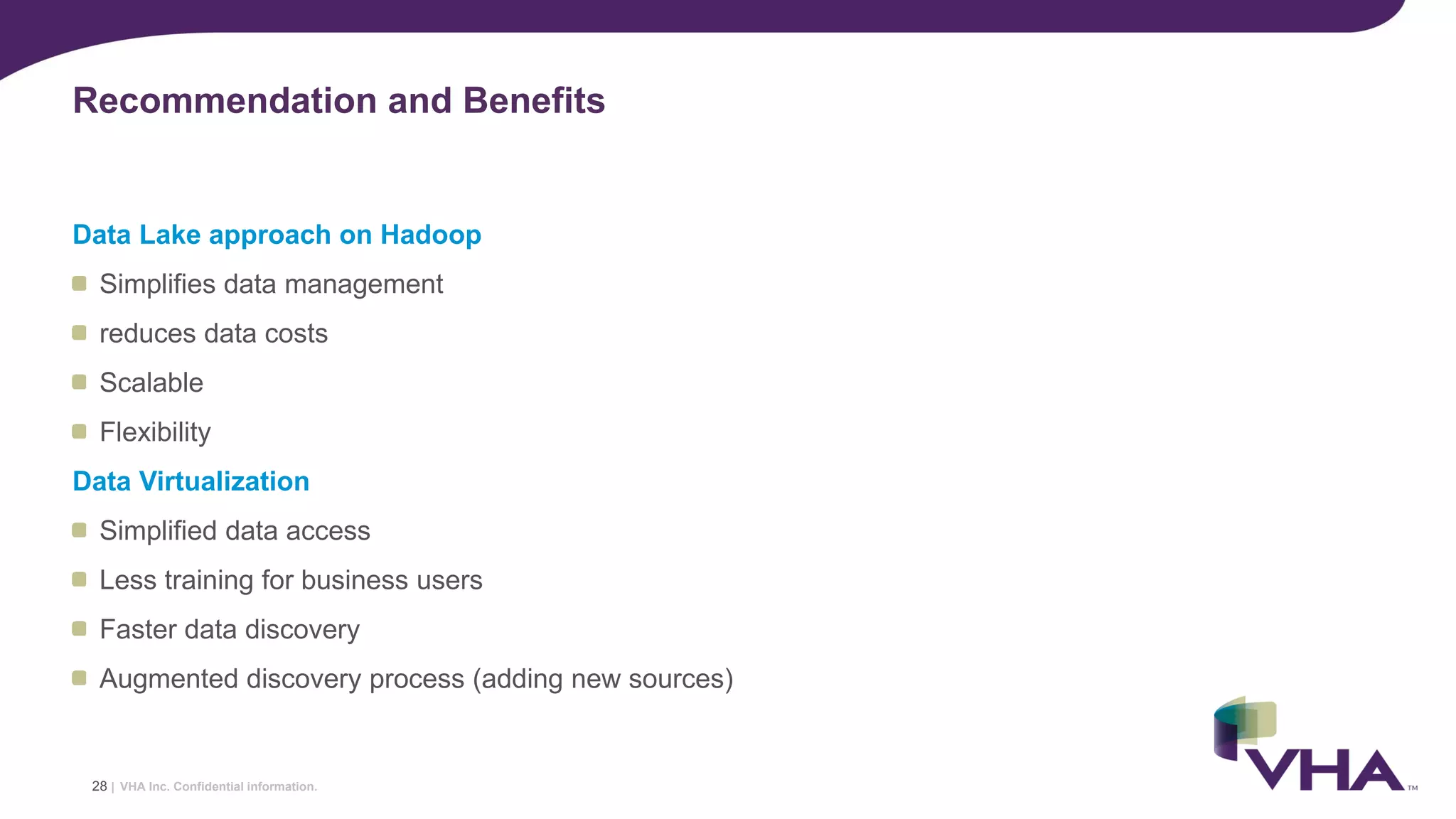

The document discusses a collaboration between VHA, Denodo, and Hortonworks aimed at transforming healthcare data architecture using Hadoop and data virtualization. It highlights the shift from traditional, reactive reporting to real-time, predictive analytics, which it presents as improving both patient care and operational efficiency. The stated benefits include lower storage costs, more accessible data, and the ability to manage diverse data sources effectively.