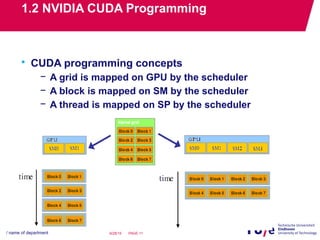

The document discusses GPU programming and mapping the SMAC atmospheric correction application to a GPU. It begins with an overview of NVIDIA GPU hardware and CUDA programming concepts. It then describes mapping the SMAC application, which processes large satellite image datasets, to run its correction algorithm on a GPU. Experiments show the GPU implementation significantly reduces processing time compared to CPU. Optimization techniques for GPU programming are also covered.

![1.2 NVIDIA CUDA Programming

• Example:

/*******************************************************/

/* File: main.c

/* Description: 8x8 matrix addition on CPU

/*******************************************************/

//Data definition

const int mat1[64] = {…};

const int mat2[64] = {…};

const int mat3[64];

//Matrix addition on CPU

void matrixAdd_CPU(int index, int* IN1, int* IN2, int* OUT);

// Main body

int main()

{

// Run the matrix addition on CPU

matrixAdd_CPU(64, mat1, mat2, mat3);

return 0;

}

/****************************************************/

/* File: main.cu

/* Description: 8x8 matrix addition on GPU

/****************************************************/

//Data definition

const int mat1[64] = {…};

const int mat2[64] = {…};

const int mat3[64];

//Matrix addition on GPU

void matrixAdd_GPU(int index, int* IN1, int* IN2, int* OUT);

// Main body

int main()

{

// Run the matrix addition on CPU

matrixAdd_GPU(row, col, mat1, mat2, mat3);

return 0;

}](https://image.slidesharecdn.com/c516166c-a429-49d7-8f36-094cc05edac0-150629191903-lva1-app6892/85/GPU-programming-and-Its-Case-Study-14-320.jpg)

![1.2 NVIDIA CUDA Programming

/**************************************************************/

/* File: main.c

/* Description: 8x8 matrix addition on CPU

/******************************************************************/

//Matrix addition on CPU

void matrixAdd_CPU(int index, int* IN1, int* IN2, int* OUT)

{

int i;

for(i = 0; i < index ; i++){

OUT[i] = IN1[i] + IN2[i];

}

}

/****************************************************/

/* File: main.cu

/* Description: 8x8 matrix addition on GPU

/****************************************************/

//Matrix addition on GPU

void matrixAdd_GPU(int index, int* IN1, int* IN2, int* OUT)

{

int* deviceIN1;

int* deviceIN2;

int* deviceOUT;

dim3 grid(1, 1, 1);

dim3 block(index, 1, 1);

cutilSafeCall(cudaMalloc((void**) &deviceIN1, sizeof(int) * index));

cutilSafeCall(cudaMalloc((void**) &deviceIN2, sizeof(int) * index));

cutilSafeCall(cudaMalloc((void**) &deviceOUT, sizeof(int) *

index));

cutilSafeCall(cudaMemcpy(deviceIN1, IN1, sizeof(int) * index,

cudaMemcpyHostToDevice));

cutilSafeCall(cudaMemcpy(deviceIN2, IN2, sizeof(int) * index,

cudaMemcpyHostToDevice));

GPU_kernel<<<grid, block>>>(deviceIN1, deviceIN2, deviceOUT);

cutilSafeCall(cudaMemcpy(OUT, deviceOUT, sizeof(int) * index,

cudaMemcpyDeviceToHost));

}

Allocate GPU memory

Copy the input

Copy the output

Run the matrix addition

/***********************************/

/* File: main.cu

/* Description: GPU kernel

/*****************************************/

__global__ void

GPU_kernel( int* IN1, int* IN2, int* OUT)

{

int tx = threadIdx.x;

OUT[tx] = IN1[tx] + IN2[tx];

}](https://image.slidesharecdn.com/c516166c-a429-49d7-8f36-094cc05edac0-150629191903-lva1-app6892/85/GPU-programming-and-Its-Case-Study-15-320.jpg)