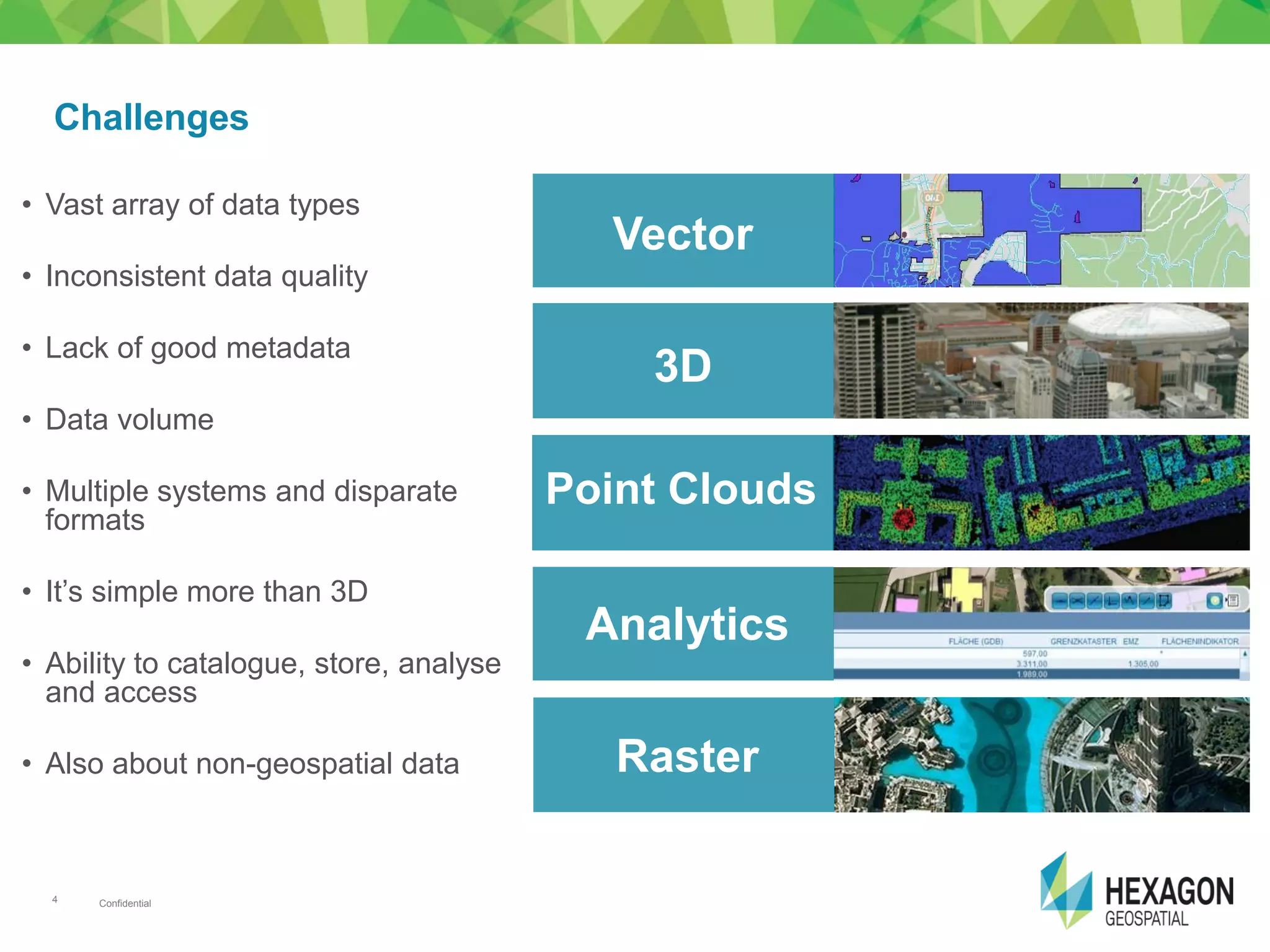

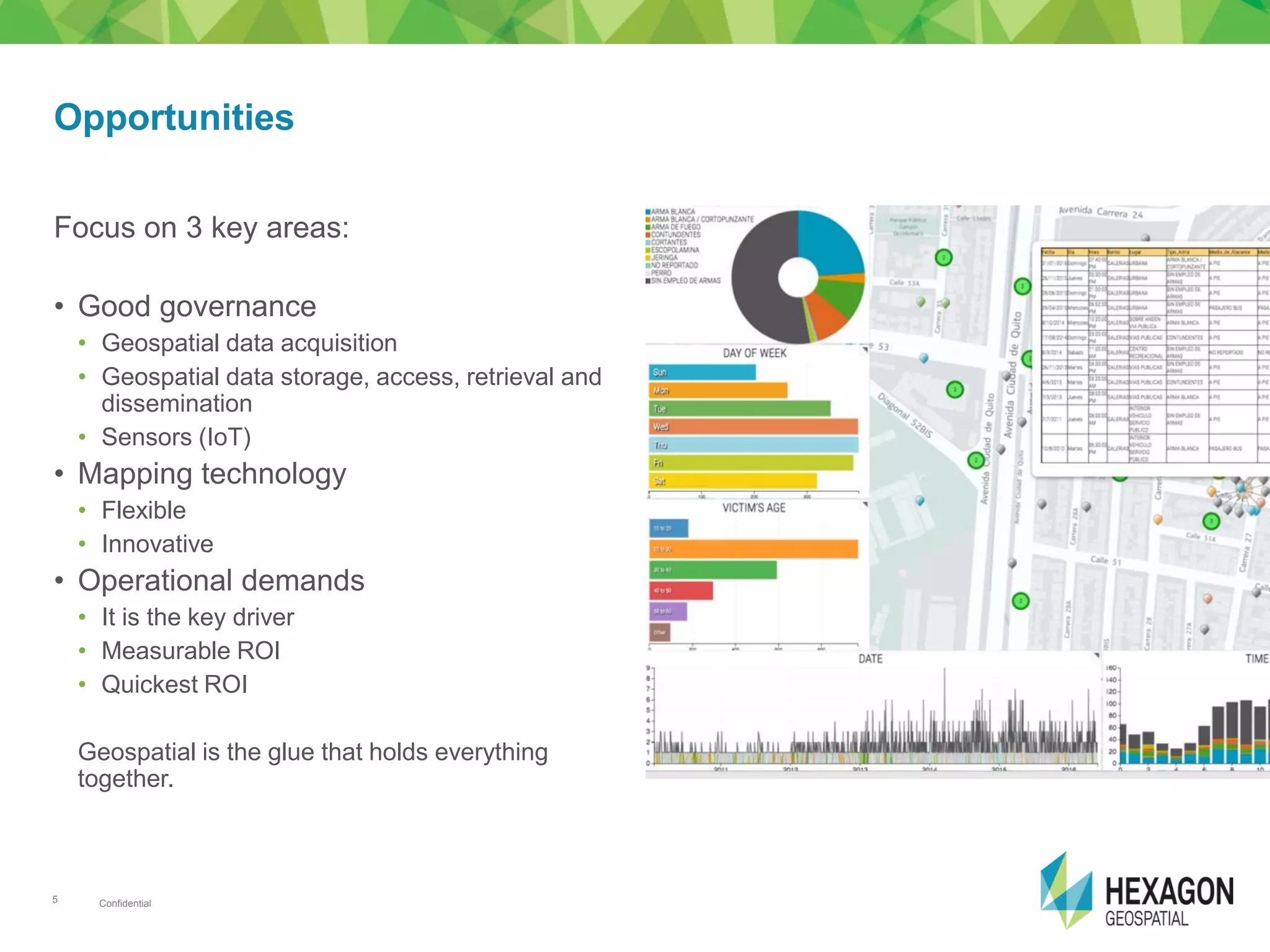

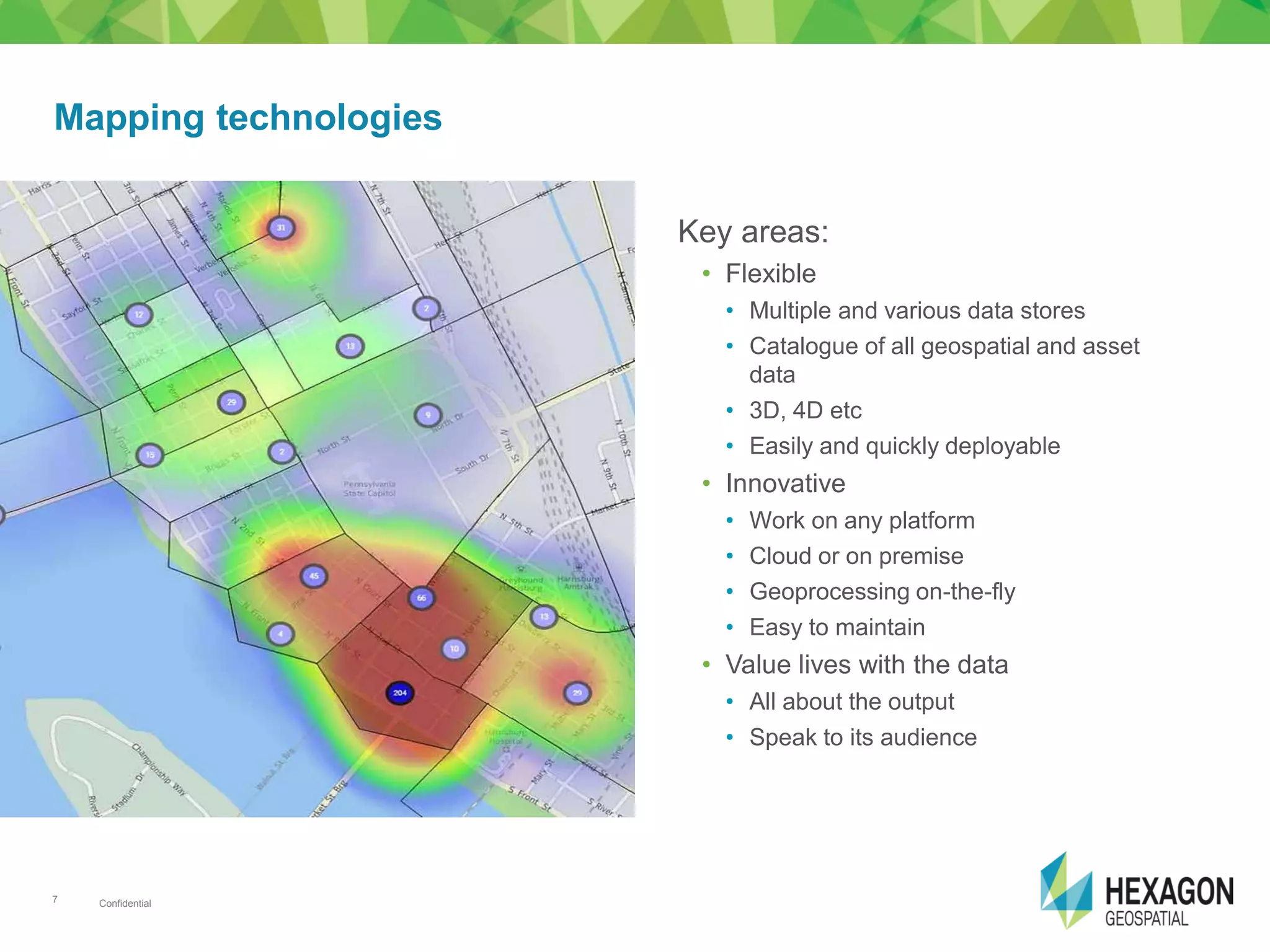

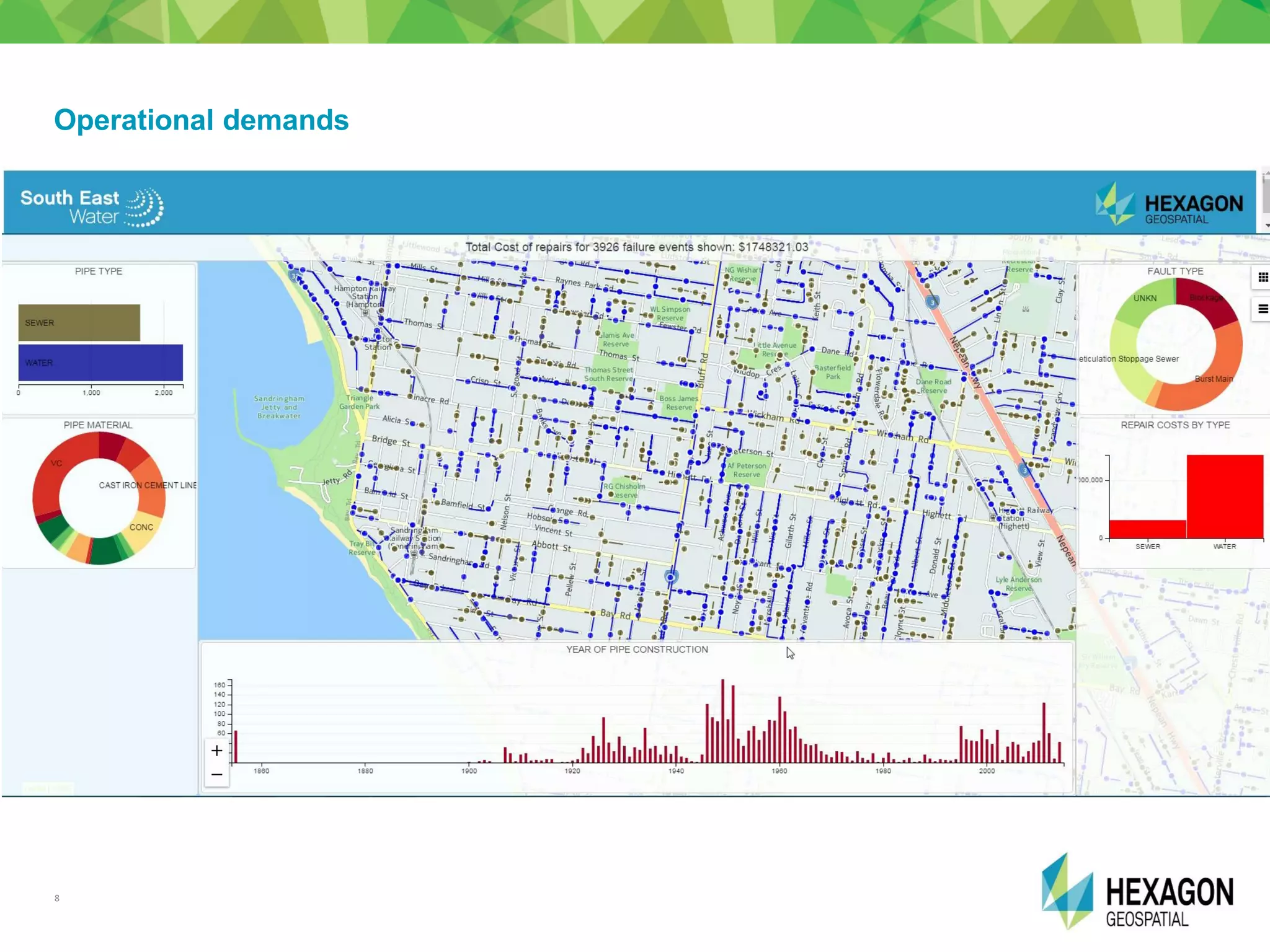

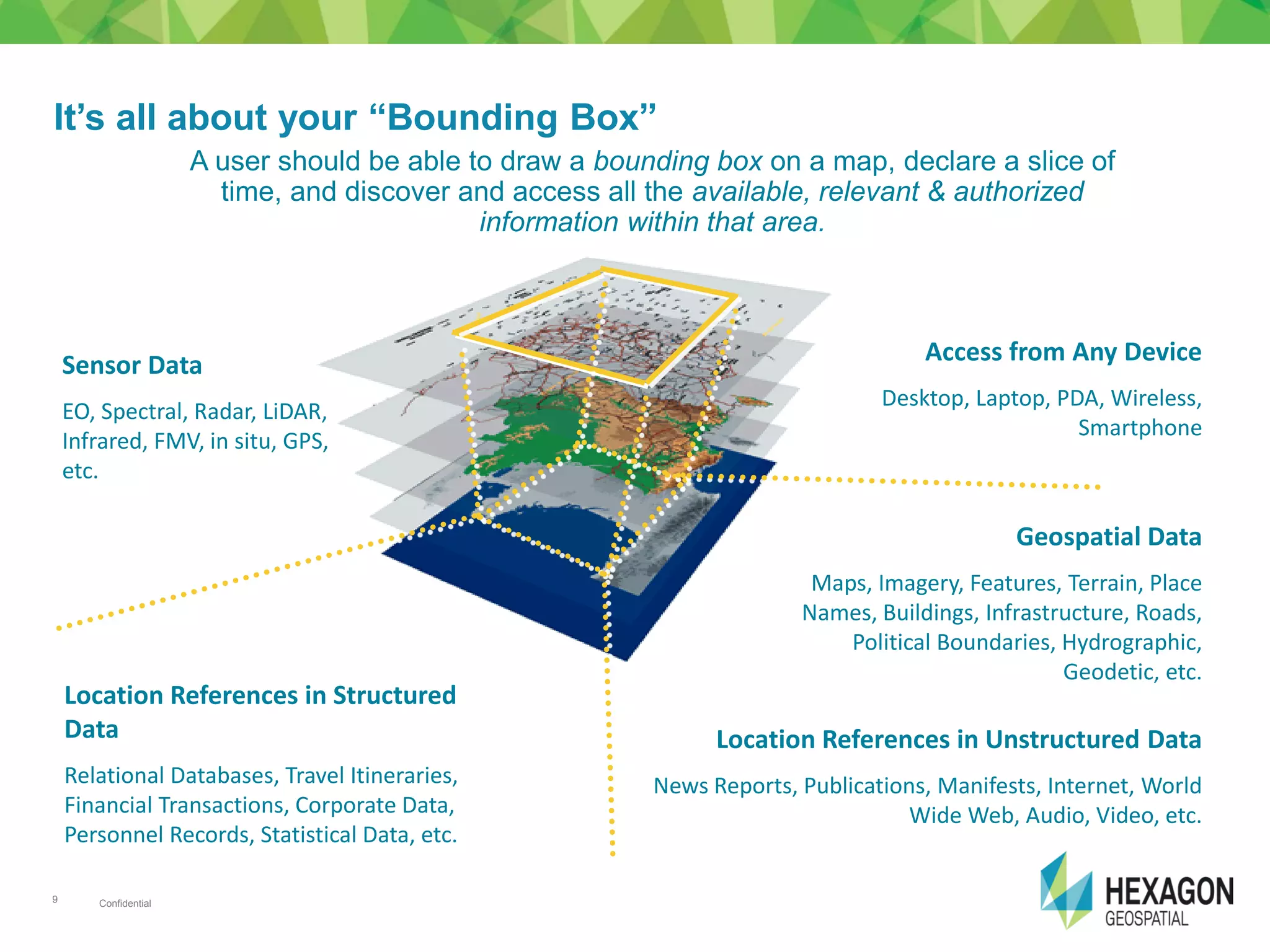

The document discusses why effective geospatial data management matters for engineering and geospatial professionals, who need efficient, cost-effective access to accurate information. It outlines the challenges posed by diverse data types, data quality issues, and the integration of disparate systems, and it presents opportunities in data governance and in innovative technologies such as the Internet of Things (IoT). It emphasizes geospatial data as a crucial component of modern urban development, especially in Africa's evolving infrastructure landscape.