TNTP, a nonprofit organization, migrated its TeacherTrack system from SQL Server to MongoDB to improve performance and scalability and to address slow queries and long deployment times. MongoDB's document structure let surveys and their responses be managed efficiently, bringing query times under 10 ms. The shift was facilitated by pre-conversion strategies, training from MongoDB, and a talented team, and it significantly improved system reliability and development agility.

![Survey Documents in MongoDB

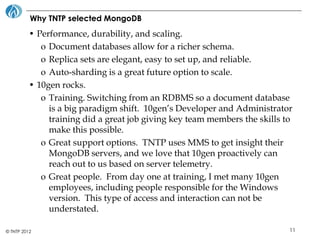

• Surveys are a great match for MongoDB.

• The number of responses never changes after a survey is instantiated,

making it an ideal candidate for being an embedded array in the survey

document.

• <10ms query times!

{

"_id" : BinData(3,"vD+ifVfvS0qlk5vN8OPQOQ=="),

"AccountId" : BinData(3,"B1giiULLskSEG7rYmdqBUA=="),

"Title" : "Registering",

"Responses" : [

{

"_id" : BinData(3,"UvqabcPS1UGZipKODPKgGA=="),

"Value" : "Ryan",

"QuestionText" : "What is your first name?",

"QuestionElementType" : 1,

"QuestionControlType" : 1

}

]

}

© TNTP 2012 12](https://image.slidesharecdn.com/fromsqlservertomongodb-120927204702-phpapp01/85/From-sql-server-to-mongo-db-12-320.jpg)
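To show why the embedded array suits this data, here is a minimal in-memory sketch in Python. It rebuilds the slide's survey document as a plain dictionary and reads and updates answers without touching a second collection or table. It does not talk to a database. The helper names `bin_id`, `answers_by_question`, and `set_answer` are hypothetical and are not taken from TeacherTrack. The IDs are kept as raw 16-byte values because the slide does not say how they map to GUIDs.

```python
import base64


def bin_id(b64: str) -> bytes:
    """Decode the base64 payload shown inside BinData(3, "...") to raw bytes."""
    return base64.b64decode(b64)


# The survey document from the slide: responses live inside the survey itself.
survey = {
    "_id": bin_id("vD+ifVfvS0qlk5vN8OPQOQ=="),
    "AccountId": bin_id("B1giiULLskSEG7rYmdqBUA=="),
    "Title": "Registering",
    "Responses": [
        {
            "_id": bin_id("UvqabcPS1UGZipKODPKgGA=="),
            "Value": "Ryan",
            "QuestionText": "What is your first name?",
            "QuestionElementType": 1,
            "QuestionControlType": 1,
        }
    ],
}


def answers_by_question(doc: dict) -> dict:
    """Map each question's text to its answer using only the one document."""
    return {r["QuestionText"]: r["Value"] for r in doc["Responses"]}


def set_answer(doc: dict, response_id: bytes, value: str) -> bool:
    """Update one embedded response in place; the array length never changes."""
    for response in doc["Responses"]:
        if response["_id"] == response_id:
            response["Value"] = value
            return True
    return False


if __name__ == "__main__":
    print(answers_by_question(survey))
    set_answer(survey, bin_id("UvqabcPS1UGZipKODPKgGA=="), "Bryan")
    print(answers_by_question(survey))
```

Because a survey and all of its responses travel together, loading one survey is a single lookup by `_id`, which is consistent with the query times the slide reports. Since the set of responses is fixed once the survey is instantiated, updates only overwrite `Value` fields and the array itself does not grow.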