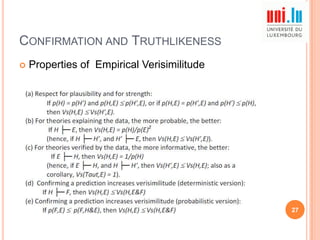

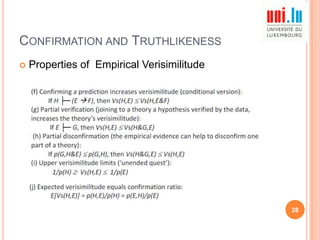

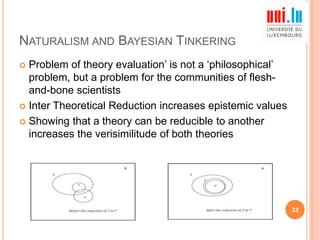

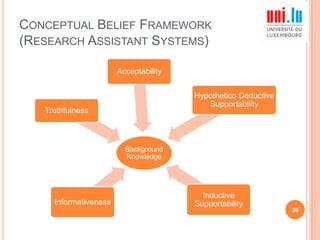

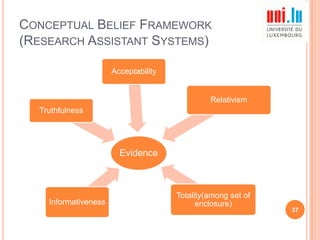

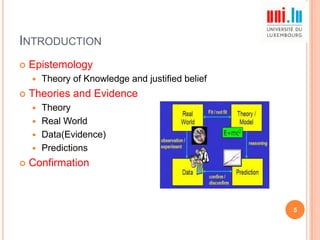

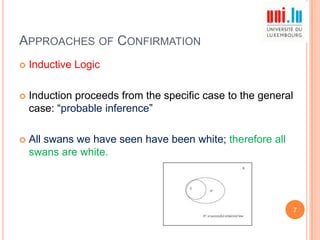

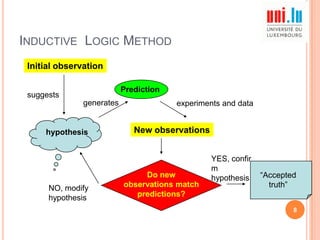

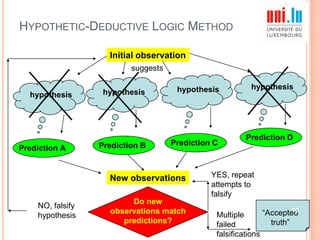

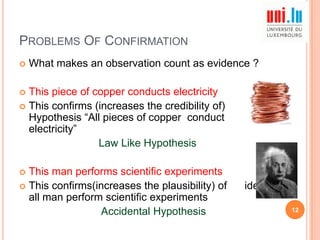

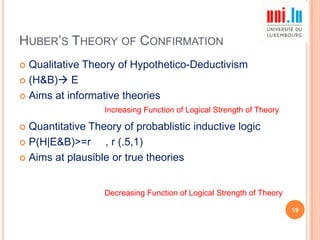

The paper surveys theories of confirmation, compares confirmation with verisimilitude (truthlikeness), and examines how confirmation relates to naturalism and to the belief framework of cognitive agents. Key topics include different approaches to confirmation, problems of evidential support for theories, and Huber's evaluation of theories by their informativeness and plausibility. The discussion stresses that a good theory should both cohere with the empirical evidence and predict it.

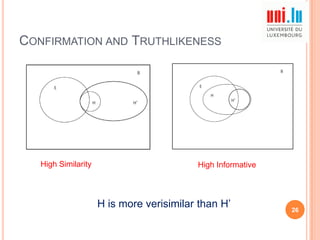

![CONFIRMATION AND TRUTHLIKENESS

Acceptable theories

Not Only High degree of confirmation

But Also Capacity of explaining or predicting the empirical

evidence

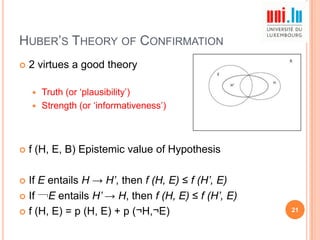

Epistemic value of a theory depends on two factors

Coherence or Similarity between H and E

How Informative our Empirical Evidence is ?

Vs (H, E) = [p (H&E) /p (HvE)] [1/p (E)] = p (H, E) /p (HvE)

25](https://image.slidesharecdn.com/finalpresentation-131215041956-phpapp02/85/Why-are-Good-Theorys-Good-Review-25-320.jpg)
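
The measure Vs(H, E) can be checked on a small example. The following is a minimal sketch. It assumes a finite set of equiprobable "possible worlds", with hypotheses and evidence modelled as sets of worlds. The names `prob` and `vs` and the toy distribution are illustrative assumptions, not taken from the slides.

```python
from fractions import Fraction


def prob(event, dist):
    """Probability of an event (a set of worlds) under a finite distribution."""
    return sum((p for world, p in dist.items() if world in event), Fraction(0))


def vs(h, e, dist):
    """Vs(H, E) = [p(H & E) / p(H v E)] * [1 / p(E)].

    h and e are sets of worlds; dist maps each world to its probability.
    """
    p_e = prob(e, dist)
    p_h_or_e = prob(h | e, dist)
    if p_e == 0 or p_h_or_e == 0:
        raise ValueError("Vs(H, E) is undefined when p(E) or p(H v E) is zero")
    coherence = prob(h & e, dist) / p_h_or_e   # similarity between H and E
    informativeness = 1 / p_e                  # rarer evidence counts for more
    return coherence * informativeness


# Hypothetical toy model: four equiprobable worlds.
dist = {world: Fraction(1, 4) for world in "abcd"}
E = {"a", "b"}            # evidence rules out worlds c and d
H1 = {"a"}                # stronger than E
H2 = {"a", "b", "c"}      # weaker than E

for name, h in [("H1", H1), ("H2", H2), ("E", E)]:
    print(name, vs(h, E, dist))
```

In this toy model the hypothesis that coincides exactly with the evidence scores highest, because the coherence factor p(H & E) / p(H v E) reaches its maximum of 1 only when H and E pick out the same worlds.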