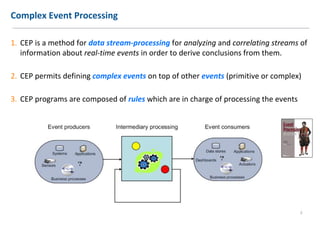

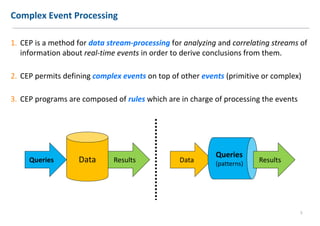

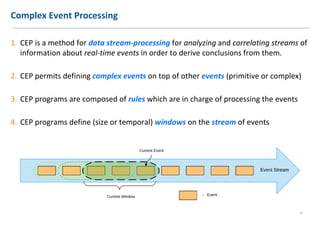

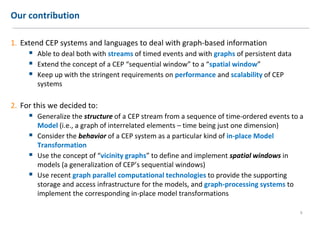

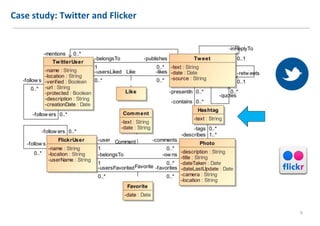

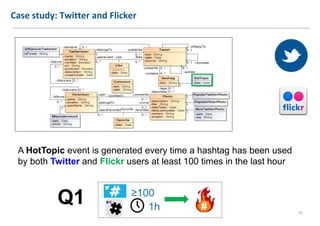

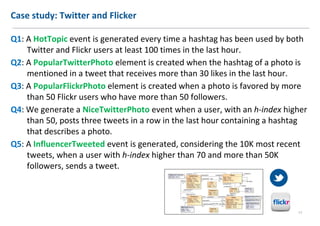

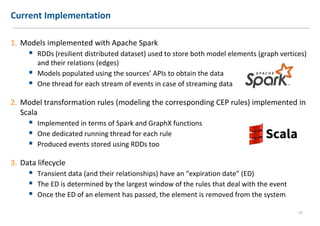

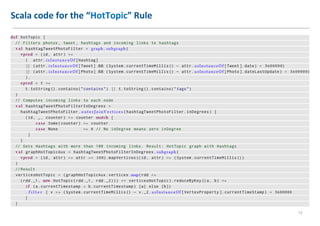

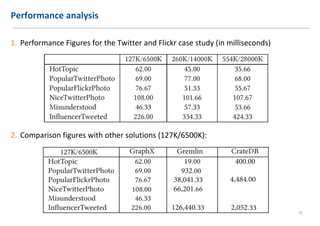

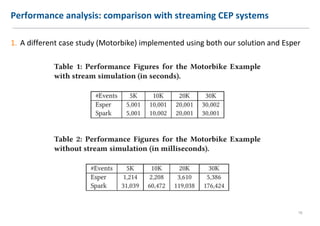

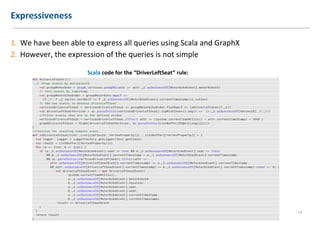

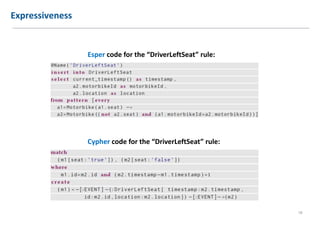

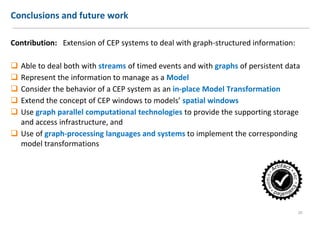

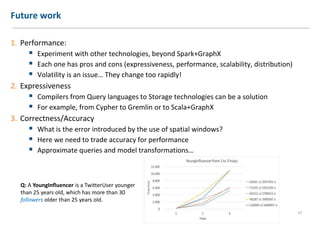

The document discusses extending Complex Event Processing (CEP) to handle graph-structured information, so that a CEP system can reason over both time-ordered events and persistent data graphs. It argues that CEP systems must improve in performance and scalability to handle such complex data structures, using Twitter and Flickr data as examples. The authors propose building on graph-parallel computation technologies and outline future work on performance, expressiveness, and correctness.
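To make "time-ordered events plus a persistent graph" more concrete, here is a minimal sketch, assuming a Twitter-like setting. A persistent follower graph is kept alongside a sliding time window of events, and each incoming event is matched against both. The class `GraphCEP`, the `Event` fields, and the pattern itself (a user repeats a hashtag that someone they follow posted within the window) are hypothetical illustrations, not the authors' system or query language.

```python
from collections import deque
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    time: float   # event timestamp (assumed to arrive in non-decreasing order)
    user: str
    hashtag: str


class GraphCEP:
    """Hypothetical sketch: match windowed events against a persistent graph."""

    def __init__(self, window: float):
        self.window = window
        self.follows: dict[str, set[str]] = {}  # persistent graph: user -> users they follow
        self.recent: deque[Event] = deque()     # events still inside the sliding window

    def add_edge(self, follower: str, followee: str) -> None:
        # Graph updates persist independently of the event window.
        self.follows.setdefault(follower, set()).add(followee)

    def on_event(self, ev: Event) -> list[tuple[str, str, str]]:
        # Evict events that have fallen out of the time window.
        while self.recent and ev.time - self.recent[0].time > self.window:
            self.recent.popleft()
        followed = self.follows.get(ev.user, set())
        # Pattern: ev.user repeats a hashtag posted by someone they follow.
        matches = [
            (old.user, ev.user, ev.hashtag)
            for old in self.recent
            if old.hashtag == ev.hashtag and old.user in followed
        ]
        self.recent.append(ev)
        return matches


if __name__ == "__main__":
    cep = GraphCEP(window=60.0)
    cep.add_edge("bob", "alice")                           # bob follows alice
    print(cep.on_event(Event(0.0, "alice", "#cep")))       # []
    print(cep.on_event(Event(30.0, "bob", "#cep")))        # [('alice', 'bob', '#cep')]
    print(cep.on_event(Event(200.0, "carol", "#cep")))     # [] (window expired)
```

This sketch scans the whole window for every event and keeps the graph in one process, which is exactly where the scalability concerns above arise; a graph-parallel design would instead partition the graph and route each event to the partitions holding the relevant vertices.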