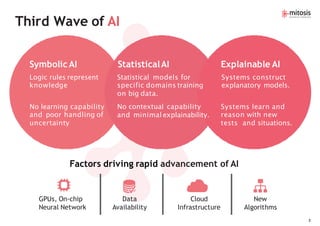

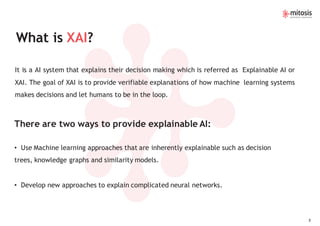

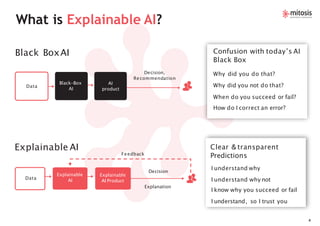

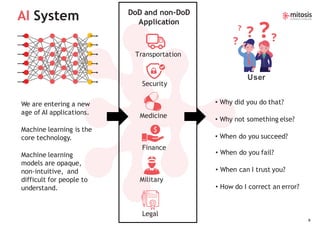

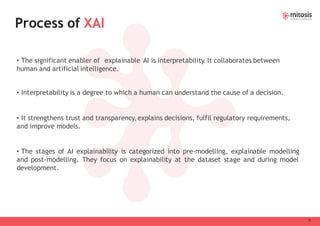

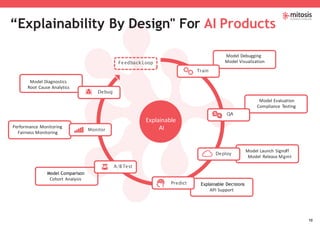

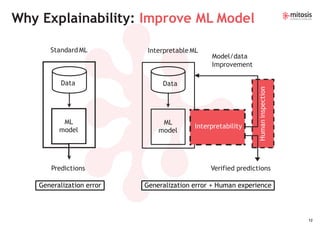

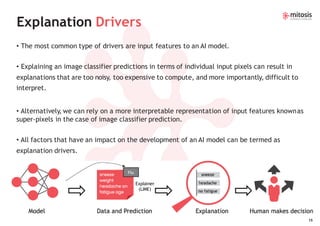

The document discusses rapid advances in explainable AI (XAI), emphasizing that artificial intelligence systems need transparency and accountability if their decisions are to be trusted. It outlines several approaches to explainability, including inherently interpretable machine learning models and newer techniques for explaining complex neural networks. It also highlights the goals and challenges of explainability, such as improving model interpretability and meeting regulatory requirements.