This document contains a glossary of terms related to video game design and development. It provides definitions for terms like demo, beta, alpha, pre-alpha, gold, debug, automation, white-box testing, bug, vertex shader, and pixel shader. For each term, it gives a short definition from an online source and describes how the term relates to the production practice of video games.

![Salford City College

Eccles Sixth Form Centre

BTEC Extended Diploma in GAMES DESIGN

Unit 73: Sound For Computer Games

IG2 Task 1


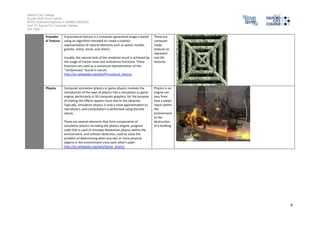

Collision: Collision detection typically refers to the computational problem of detecting the intersection of two or more objects. While the topic is most often associated with its use in video games and other physical simulations, it also has applications in robotics. In addition to determining whether two objects have collided, collision detection systems may also calculate time of impact (TOI), and report a contact manifold (the set of intersecting points).[1] Collision response deals with simulating what happens when a collision is detected (see physics engine, ragdoll physics). Solving collision detection problems requires extensive use of concepts from linear algebra and computational geometry.

http://en.wikipedia.org/wiki/Collision_detection

Collision detection is used so that models do not clip into each other.

Lighting: Computer graphics lighting refers to the simulation of light in computer graphics. This simulation can either be extremely accurate, as is the case in an application like Radiance which attempts to track the energy flow of light interacting with materials using radiosity computational techniques. Alternatively, the simulation can simply be inspired by light physics, as is the case with non-photorealistic rendering. In both cases, a shading model is used to describe how surfaces respond to light. Between these two extremes, there are many different rendering approaches which can be employed to achieve almost any desired visual result.

http://en.wikipedia.org/wiki/Computer_graphics_lighting

Lighting is used so that the environment made in a game looks more realistic.](https://image.slidesharecdn.com/engineterminology1-140915071741-phpapp01/85/Engine-Terminology-1-10-320.jpg)
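As a rough illustration of the two terms on this slide, the sketch below shows one simple way each idea can look in code. It is not taken from the slide or from any particular engine. The `AABB` class and the function names `aabbs_overlap` and `lambert_diffuse` are hypothetical, and the choice of axis-aligned bounding boxes for collision and a Lambertian diffuse term as the shading model is an assumption made only to keep the example short.

```python
import math
from dataclasses import dataclass


@dataclass
class AABB:
    """Axis-aligned bounding box (hypothetical stand-in for a model's collision volume)."""
    min_x: float
    min_y: float
    max_x: float
    max_y: float


def aabbs_overlap(a: AABB, b: AABB) -> bool:
    """Return True when the two boxes intersect (touching edges count as overlap)."""
    return (a.min_x <= b.max_x and b.min_x <= a.max_x
            and a.min_y <= b.max_y and b.min_y <= a.max_y)


def lambert_diffuse(normal, to_light):
    """Lambertian diffuse factor in [0, 1].

    Computes the cosine of the angle between the surface normal and the
    direction to the light, clamped at zero for surfaces facing away.
    Assumes both vectors are non-zero.
    """
    def normalize(v):
        length = math.sqrt(sum(c * c for c in v))
        return tuple(c / length for c in v)

    n = normalize(normal)
    l = normalize(to_light)
    return max(0.0, sum(nc * lc for nc, lc in zip(n, l)))


if __name__ == "__main__":
    player = AABB(0, 0, 2, 2)
    crate = AABB(1, 1, 3, 3)
    # Overlapping boxes: a game would now run its collision response.
    print(aabbs_overlap(player, crate))
    # A surface facing straight at the light receives the full diffuse term.
    print(lambert_diffuse((0, 0, 1), (0, 0, 5)))
```

A real engine would typically use the box test only as a cheap first pass before more exact checks, and would combine the diffuse term with other lighting terms. The sketch only shows the basic idea behind each definition.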