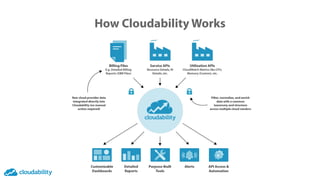

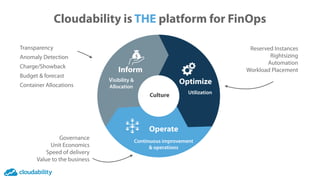

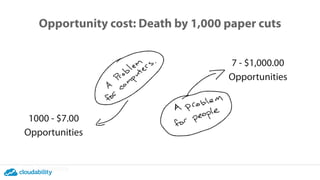

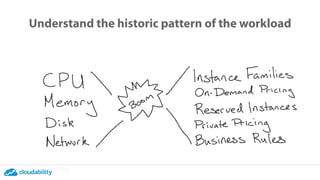

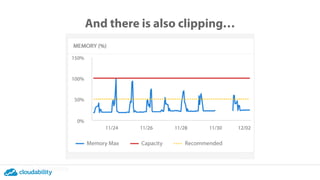

The document discusses a presentation on efficiency and cost optimization in cloud resource management with the Cloudability platform. It emphasizes the importance of historical workload patterns and the role of Datadog metrics in producing accurate rightsizing recommendations. The goal is to improve financial transparency and optimize cloud operations for companies that manage significant cloud expenditures.