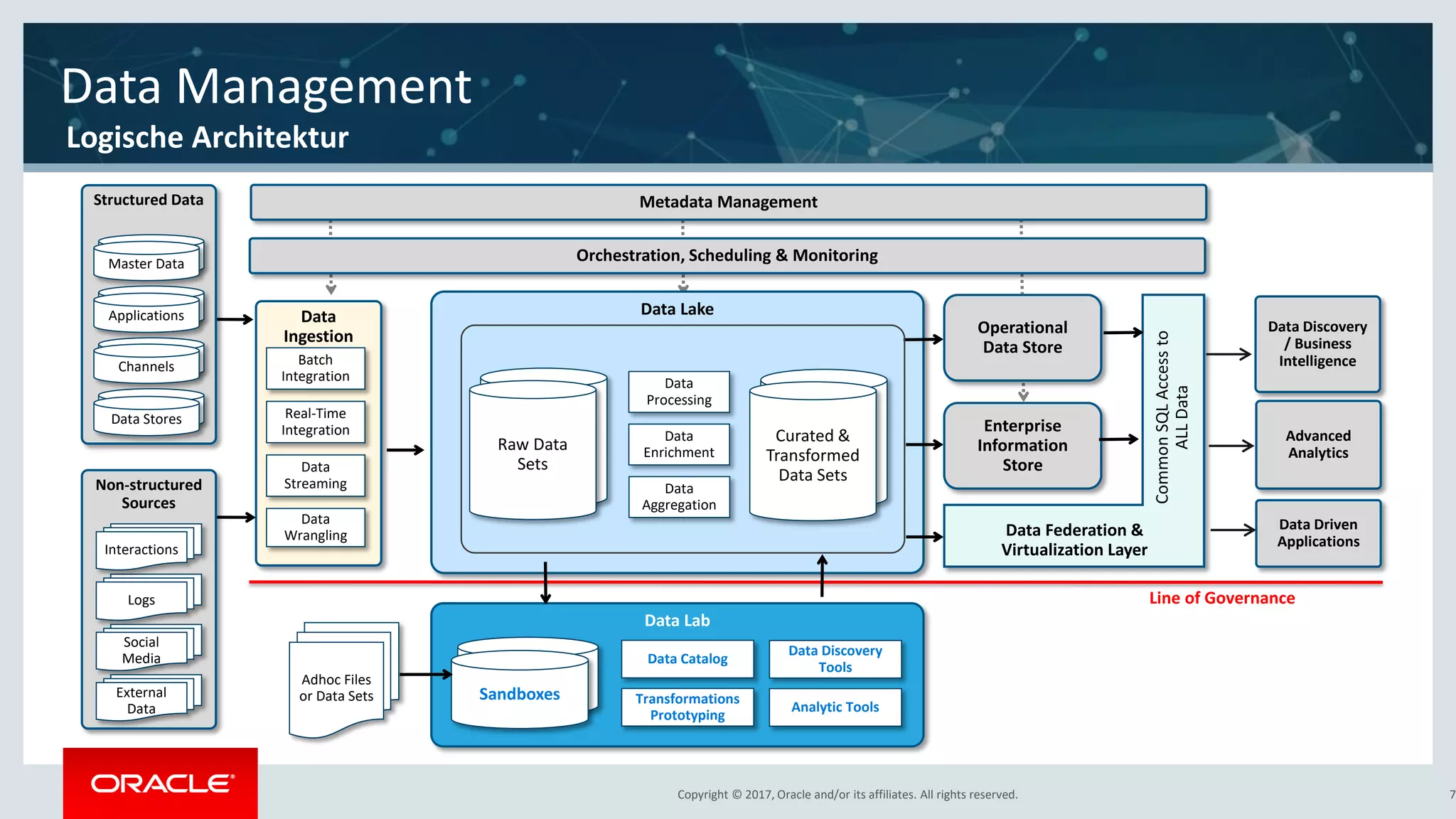

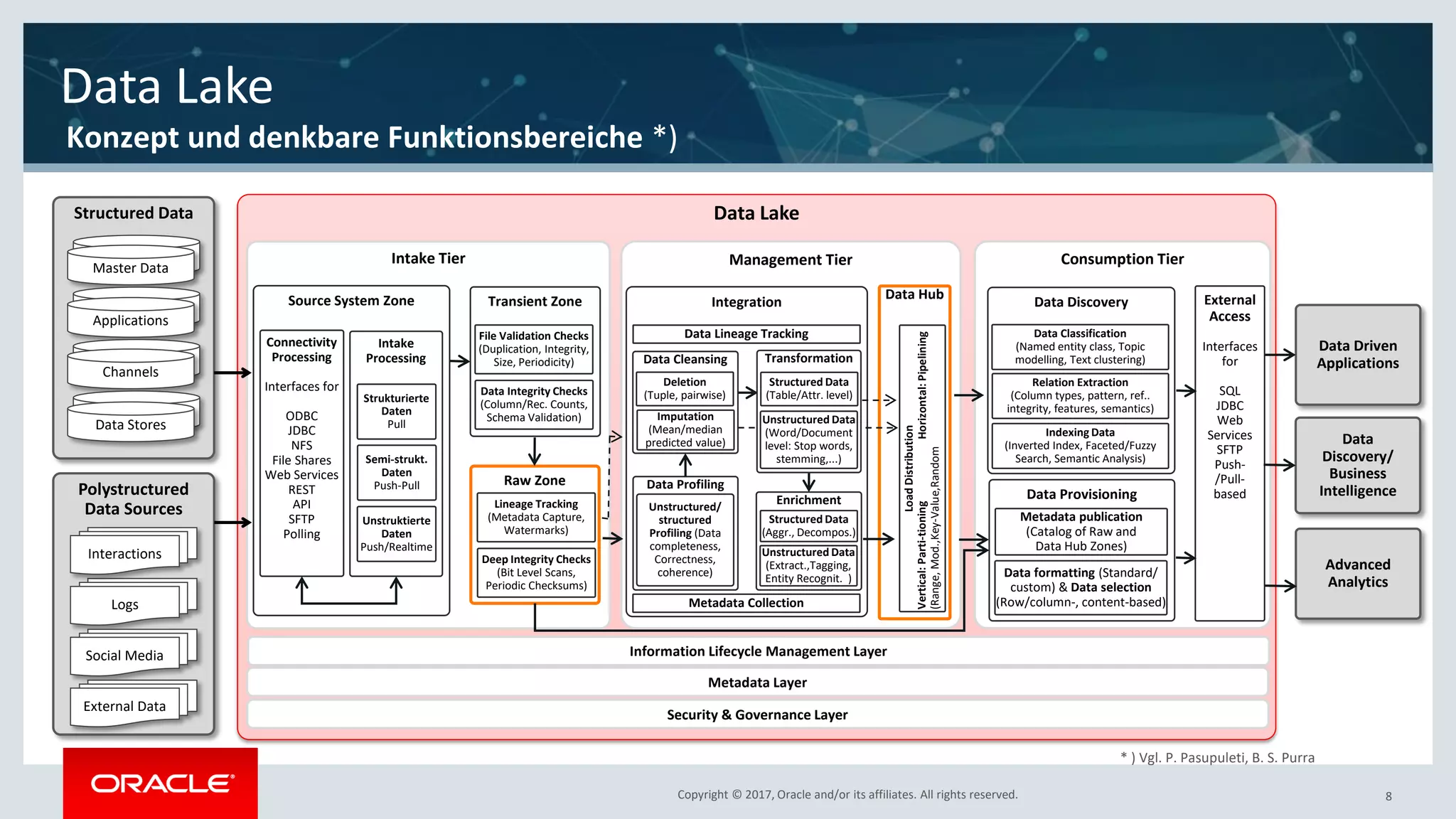

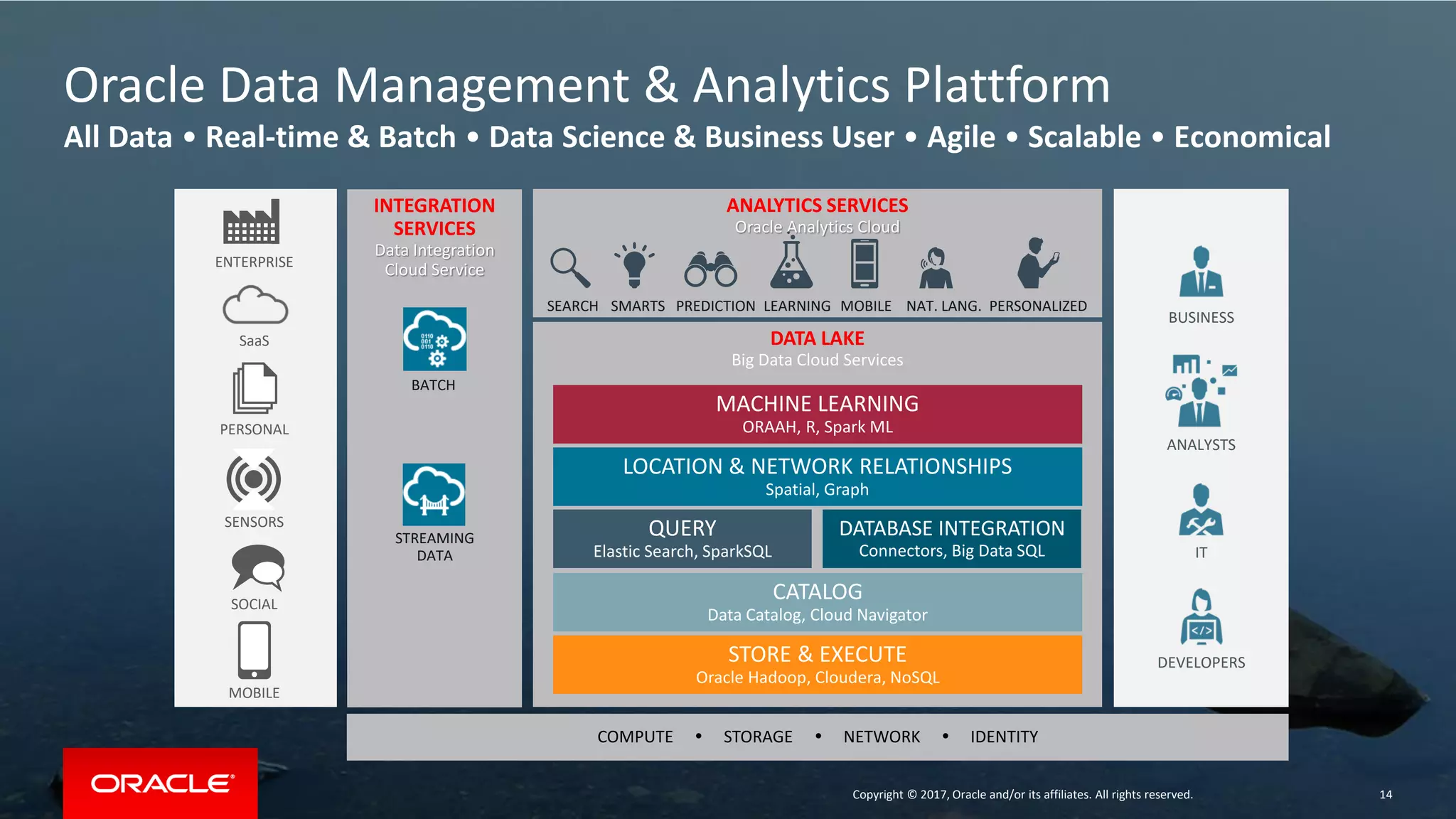

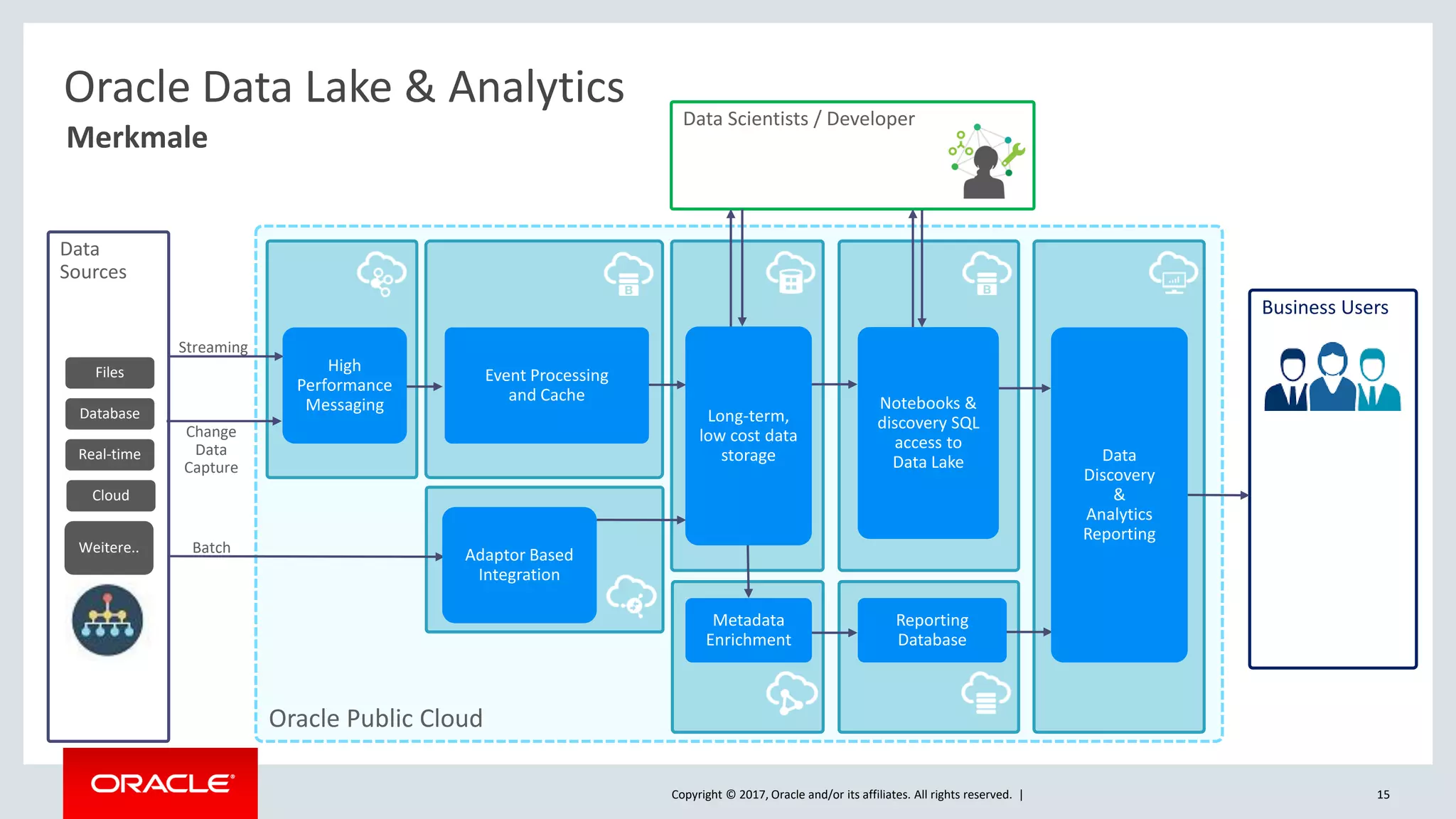

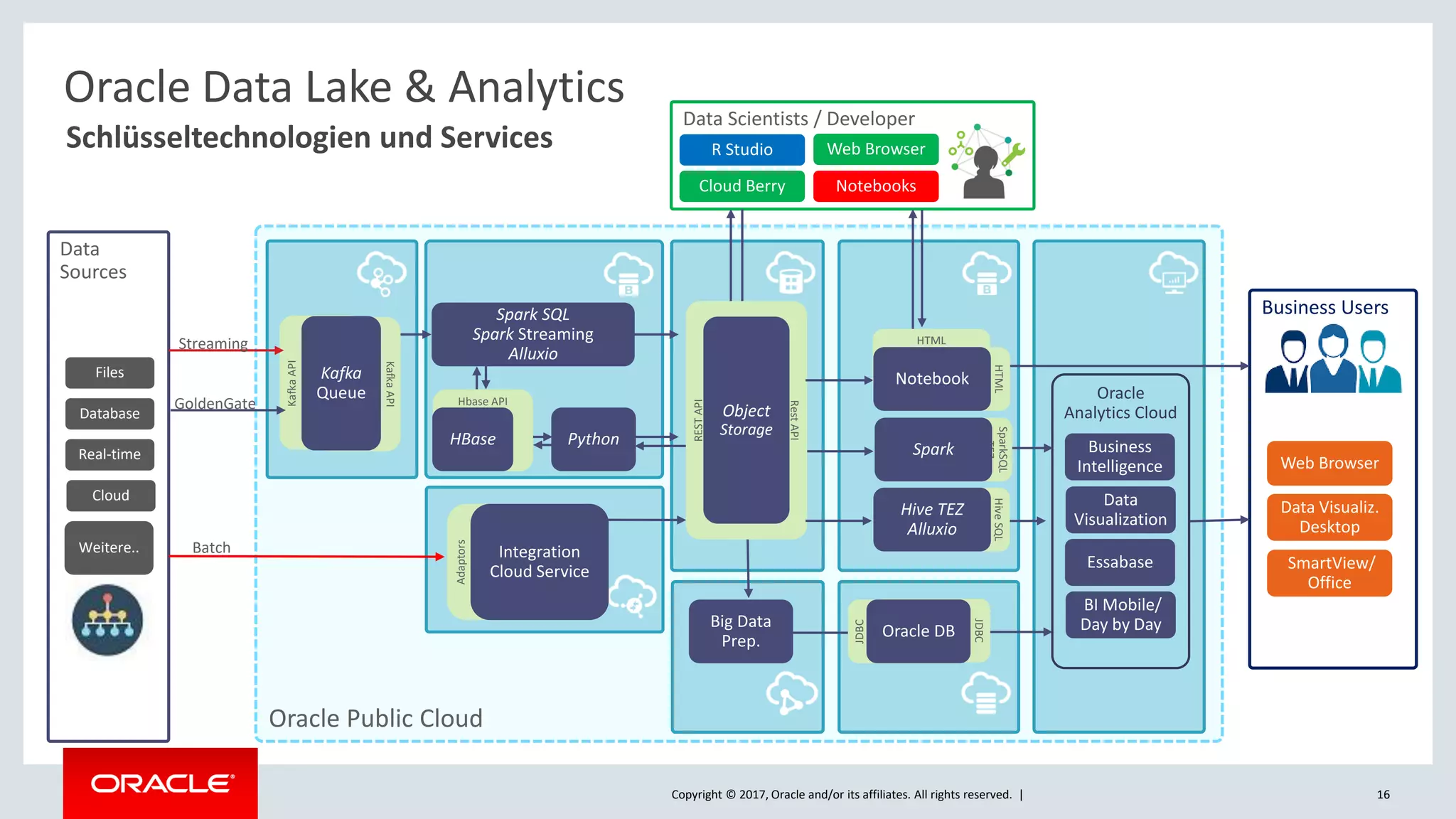

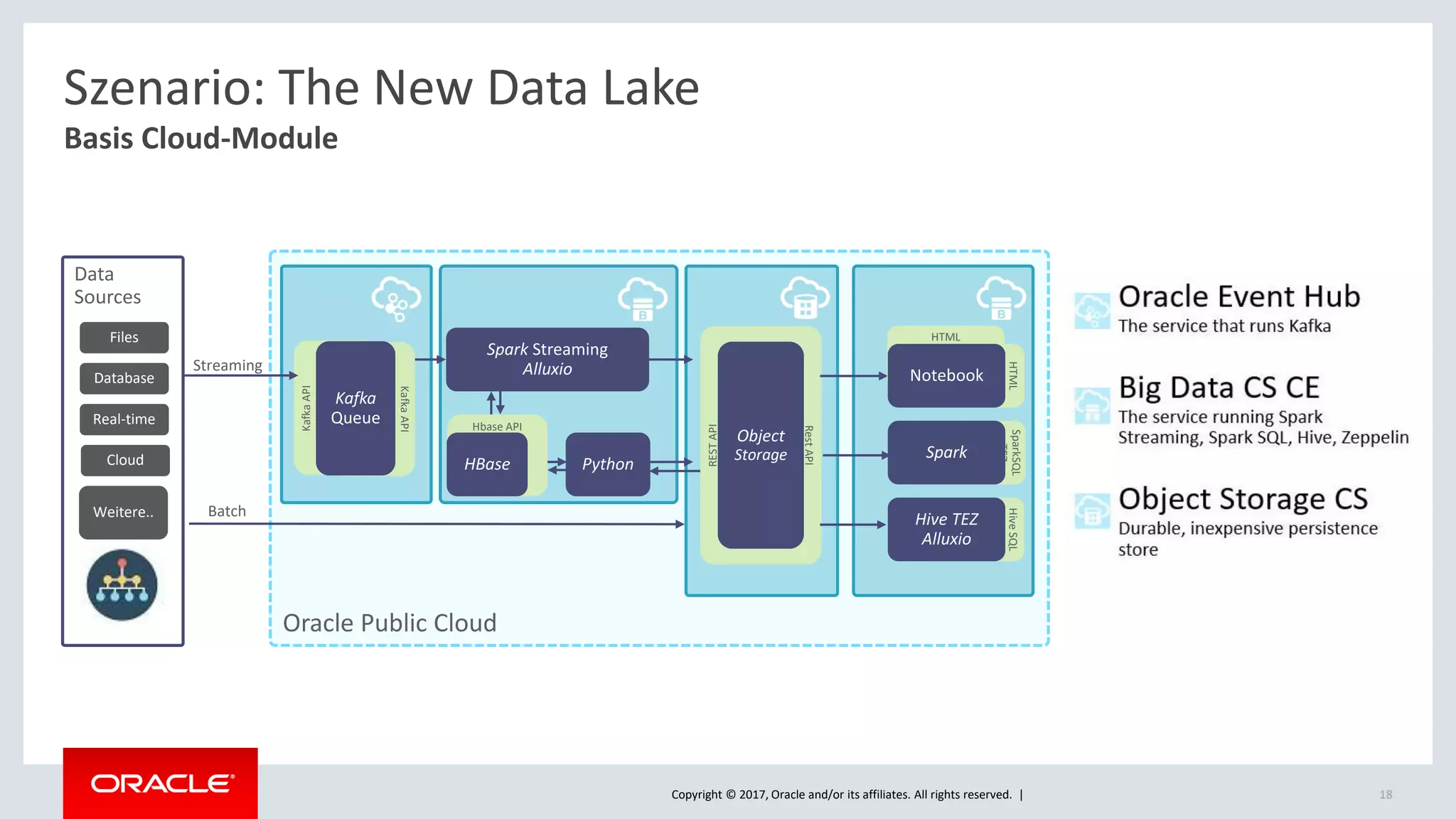

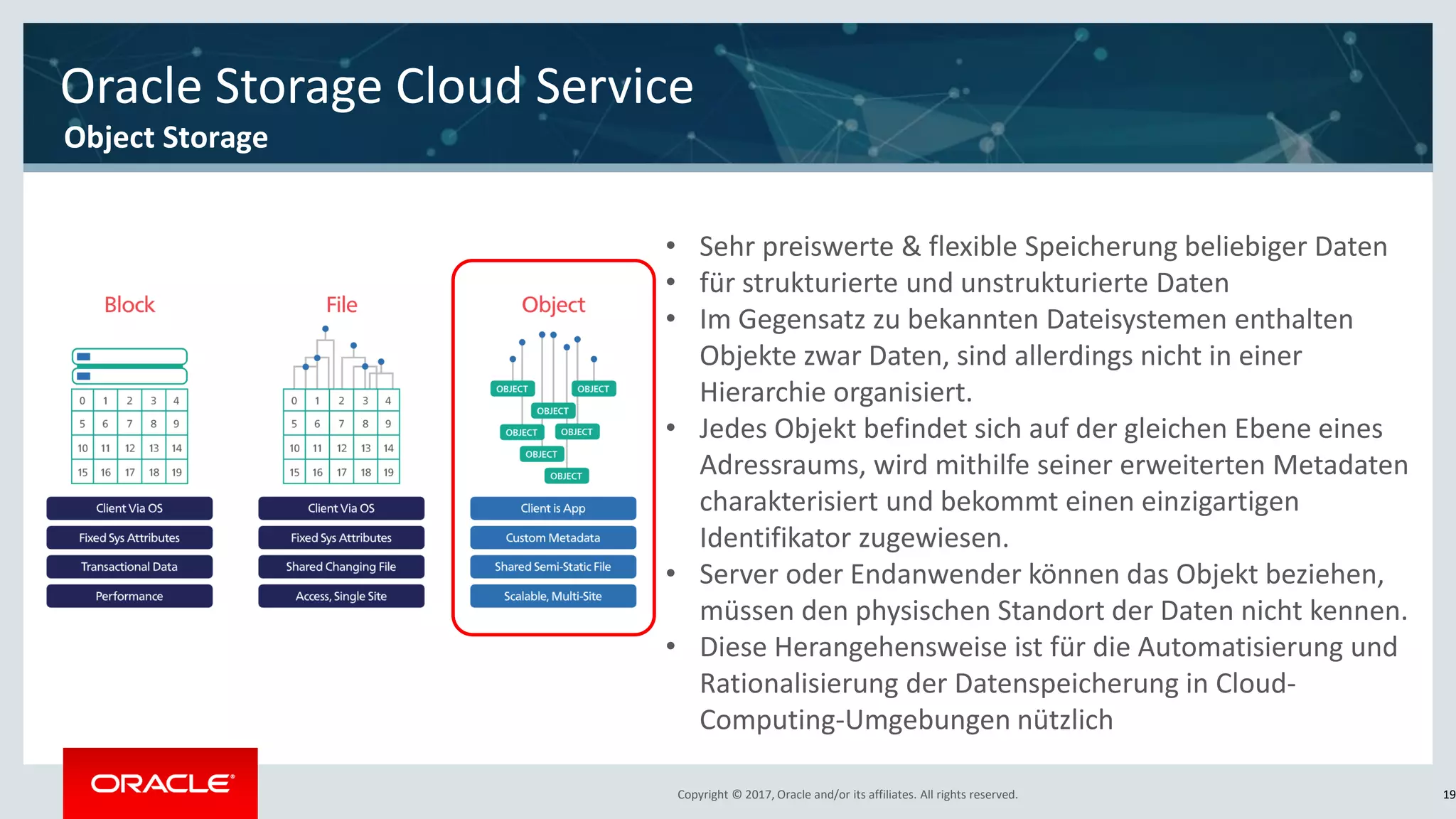

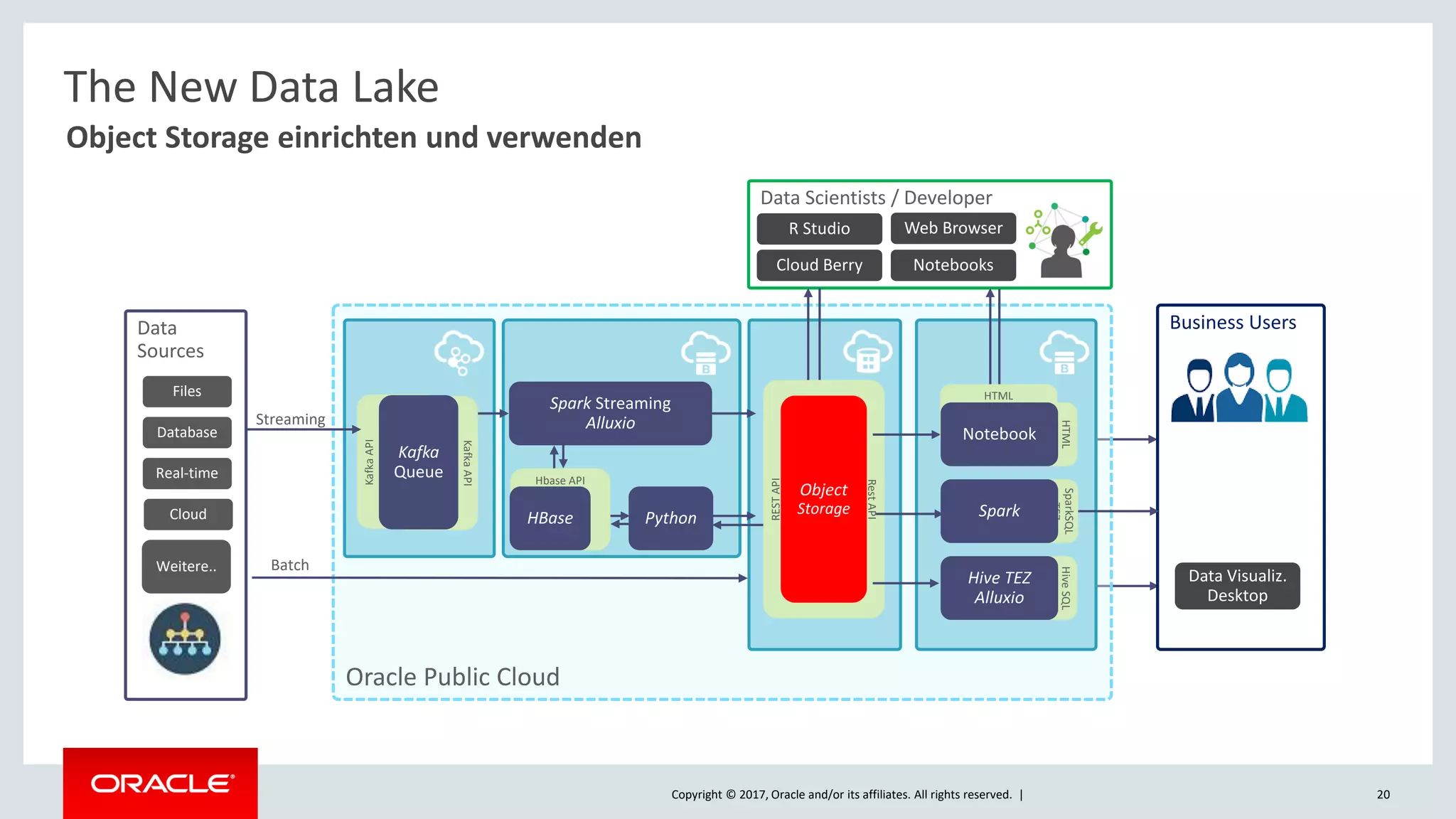

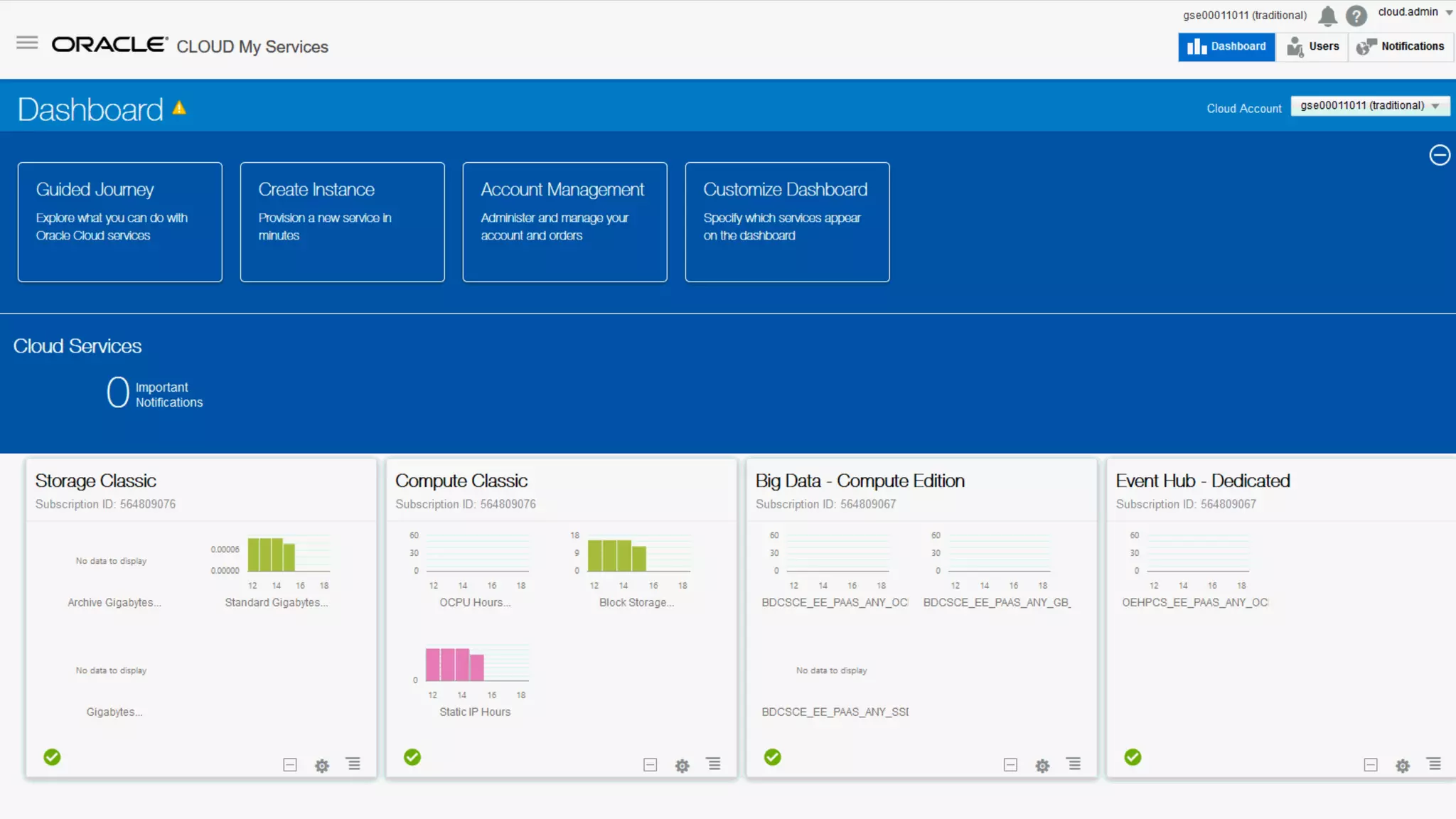

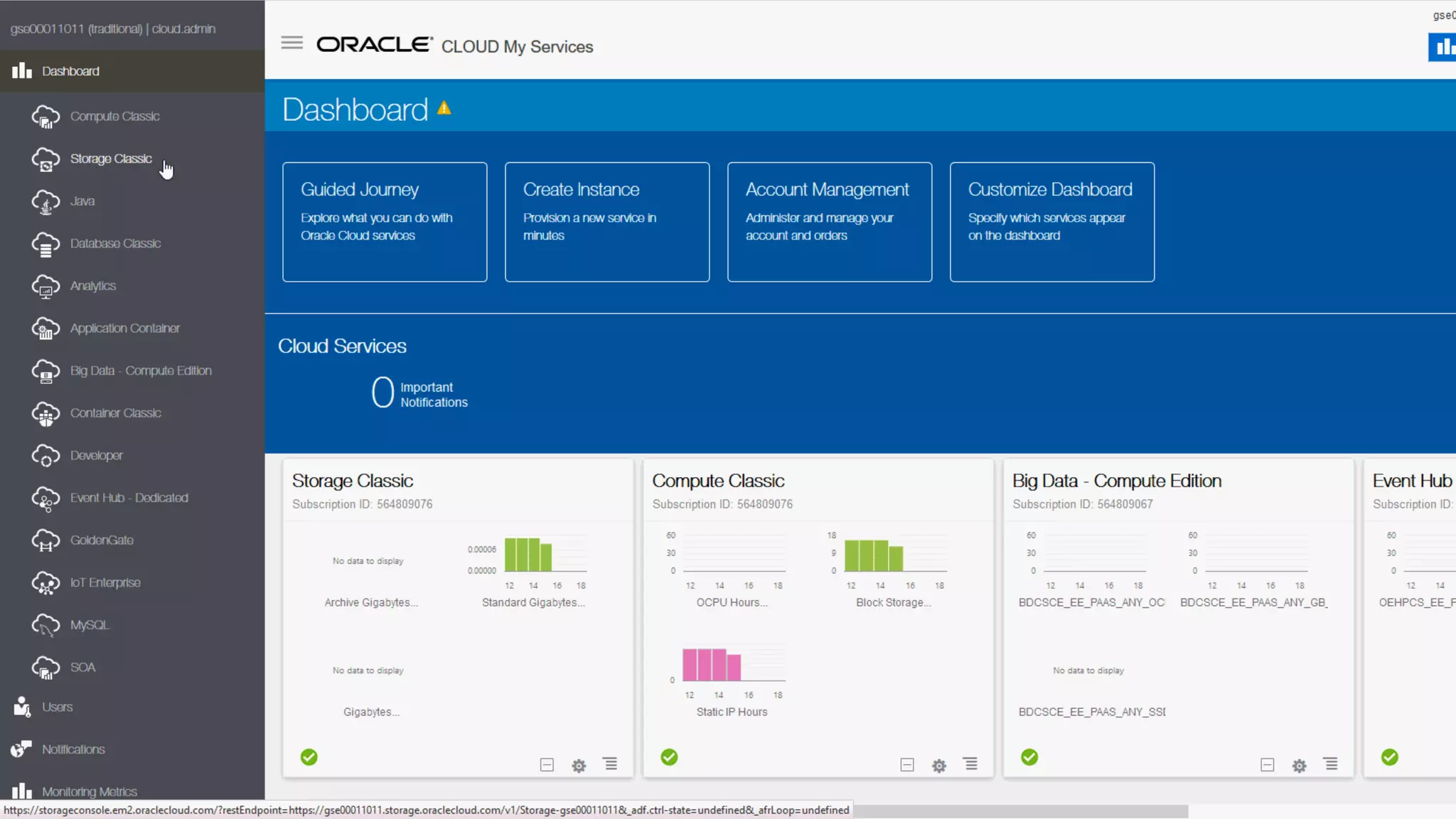

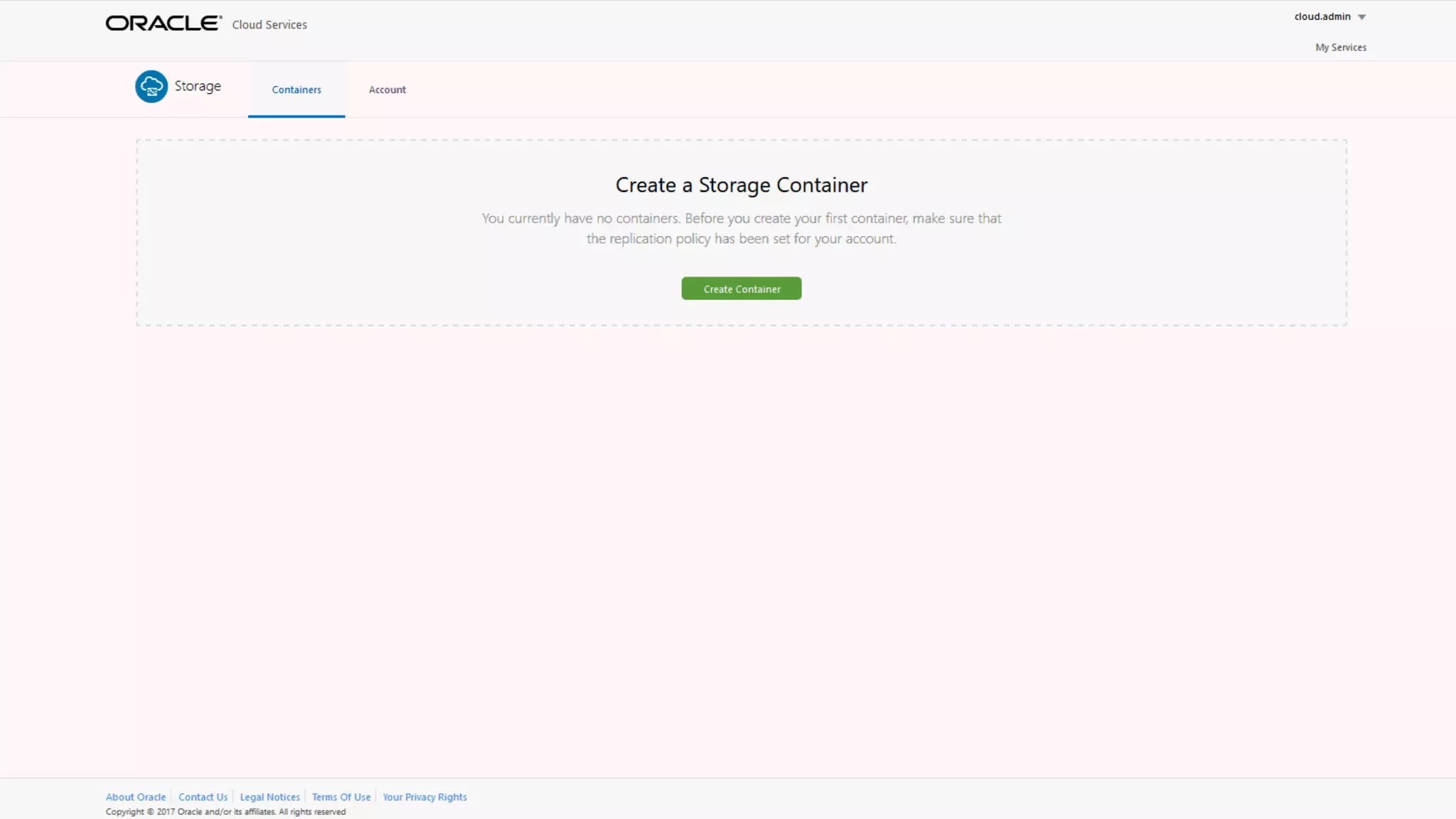

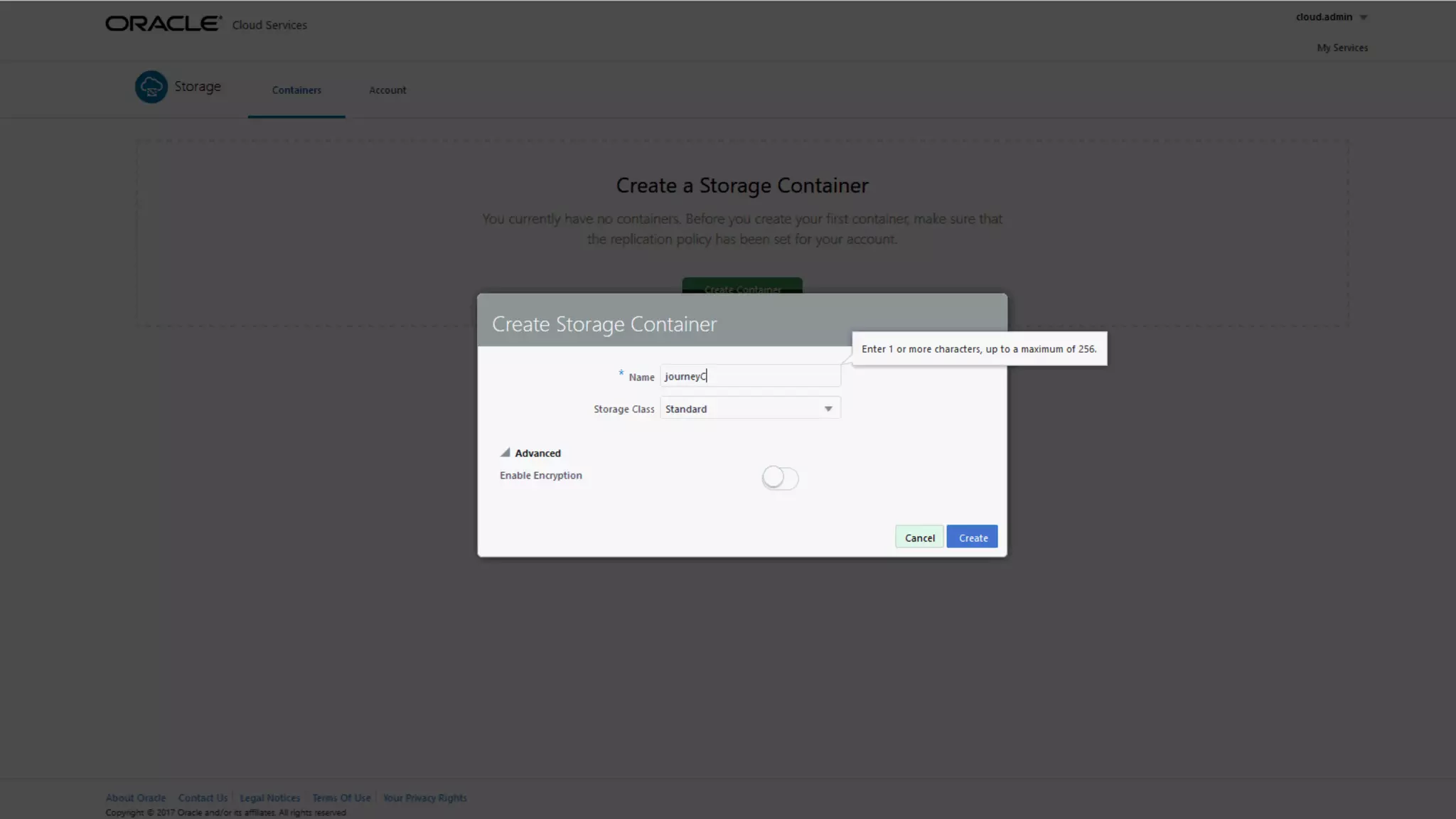

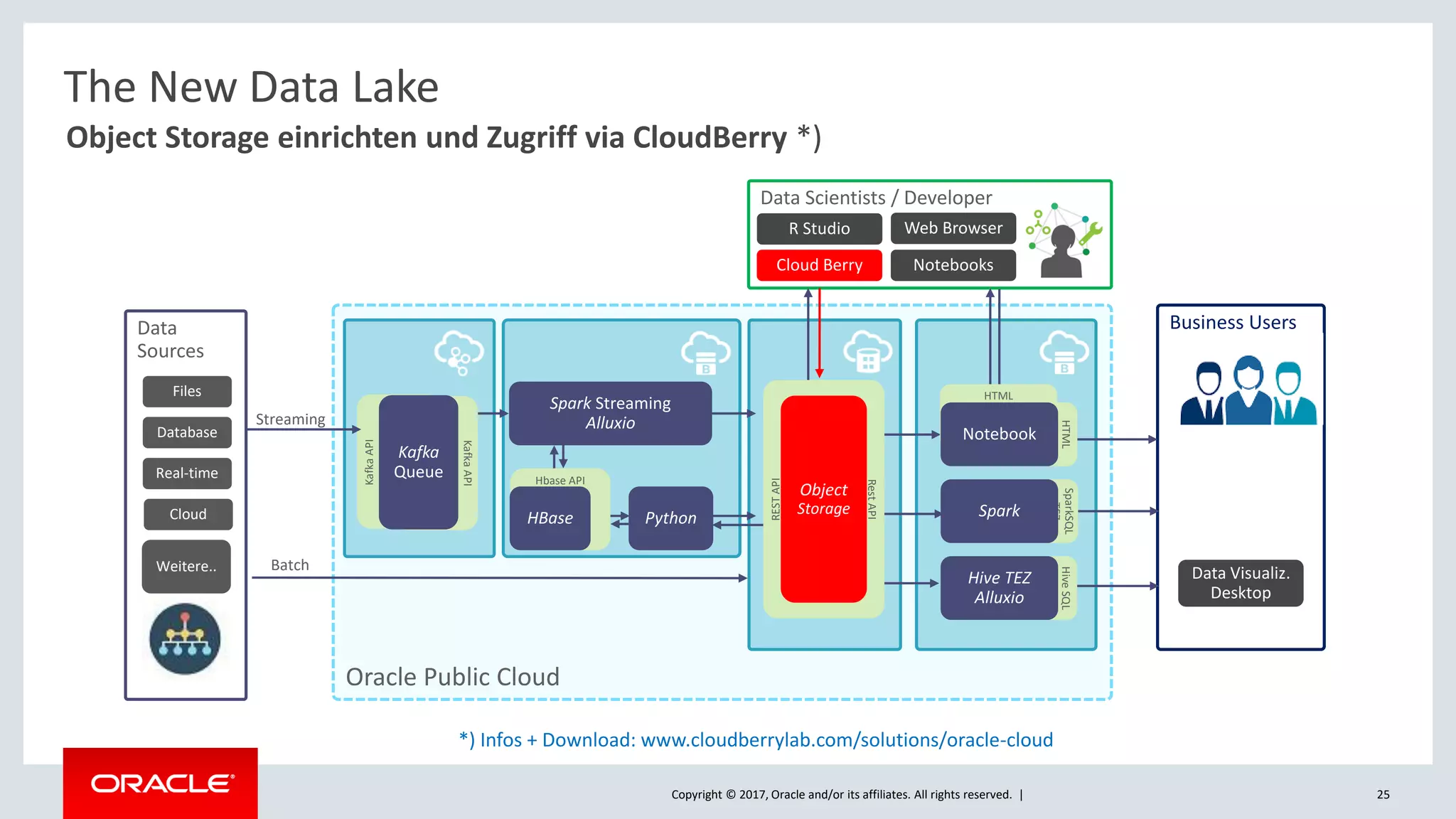

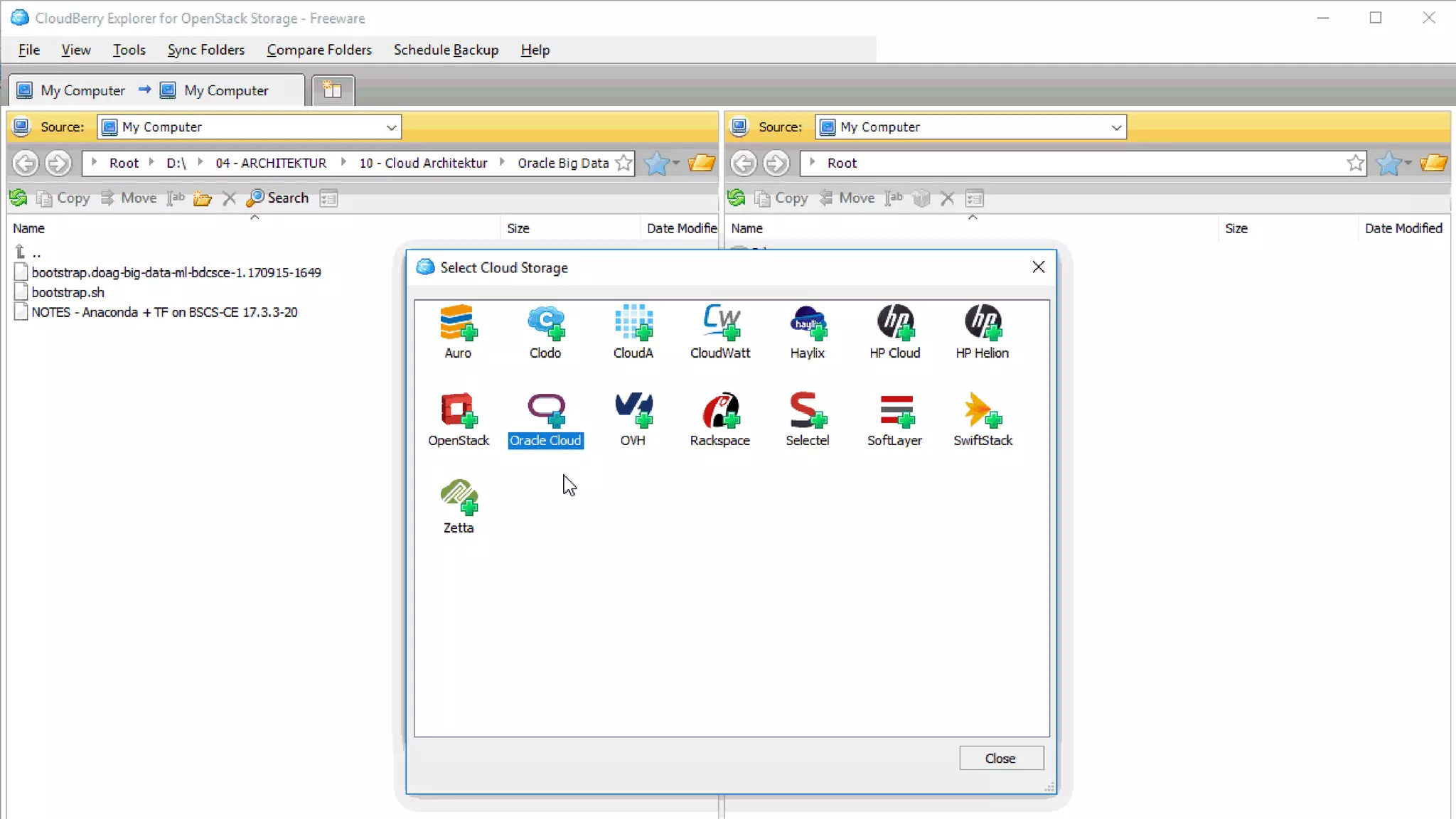

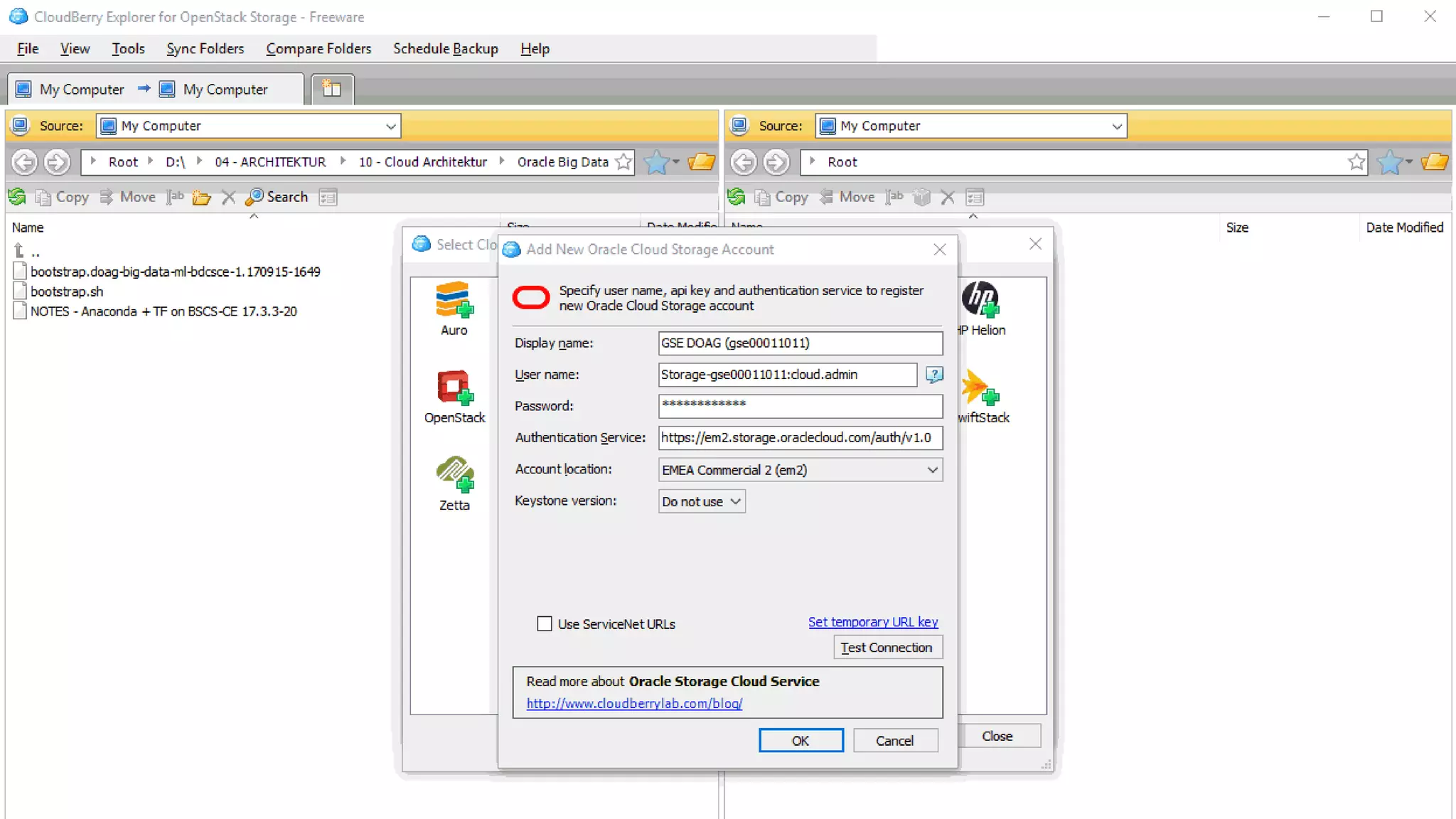

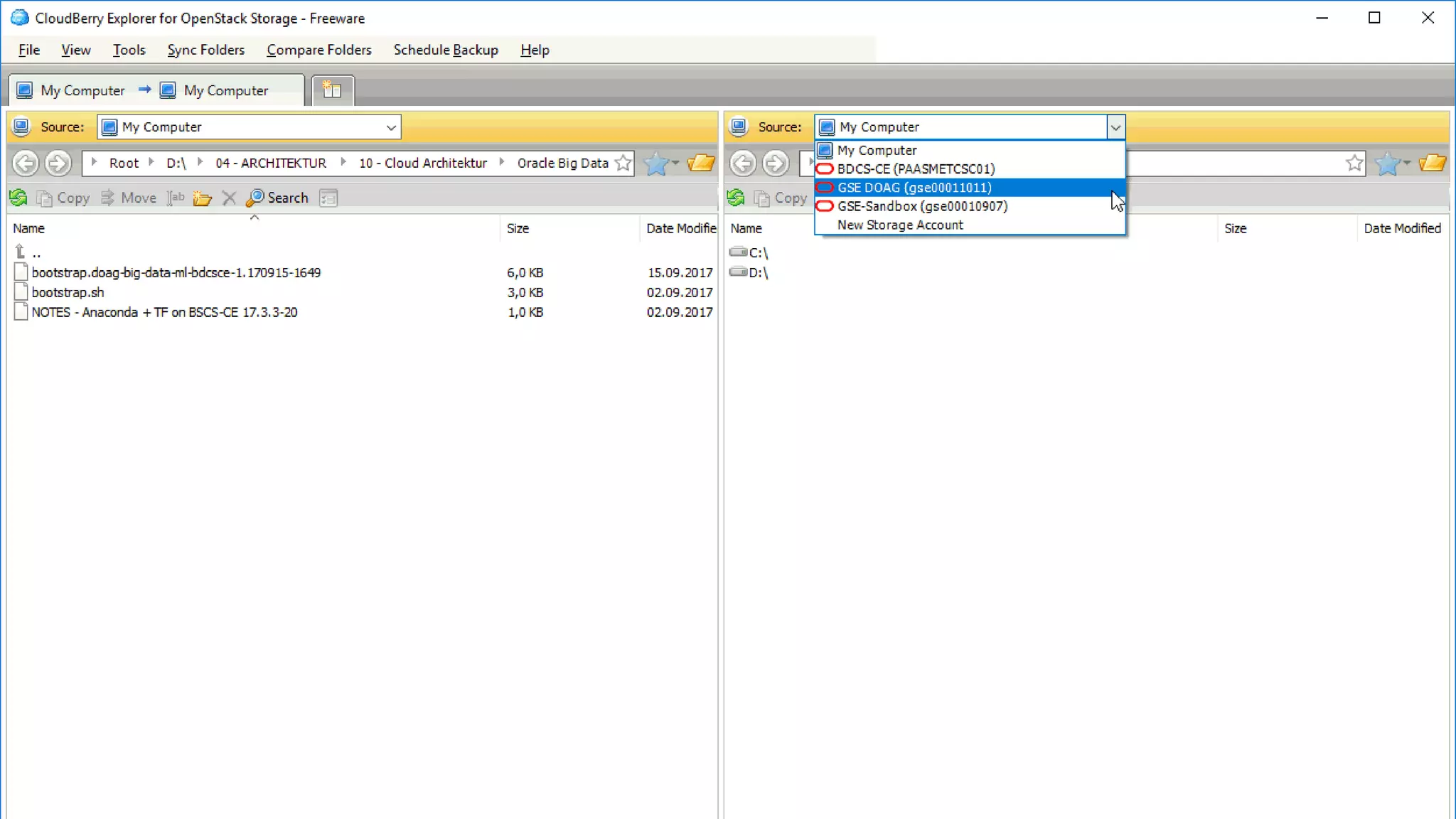

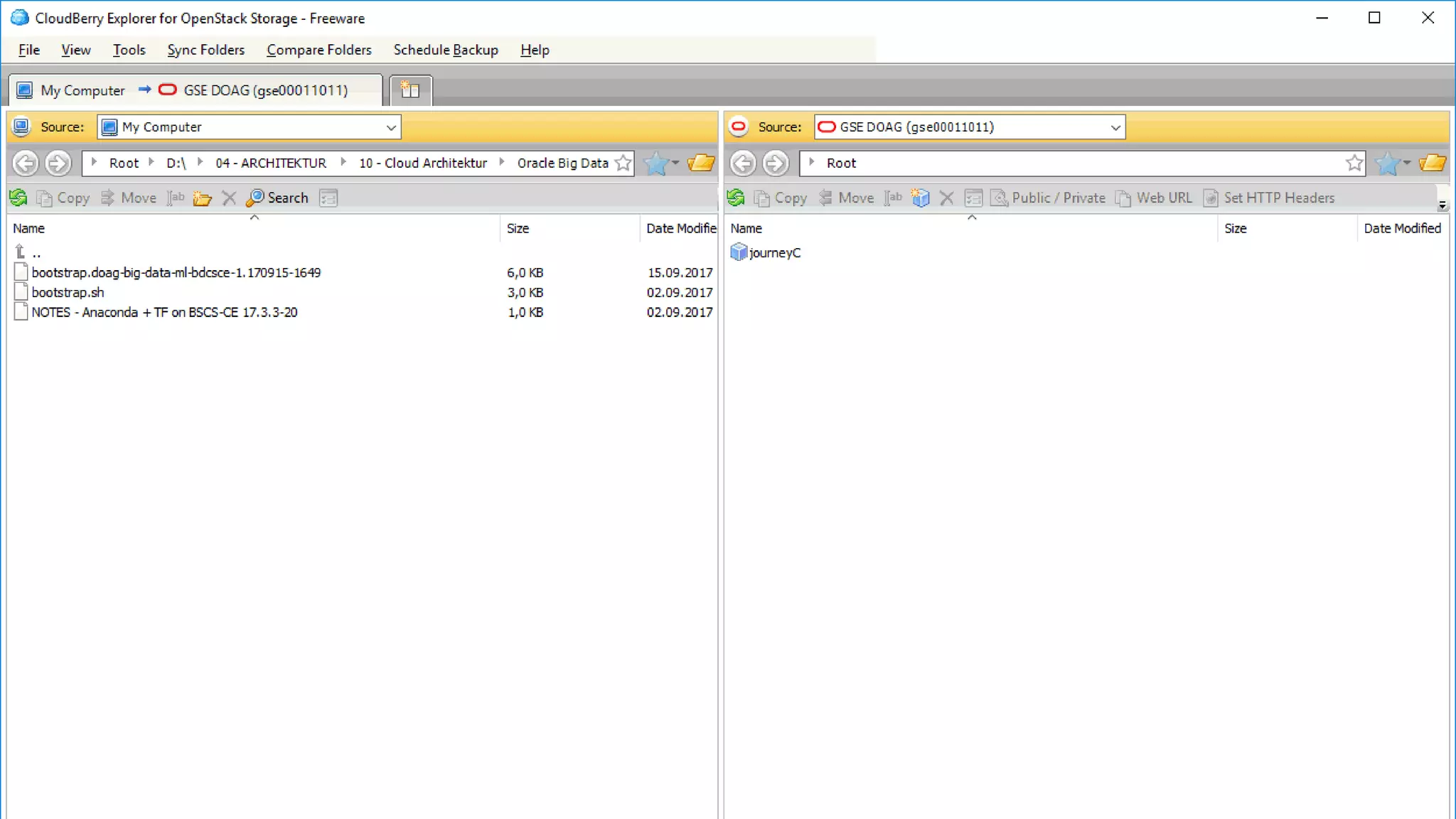

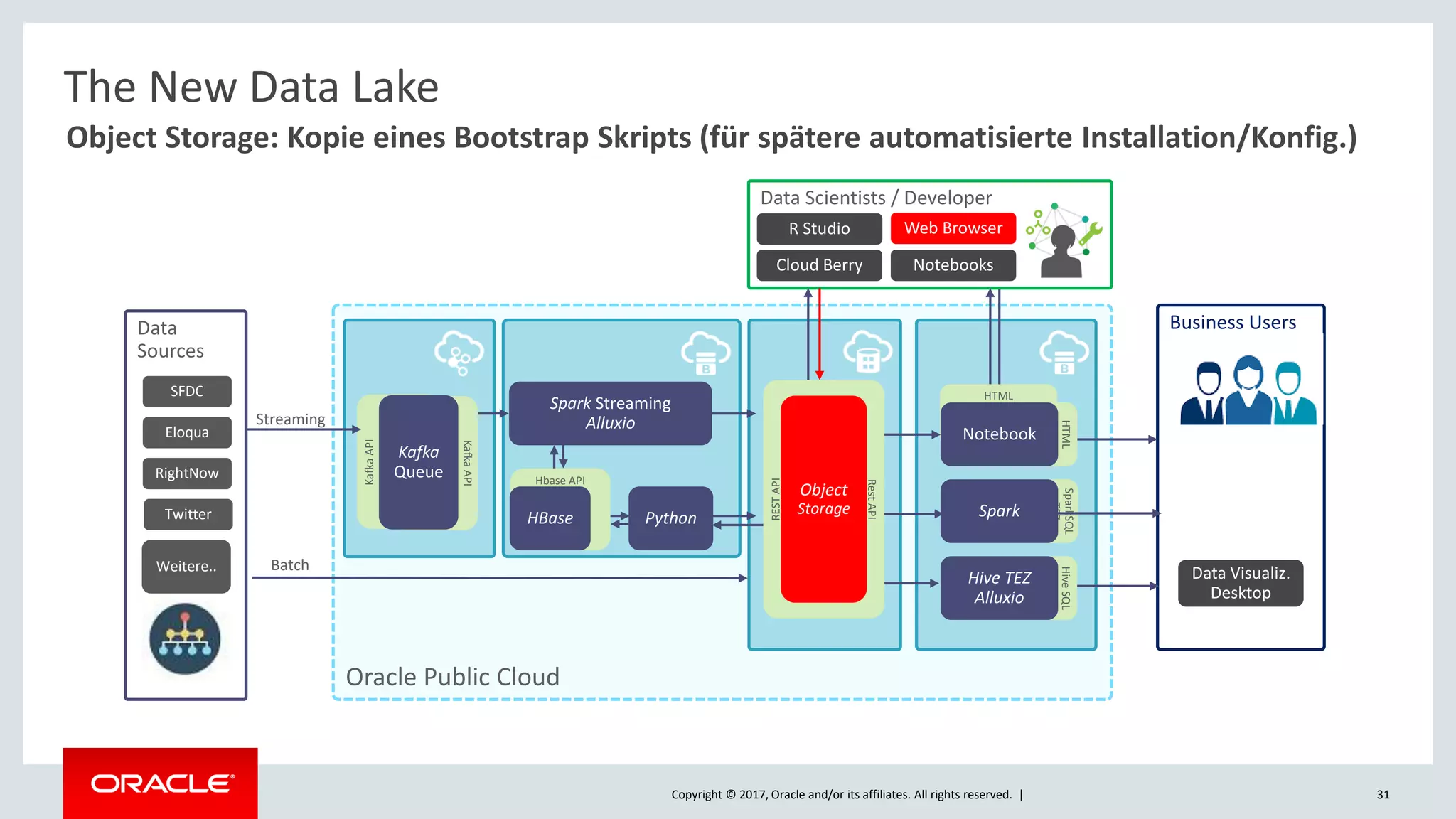

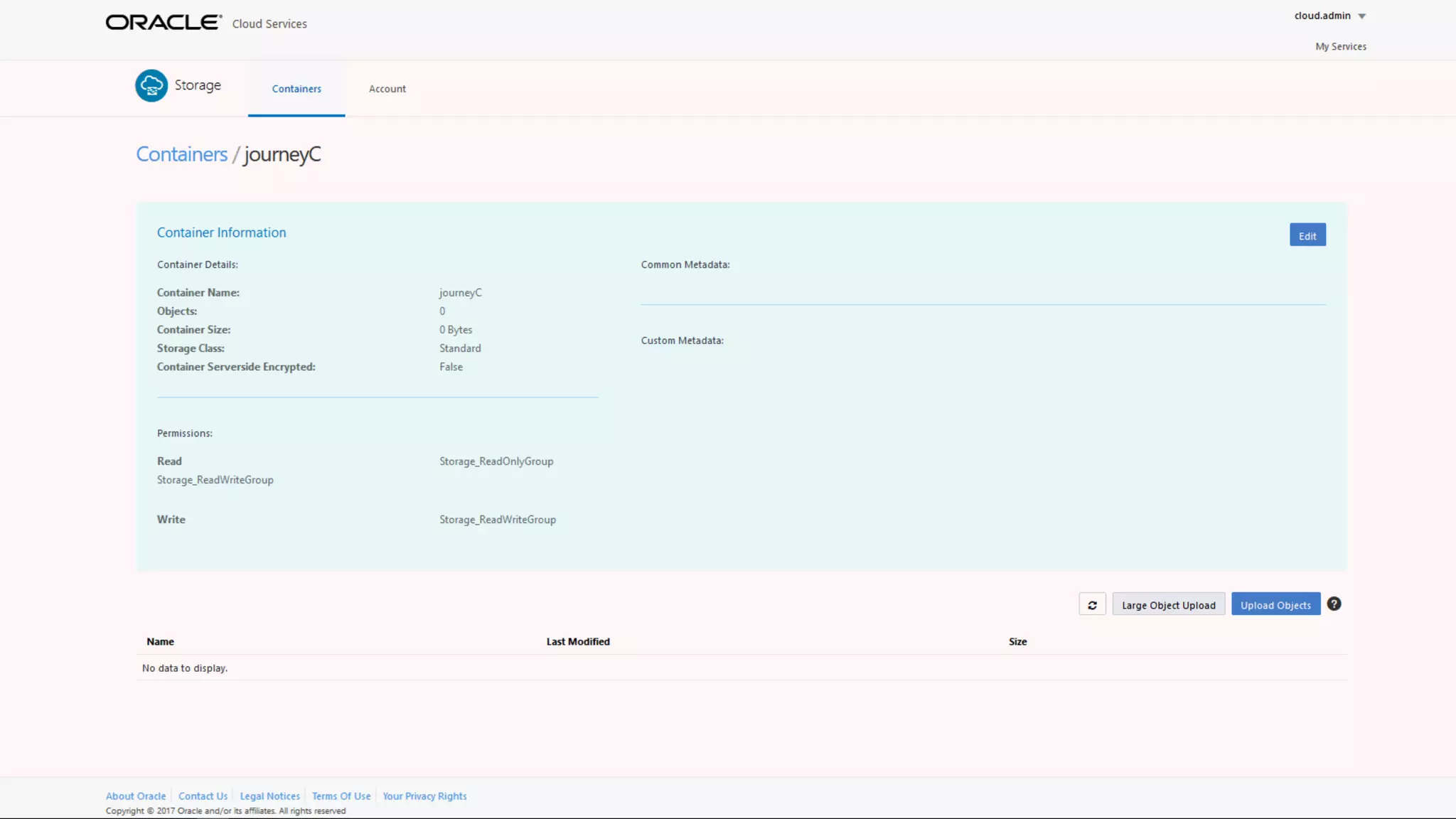

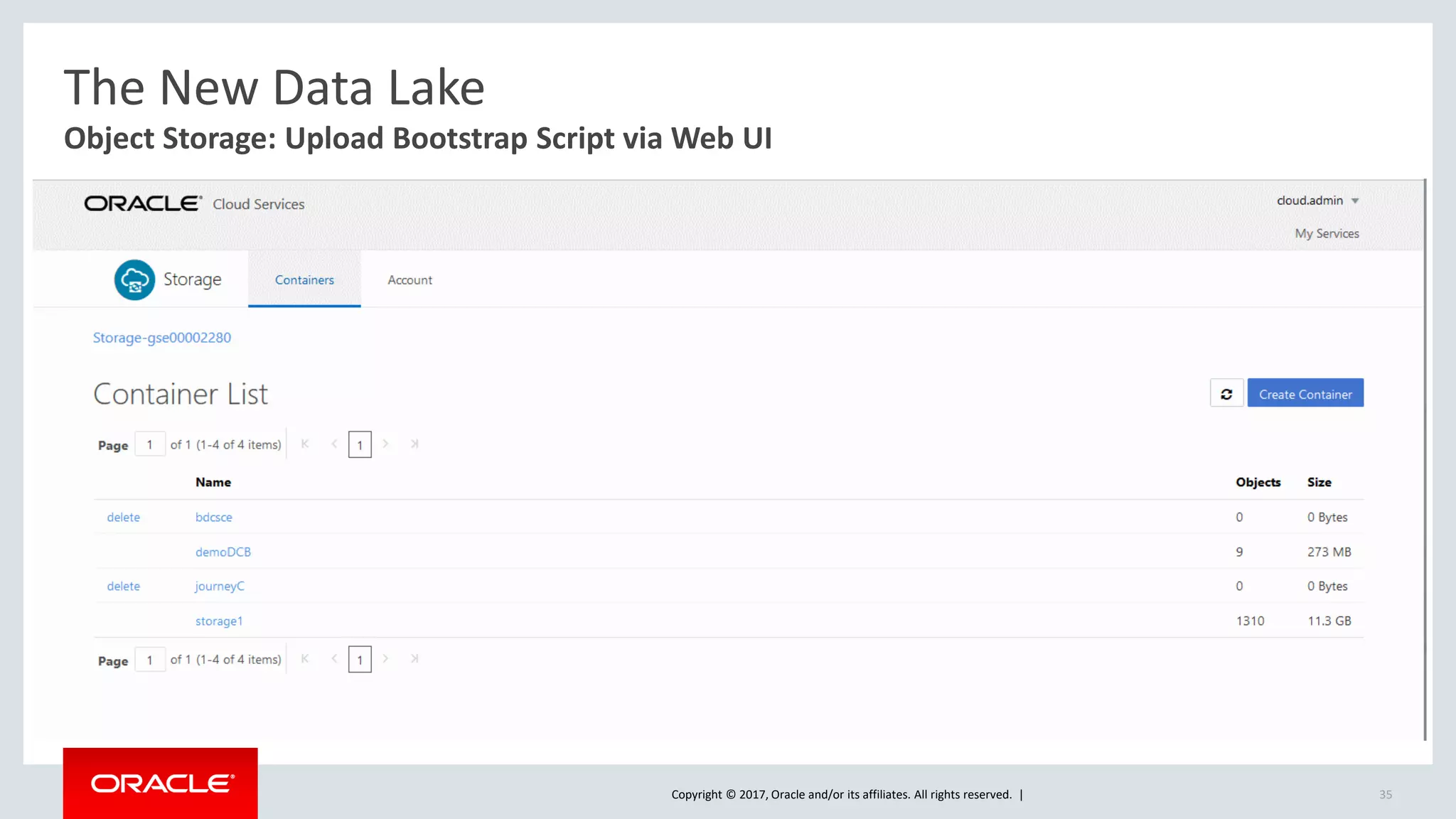

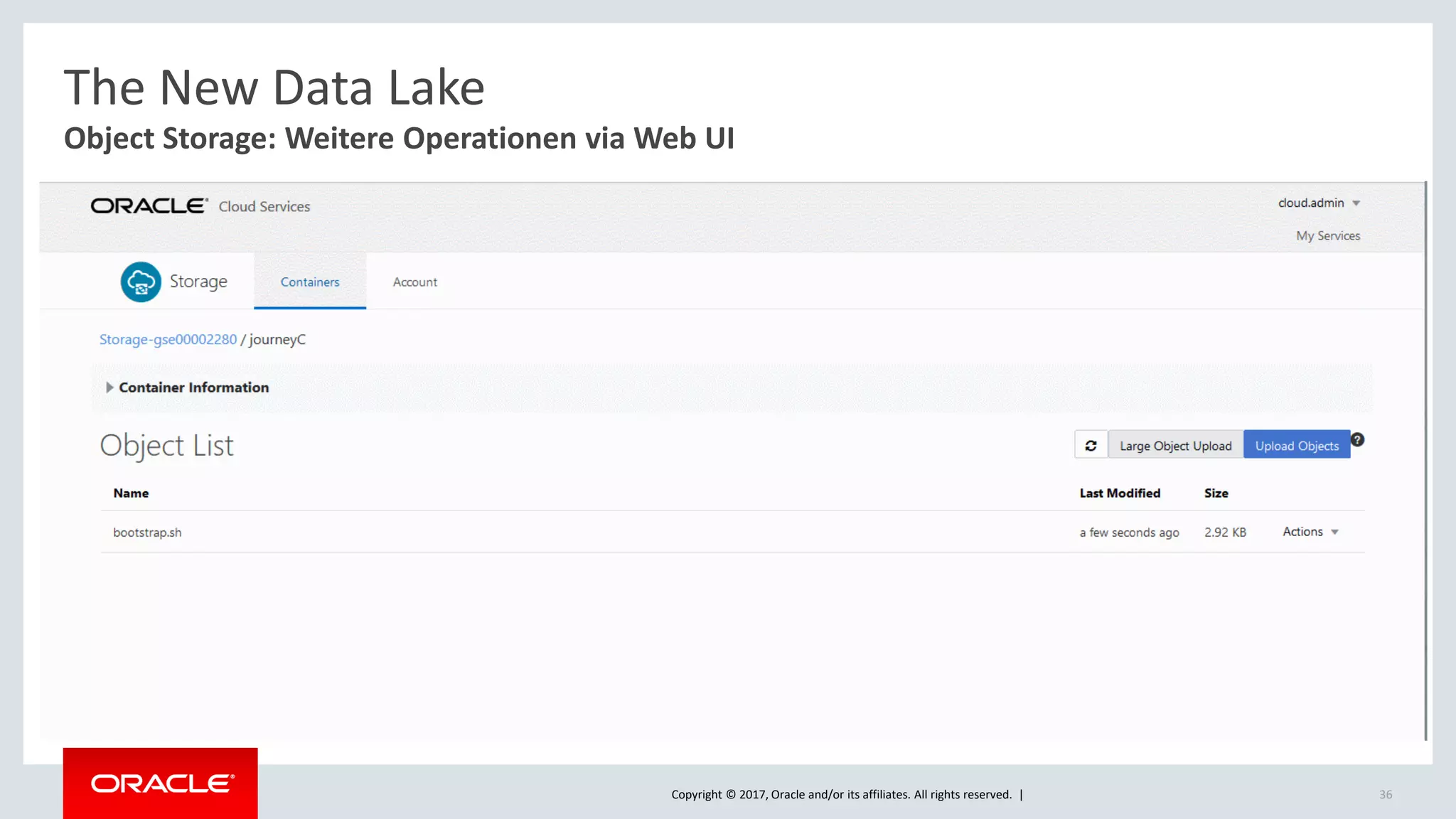

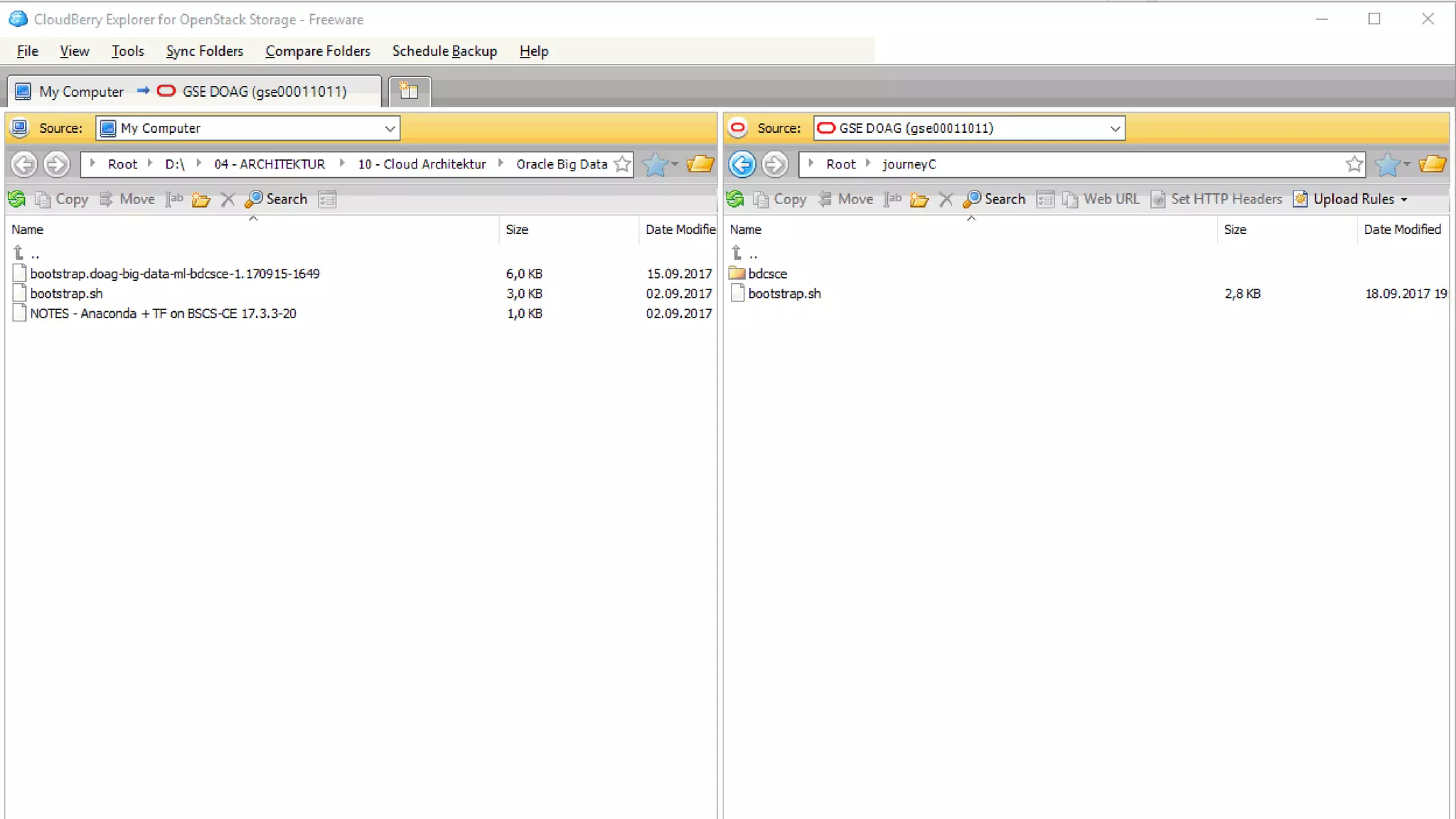

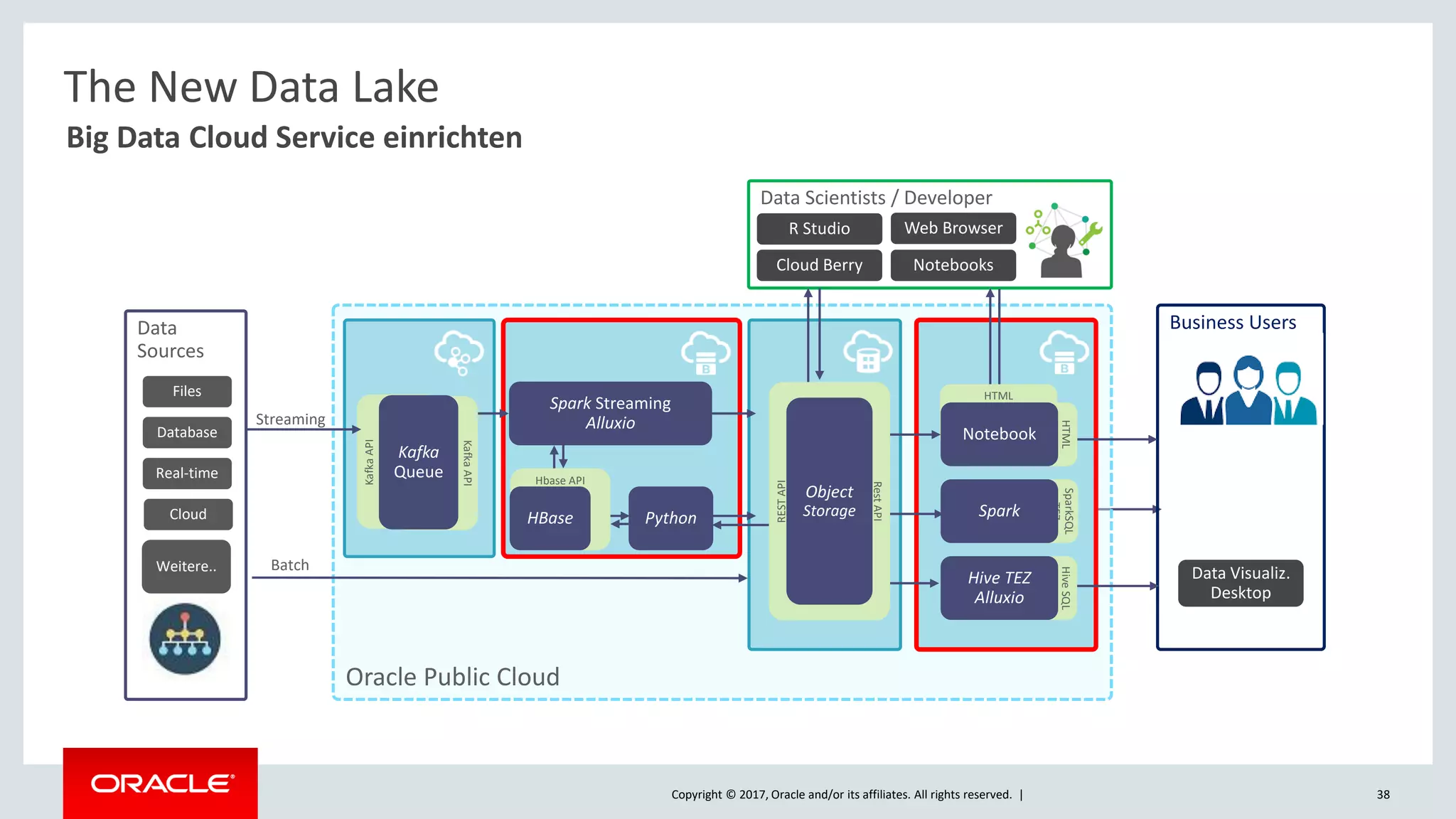

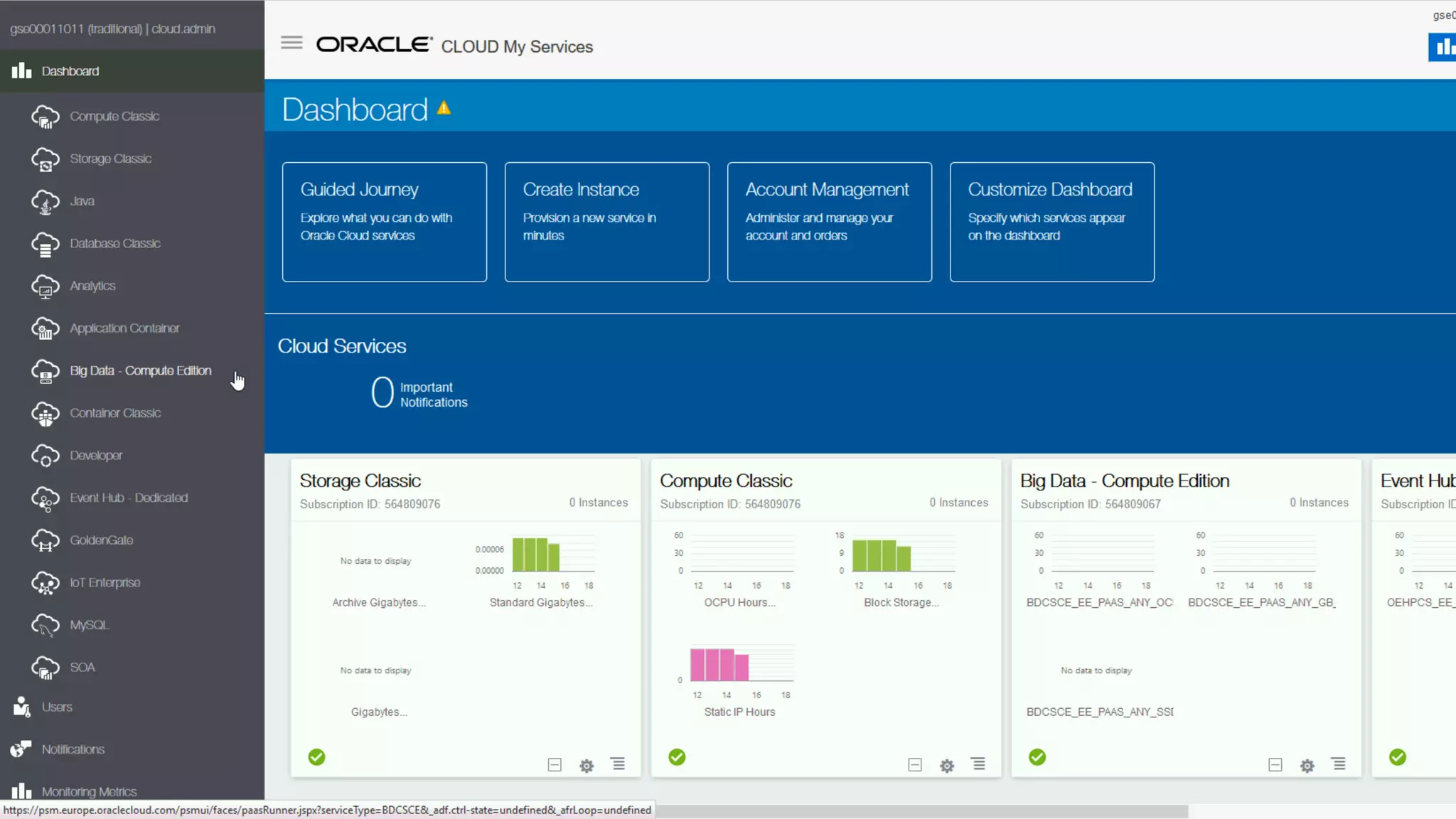

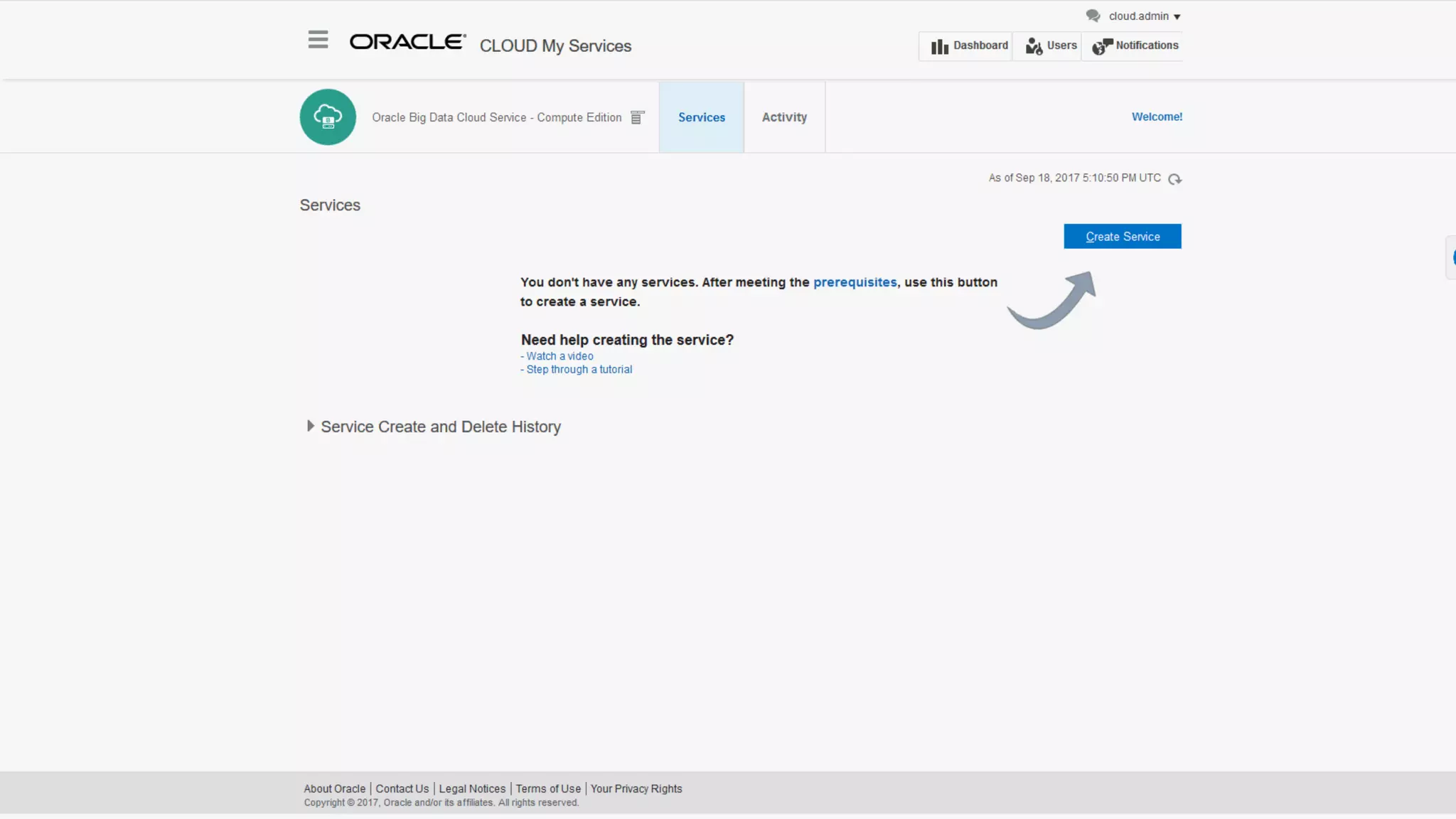

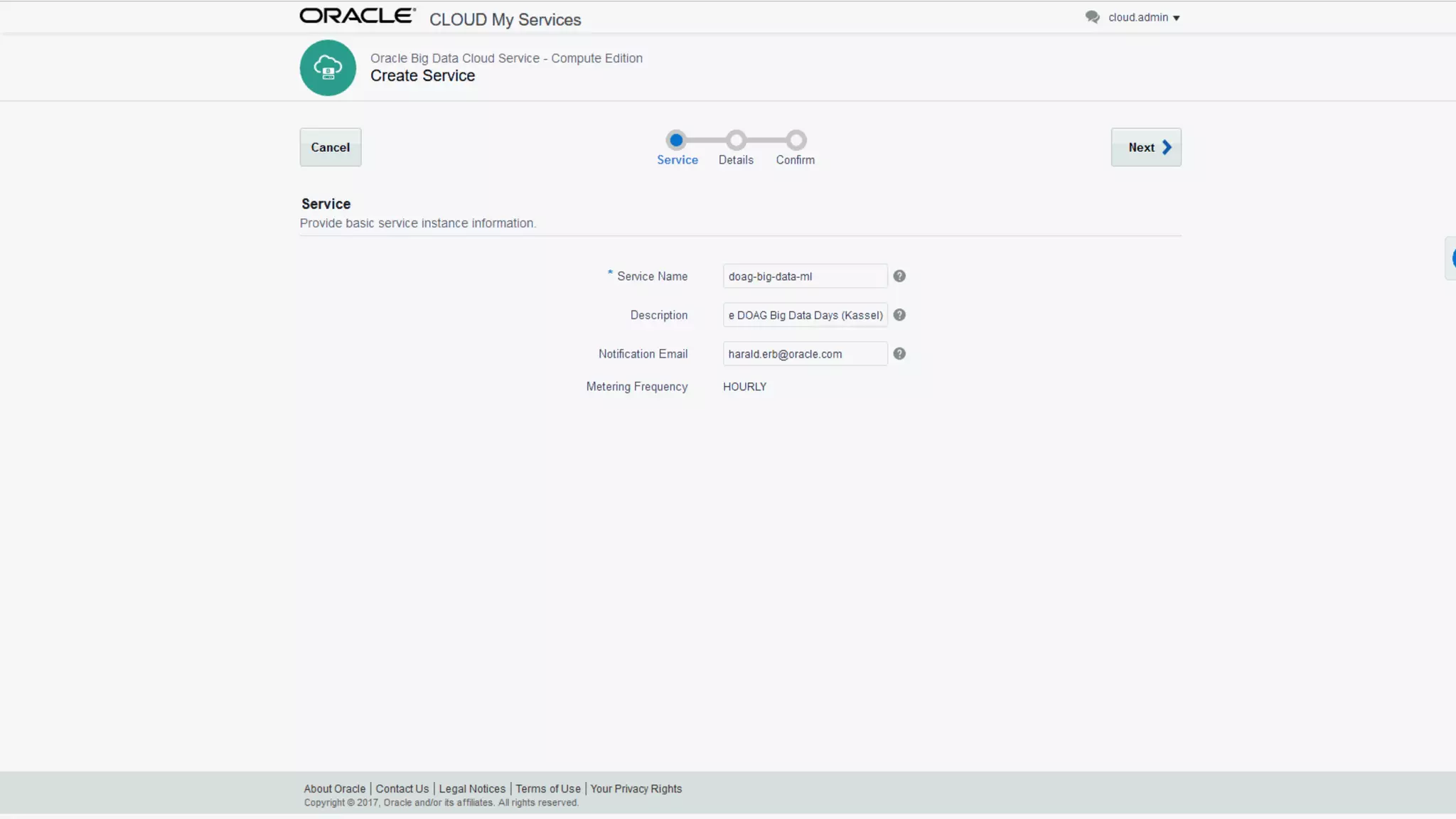

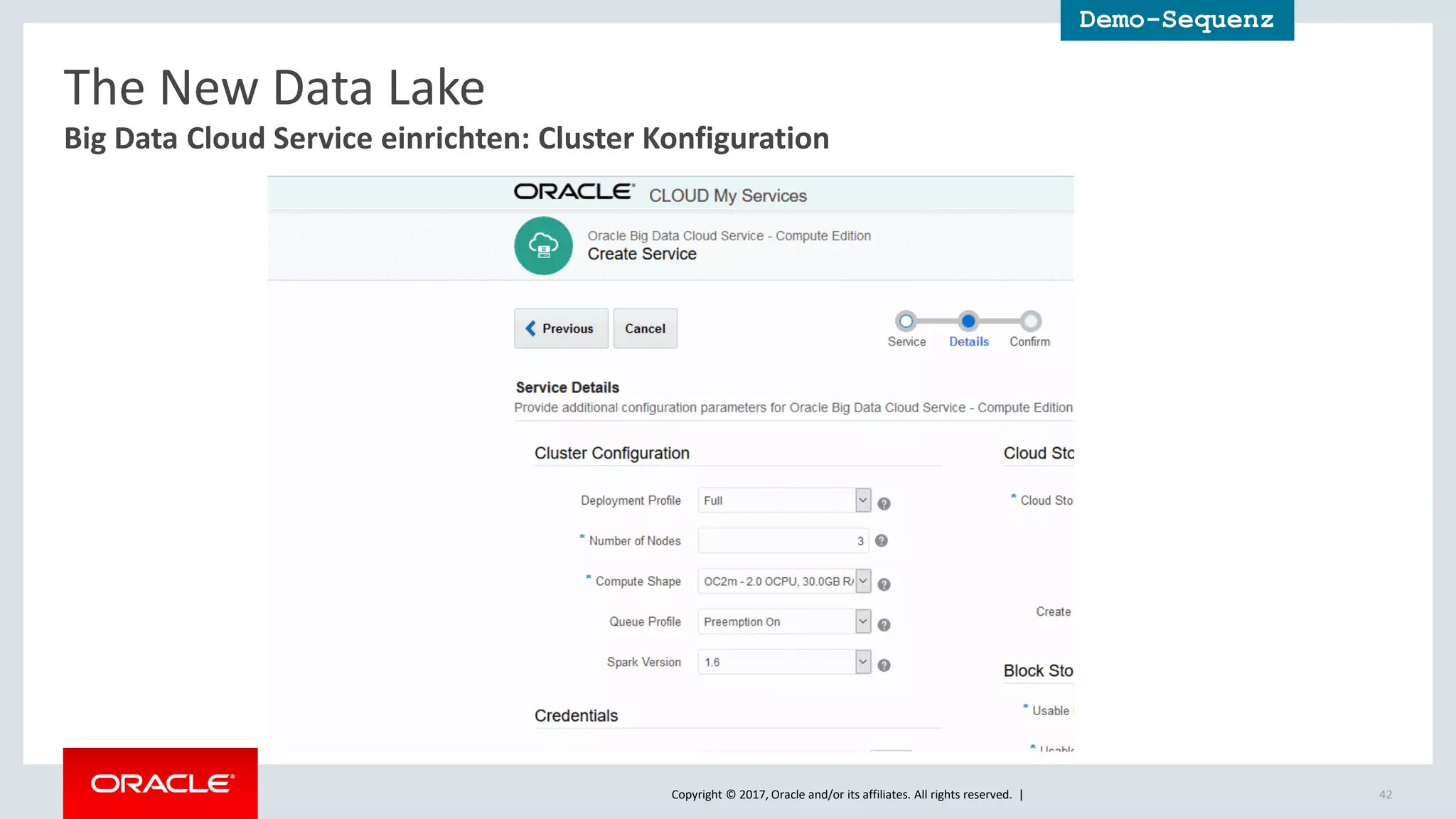

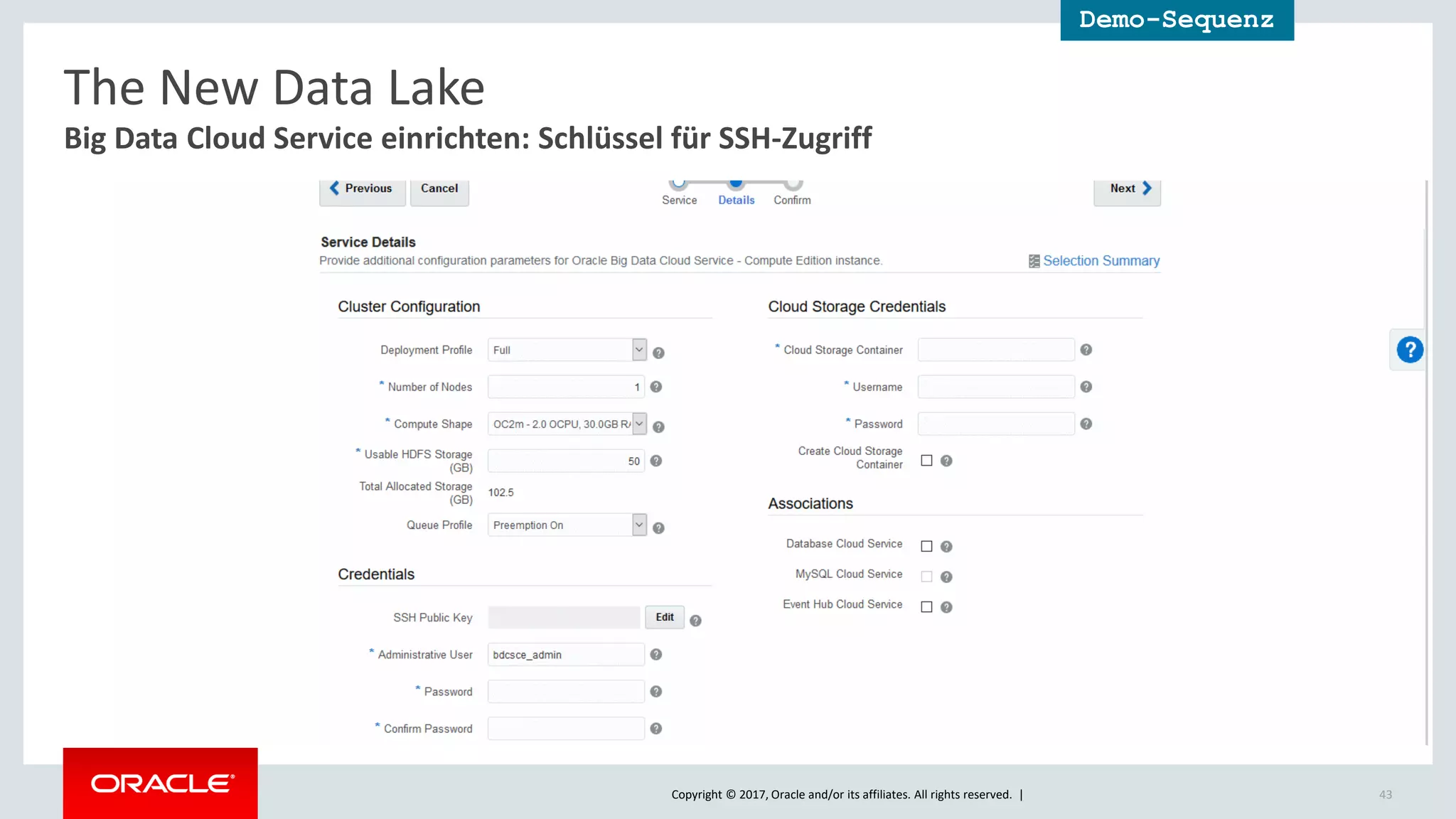

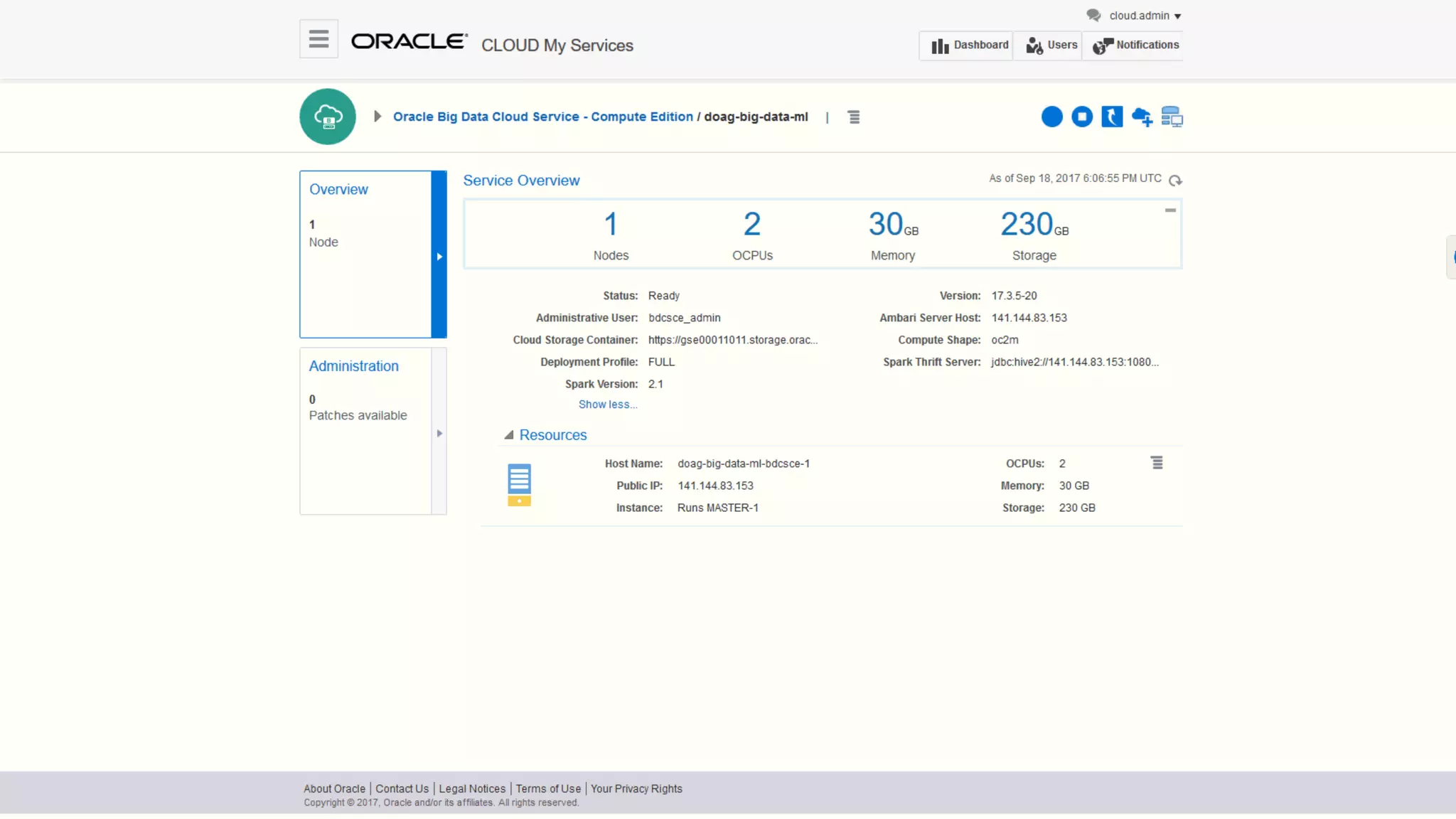

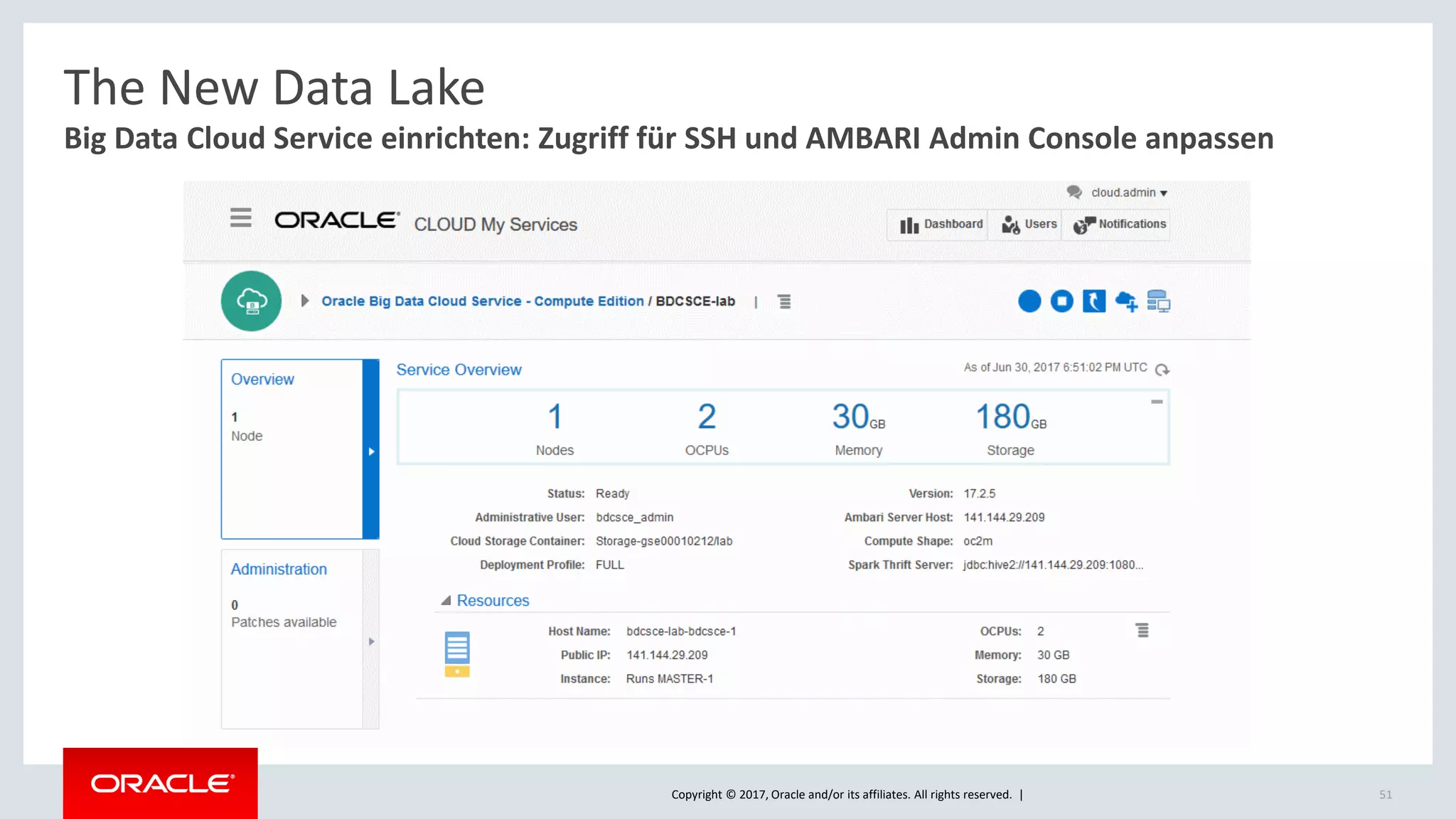

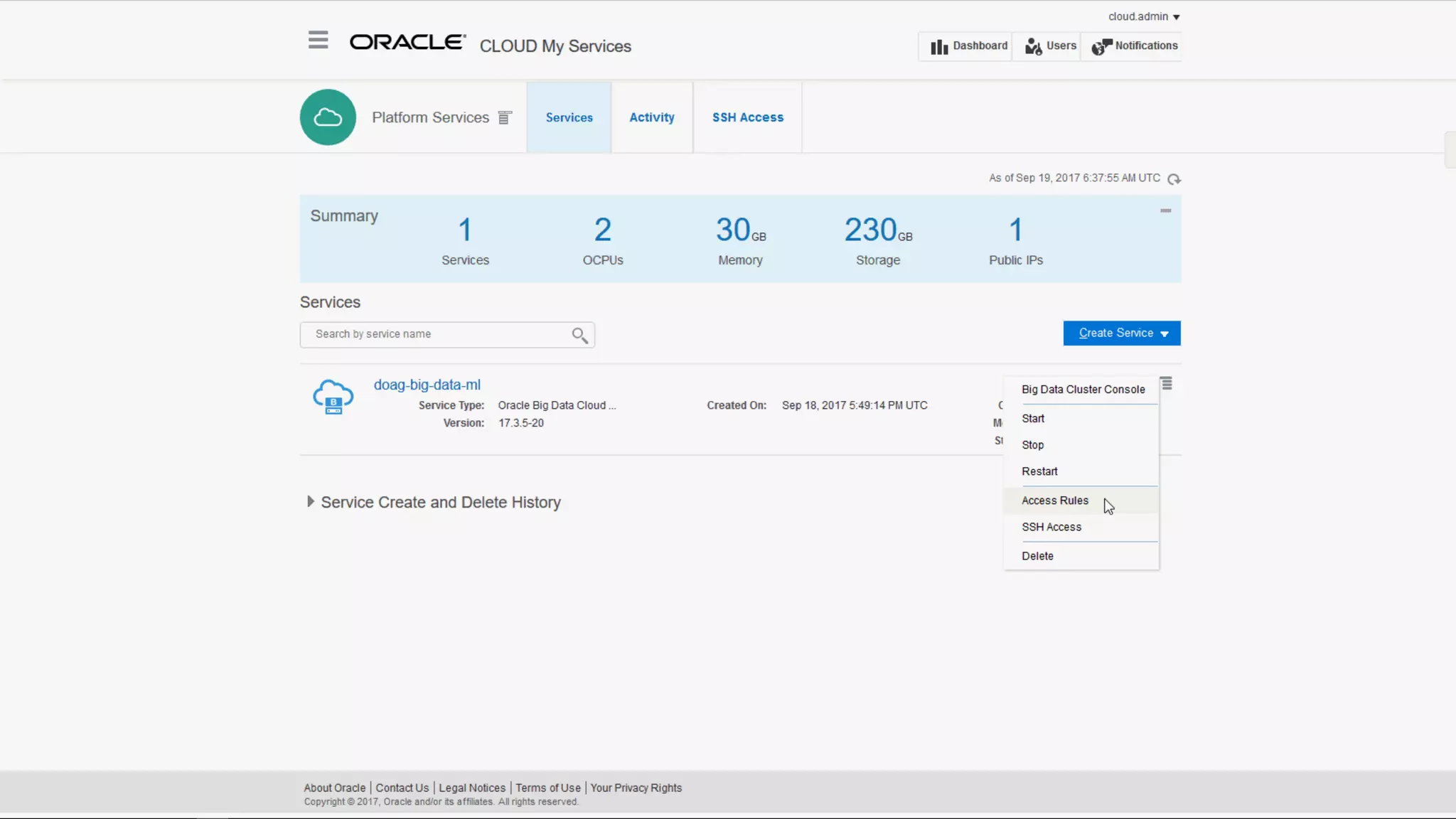

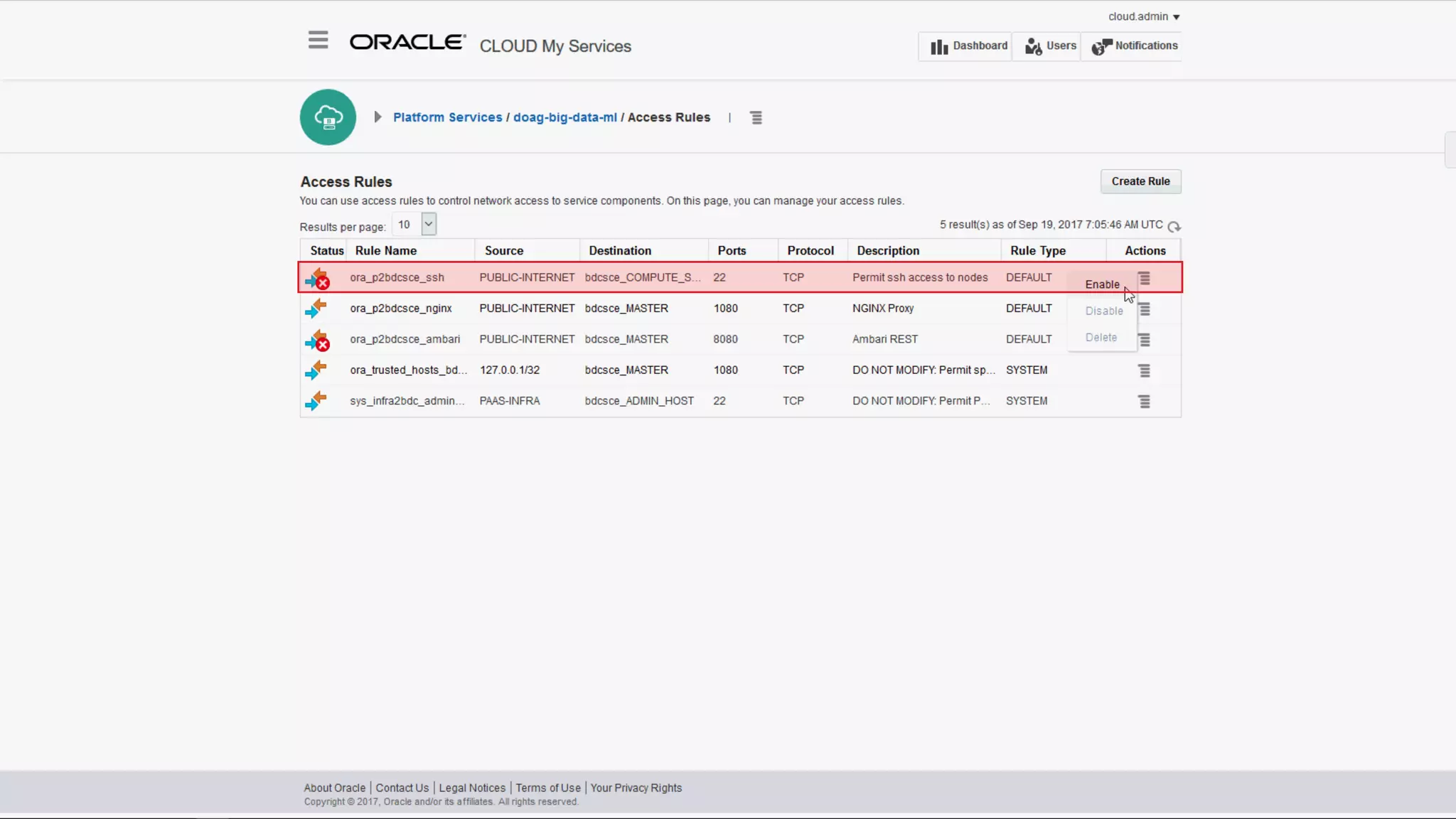

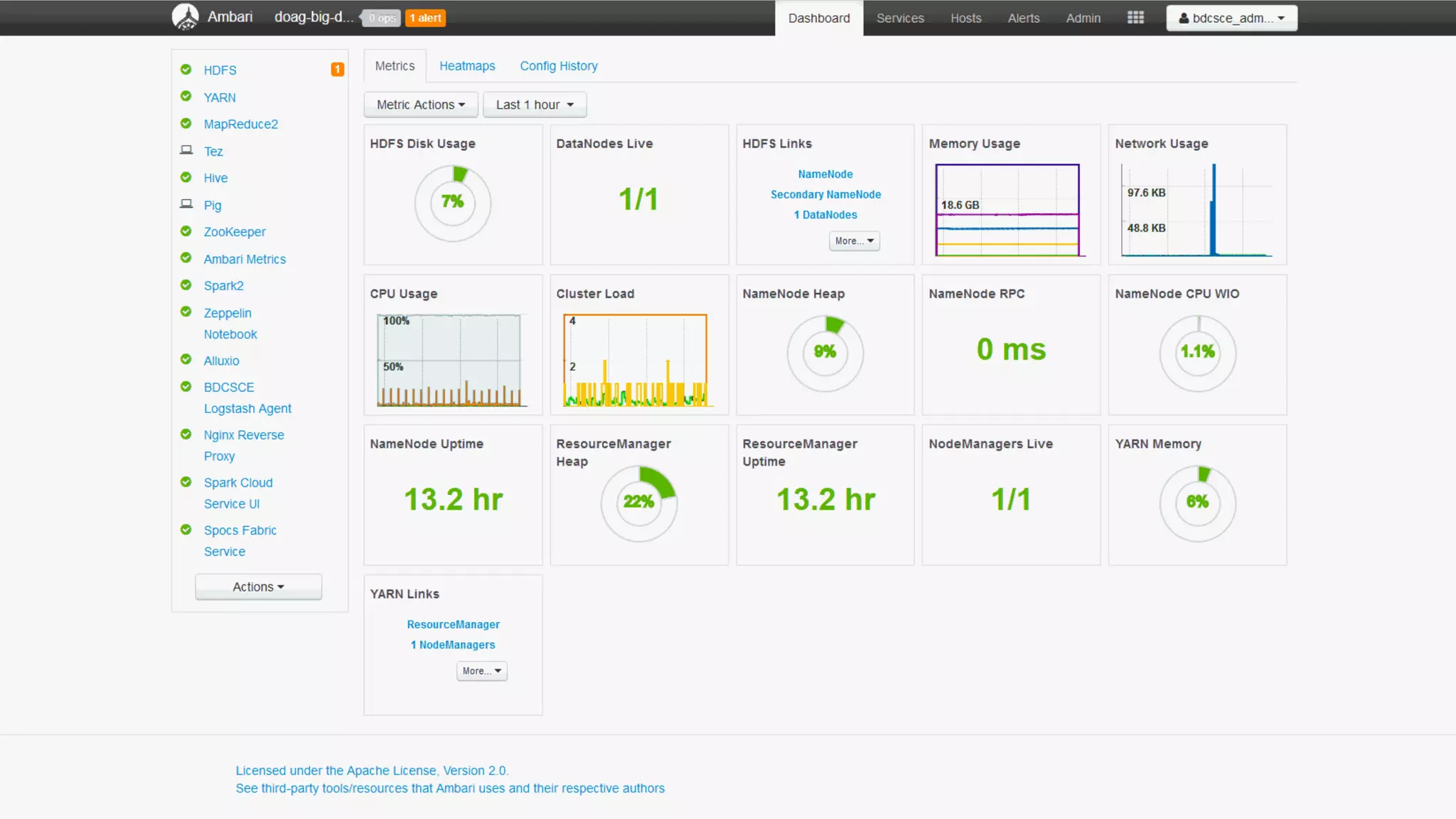

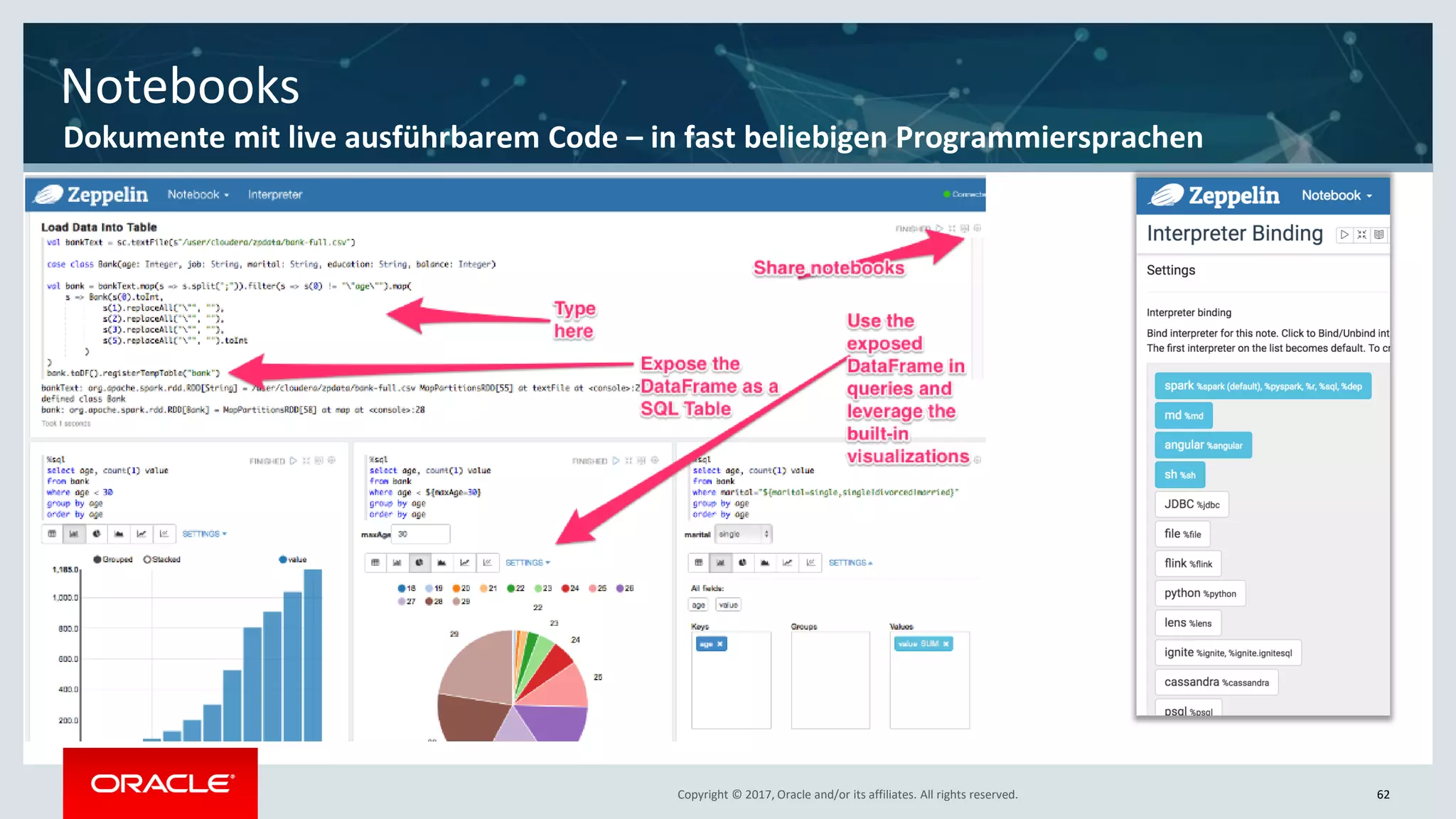

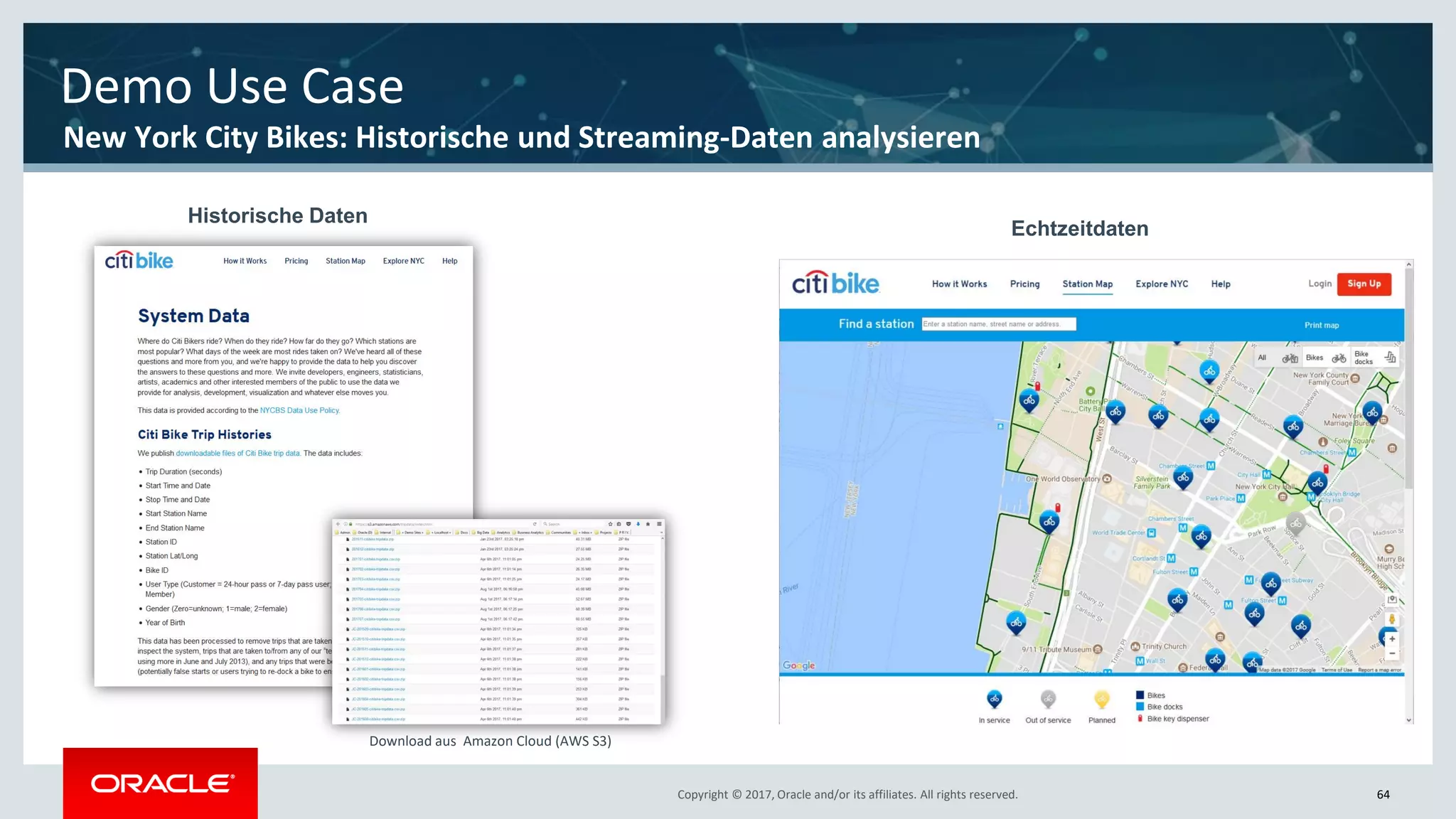

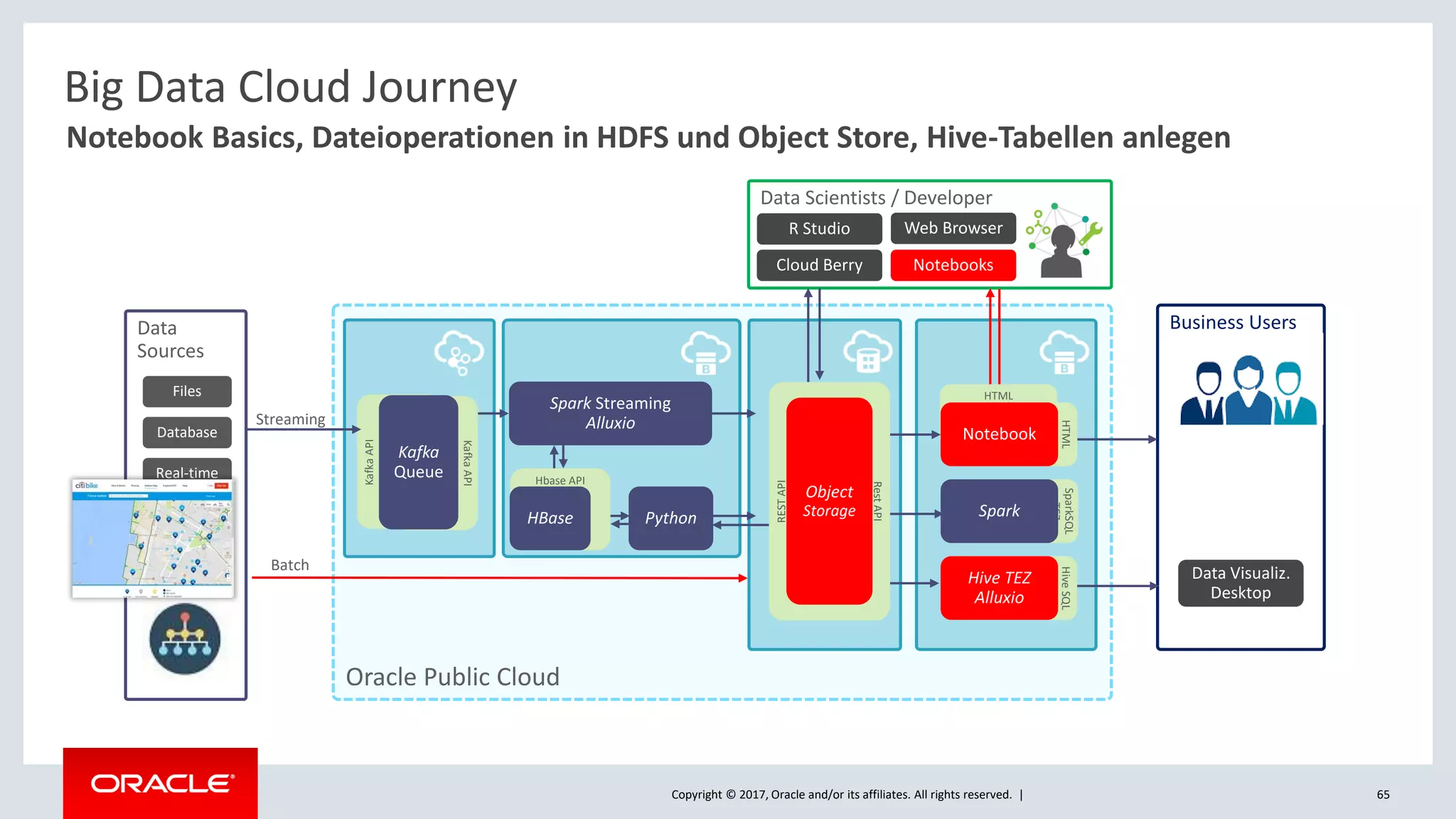

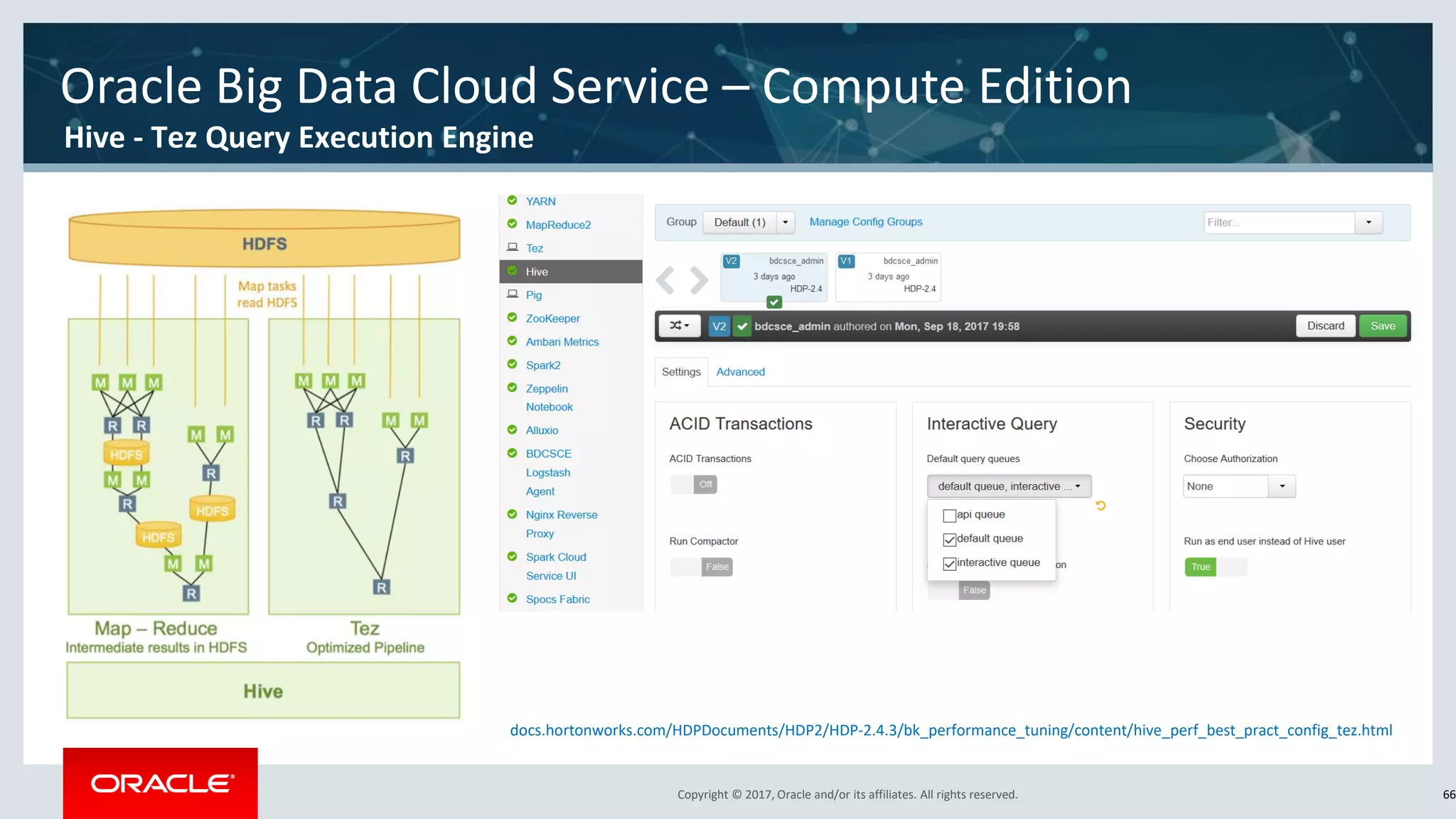

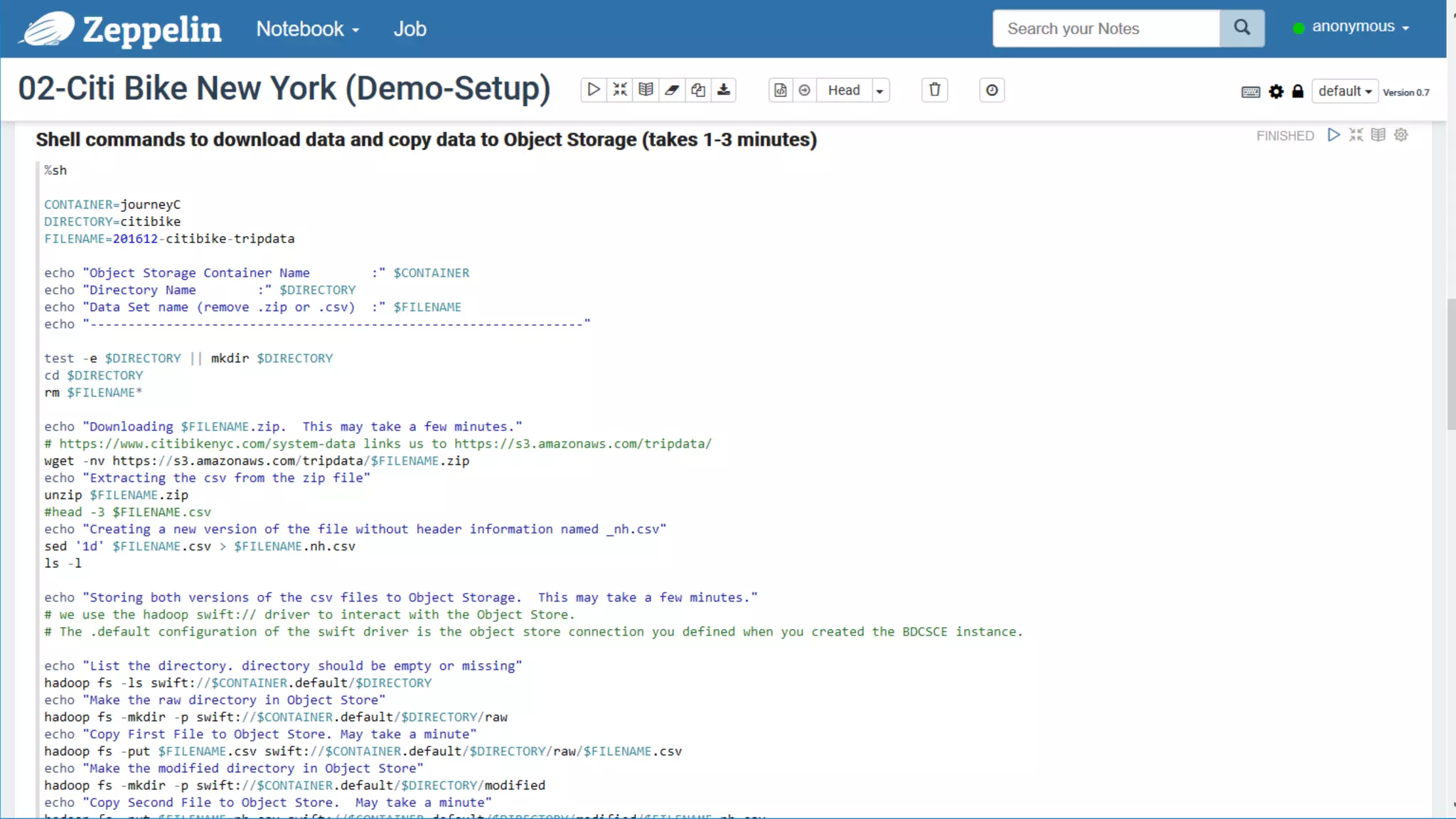

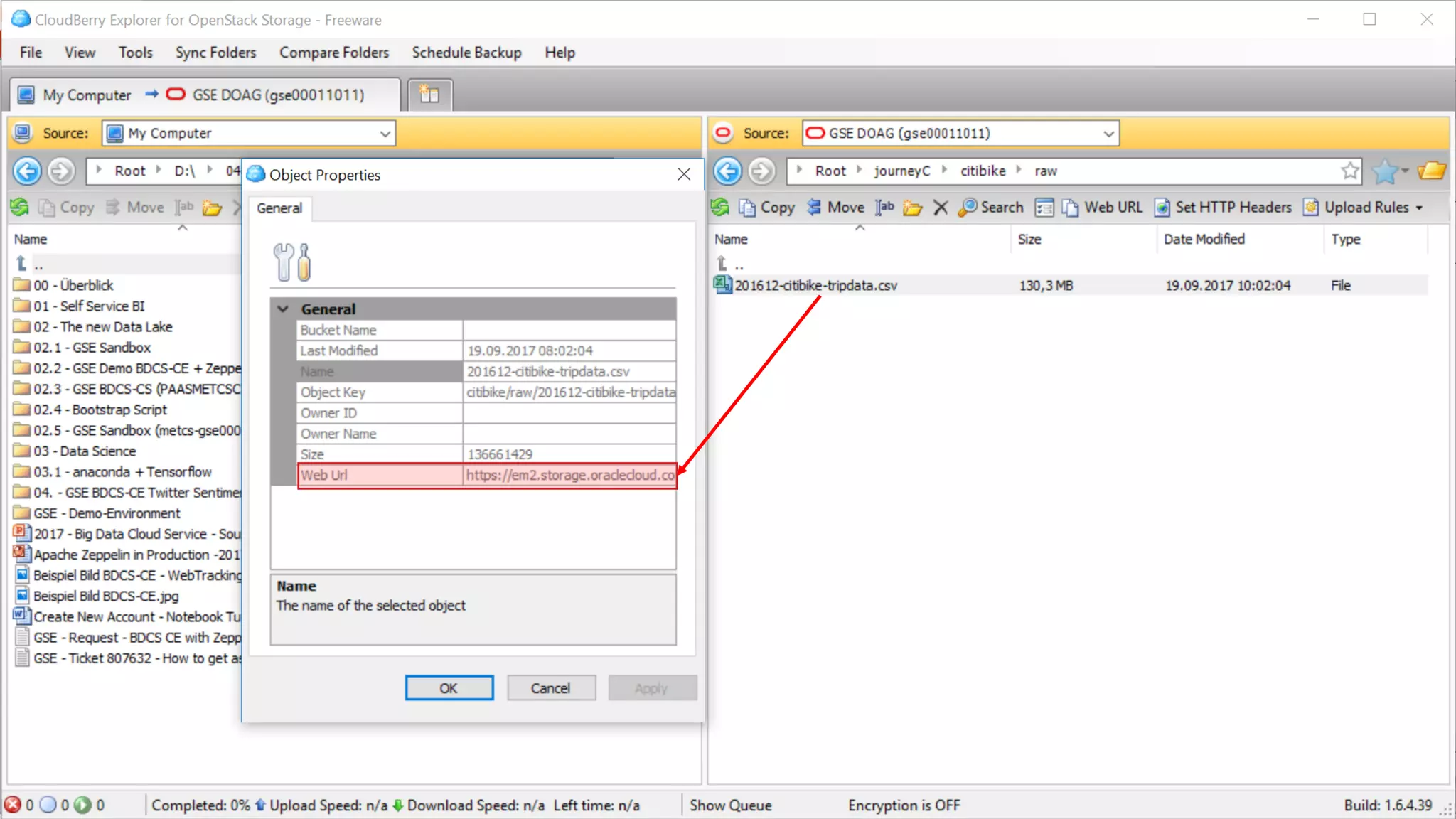

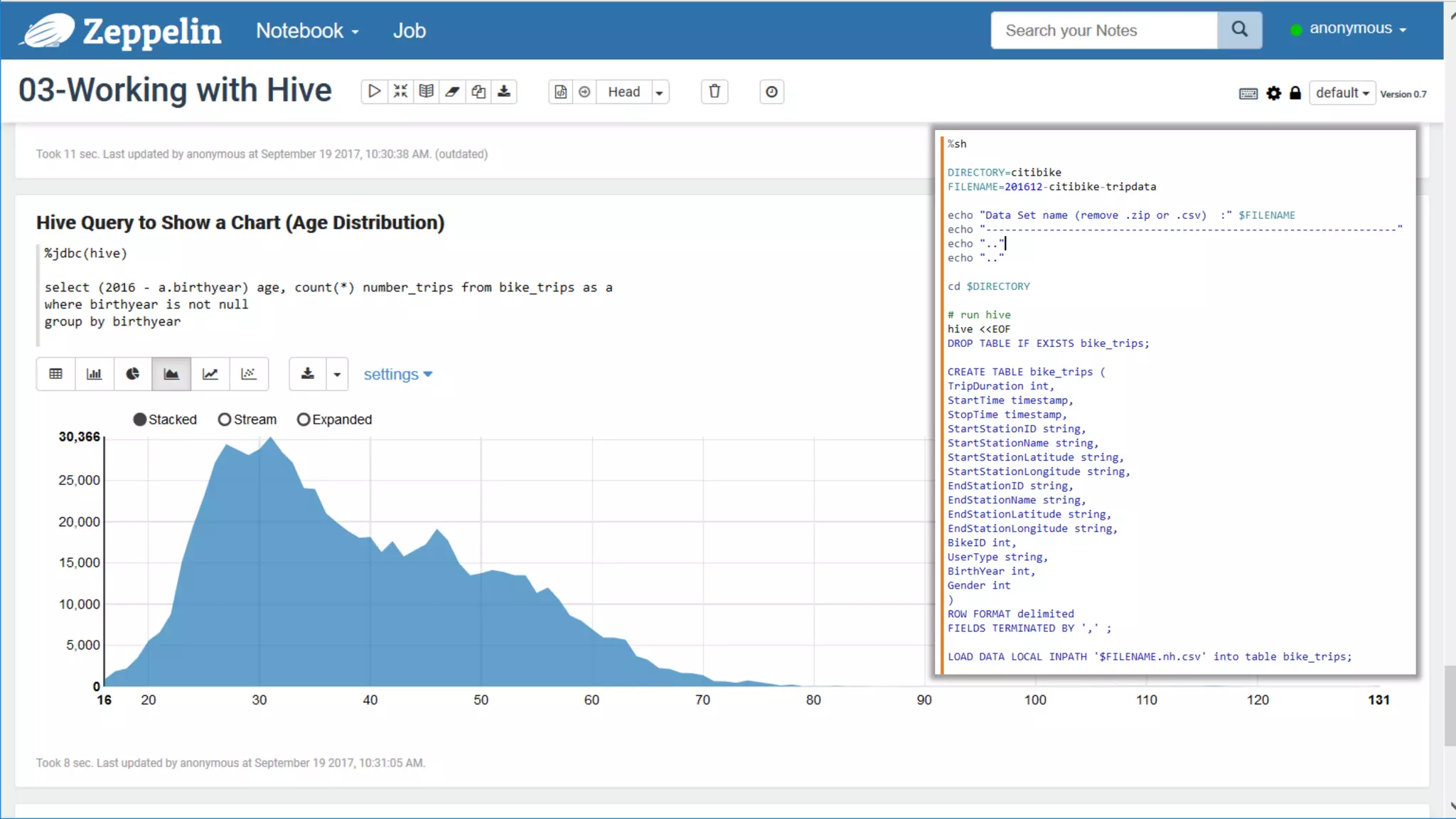

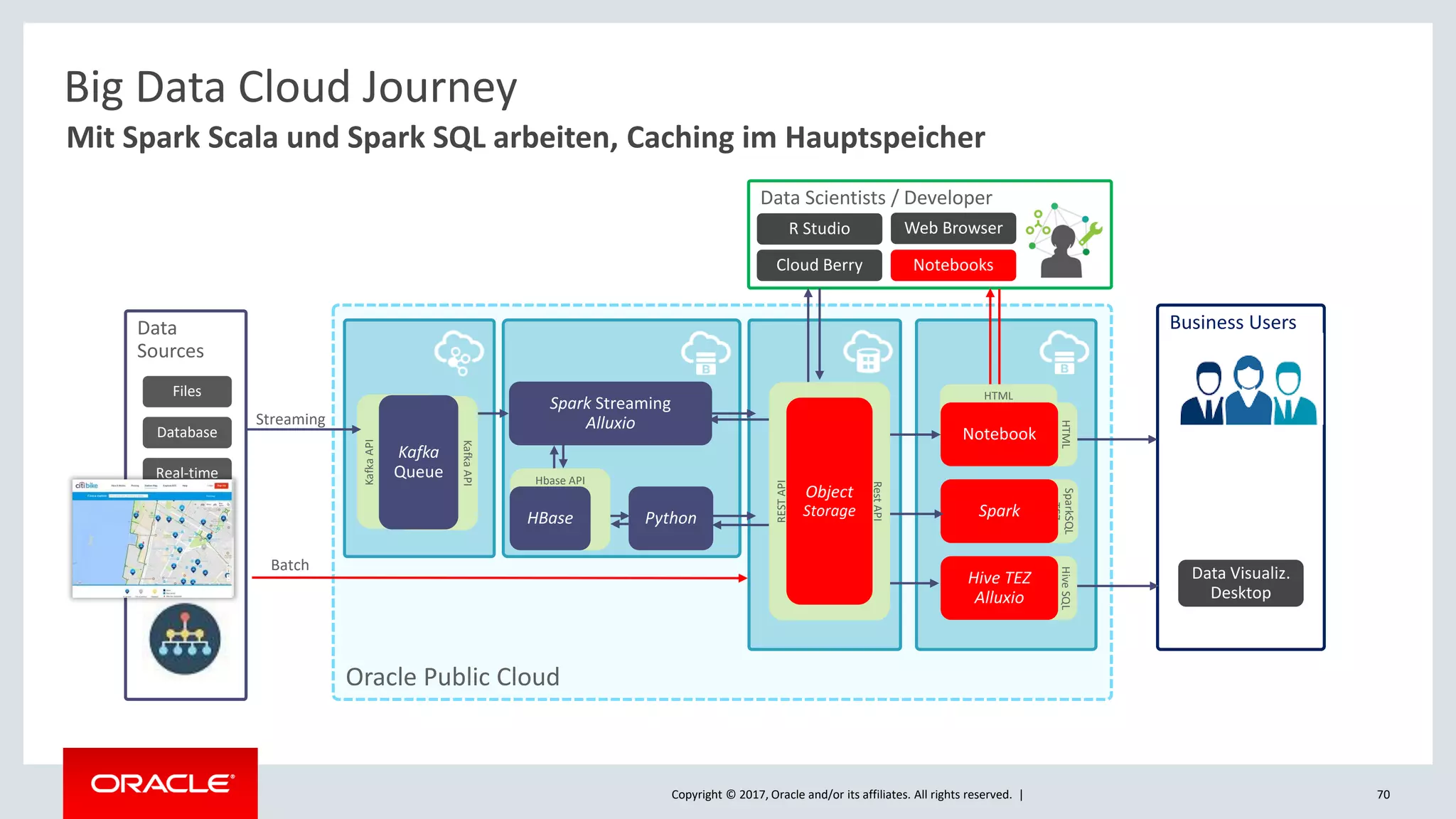

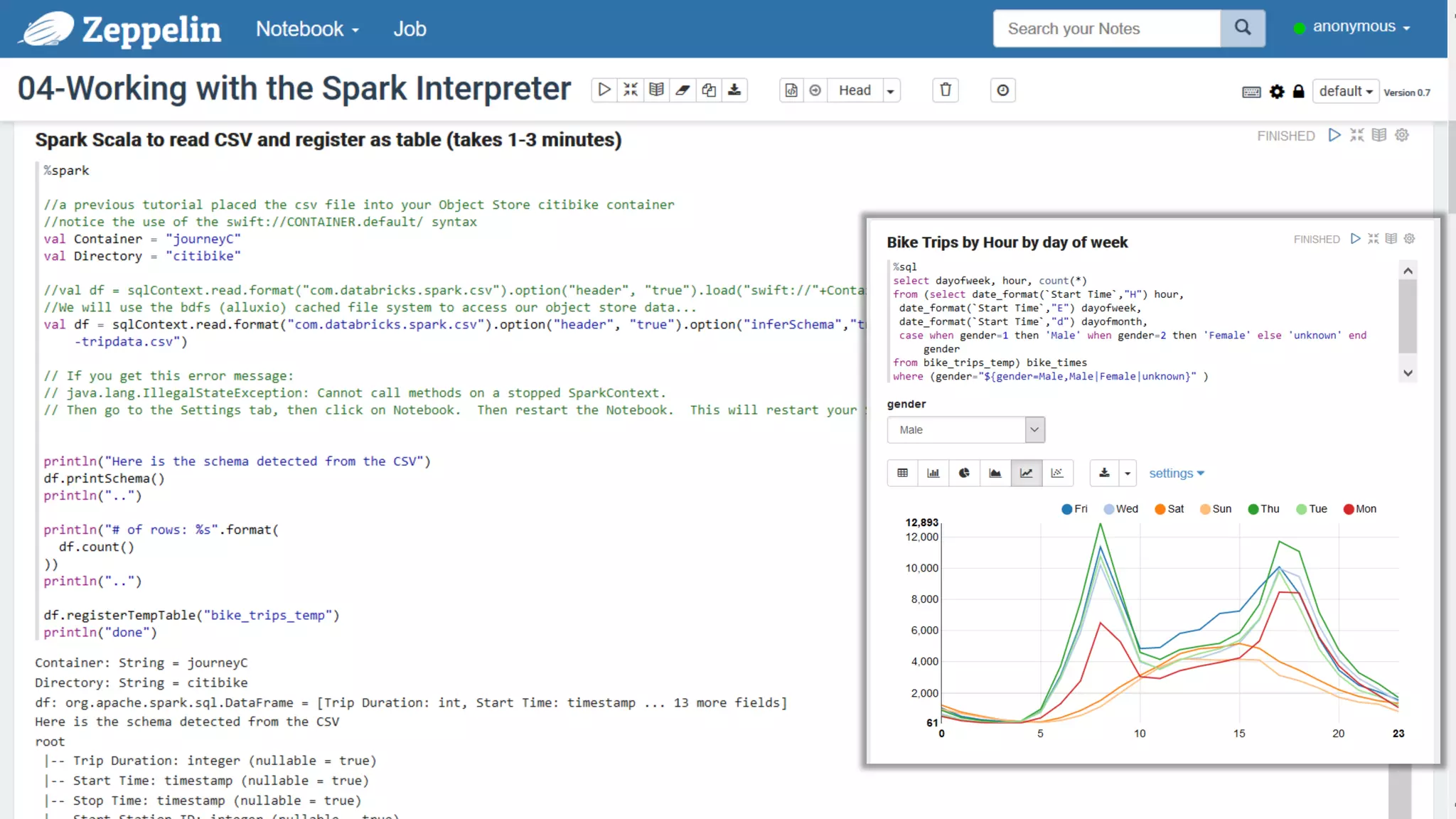

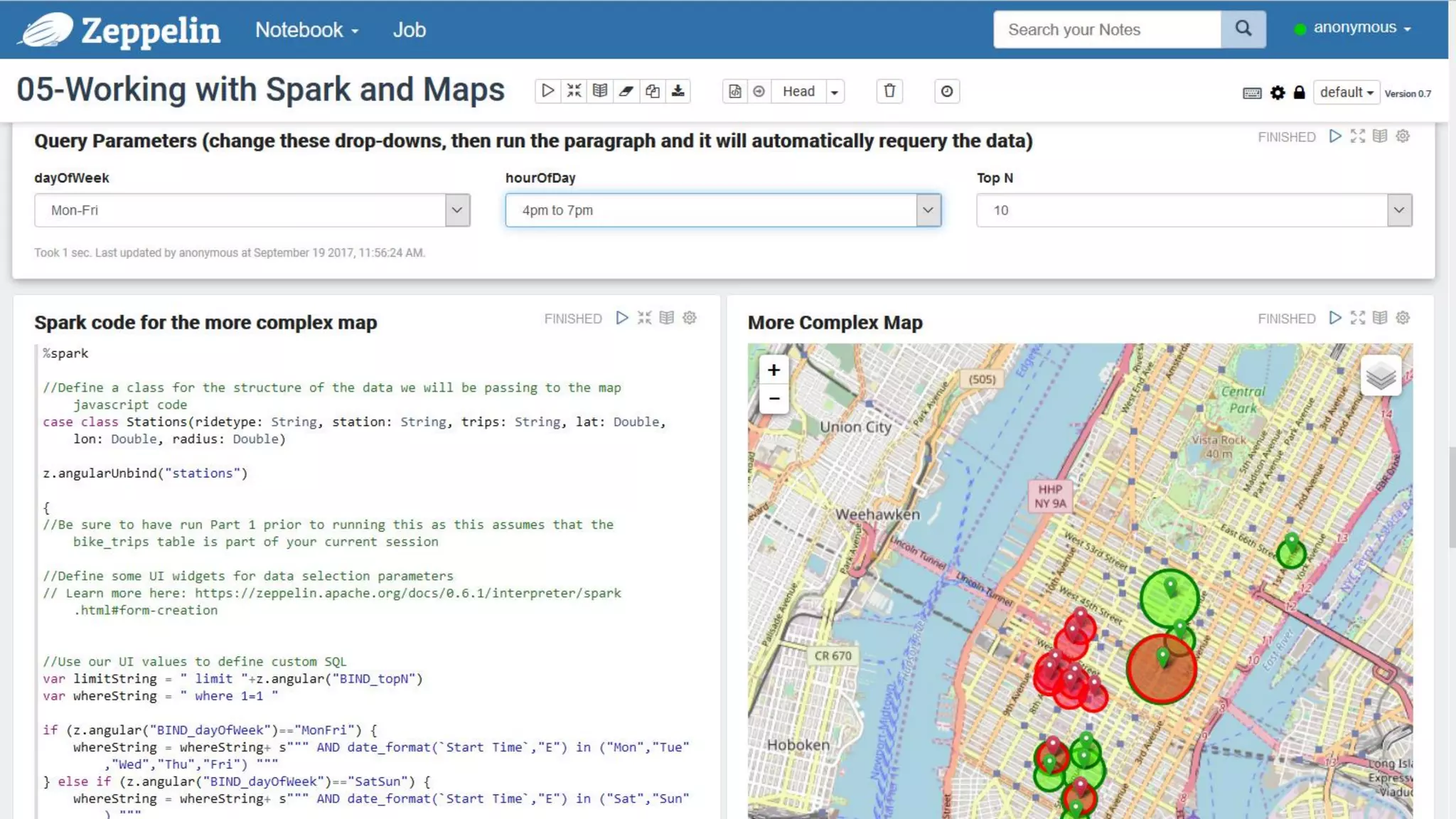

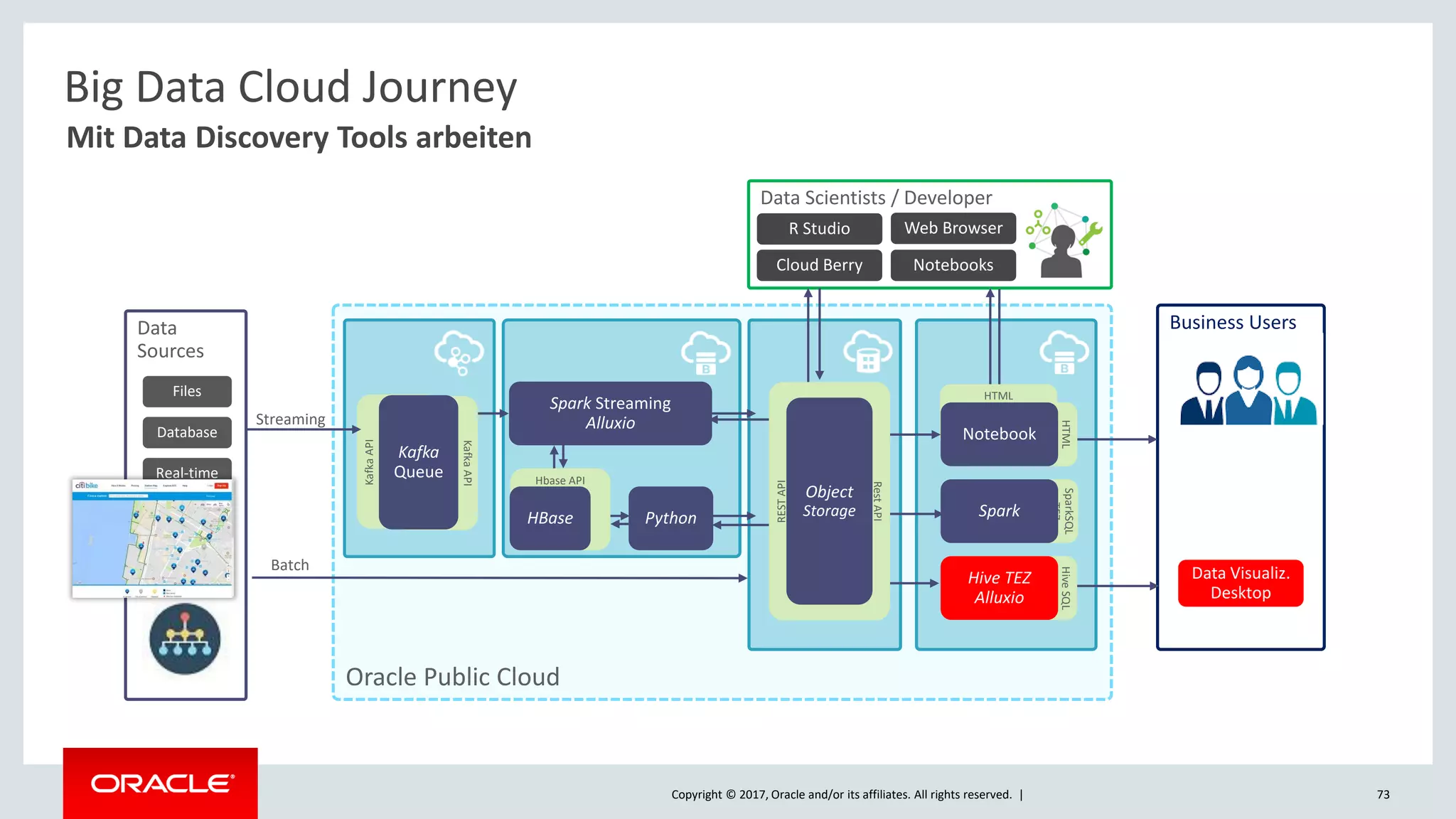

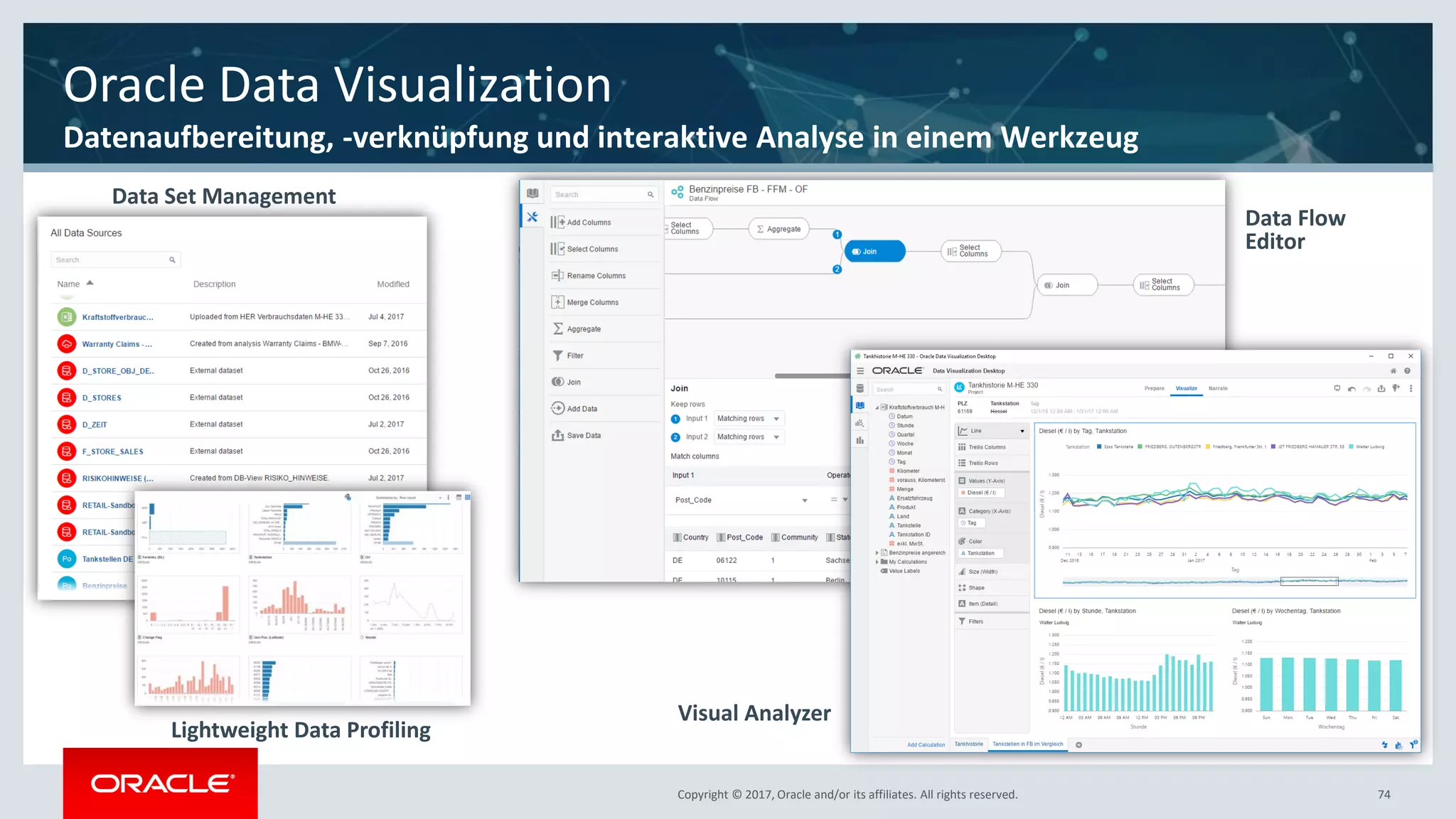

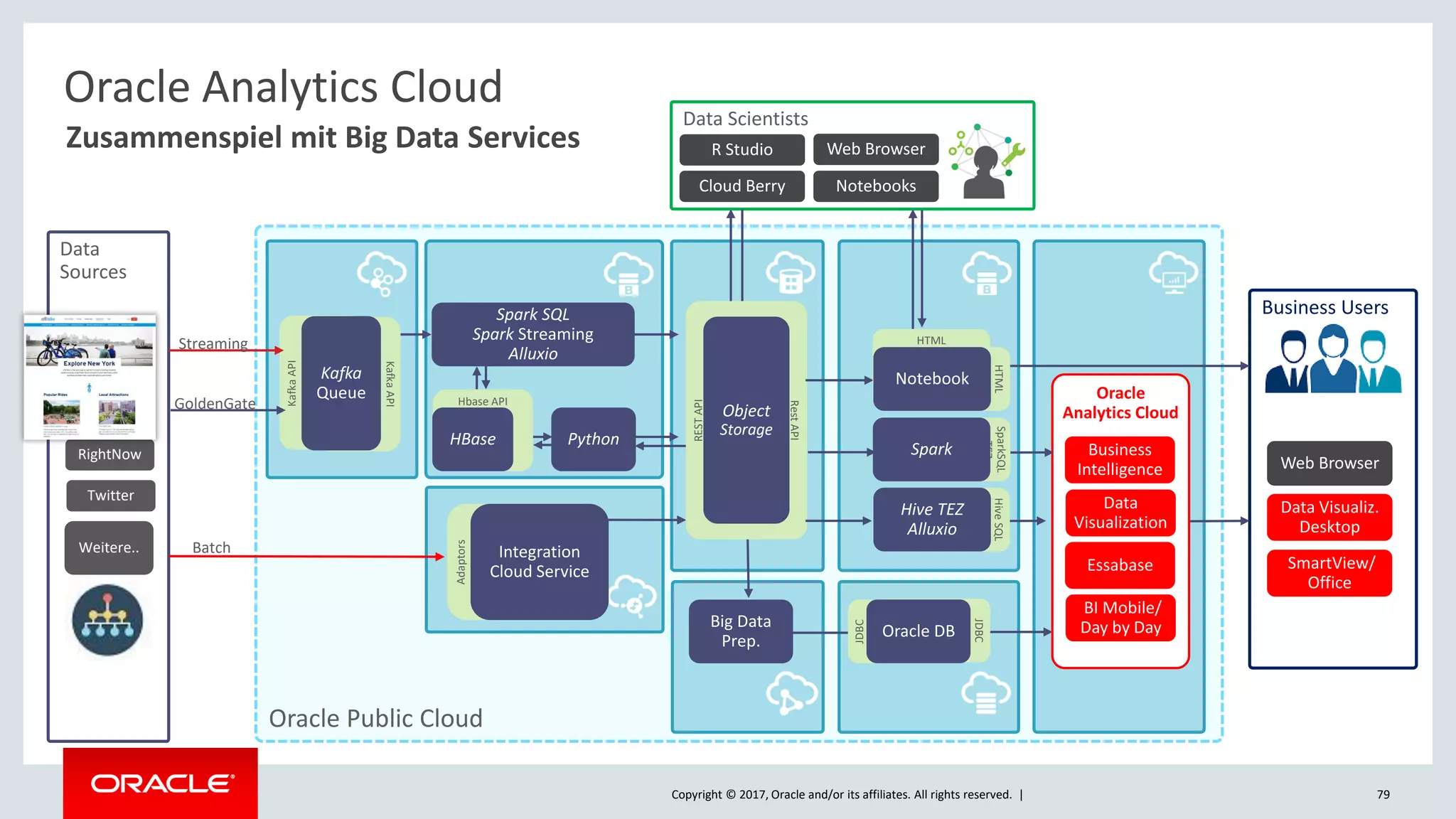

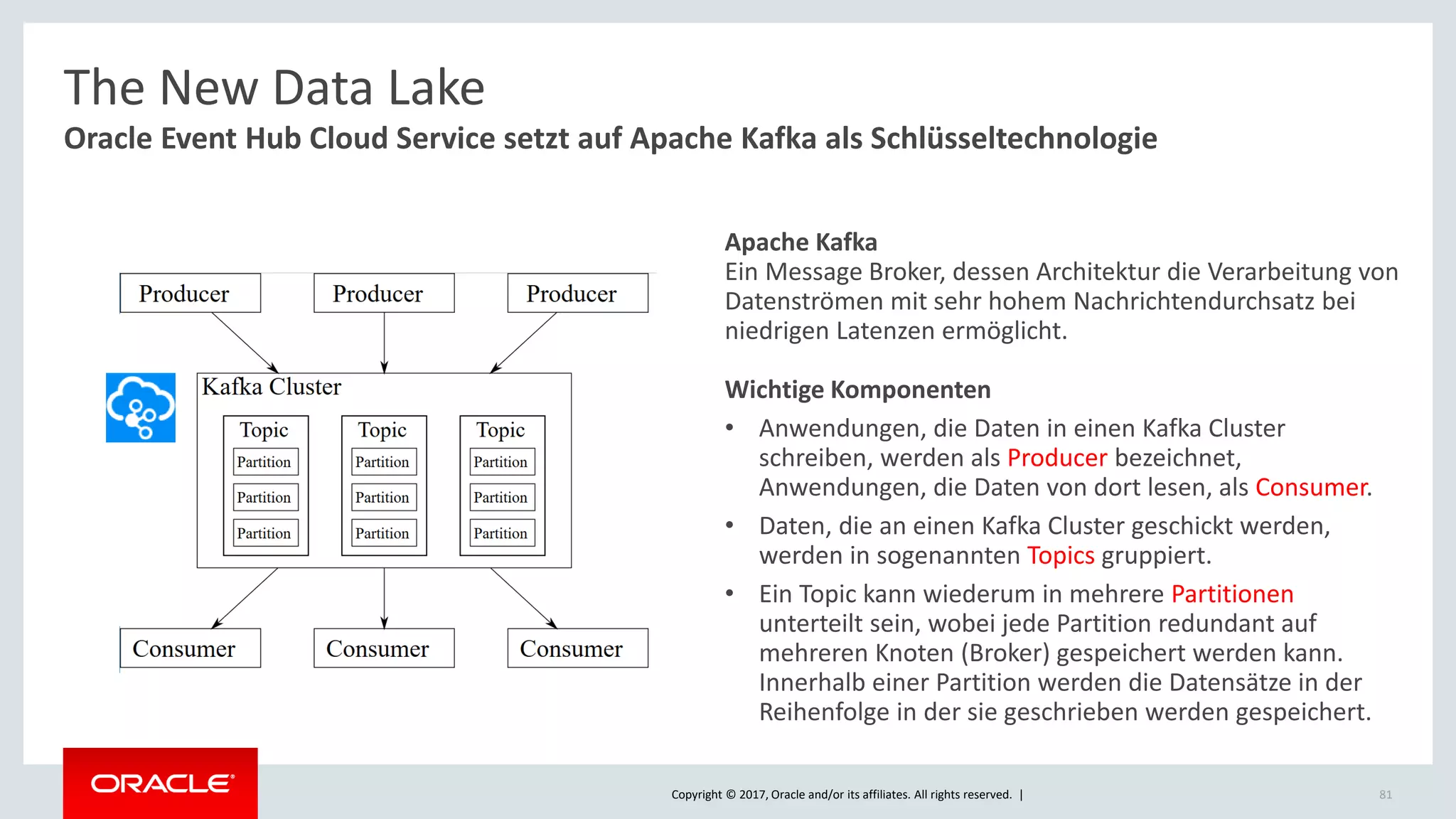

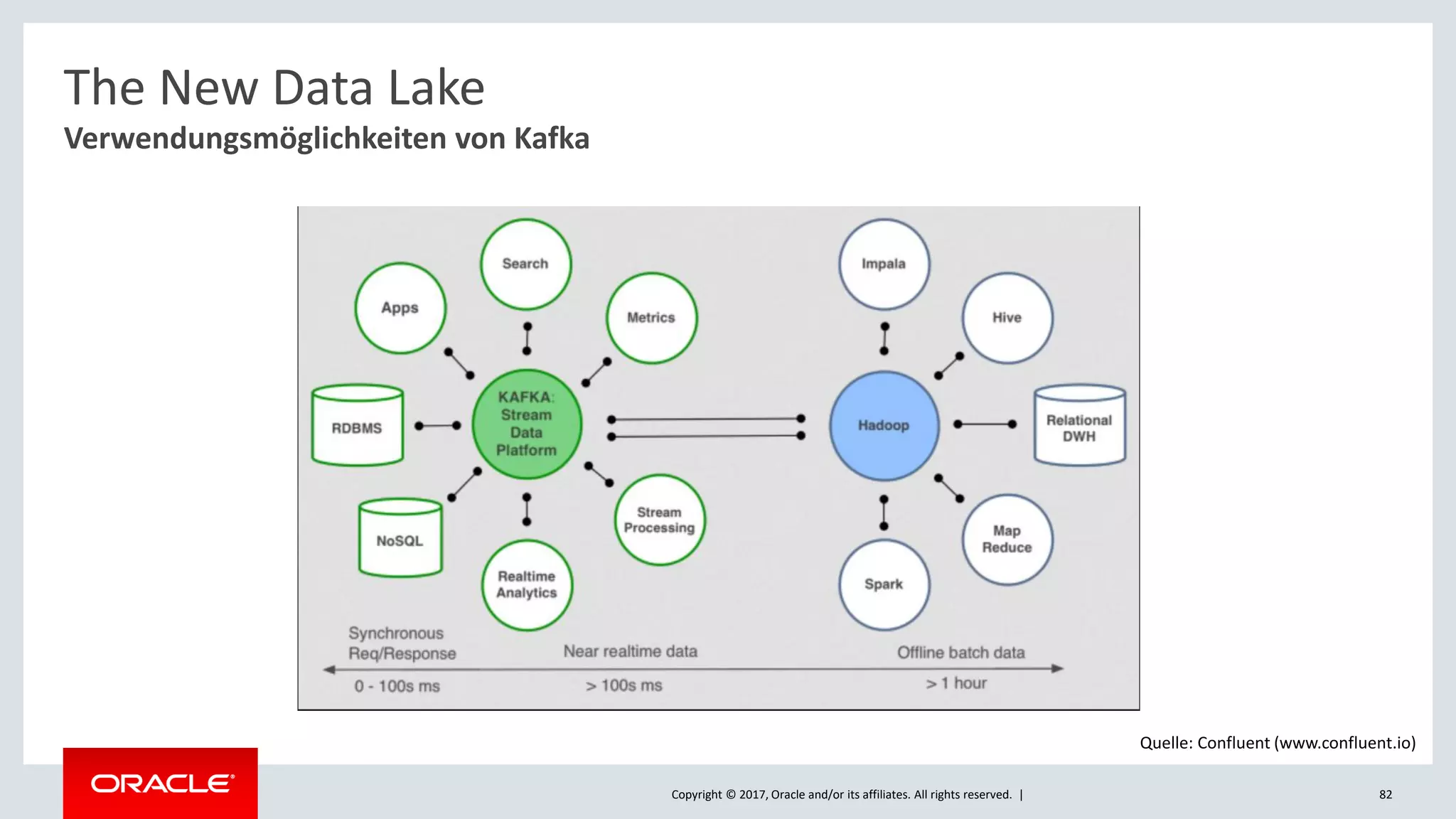

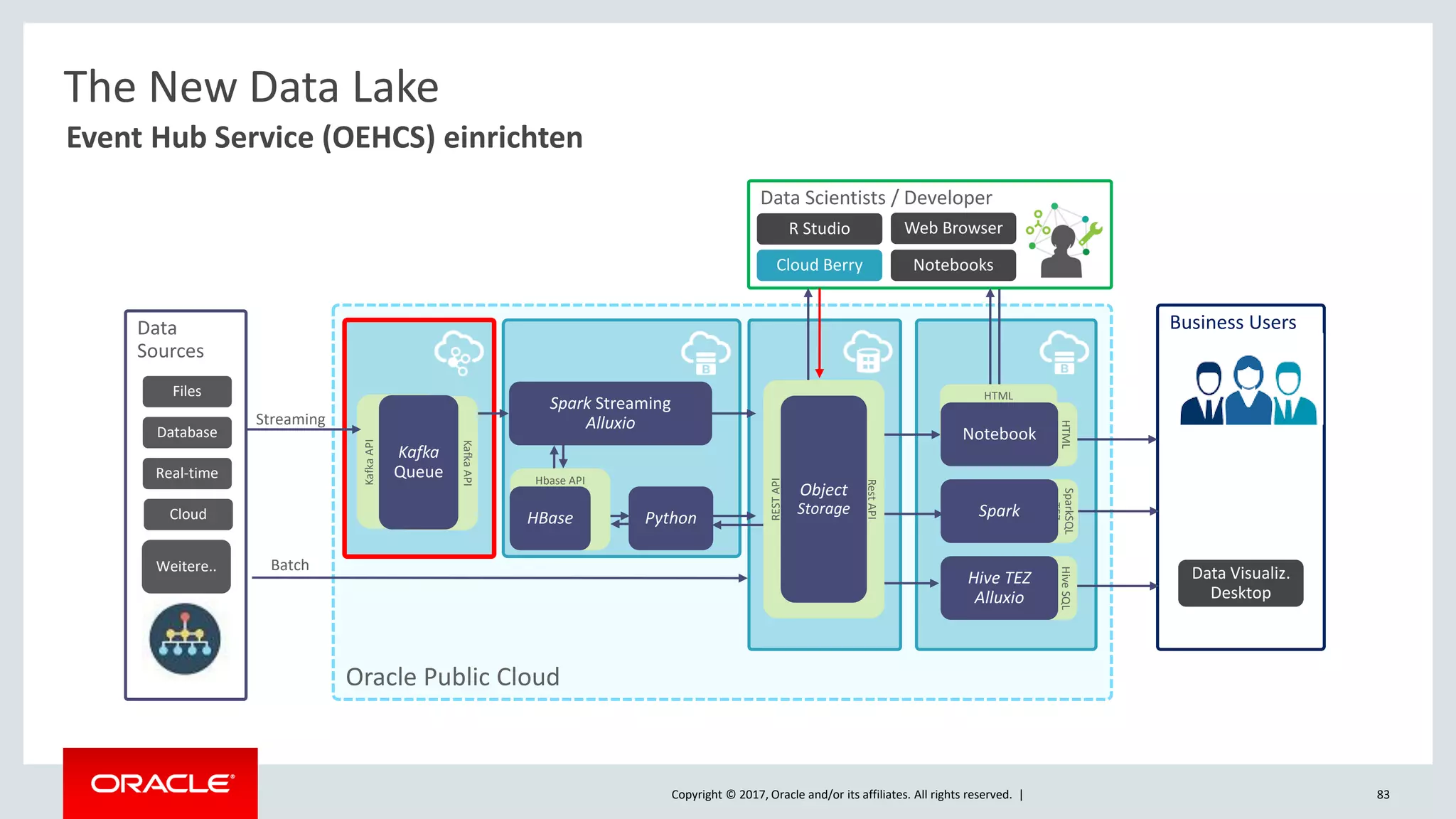

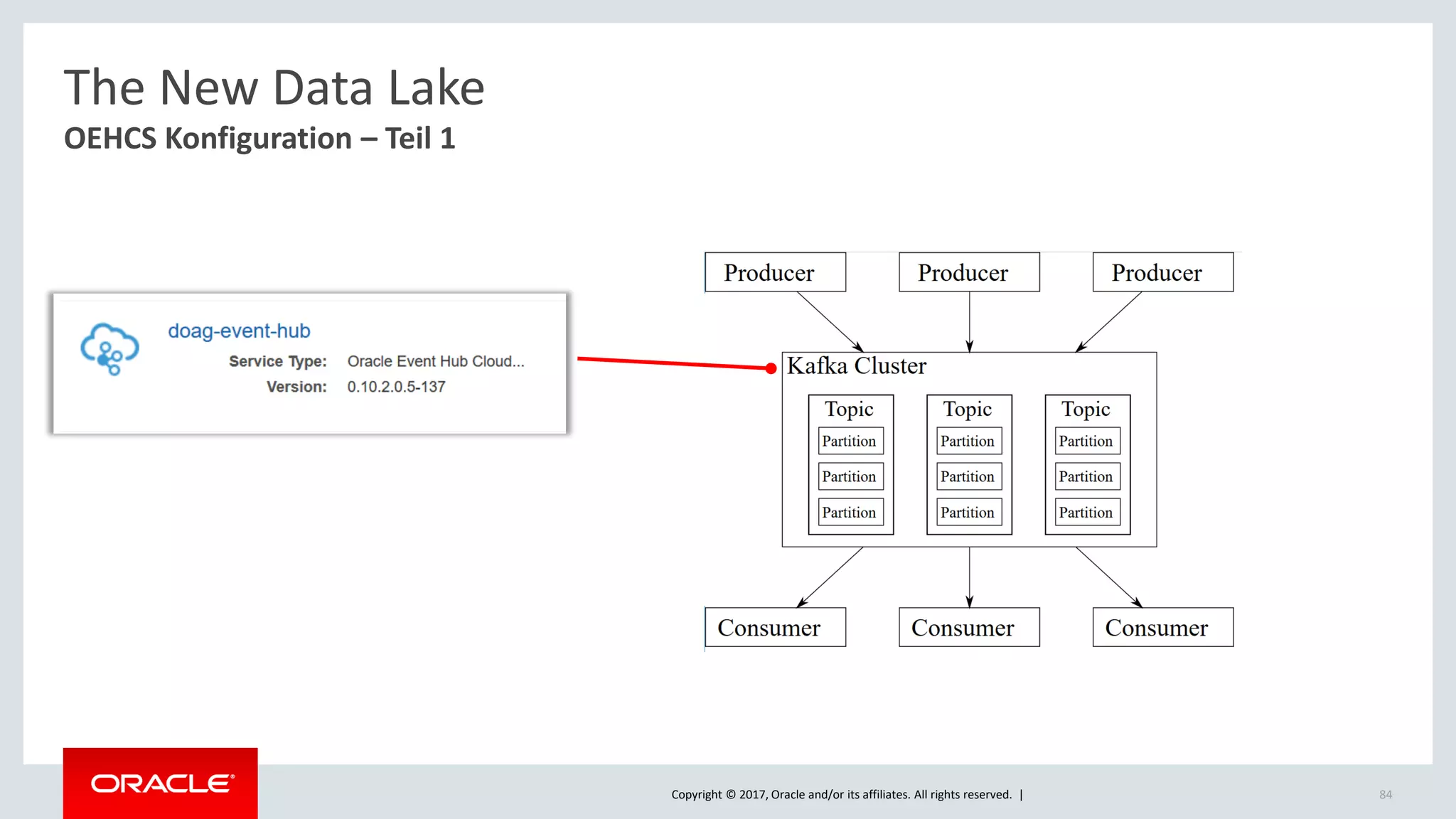

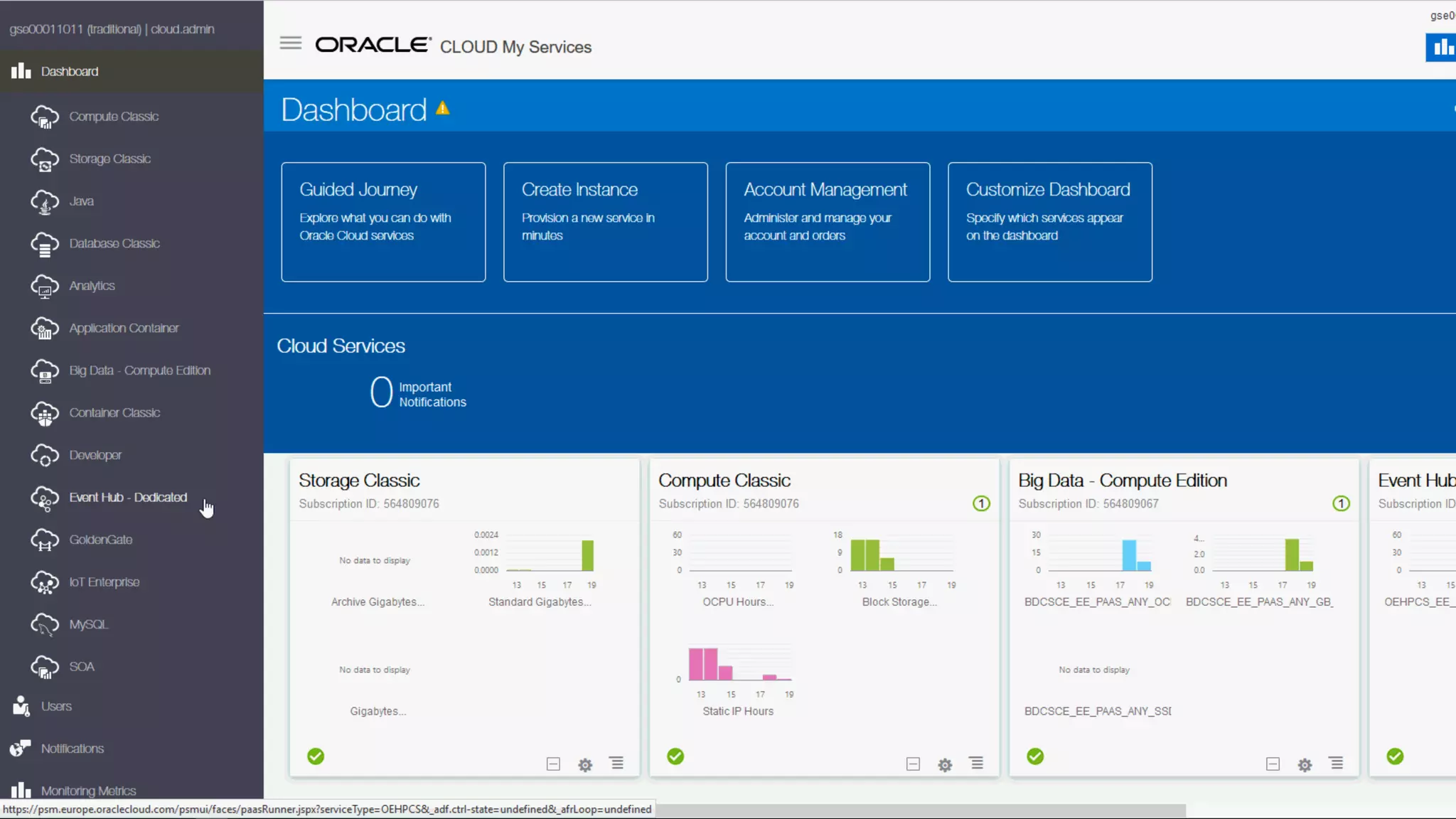

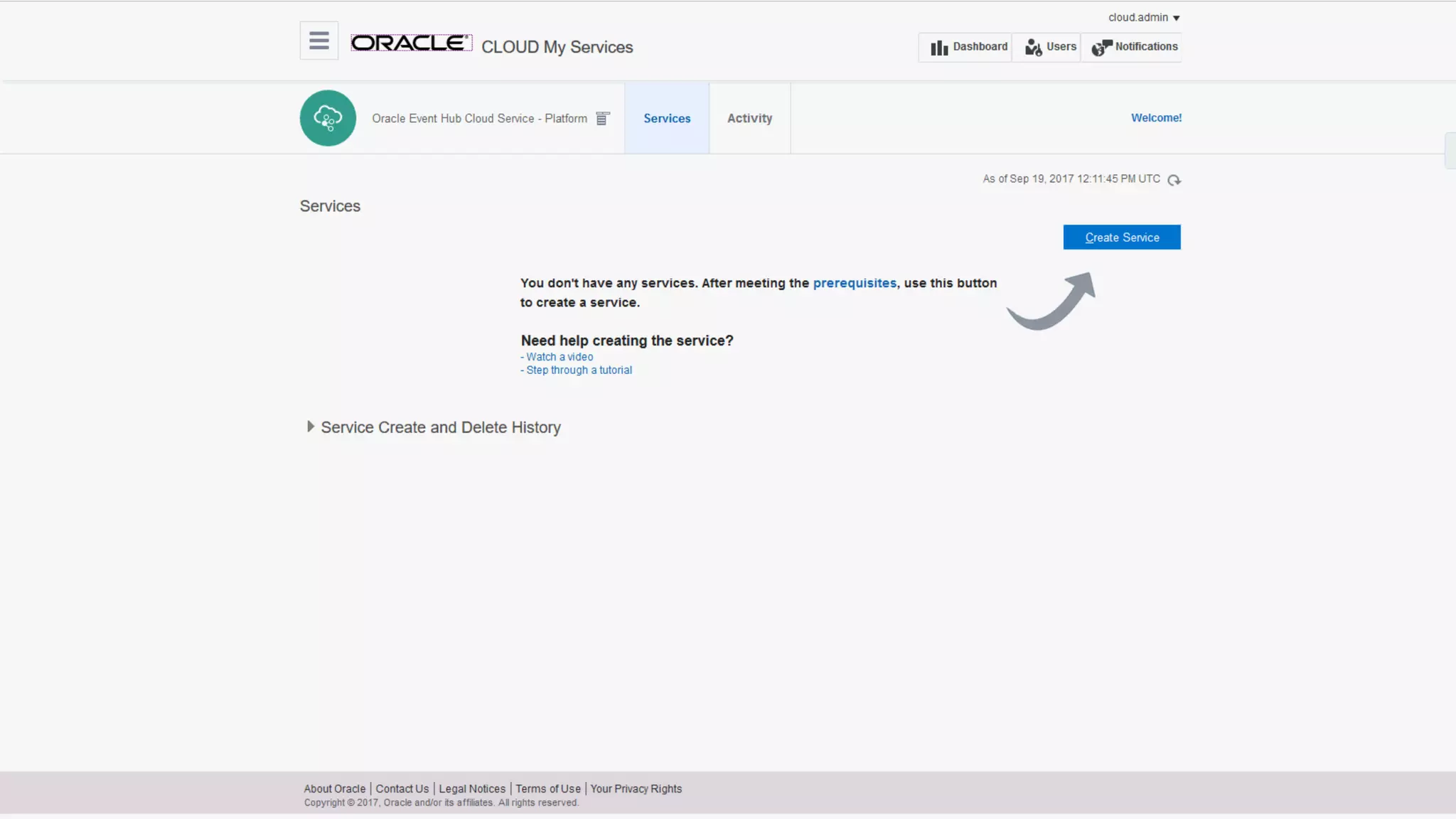

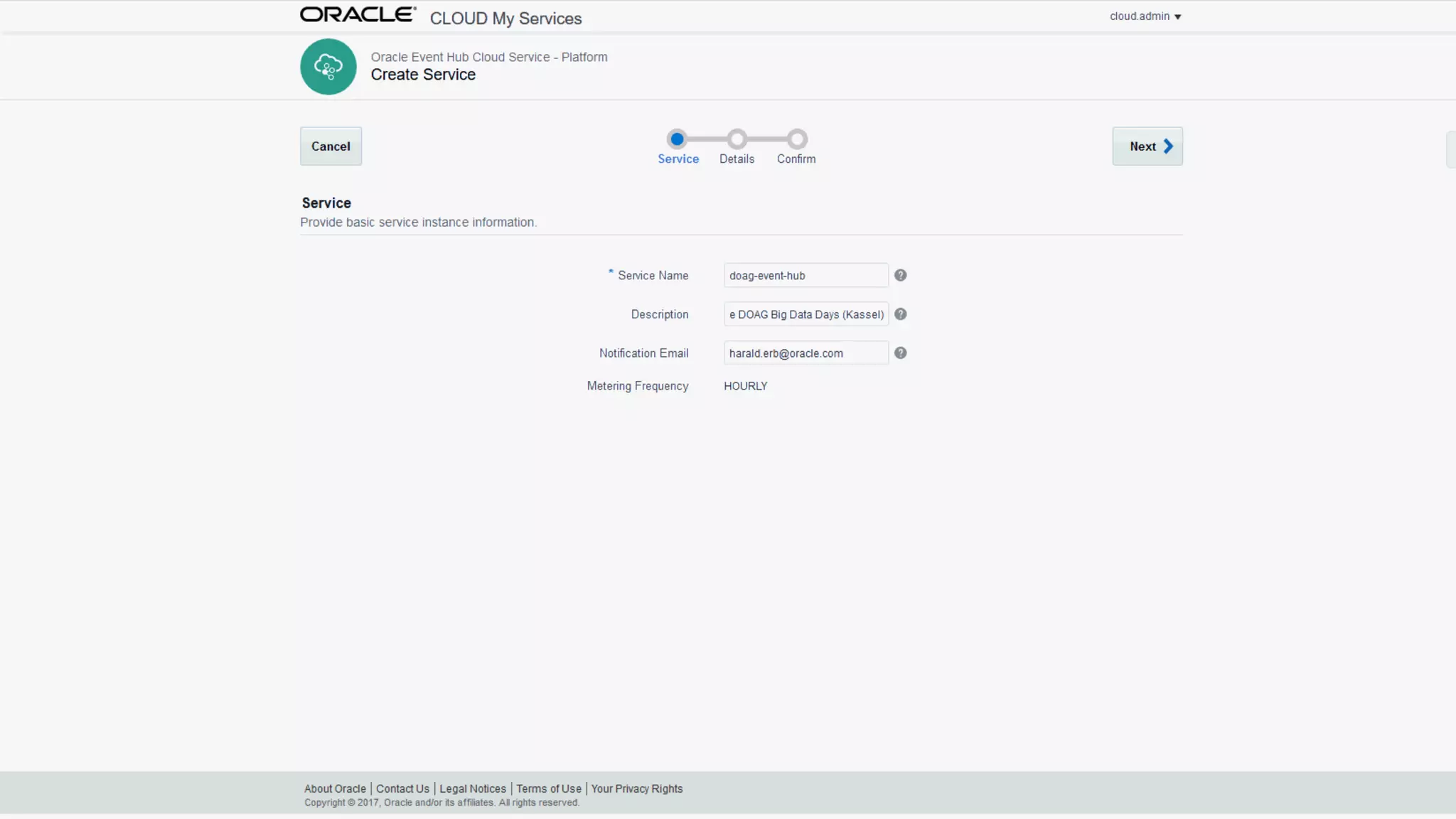

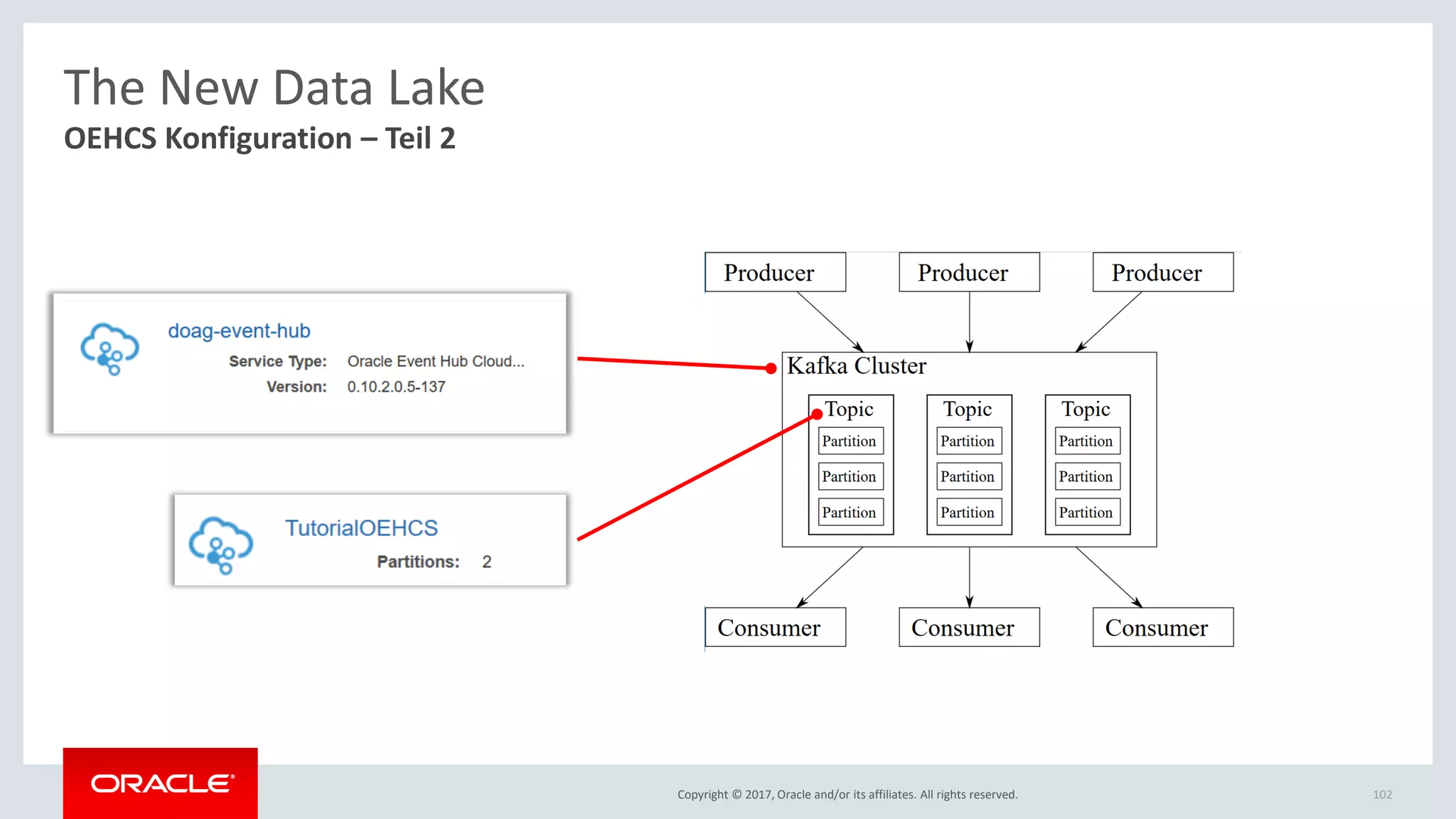

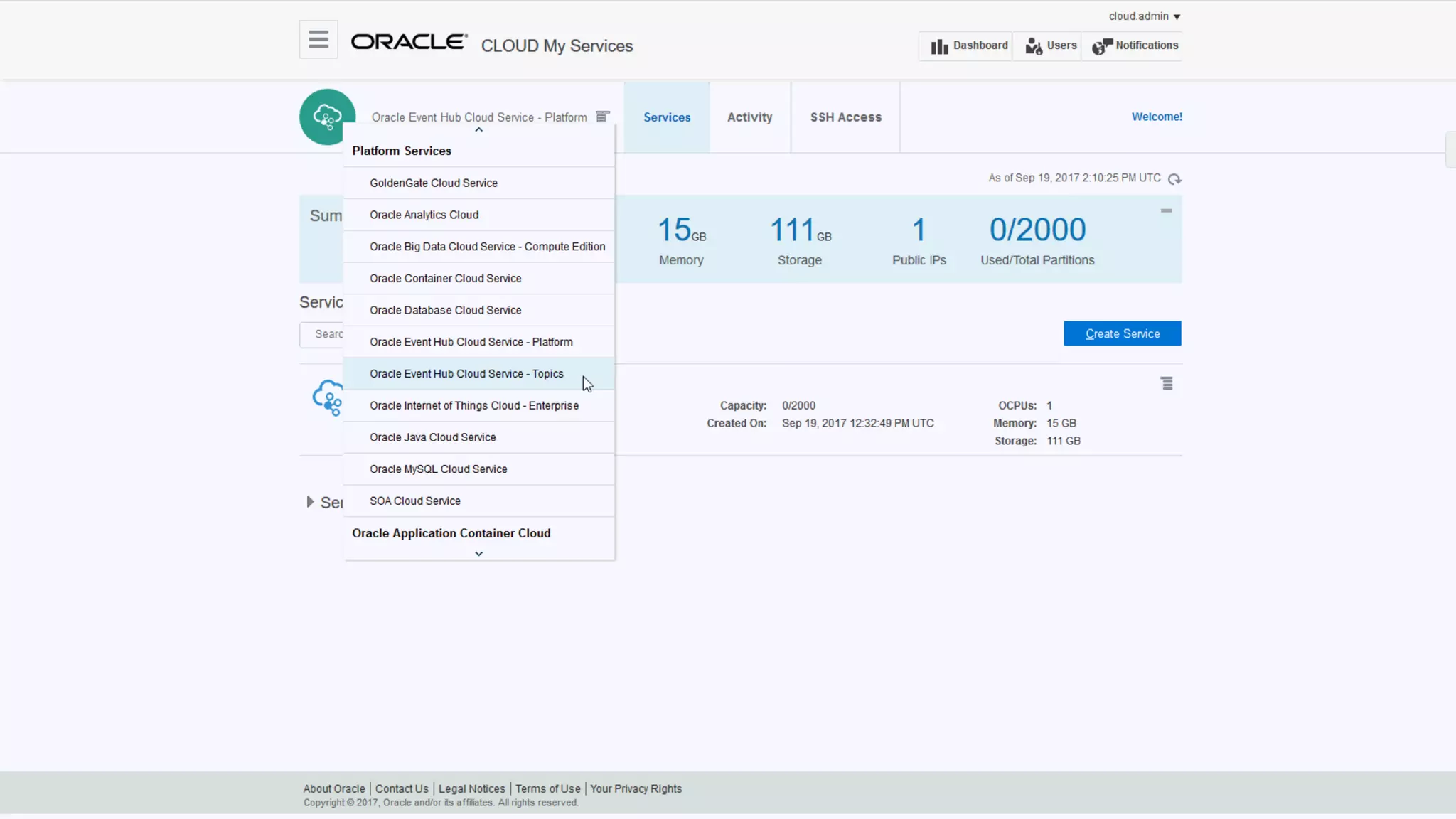

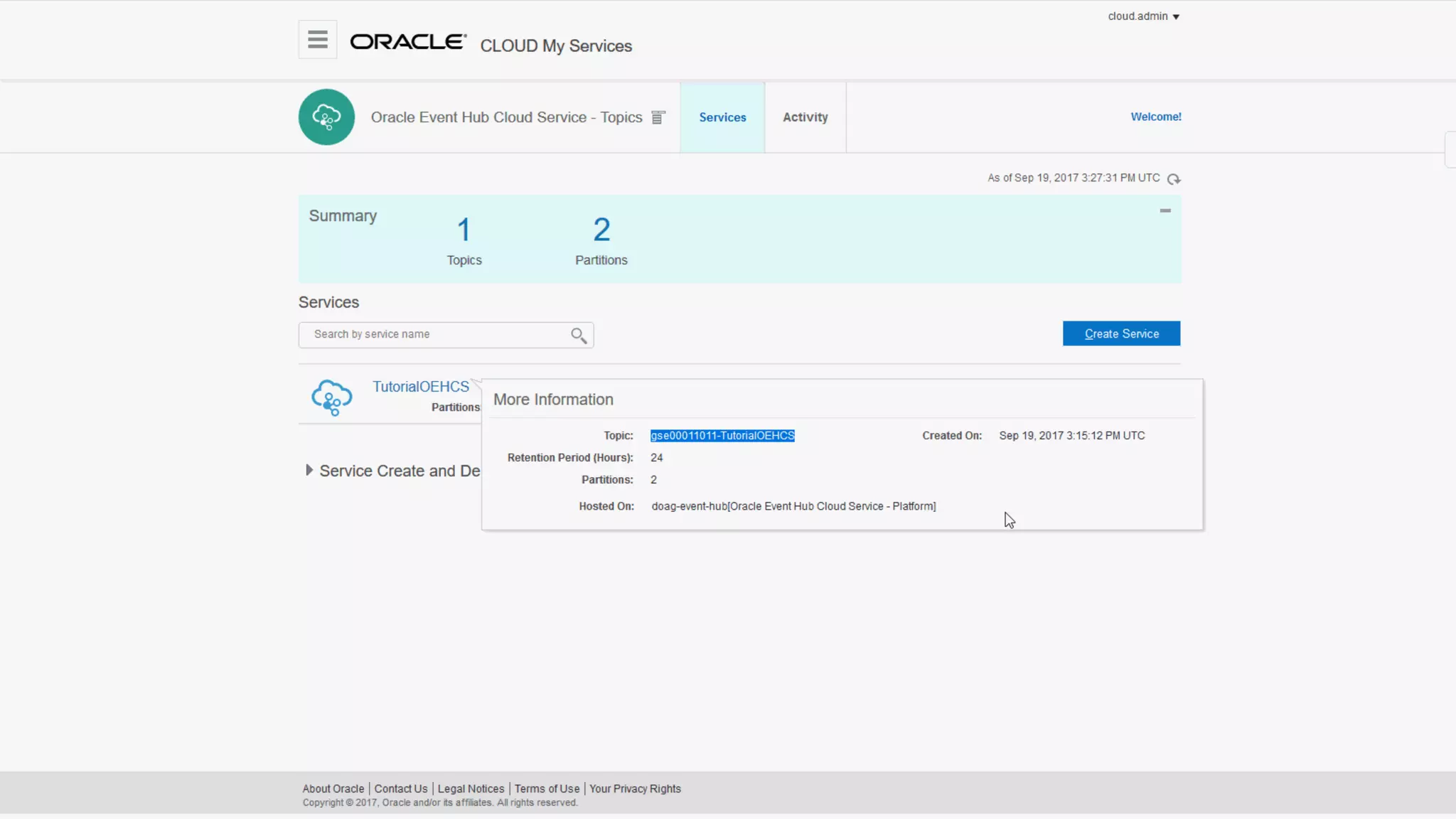

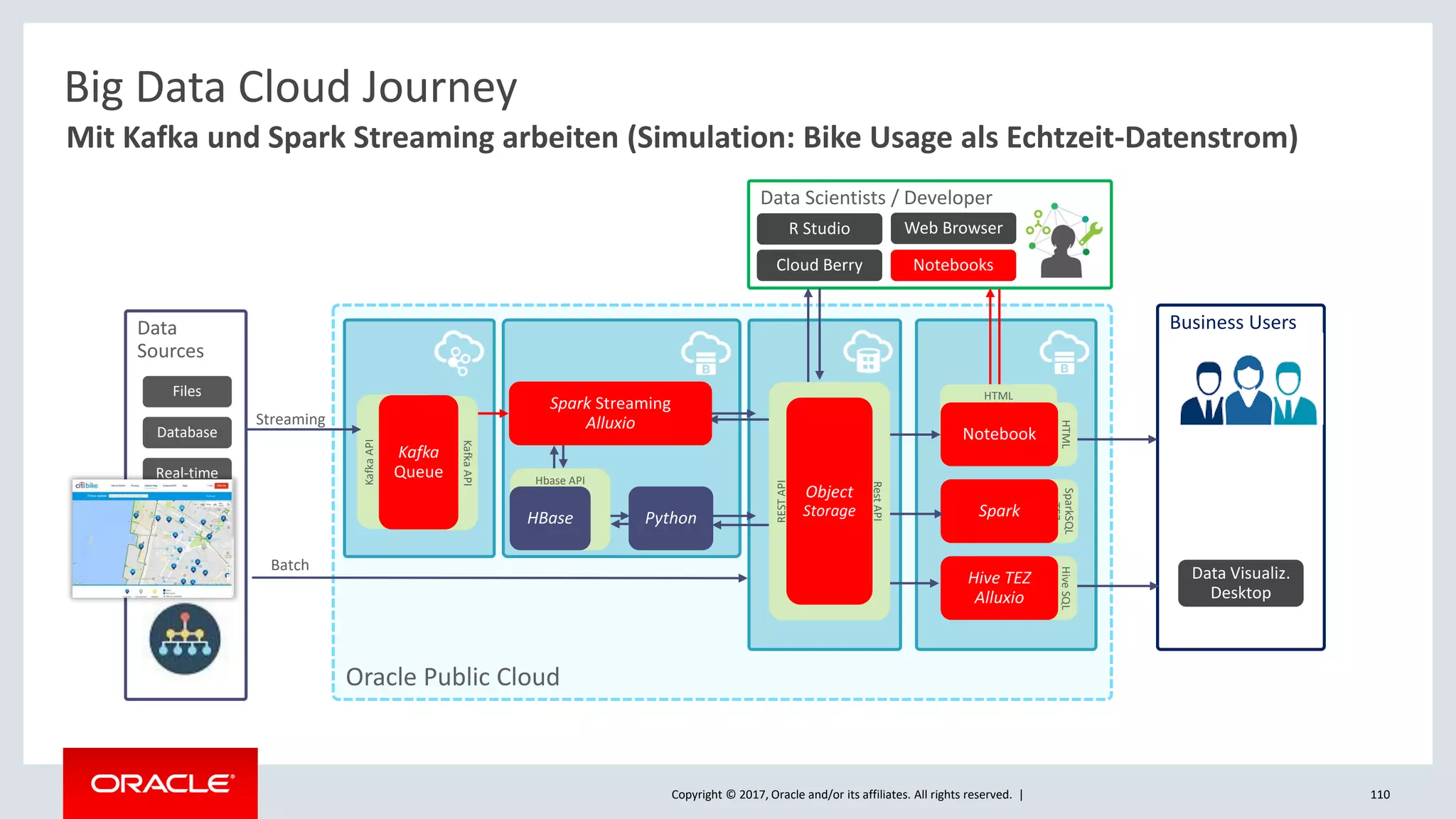

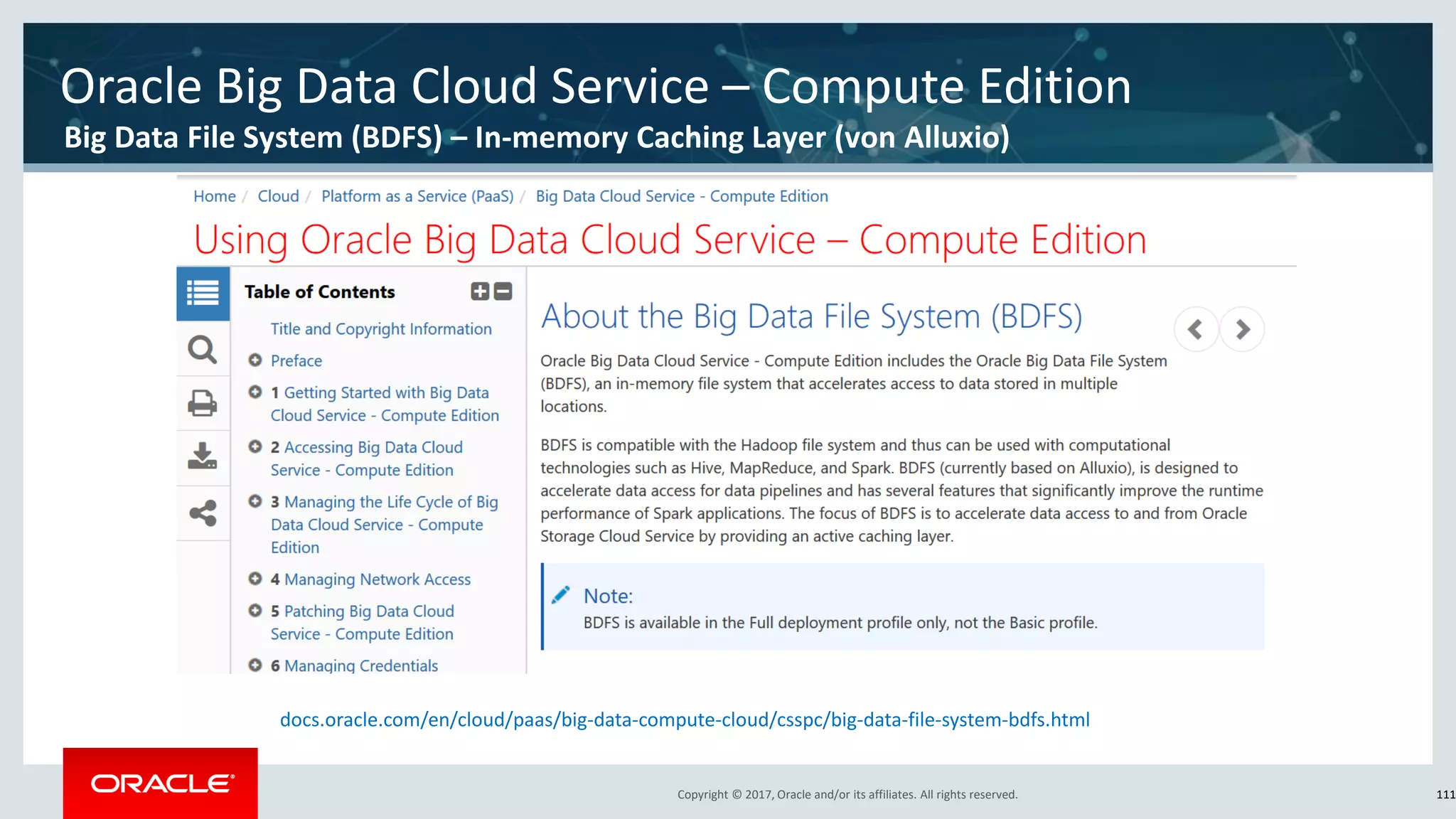

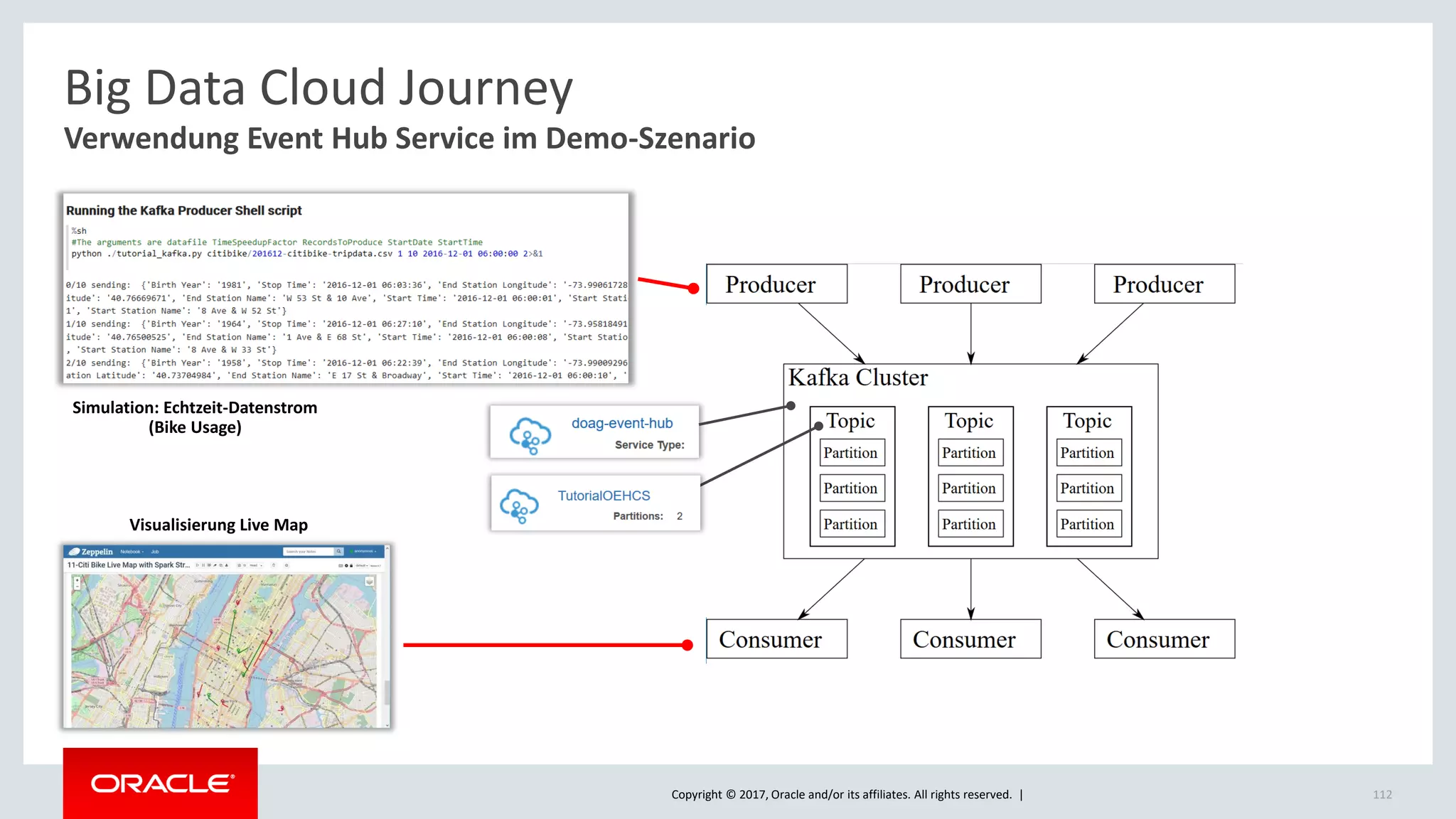

The document discusses Oracle's cloud-based data lake and analytics platform. It gives an overview of the key technologies and services available, including Spark, Kafka, Hive, object storage, notebooks and data visualization tools. It then outlines a scenario for setting up storage and big data services in Oracle Cloud to create a new data lake that takes in batch, real-time and external data sources. The goal is to provide an agile and scalable environment for data scientists, developers and business users.