Discrete mathematical structures are widely used in machine learning. Boolean algebra in particular underlies many machine learning algorithms and applications. The document discusses:

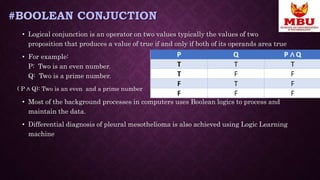

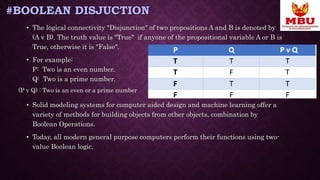

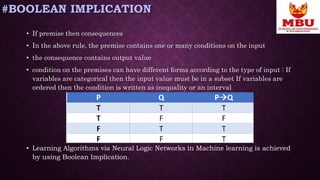

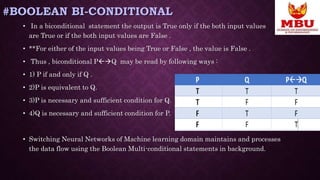

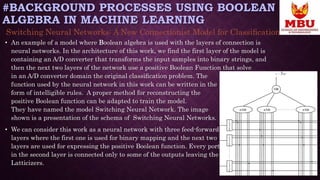

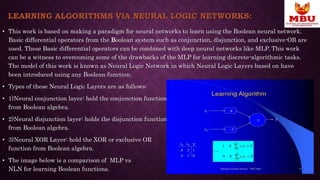

1) How Boolean logic is used in neural networks, with layers representing Boolean operations such as conjunction and disjunction.

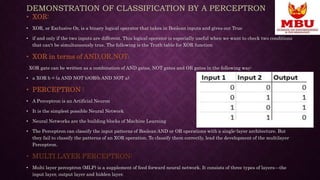

2) An example of classifying patterns with perceptrons using truth tables of Boolean AND and OR operations.

3) Real-life applications that use Boolean logic and machine learning, including diagnosing diseases from biomarker data and building a prognostic classifier for neuroblastoma.
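To make the perceptron example in item 2 concrete, here is a minimal sketch of a single perceptron learning the AND and OR truth tables. It is not taken from the summarized document: the function names (`predict`, `train_perceptron`), the step activation, the zero initial weights, and the learning rate are all assumptions chosen for illustration.

```python
# Illustrative sketch only: names and hyperparameters are assumptions,
# not the summarized document's own implementation.

# The four input rows shared by the AND and OR truth tables.
INPUTS = [(0, 0), (0, 1), (1, 0), (1, 1)]
AND_TARGETS = [0, 0, 0, 1]
OR_TARGETS = [0, 1, 1, 1]


def predict(weights, bias, x):
    """Step-activation perceptron: output 1 if the weighted sum is positive."""
    activation = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1 if activation > 0 else 0


def train_perceptron(inputs, targets, learning_rate=1, epochs=100):
    """Train with the classic perceptron update rule until an epoch has no errors."""
    weights = [0, 0]
    bias = 0
    for _ in range(epochs):
        mistakes = 0
        for x, target in zip(inputs, targets):
            error = target - predict(weights, bias, x)
            if error != 0:
                # Move the decision boundary toward the misclassified row.
                weights = [w + learning_rate * error * xi
                           for w, xi in zip(weights, x)]
                bias += learning_rate * error
                mistakes += 1
        if mistakes == 0:
            break  # every truth-table row is classified correctly
    return weights, bias


if __name__ == "__main__":
    for name, targets in [("AND", AND_TARGETS), ("OR", OR_TARGETS)]:
        weights, bias = train_perceptron(INPUTS, targets)
        outputs = [predict(weights, bias, x) for x in INPUTS]
        print(name, "weights:", weights, "bias:", bias, "outputs:", outputs)
```

Both AND and OR are linearly separable, so the update rule settles on a separating line after a handful of passes over the four rows. A function such as XOR is not linearly separable, and a single perceptron of this kind cannot represent it.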

Discrete mathematics concepts like Boolean algebra thus play an important role in machine learning algorithms and their applications to solve real-world problems.