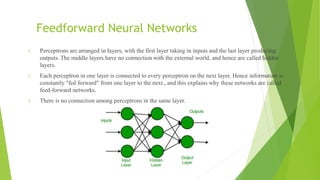

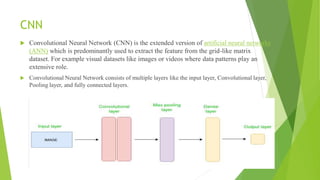

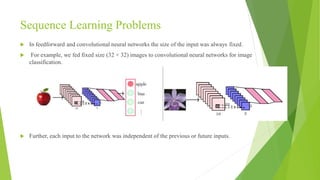

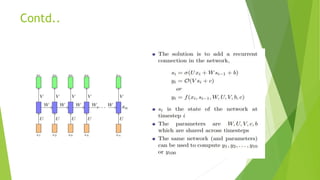

This document, by Aamir Maqsood of the Central University of Kashmir, presents an overview of recurrent neural networks (RNNs). It begins with an introduction and outline, then discusses feedforward neural networks and their limitations, and also covers convolutional neural networks. RNNs are introduced as a way to handle sequence learning problems: they model the dependence between successive inputs and accept inputs of variable size. The document describes the basic RNN structure and cell, and covers the different types of RNNs, distinguished by how inputs map to outputs: one-to-one, one-to-many, many-to-one, and many-to-many. It concludes with references for further reading on RNNs.
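To make the RNN cell and the many-to-one and many-to-many types concrete, here is a minimal sketch of a vanilla RNN forward pass. It is not taken from the slides. It assumes the common update rule h_t = tanh(W_xh x_t + W_hh h_{t-1} + b_h) with a zero initial hidden state, and the names `rnn_step`, `rnn_forward`, `random_matrix`, `W_xh`, `W_hh`, and `b_h` are illustrative. Plain Python lists are used so the sketch needs no third-party library; a real implementation would normally use an array library.

```python
import math
import random

def rnn_step(x_t, h_prev, W_xh, W_hh, b_h):
    """One vanilla RNN cell update: h_t = tanh(W_xh x_t + W_hh h_prev + b_h)."""
    h_t = []
    for i in range(len(b_h)):
        total = b_h[i]
        total += sum(w * x for w, x in zip(W_xh[i], x_t))     # input contribution
        total += sum(w * h for w, h in zip(W_hh[i], h_prev))  # recurrent contribution
        h_t.append(math.tanh(total))
    return h_t

def rnn_forward(xs, W_xh, W_hh, b_h):
    """Apply the same cell, with the same weights, to a sequence of any length."""
    h = [0.0] * len(b_h)  # assumed zero initial hidden state
    states = []
    for x_t in xs:
        # h carries information from earlier inputs into the current step.
        h = rnn_step(x_t, h, W_xh, W_hh, b_h)
        states.append(h)
    return states

def random_matrix(rows, cols):
    """Hypothetical helper: small random values standing in for trained weights."""
    return [[random.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]

random.seed(0)
input_dim, hidden_dim = 3, 4
W_xh = random_matrix(hidden_dim, input_dim)
W_hh = random_matrix(hidden_dim, hidden_dim)
b_h = [0.0] * hidden_dim

short_seq = random_matrix(2, input_dim)  # a sequence of 2 input vectors
long_seq = random_matrix(5, input_dim)   # a sequence of 5 input vectors

# Many-to-many: keep one hidden state per input step.
many_to_many = rnn_forward(long_seq, W_xh, W_hh, b_h)

# Many-to-one: keep only the final hidden state as a summary of the sequence.
many_to_one = rnn_forward(short_seq, W_xh, W_hh, b_h)[-1]
```

The same weights handle both the 2-step and the 5-step sequence, which is how an RNN accommodates variable input sizes. In practice an output layer would be applied to the hidden states; it is omitted here to keep the sketch short.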