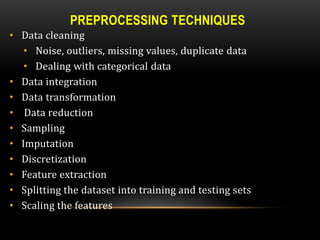

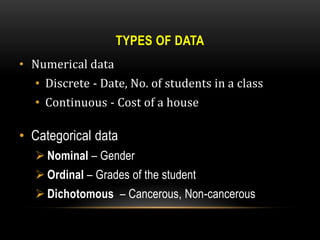

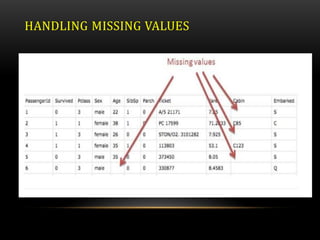

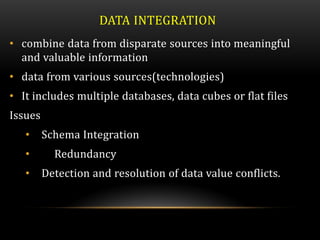

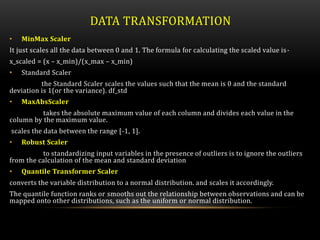

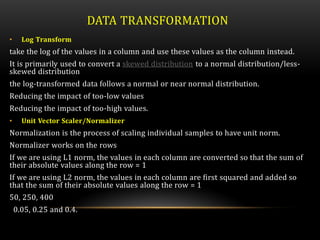

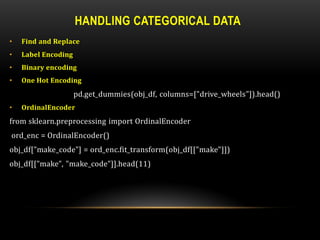

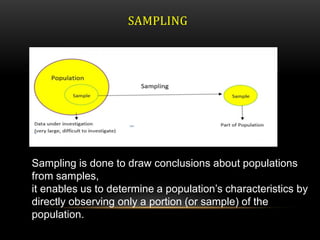

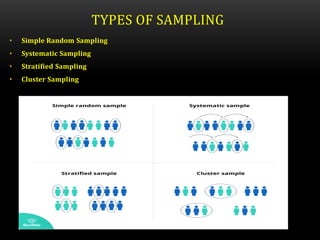

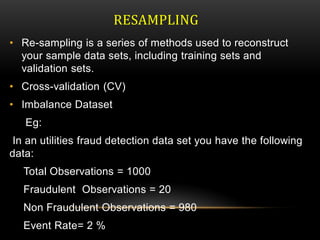

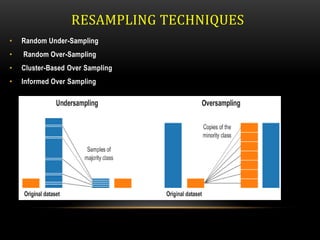

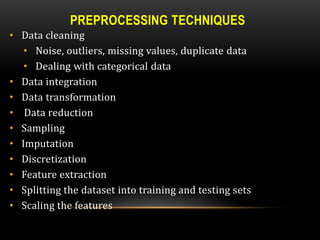

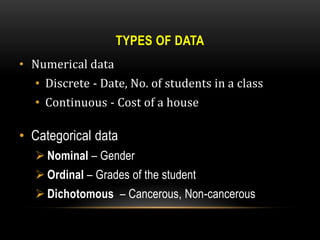

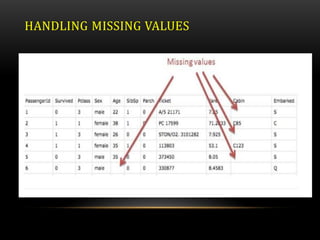

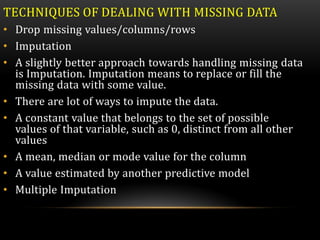

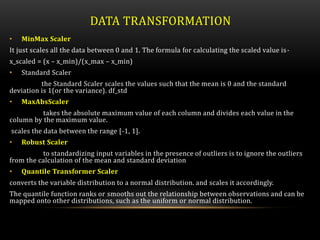

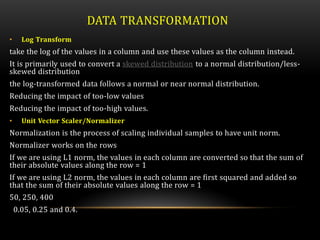

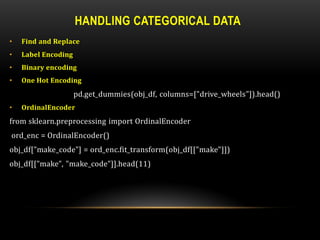

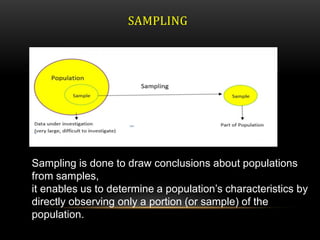

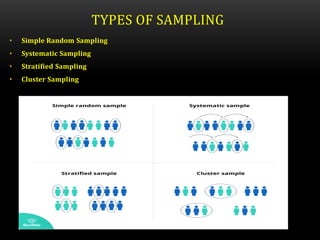

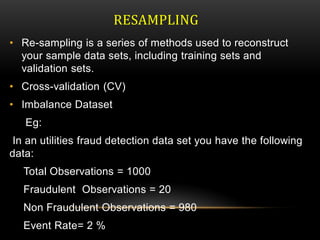

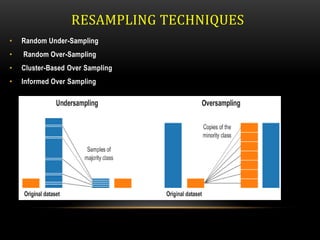

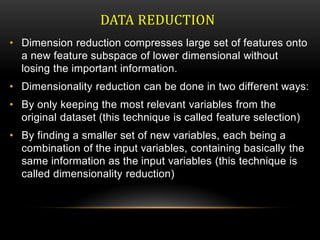

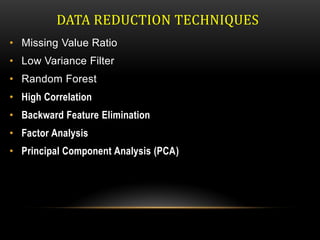

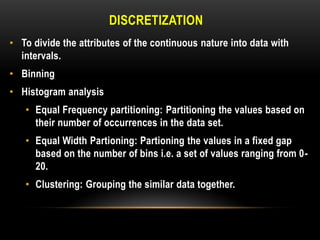

This document discusses techniques for data preprocessing: preparing raw data for analysis by cleaning, transforming, and reducing it. Cleaning covers handling missing values, outliers, and duplicates. Transformation covers normalization, discretization, and encoding categorical variables. The document also covers data reduction, including sampling, feature selection, and dimensionality reduction.
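As a rough illustration of the cleaning and transformation steps named above, here is a minimal sketch using only the Python standard library (3.8+). The function names, the sample records, and the specific choices (median imputation, IQR clipping with `k=1.5`, min-max scaling, equal-width bins) are assumptions made for this example; the presentation itself may use other methods or tools. Sampling and feature selection are left out to keep the sketch short.

```python
from statistics import median, quantiles


def drop_duplicates(rows):
    """Remove exact duplicate records, keeping the first occurrence."""
    seen, unique = set(), []
    for row in rows:
        key = tuple(sorted(row.items()))  # keys are unique, so values are never compared
        if key not in seen:
            seen.add(key)
            unique.append(row)
    return unique


def impute_median(values):
    """Replace missing values (None) with the median of the observed ones."""
    fill = median(v for v in values if v is not None)
    return [fill if v is None else v for v in values]


def clip_outliers_iqr(values, k=1.5):
    """Clip values to [Q1 - k*IQR, Q3 + k*IQR]."""
    q1, _, q3 = quantiles(values, n=4, method="inclusive")
    low, high = q1 - k * (q3 - q1), q3 + k * (q3 - q1)
    return [min(max(v, low), high) for v in values]


def min_max_normalize(values):
    """Rescale values linearly to the range [0, 1]."""
    low, high = min(values), max(values)
    if high == low:  # constant column: avoid division by zero
        return [0.0 for _ in values]
    return [(v - low) / (high - low) for v in values]


def equal_width_bins(values, n_bins):
    """Discretize values into n_bins equal-width intervals (bin indices from 0)."""
    low, high = min(values), max(values)
    width = (high - low) / n_bins or 1.0  # fall back to 1.0 for a constant column
    return [min(int((v - low) / width), n_bins - 1) for v in values]


def one_hot(values):
    """Encode a categorical column as one 0/1 indicator per category (sorted order)."""
    categories = sorted(set(values))
    return [[1 if v == c else 0 for c in categories] for v in values]


# Hypothetical sample records, invented for this example.
rows = [
    {"age": 28, "city": "Oslo"},
    {"age": None, "city": "Rome"},   # missing value
    {"age": 31, "city": "Oslo"},
    {"age": 28, "city": "Oslo"},     # exact duplicate of the first record
    {"age": 29, "city": "Rome"},
    {"age": 120, "city": "Lima"},    # implausible outlier
]

rows = drop_duplicates(rows)
ages = clip_outliers_iqr(impute_median([r["age"] for r in rows]))
scaled_ages = min_max_normalize(ages)
age_bins = equal_width_bins(ages, n_bins=3)
city_vectors = one_hot([r["city"] for r in rows])
```

The order of steps matters in this sketch: duplicates are dropped first so they do not skew the median, and outliers are clipped before normalization so that one extreme value does not compress the rest of the range.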