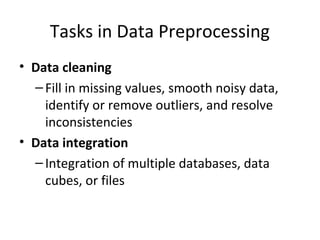

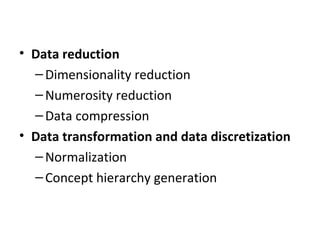

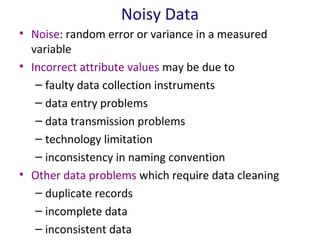

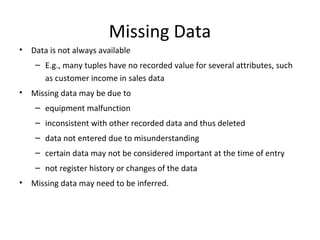

This document discusses data preprocessing techniques. It first explains why preprocessing matters: real-world data is often dirty, incomplete, noisy, or inconsistent. It then outlines the main preprocessing tasks: data cleaning, integration, reduction, and transformation. It describes specific techniques for handling missing data and noisy data and for data integration. Finally, it summarizes methods for data reduction: dimensionality reduction, numerosity reduction, and discretization.
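As a rough illustration of three of the technique families named above (handling missing data, smoothing noisy data, and discretization), here is a minimal Python sketch. The specific methods are assumptions chosen as common textbook examples, not necessarily the ones the document covers: mean imputation, smoothing by bin means, and equal-width binning. The function names are hypothetical.

```python
from statistics import mean


def fill_missing_with_mean(values):
    """Missing data: replace None entries with the mean of the observed values."""
    observed = [v for v in values if v is not None]
    if not observed:
        raise ValueError("no observed values to impute from")
    fill = mean(observed)
    return [fill if v is None else v for v in values]


def smooth_by_bin_means(values, bin_size):
    """Noisy data: sort the values, split them into bins of bin_size items,
    and replace every value with the mean of its bin."""
    ordered = sorted(values)
    smoothed = []
    for start in range(0, len(ordered), bin_size):
        bin_values = ordered[start:start + bin_size]
        smoothed.extend([mean(bin_values)] * len(bin_values))
    return smoothed


def equal_width_bins(values, n_bins):
    """Discretization: map each value to the index of one of n_bins
    equal-width intervals spanning [min(values), max(values)]."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0 for _ in values]
    width = (hi - lo) / n_bins
    # The maximum value would land in bin n_bins, so clamp it into the last bin.
    return [min(int((v - lo) / width), n_bins - 1) for v in values]


if __name__ == "__main__":
    print(fill_missing_with_mean([4, None, 8]))
    print(smooth_by_bin_means([4, 8, 15, 21, 21, 24, 25, 28, 34], 3))
    print(equal_width_bins([0, 5, 10], 2))
```

Each function returns a new list and leaves its input unchanged, so the steps can be chained or applied independently. Note that `smooth_by_bin_means` returns the values in sorted order rather than in their original positions.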