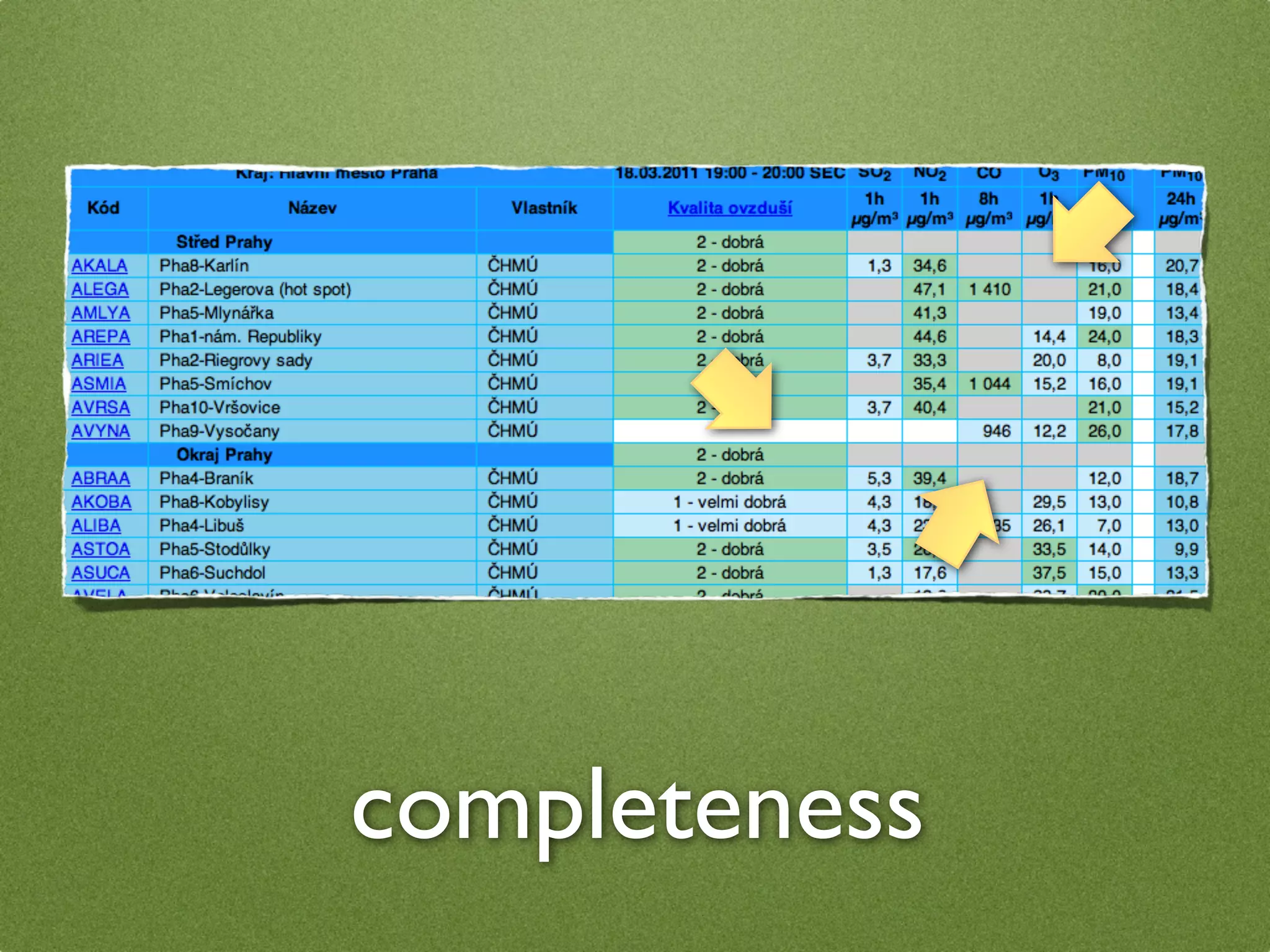

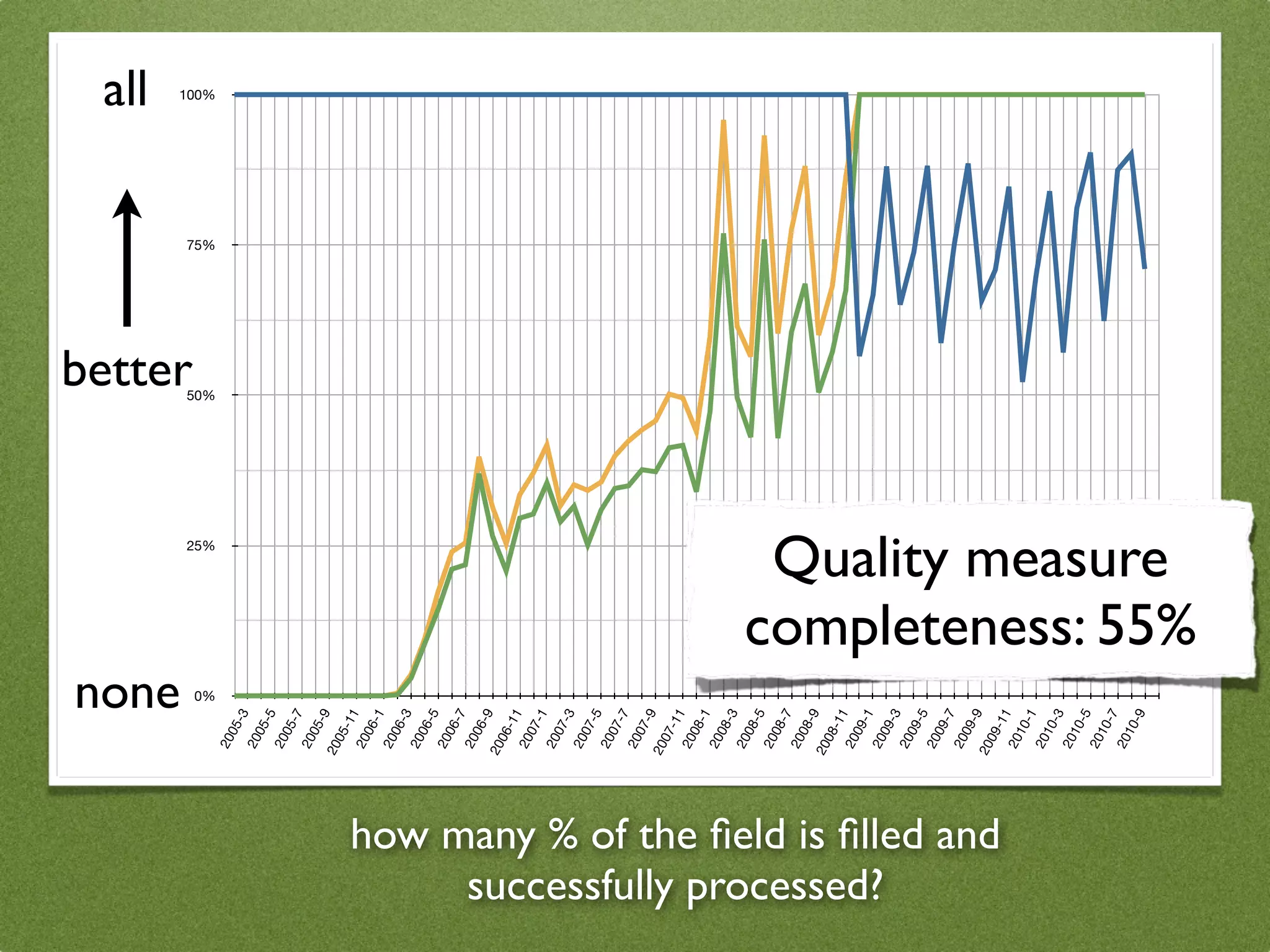

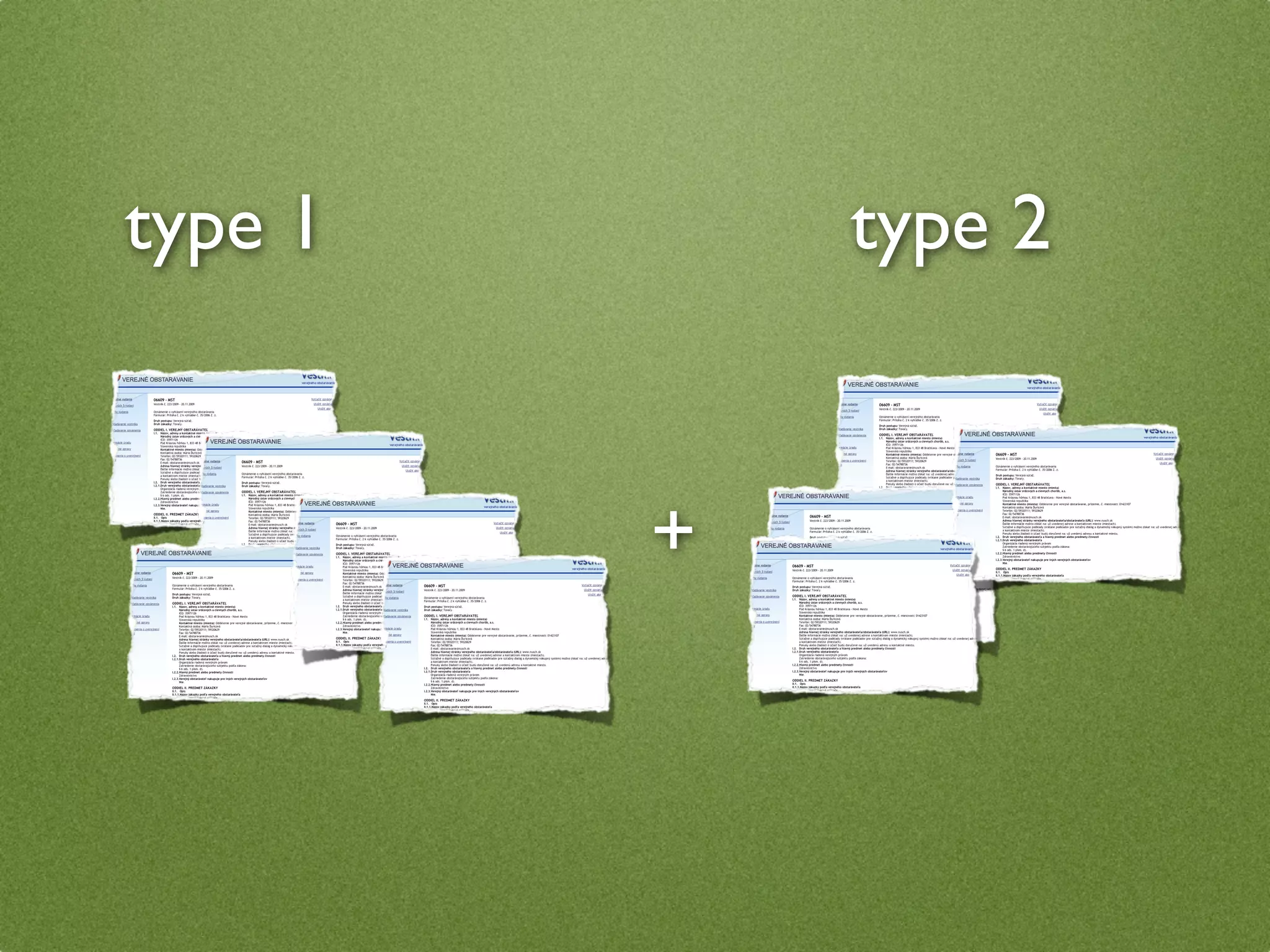

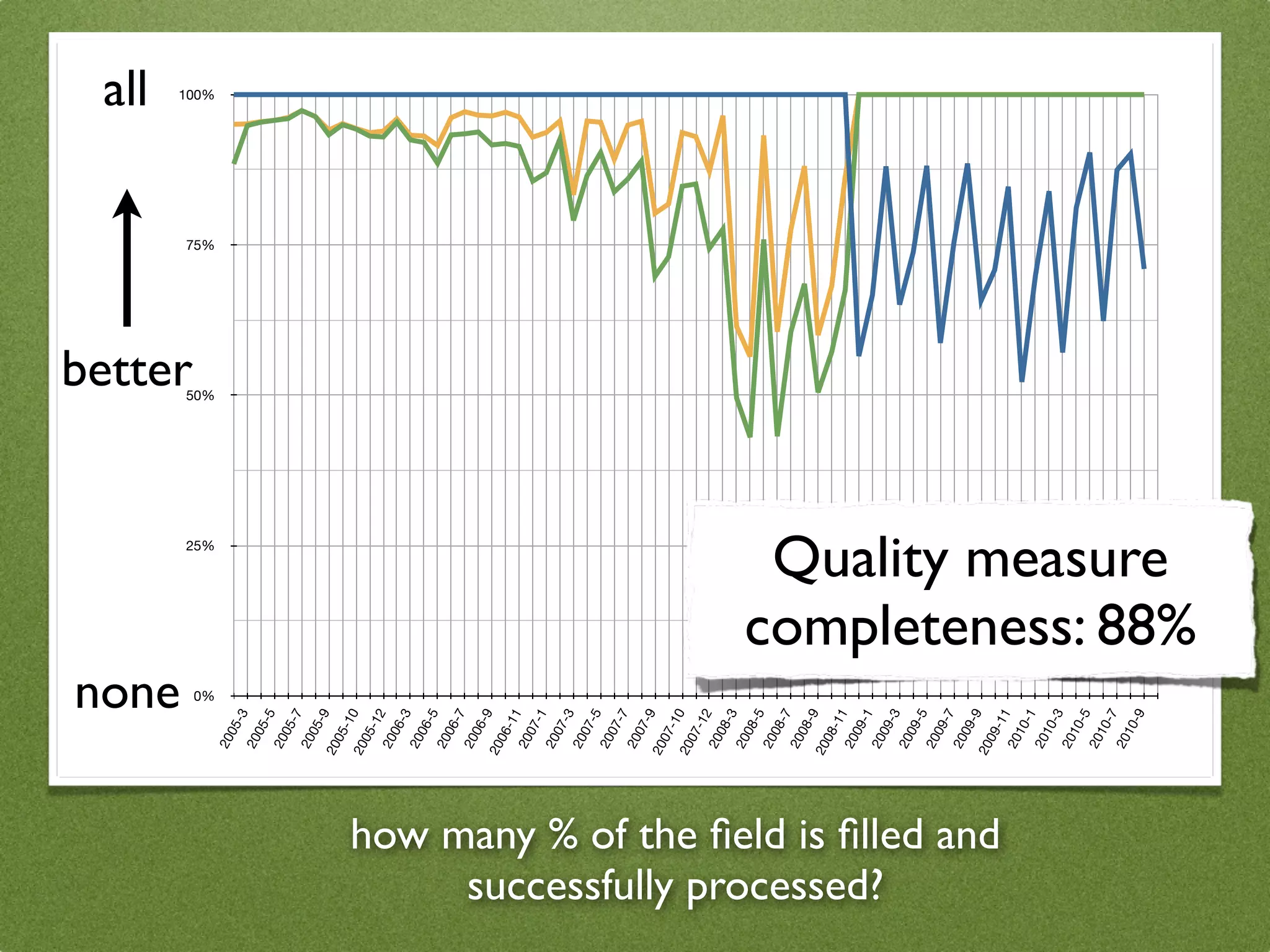

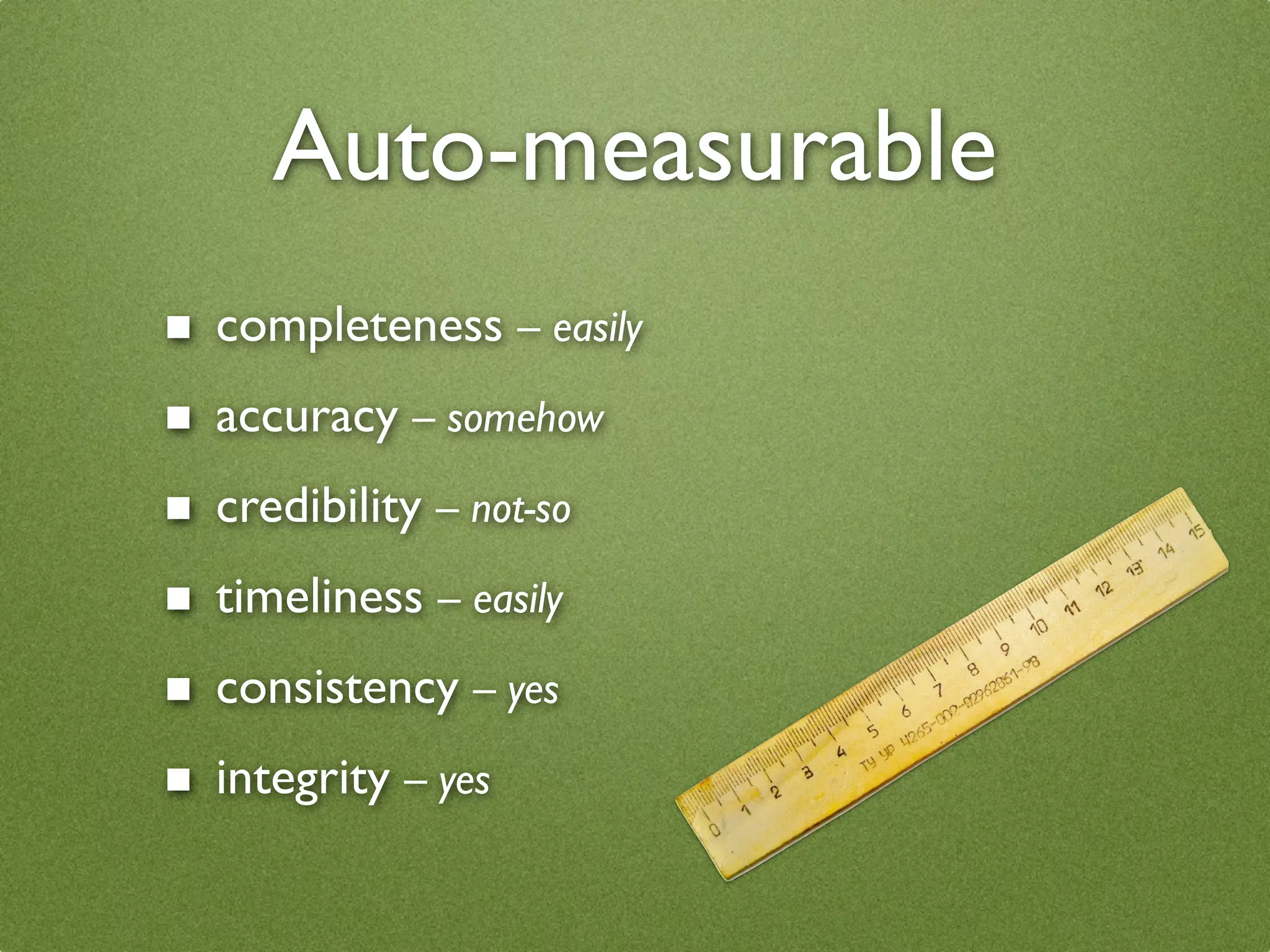

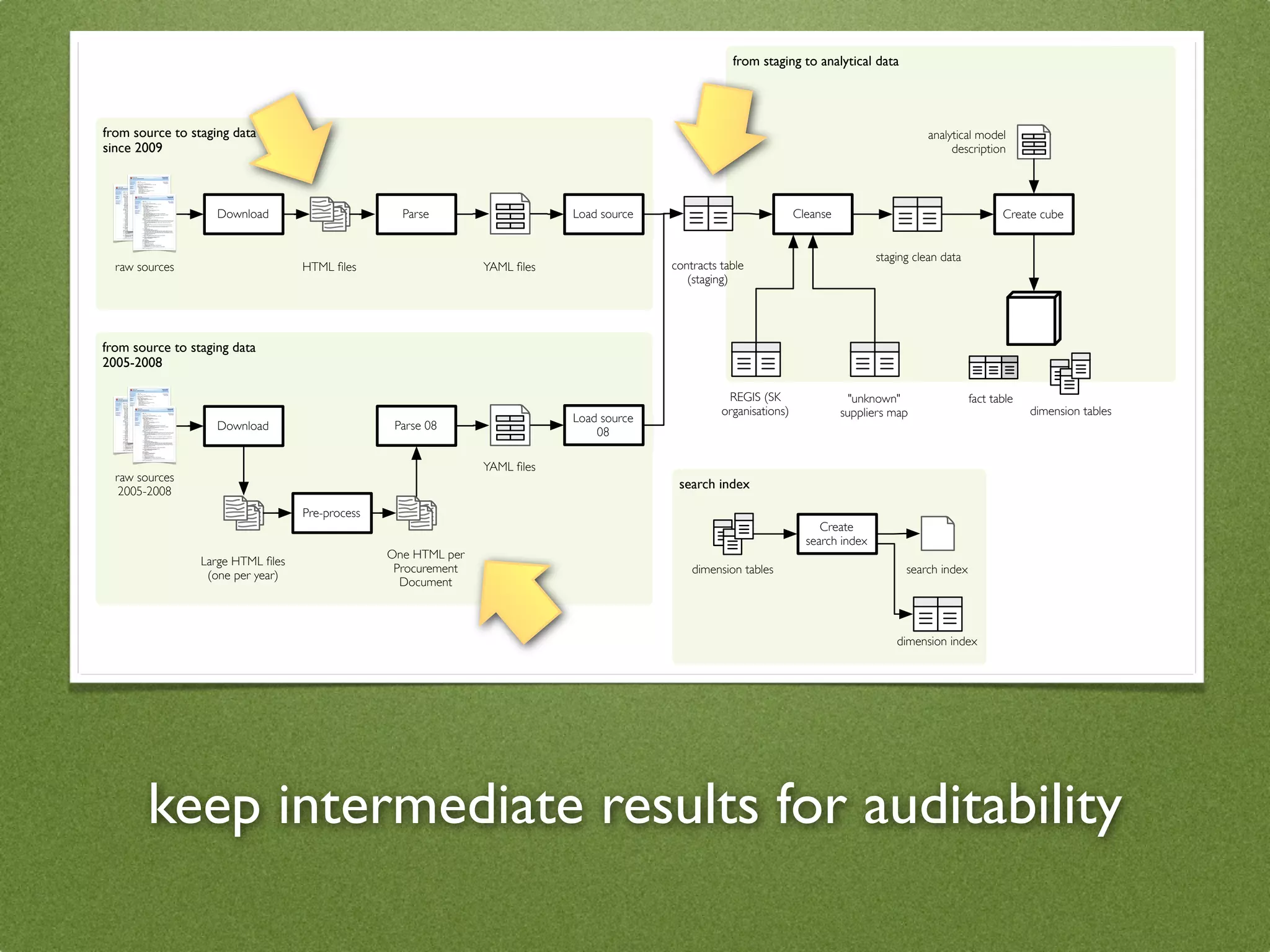

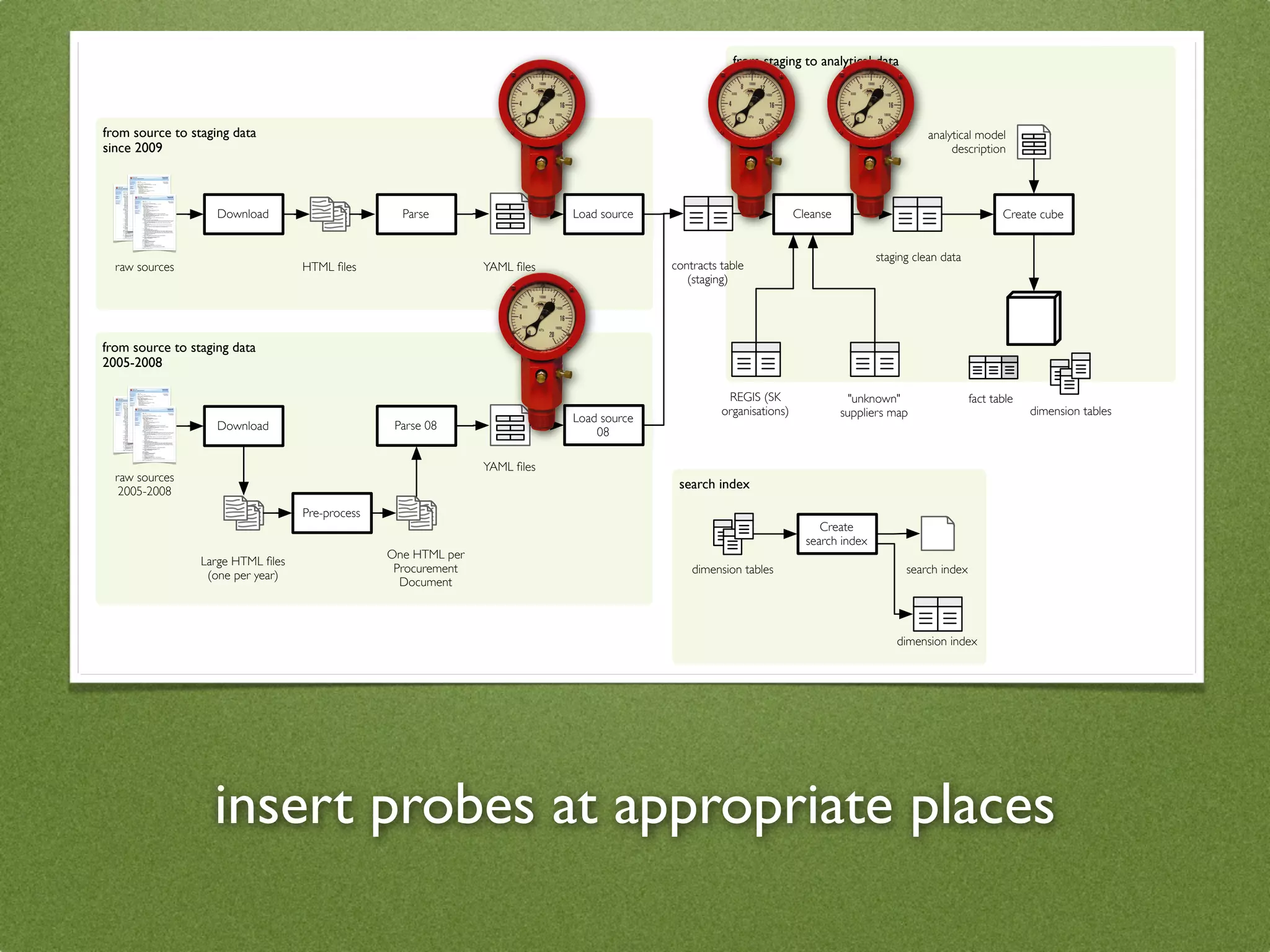

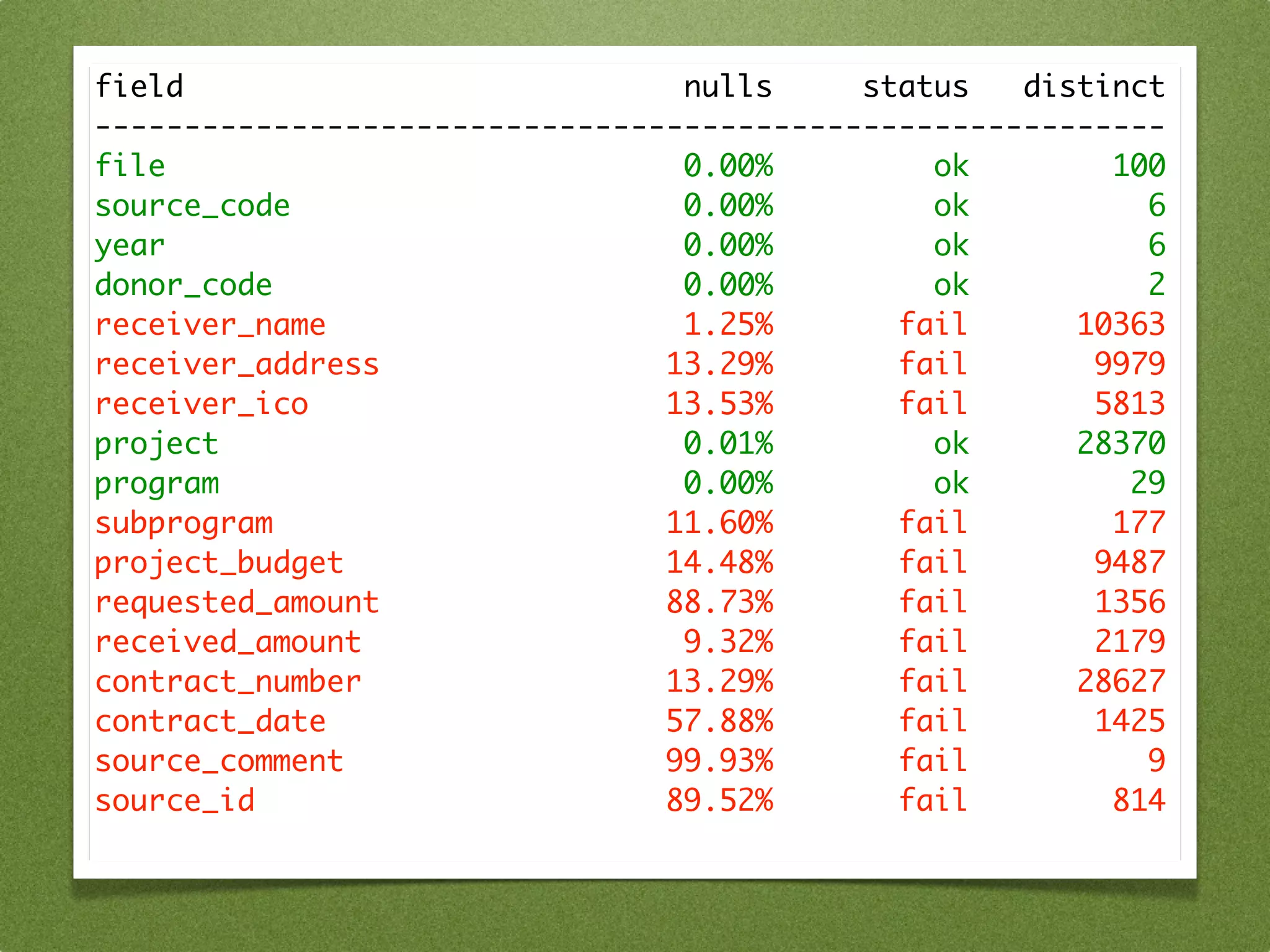

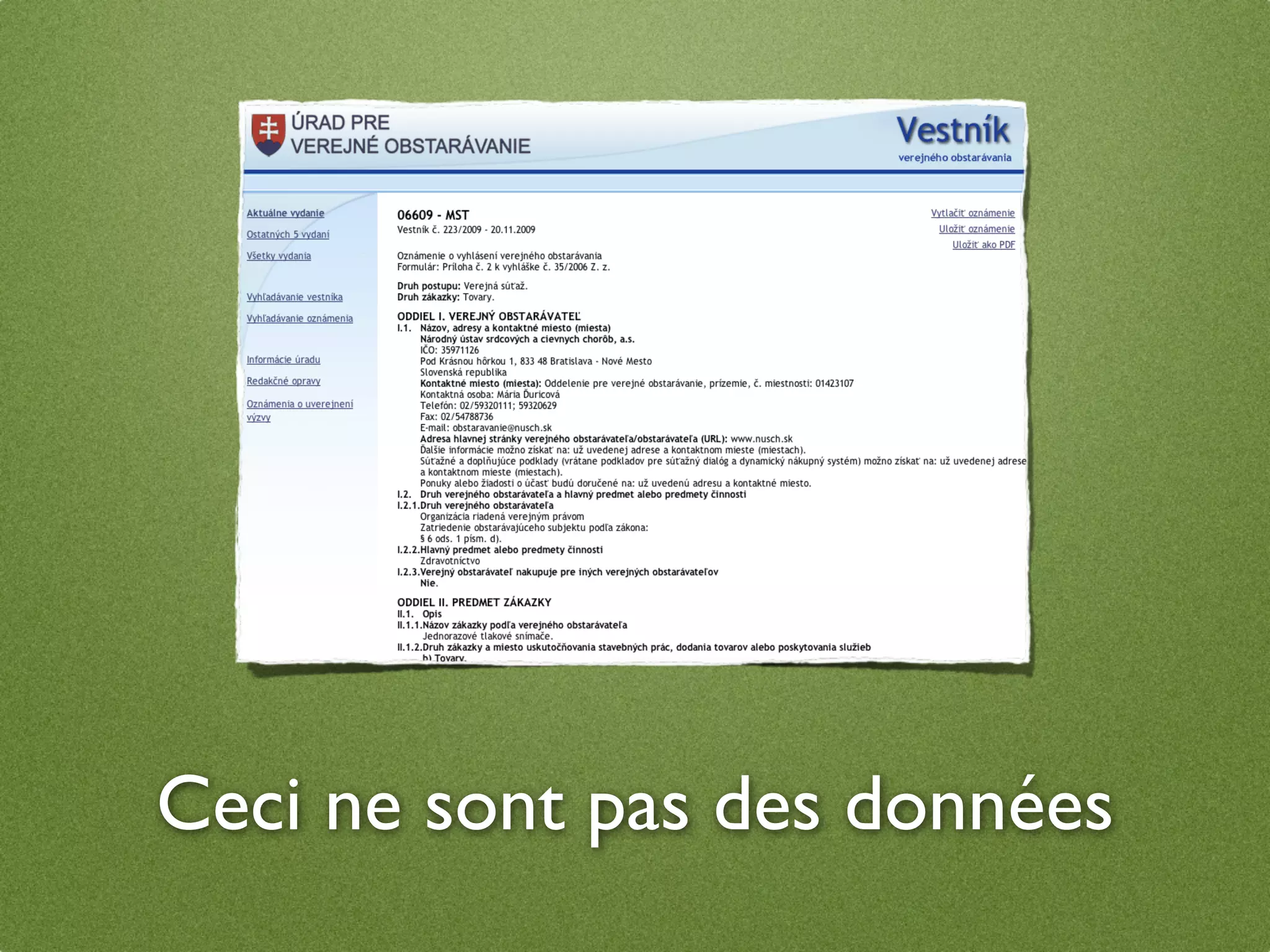

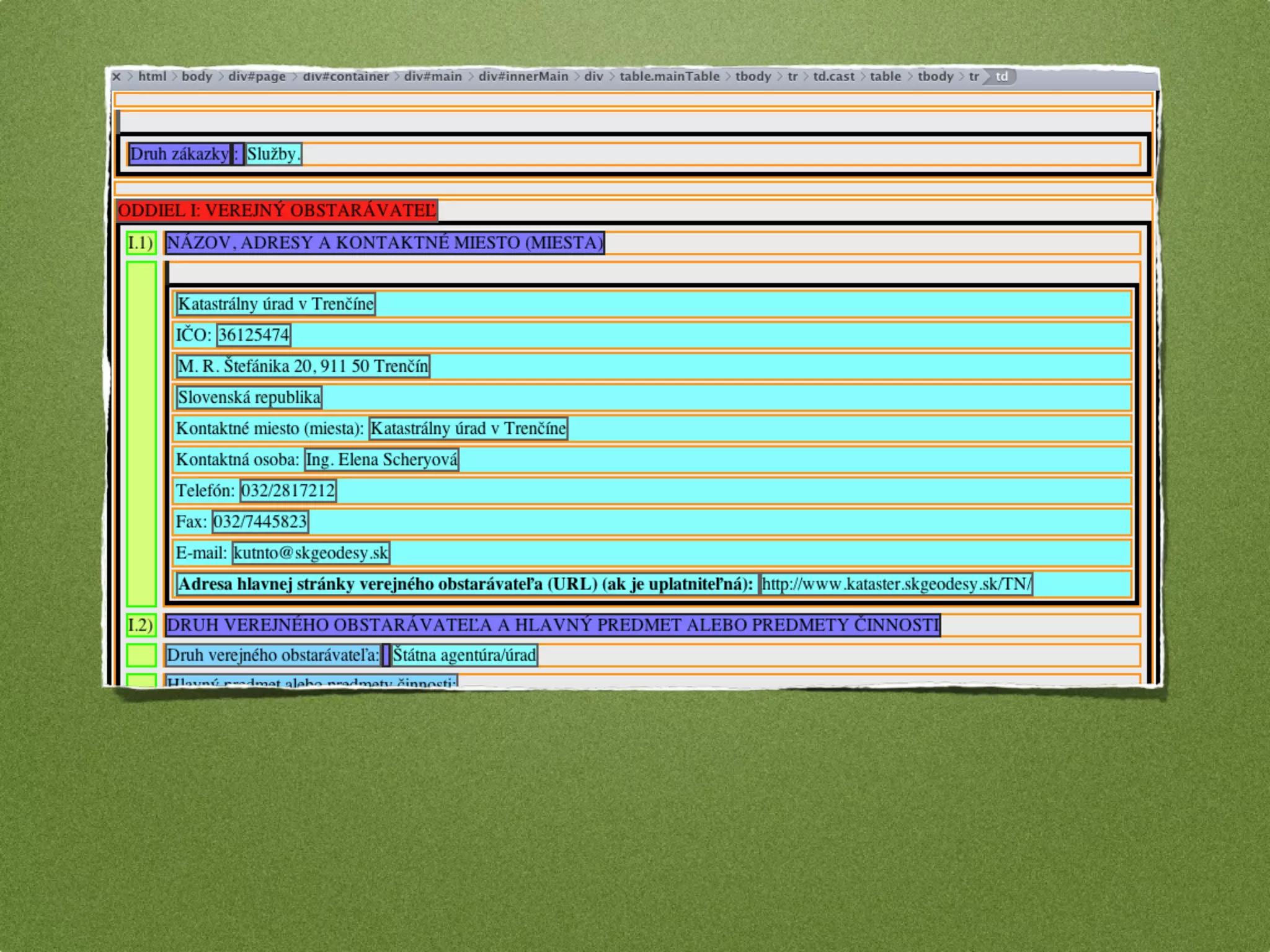

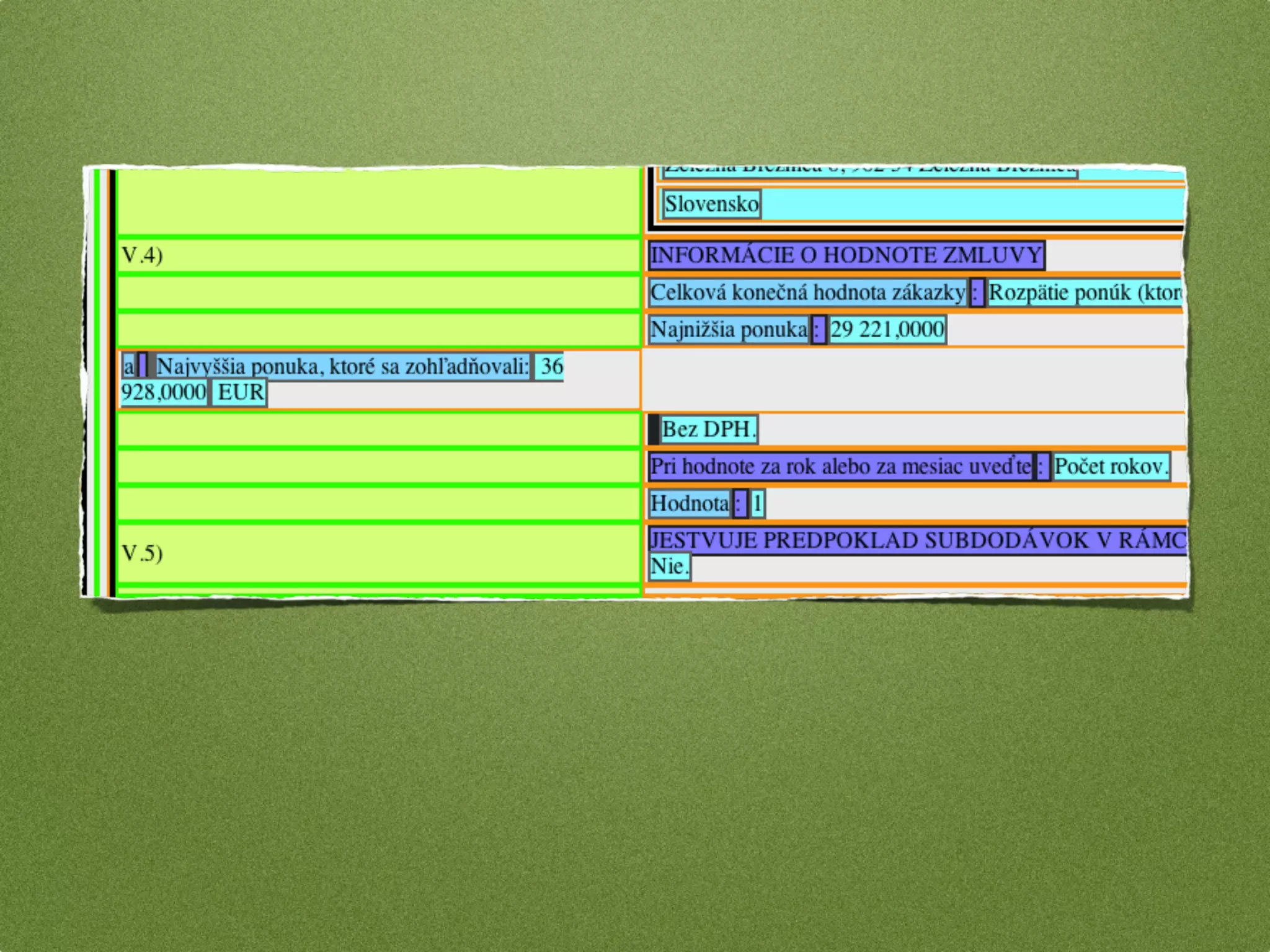

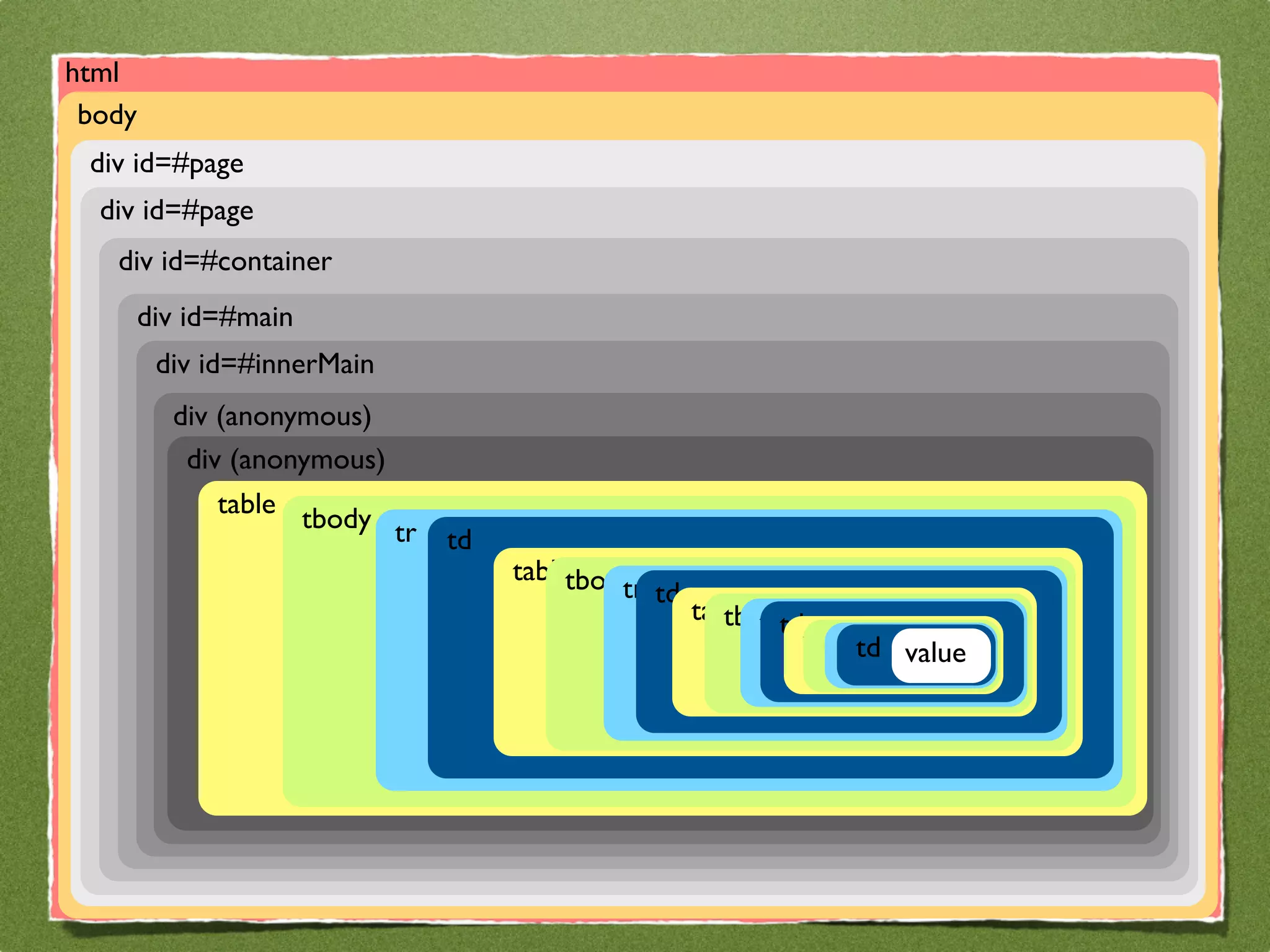

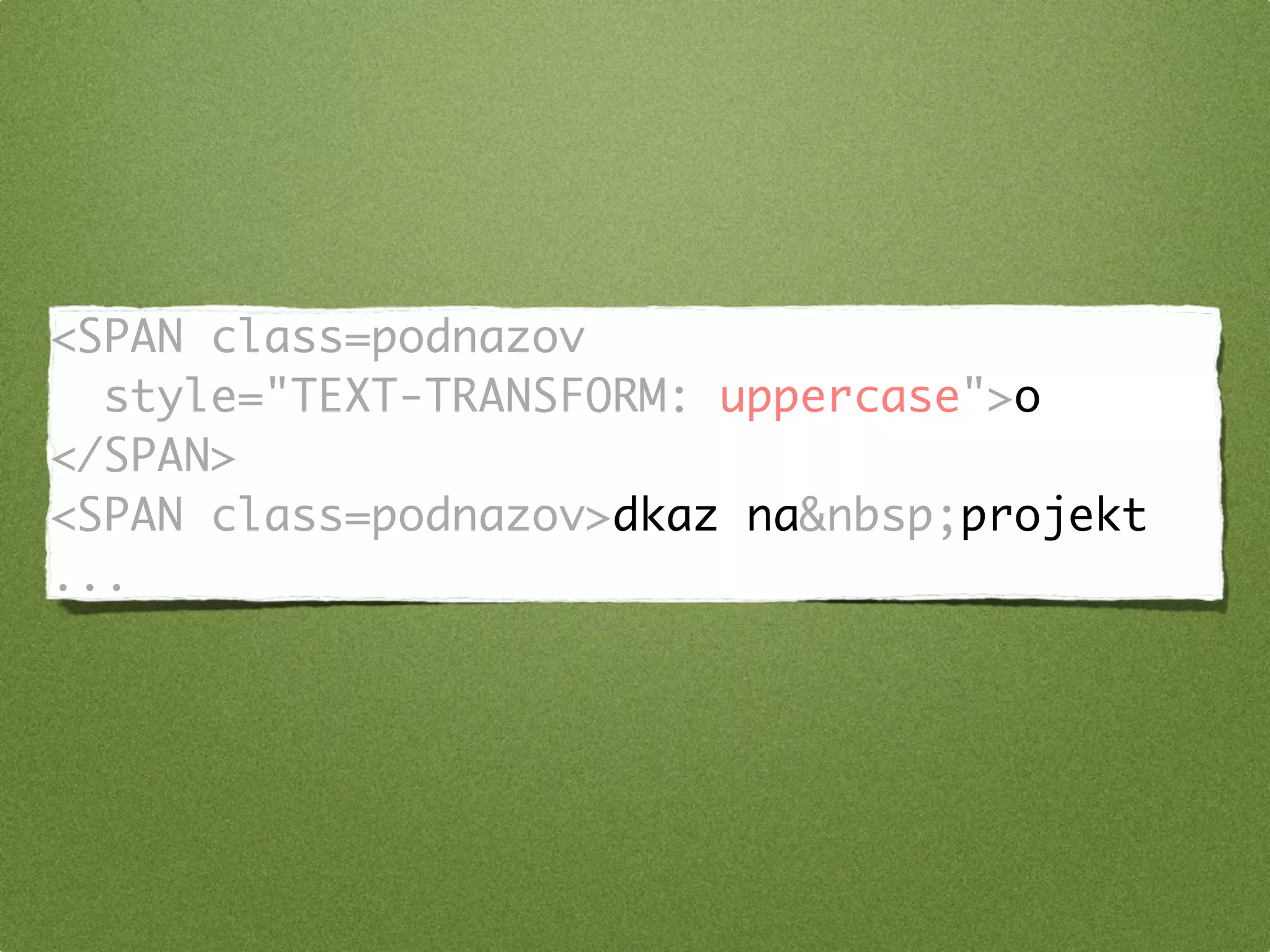

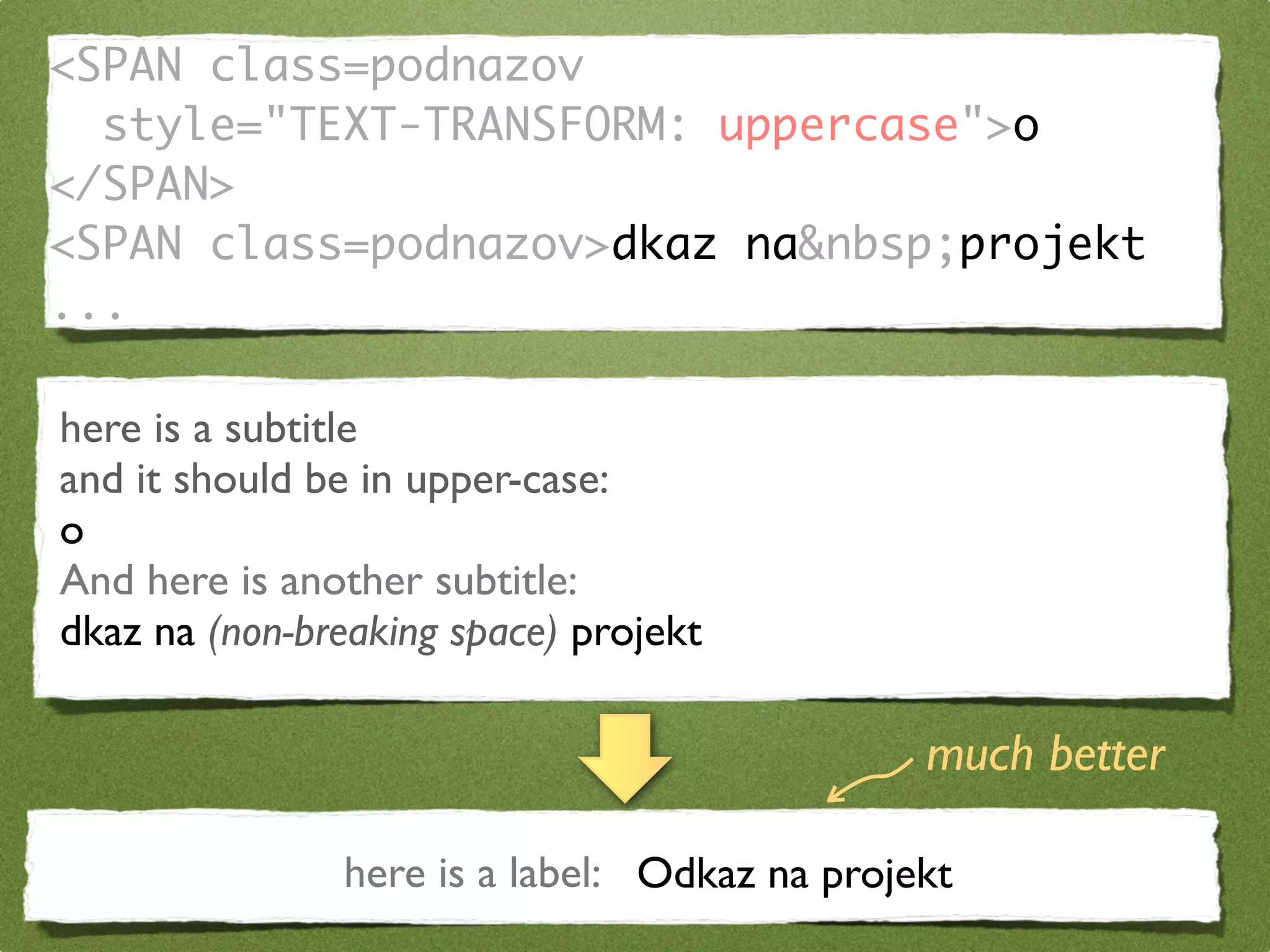

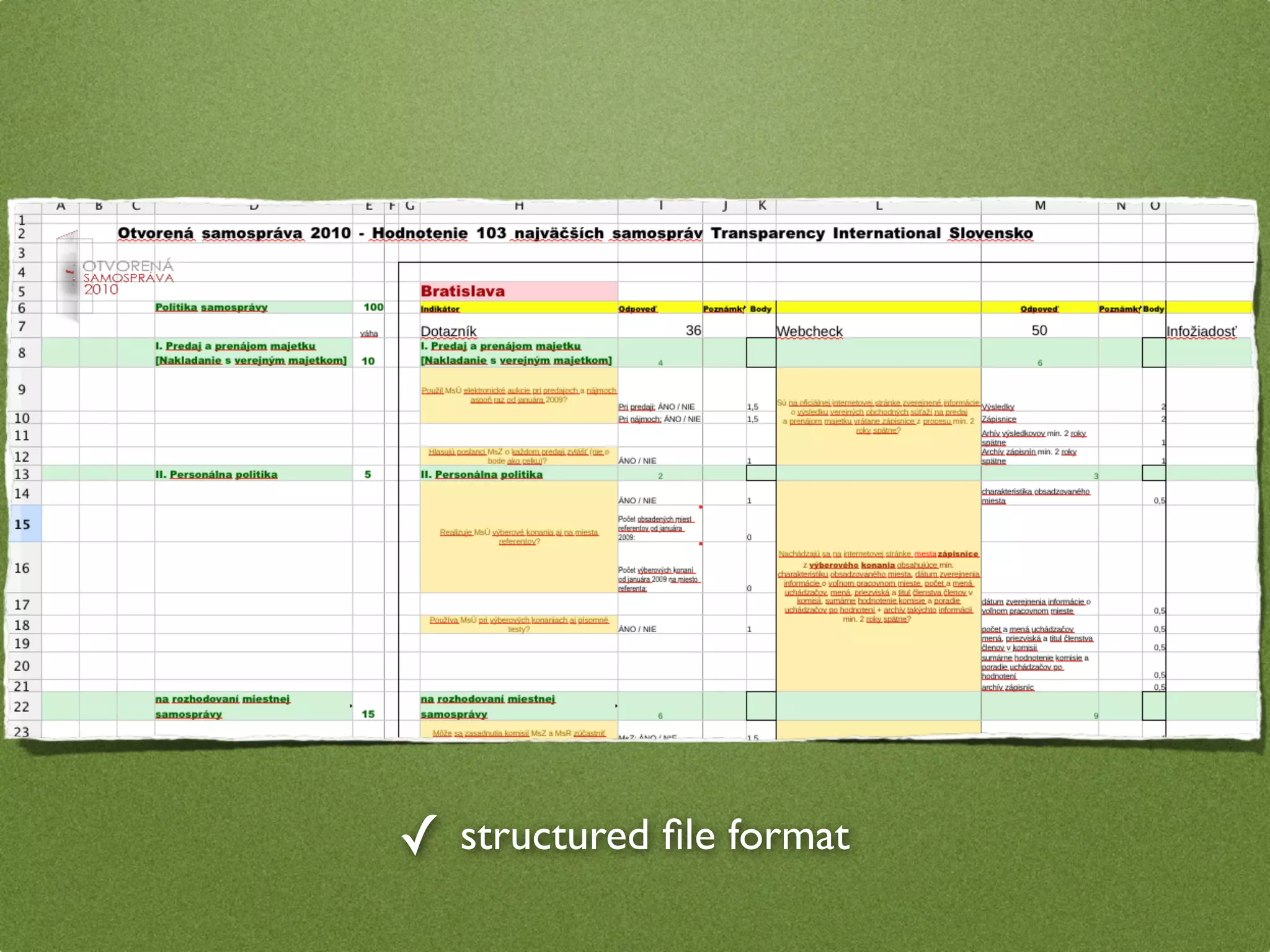

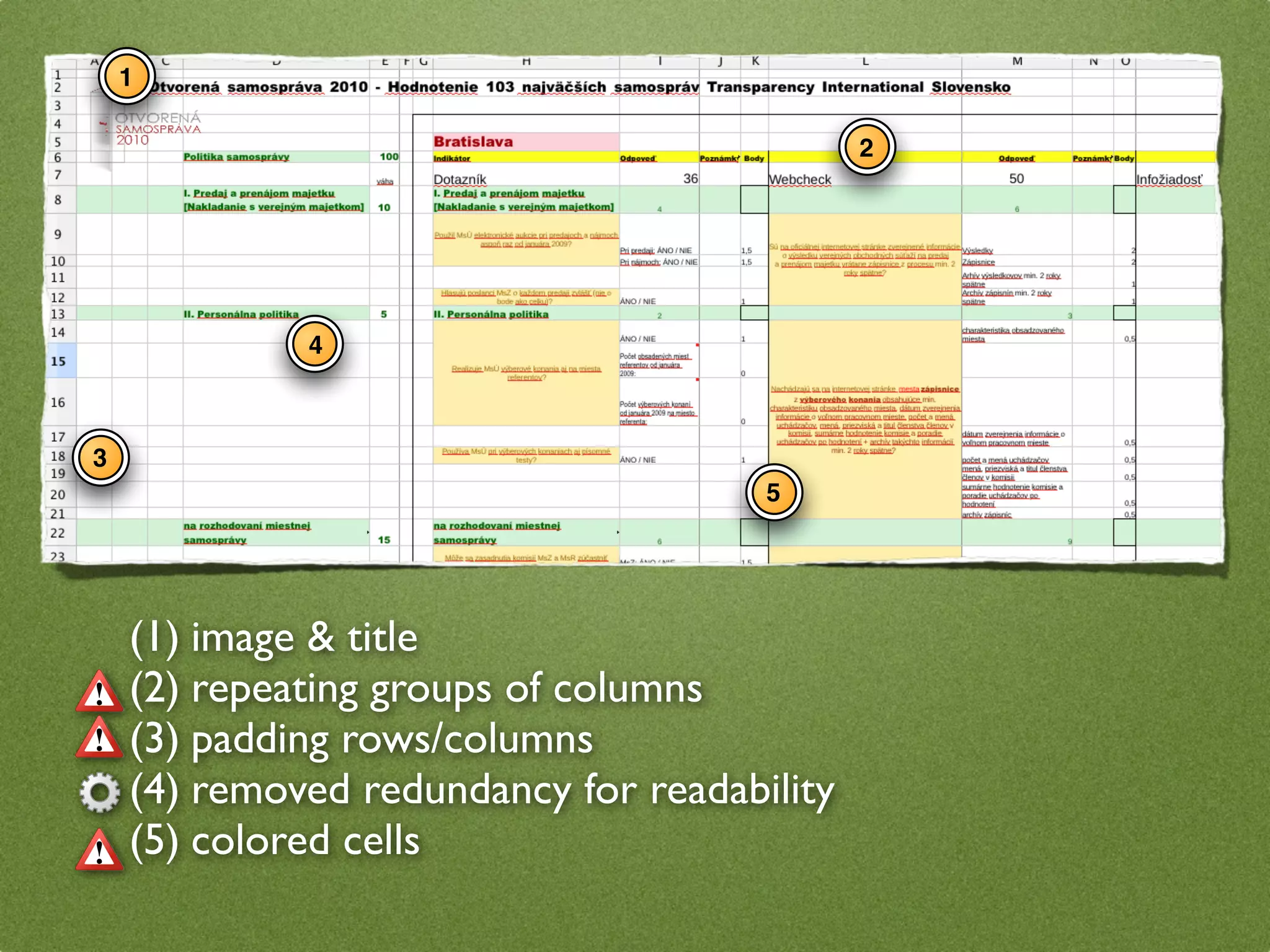

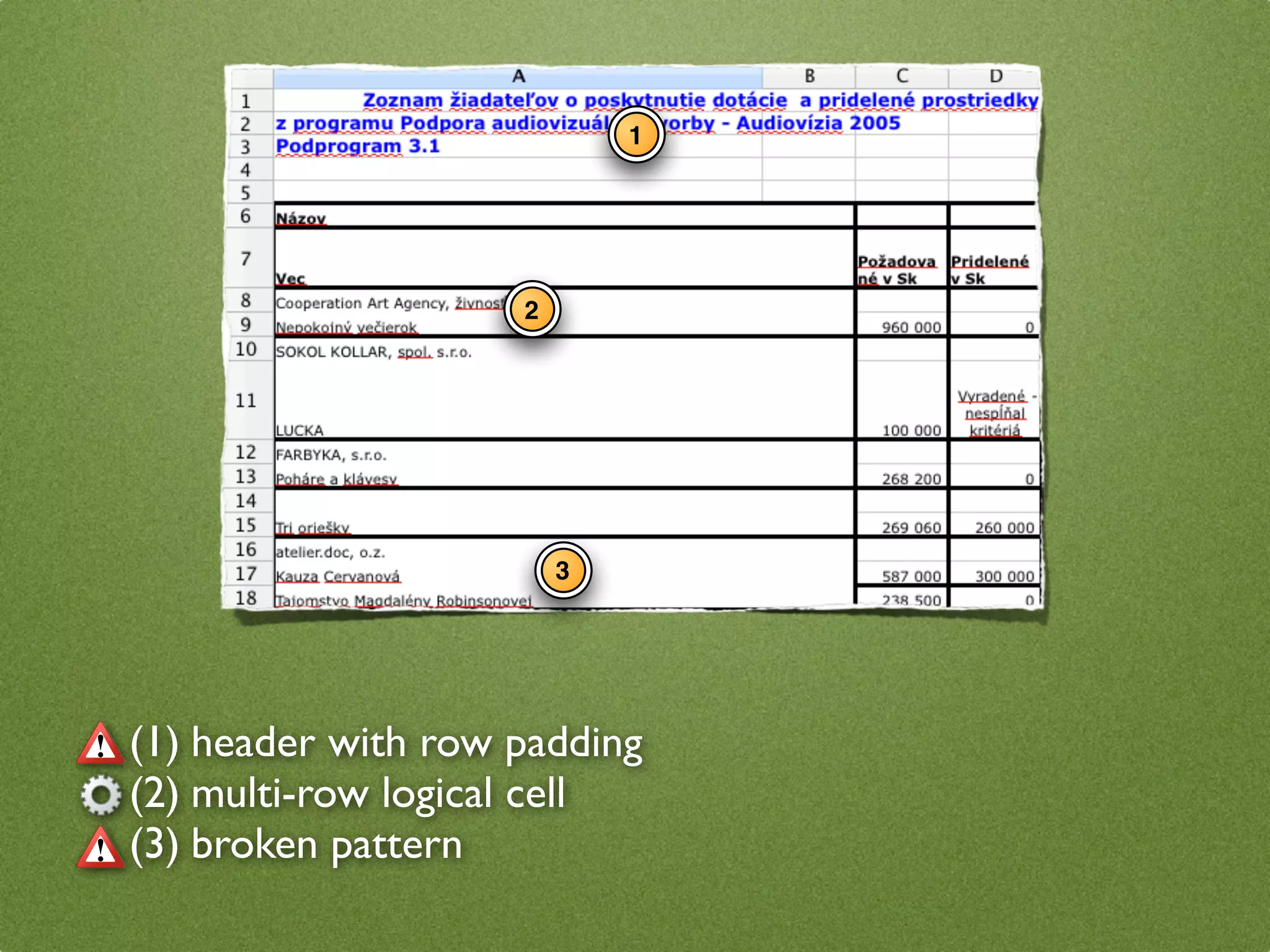

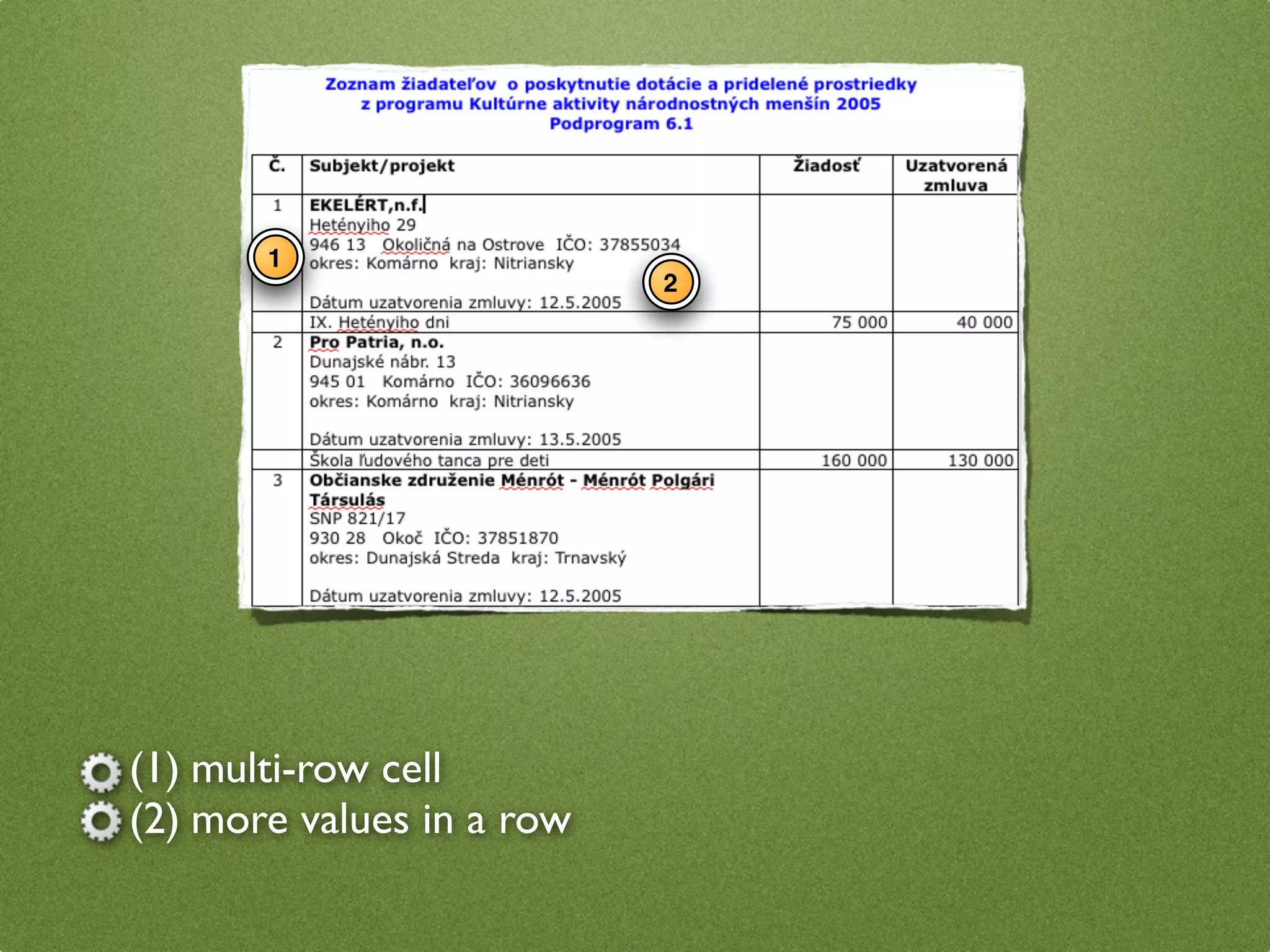

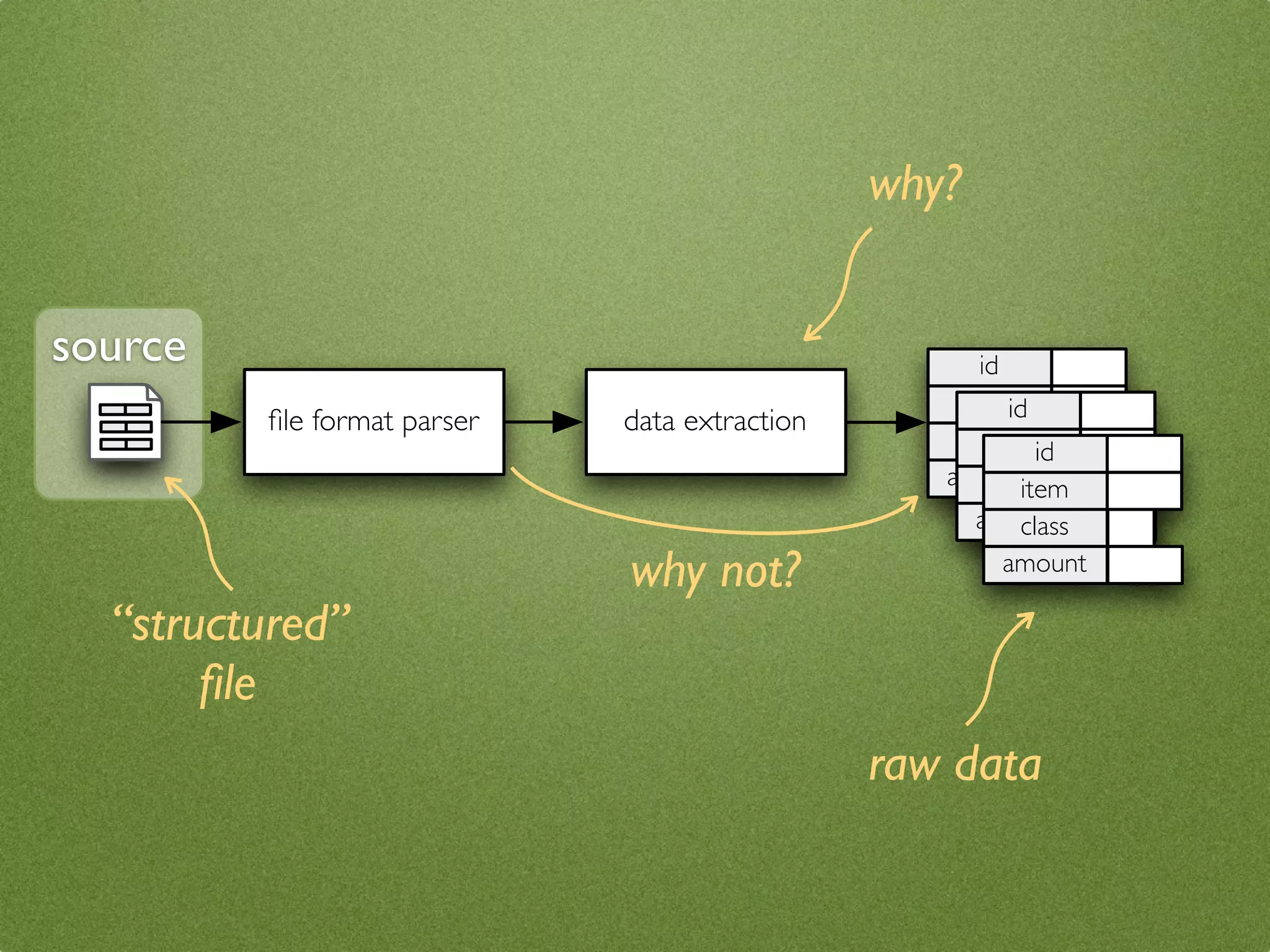

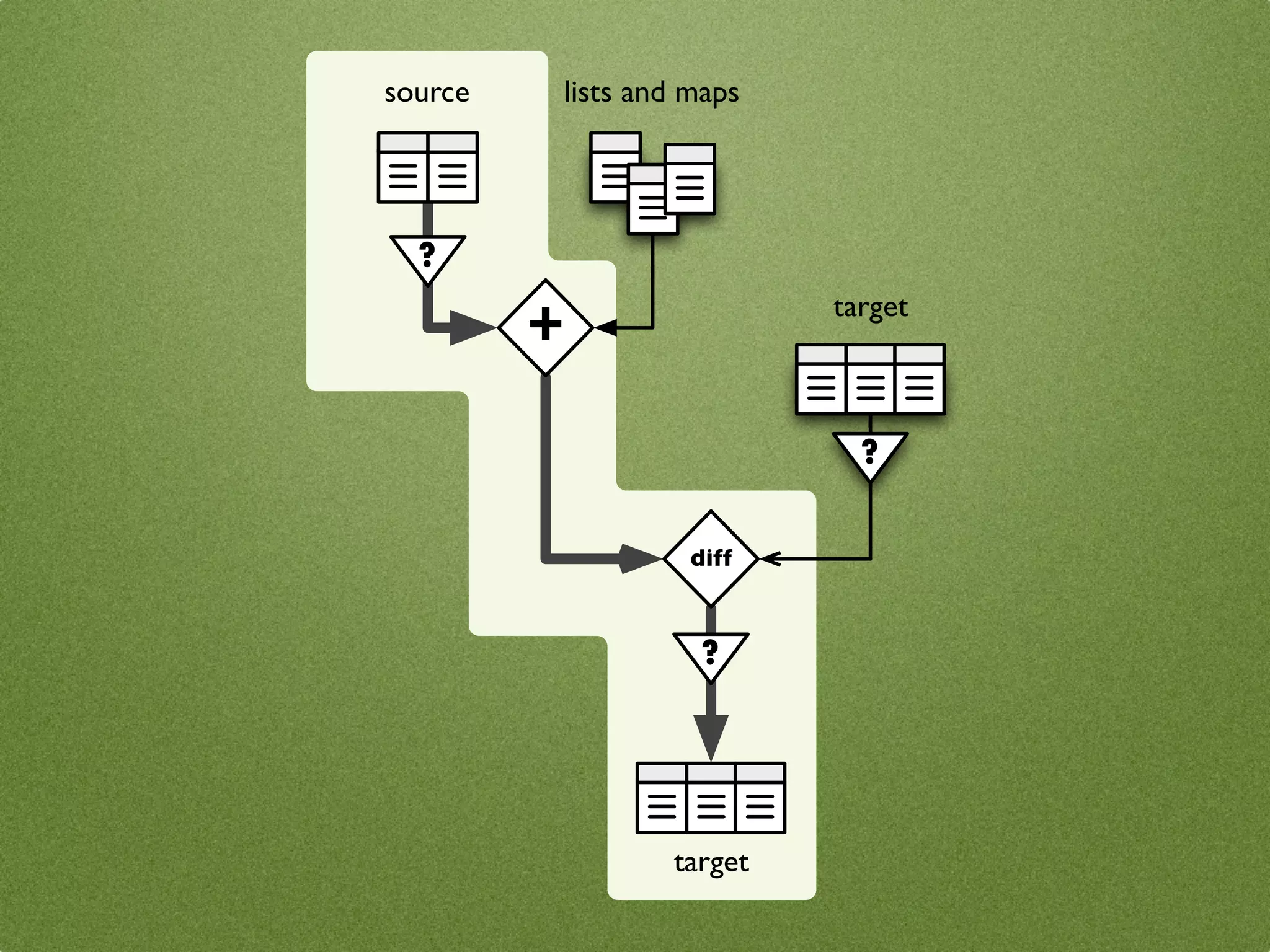

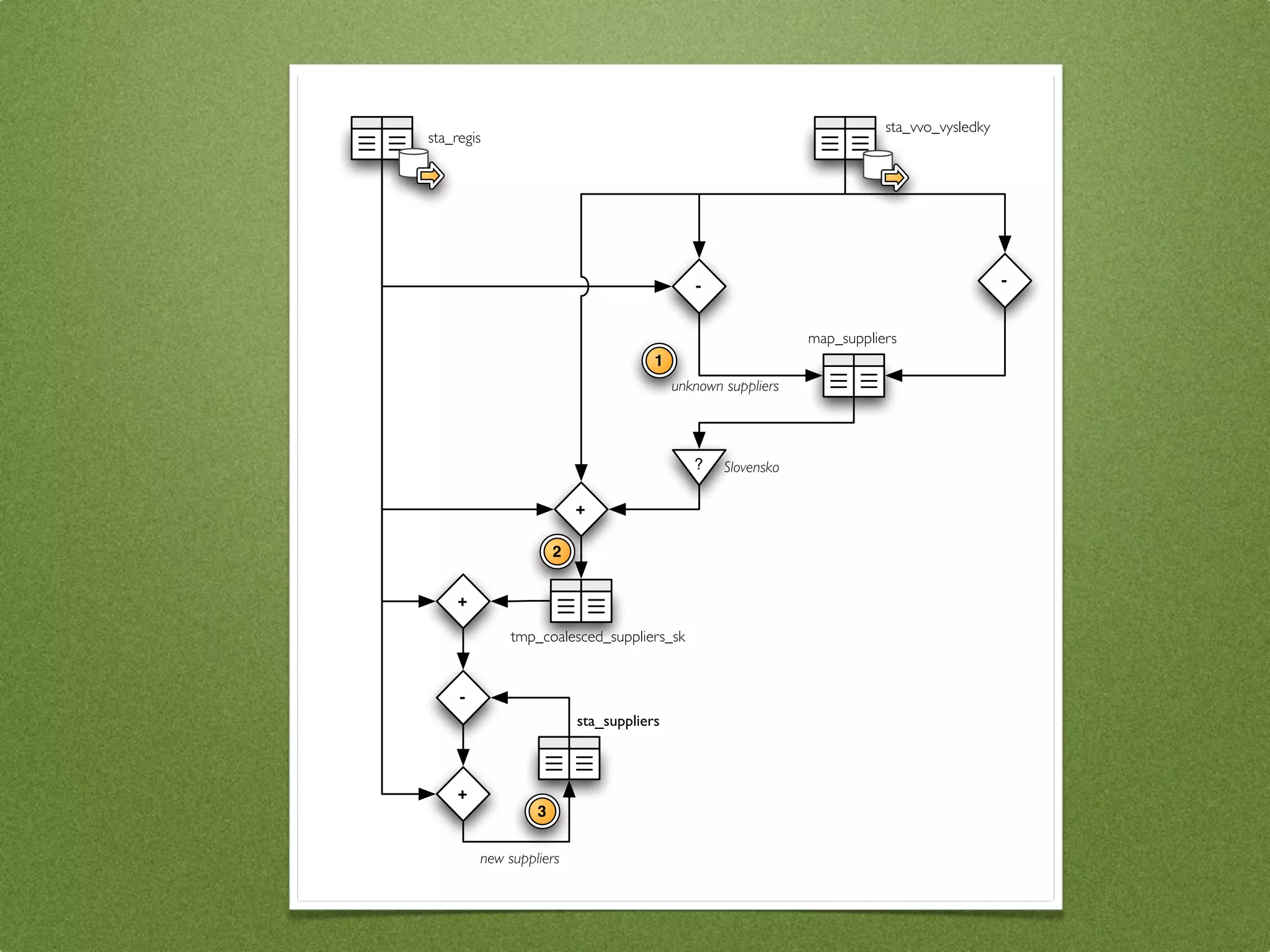

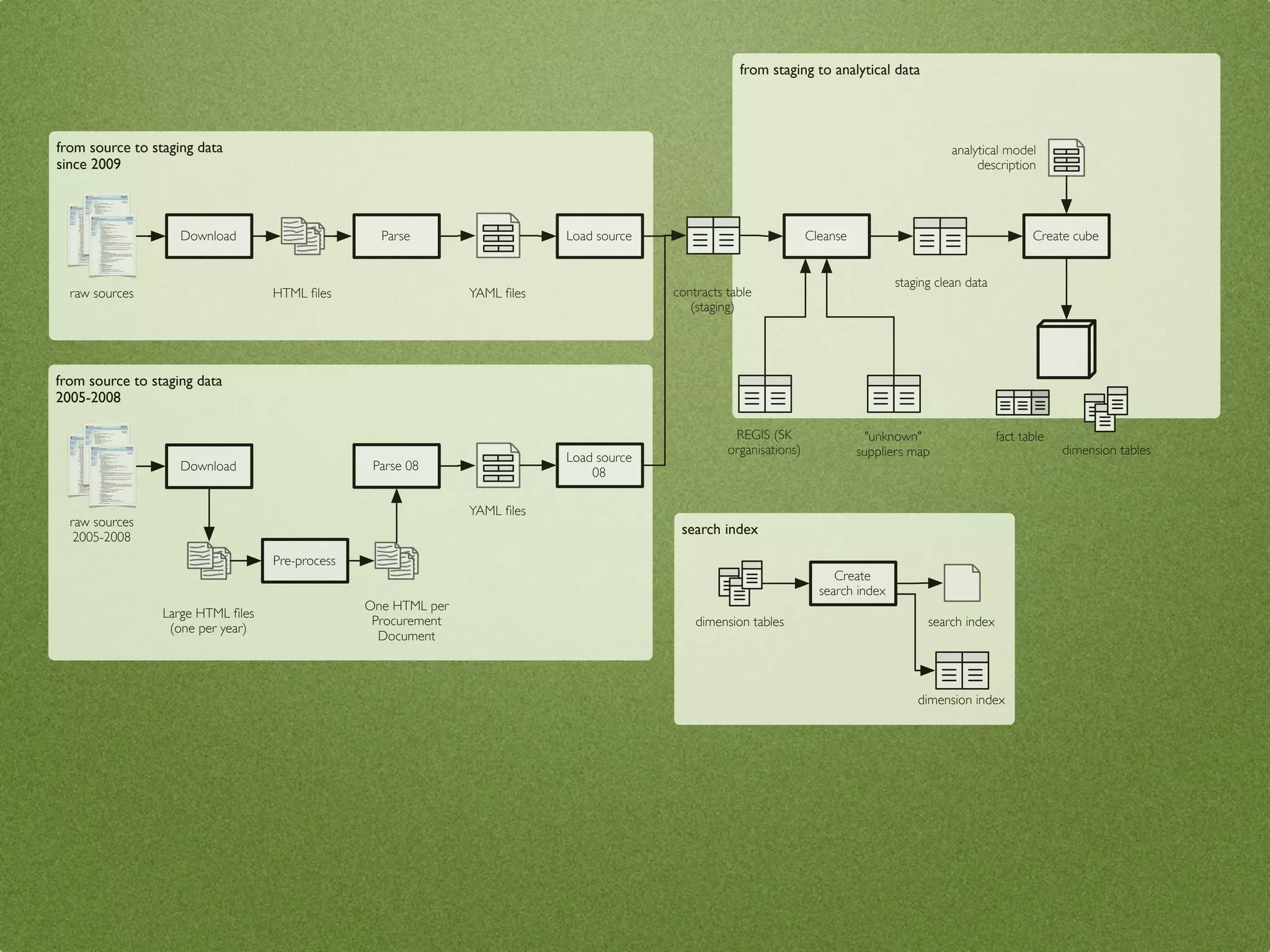

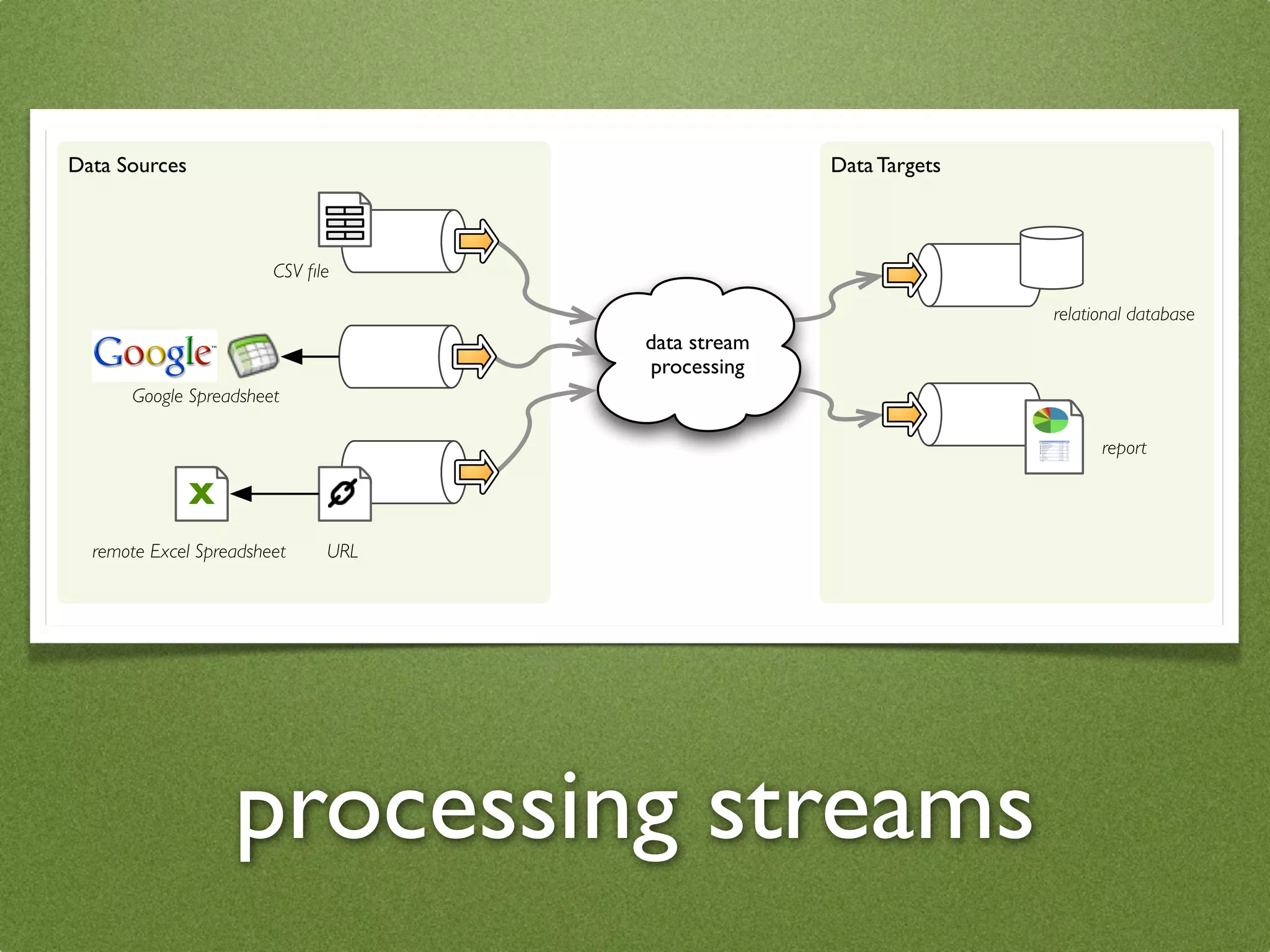

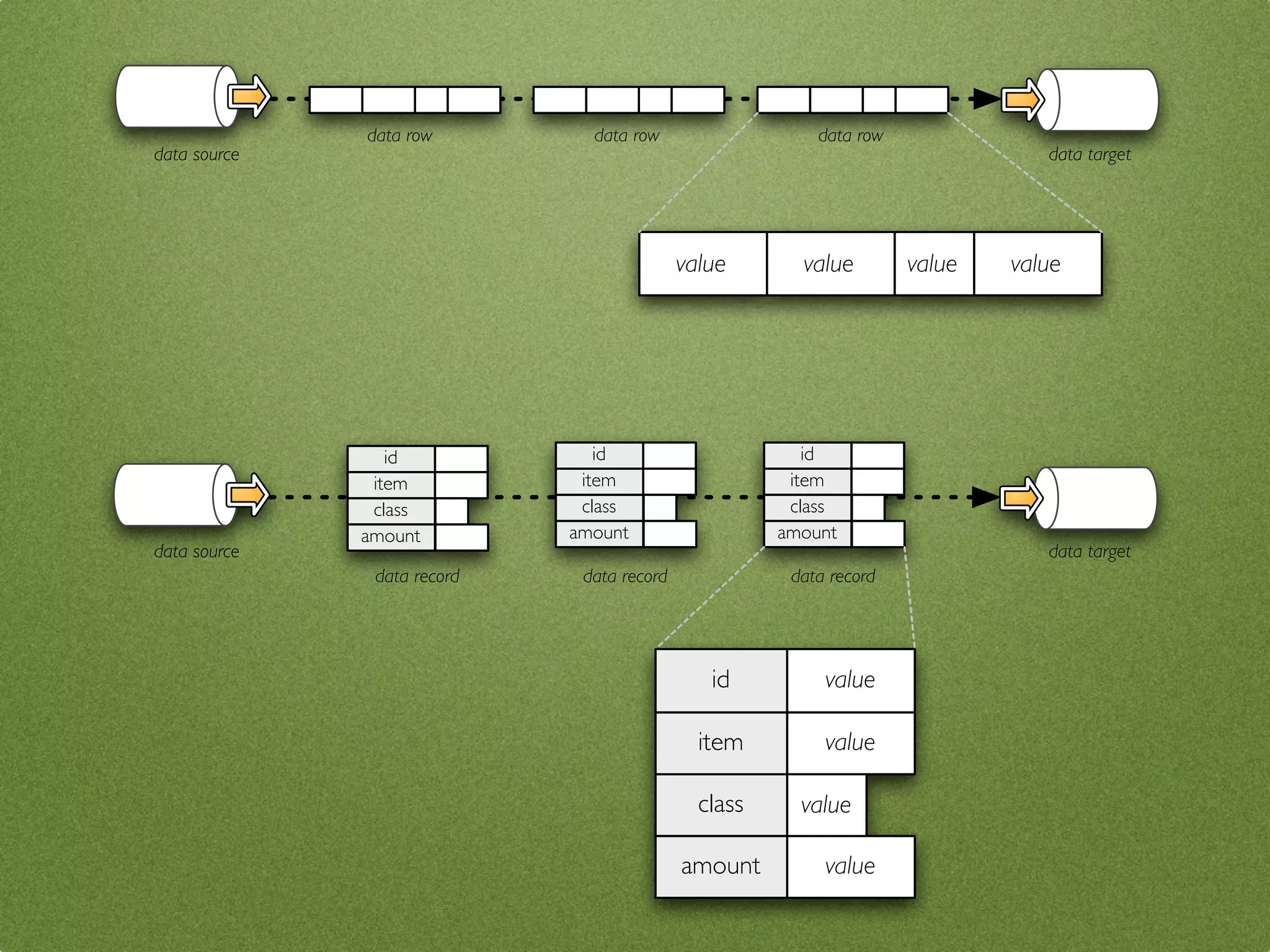

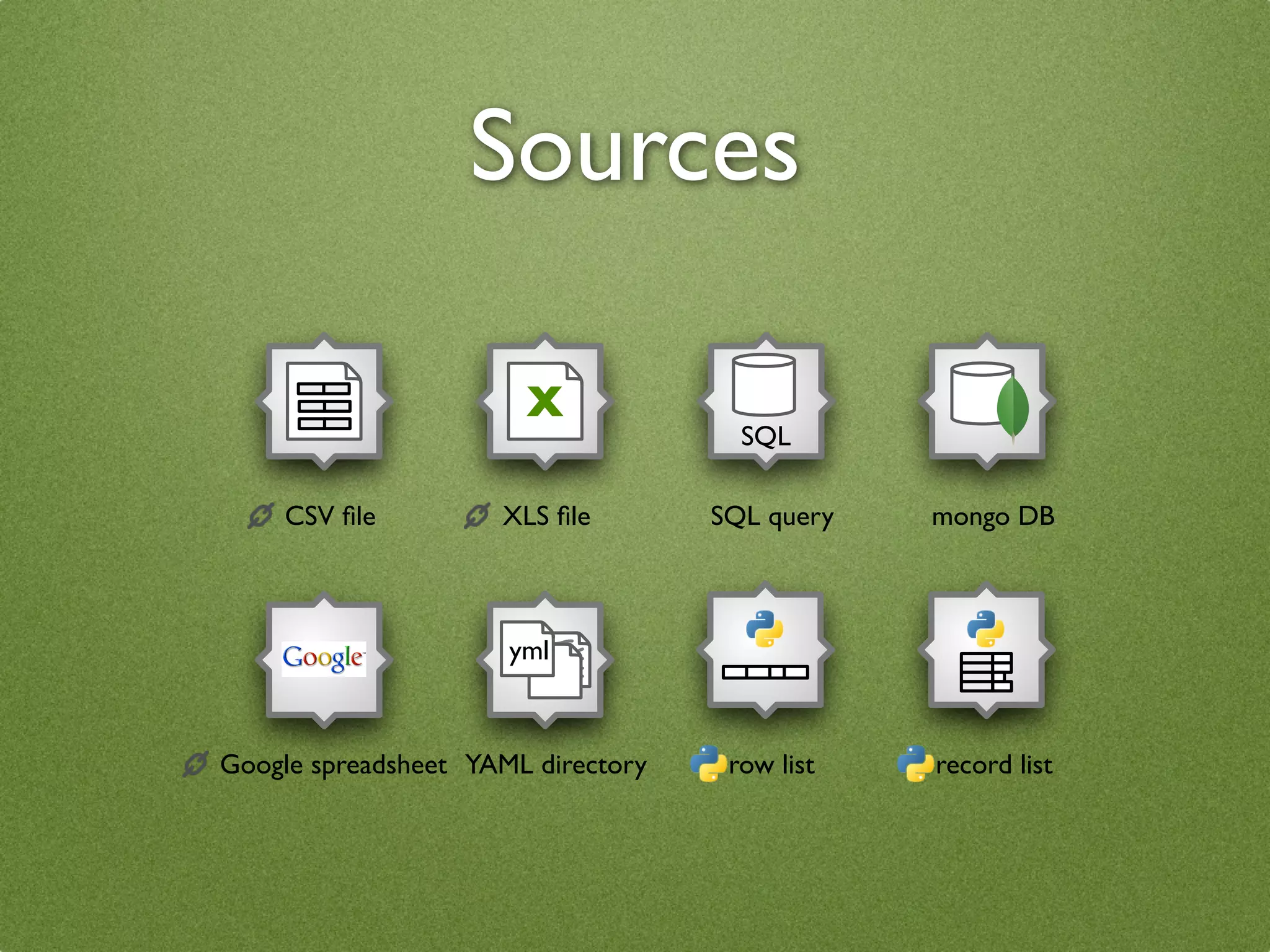

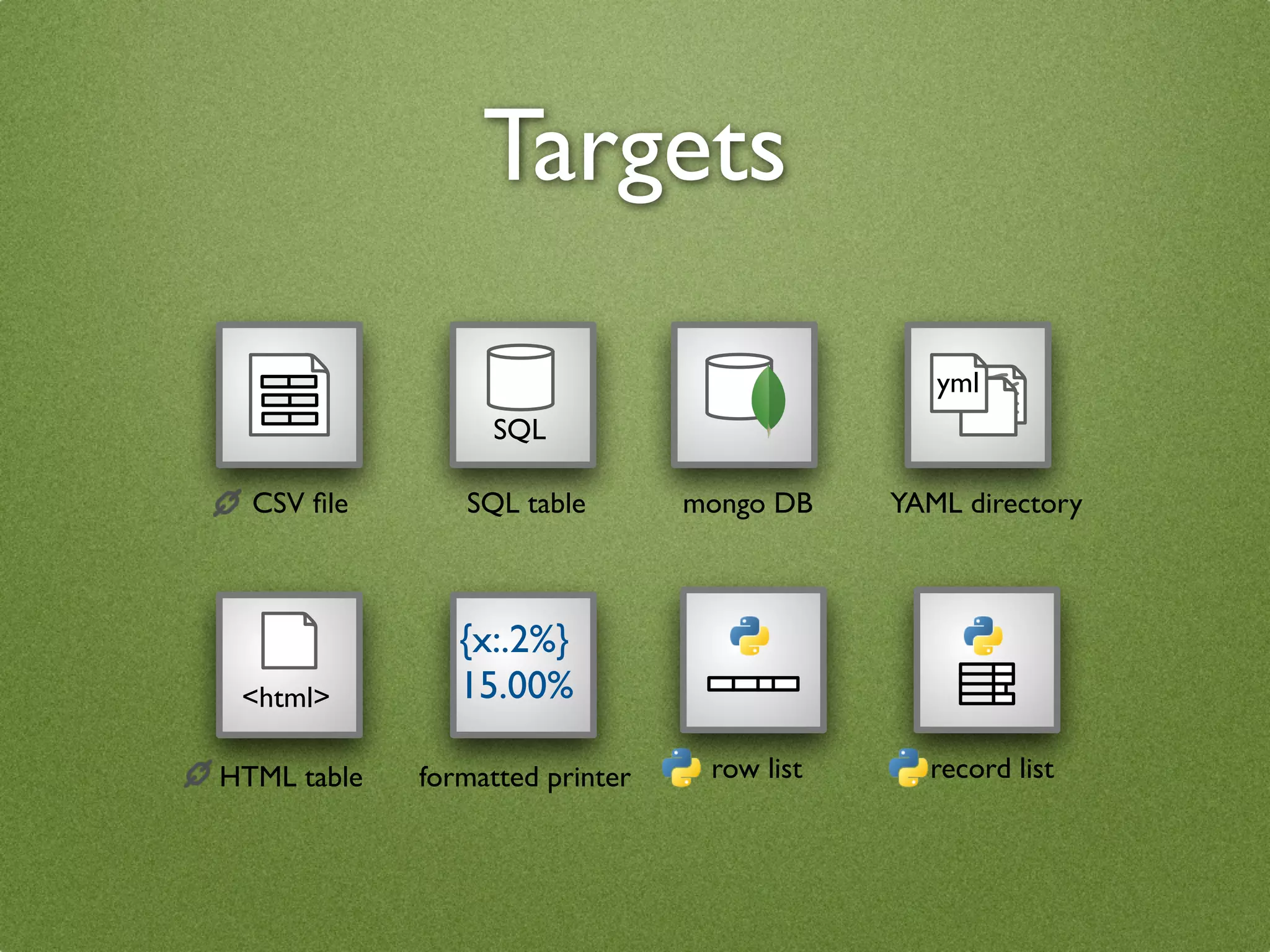

The document by Stefan Urbanek discusses data quality and defines its dimensions: completeness, accuracy, credibility, timeliness, consistency, and integrity. It stresses measuring data quality throughout the data lifecycle, including the extraction, transformation, and loading (ETL) steps. It also presents methods for data cleansing and the use of structured data formats to improve data processing and reporting.
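As a rough illustration of what measuring one of these dimensions can look like, the sketch below computes completeness as the share of non-empty values per field. The function name `completeness`, the sample records, and the rule that `None` and the empty string count as missing are all assumptions made for this example; they are not taken from the slides.

```python
def completeness(records, fields):
    """Return the share of non-empty values per field (0.0 to 1.0).

    Assumption for this sketch: None and "" both count as missing.
    """
    total = len(records)
    result = {}
    for field in fields:
        filled = sum(1 for r in records if r.get(field) not in (None, ""))
        result[field] = filled / total if total else 0.0
    return result


# Hypothetical sample data, invented for illustration only.
records = [
    {"name": "Alice", "city": "Prague", "amount": "100"},
    {"name": "Bob", "city": "", "amount": None},
    {"name": "", "city": "Brno", "amount": "250"},
    {"name": "Dana", "city": "Prague", "amount": None},
]

report = completeness(records, ["name", "city", "amount"])
for field, ratio in report.items():
    print(f"{field}: {ratio:.2%}")
```

The same per-field ratio could be recomputed after each ETL step to see where values are lost or repaired.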

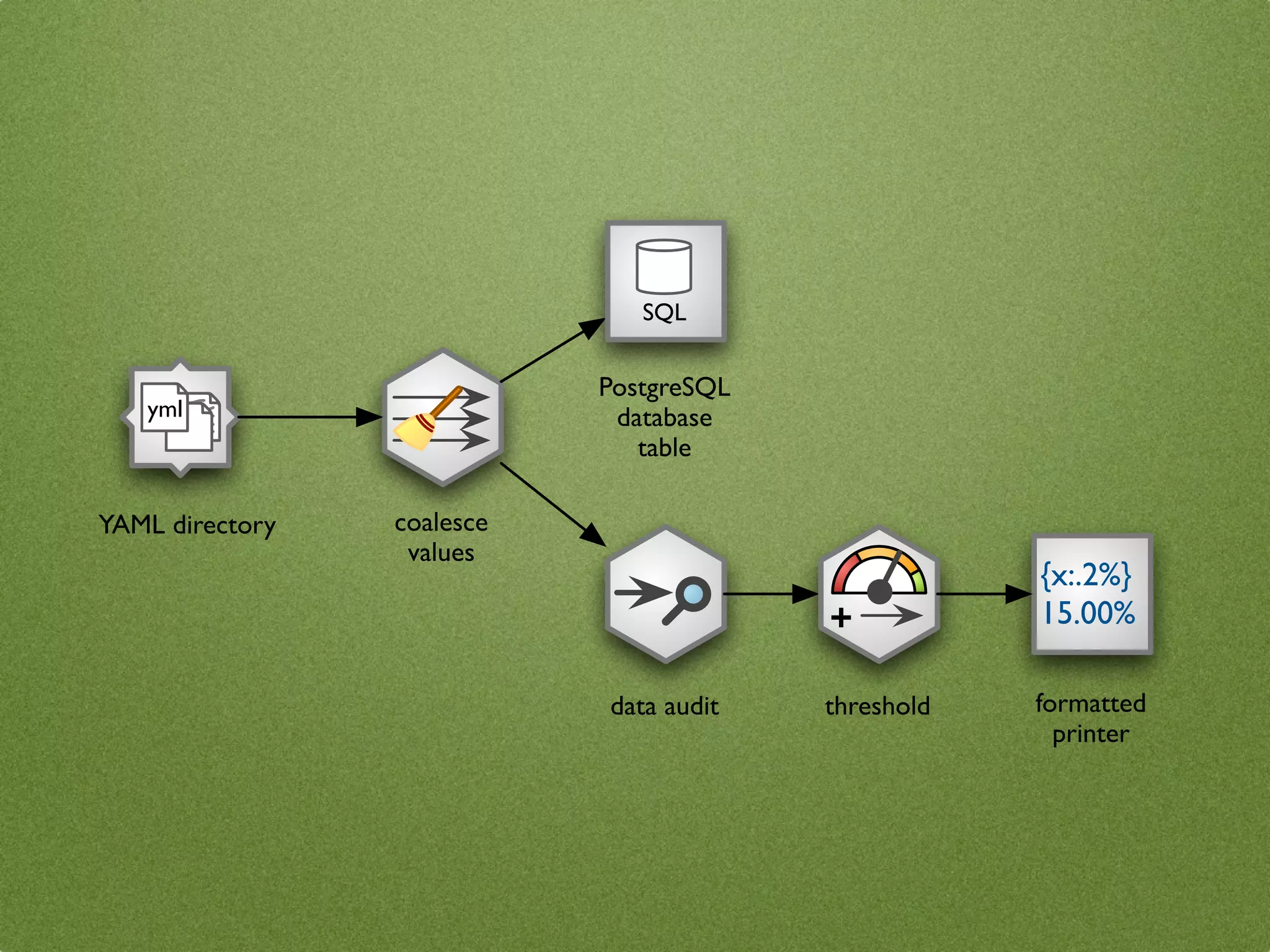

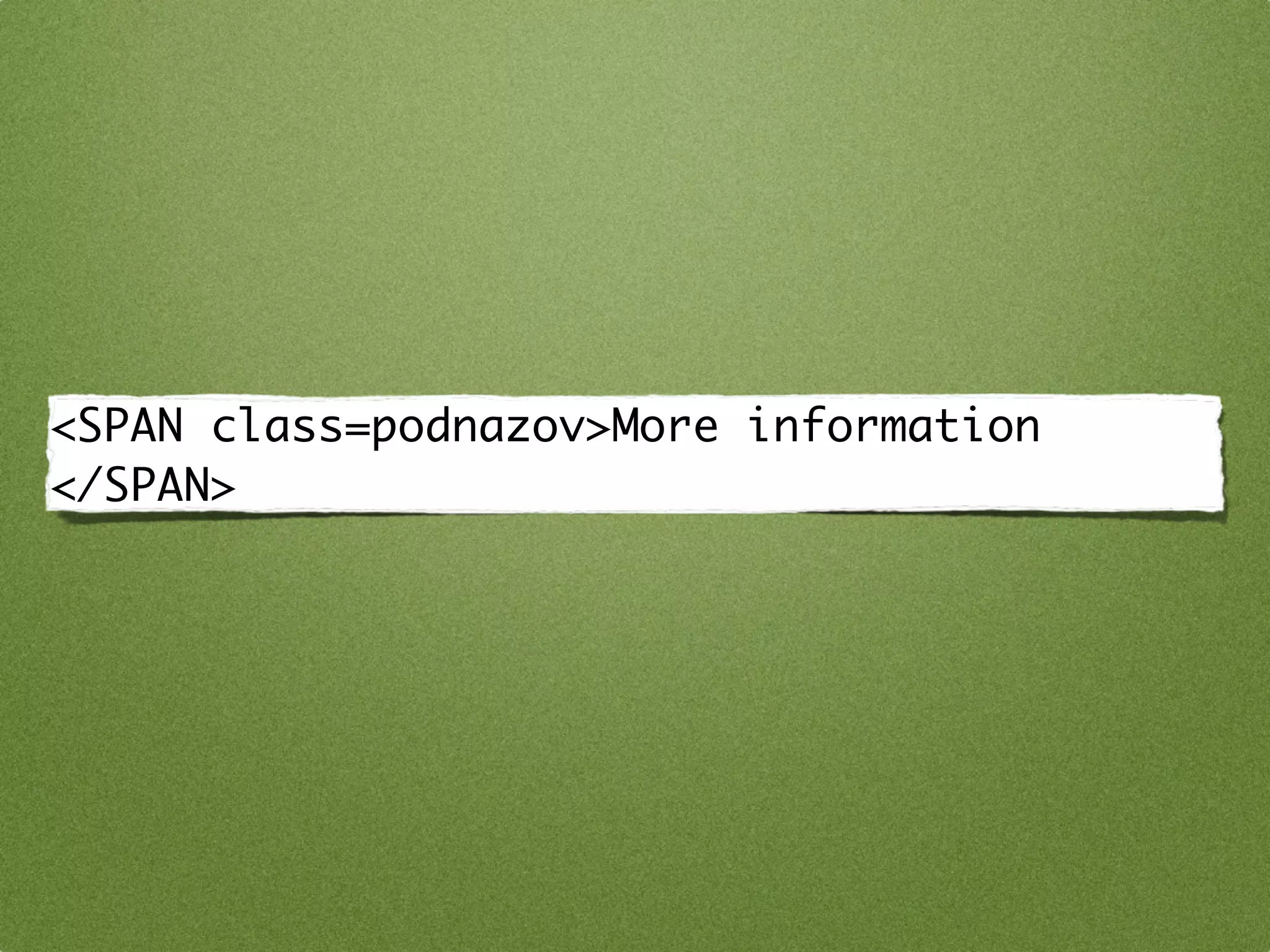

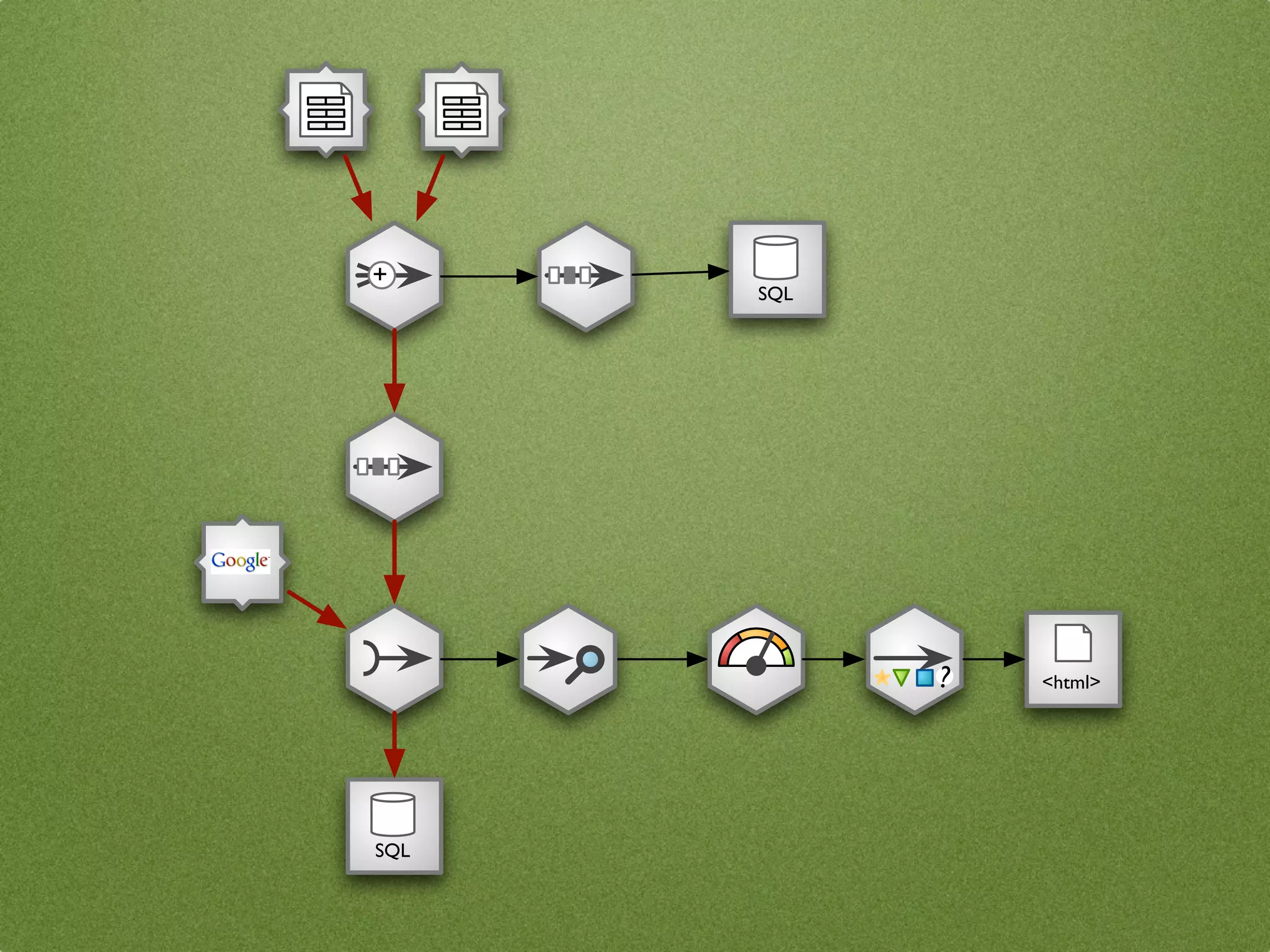

![Stream diagram: source, clean, SQL output, audit, threshold, and a printer formatting values as {x:.2%}, e.g. 15.00%](https://image.slidesharecdn.com/datacleansing-110403132829-phpapp02/75/Data-Cleansing-introduction-for-BigClean-Prague-2011-64-2048.jpg)

    nodes = {
        "source": CSVSourceNode(...),
        "clean": CoalesceValueToTypeNode(),
        "output": DatabaseTableTargetNode(...),
        "audit": AuditNode(...),
        "threshold": ValueThresholdNode(),
        "print": FormattedPrinterNode()
    }

    connections = [
        ("source", "clean"),
        ("clean", "output"),
        ("clean", "audit"),
        ("audit", "threshold"),
        ("threshold", "print")
    ]

    ... # configure nodes here

    stream = Stream(nodes, connections)
    stream.initialize()
    stream.run()
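To make the idea behind the stream on this slide more concrete, here is a minimal plain-Python sketch of the clean, audit, threshold, and print path. It does not use the library shown on the slide: the functions `source`, `coalesce_to_float`, `audit`, and `threshold`, the sample rows, and the 25% limit are all hypothetical stand-ins chosen for this example, and the real node classes may behave differently.

```python
def source(rows):
    """Yield raw records (stand-in for a CSV source node)."""
    yield from rows


def coalesce_to_float(rows, field):
    """Convert a field to float; empty or unparseable values become None."""
    for row in rows:
        cleaned = dict(row)
        try:
            cleaned[field] = float(row[field])
        except (TypeError, ValueError, KeyError):
            cleaned[field] = None
        yield cleaned


def audit(rows, field):
    """Return the ratio of missing (None) values for a field."""
    rows = list(rows)
    if not rows:
        return 0.0
    nulls = sum(1 for row in rows if row.get(field) is None)
    return nulls / len(rows)


def threshold(value, limit):
    """Classify a measured ratio against an acceptable limit."""
    return "ok" if value <= limit else "over"


# Hypothetical input, invented for illustration only.
raw = [{"amount": "10.5"}, {"amount": ""}, {"amount": "n/a"}, {"amount": "3"}]

cleaned = list(coalesce_to_float(source(raw), "amount"))
null_ratio = audit(cleaned, "amount")
status = threshold(null_ratio, 0.25)
print(f"amount nulls: {null_ratio:.2%} ({status})")
```

In this sketch the cleaned rows are materialized once so they could feed both a database writer and the audit step, mirroring the two connections that leave the "clean" node.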