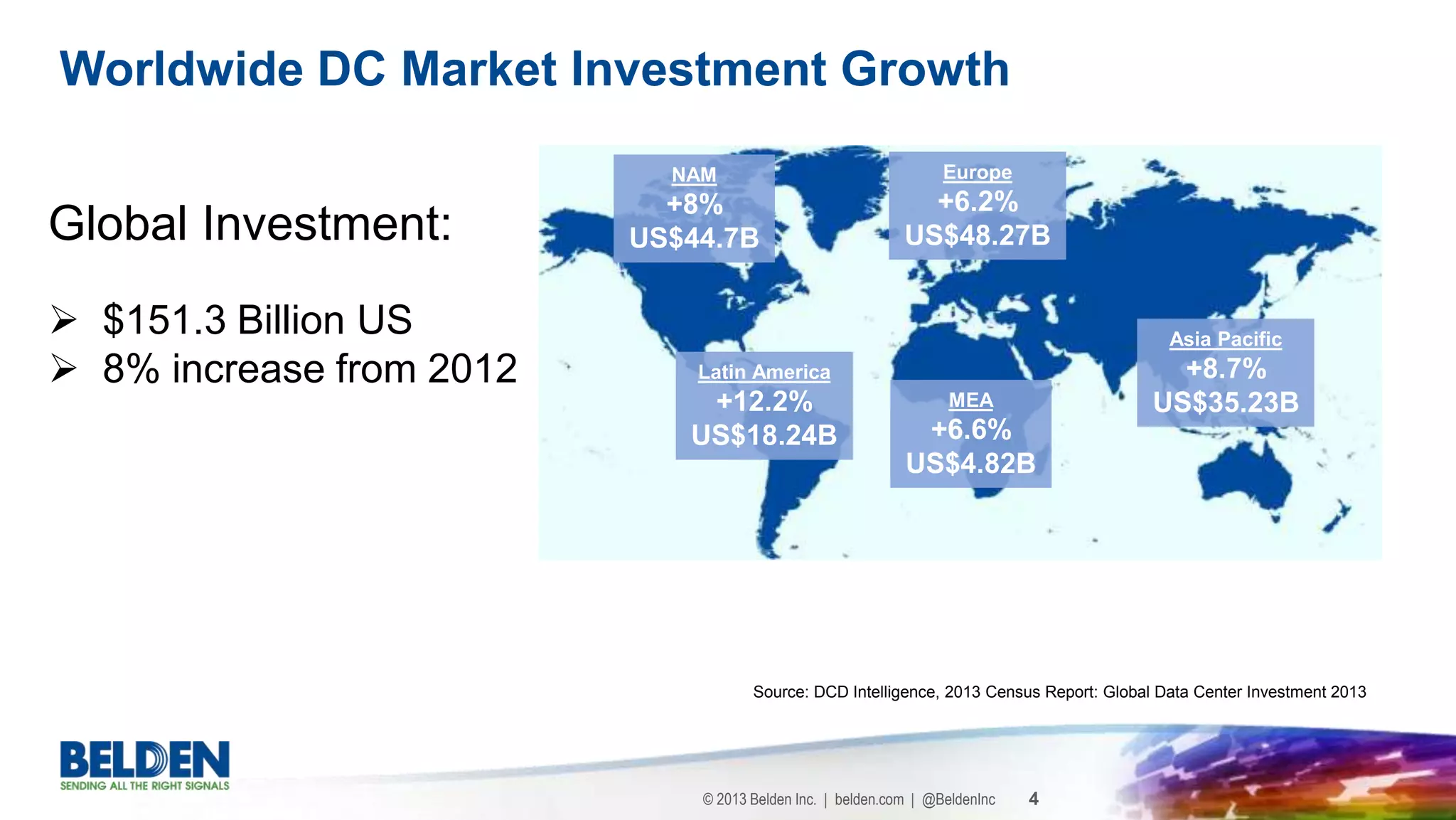

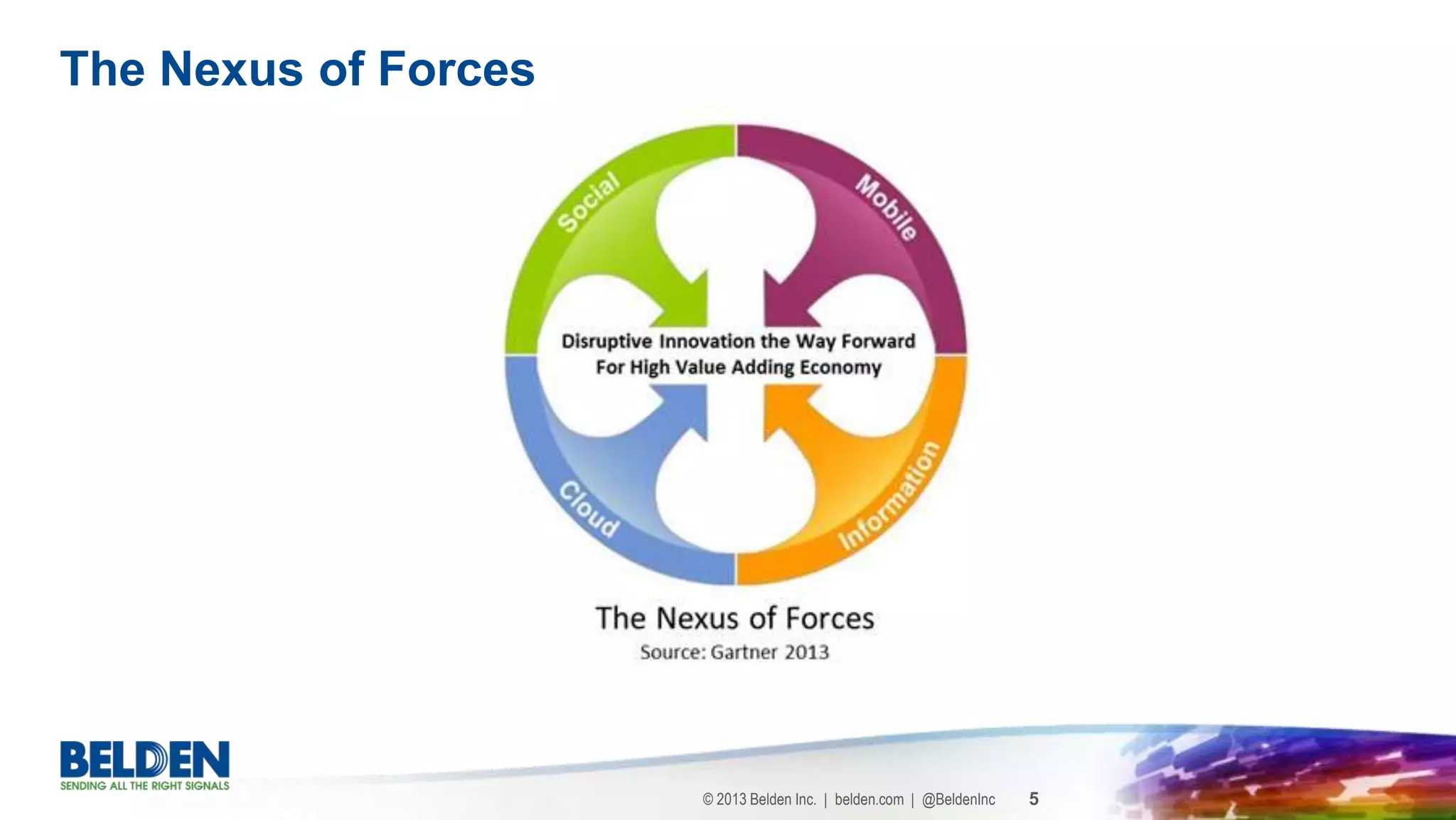

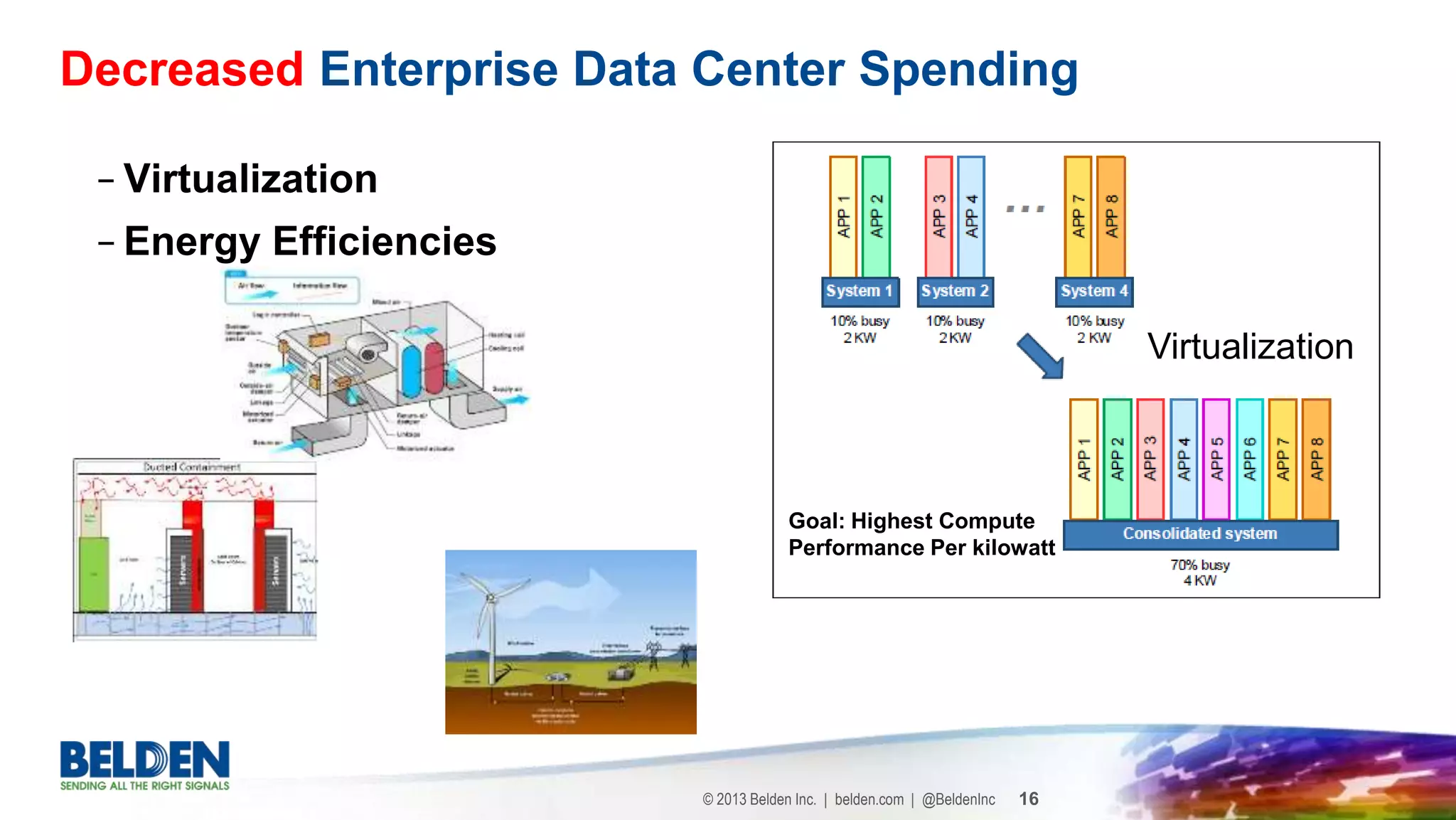

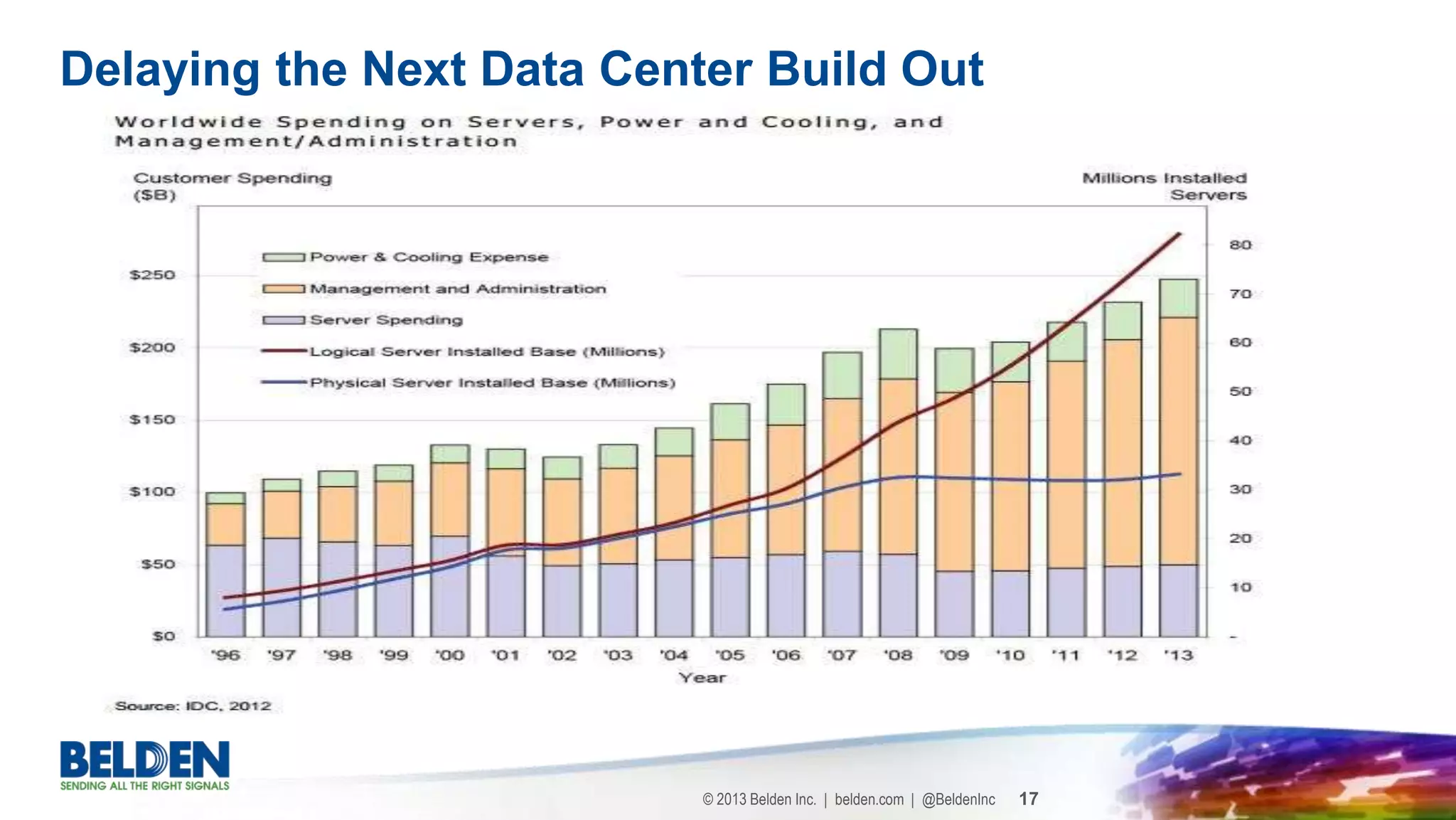

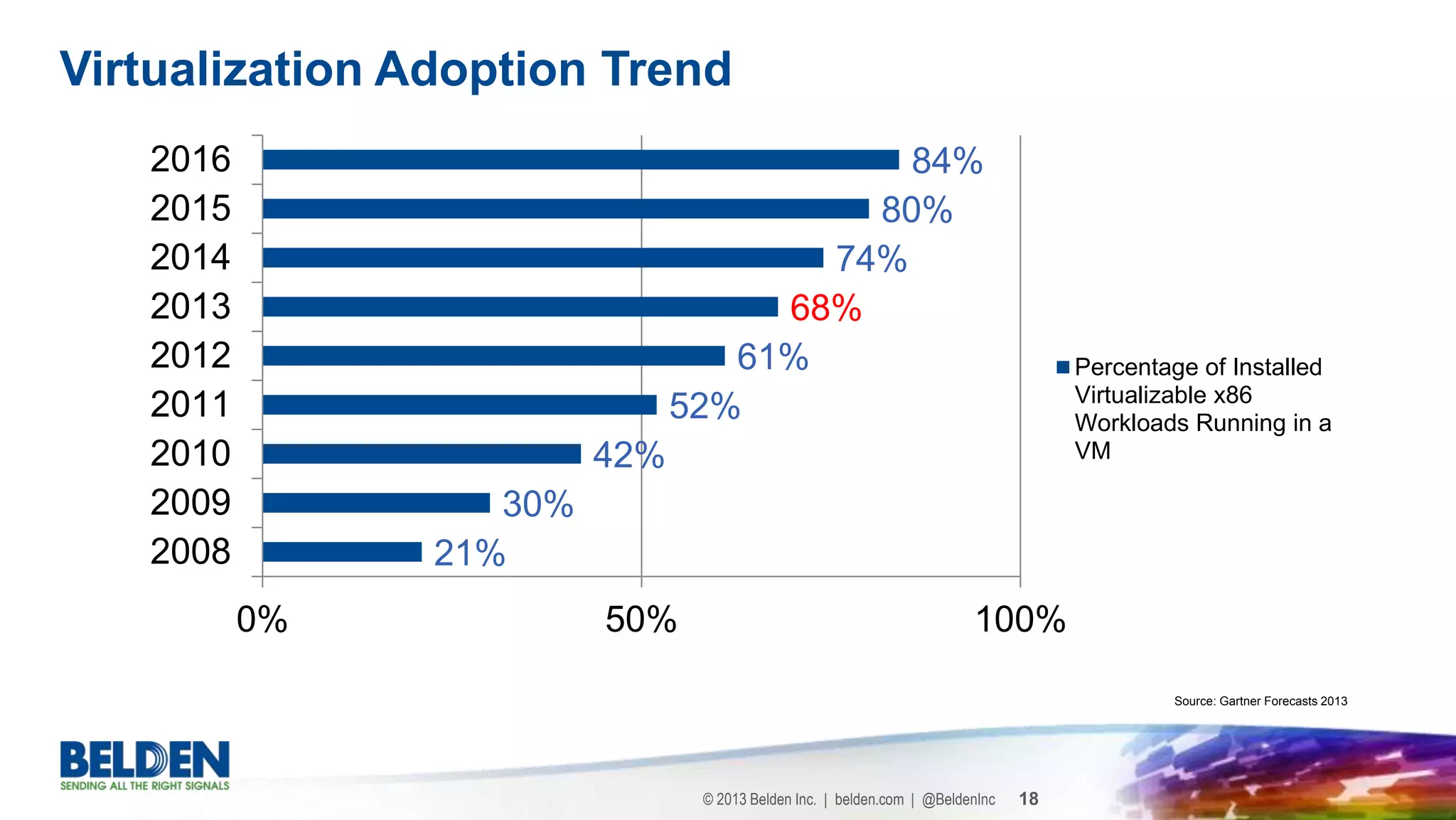

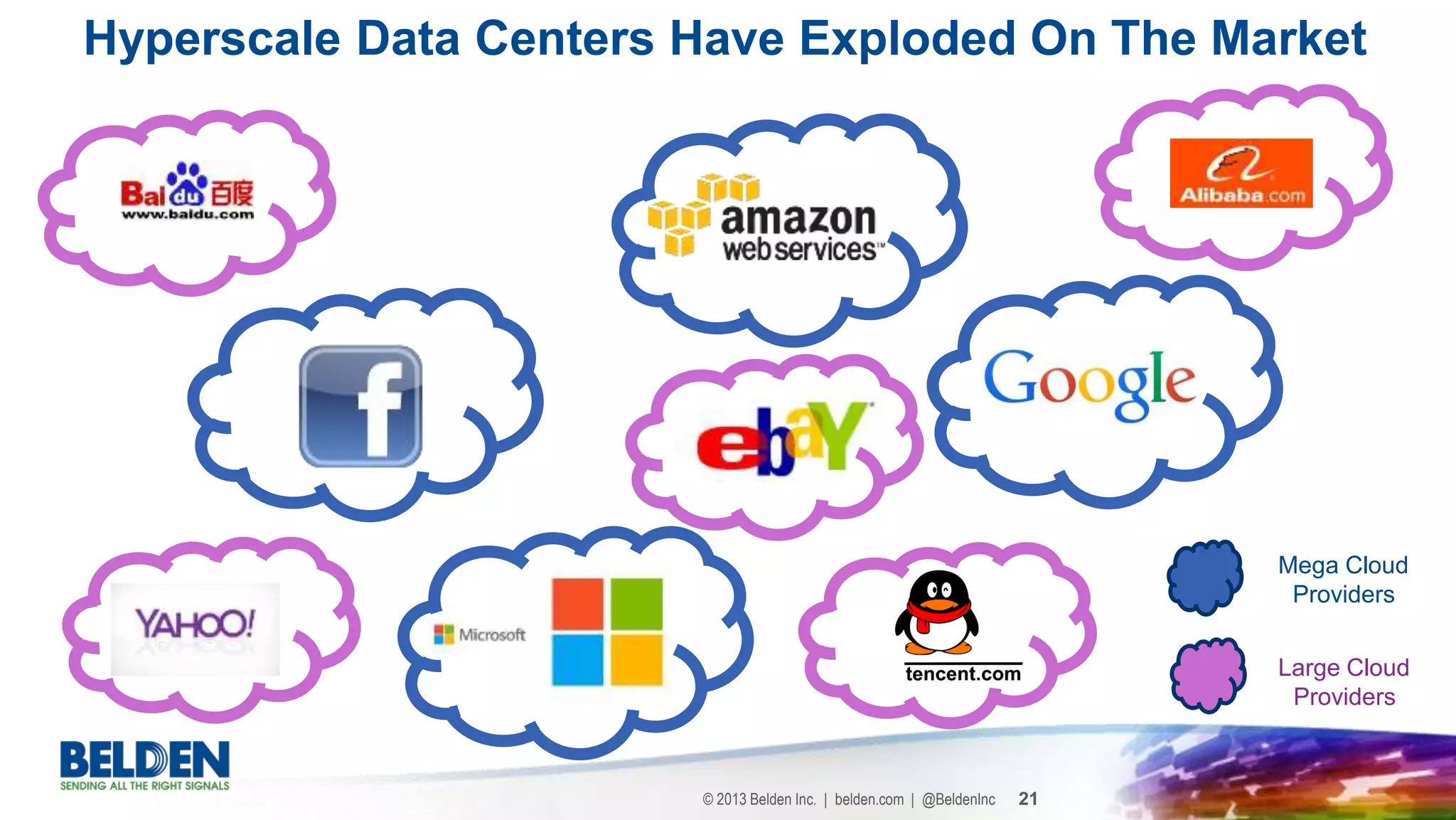

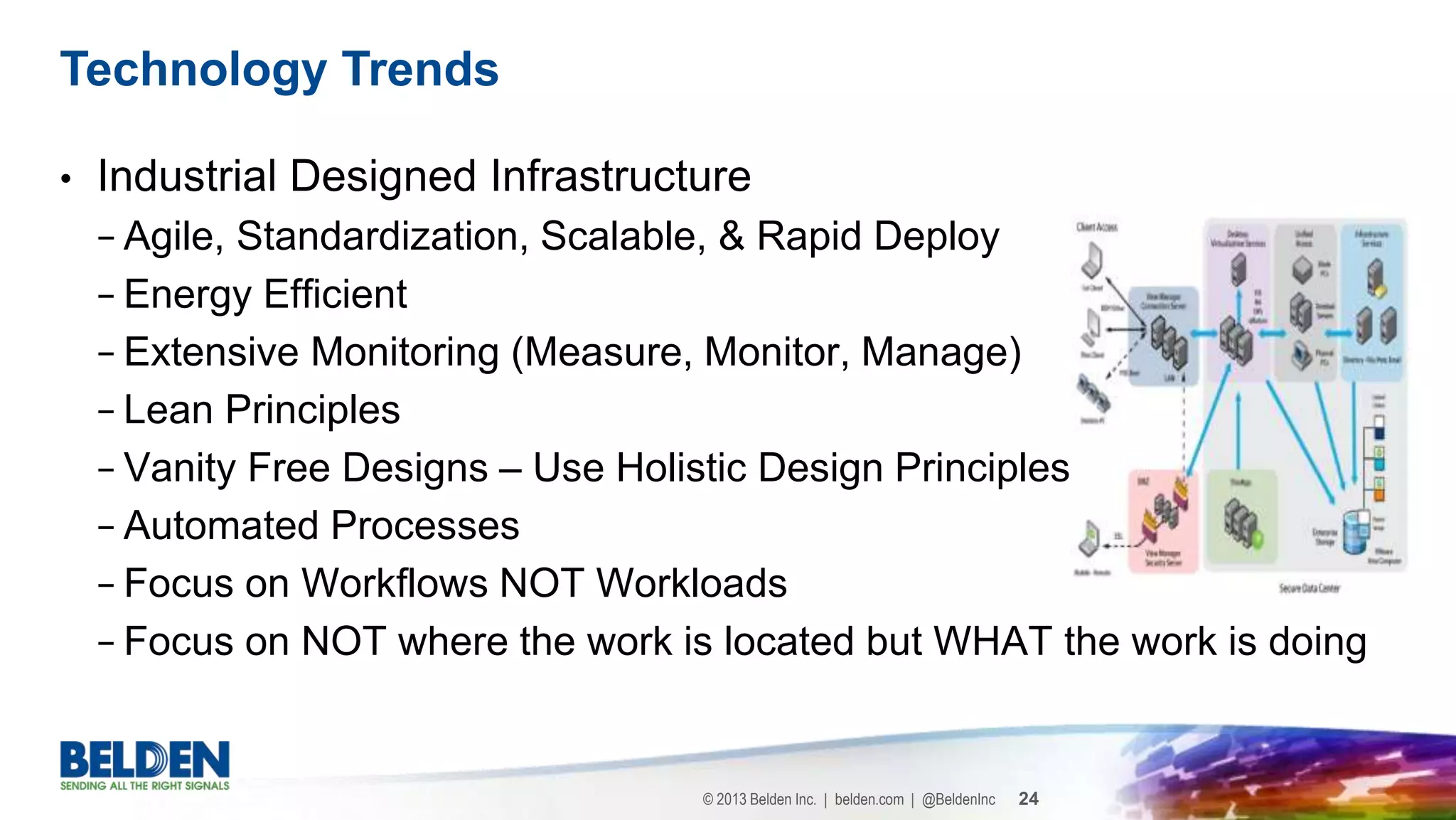

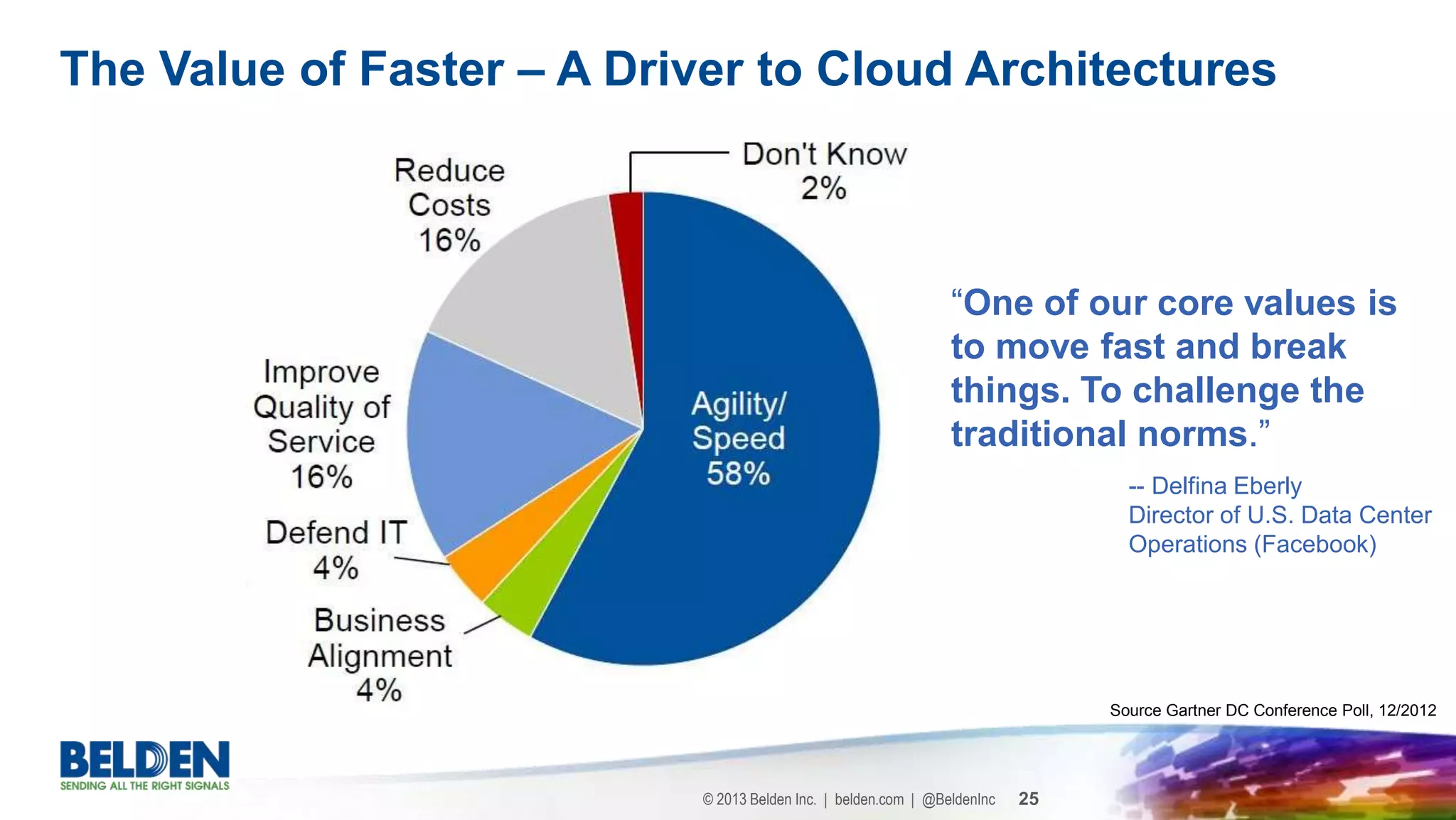

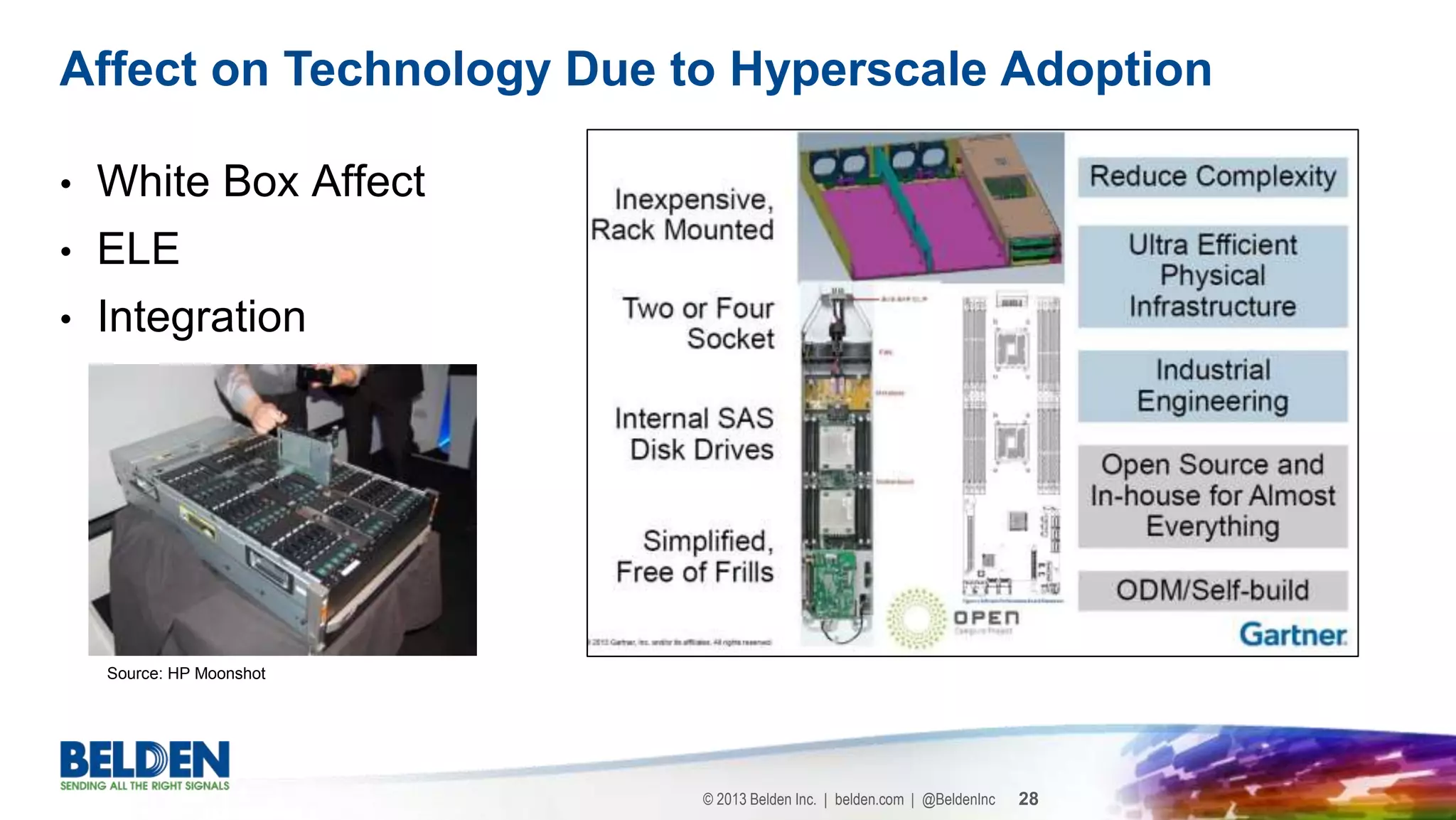

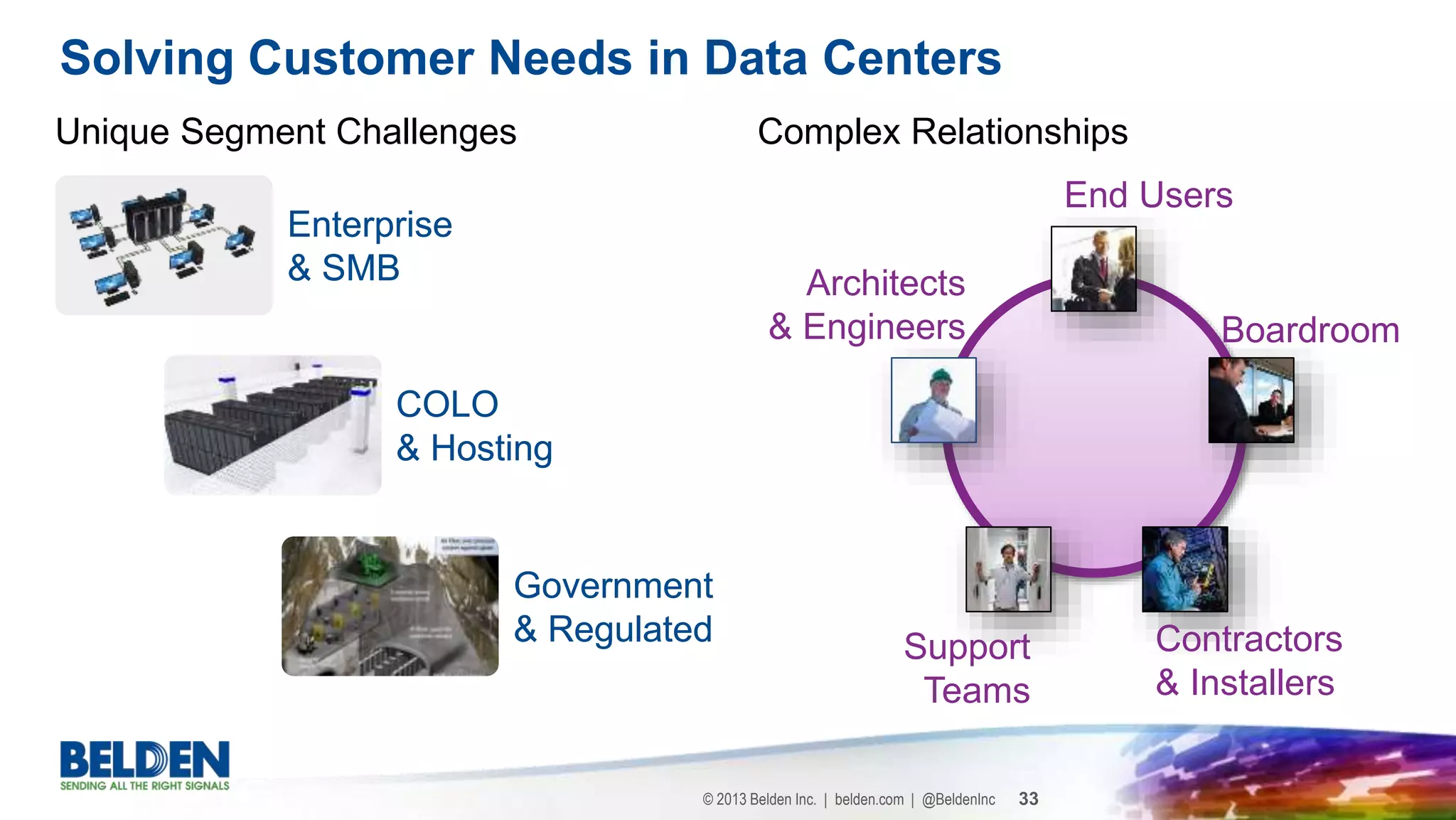

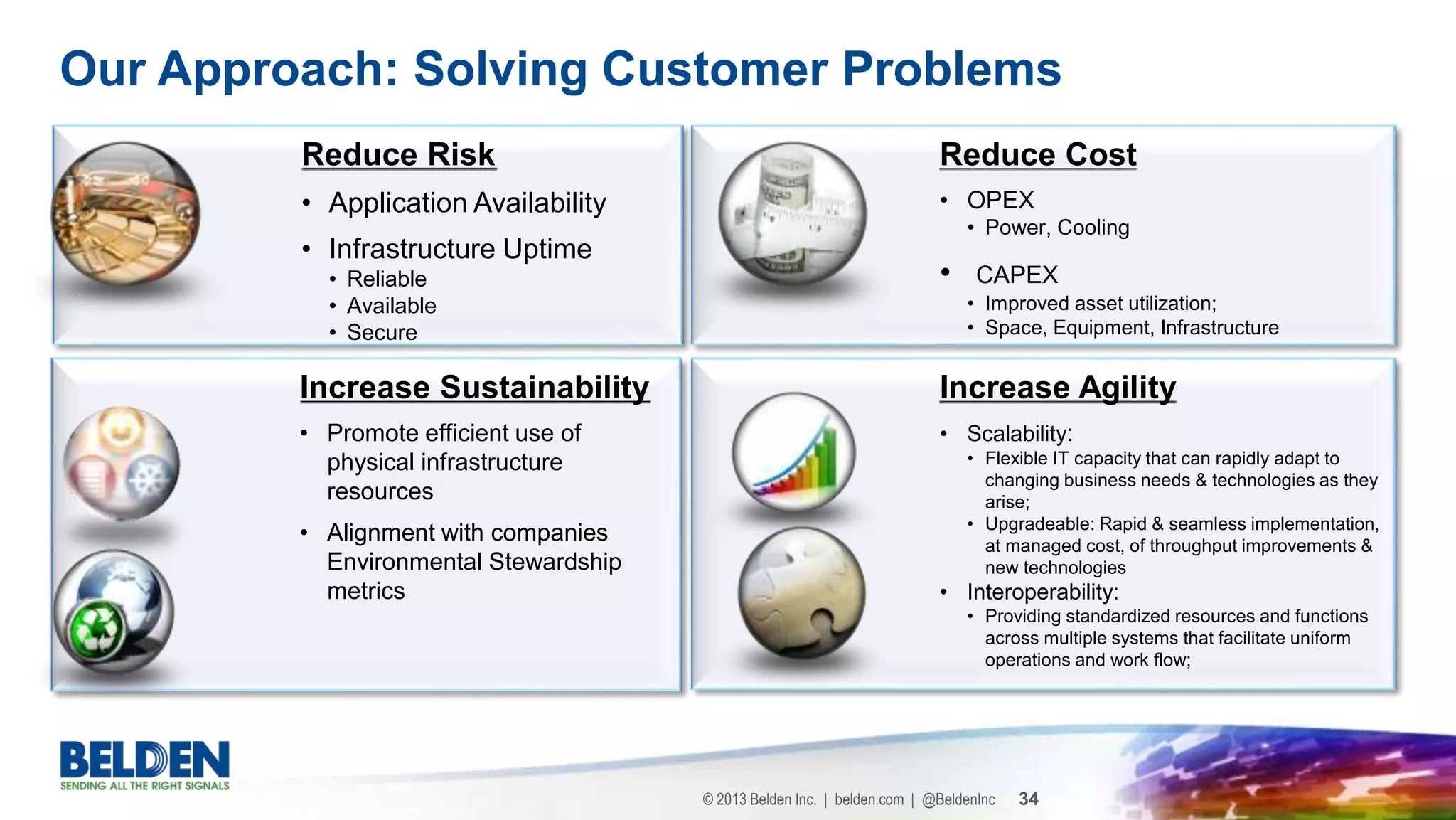

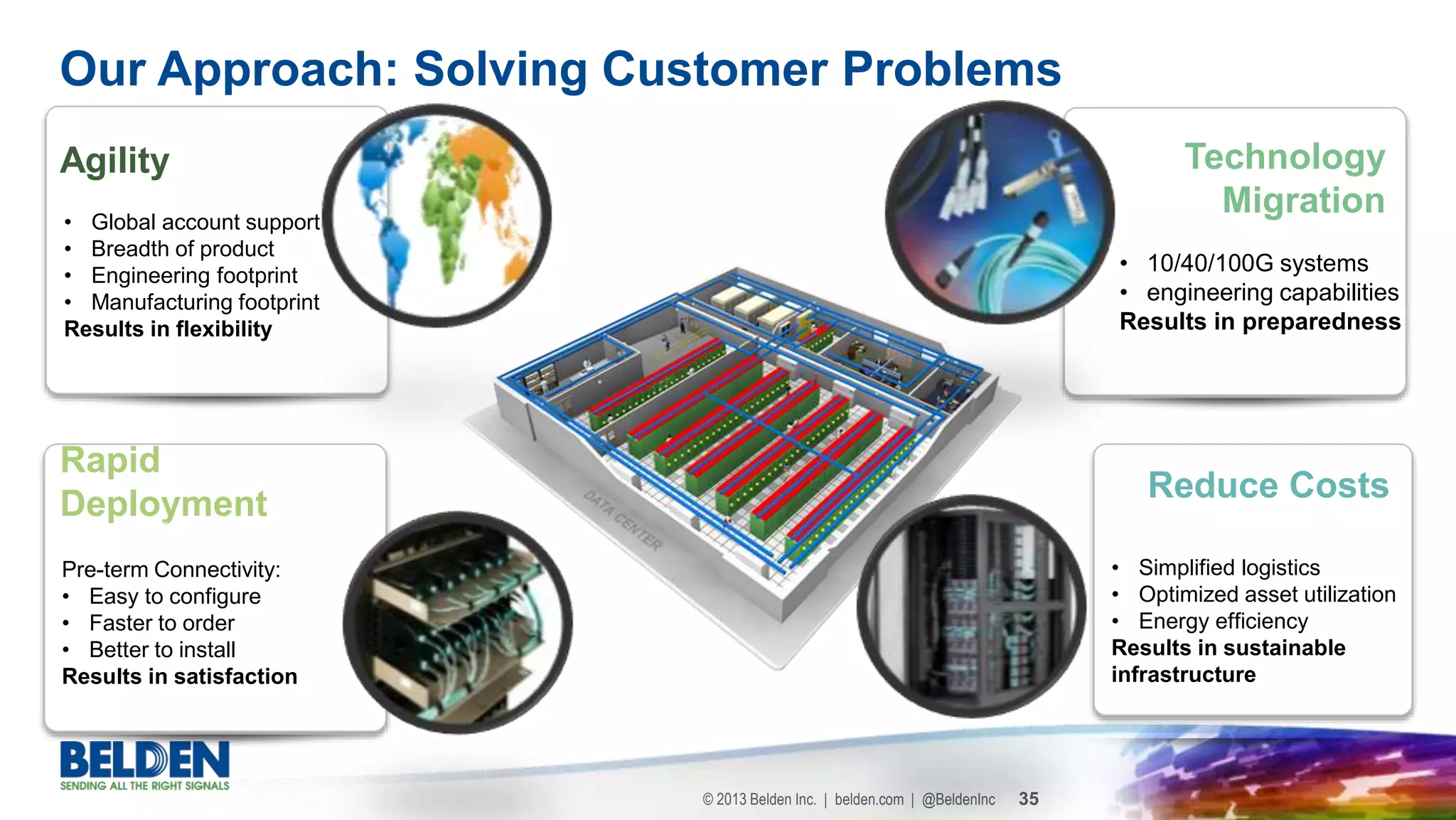

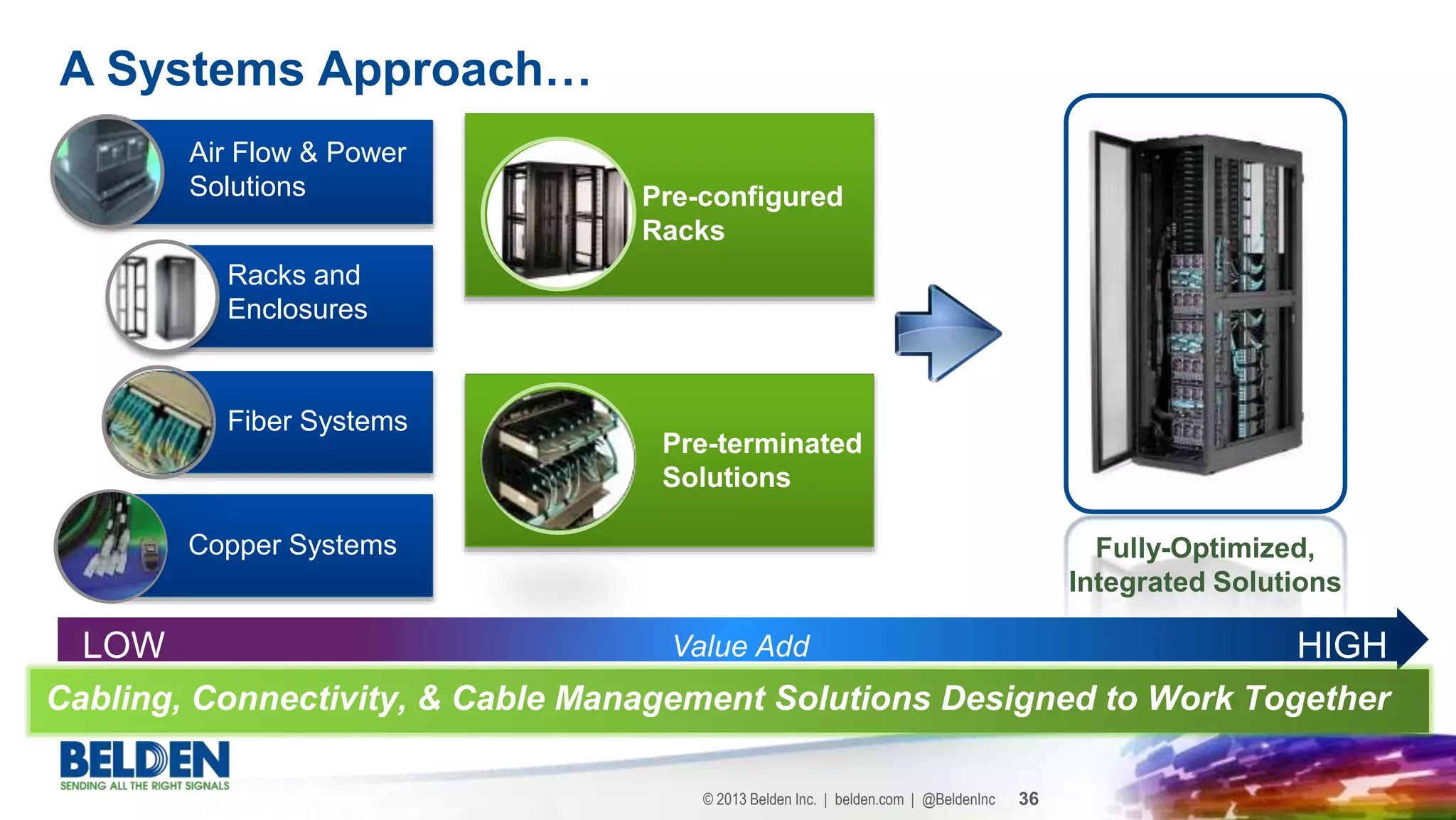

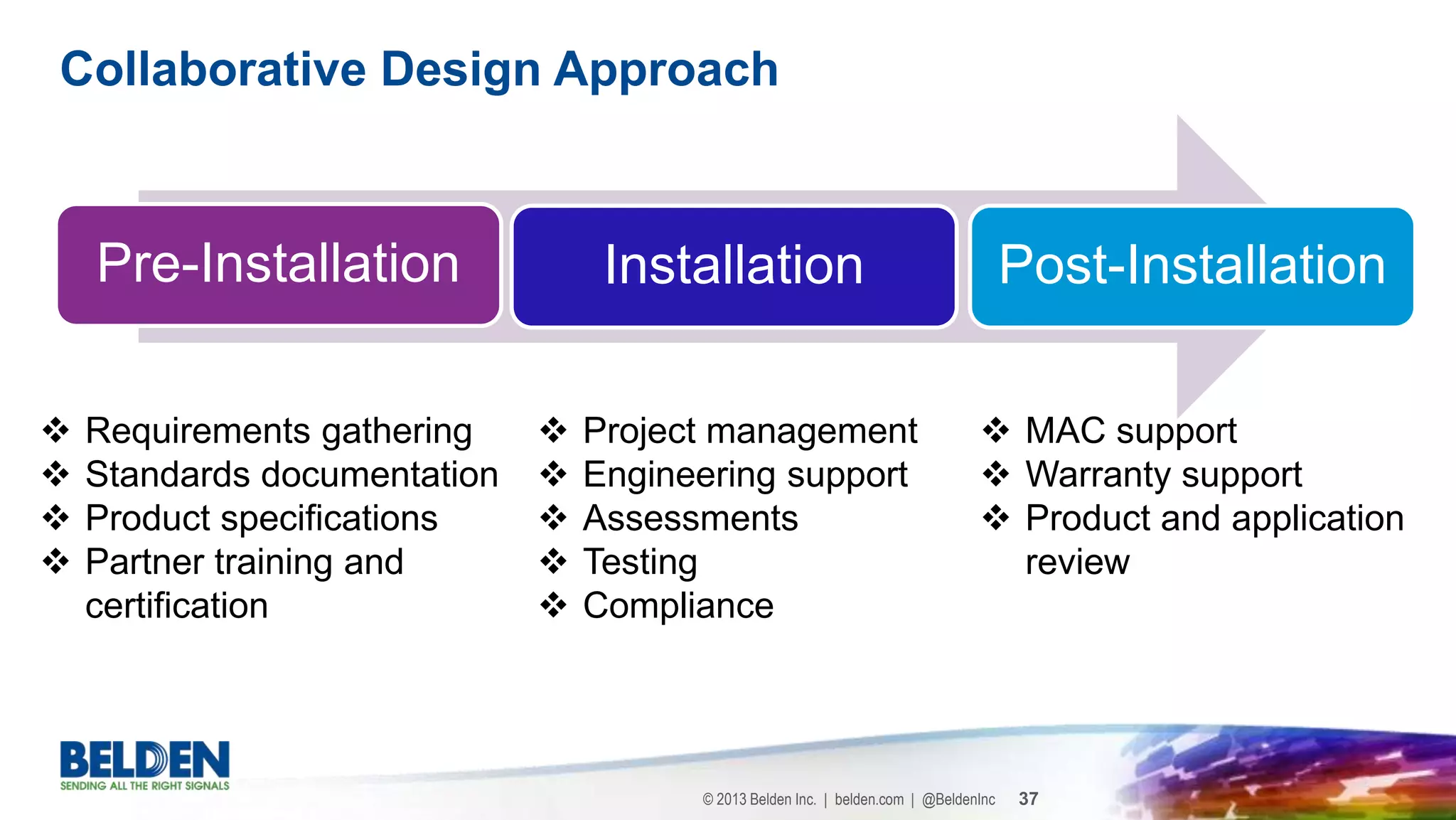

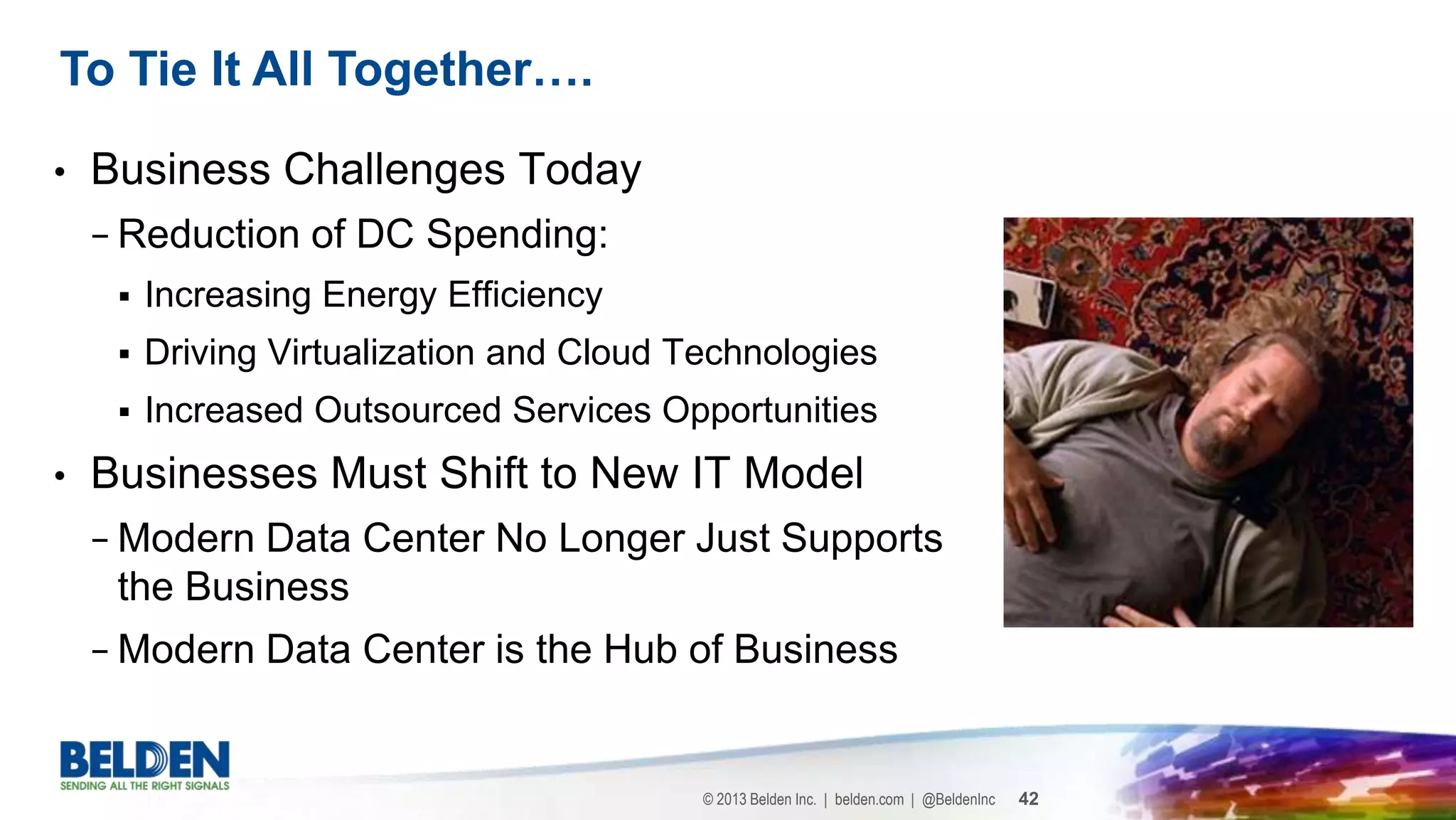

The document discusses the evolution of data centers into 'hyper' data centers that offer access to information anytime, anywhere, and on any device. It highlights the industry trends, business models, and technological innovations that drive reliance on modern data centers, emphasizing their role as central hubs for organizations. It also addresses how virtualization, energy efficiency, and cloud technologies reduce spending and shape the future of data center design and operations.