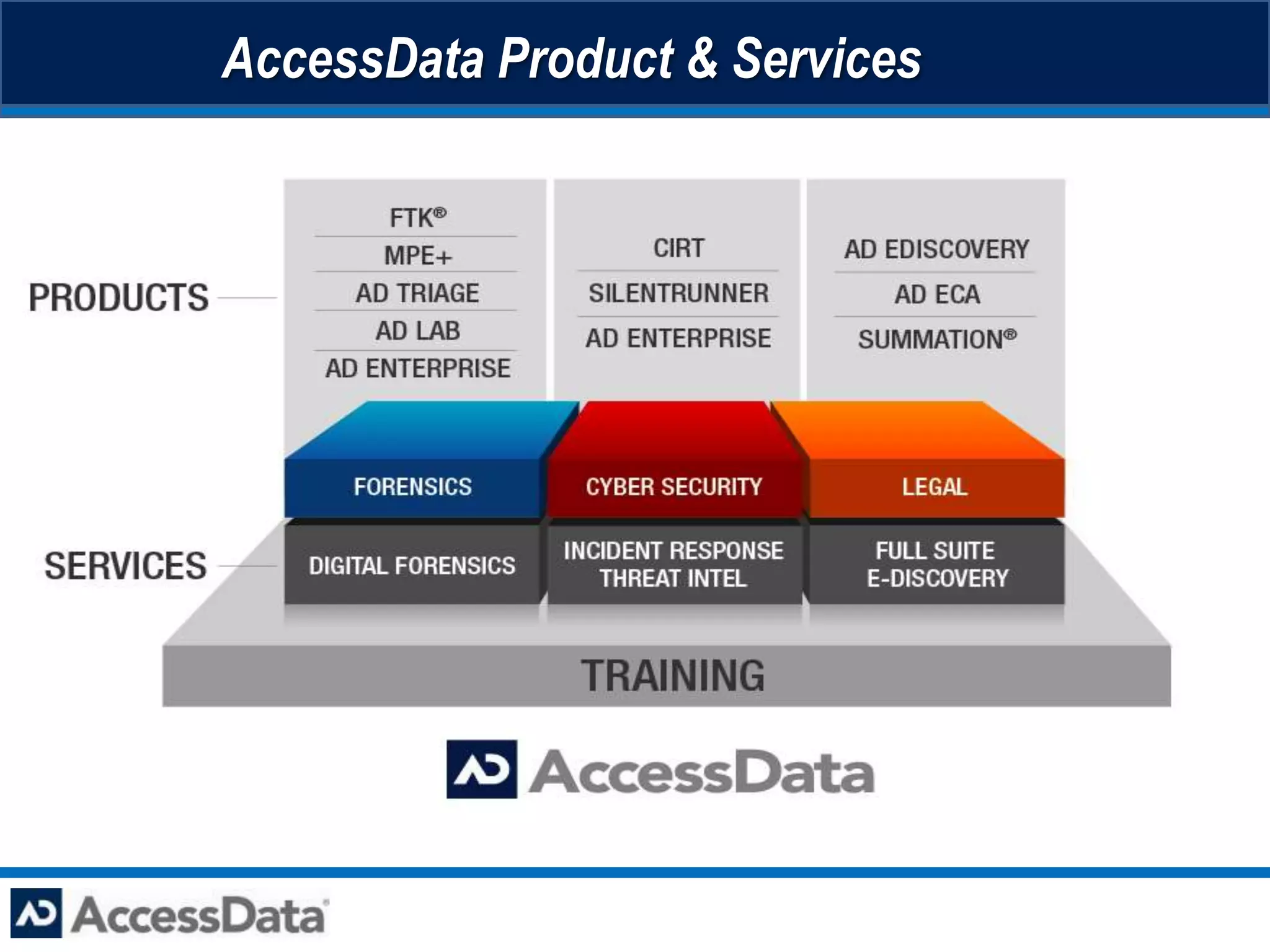

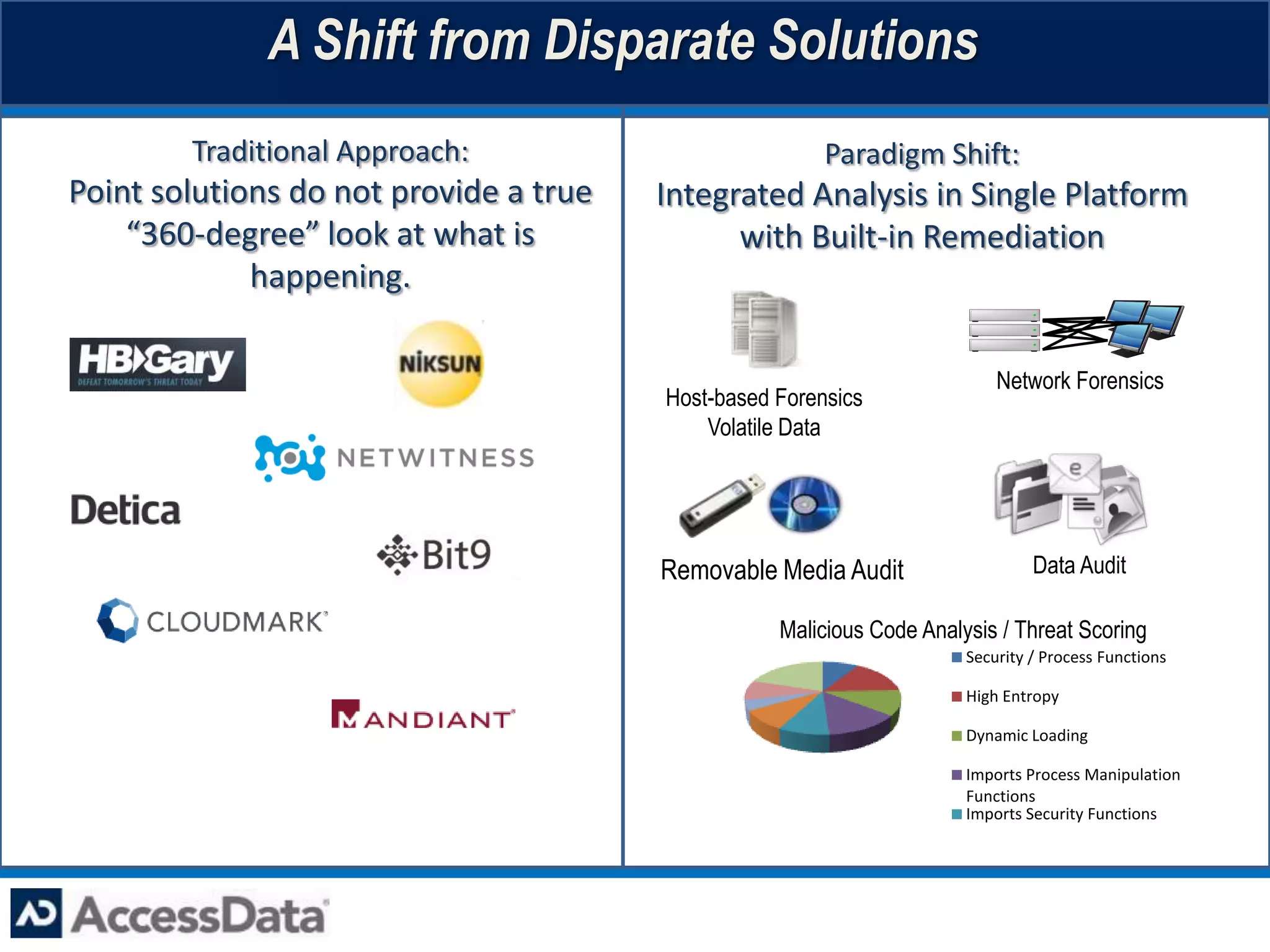

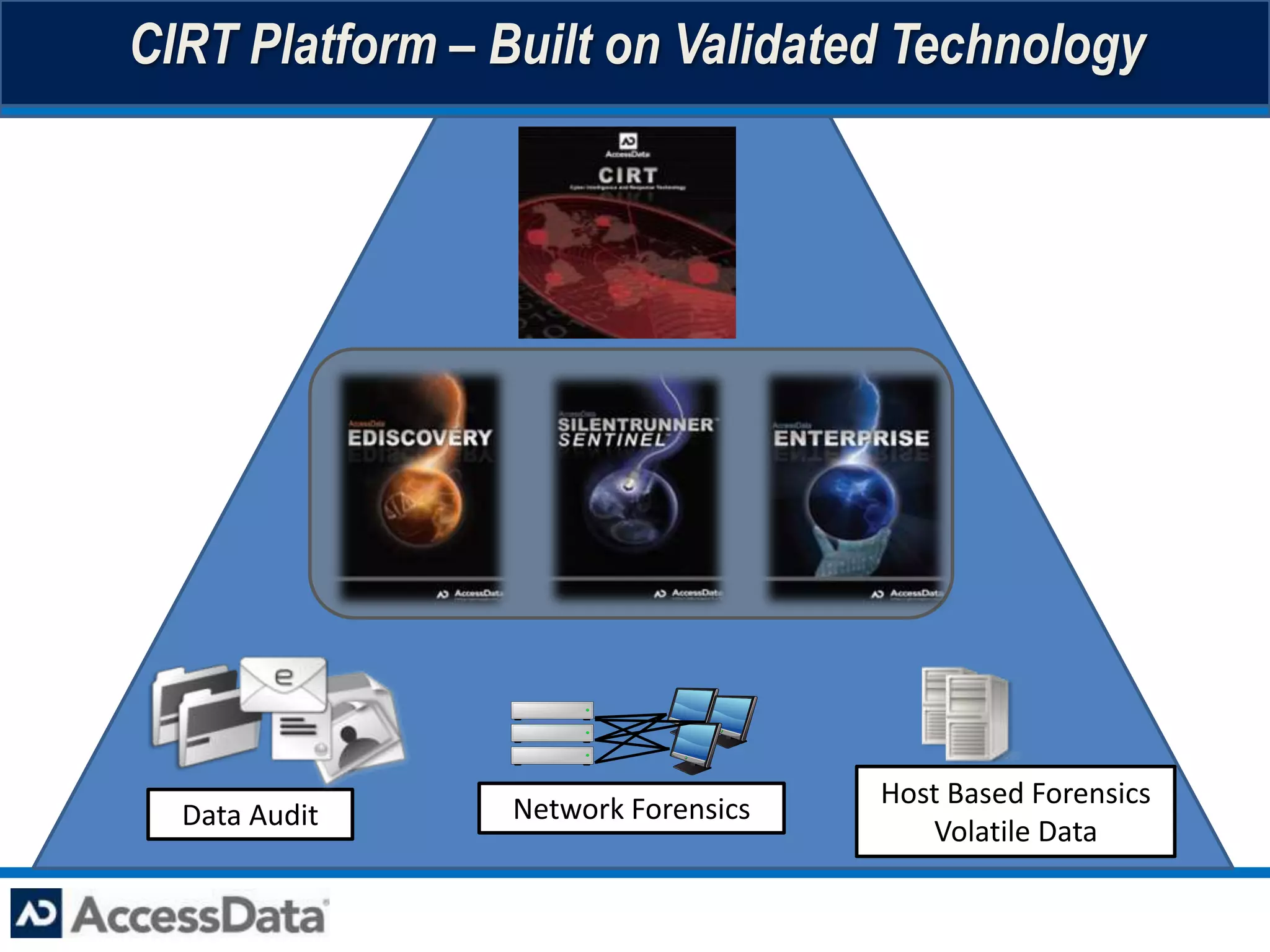

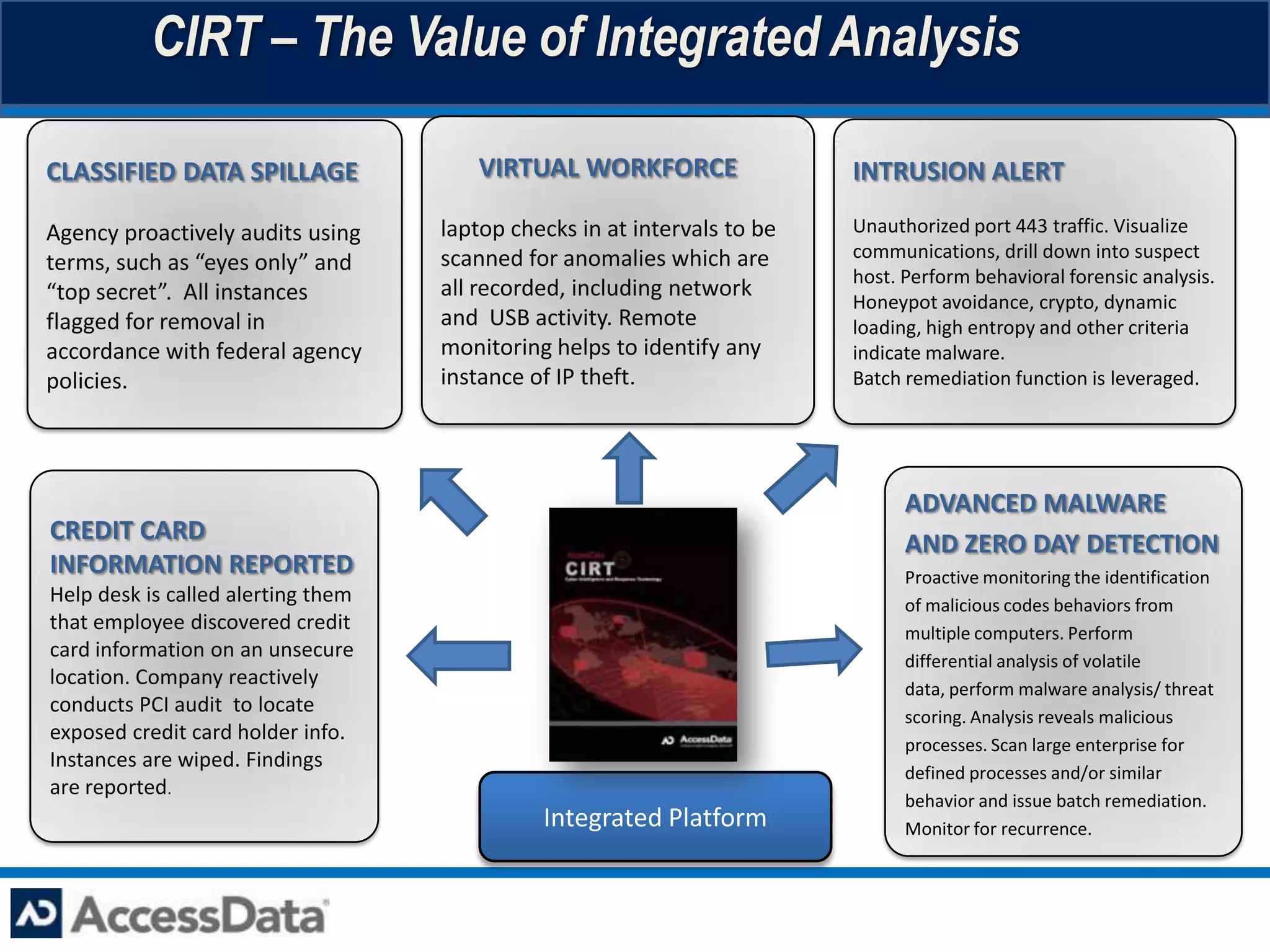

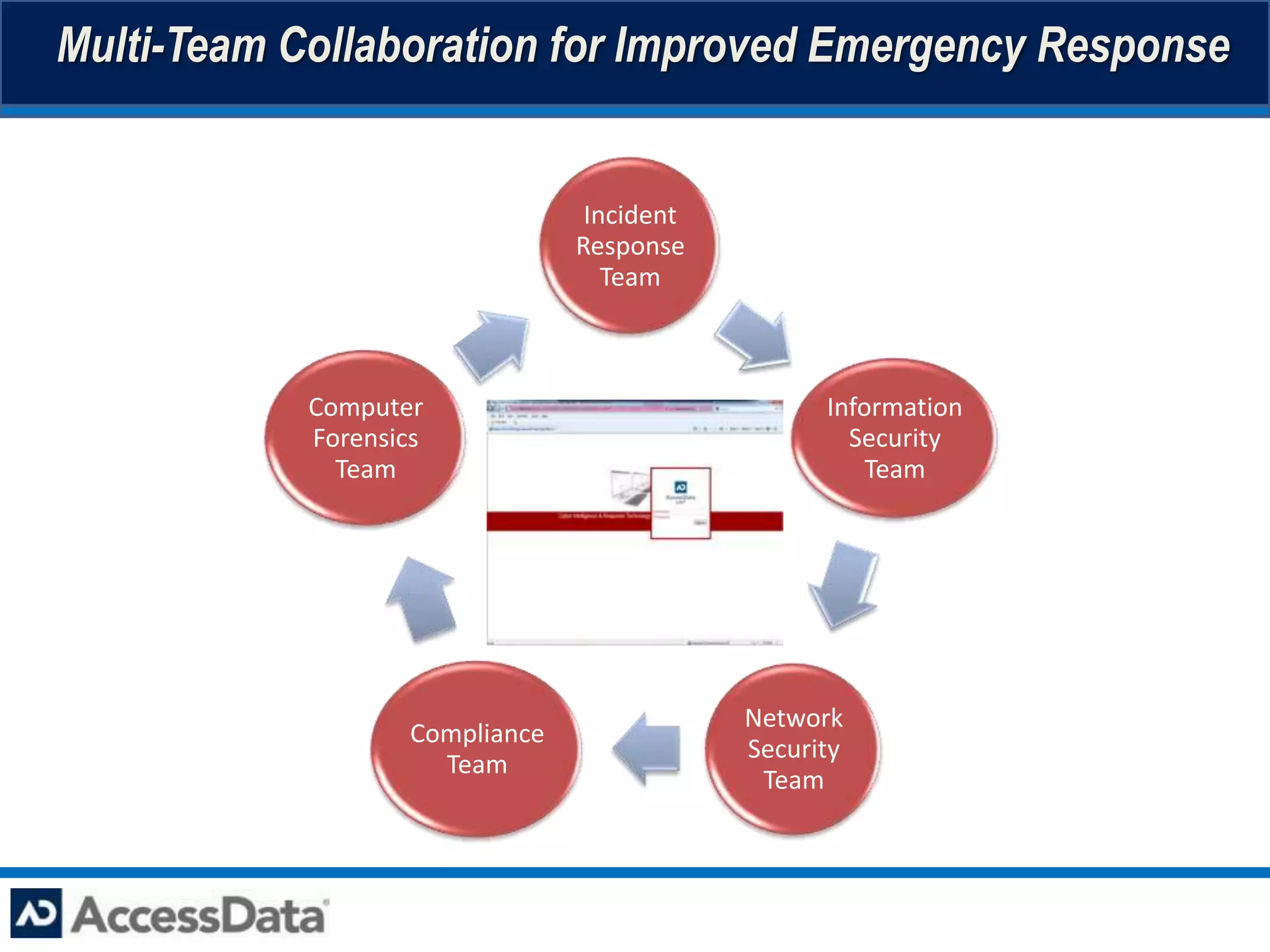

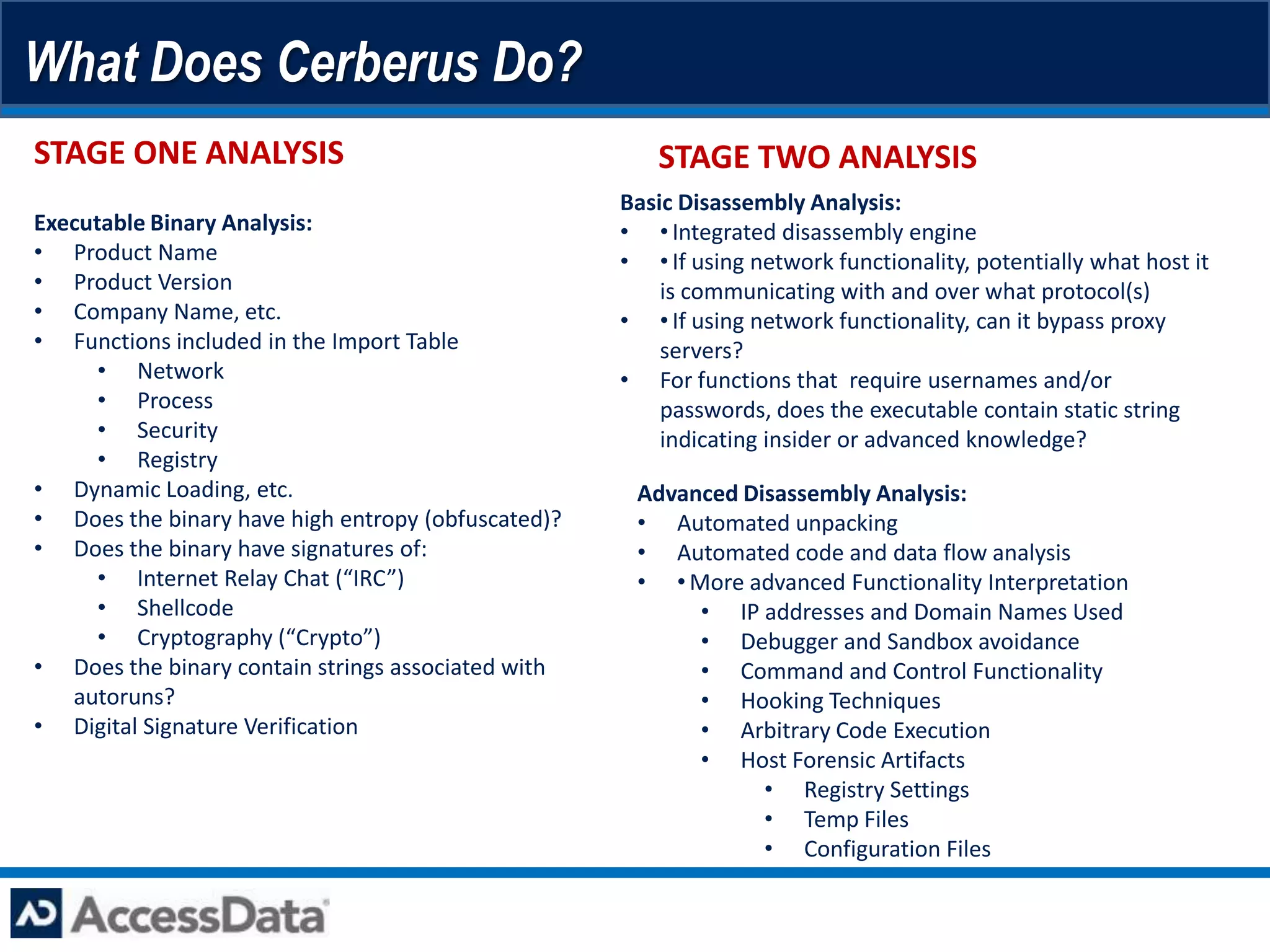

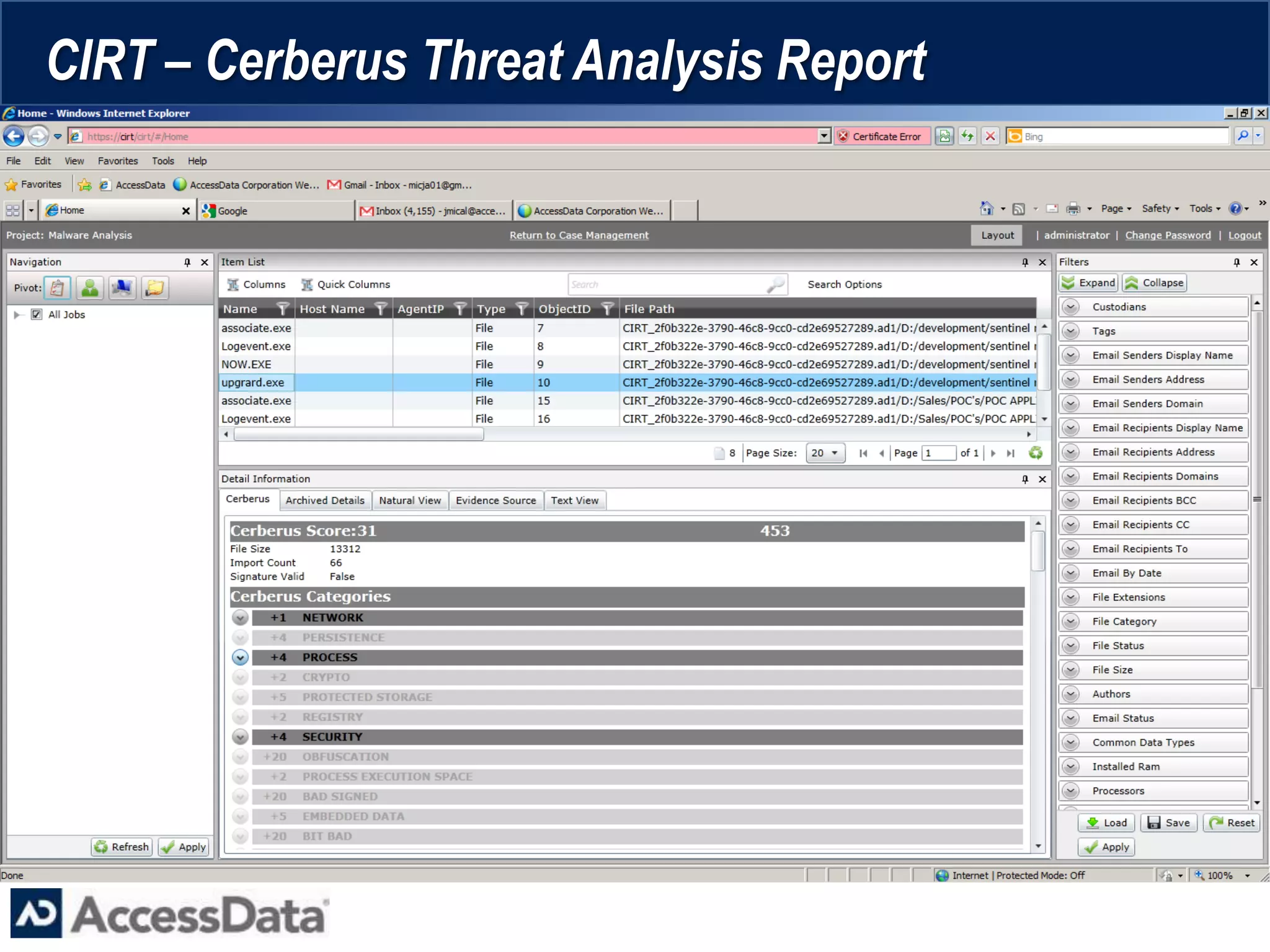

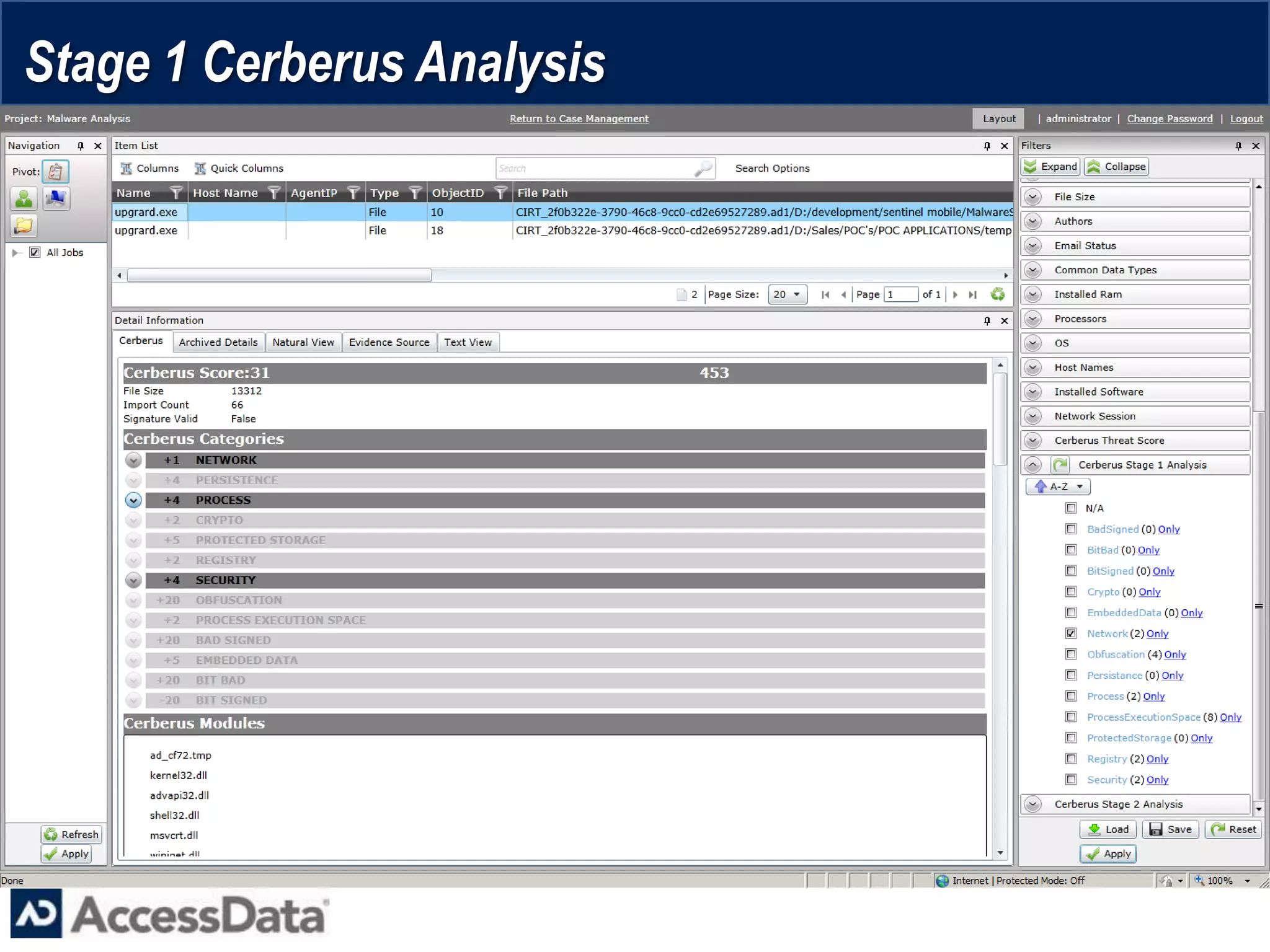

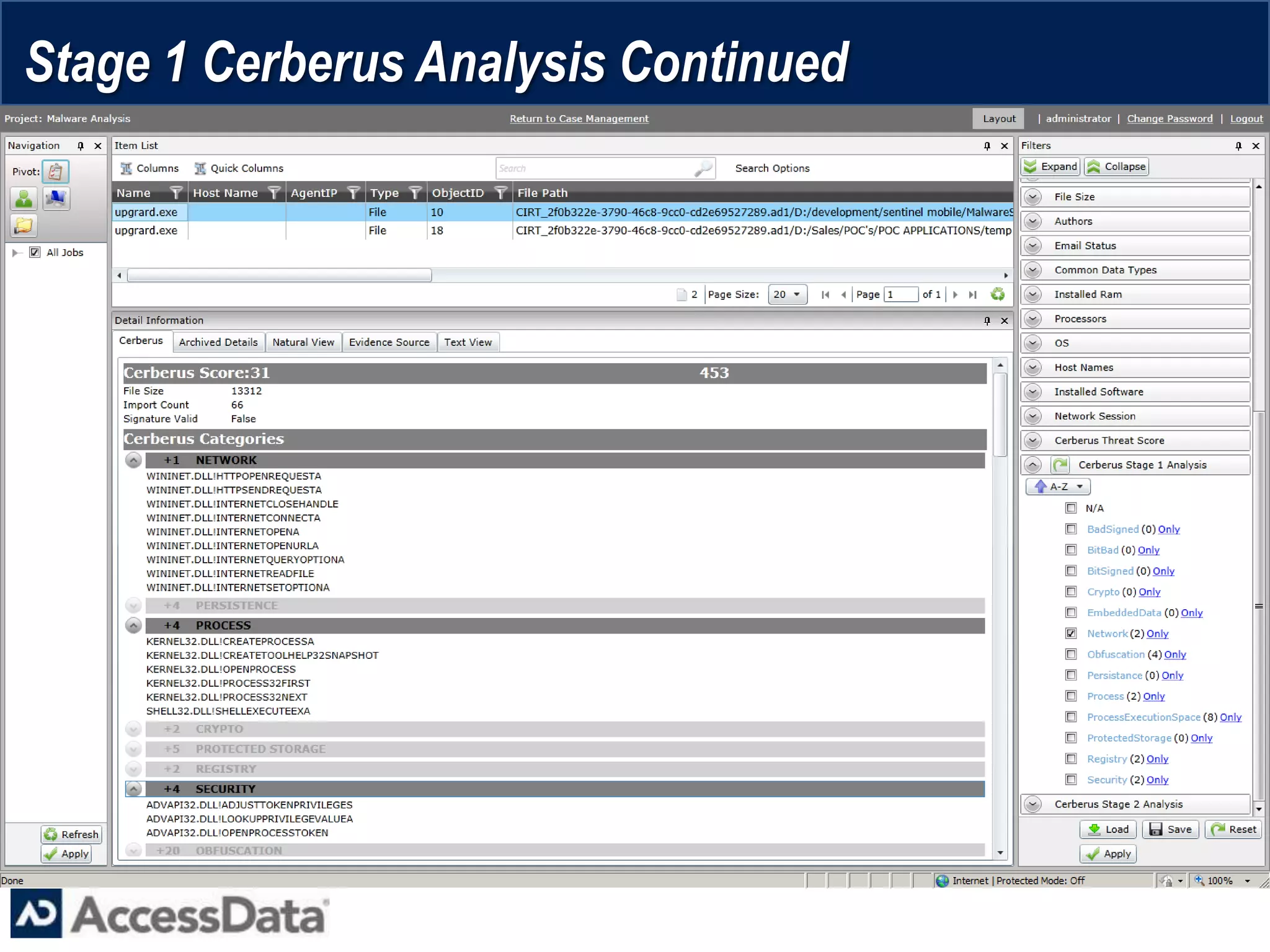

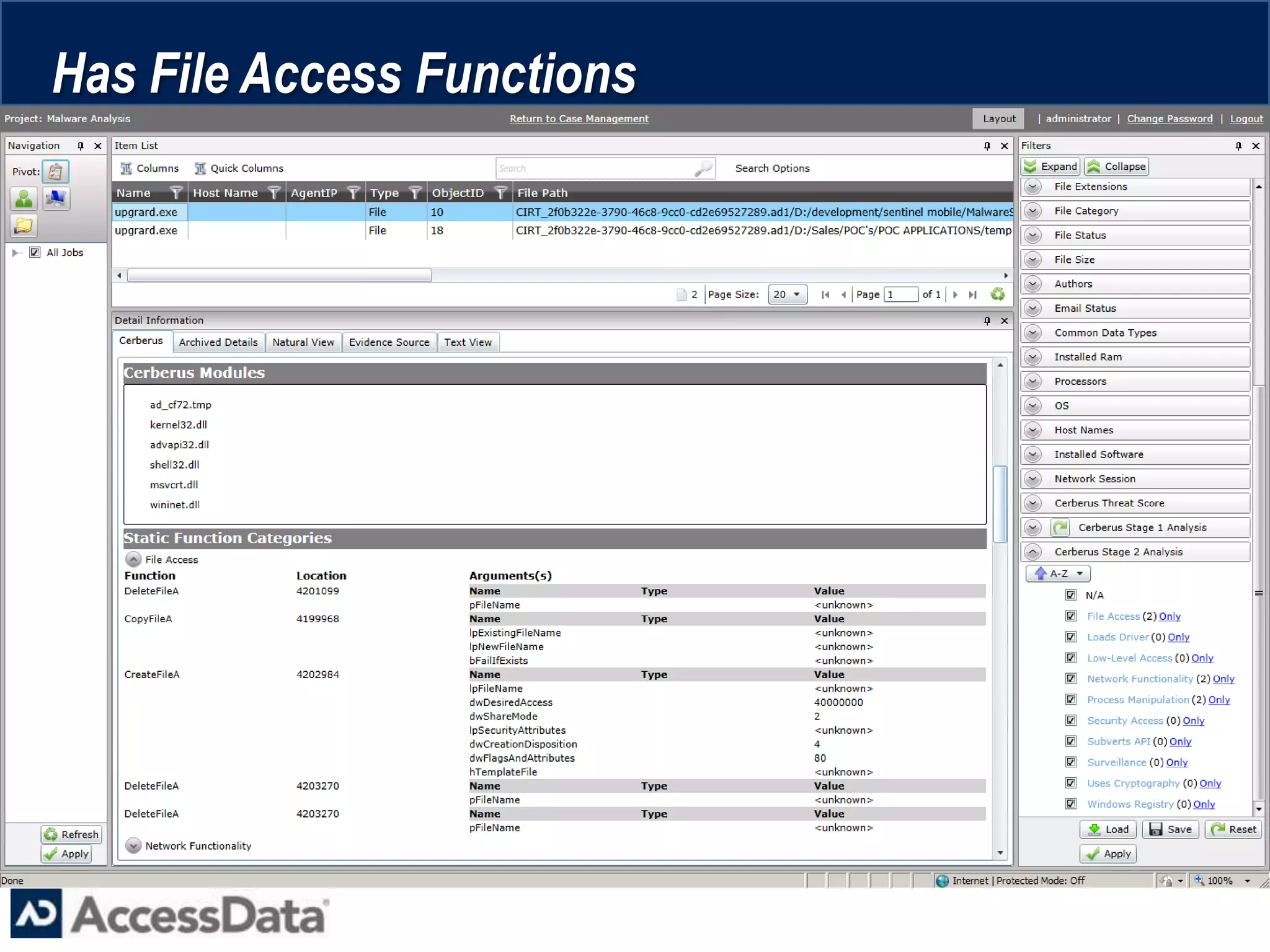

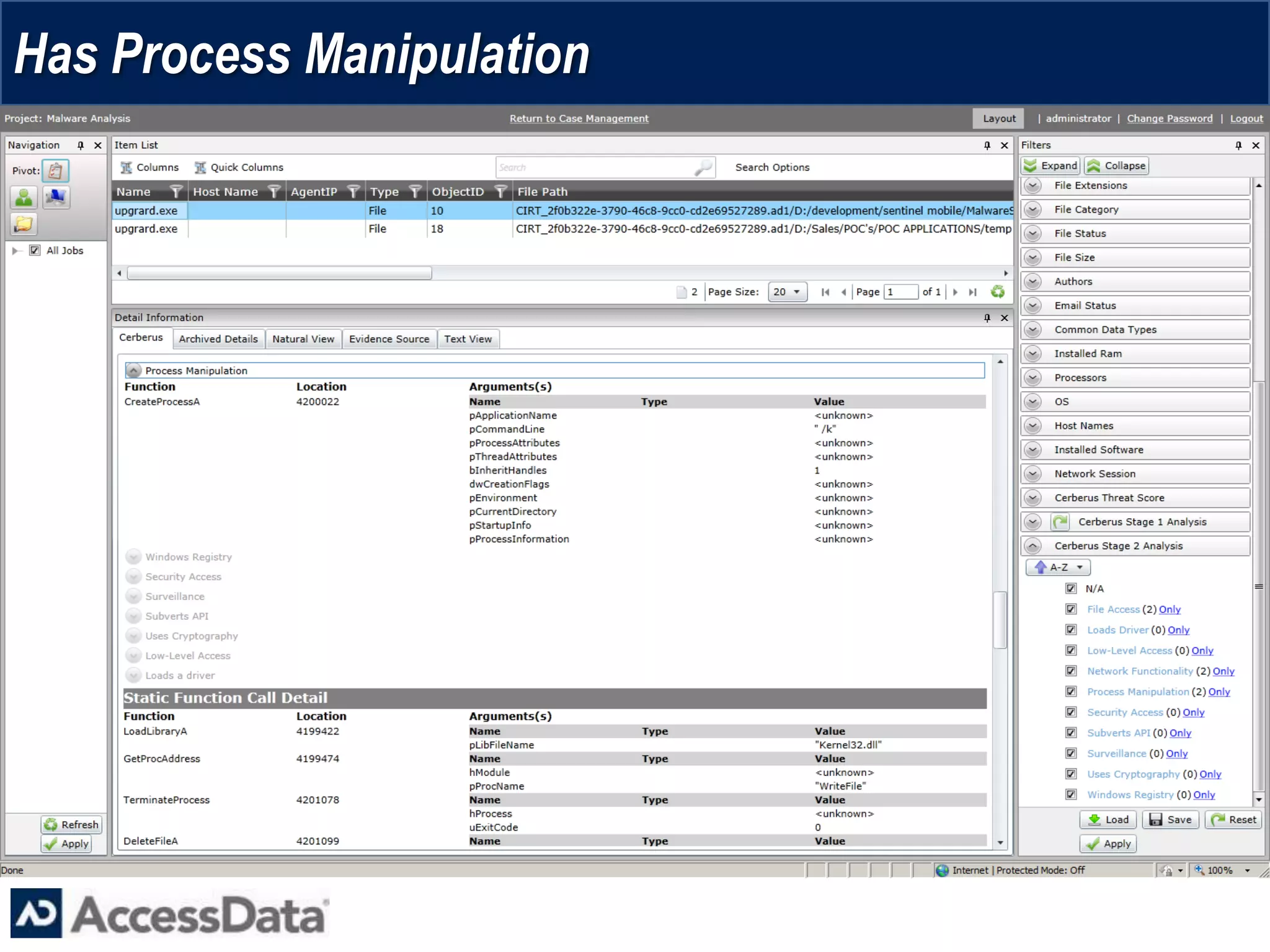

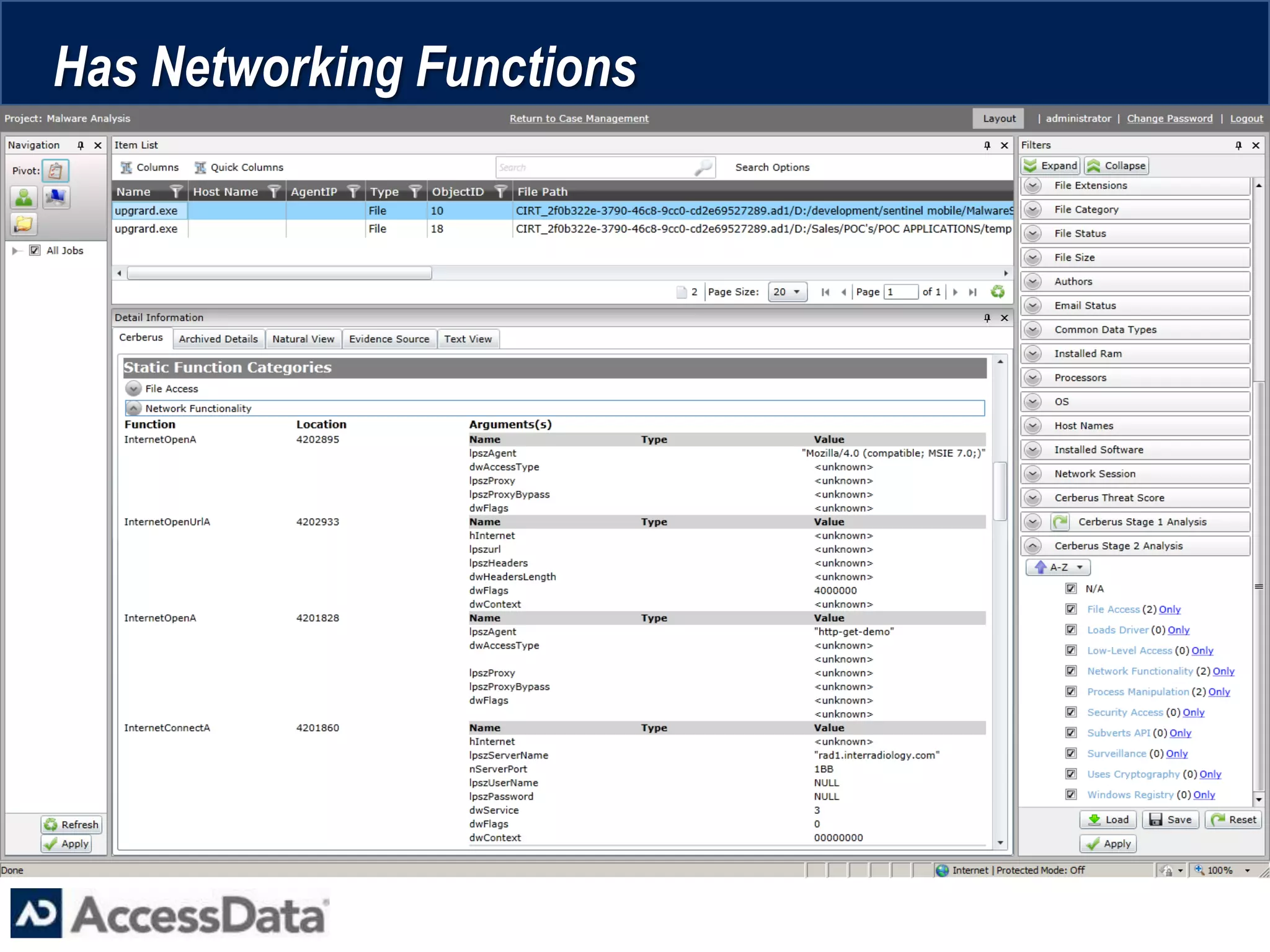

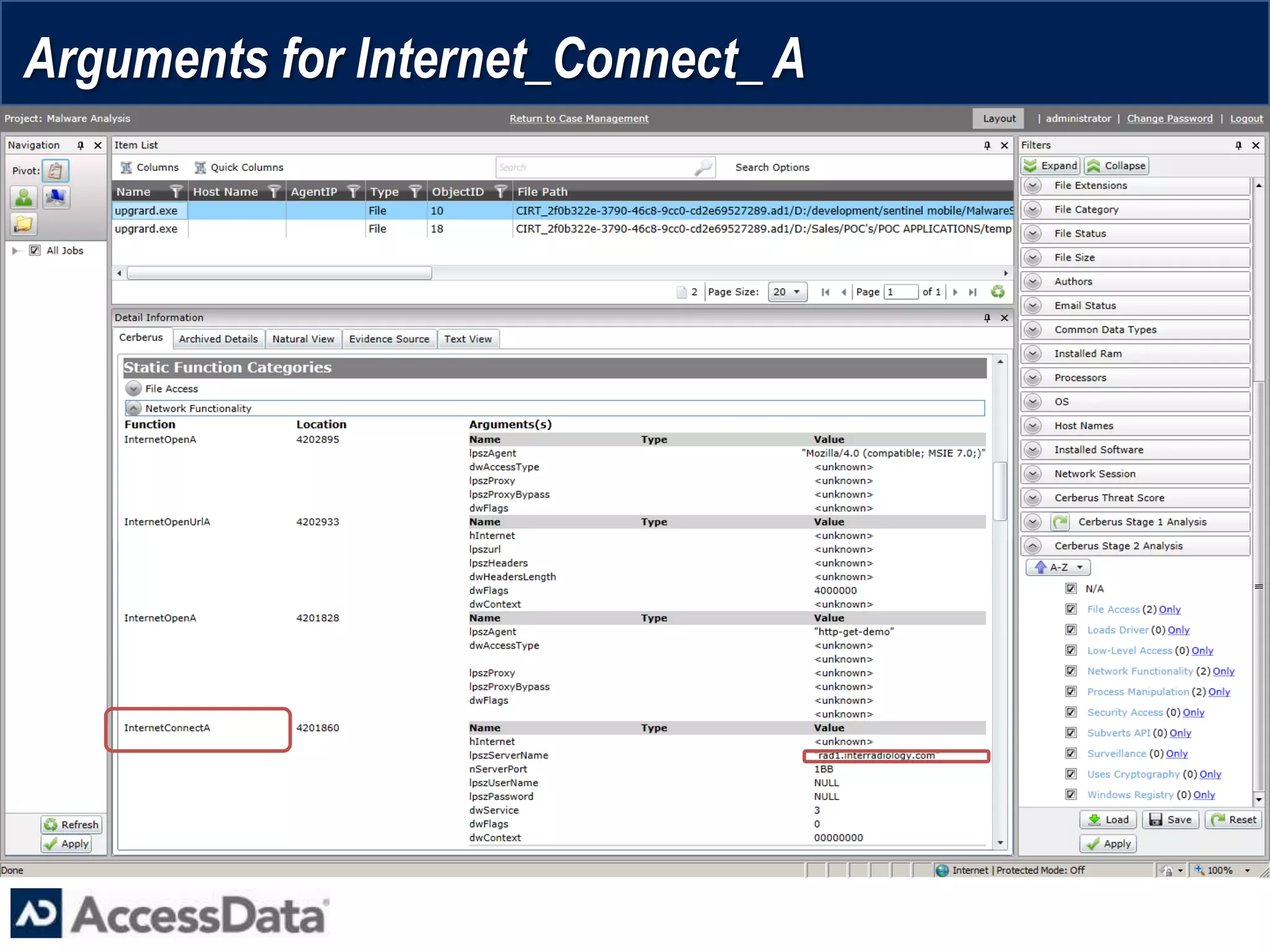

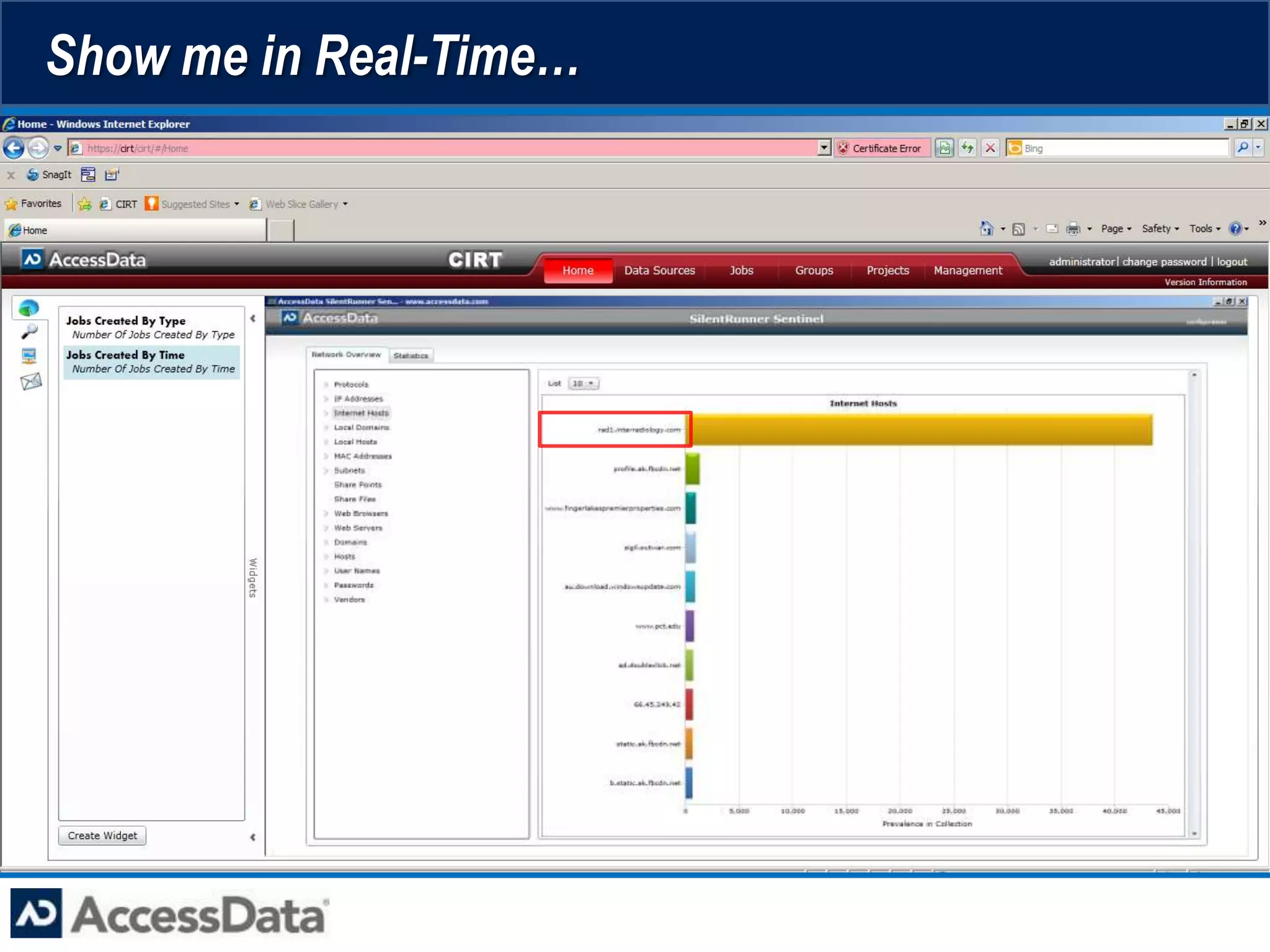

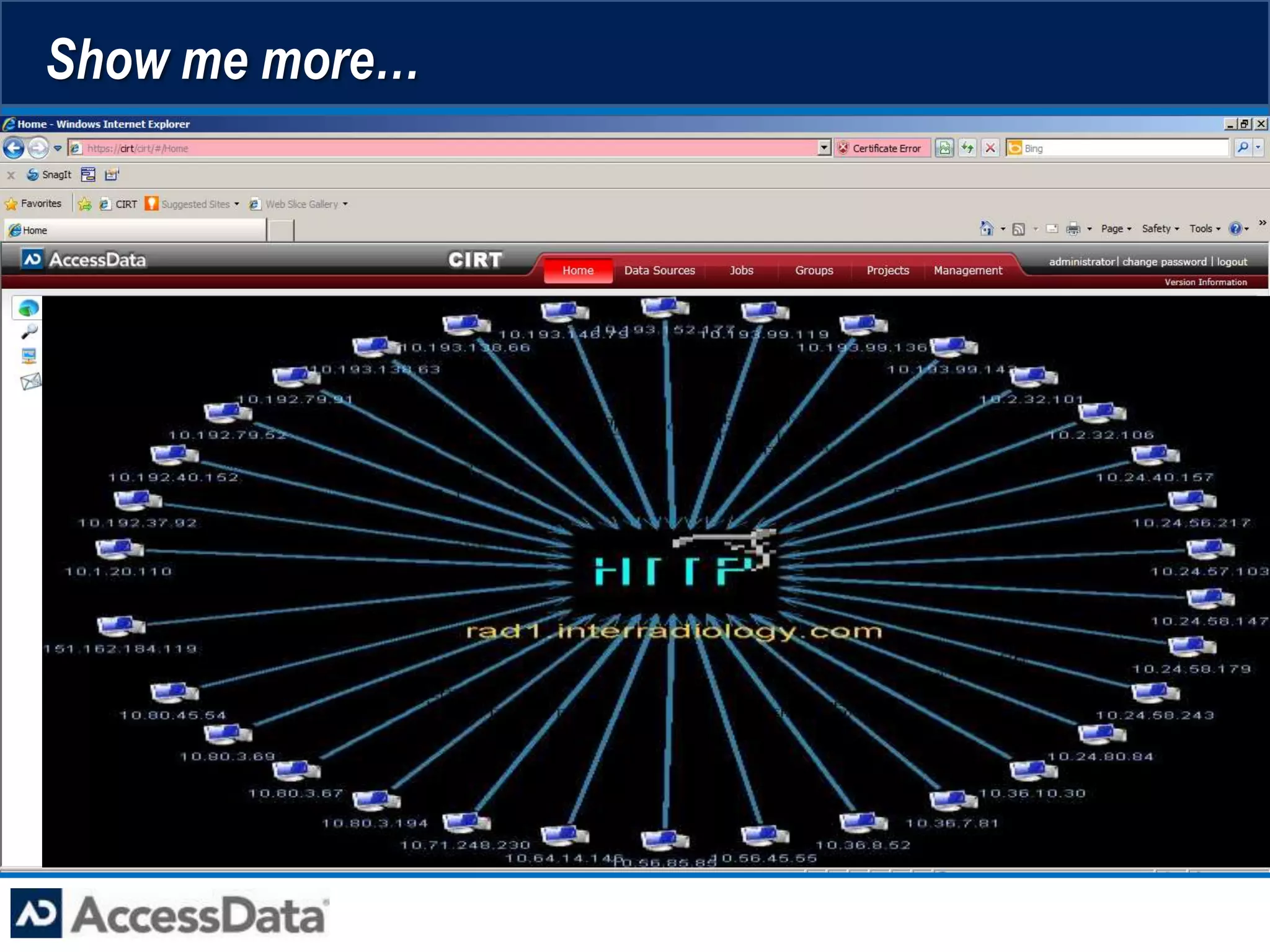

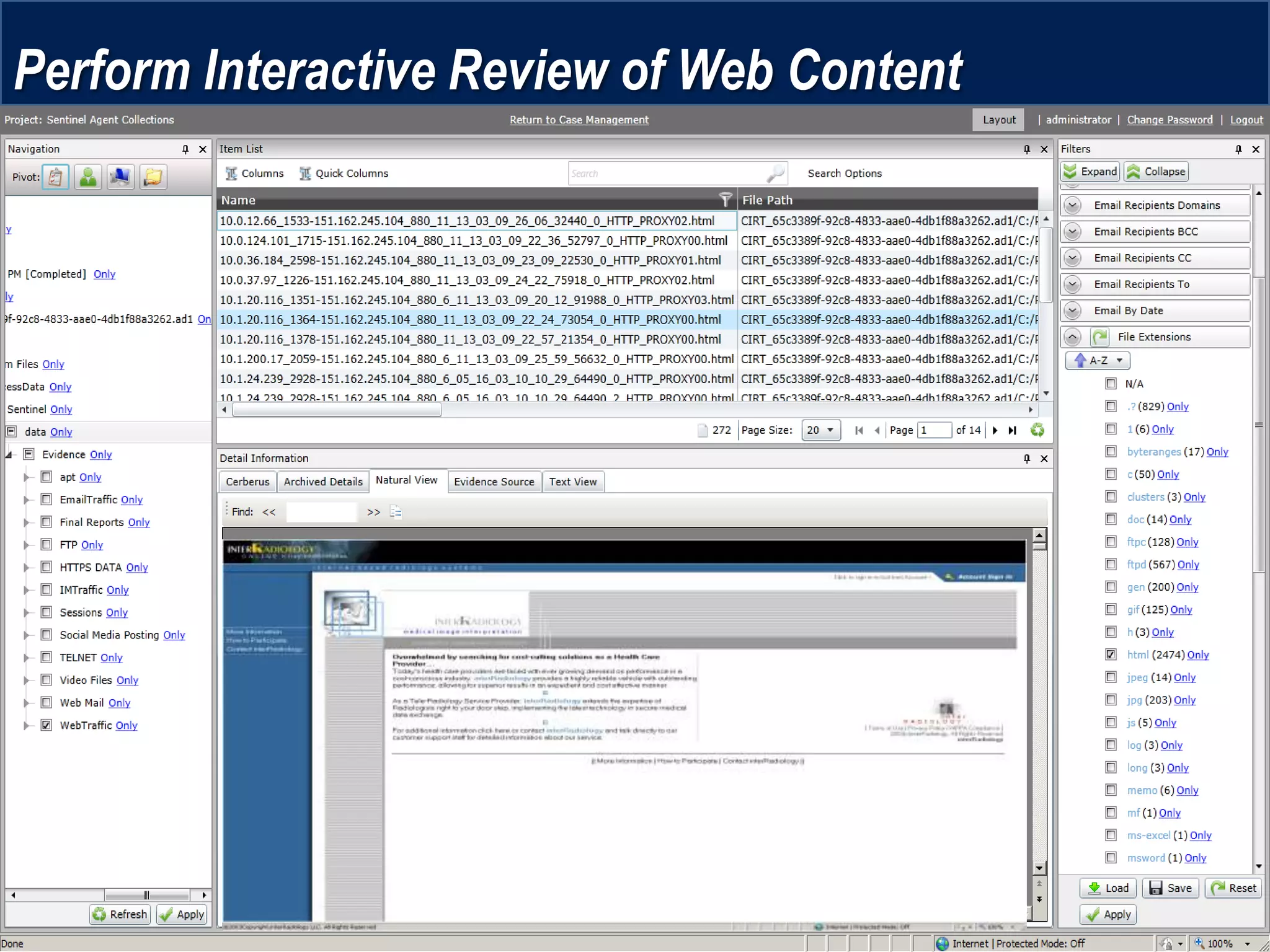

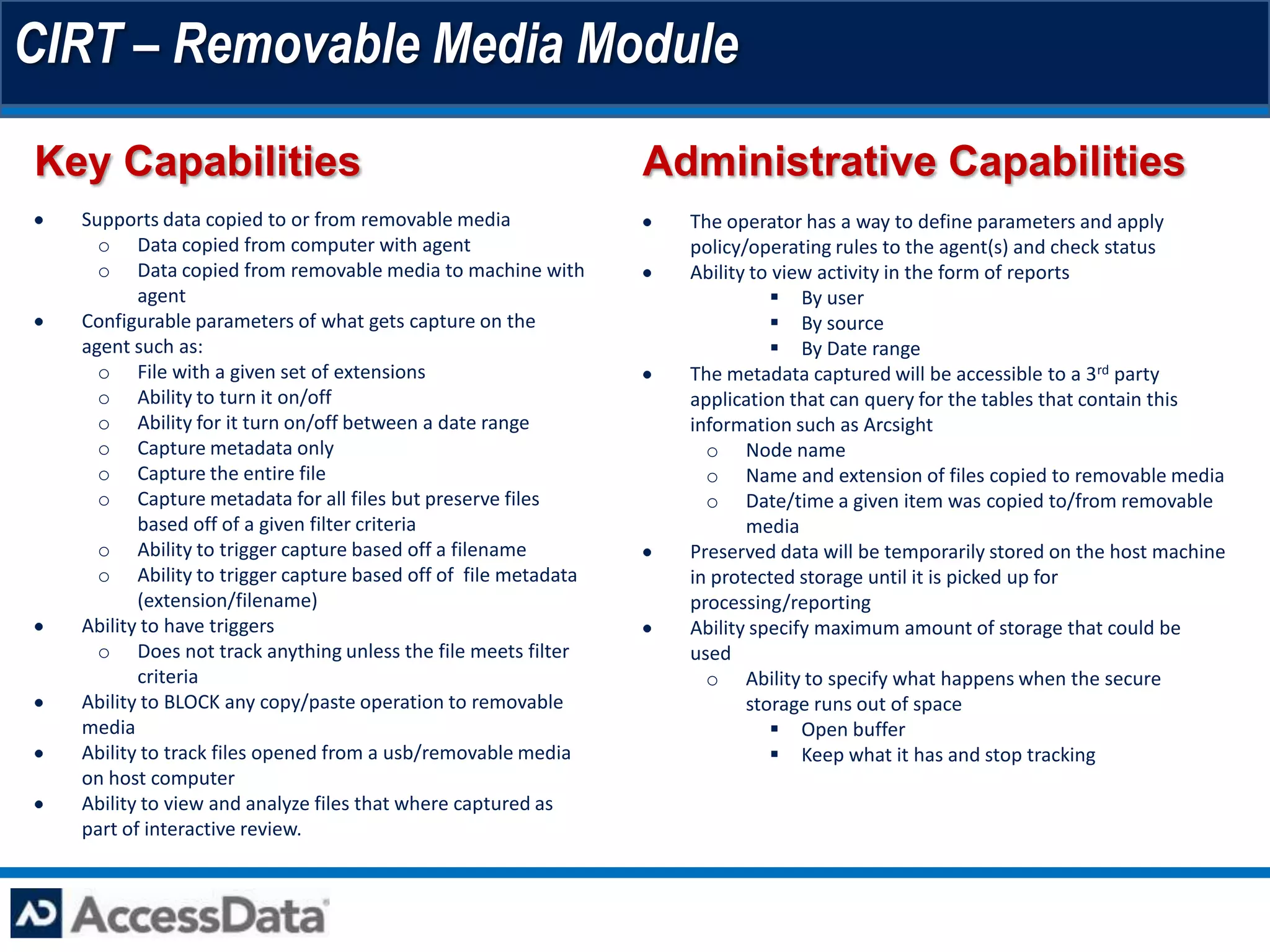

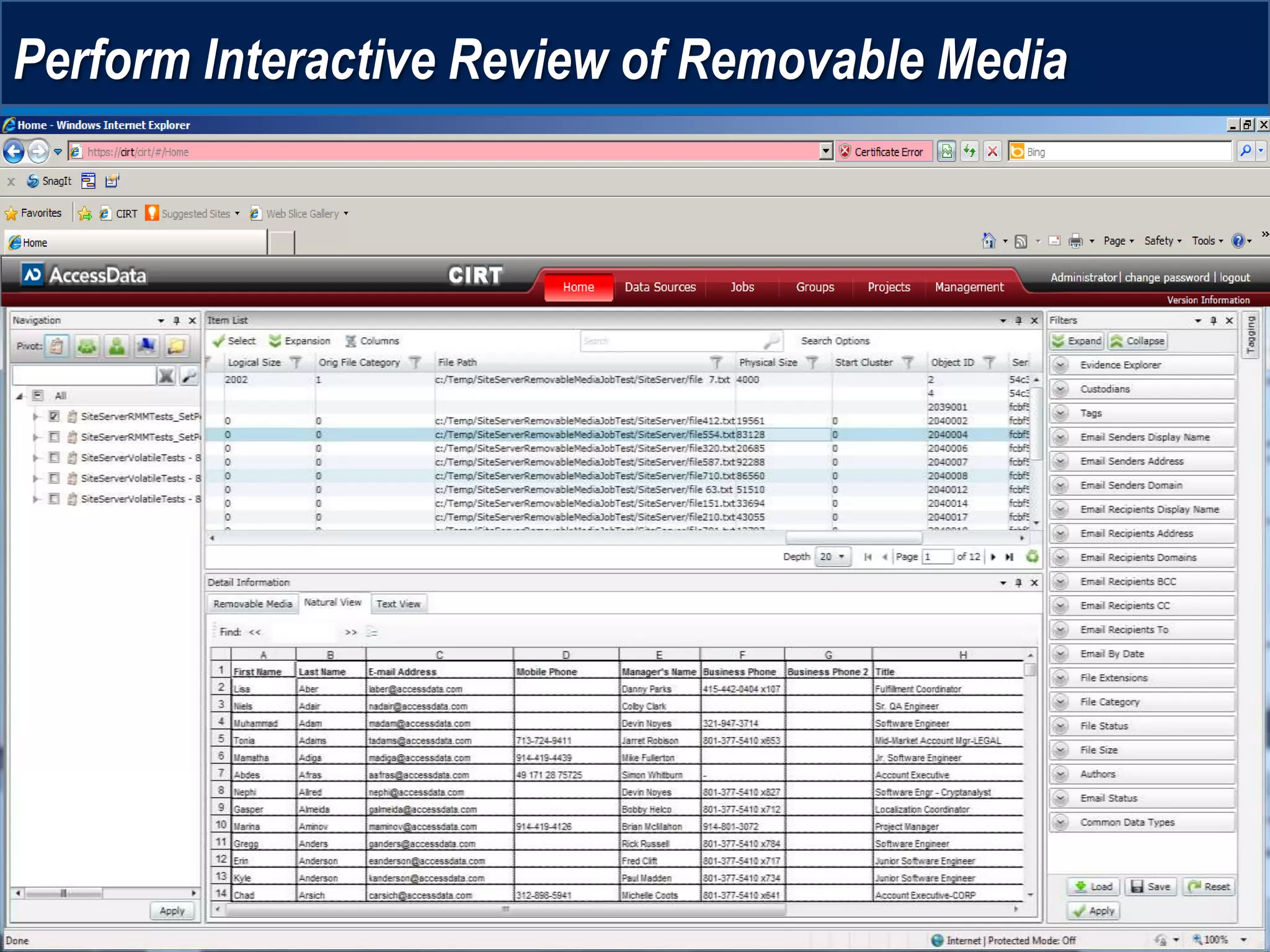

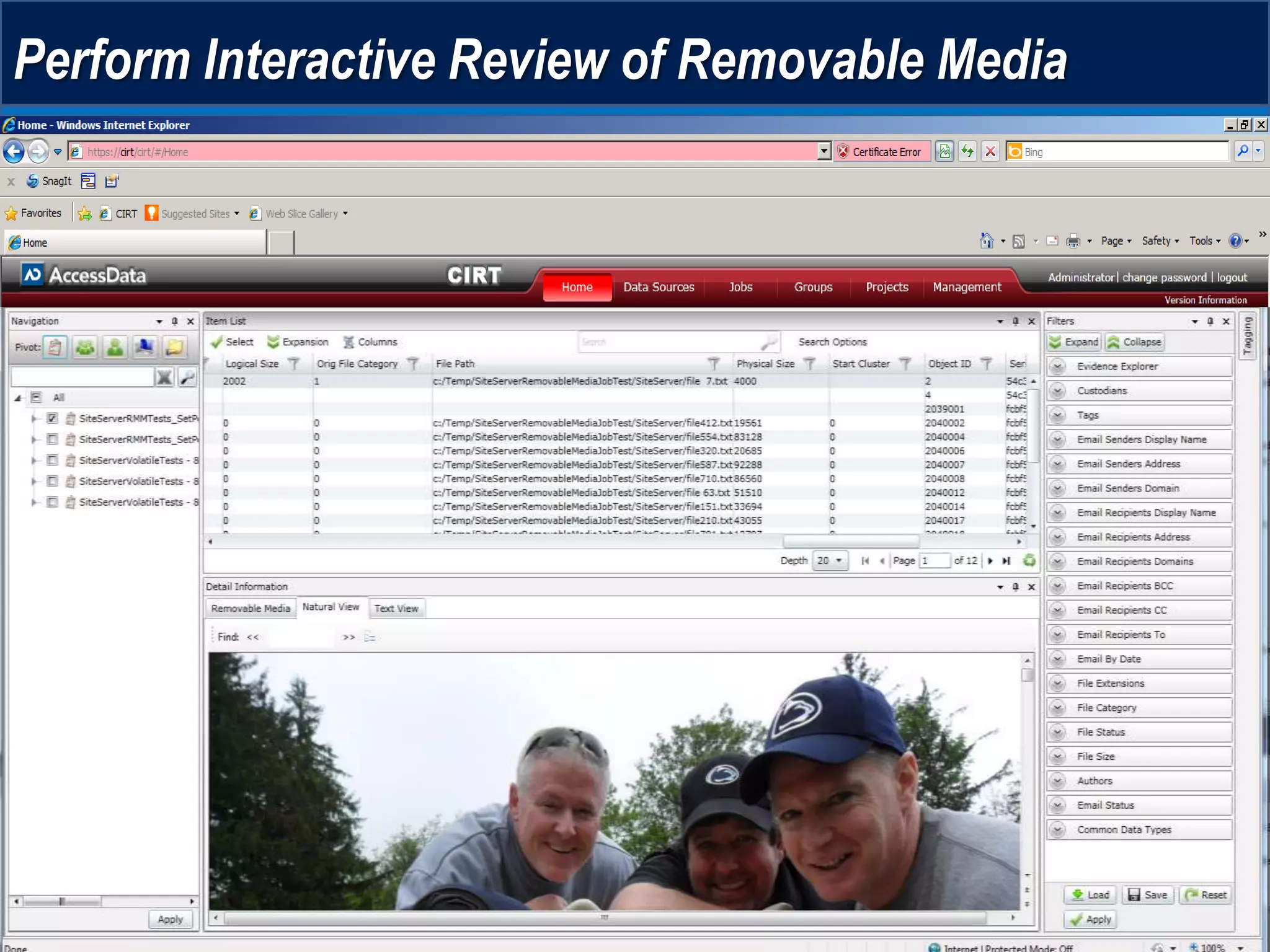

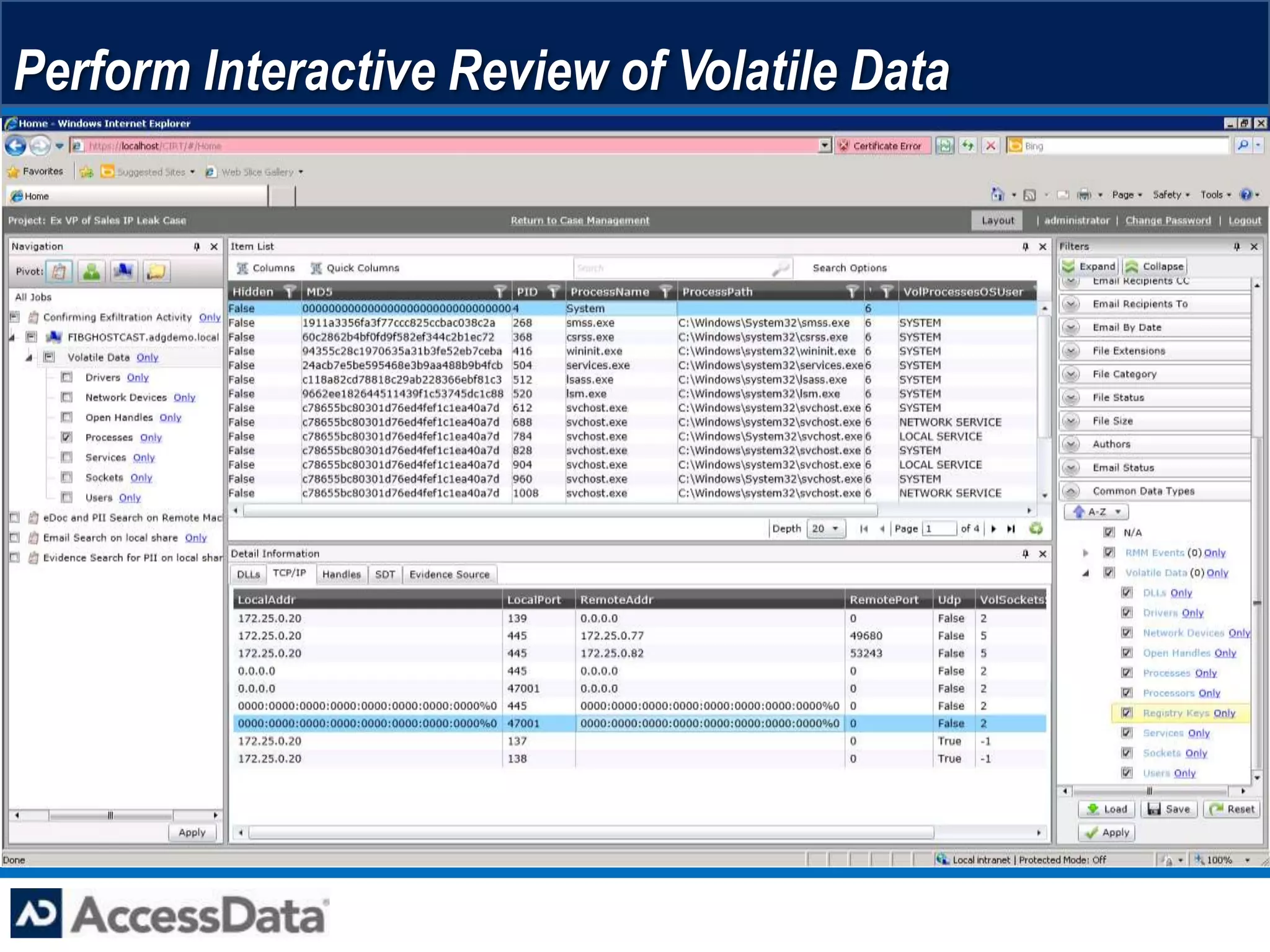

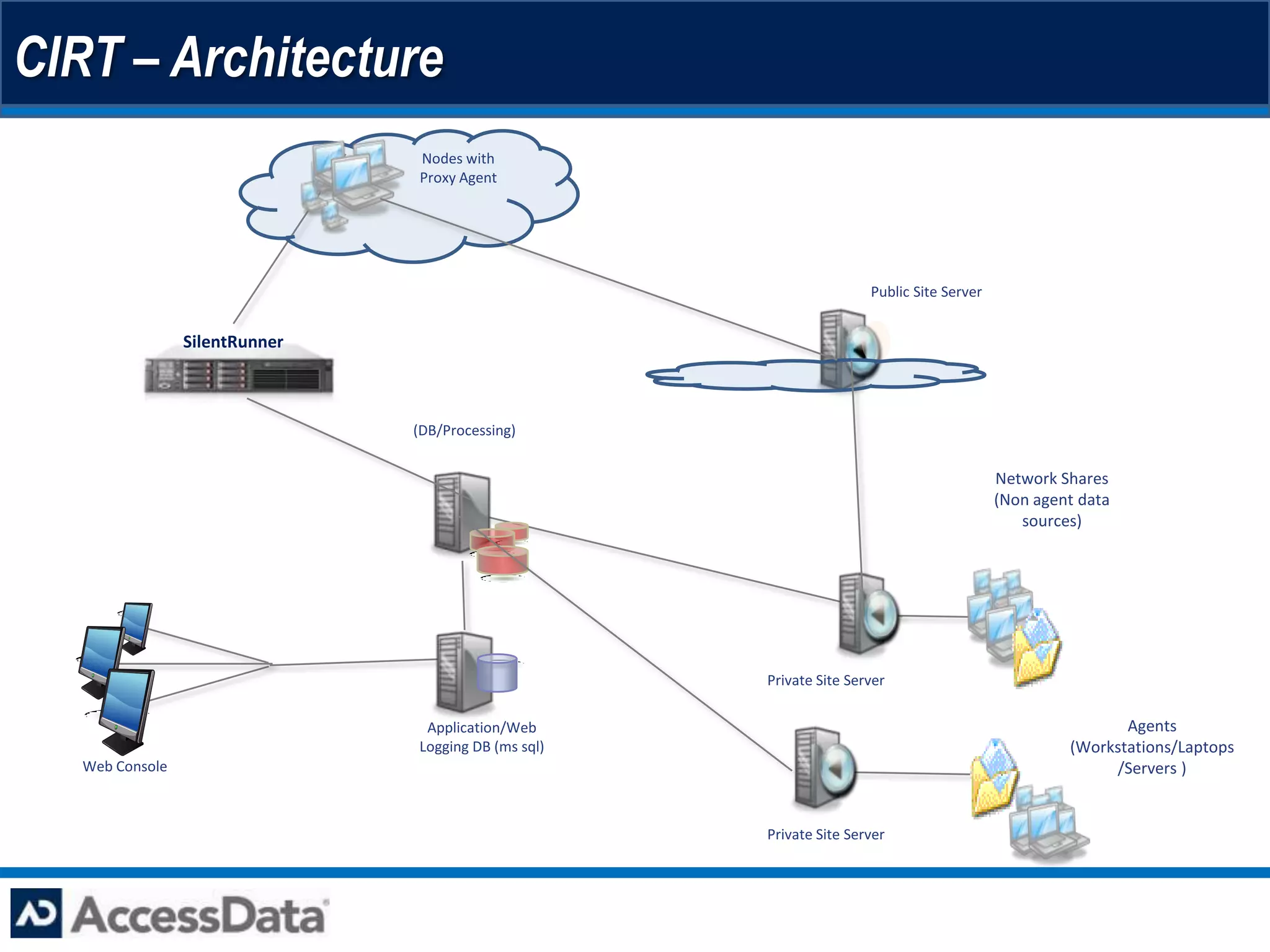

This document provides an overview of AccessData's Cyber Intelligence Response Technology (CIRT) platform. CIRT offers an integrated suite of digital forensics and incident response capabilities, including network forensics, host-based forensics, data auditing, and malware analysis. Key features include an agent that can independently collect and store data from endpoints; the Cerberus module, which analyzes files for malicious behavior without relying on signatures or prior knowledge; and modules for analyzing removable media, volatile memory, and network packet captures. The platform lets multiple teams, such as incident response, computer forensics, and compliance, collaborate on investigations.