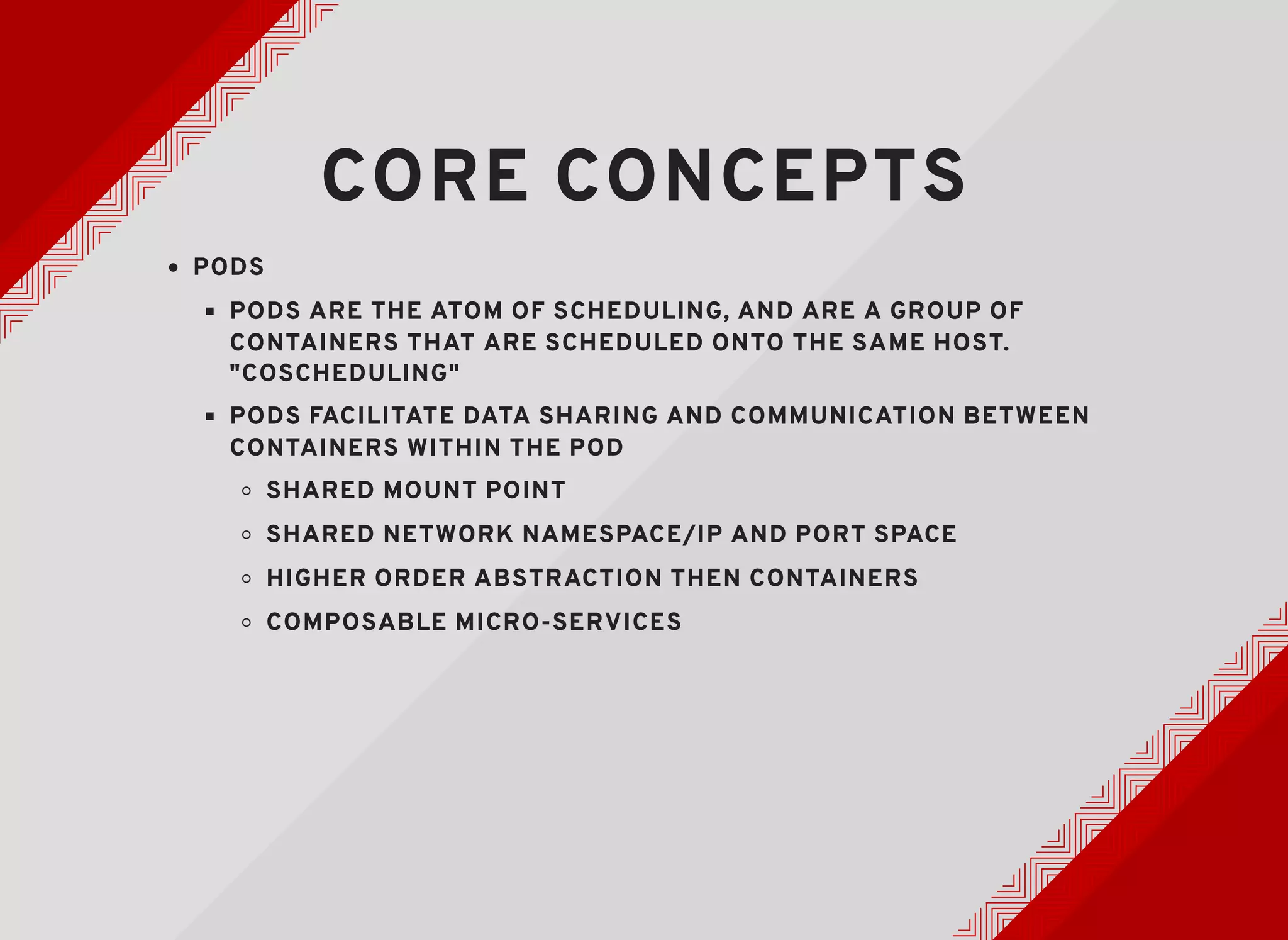

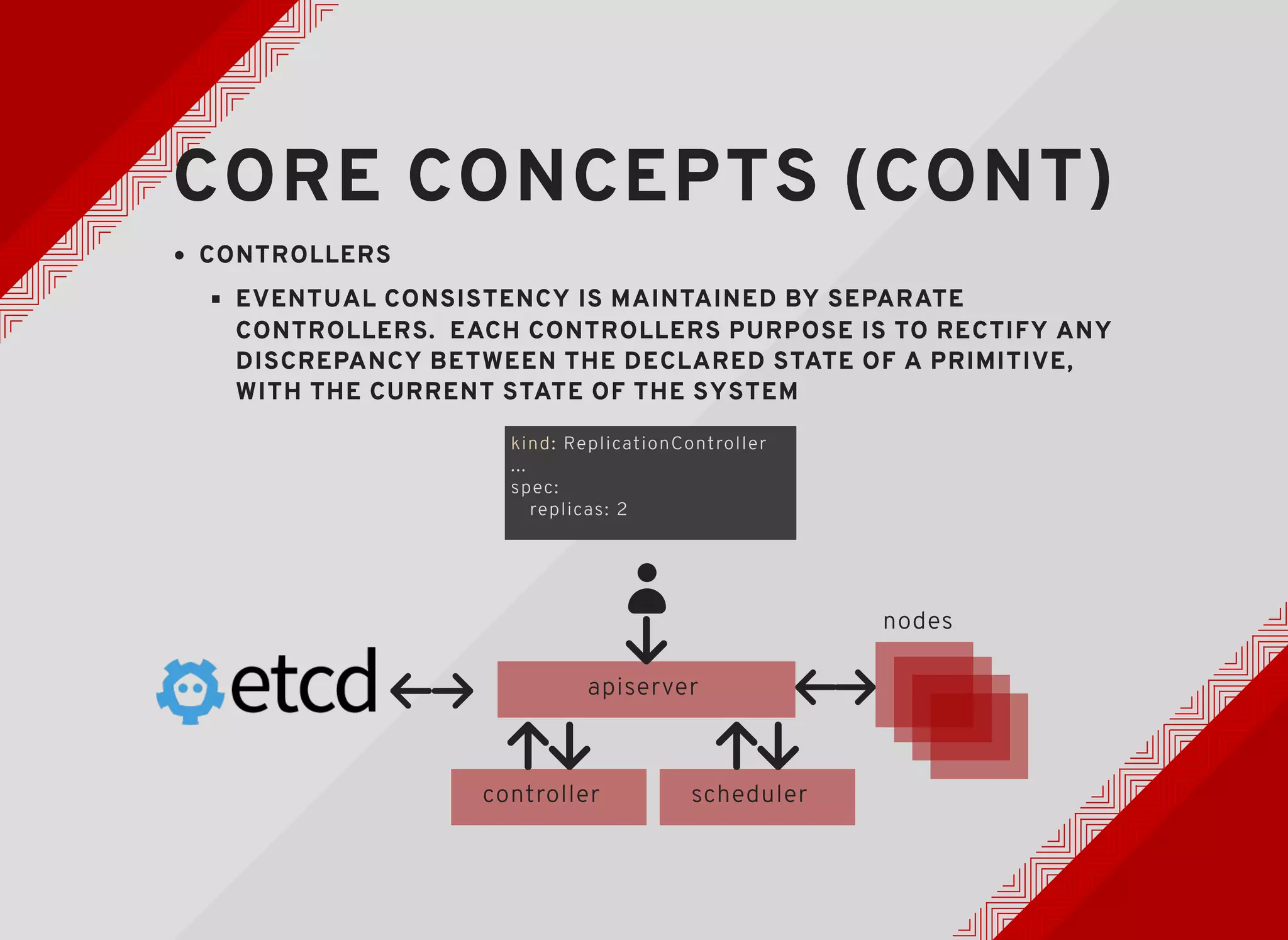

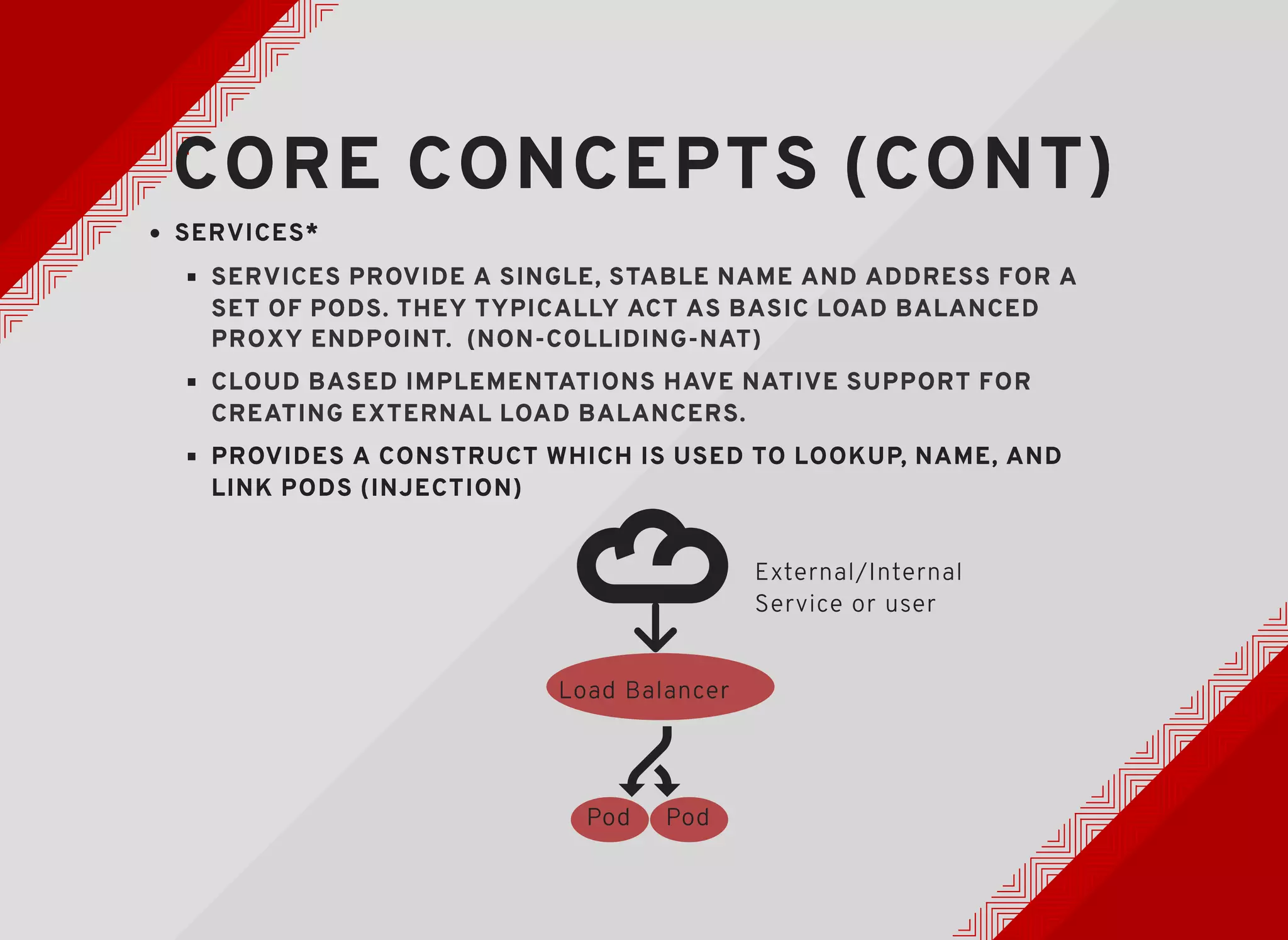

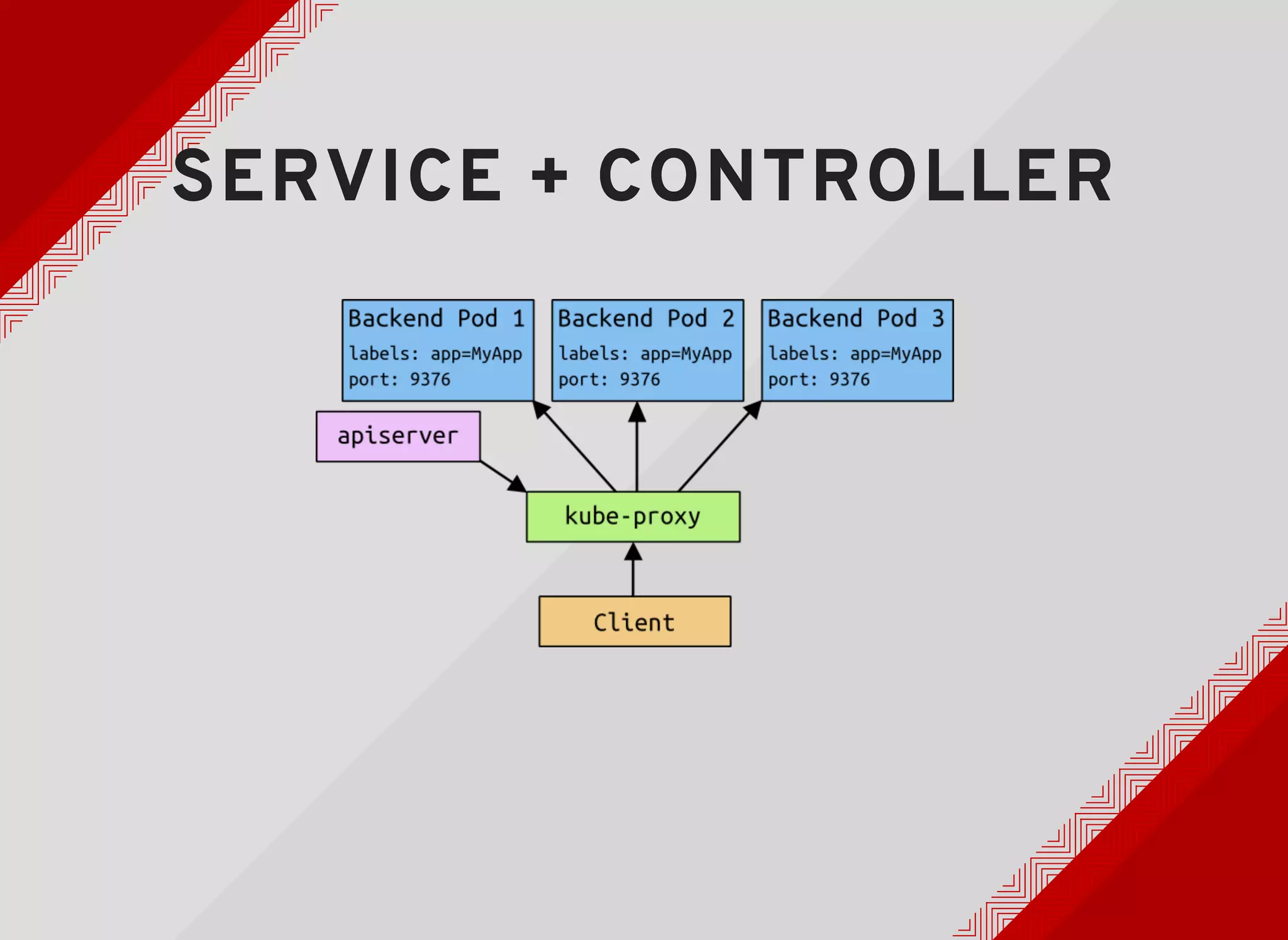

Kubernetes is an open-source system for automating the deployment, scaling, and management of containerized applications. It groups containers that should be co-located into pods and manages the replication and rollout of those pods across a cluster of machines. Controllers maintain the desired state by detecting and correcting any discrepancy between it and the observed state. Labels let users map their own organizational structures onto objects, and services load-balance a set of pods behind a single IP address. Mesos and Kubernetes both orchestrate containers but take different approaches: Mesos focuses on resource isolation and sharing, while Kubernetes focuses on deploying and managing applications.
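The controller and label ideas in the summary can be illustrated with a toy reconciliation loop. This is a minimal sketch in plain Python, not Kubernetes code: the `Cluster` class, the `matching` and `reconcile` functions, the `pod-N` naming scheme, and the `app: web` label are all hypothetical names chosen for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Cluster:
    """Toy stand-in for cluster state: pod names mapped to their labels."""
    pods: dict = field(default_factory=dict)
    next_id: int = 0


def matching(cluster, selector):
    """Return the sorted names of pods whose labels include every selector pair."""
    return sorted(
        name for name, labels in cluster.pods.items()
        if all(labels.get(k) == v for k, v in selector.items())
    )


def reconcile(cluster, selector, desired_replicas):
    """One controller pass: create or delete pods until the count matches."""
    current = matching(cluster, selector)
    # Too few pods: create the missing ones, labelled so the selector finds them.
    for _ in range(desired_replicas - len(current)):
        cluster.pods[f"pod-{cluster.next_id}"] = dict(selector)
        cluster.next_id += 1
    # Too many pods: delete the surplus.
    for name in current[desired_replicas:]:
        del cluster.pods[name]
    return matching(cluster, selector)


if __name__ == "__main__":
    cluster = Cluster()
    reconcile(cluster, {"app": "web"}, 3)
    del cluster.pods["pod-1"]  # simulate a pod failure
    print(reconcile(cluster, {"app": "web"}, 3))
```

Running the same `reconcile` call again after a simulated failure restores the replica count, which is the sense in which a controller "rectifies discrepancies". A service would use the same kind of label selector to decide which pods receive traffic.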

![STEP BACK TO THE 80'S & 90'S [Ghostbusters] ~1984](https://image.slidesharecdn.com/mesoscon15k8snmesos-150820235337-lva1-app6891/75/Crossing-the-Streams-Mesos-lt-Kubernetes-4-2048.jpg)

![BEFORE "CONTAINER ORCHESTRATION", BEFORE IAAS/"CLOUD", THERE WAS THE GRID: In the 1990s, inspired by the availability of high-speed wide area networks and challenged by the computational requirements of new applications, researchers began to imagine a computing infrastructure that would “provide access to computing on demand” (COD) and permit “flexible, secure, coordinated resource sharing among dynamic collections of individuals, institutions, and resources” [The History of the Grid] ~Ian Foster, Carl Kesselman](https://image.slidesharecdn.com/mesoscon15k8snmesos-150820235337-lva1-app6891/75/Crossing-the-Streams-Mesos-lt-Kubernetes-5-2048.jpg)

![FAST FORWARD TO 2015 [Back to the Future Part 3]](https://image.slidesharecdn.com/mesoscon15k8snmesos-150820235337-lva1-app6891/75/Crossing-the-Streams-Mesos-lt-Kubernetes-11-2048.jpg)

![EXPOSING K8S SERVICES](https://image.slidesharecdn.com/mesoscon15k8snmesos-150820235337-lva1-app6891/75/Crossing-the-Streams-Mesos-lt-Kubernetes-34-2048.jpg)

    {
      ...
        "ports": [
          {
            "protocol": "TCP",
            "port": 80,
            "targetPort": 9376,
            "nodePort": 30061
          }
        ],
        ...
        "type": "LoadBalancer"
      },
      "status": {
        "loadBalancer": {
          "ingress": [
            {
              "ip": "146.148.47.155"
            }
      ...
    }

`nodePort`: the Kubernetes master will allocate a port from a flag-configured range (default: 30000-32767), and each Node will proxy that port (the same port number on every Node) into your Service.

`type: LoadBalancer`: On cloud providers which support external load balancers, setting the type field to "LoadBalancer" will provision a load balancer for your Service.

Source: https://github.com/kubernetes/kubernetes/blob/master/docs/user-guide/services.md
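To make the `nodePort` and `type` fields from this slide concrete, here is a minimal Python sketch that assembles a Service manifest as a plain dictionary and checks a requested `nodePort` against the default range quoted on the slide. The helper name `service_manifest`, the service name `my-service`, and the `app: MyApp` selector are hypothetical; no API call is made, and a real cluster may be configured with a different port range.

```python
import json

# Default NodePort range quoted on the slide; the real range is set by a flag.
DEFAULT_NODE_PORT_RANGE = (30000, 32767)


def service_manifest(name, selector, port, target_port, node_port=None,
                     service_type="LoadBalancer"):
    """Build a Service manifest as a plain dict (no API calls are made)."""
    port_spec = {"protocol": "TCP", "port": port, "targetPort": target_port}
    if node_port is not None:
        low, high = DEFAULT_NODE_PORT_RANGE
        if not low <= node_port <= high:
            raise ValueError(f"nodePort {node_port} outside {low}-{high}")
        port_spec["nodePort"] = node_port
    return {
        "kind": "Service",
        "apiVersion": "v1",
        "metadata": {"name": name},
        "spec": {"selector": selector, "ports": [port_spec], "type": service_type},
    }


if __name__ == "__main__":
    # Hypothetical name and selector; the port numbers are the ones on the slide.
    manifest = service_manifest("my-service", {"app": "MyApp"}, 80, 9376,
                                node_port=30061)
    print(json.dumps(manifest, indent=2))
```

Leaving `node_port` as `None` omits the field, which corresponds to letting the master allocate a port from the configured range. The `status.loadBalancer.ingress` section on the slide is reported by the cluster after provisioning, so the sketch does not construct it.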

![ENJOY THE JOURNEY [Ghostbusters] ~1984 IT MAY GET A LITTLE MESSY, BUT IT'S WORTH IT](https://image.slidesharecdn.com/mesoscon15k8snmesos-150820235337-lva1-app6891/75/Crossing-the-Streams-Mesos-lt-Kubernetes-39-2048.jpg)