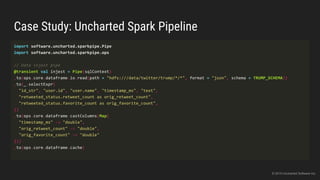

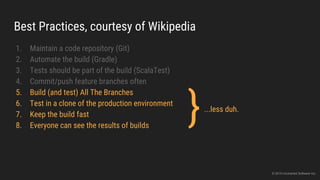

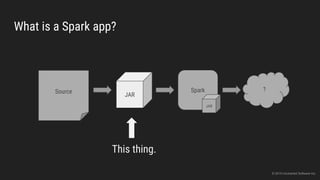

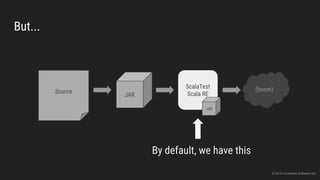

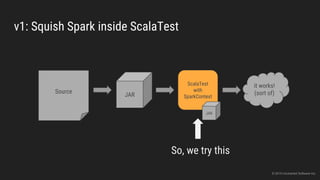

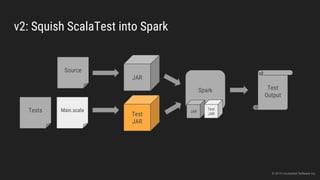

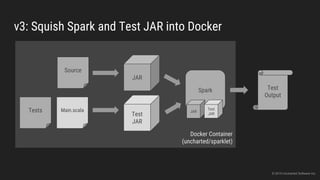

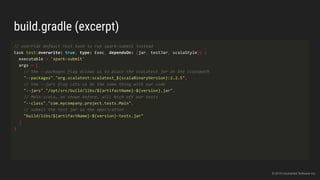

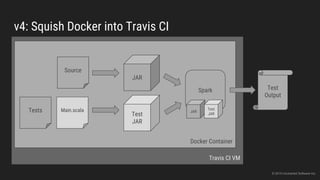

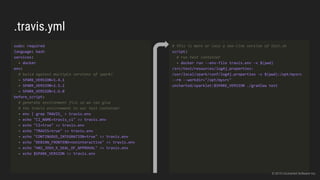

The document discusses the challenges of continuous integration for Apache Spark applications and presents a solution developed by Uncharted Software. The solution packages Spark, tests, and other tools into Docker containers so that Spark applications can be built and tested across branches in a shared environment. This approach makes it possible to test Spark code commits automatically, detect issues early, and give the team visibility into test results.
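
As a rough illustration of the per-branch container idea, the sketch below builds a `docker run` command that would execute a test suite inside a throwaway container named after the branch. It is not taken from the slides: the `spark-ci-` name prefix, the `/workspace` mount point, the image name, and the test command are all hypothetical placeholders, and the actual setup described by Uncharted Software may differ.

```python
import re
import shlex


def container_name(branch: str) -> str:
    """Derive a Docker-safe container name from a git branch name."""
    # Docker names allow letters, digits, '_', '.', and '-'; replace everything else.
    safe = re.sub(r"[^a-zA-Z0-9_.-]", "-", branch).strip("-.") or "branch"
    return f"spark-ci-{safe}"  # hypothetical naming scheme


def docker_test_command(
    branch: str, workspace: str, image: str, test_cmd: list[str]
) -> list[str]:
    """Build a `docker run` argv that runs the tests in a throwaway container."""
    return [
        "docker", "run",
        "--rm",                            # remove the container when the tests finish
        "--name", container_name(branch),  # one container name per branch
        "-v", f"{workspace}:/workspace",   # mount the checked-out branch (assumed path)
        "-w", "/workspace",                # run from the mounted source tree
        image,
        *test_cmd,
    ]


if __name__ == "__main__":
    cmd = docker_test_command(
        branch="feature/tiling-fix",
        workspace="/ci/checkouts/feature-tiling-fix",
        image="example/spark-test:latest",  # hypothetical image with Spark and test tools
        test_cmd=["./gradlew", "test"],     # hypothetical test entry point
    )
    print(shlex.join(cmd))
```

Naming each container after its branch is one way to let several branches share a build host without colliding; a CI job would pass the resulting argument list to something like `subprocess.run` and report the exit code.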