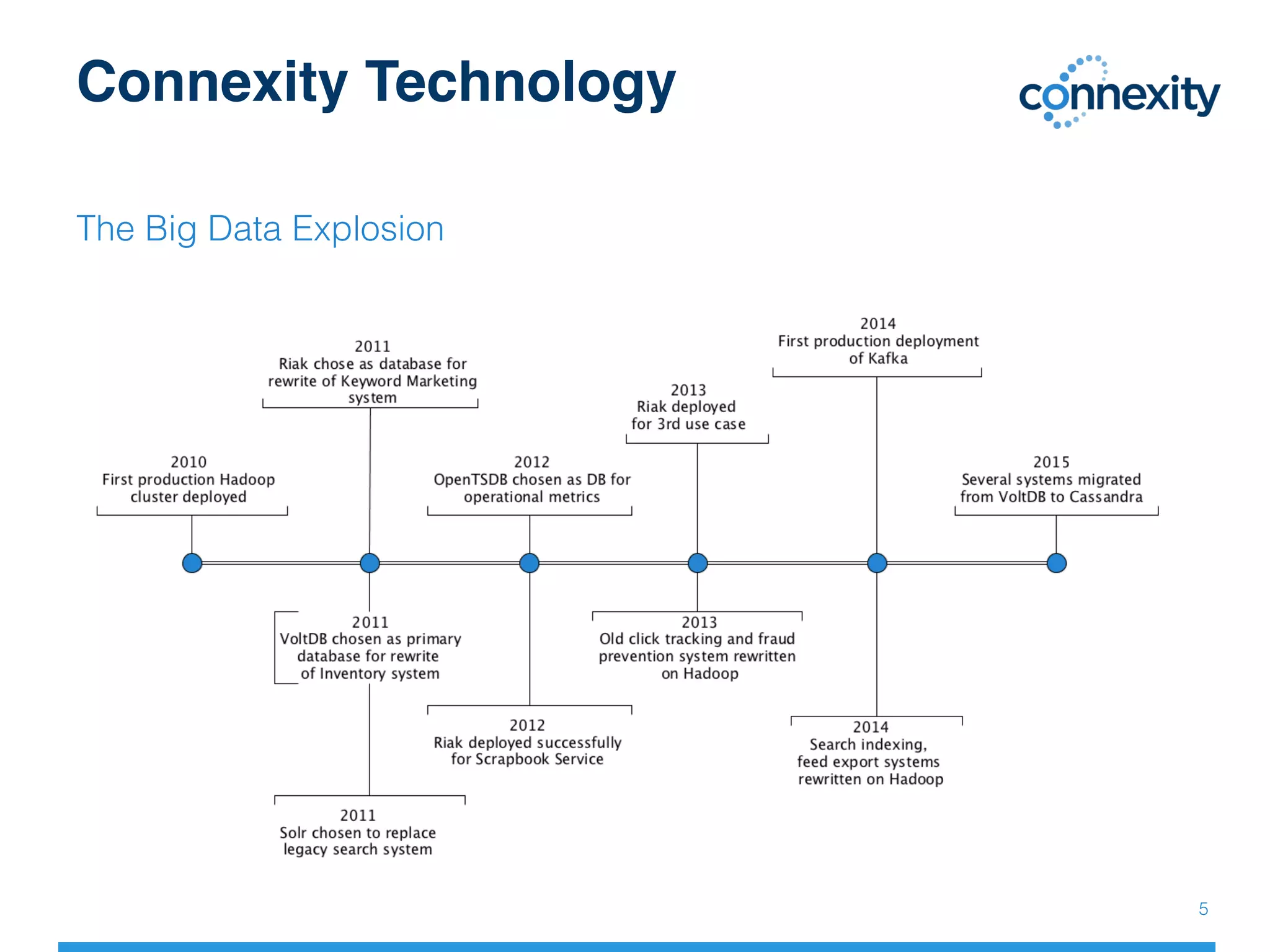

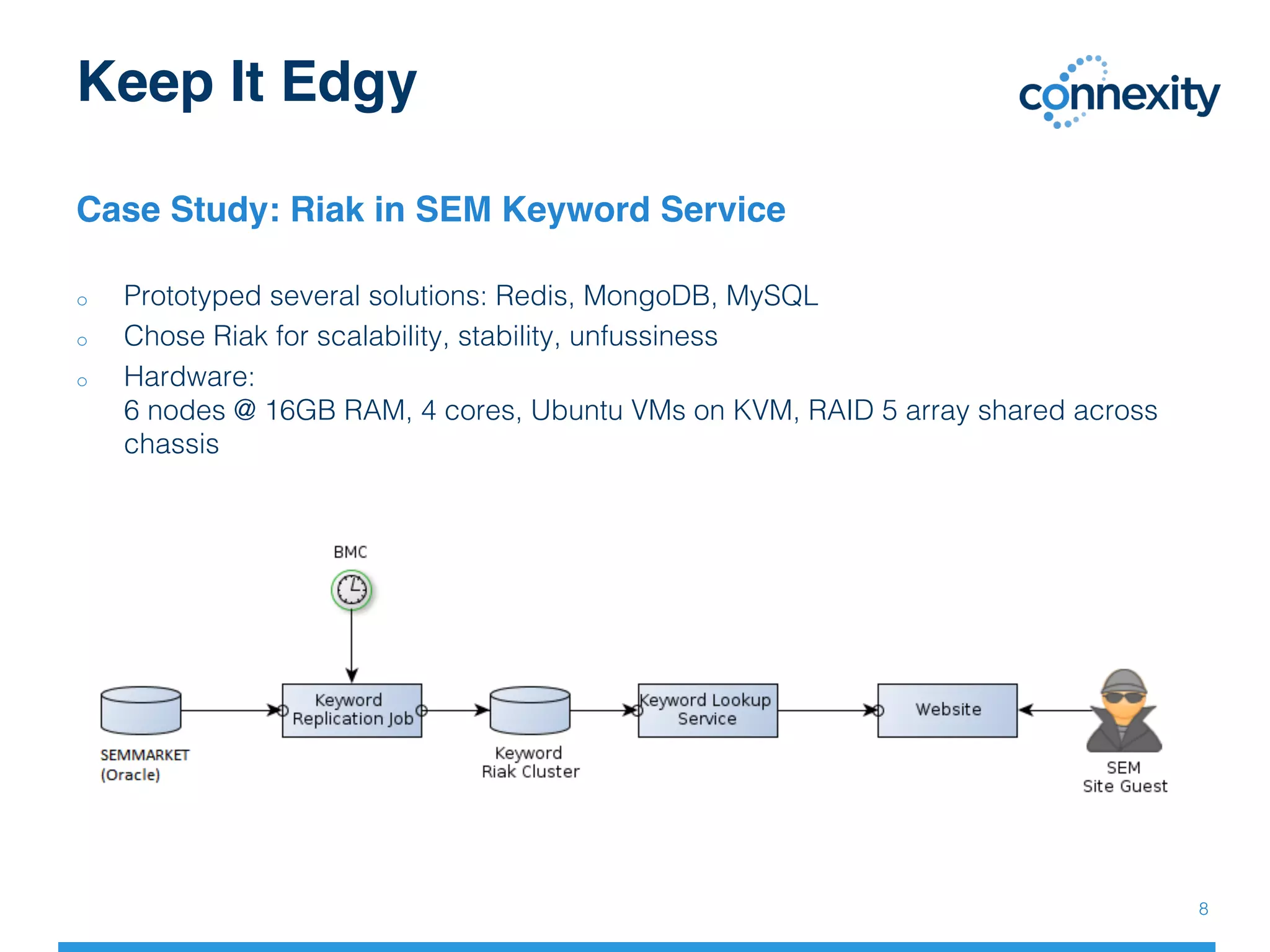

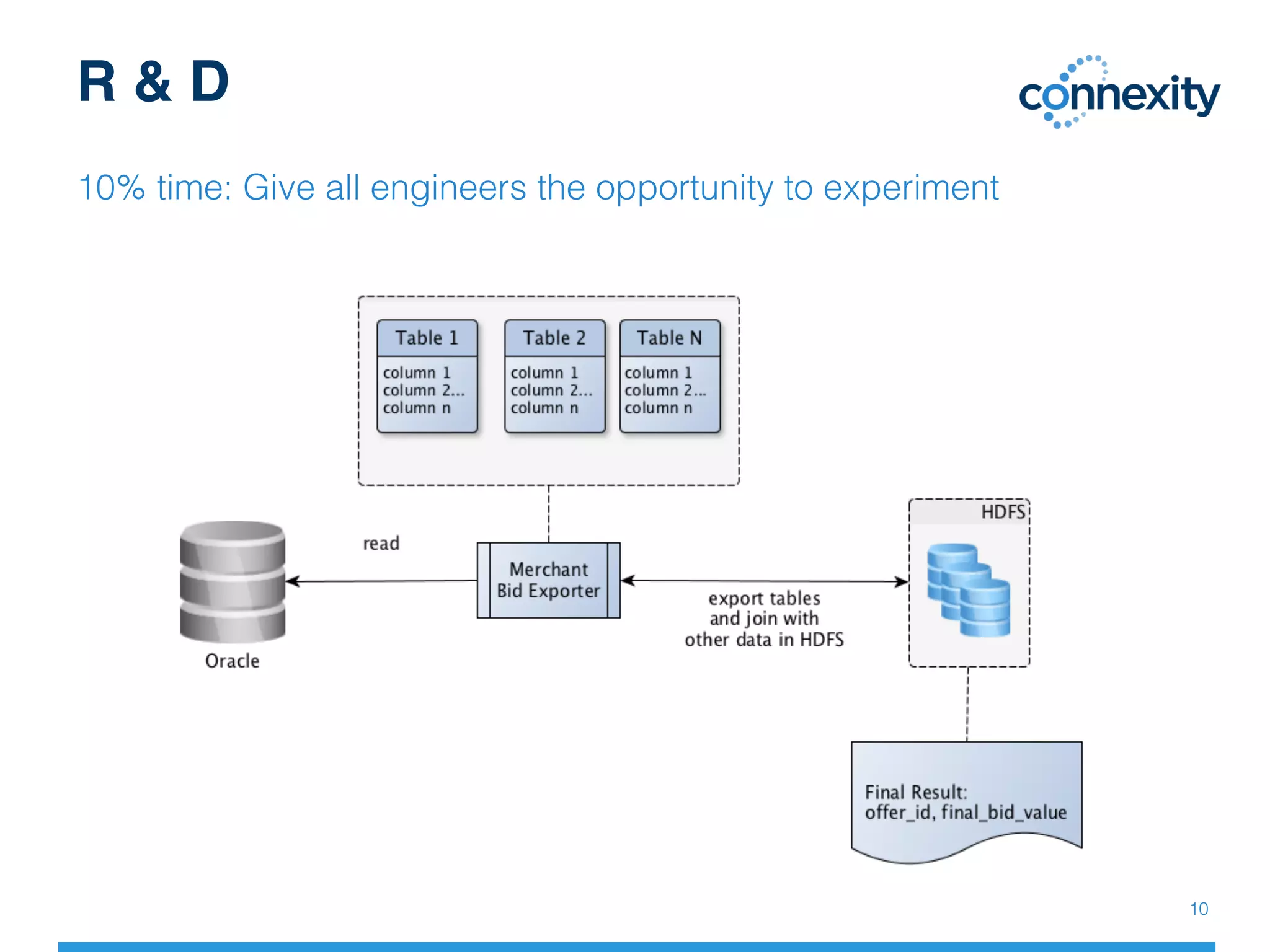

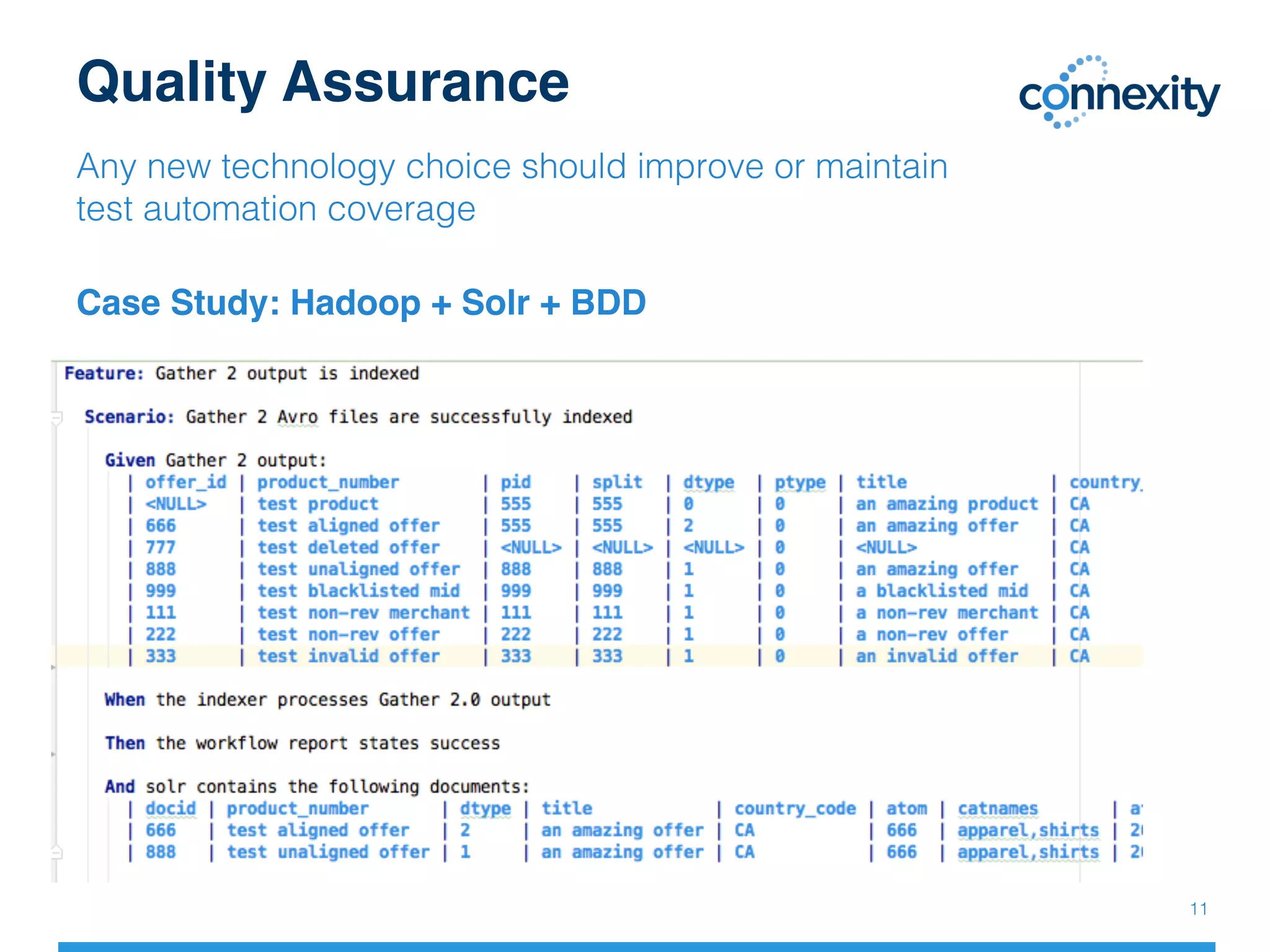

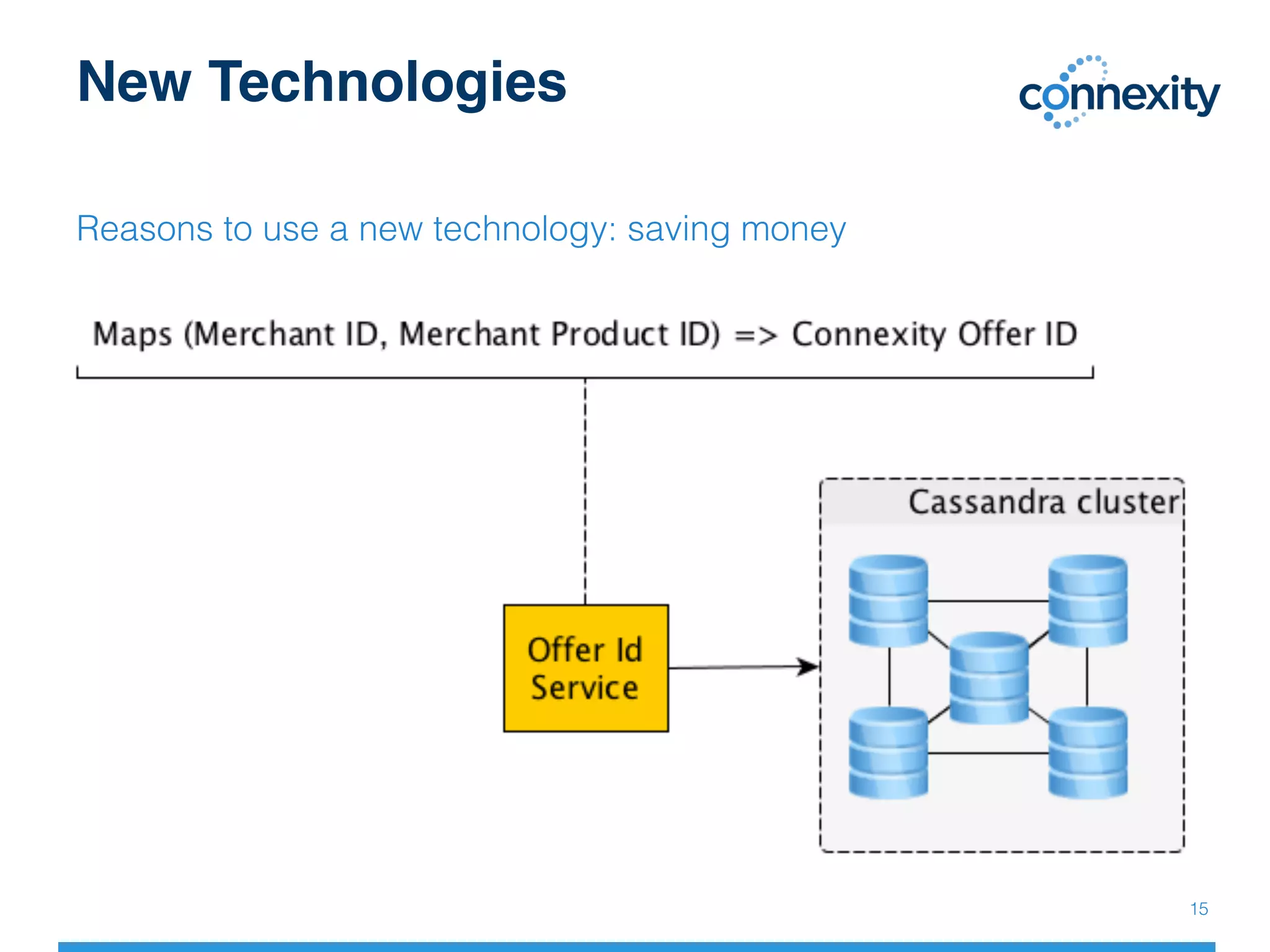

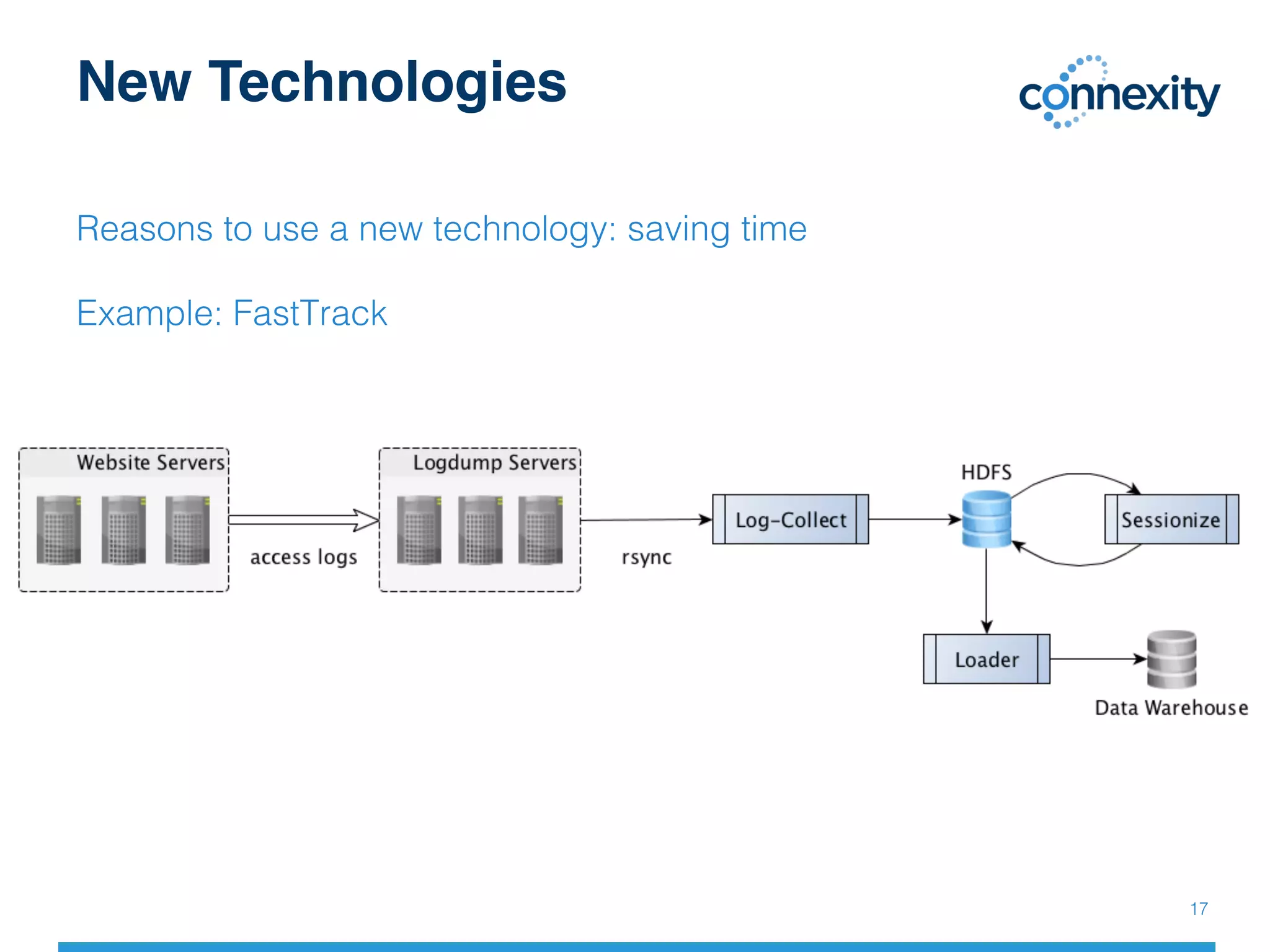

This document describes Connexity's journey with big data and the technologies it has used to improve its marketing platforms and consumer insights. It presents case studies, including the use of Riak for a scalable keyword service, and stresses the importance of adopting new technologies to improve efficiency and meet industry standards. It also emphasizes that engineering teams need to experiment and improve continuously to stay competitive in the evolving data landscape.