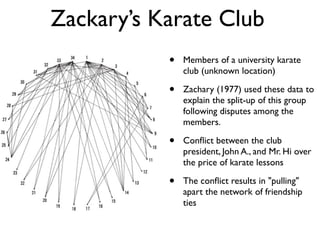

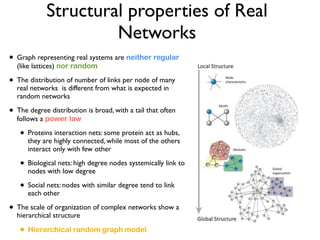

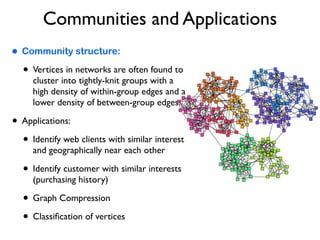

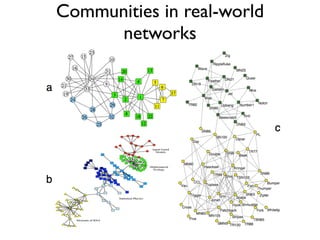

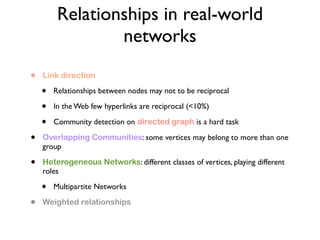

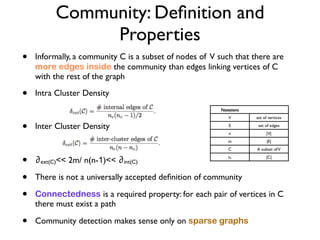

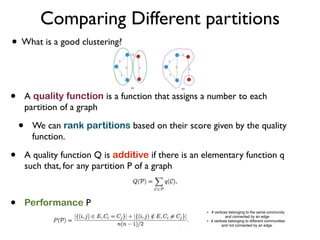

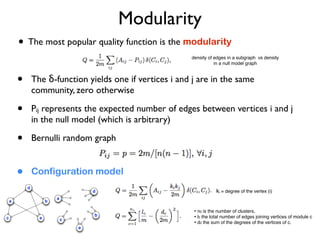

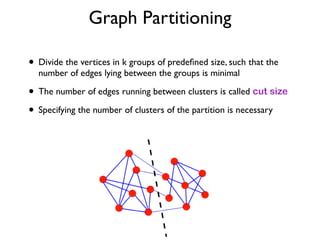

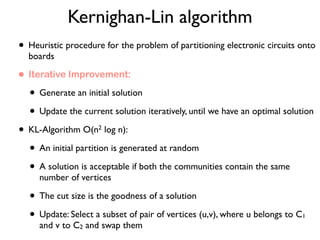

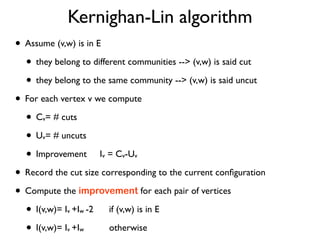

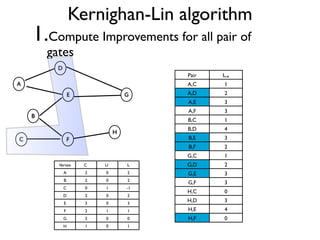

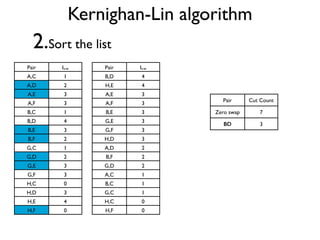

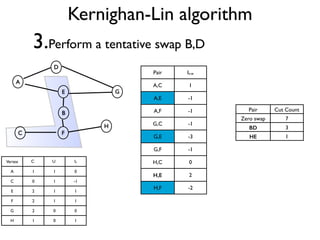

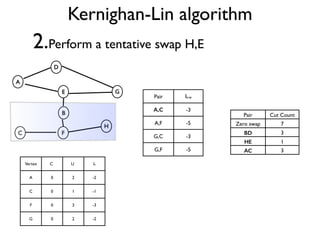

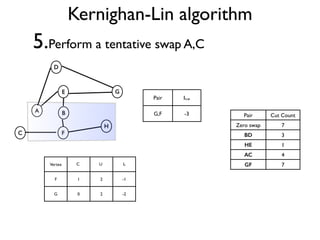

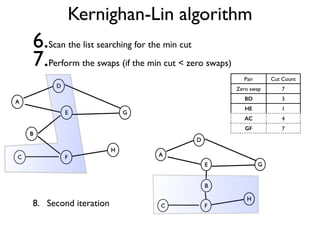

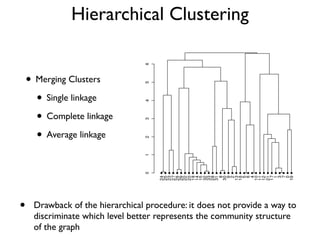

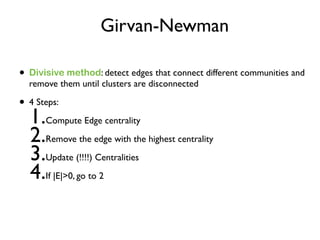

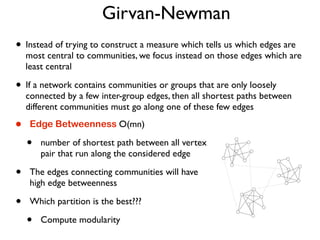

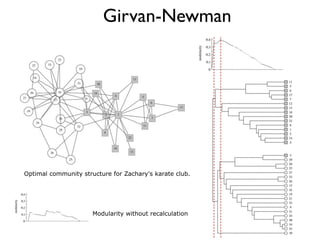

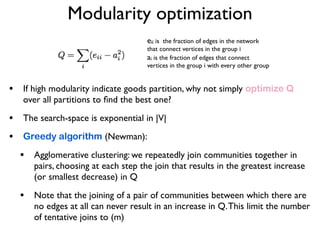

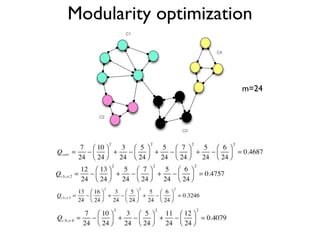

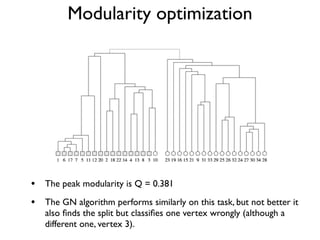

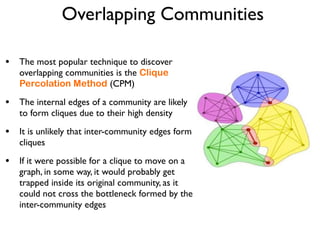

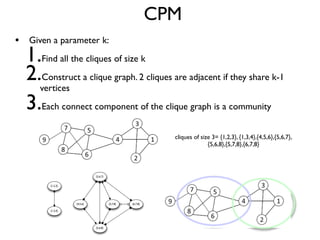

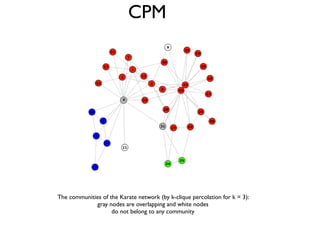

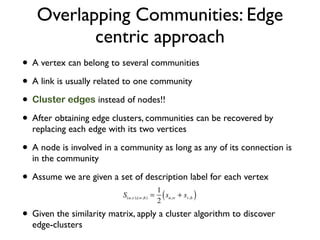

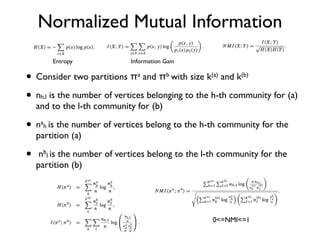

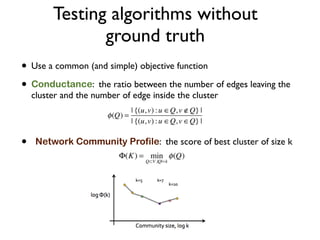

The document discusses community detection in graphs: the tendency of vertices in a network to cluster into groups with high internal edge density and comparatively few edges between groups. It covers several detection methods, including the Kernighan-Lin algorithm, hierarchical clustering, and the Girvan-Newman method, and addresses complications such as overlapping communities and the evaluation of detection algorithms. It also emphasizes modularity as a measure for assessing community structure and presents examples illustrating these concepts.
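
As a rough illustration of the modularity measure mentioned above, the sketch below computes the standard Newman modularity $Q = \sum_c \left[ L_c / m - (d_c / 2m)^2 \right]$ for an undirected, unweighted graph given as an edge list, where $L_c$ is the number of edges inside community $c$, $d_c$ is the total degree of its vertices, and $m$ is the number of edges. The function name `modularity`, the `community_of` mapping, and the two-triangle example graph are assumptions made for illustration; they are not taken from the original slides.

```python
from collections import defaultdict


def modularity(edges, community_of):
    """Modularity Q of a partition of an undirected, unweighted graph.

    edges        -- list of (u, v) pairs, each undirected edge listed once
    community_of -- dict mapping every vertex to a community label
    """
    m = len(edges)
    if m == 0:
        raise ValueError("modularity is undefined for a graph with no edges")

    internal = defaultdict(int)  # L_c: edges with both endpoints in c
    degree = defaultdict(int)    # d_c: sum of vertex degrees in c

    for u, v in edges:
        cu, cv = community_of[u], community_of[v]
        degree[cu] += 1
        degree[cv] += 1
        if cu == cv:
            internal[cu] += 1

    return sum(internal[c] / m - (degree[c] / (2 * m)) ** 2 for c in degree)


# Hypothetical example: two triangles joined by a single bridge edge (2, 3).
EDGES = [(0, 1), (1, 2), (0, 2), (3, 4), (4, 5), (3, 5), (2, 3)]
TWO_GROUPS = {0: "a", 1: "a", 2: "a", 3: "b", 4: "b", 5: "b"}
ONE_GROUP = {v: "all" for v in range(6)}

q_split = modularity(EDGES, TWO_GROUPS)   # 5/14, about 0.357
q_single = modularity(EDGES, ONE_GROUP)   # 0.0
```

Splitting the graph along the bridge yields a positive score, while placing every vertex in one community scores zero, which matches the intuition that modularity rewards groups with more internal edges than expected by chance.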