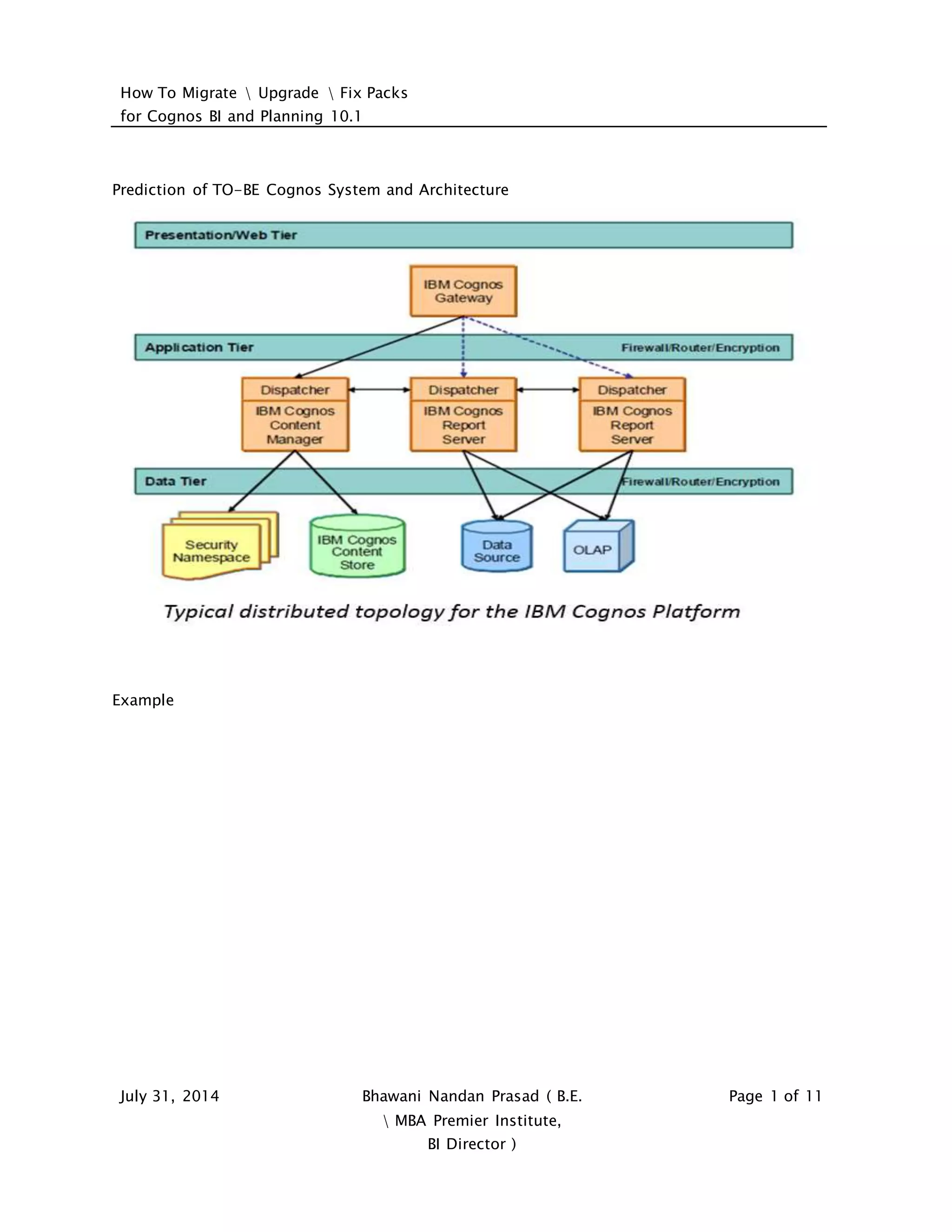

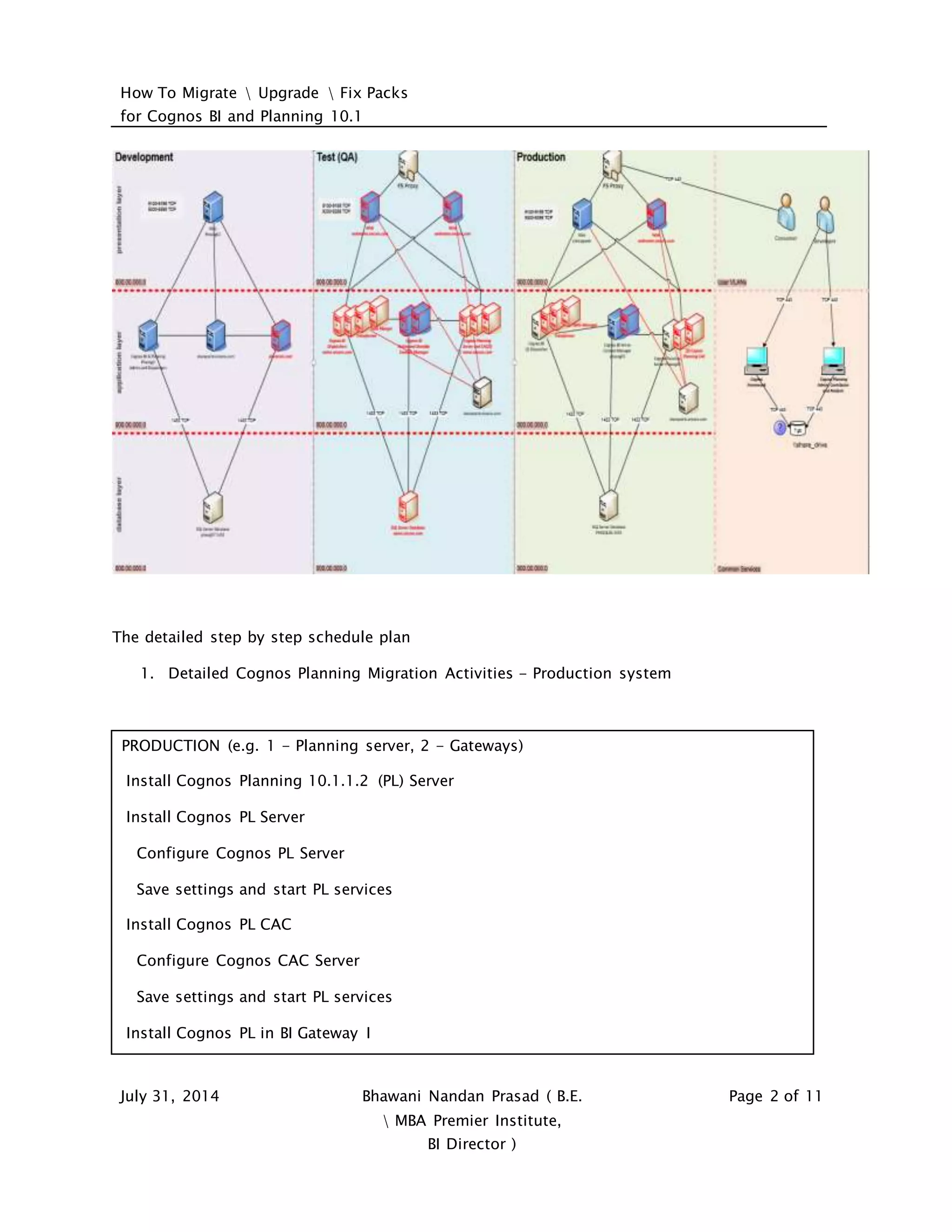

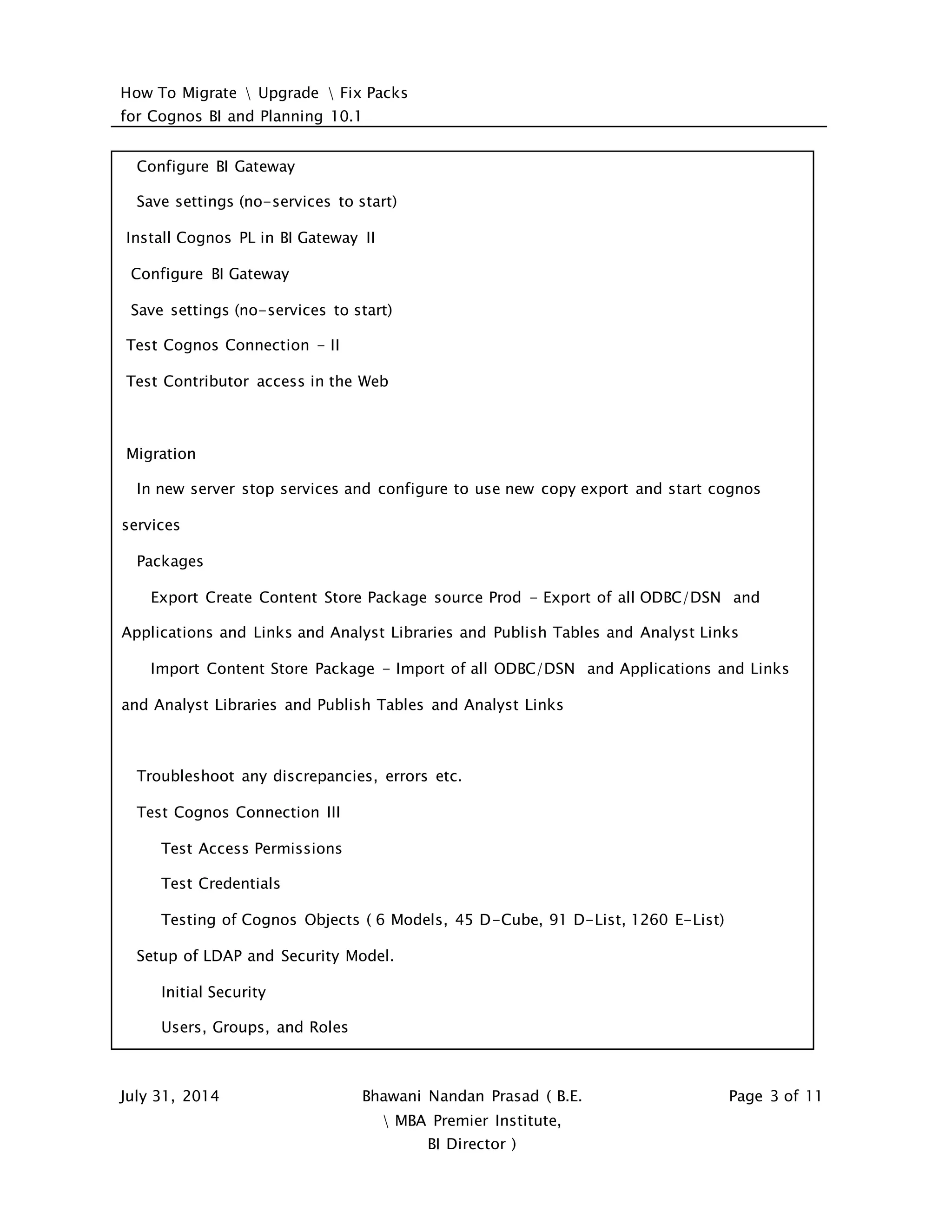

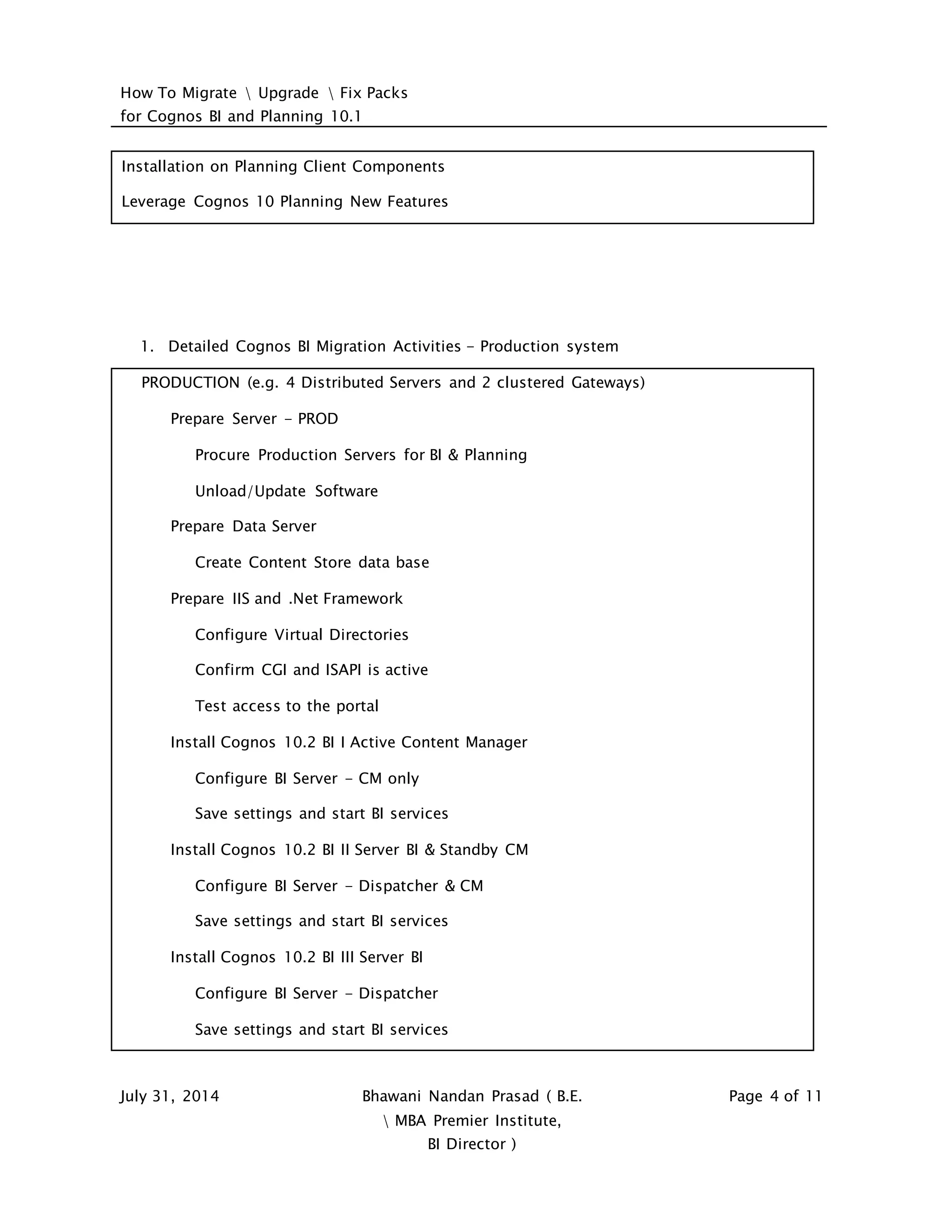

This document provides guidance on migrating, upgrading, and installing fix packs for Cognos BI and Planning 10.1. It includes a detailed schedule and steps for migrating production and test systems. Key activities include installing server components, configuring gateways, exporting and importing content, testing, setting up security, and taking advantage of new features. It also provides guidelines for evaluating current systems, creating a migration plan, preparing test environments, determining the installation order, and following report development best practices.