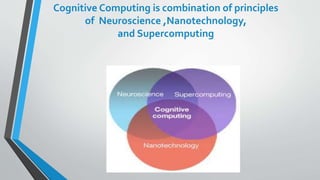

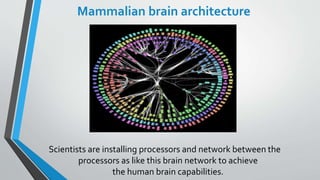

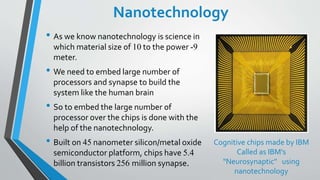

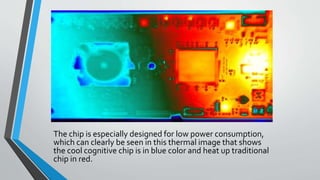

The document discusses cognitive computing, which mimics human brain functions through a combination of neuroscience, nanotechnology, and supercomputing. It highlights the brain-inspired architecture of cognitive devices, including IBM's neurosynaptic chips, and their capabilities in parallel computing, data mining, machine learning, and natural language processing. The conclusion emphasizes the importance of cognitive computing for future decision-making and data management across various professional fields.