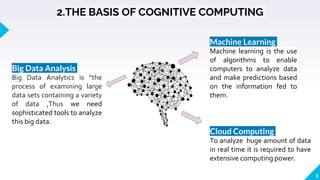

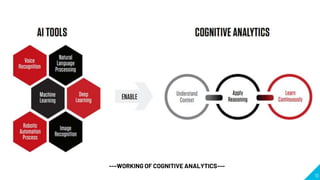

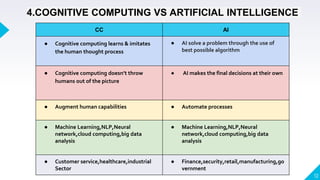

The document discusses cognitive computing, which emulates human thought processes through self-learning systems and machine learning algorithms, enabling automation in various sectors. It explores the advantages and disadvantages of cognitive computing, its applications in fields such as healthcare and education, and contrasts it with artificial intelligence. The conclusion emphasizes the ongoing evolution of cognitive technology and its potential to enhance human efficiency and decision-making.

11. REFERENCES

[1] School of Computer Science and Technology, Huazhong University of Science and Technology, 430074, China; Wuhan National Laboratory for Optoelectronics, Wuhan 430074, China. IEEE Access.

[2] Introduction to Cognitive Computing - DZone Big Data. dzone.com. (2021). Retrieved 28 February 2021, from https://dzone.com/articles/introduction-to-cognitive-computing. Rohit Akiwatkar, Technology Consultant, Cloud Services, Simform.

[3] Definition: cognitive computing. By Bridget Botelho, Editorial Director, News. Retrieved 28 February 2021, from https://enterprise.techtarget.com/definition/cognitive-computing.

[4] How Cognitive Computing Affects our Lives. https://www.pentalog.com/blog/cognitive-computing-tech-innovation#. (2021). Retrieved 28 February 2021. Author: Andrei Sajin, Technical Expert - Software Architect.

[5] Cognitive-Computing-Whitepaper.pdf. Marlabs Inc. (Global Headquarters), One Corporate Place South, 3rd Floor, Piscataway, NJ 08854-6116. Senthil Nathan R, Practice Head, BI, Data Science, & Big Data at Marlabs.

[6] Advantages of cognitive computing, disadvantages of cognitive computing. Rfwireless-world.com. (2021). Retrieved 28 February 2021, from https://www.rfwireless-world.com/Terminology/Advantages-and-Disadvantages-of-cognitive-computing.html.

[7] La Salle University, La Salle University Digital Commons, Mathematics and Computer Science Capstones, Department of Spring, Stephen Knowles, knowless1@student.lasalle.ed, Cognitive Computing Creates Value In Healthcare and Shows Poten.pdf

[8] Role of Cognitive Computing in Education. (2021). Retrieved 28 February 2021, from https://www.thetechnologyheadlines.com/most-popular/Cognitive-Computing-in-Education/

[9] Aftabhussain.com. 2021. Cognitive Computing and the Education Sector. [online] Available at: <http://www.aftabhussain.com/cognitive_computing> [Accessed 28 February 2021]. Aftab Hussain, Department of Computer Science, University of Houston.