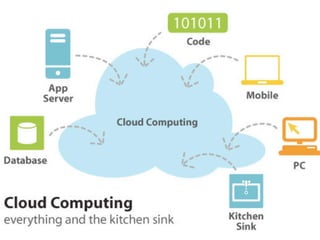

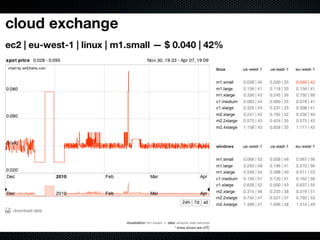

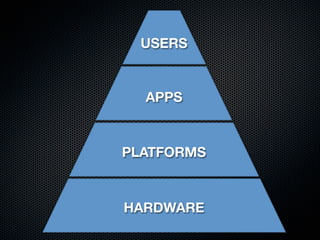

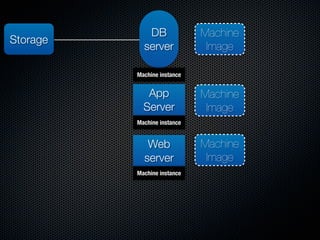

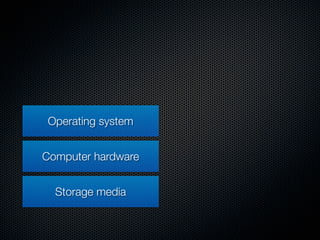

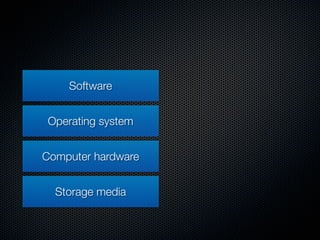

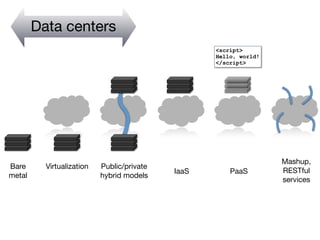

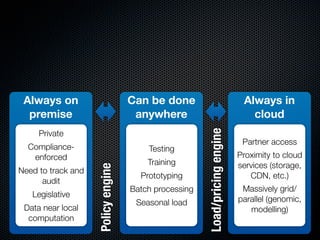

The document gives an overview of cloud computing in six parts:

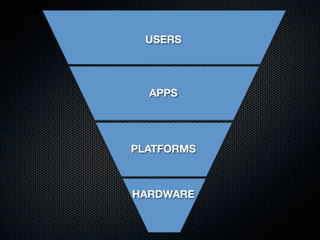

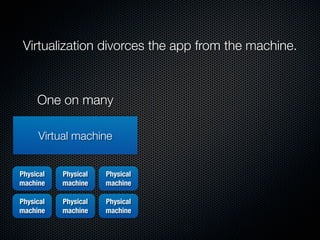

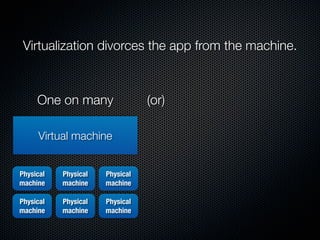

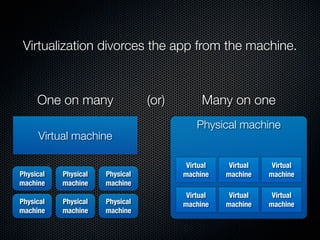

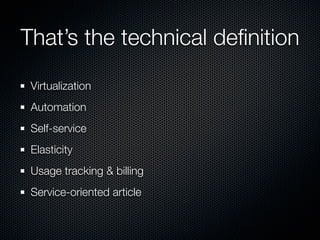

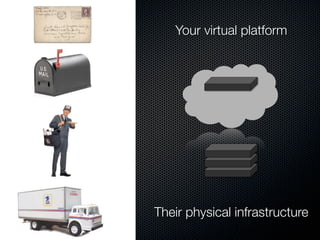

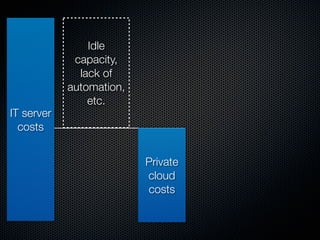

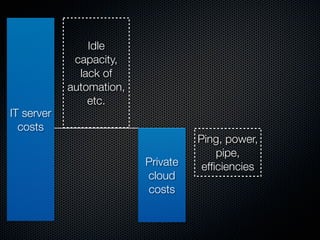

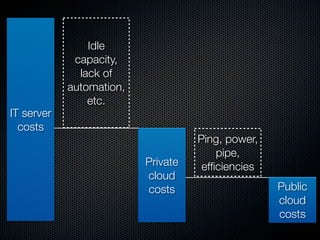

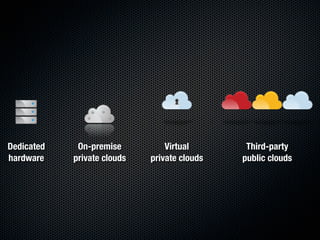

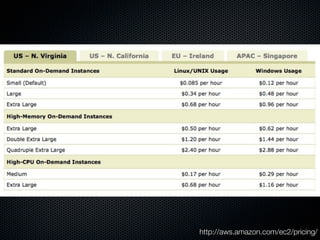

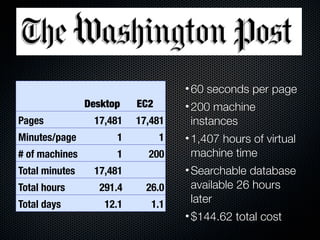

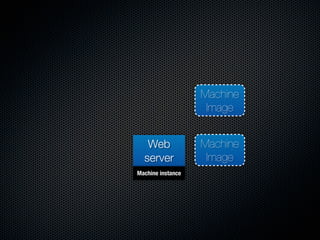

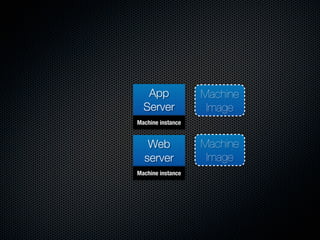

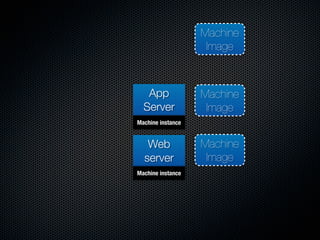

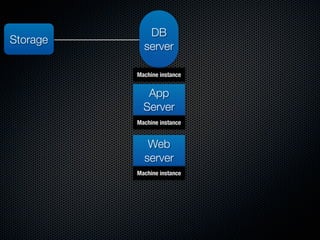

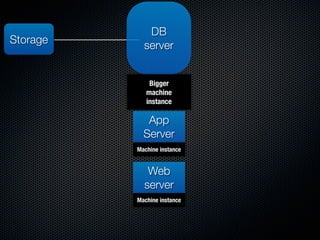

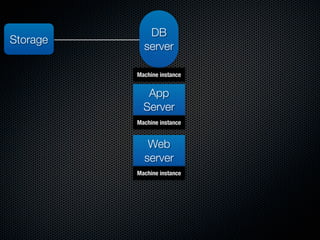

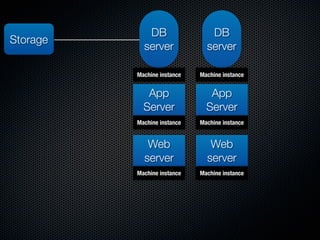

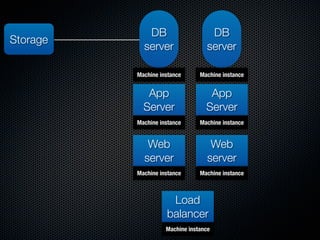

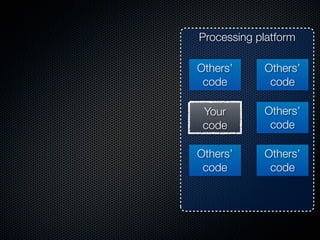

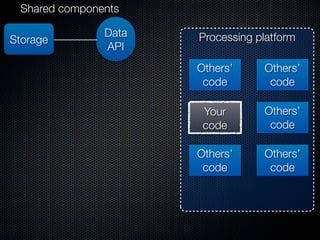

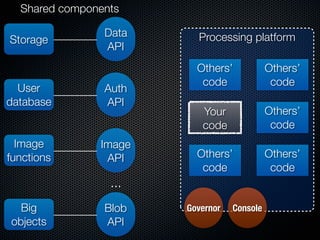

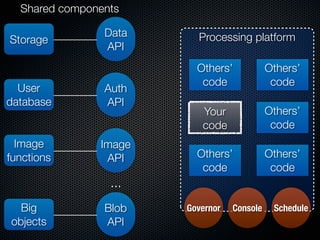

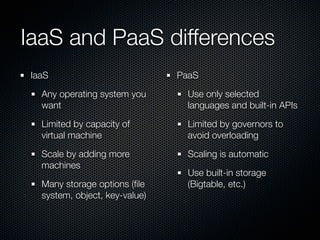

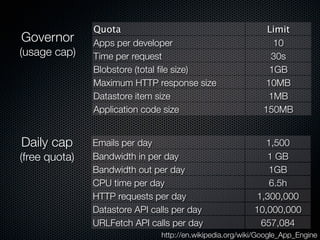

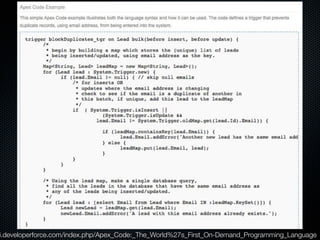

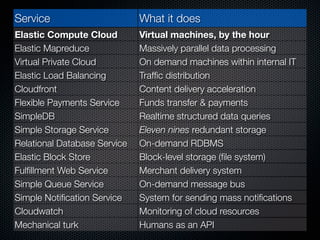

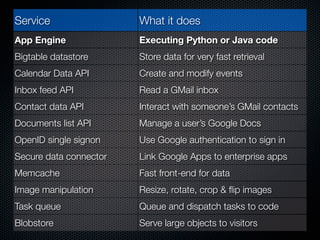

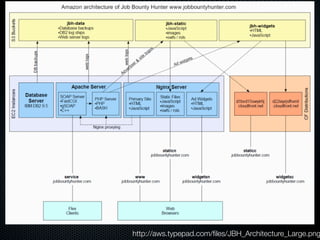

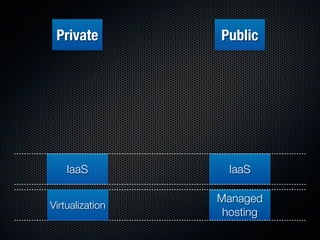

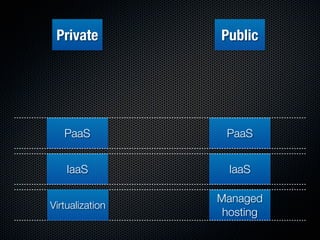

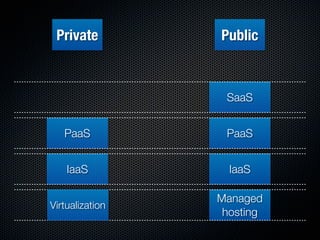

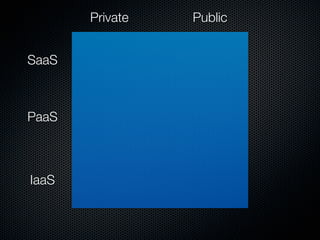

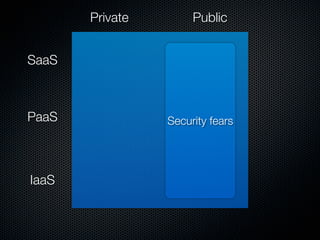

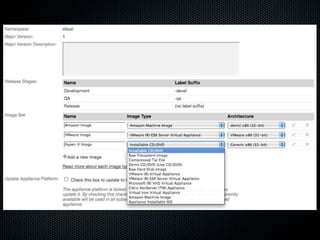

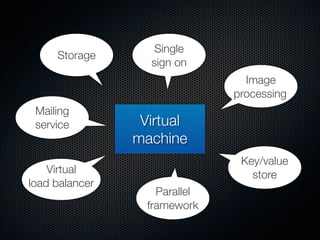

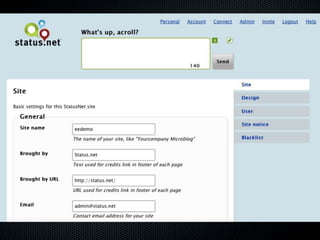

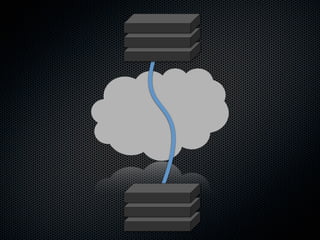

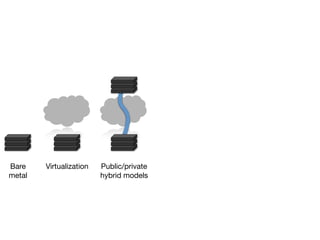

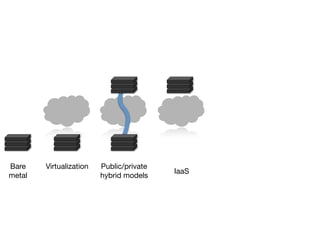

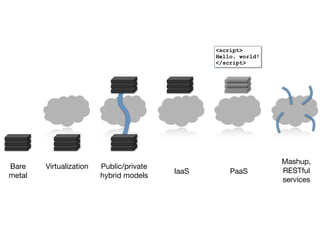

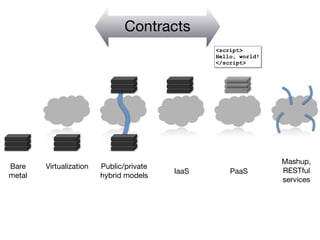

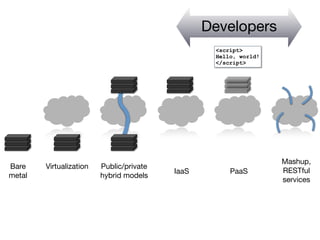

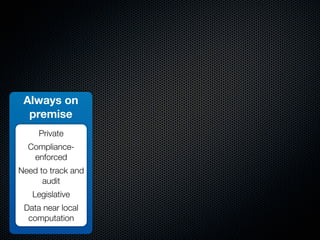

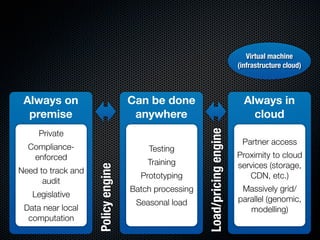

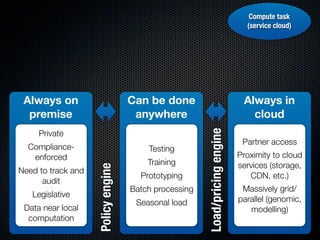

Part one discusses how cloud computing has disrupted IT by democratizing access. Part two covers the history of virtualization. Part three explains how cloud stacks separate concerns among physical infrastructure, virtual platforms, and applications. Part four frames the cloud as an on-demand business model, in contrast to traditional IT. Part five outlines the major types of cloud services, including IaaS and PaaS, and the differences between them. Finally, part six notes that in practice most cloud offerings blend aspects of infrastructure, platform, and software as a service.