The document covers the following aspects of cache memory:

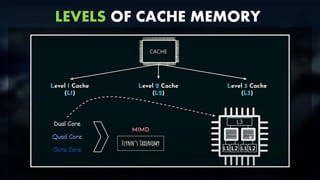

- Introduction to cache memory including its purpose and levels.

- Cache structure and organization including cache row entries, cache blocks, and mapping techniques.

- Performance of cache memory, including factors such as cycle count and hit ratio.

- Cache coherence in multiprocessor systems and coherence protocols.

- Synchronization mechanisms used in multiprocessor systems for cache coherence.

- Paging techniques, including virtual-to-physical address translation using page tables and TLBs.

- Replacement algorithms, which determine which cache block to evict when the cache is full.
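
As a rough illustration of how three of the topics above fit together (mapping, hit ratio, and replacement), here is a minimal Python sketch of a set-associative cache with least-recently-used (LRU) replacement. It is not taken from the document: the class name `SetAssociativeCache`, its parameters, and the address trace are hypothetical choices made for illustration, and LRU is just one of several possible replacement policies.

```python
from collections import OrderedDict


class SetAssociativeCache:
    """Toy set-associative cache with LRU replacement (illustrative only)."""

    def __init__(self, num_sets=4, ways=2, block_size=16):
        self.num_sets = num_sets      # number of sets in the cache
        self.ways = ways              # blocks per set (associativity)
        self.block_size = block_size  # bytes per block
        # One OrderedDict per set; keys are tags, ordered from least
        # recently used (front) to most recently used (back).
        self.sets = [OrderedDict() for _ in range(num_sets)]
        self.hits = 0
        self.misses = 0

    def access(self, address):
        """Look up a byte address; return True on a hit, False on a miss."""
        block_number = address // self.block_size
        set_index = block_number % self.num_sets   # mapping: which set
        tag = block_number // self.num_sets        # identifies the block within the set
        lines = self.sets[set_index]

        if tag in lines:
            lines.move_to_end(tag)                 # mark as most recently used
            self.hits += 1
            return True

        if len(lines) >= self.ways:
            lines.popitem(last=False)              # set is full: evict the LRU block
        lines[tag] = True
        self.misses += 1
        return False

    def hit_ratio(self):
        """Fraction of accesses that hit; 0.0 if there were no accesses."""
        total = self.hits + self.misses
        return self.hits / total if total else 0.0


if __name__ == "__main__":
    # Hypothetical address trace, chosen so that every block maps to set 0.
    cache = SetAssociativeCache(num_sets=2, ways=2, block_size=16)
    for addr in [0, 4, 32, 0, 64, 32]:
        outcome = "hit" if cache.access(addr) else "miss"
        print(f"address {addr:3d}: {outcome}")
    print(f"hit ratio = {cache.hit_ratio():.3f}")
```

Setting `ways=1` makes the sketch behave as a direct-mapped cache, while `num_sets=1` makes it fully associative, so the same code can be used to compare how the mapping technique affects the hit ratio on a given trace.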