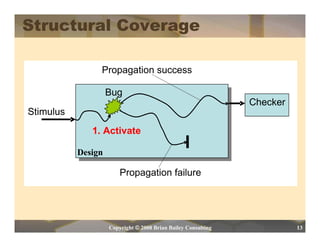

Sun Tzu's The Art of War offers guidance for verification engineers in their battle against Murphy the Design. Key lessons include knowing your enemy (understanding the design and the verification approaches best suited to it), knowing yourself (improving your processes and understanding your strengths and weaknesses), and equipping yourself with the right tools while staying flexible. Feedback based on objective metrics is also essential to success.