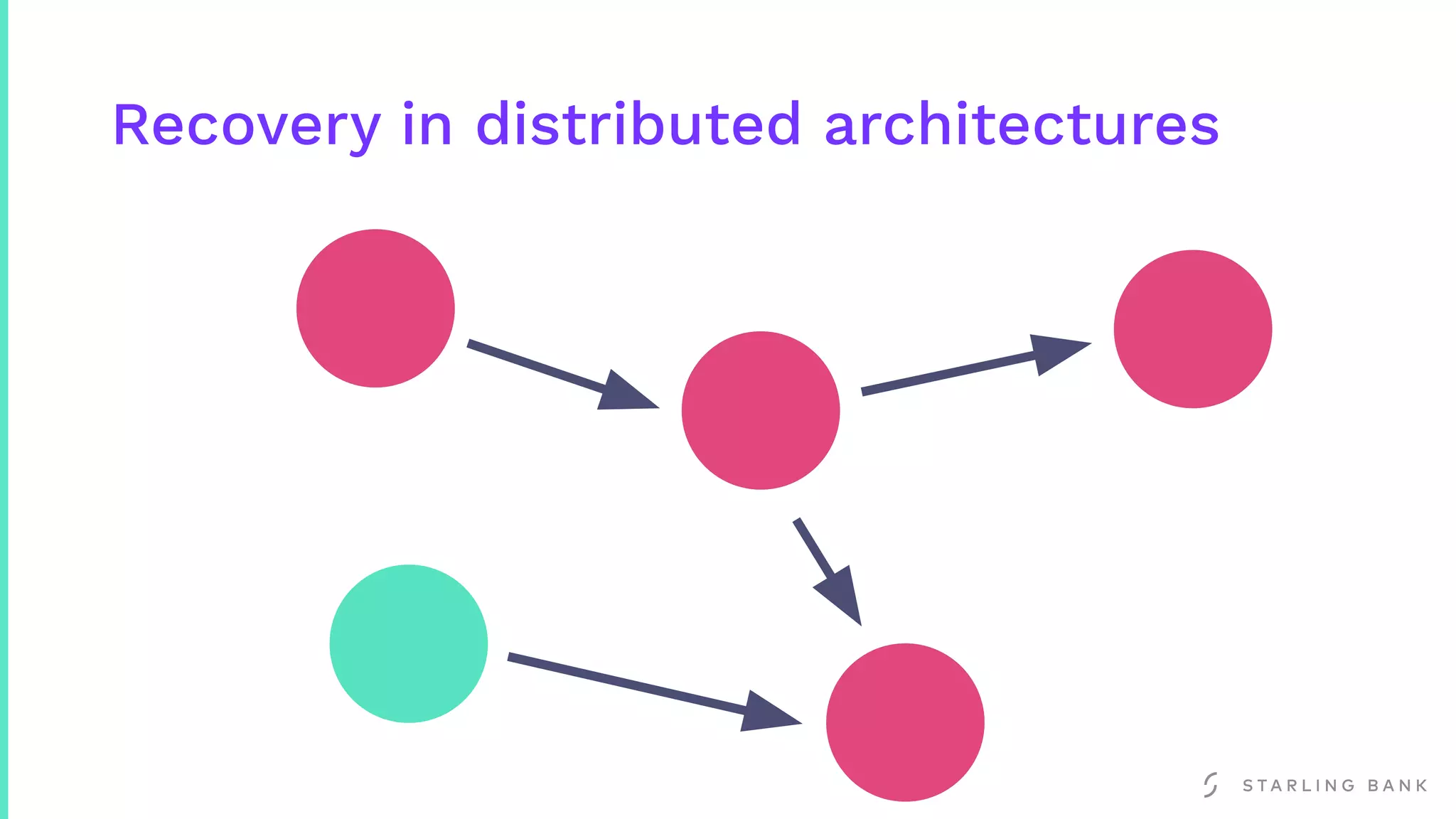

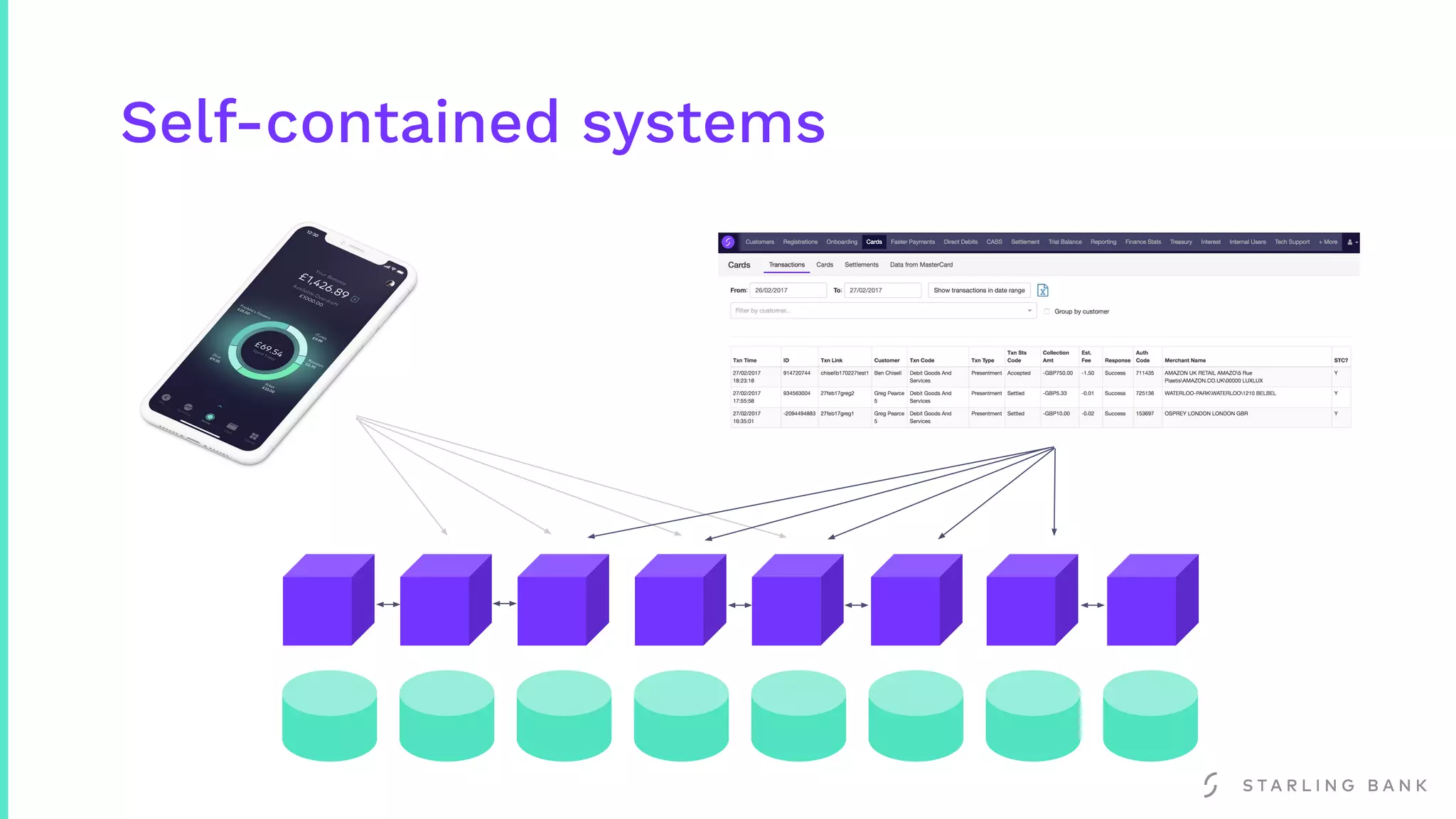

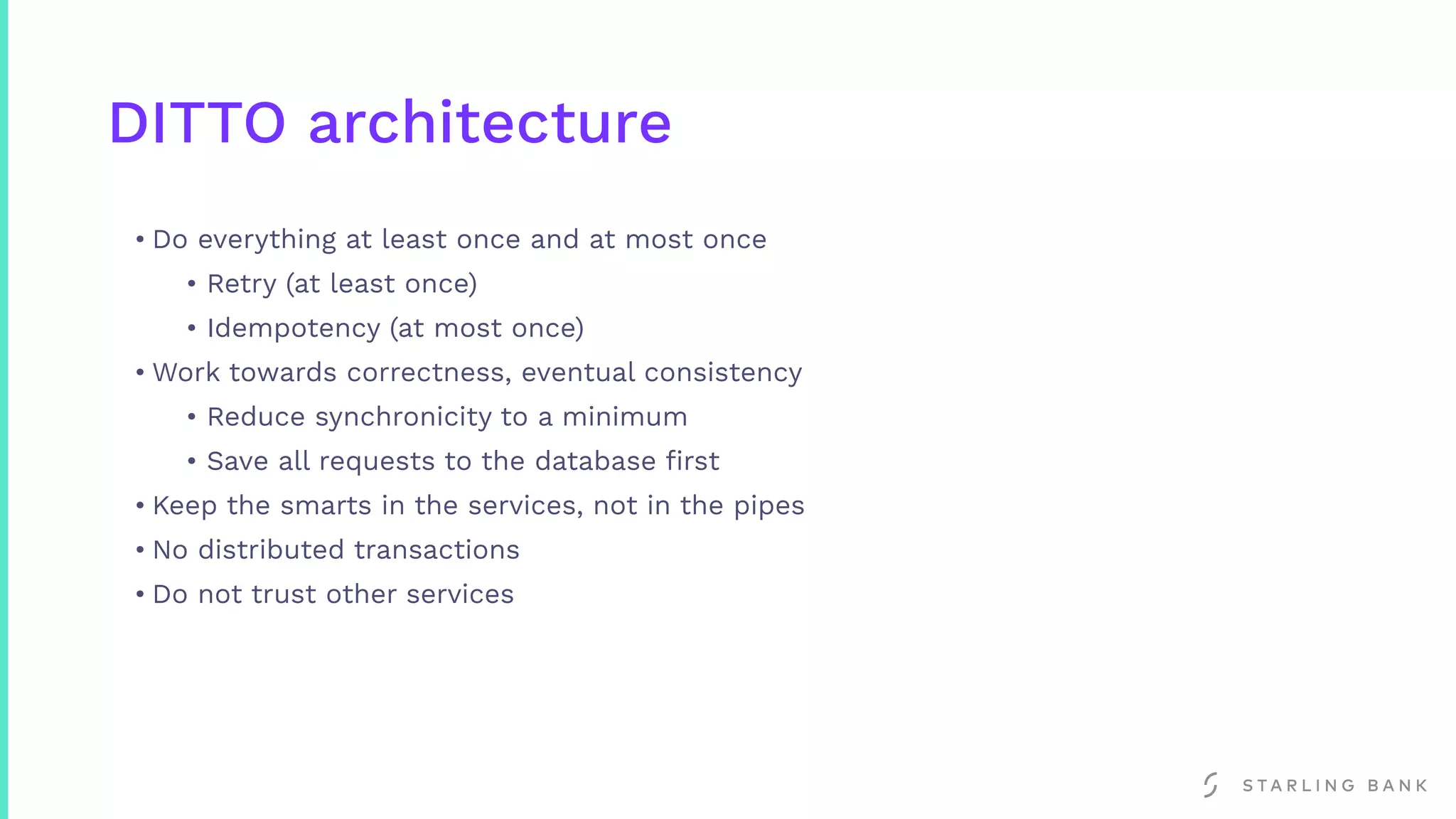

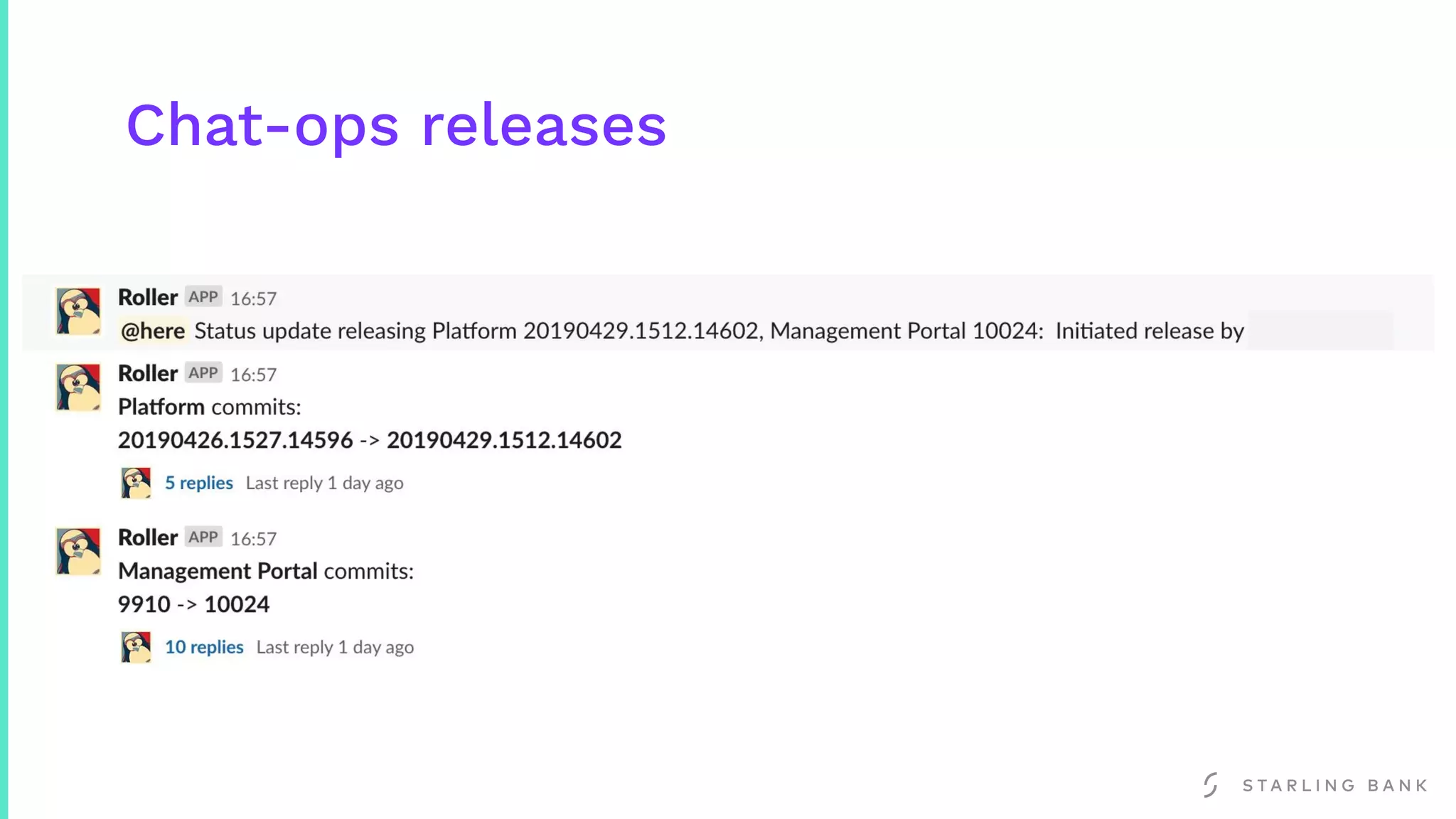

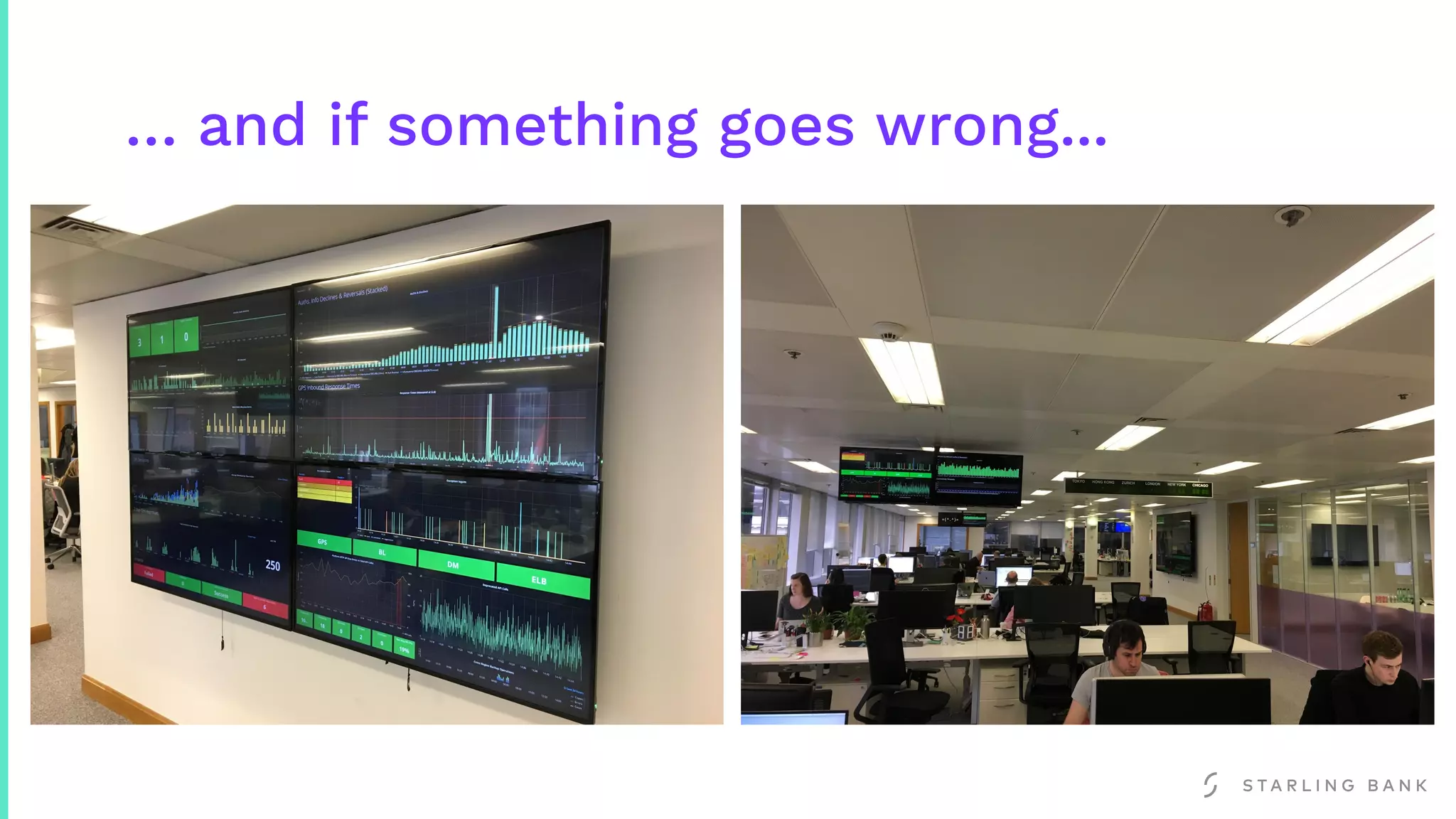

This document discusses the challenges of building reliable banking architectures in the cloud and how Starling Bank addressed them. It introduces key concepts such as distributed architectures, self-contained systems, and the DITTO architecture, which focuses on idempotency and eventual consistency. For Starling Bank, the benefits of this approach included safe instance termination, continuous delivery of backend changes up to 5 times a day using chat-ops releases, and the ability to "chaos test" to verify reliability.
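
To make the idempotency idea concrete, here is a minimal Python sketch. It is not Starling Bank's implementation; the `Ledger` class, the `credit` method, and the `deliver_with_retries` helper are hypothetical names invented for illustration, and the in-memory set stands in for whatever durable store a real system would use. The sketch shows why a command that is safe to repeat makes retries, and therefore abrupt instance termination, harmless: redelivering the same command leaves the state unchanged.

```python
import uuid
from dataclasses import dataclass, field


@dataclass
class Ledger:
    """Hypothetical in-memory ledger; a real system would need durable storage."""
    balance: int = 0
    applied: set = field(default_factory=set)  # idempotency keys already processed

    def credit(self, key: str, amount: int) -> bool:
        """Apply a credit at most once per key. Returns True if newly applied."""
        if key in self.applied:
            return False  # duplicate delivery: change nothing
        self.balance += amount
        self.applied.add(key)
        return True


def deliver_with_retries(ledger: Ledger, key: str, amount: int, attempts: int = 3) -> list:
    """Simulate at-least-once delivery by sending the same command several times."""
    return [ledger.credit(key, amount) for _ in range(attempts)]


ledger = Ledger()
key = str(uuid.uuid4())  # the caller picks one key per logical operation
results = deliver_with_retries(ledger, key, 100)
print(results, ledger.balance)
```

Only the first delivery changes the balance; the retries are recognised by their key and ignored. A sender that is unsure whether a command arrived, for example because the receiving instance was terminated mid-request, can therefore resend it until it gets an acknowledgement, and the system converges on the correct state.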