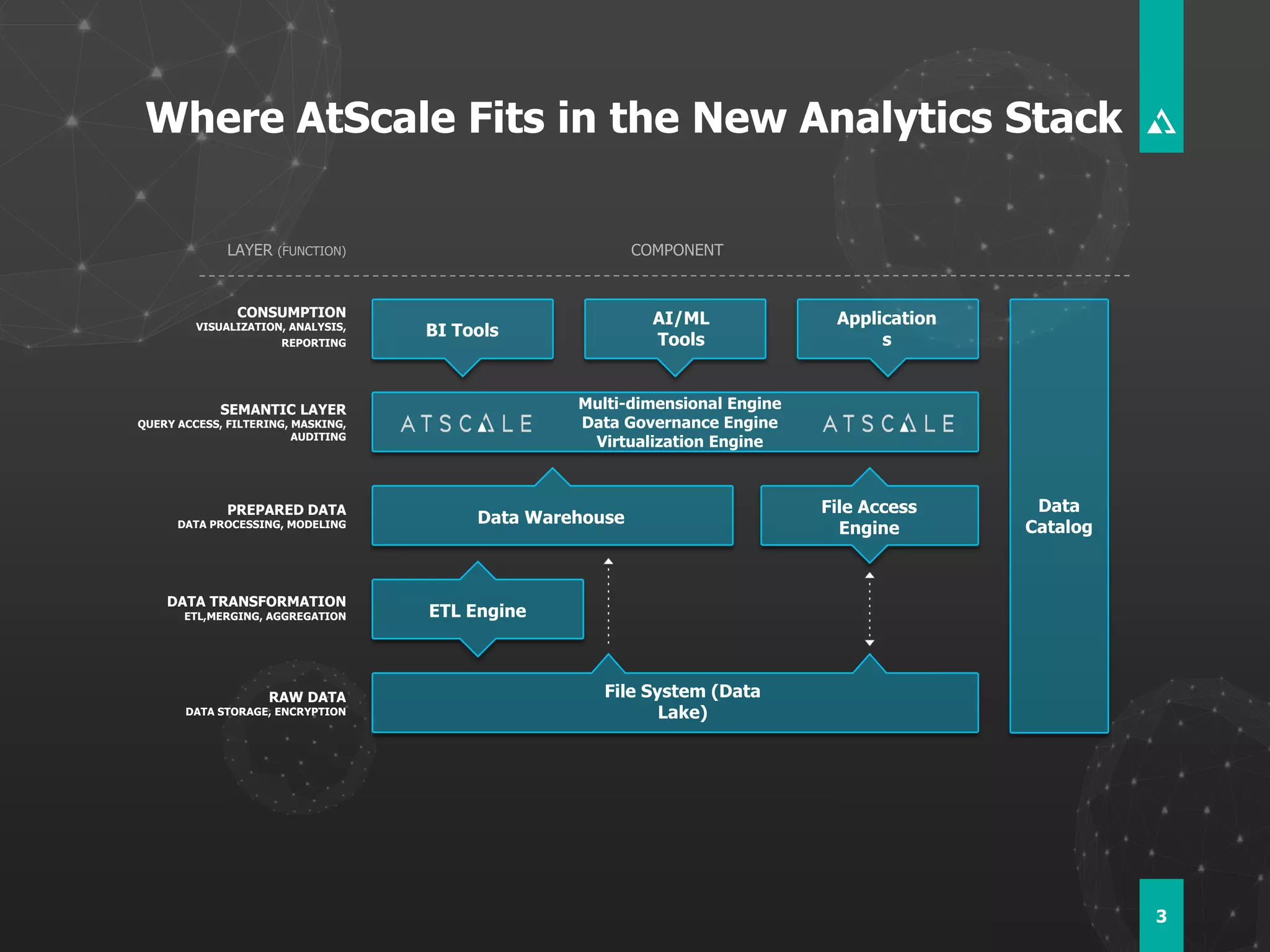

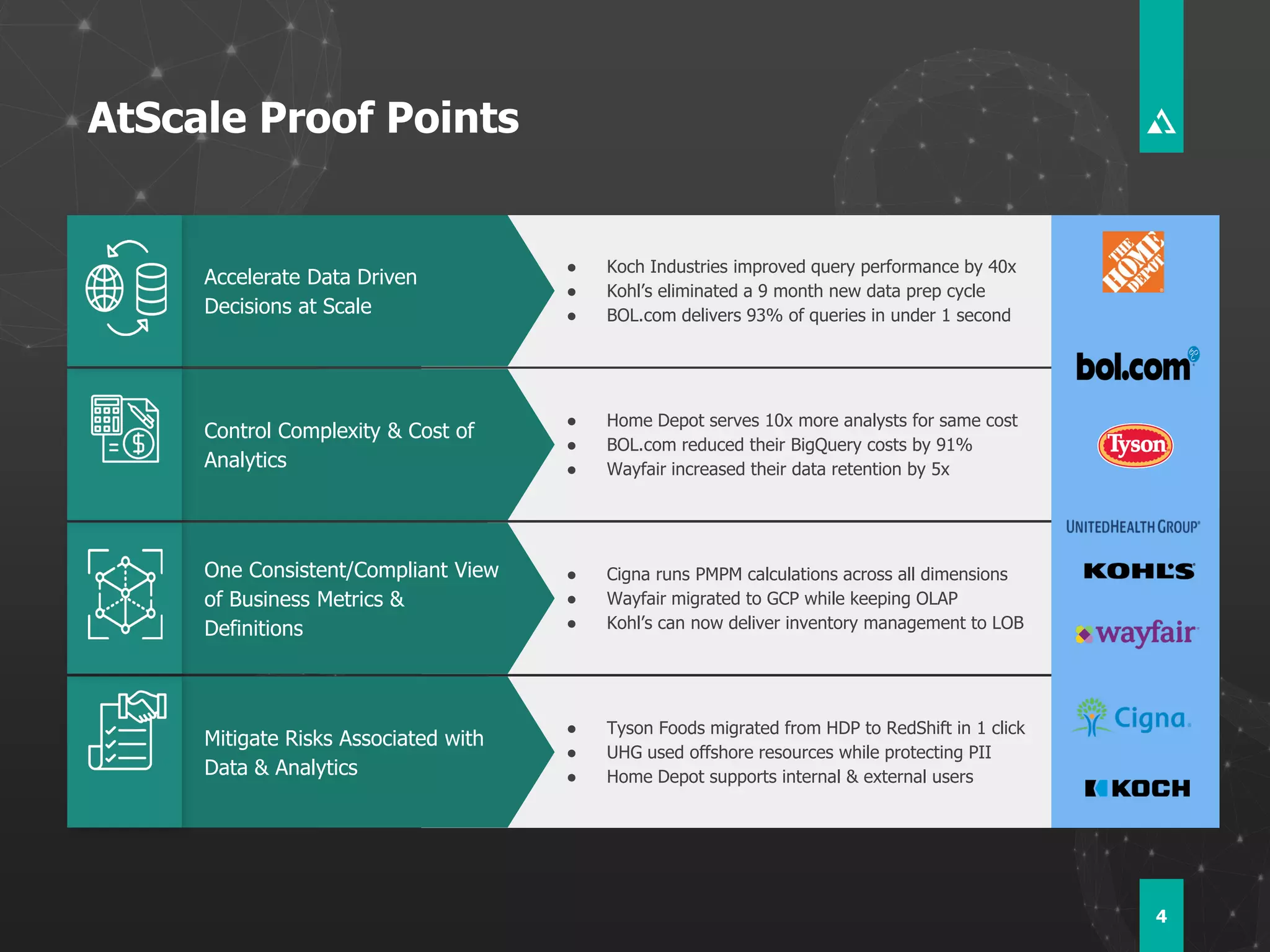

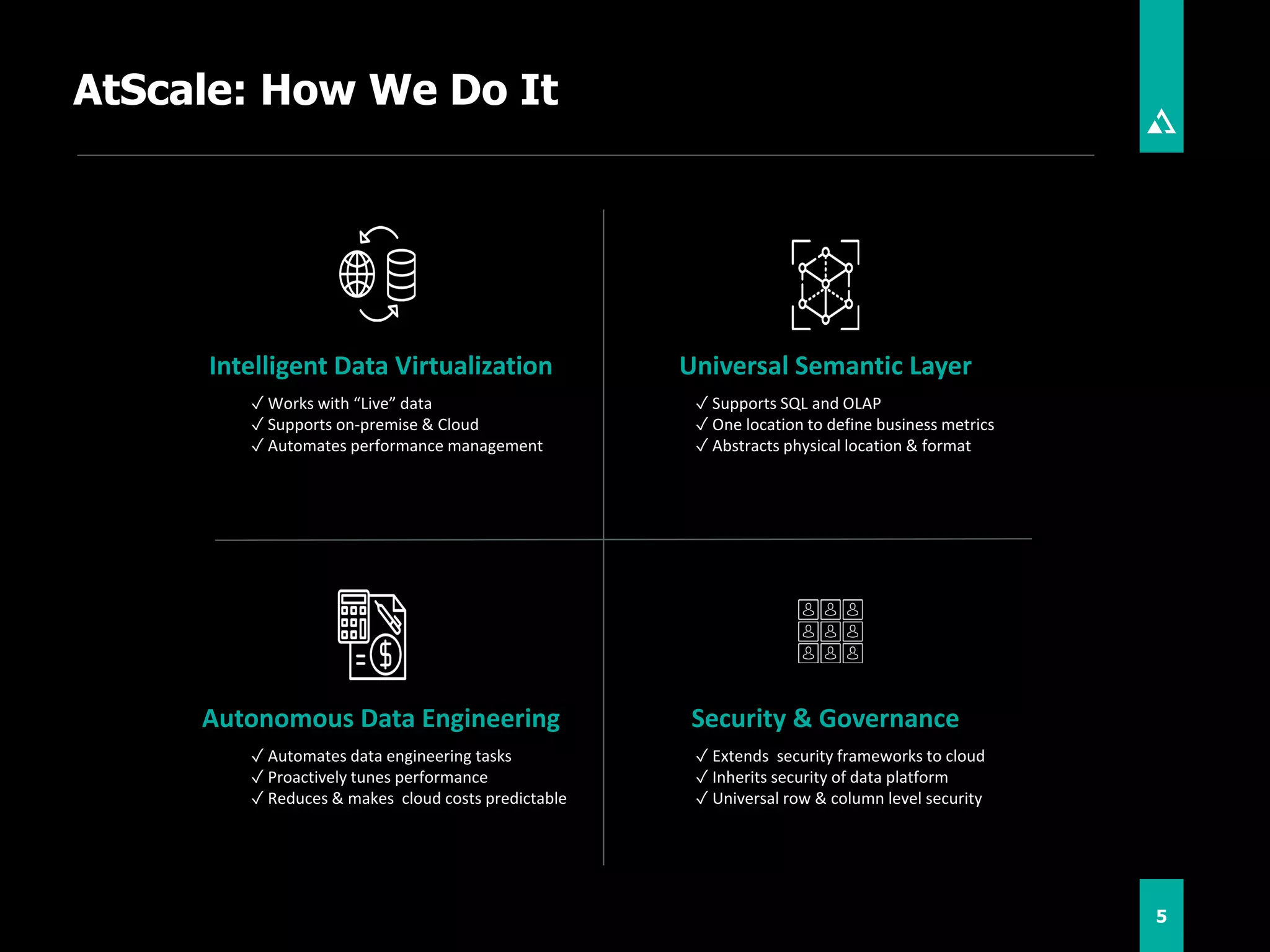

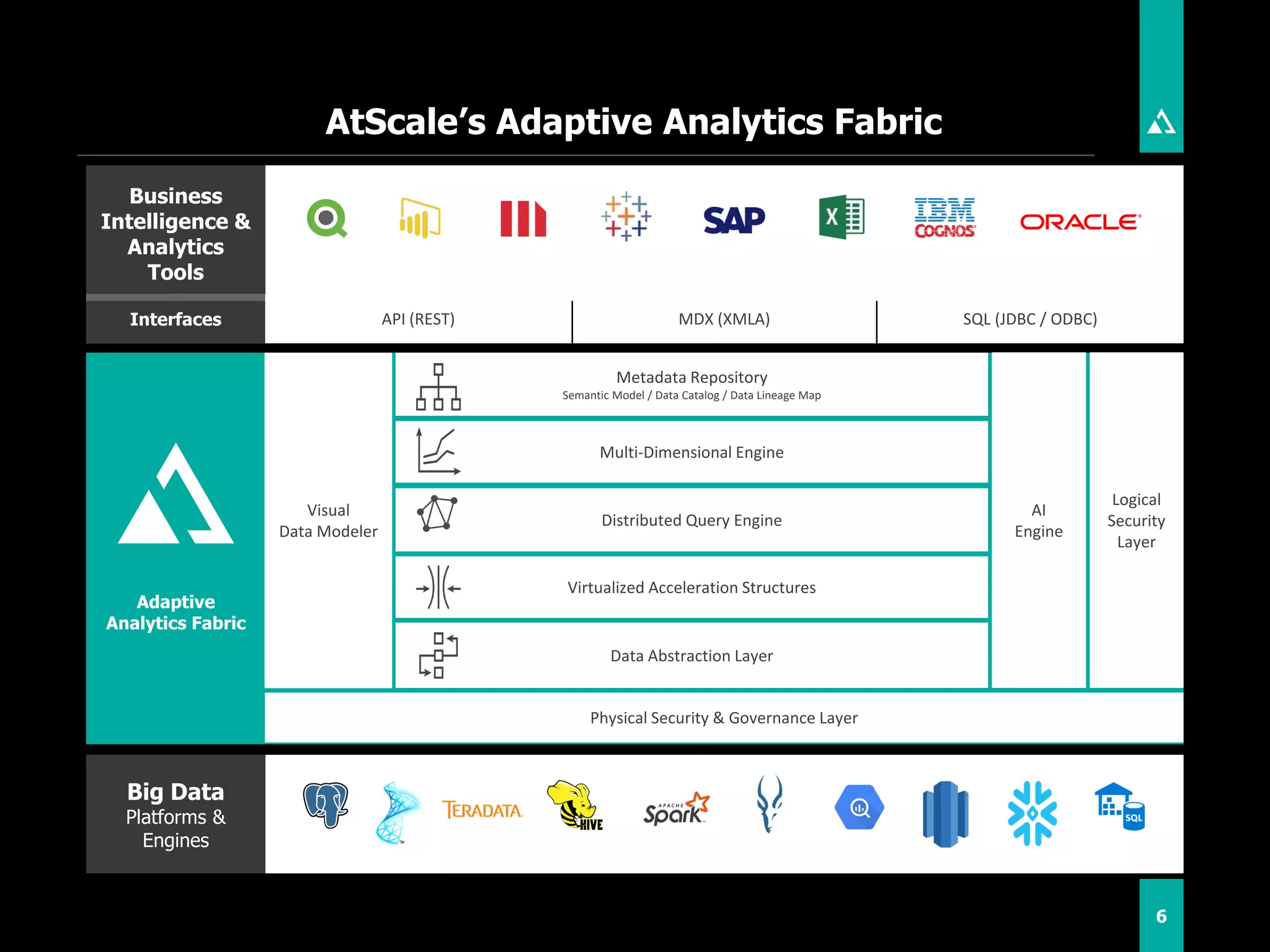

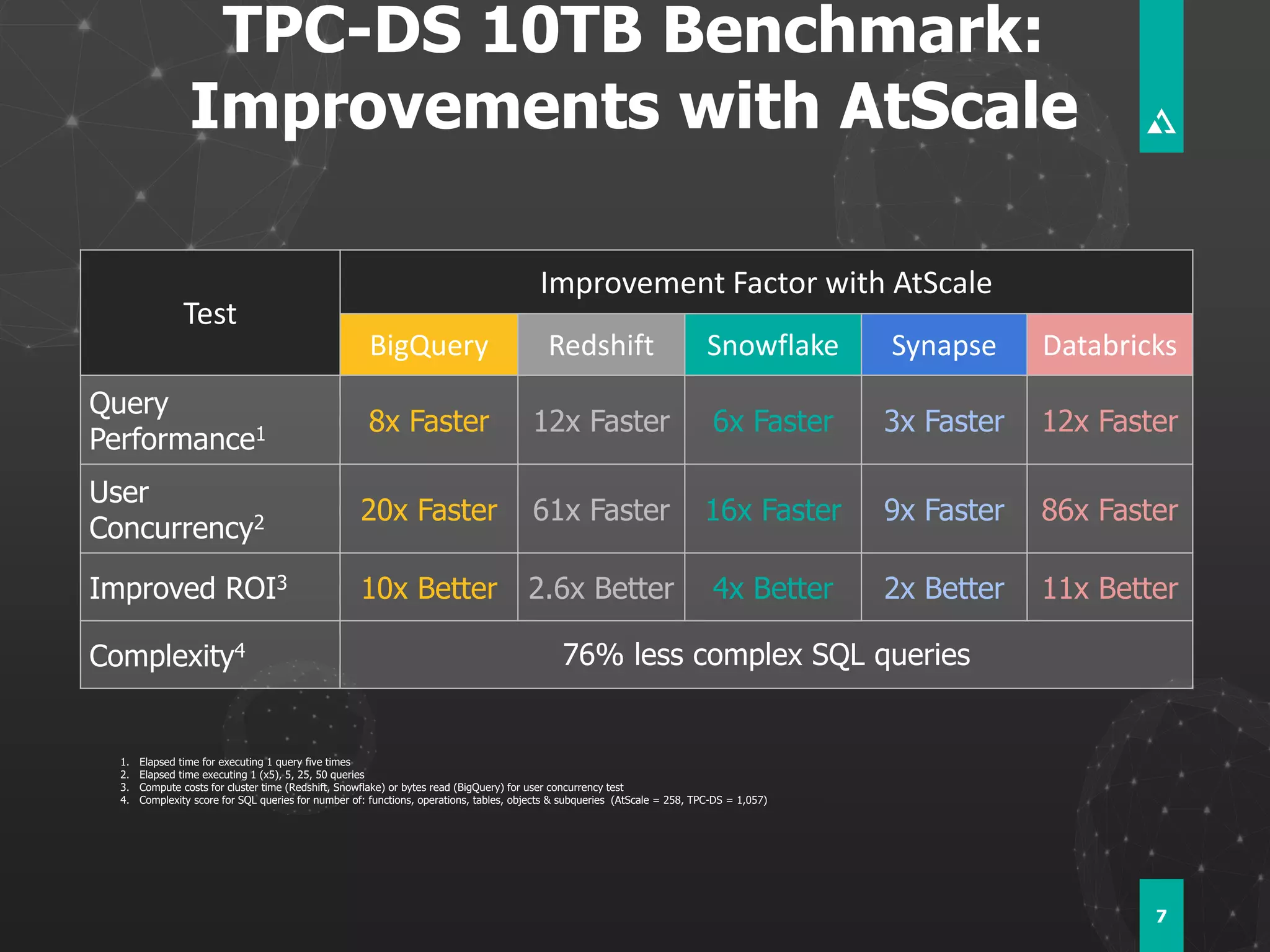

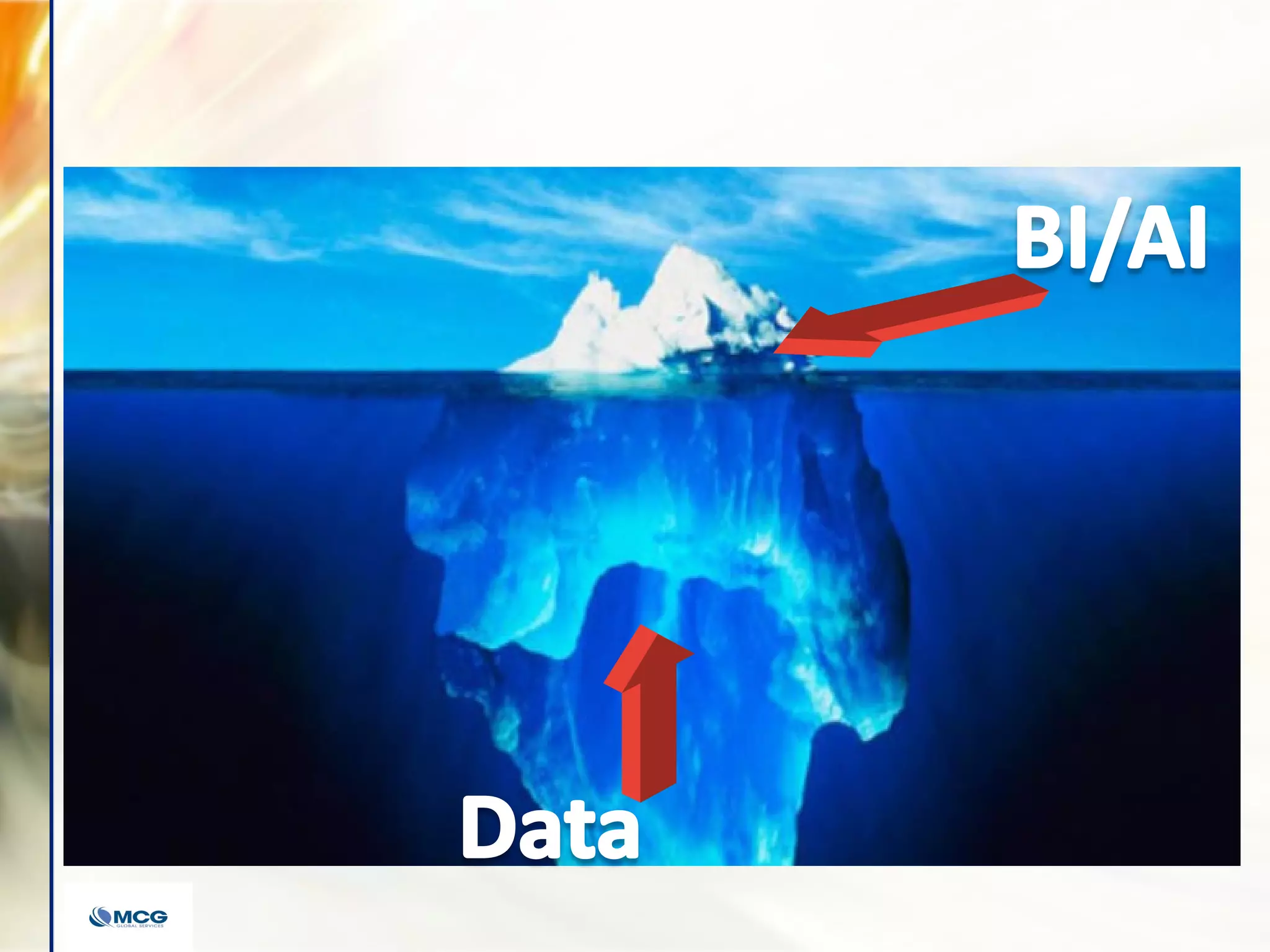

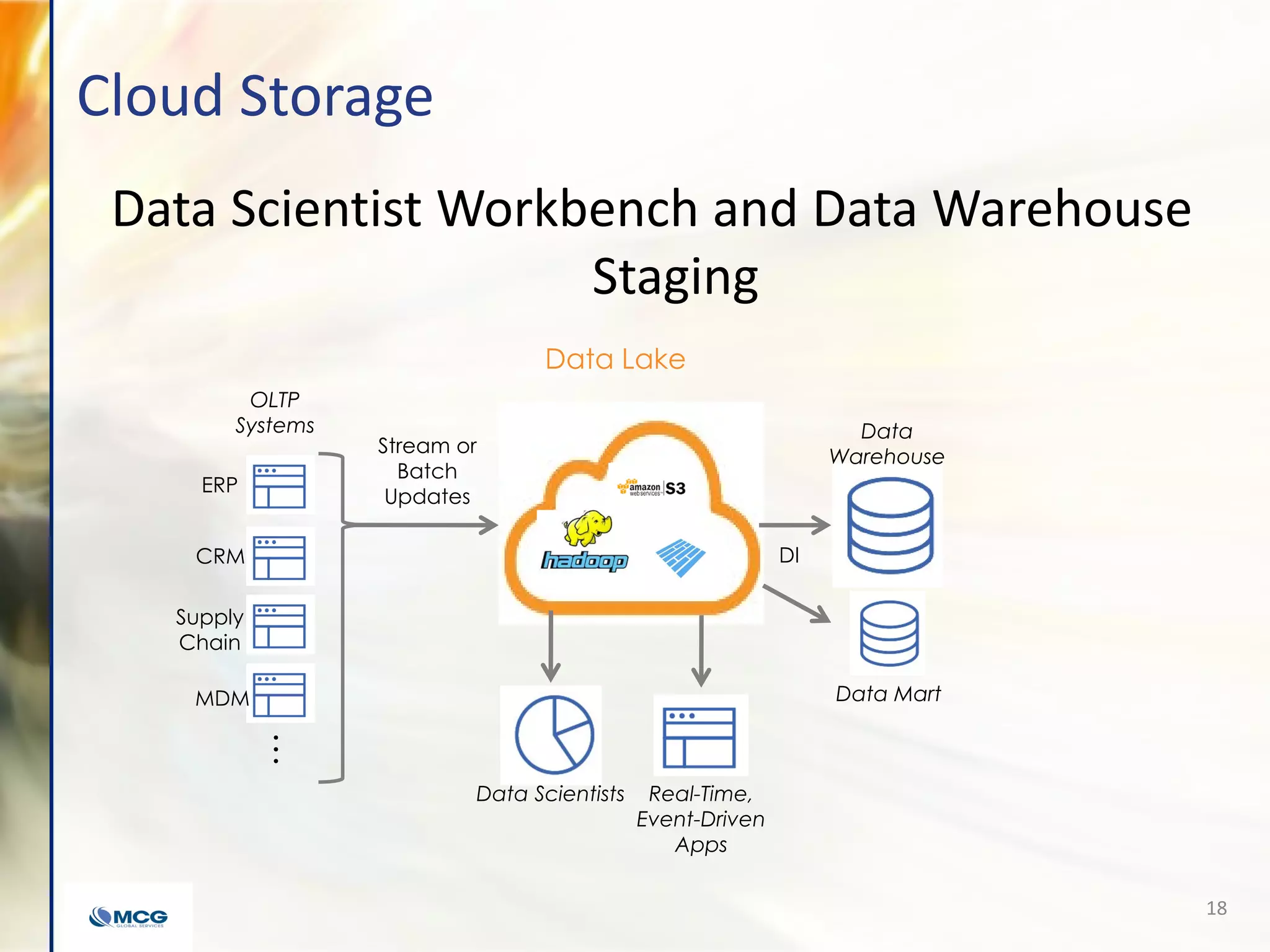

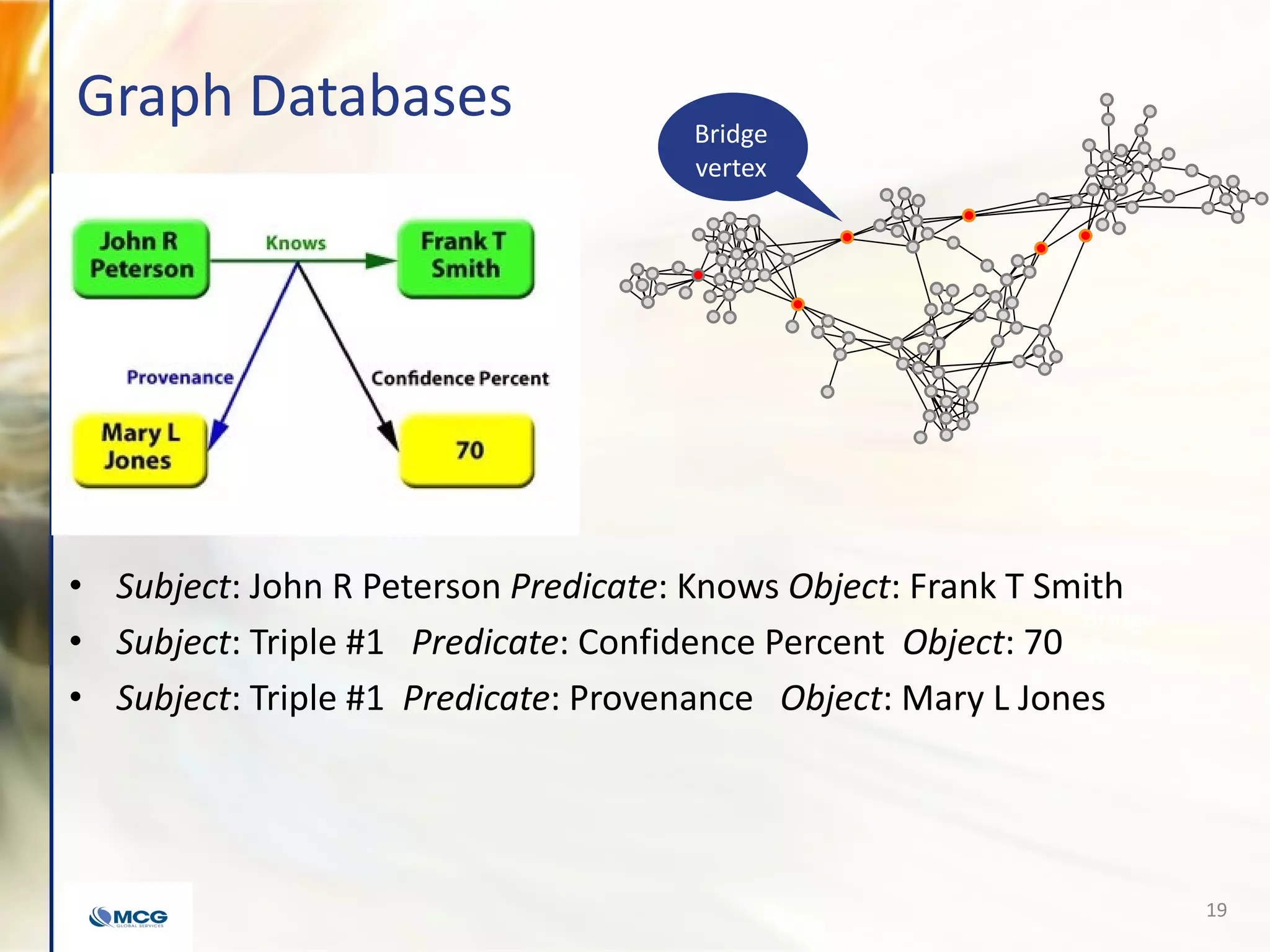

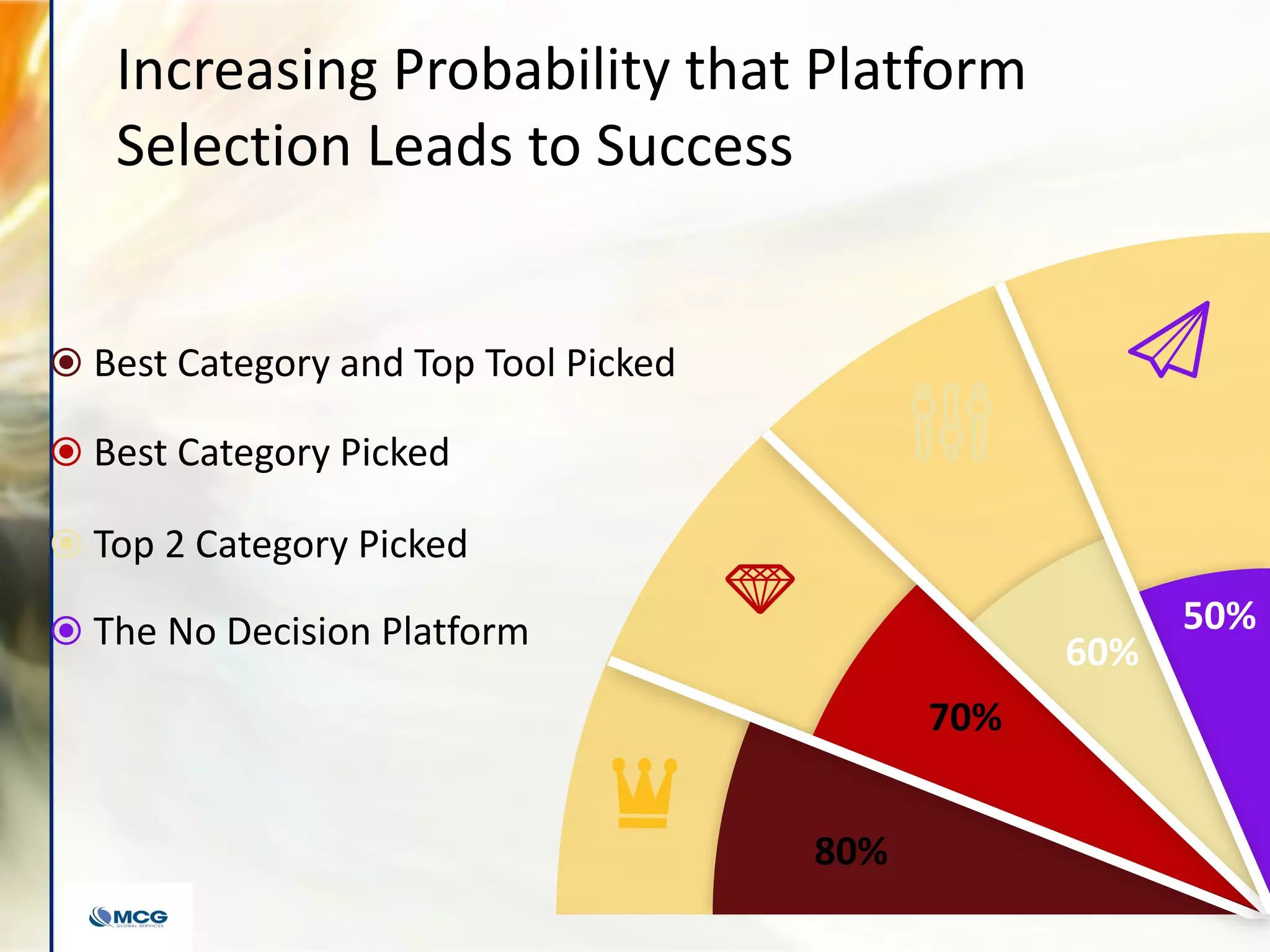

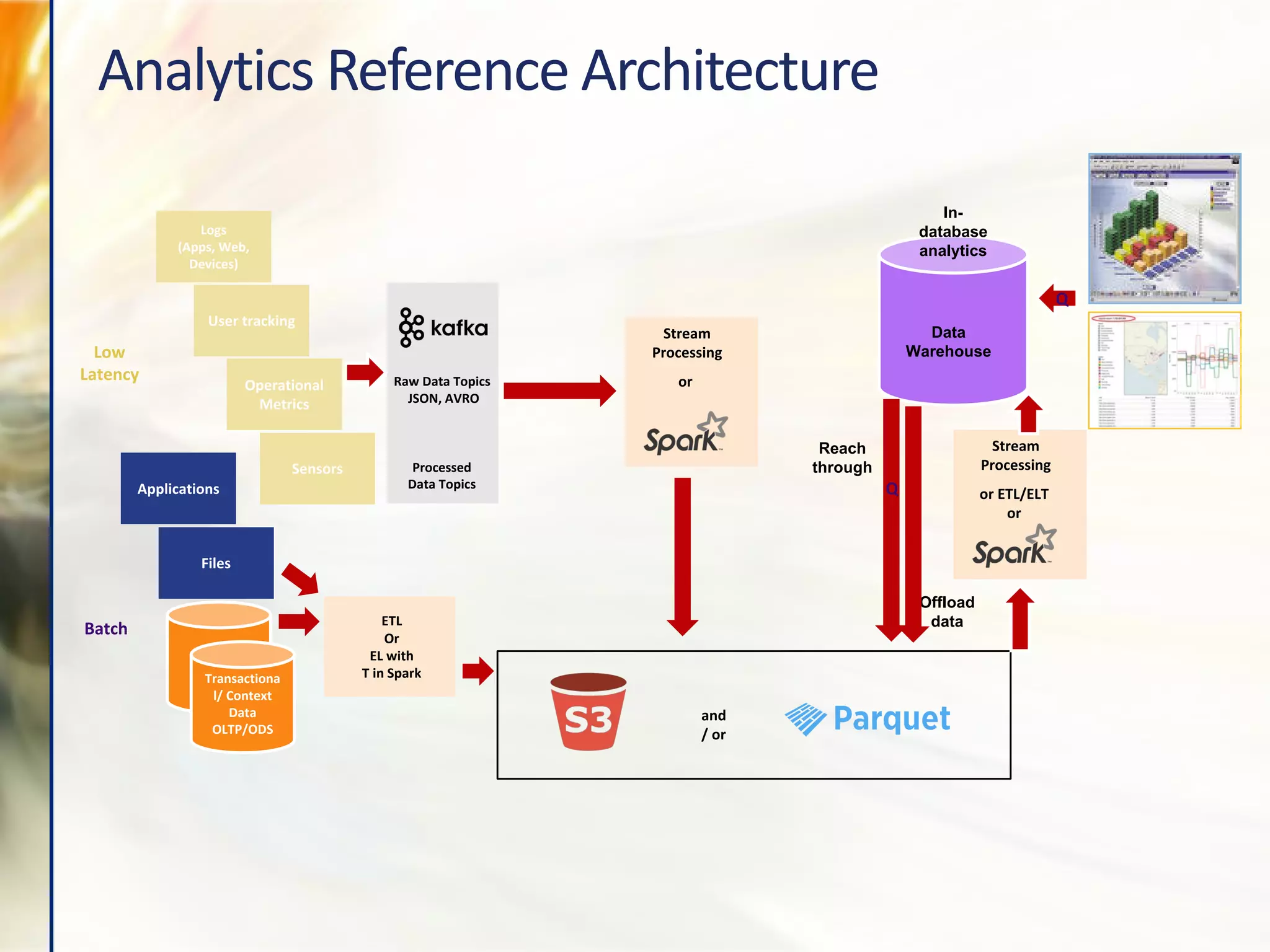

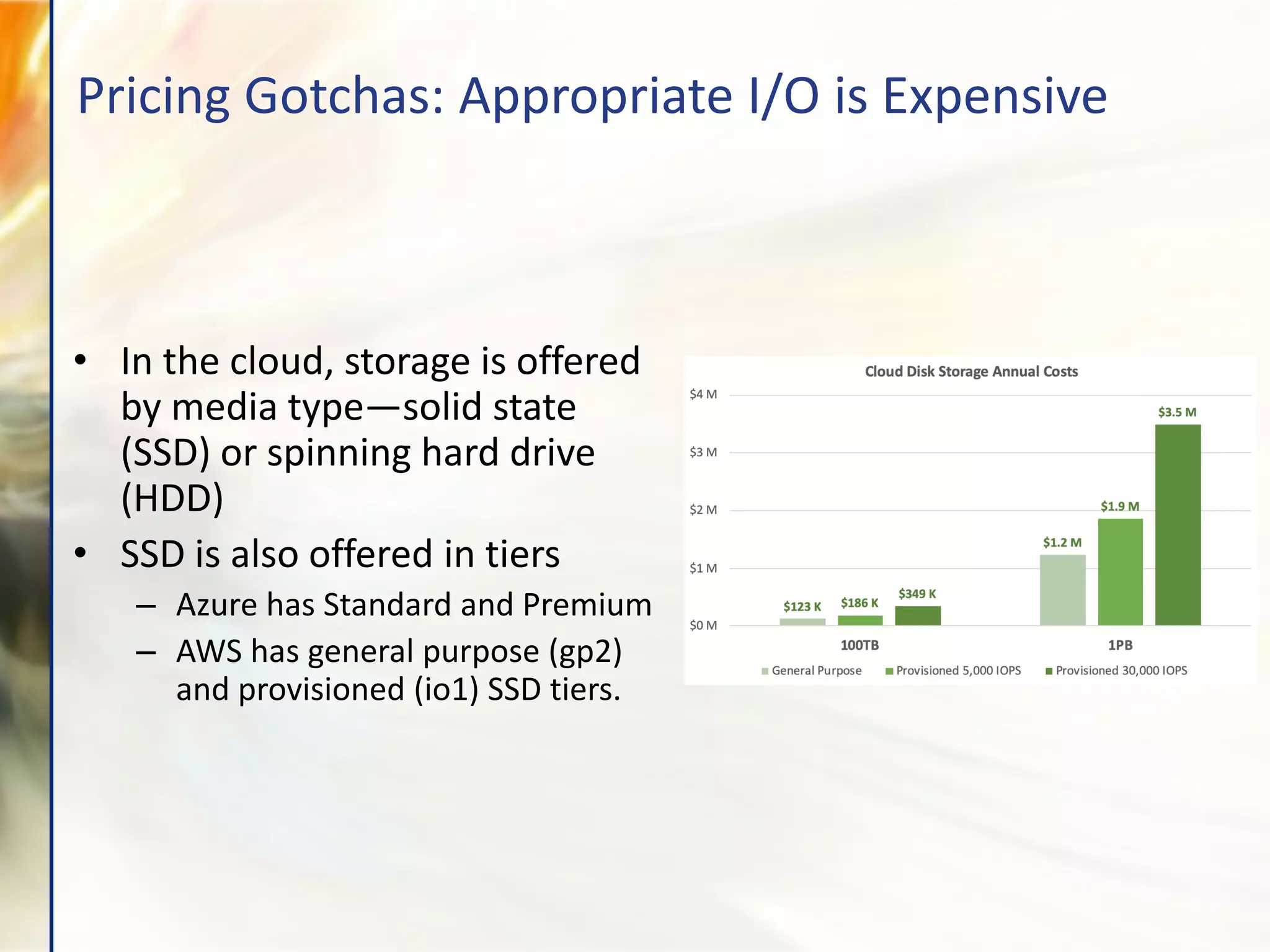

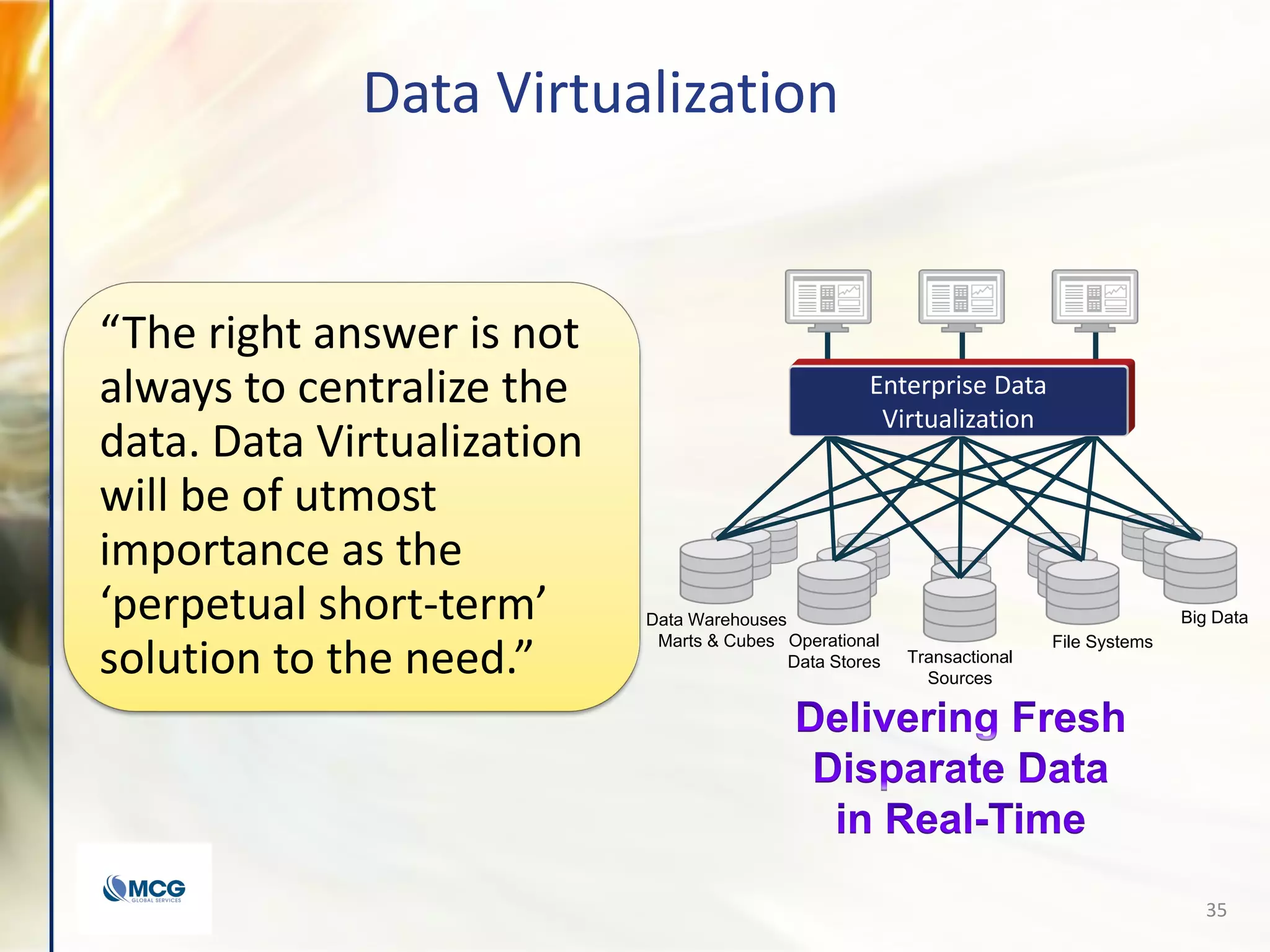

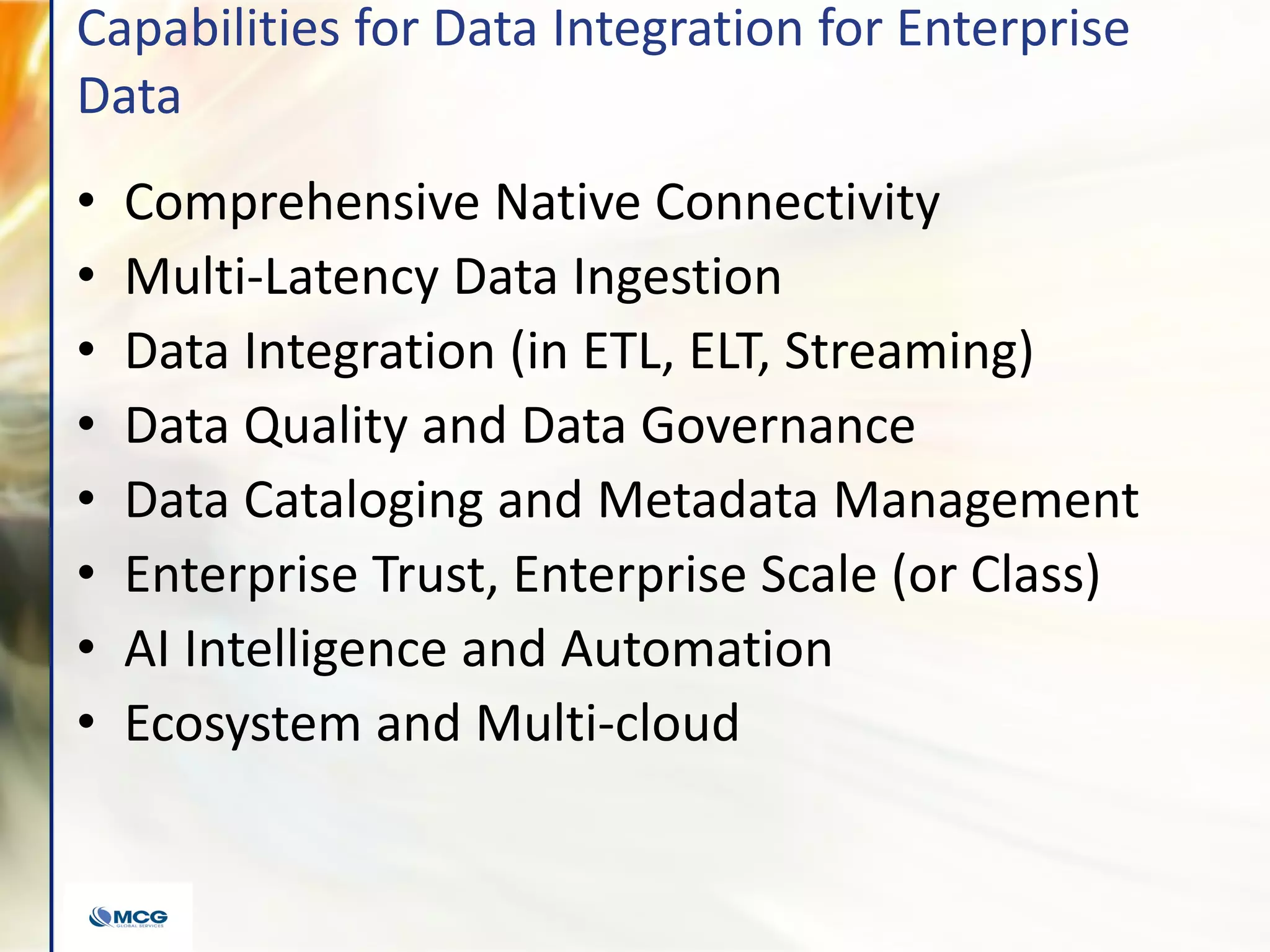

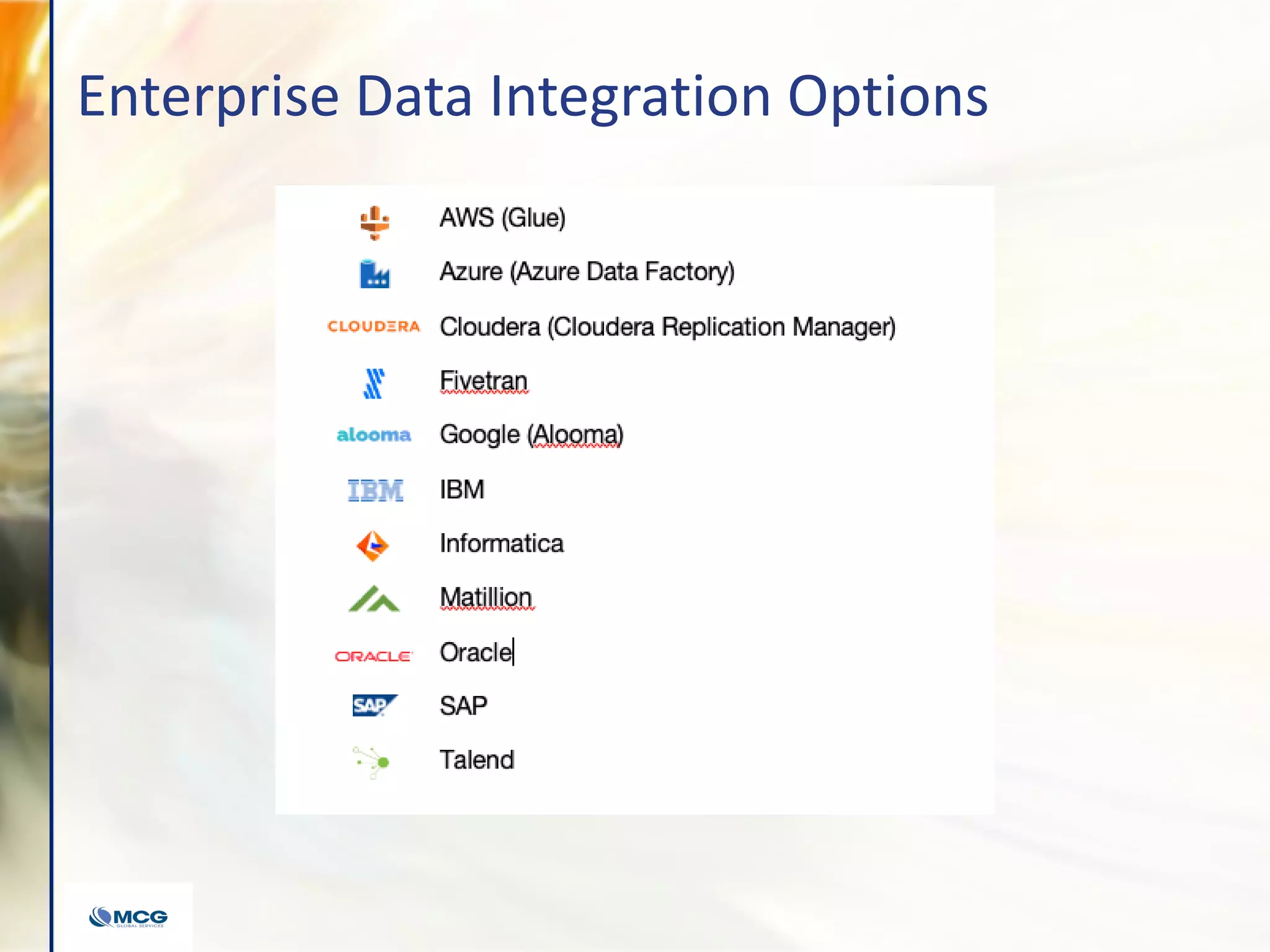

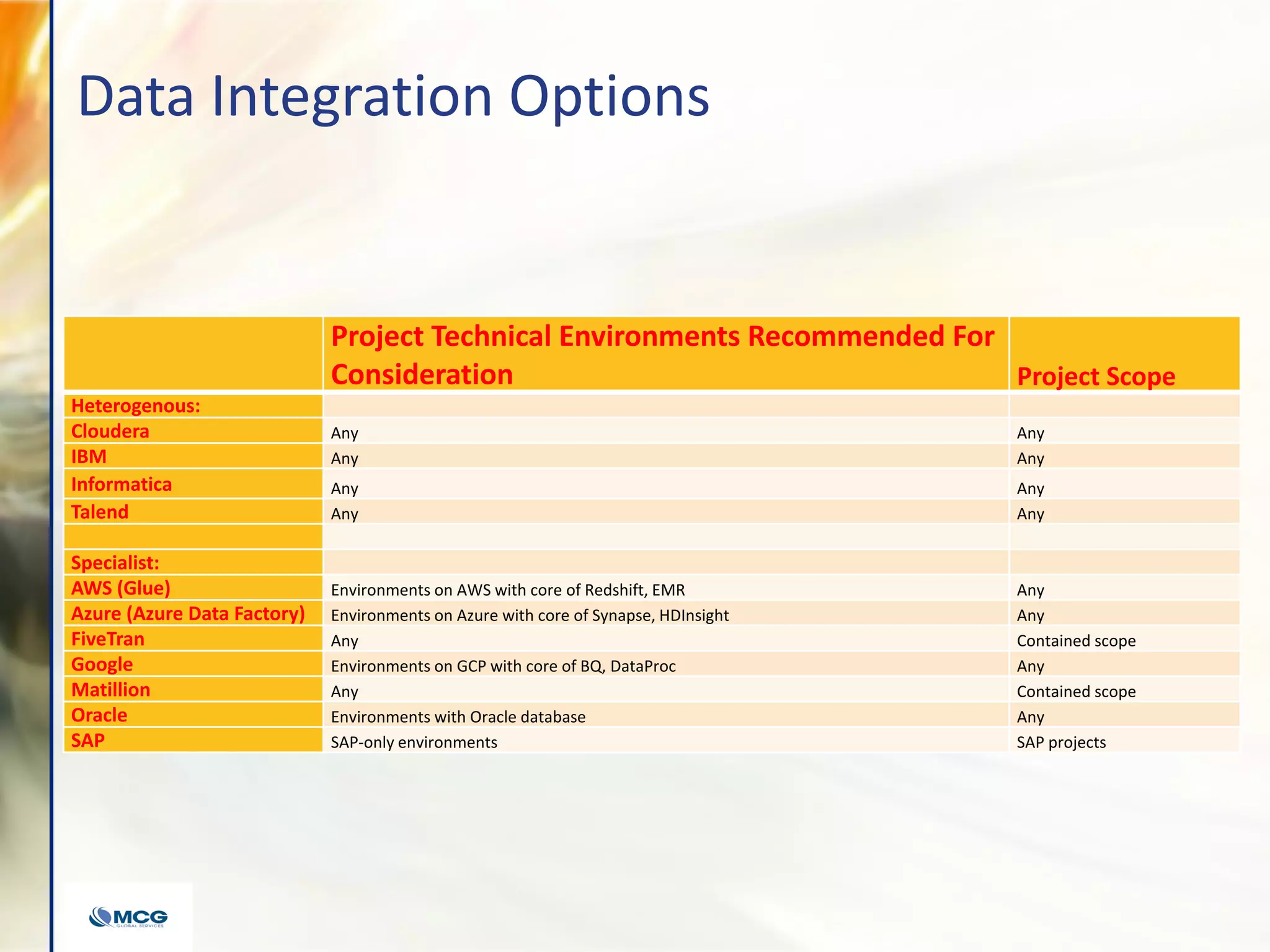

The document discusses the evolution of data platforms and their impact on enterprise analytics strategies. It covers components such as data storage, processing, and governance. It includes case studies showing how organizations such as Tyson Foods and Home Depot improved their analytics capabilities and reduced costs with modern data solutions. The content emphasizes the importance of a universal semantic layer, intelligent data virtualization, and autonomous data engineering in current analytics frameworks.