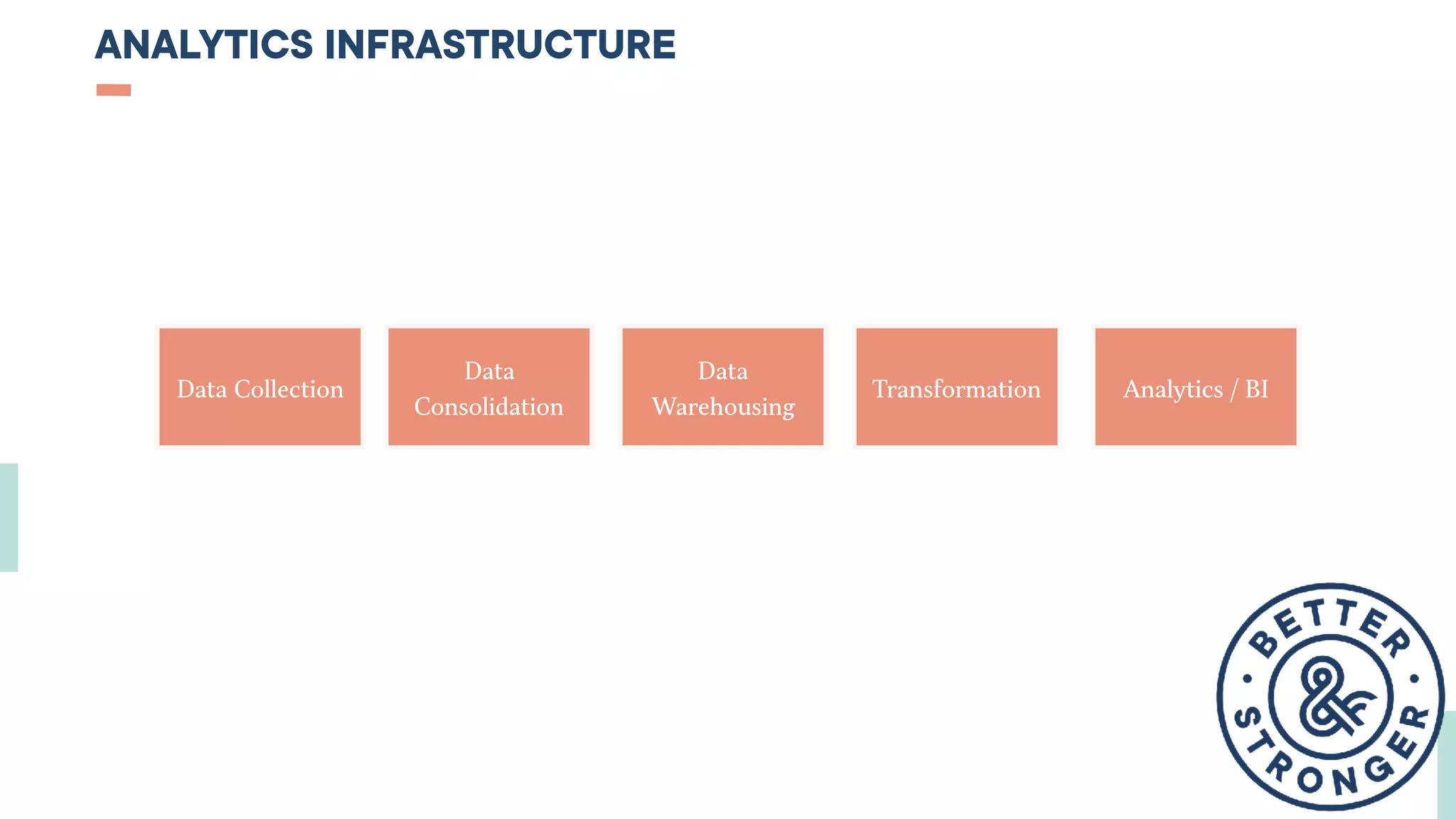

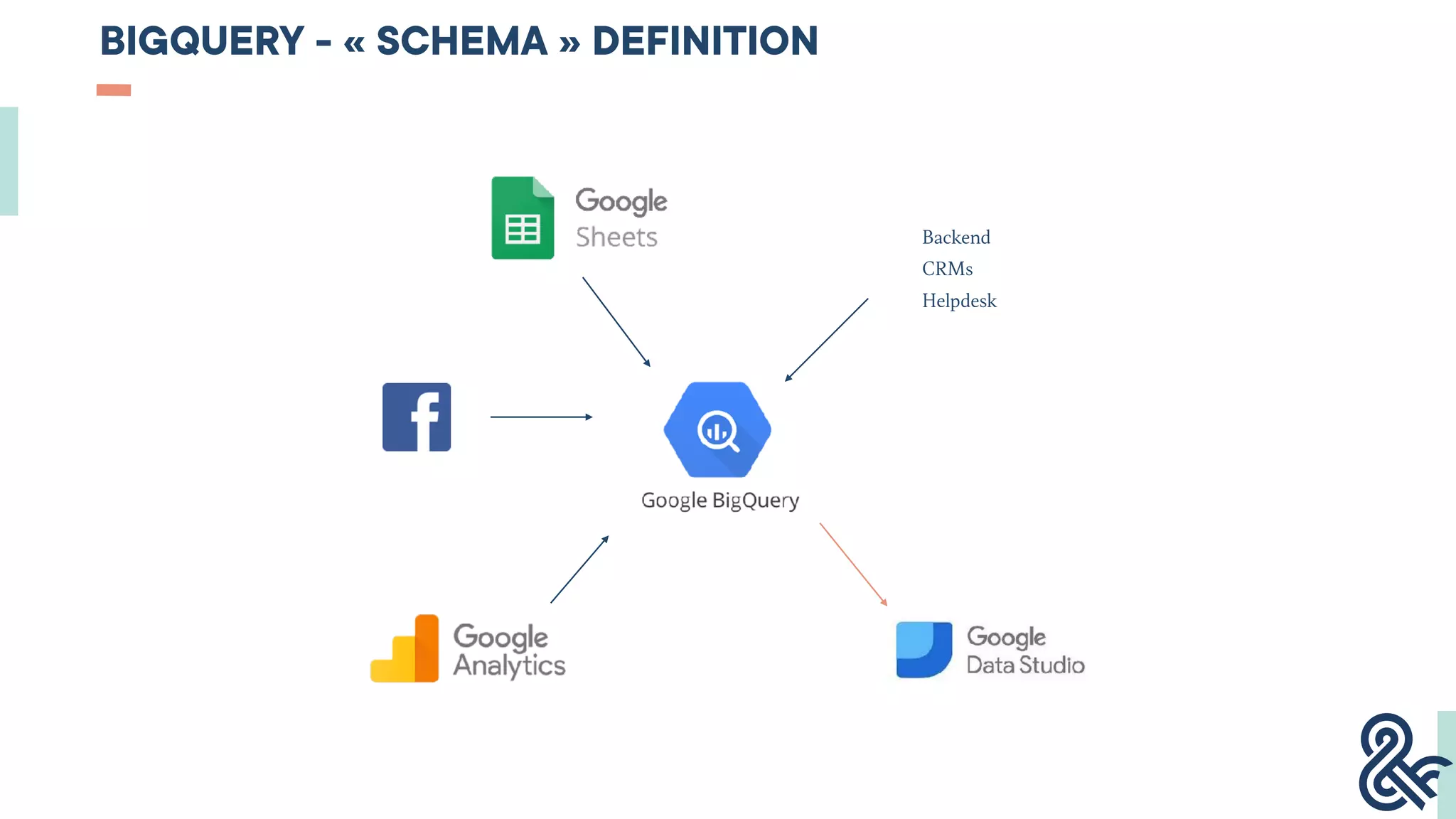

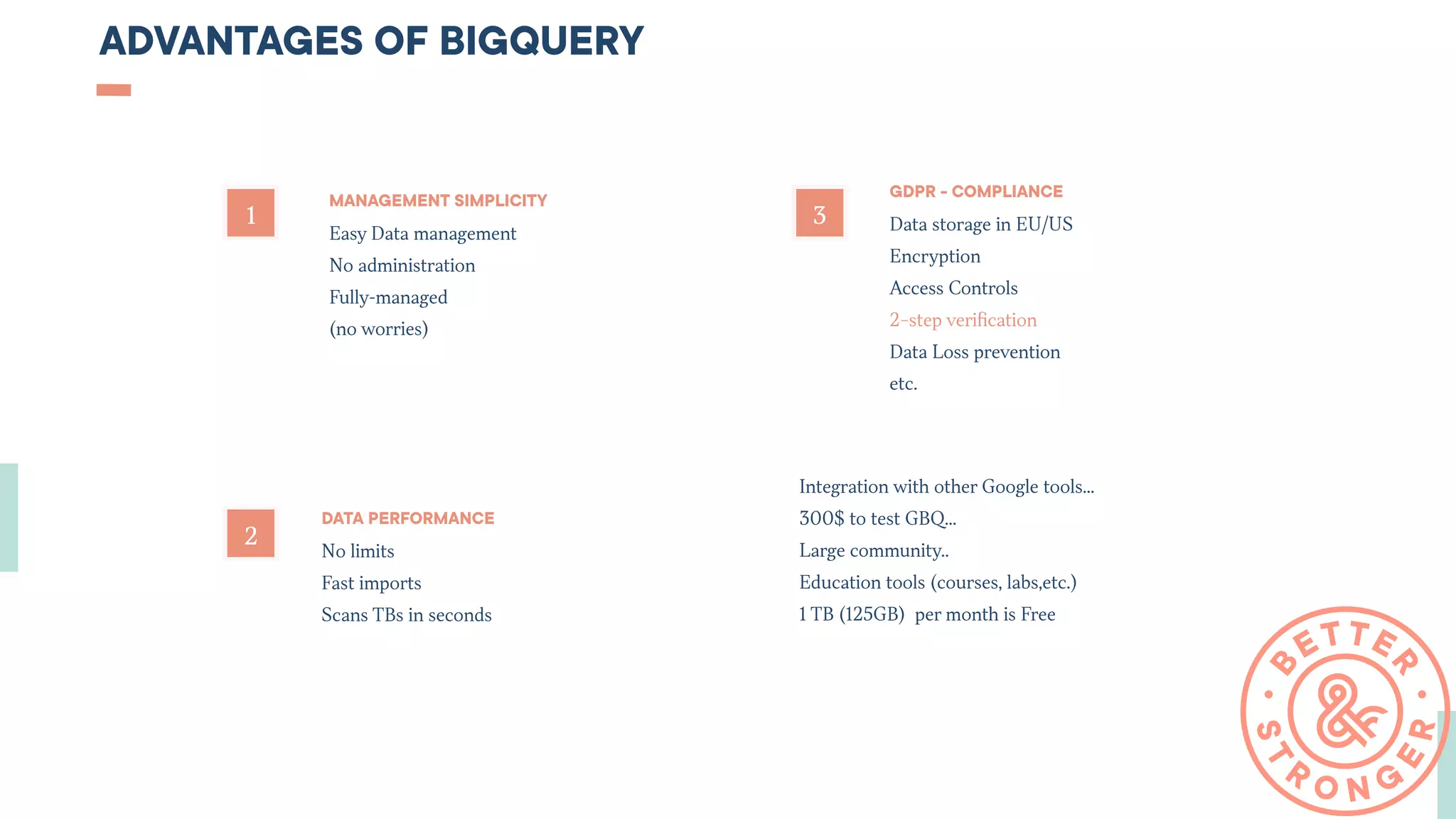

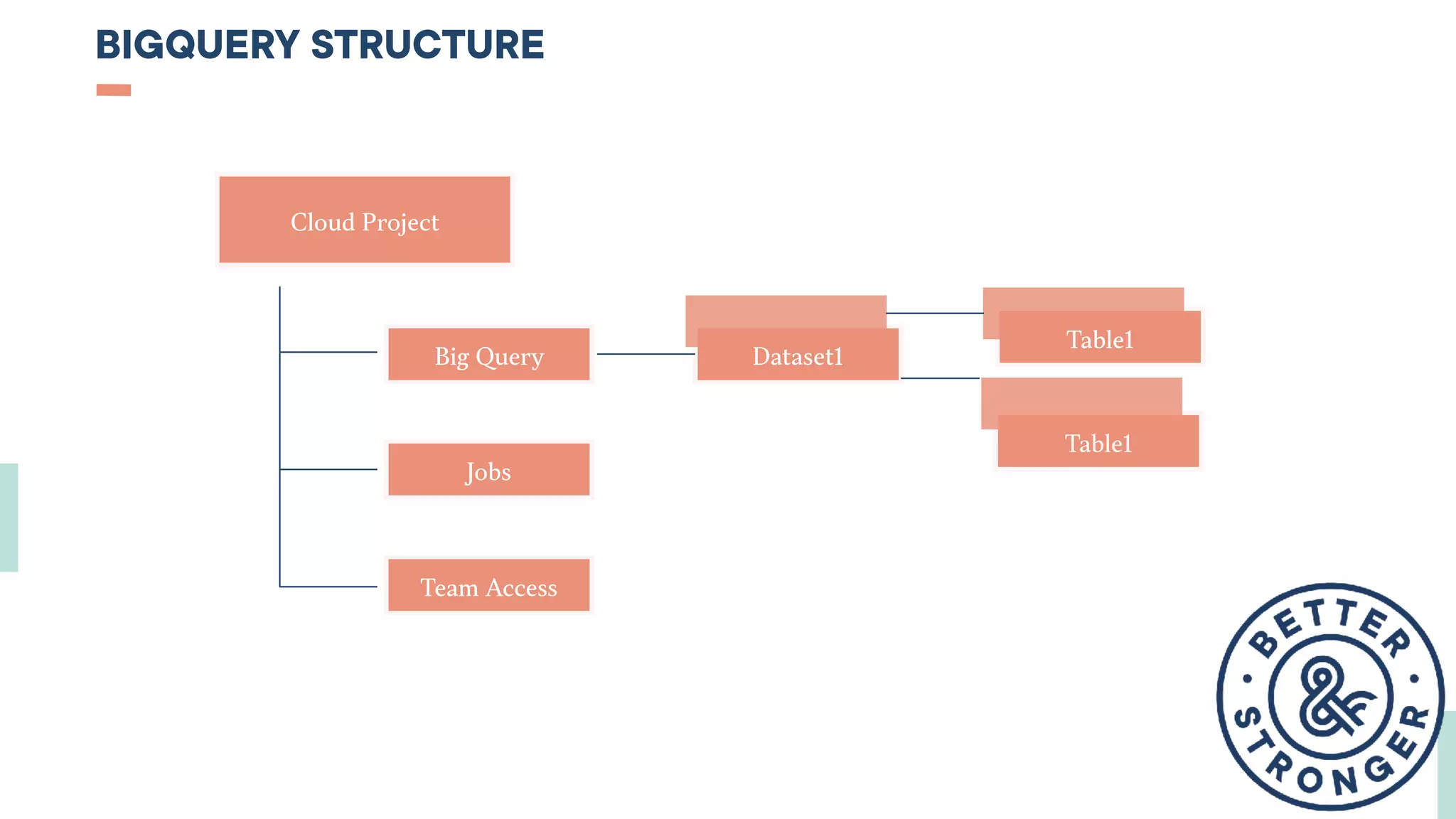

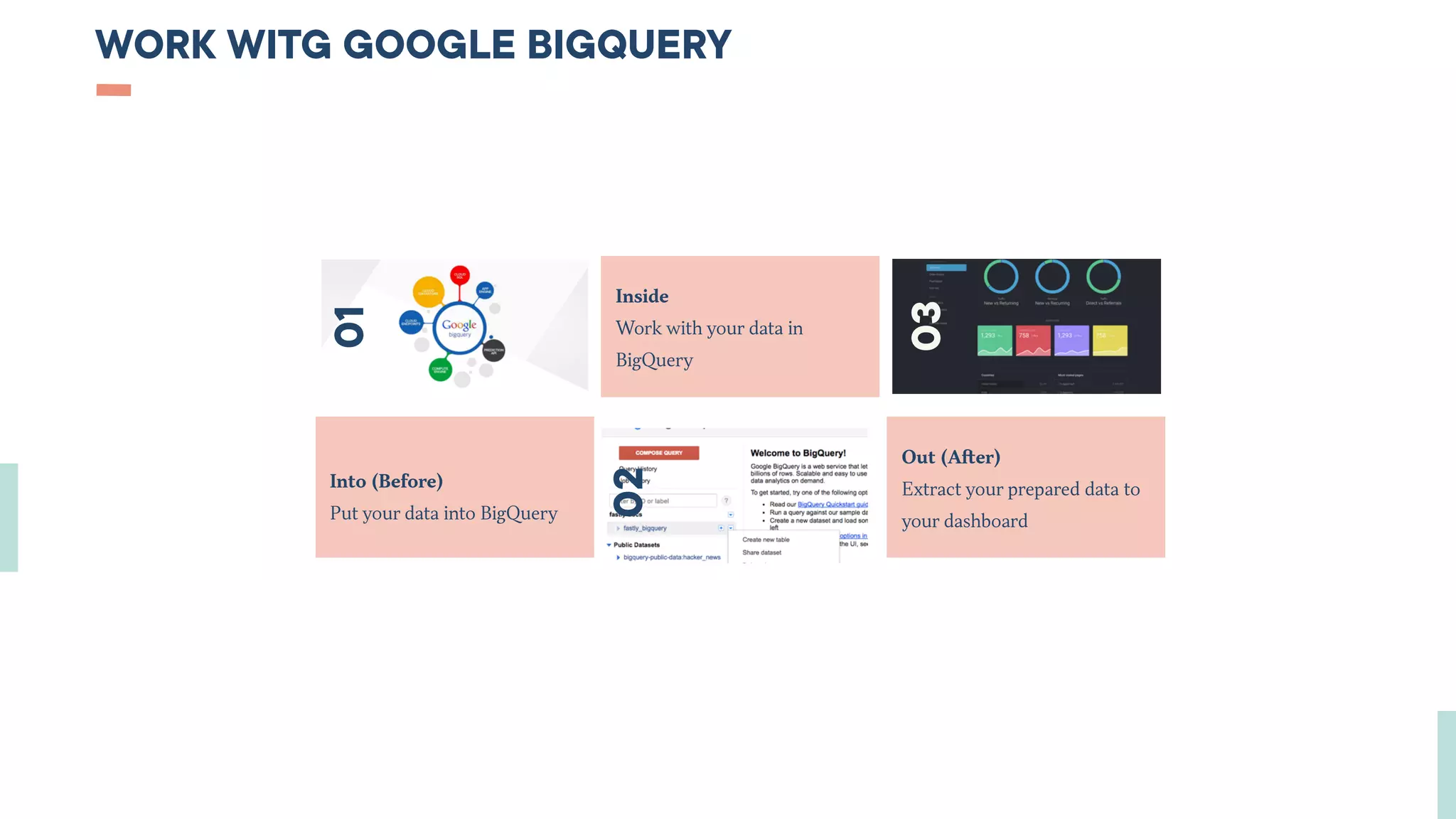

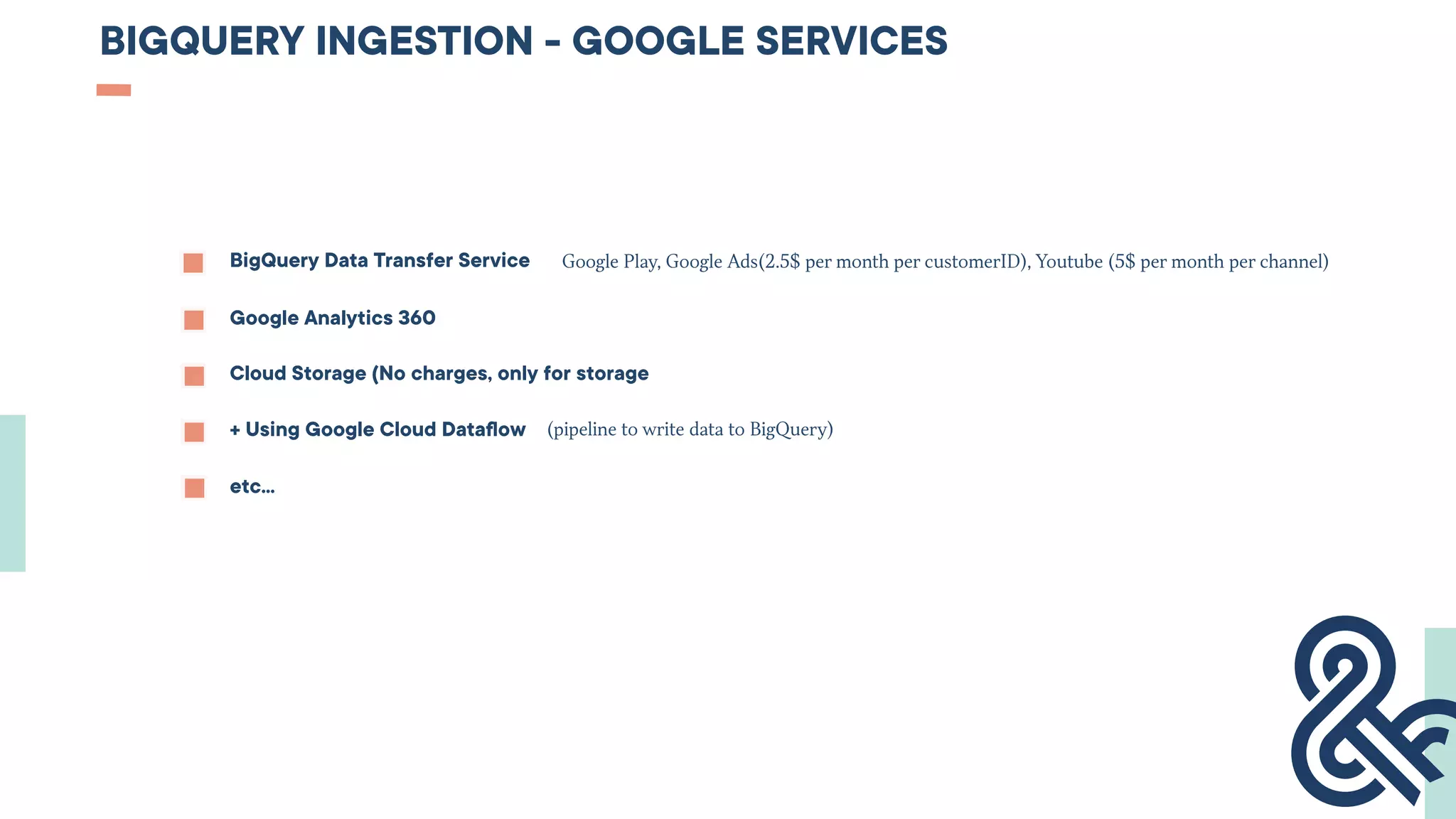

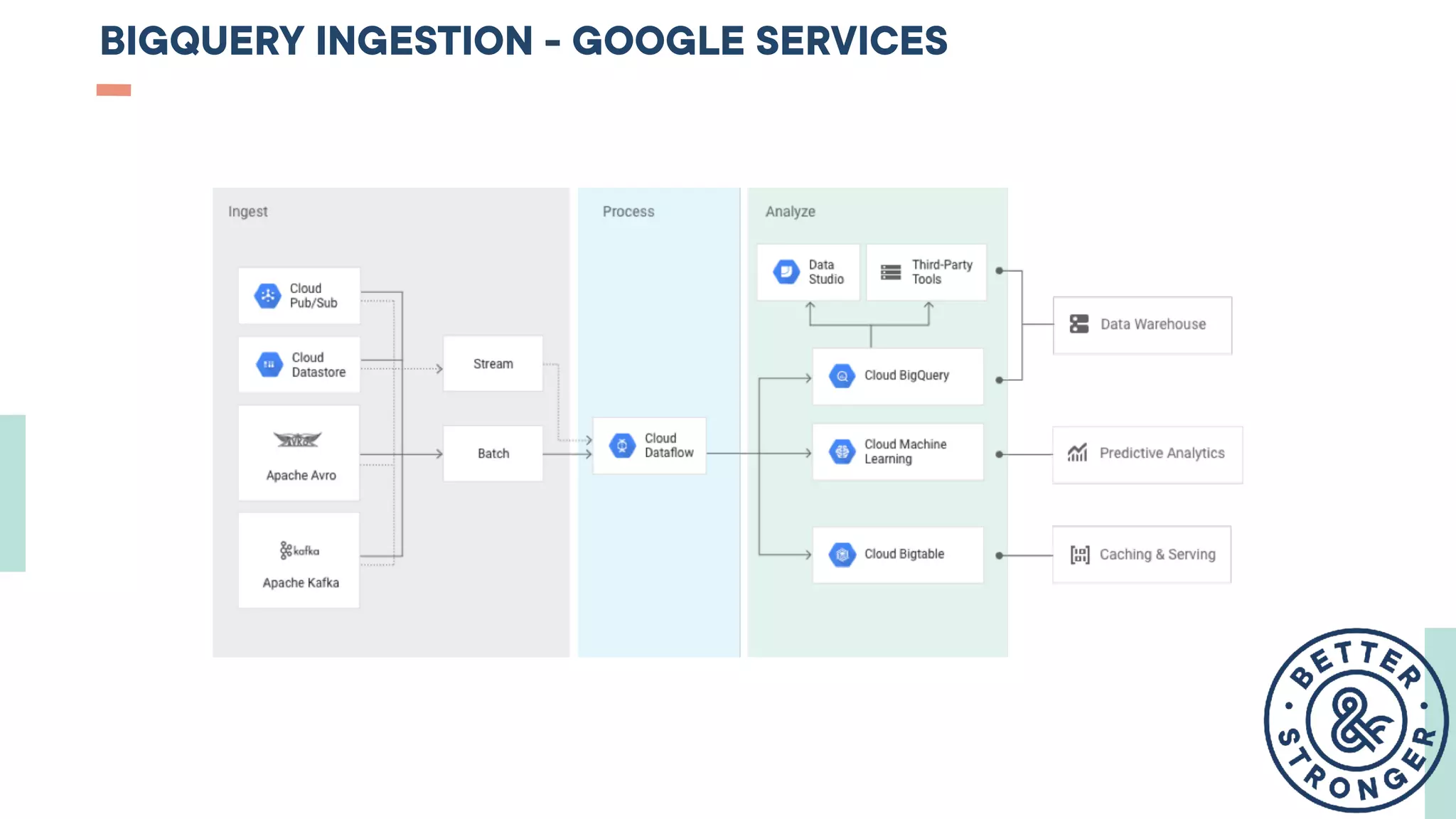

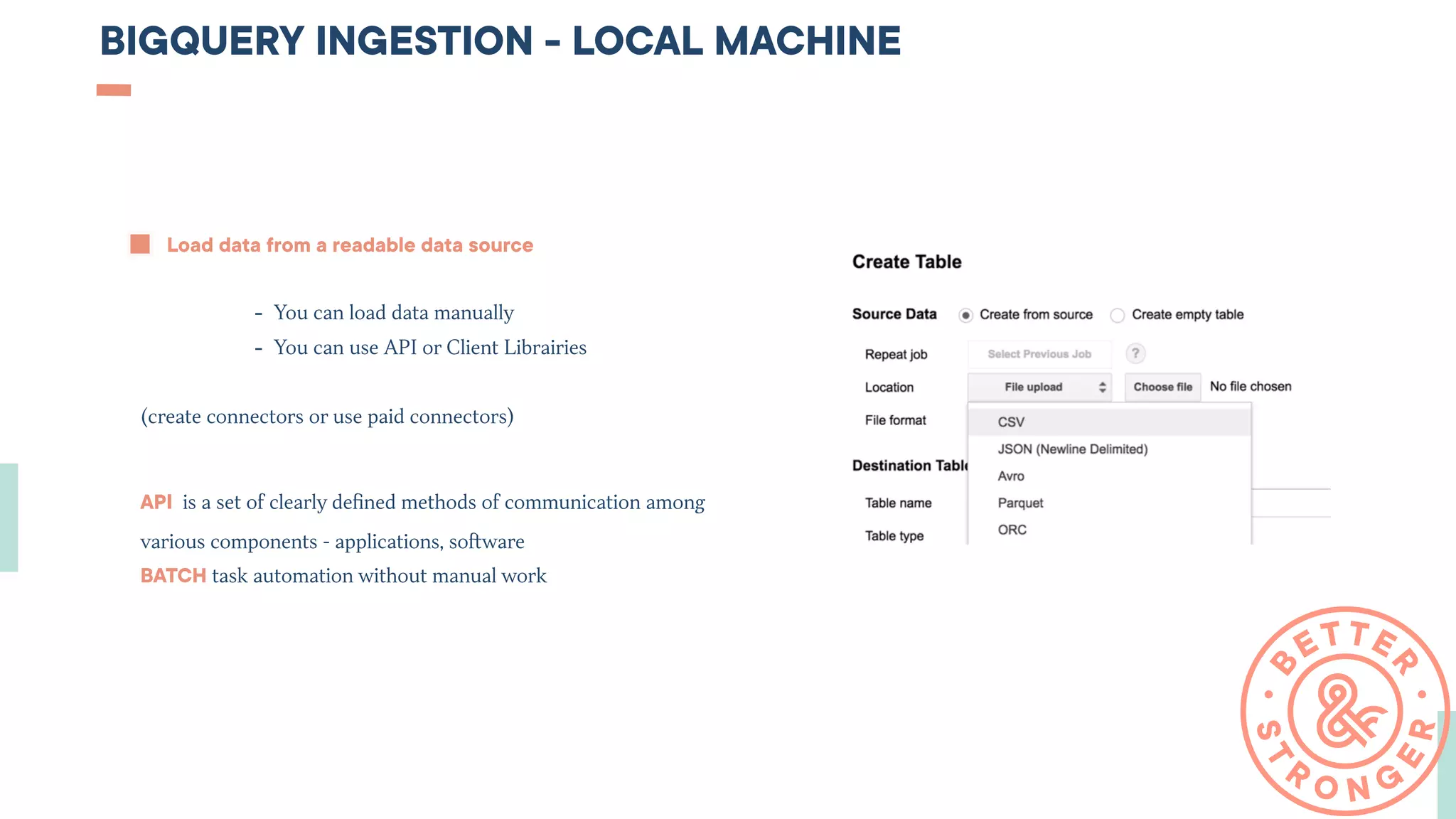

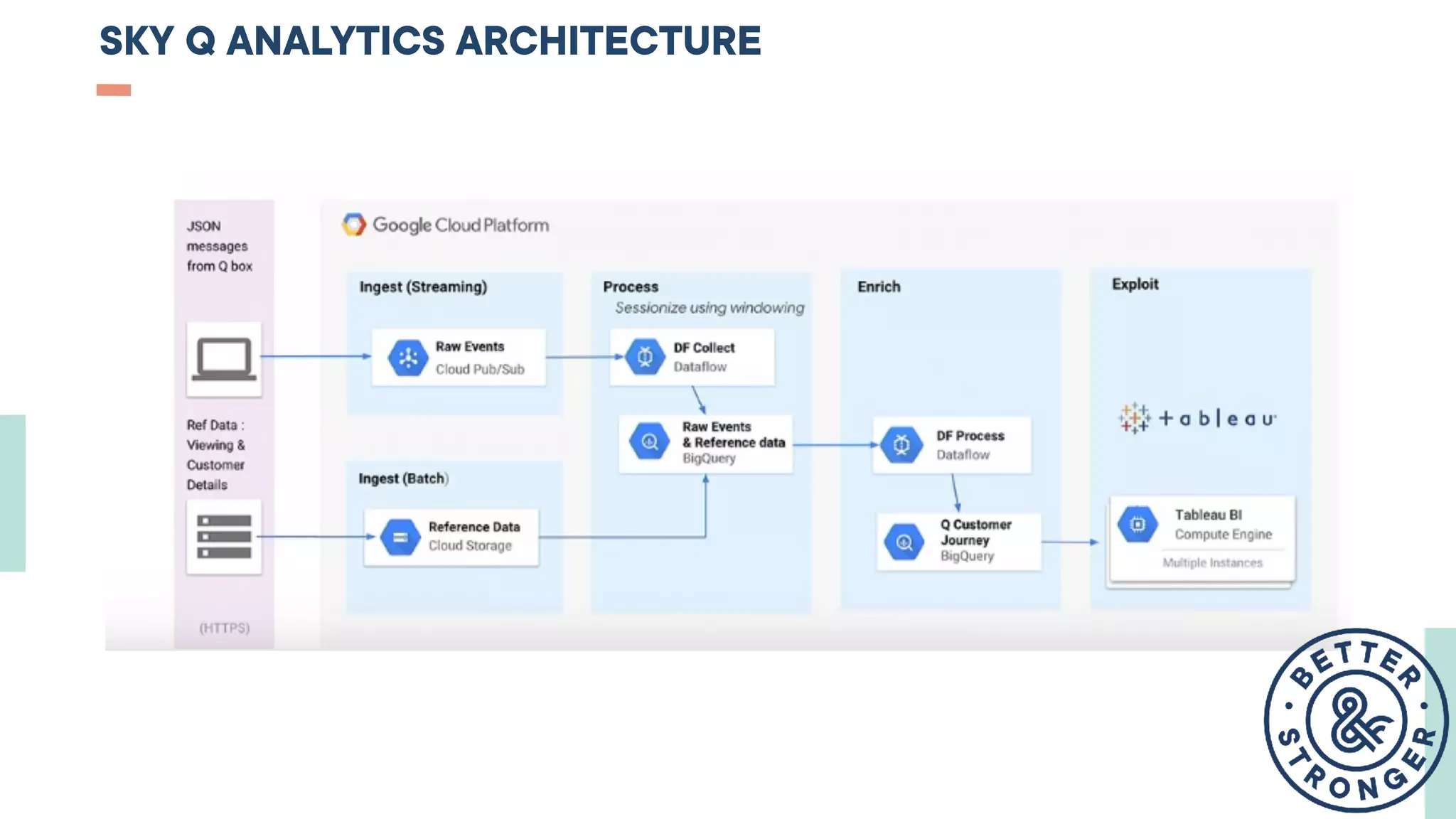

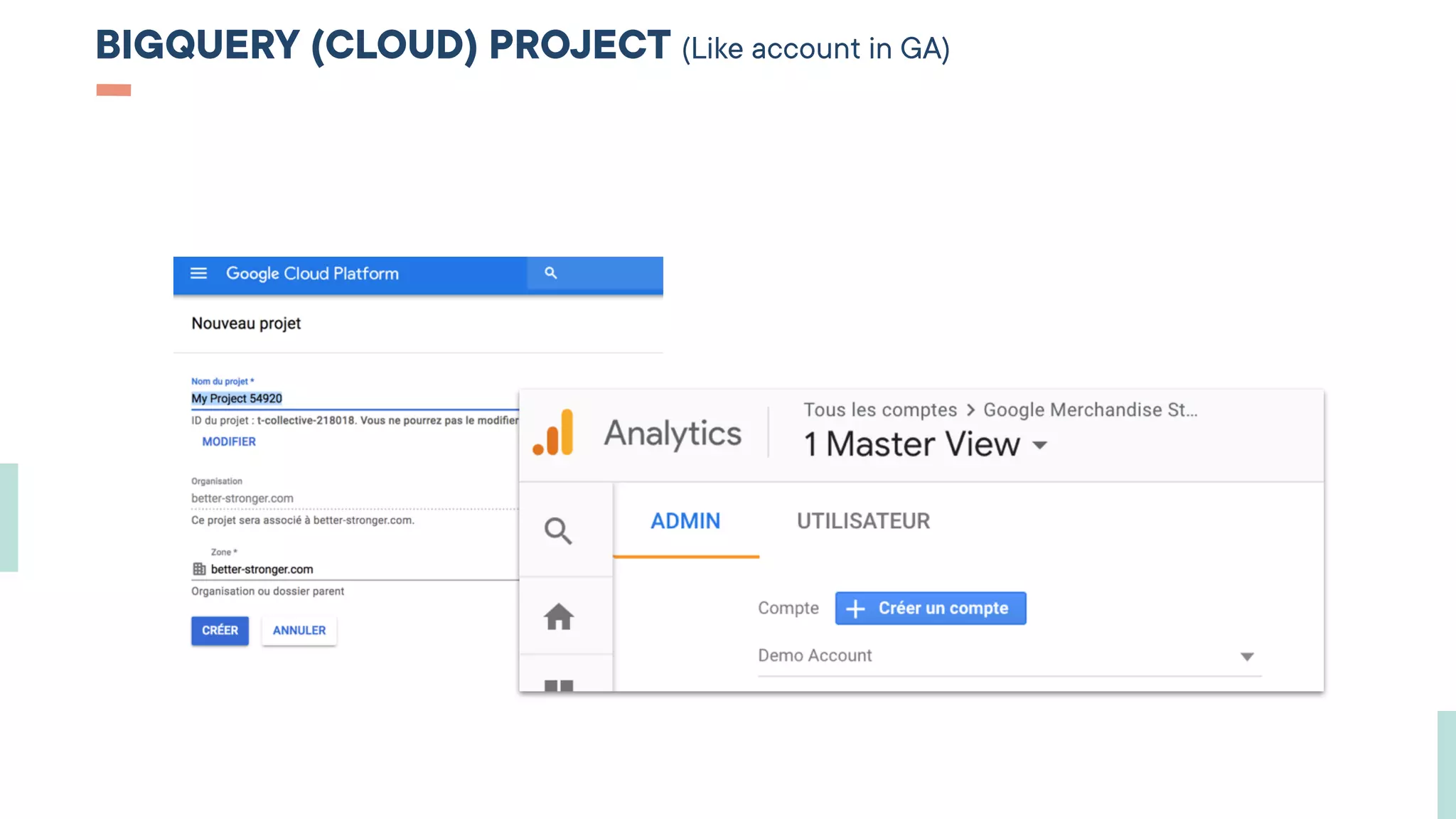

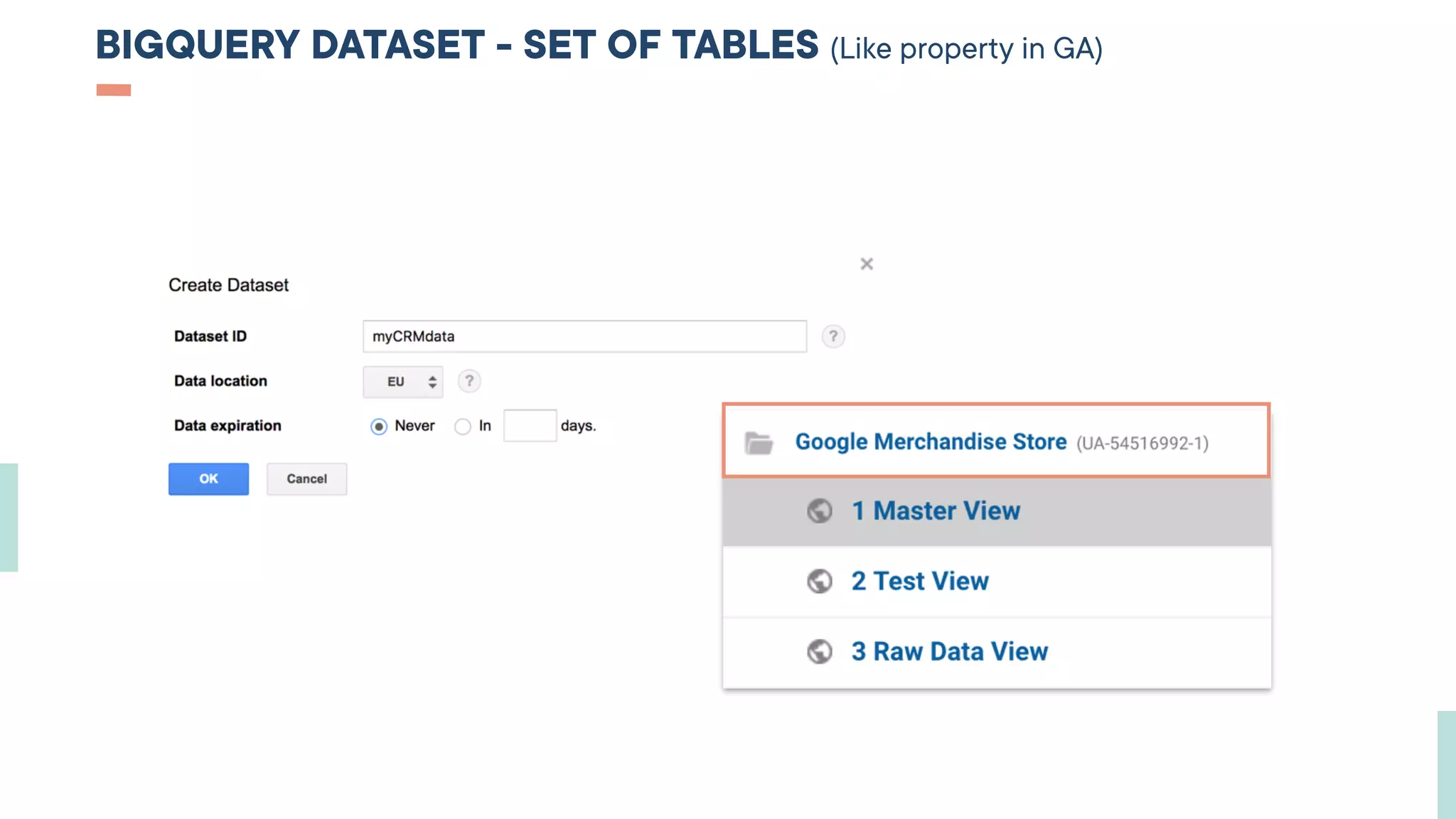

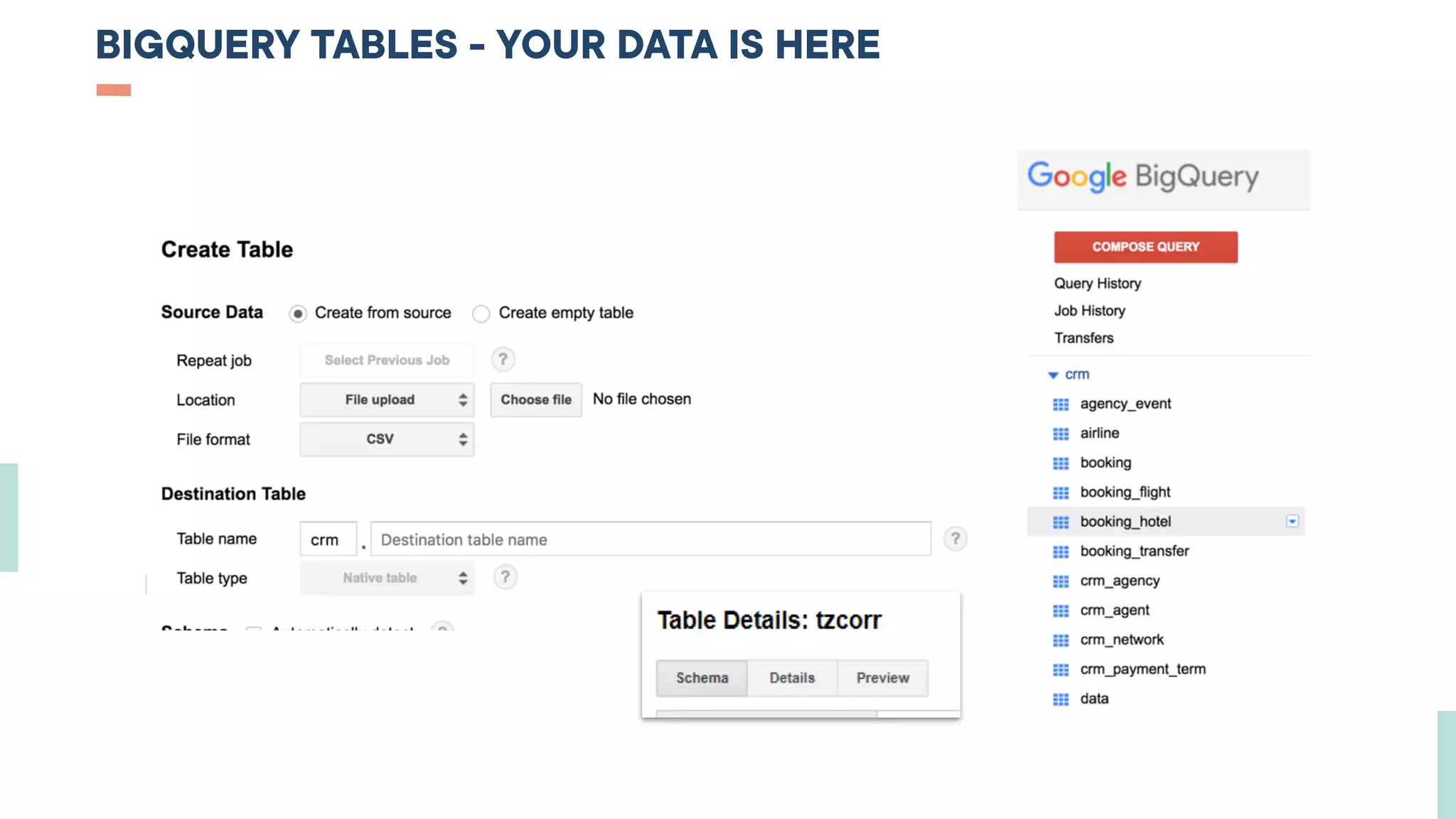

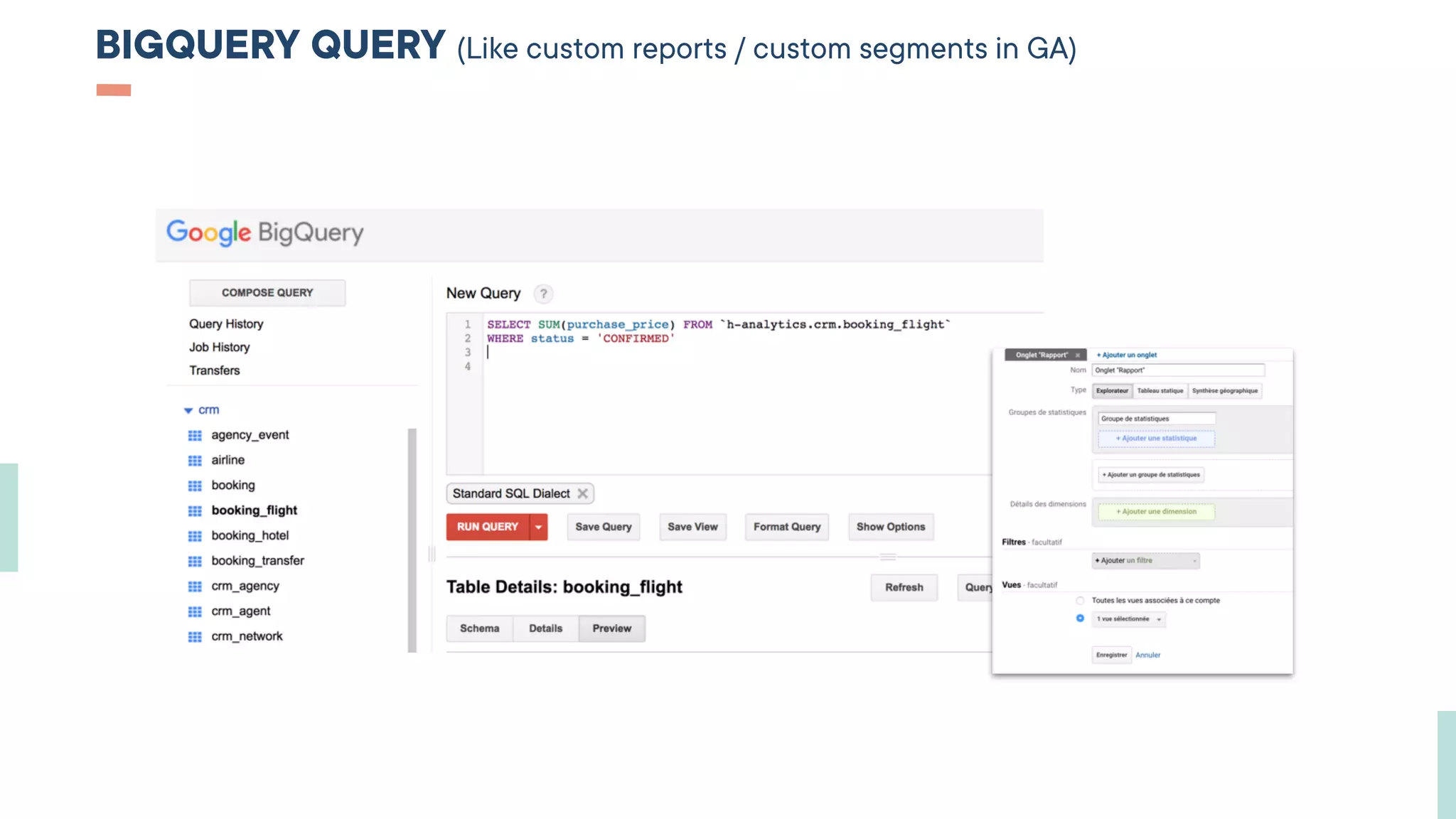

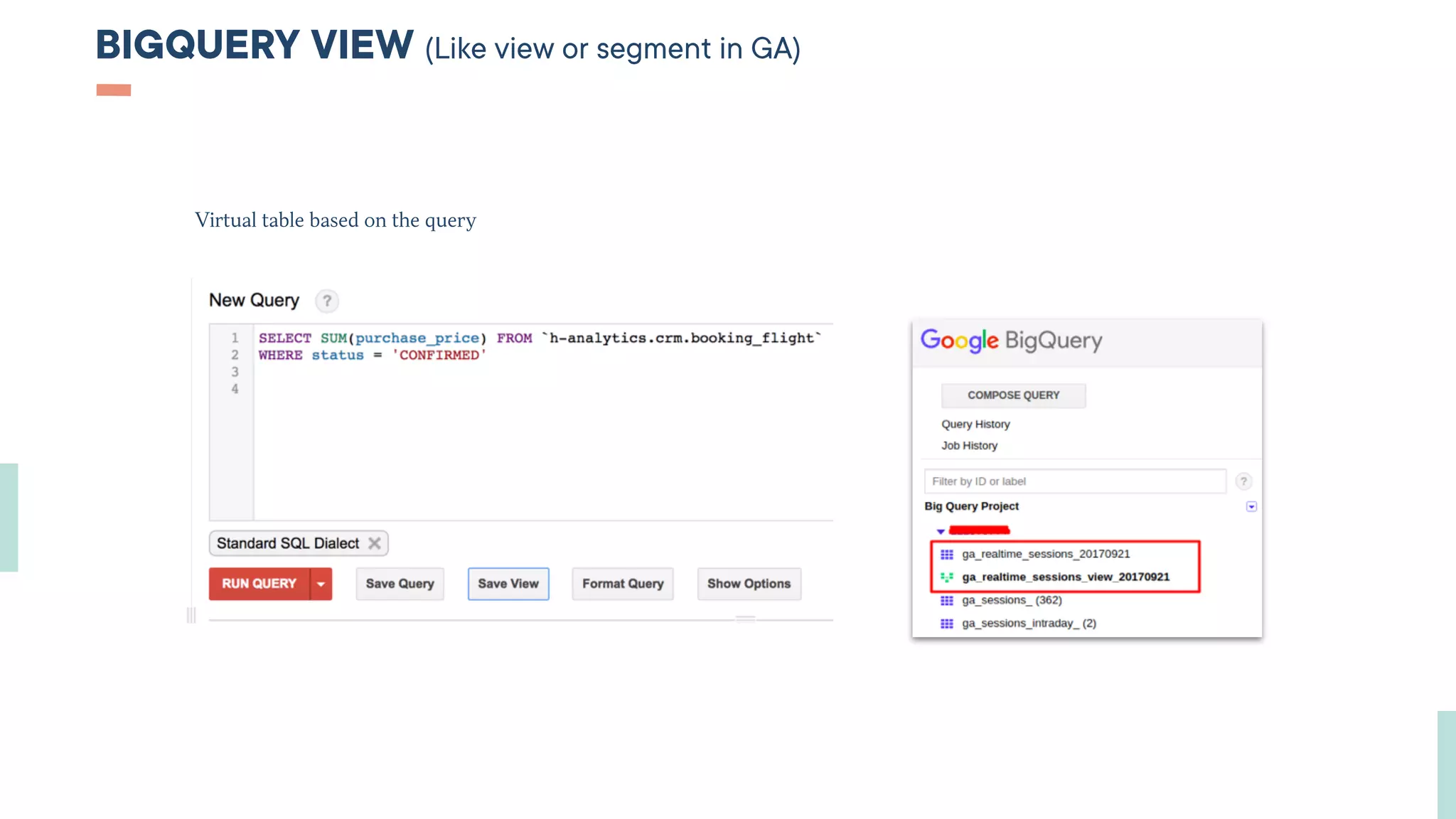

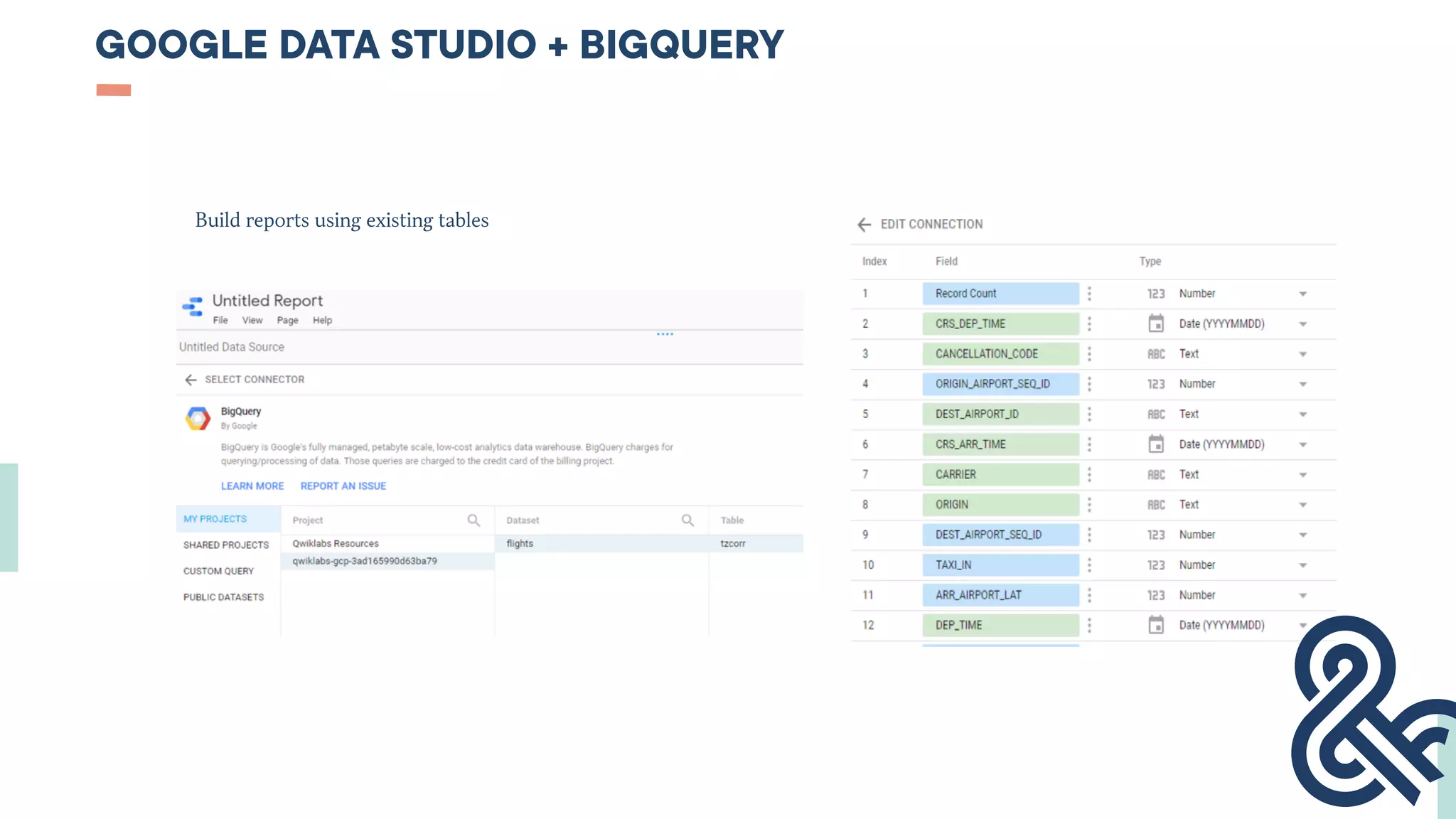

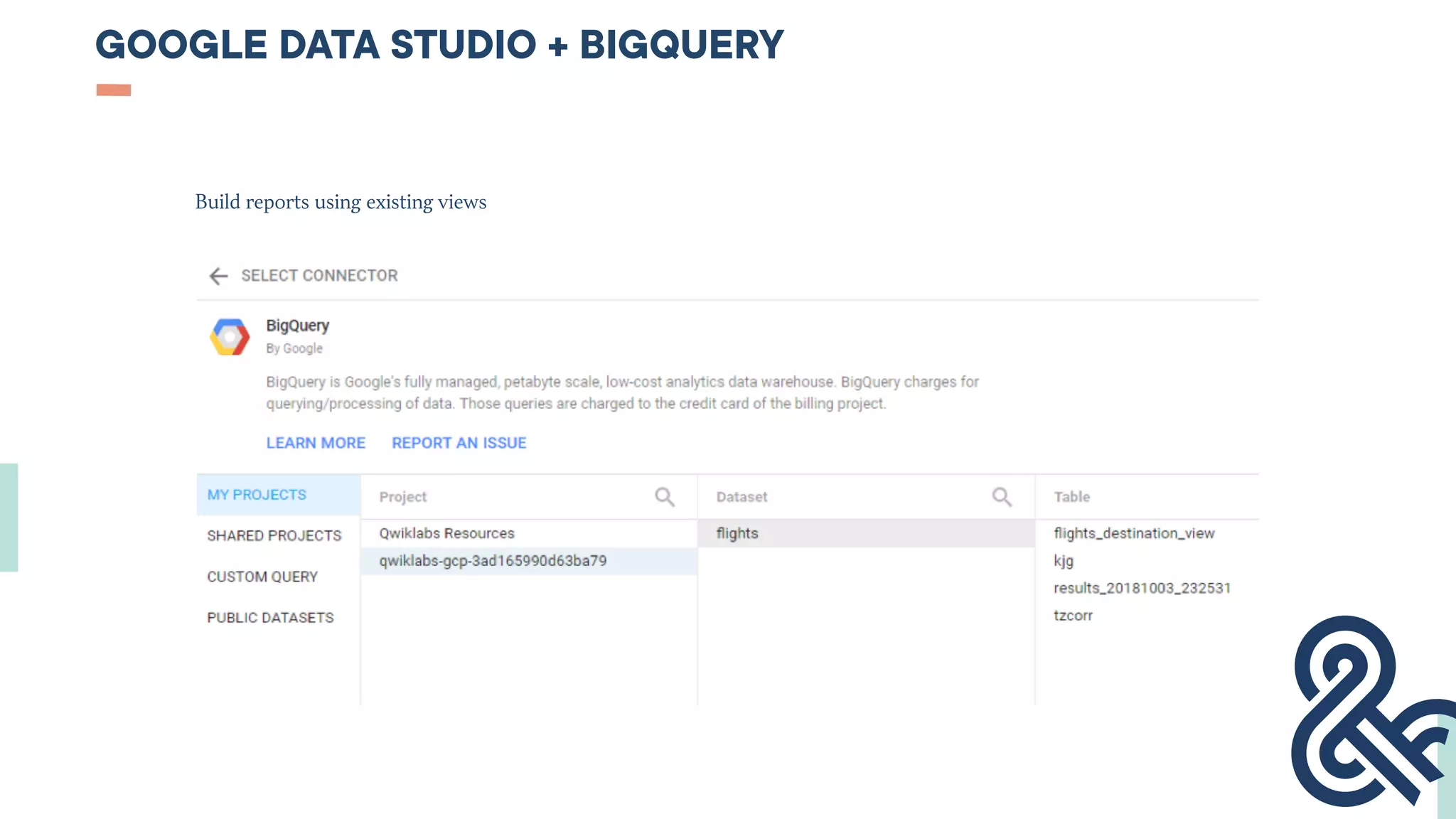

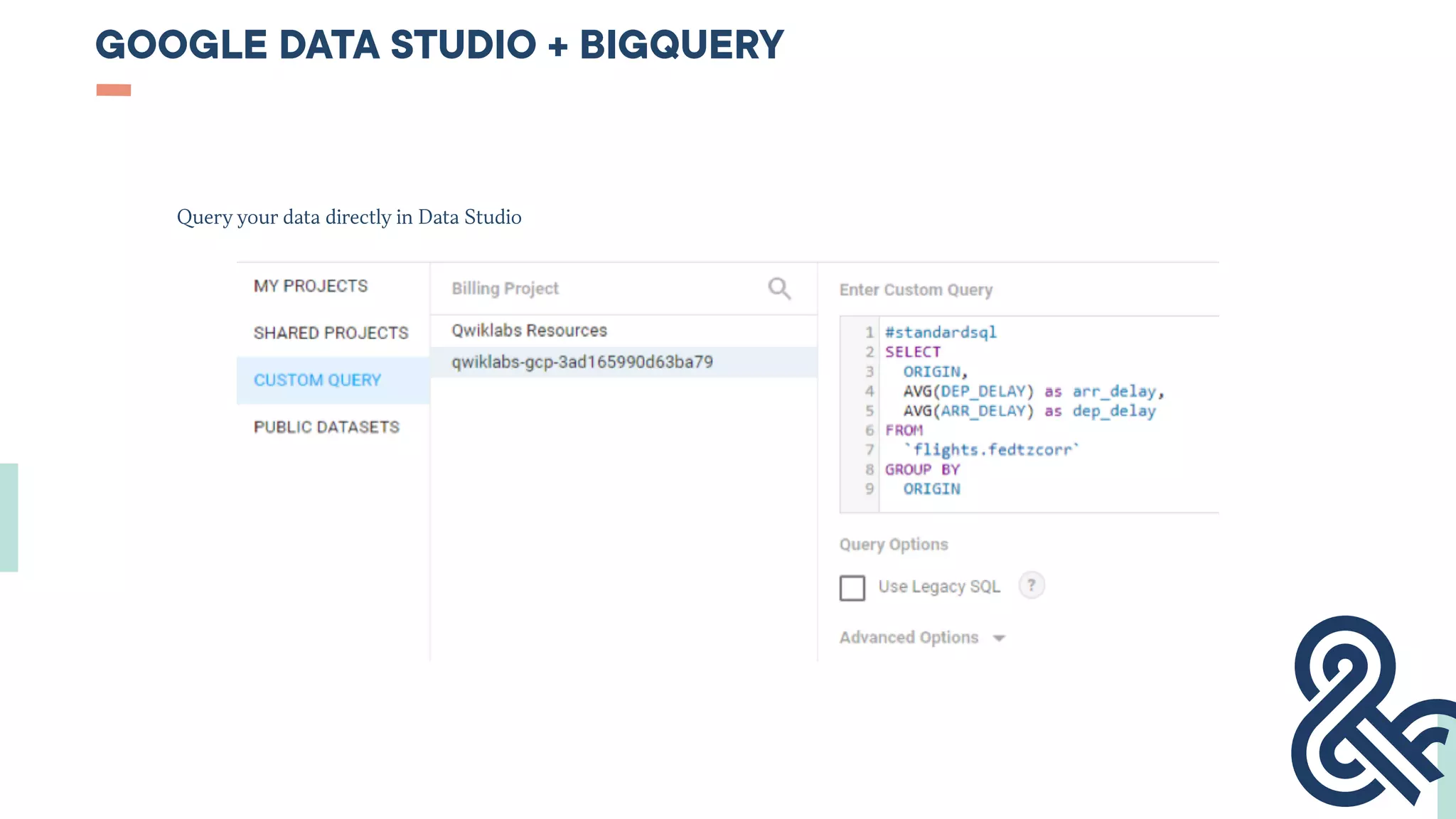

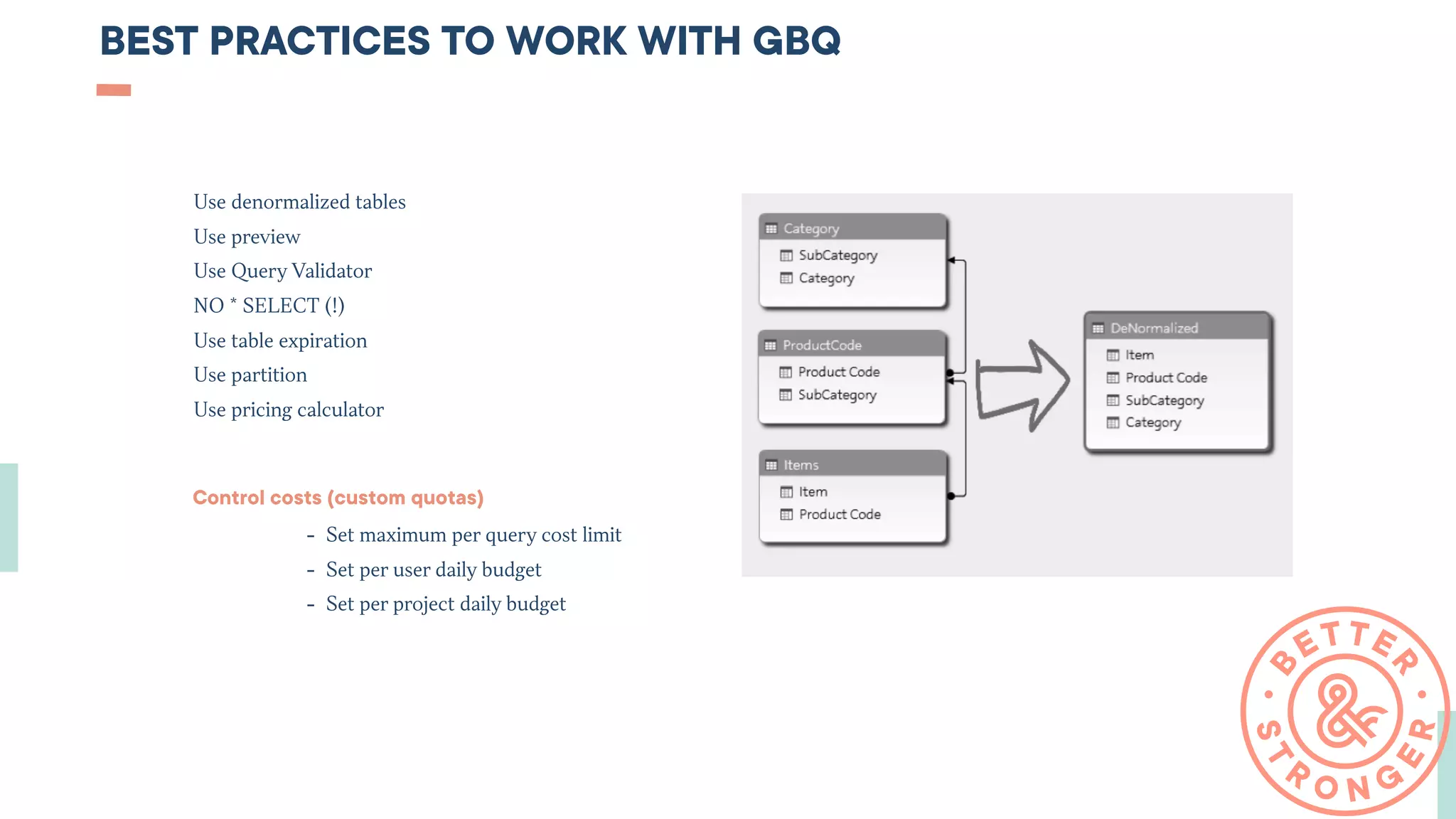

This document is a beginner's guide to Google BigQuery that covers loading data, using it, and building dashboards. It highlights BigQuery's advantages, such as simple management, high performance, and integration with other Google tools, and notes that basic SQL knowledge is required. It also gives best practices for managing cost and handling data effectively in BigQuery.
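
To make the cost-management point concrete, here is a minimal sketch of the arithmetic behind on-demand billing, which charges by the number of bytes a query processes. Everything in it is an assumption for illustration: the column names and sizes in `COLUMN_BYTES` are hypothetical, and `price_per_tib` is a parameter you would fill in from the current pricing page rather than a real rate. In practice the byte count would come from BigQuery itself (for example, a dry run), not from a hand-built table like this one.

```python
# Hypothetical per-column storage sizes for one table, in bytes.
# These numbers are made up for illustration only.
GIB = 2**30
TIB = 2**40

COLUMN_BYTES = {
    "user_id": 50 * GIB,
    "event_time": 50 * GIB,
    "event_name": 100 * GIB,
    "payload": 800 * GIB,
}


def bytes_for_columns(column_bytes: dict[str, int], columns: list[str]) -> int:
    """Sum the bytes scanned when a query reads only the given columns."""
    missing = [c for c in columns if c not in column_bytes]
    if missing:
        raise KeyError(f"unknown columns: {missing}")
    return sum(column_bytes[c] for c in columns)


def estimate_query_cost(bytes_processed: int, price_per_tib: float) -> float:
    """Estimate cost as (bytes processed / 1 TiB) * price per TiB.

    `price_per_tib` is an assumed input, not an official rate.
    """
    if bytes_processed < 0 or price_per_tib < 0:
        raise ValueError("bytes_processed and price_per_tib must be non-negative")
    return bytes_processed / TIB * price_per_tib


if __name__ == "__main__":
    assumed_price = 5.0  # placeholder value; check current pricing

    # SELECT * reads every column; naming columns reads only those.
    all_columns = bytes_for_columns(COLUMN_BYTES, list(COLUMN_BYTES))
    two_columns = bytes_for_columns(COLUMN_BYTES, ["user_id", "event_time"])

    print("all columns:", estimate_query_cost(all_columns, assumed_price))
    print("two columns:", estimate_query_cost(two_columns, assumed_price))
```

Because BigQuery stores data by column, selecting only the columns a query needs reduces the bytes processed, which is why the second estimate comes out lower than the first.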