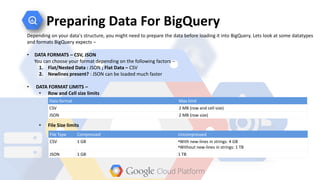

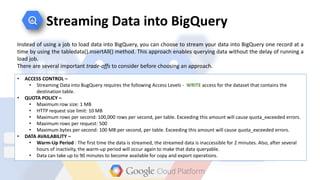

Google BigQuery is Google's fully managed big data analytics service for analyzing very large datasets. It is fast and easy to use, with no infrastructure to manage. Developers can stream up to 100,000 rows of data per second for near-real-time analysis. BigQuery bills per project on a pay-as-you-go model, and the first 1 TB of data processed each month is free of charge.

![Loading Data into BigQuery](https://image.slidesharecdn.com/bigquery-150714180931-lva1-app6891/85/Big-query-20-320.jpg)

Loading Data Using the BQ Tool

• If not specified, the default file format is CSV (comma-separated values).

• The files can also use newline-delimited JSON format.

• Schema: either a filename or a comma-separated list of column_name:datatype pairs that describe the file format.

• The data source may be on the local machine or on Cloud Storage.

"bq load" command syntax:

`bq load [--source_format=NEWLINE_DELIMITED_JSON|CSV] destination_table data_source_uri table_schema`
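As a rough illustration of the `bq load` syntax on the slide, the sketch below assembles the command's argument list in Python without running it. The helper name `build_bq_load_command` and the sample table, bucket, and schema values are hypothetical. Only the `--source_format` flag, its two values, and the positional order come from the slide.

```python
import shlex

# The two source formats named on the slide.
ALLOWED_FORMATS = {"CSV", "NEWLINE_DELIMITED_JSON"}


def build_bq_load_command(destination_table, data_source_uri, table_schema,
                          source_format=None):
    """Return the argument list for a `bq load` call (hypothetical helper).

    Positional order follows the slide:
    destination_table, data_source_uri, table_schema.
    """
    args = ["bq", "load"]
    if source_format is not None:
        if source_format not in ALLOWED_FORMATS:
            raise ValueError(f"unsupported source format: {source_format}")
        args.append(f"--source_format={source_format}")
    # When source_format is omitted, the flag is left out and the tool's
    # default (CSV, per the slide) applies.
    args += [destination_table, data_source_uri, table_schema]
    return args


if __name__ == "__main__":
    # Sample values are made up for illustration.
    cmd = build_bq_load_command(
        "mydataset.mytable",
        "gs://my-bucket/data.json",
        "name:string,age:integer",
        source_format="NEWLINE_DELIMITED_JSON",
    )
    # Print the command as a shell-quoted string instead of executing it.
    print(shlex.join(cmd))
```

Returning a list rather than a single string means the result can be passed to `subprocess.run` without shell quoting issues, assuming the `bq` tool is installed and authenticated.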