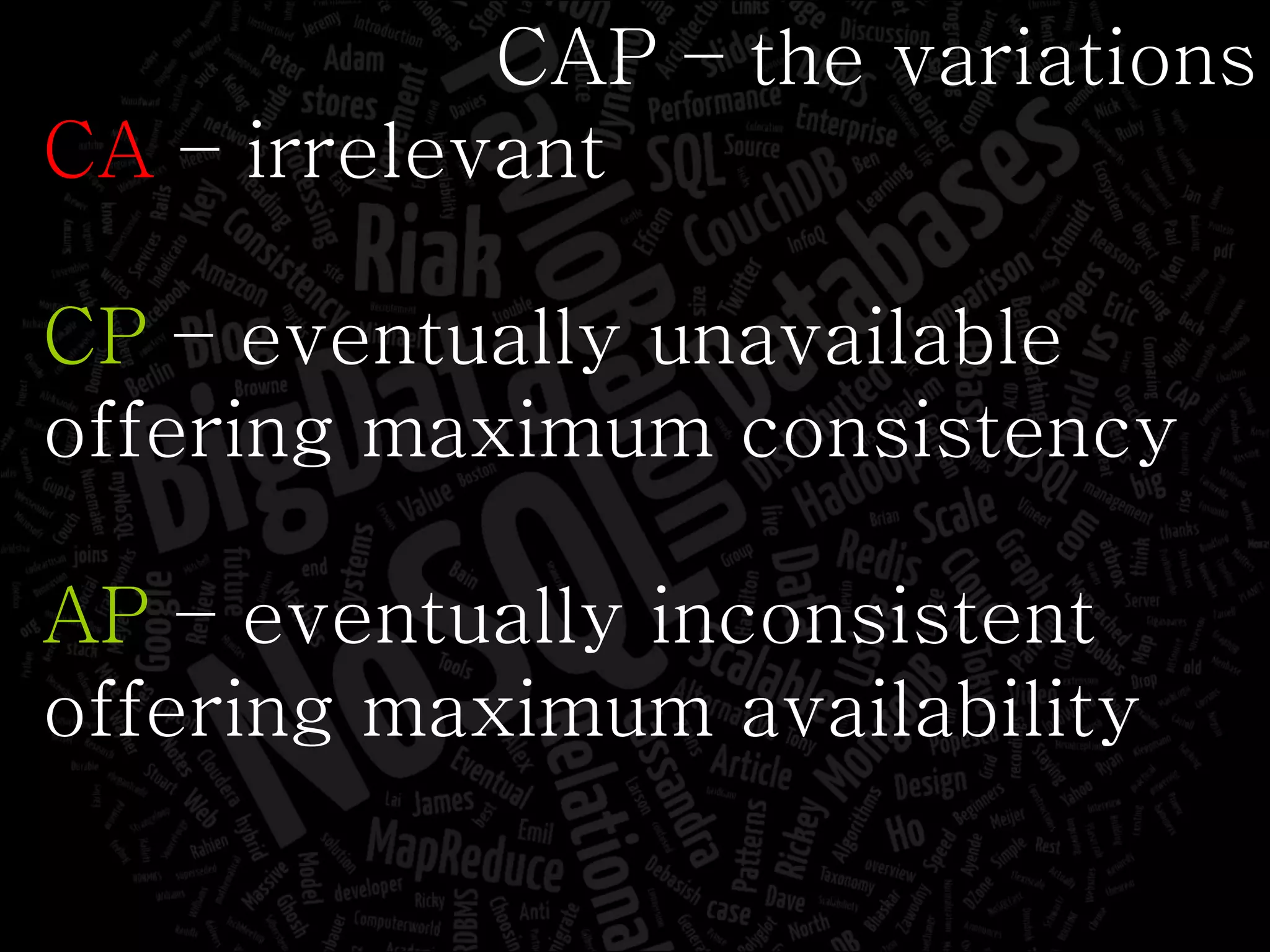

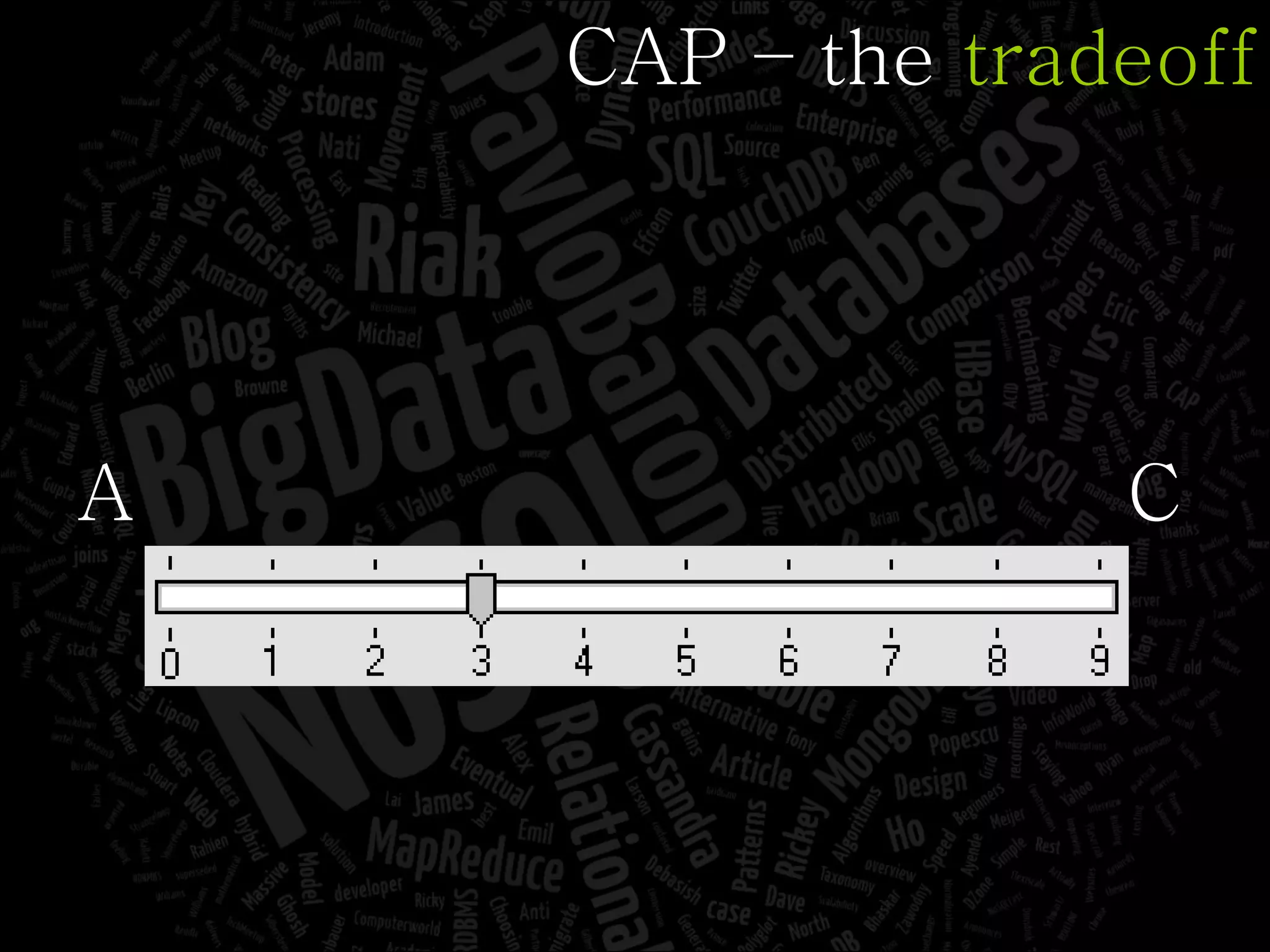

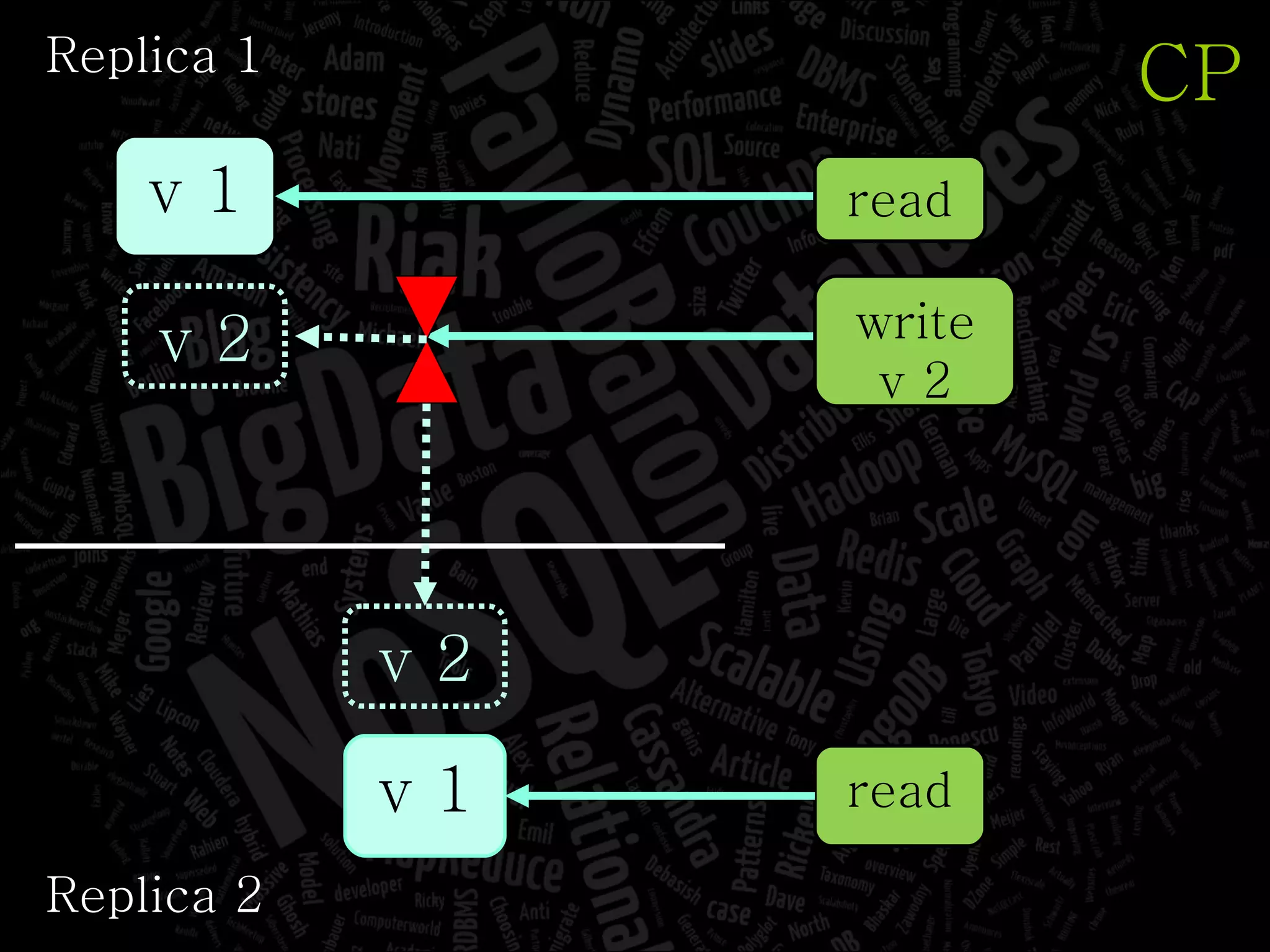

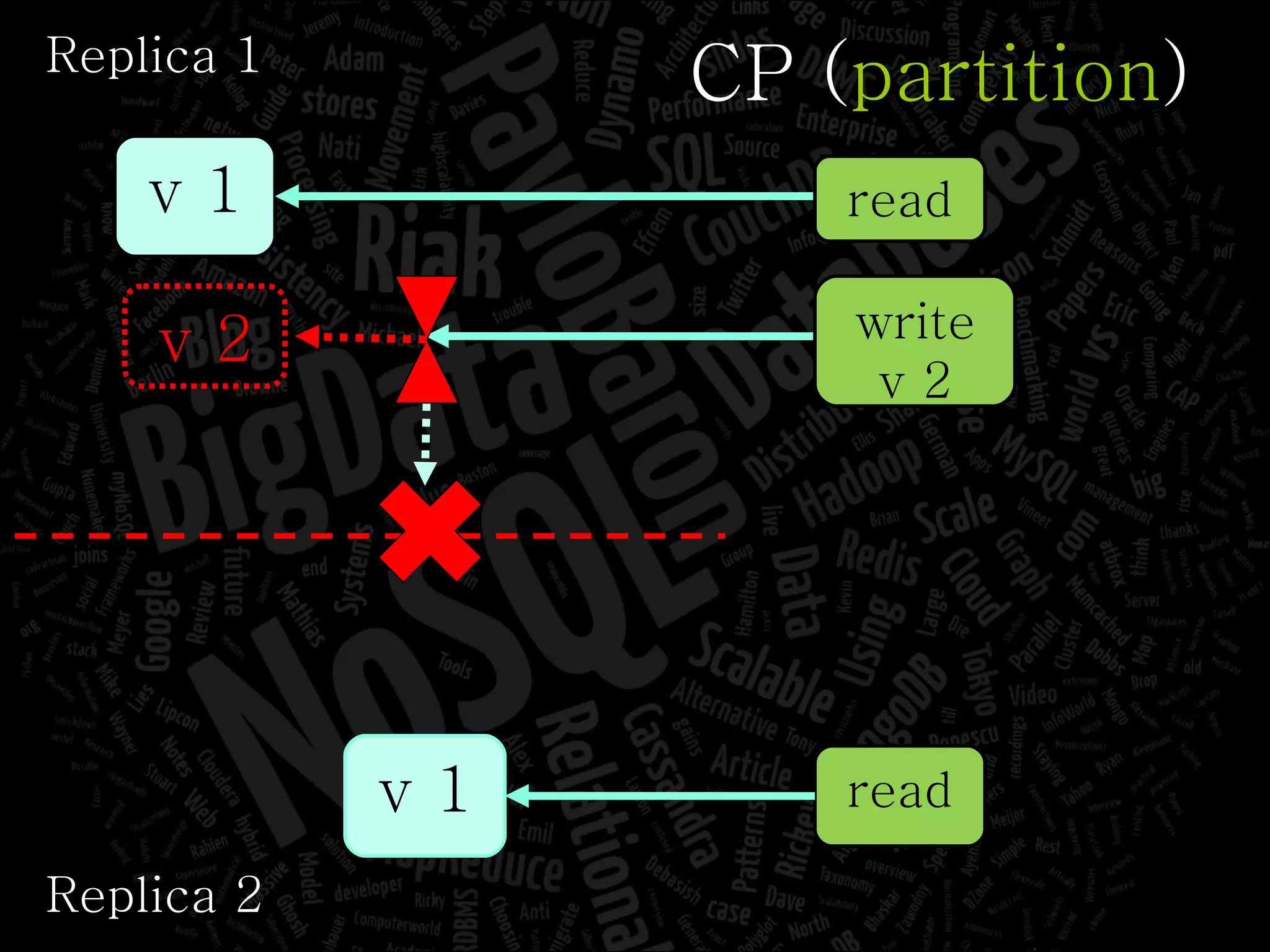

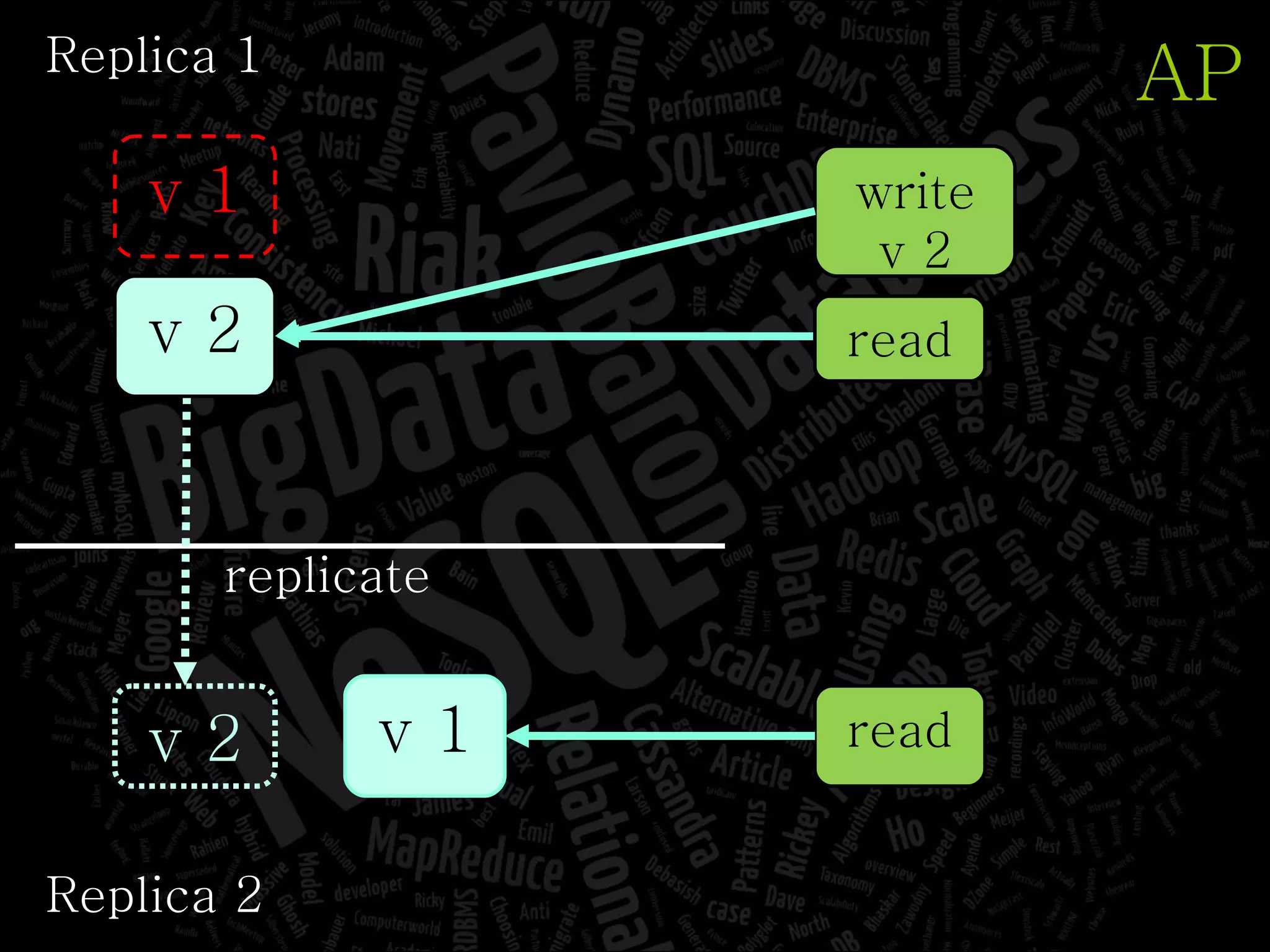

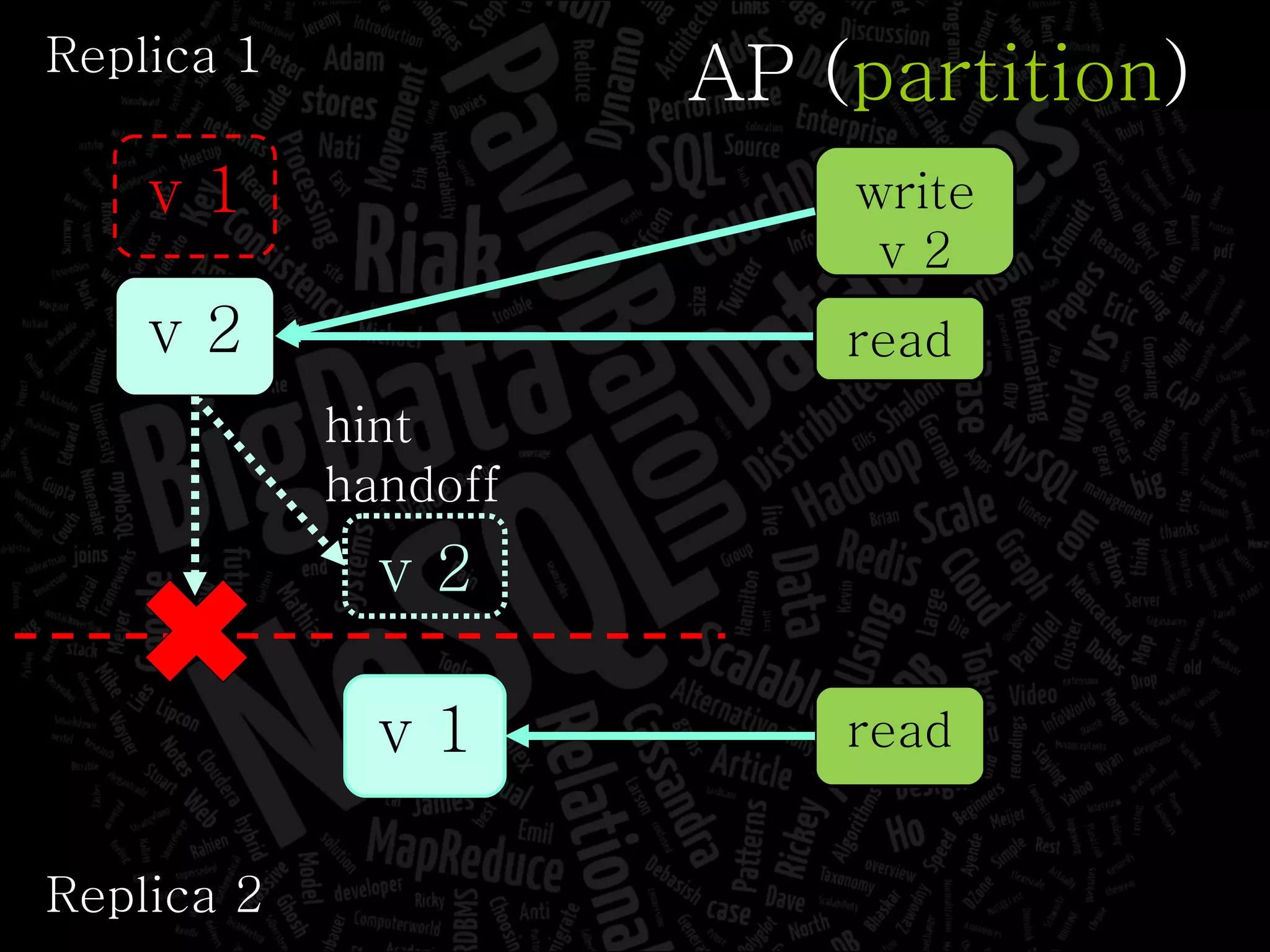

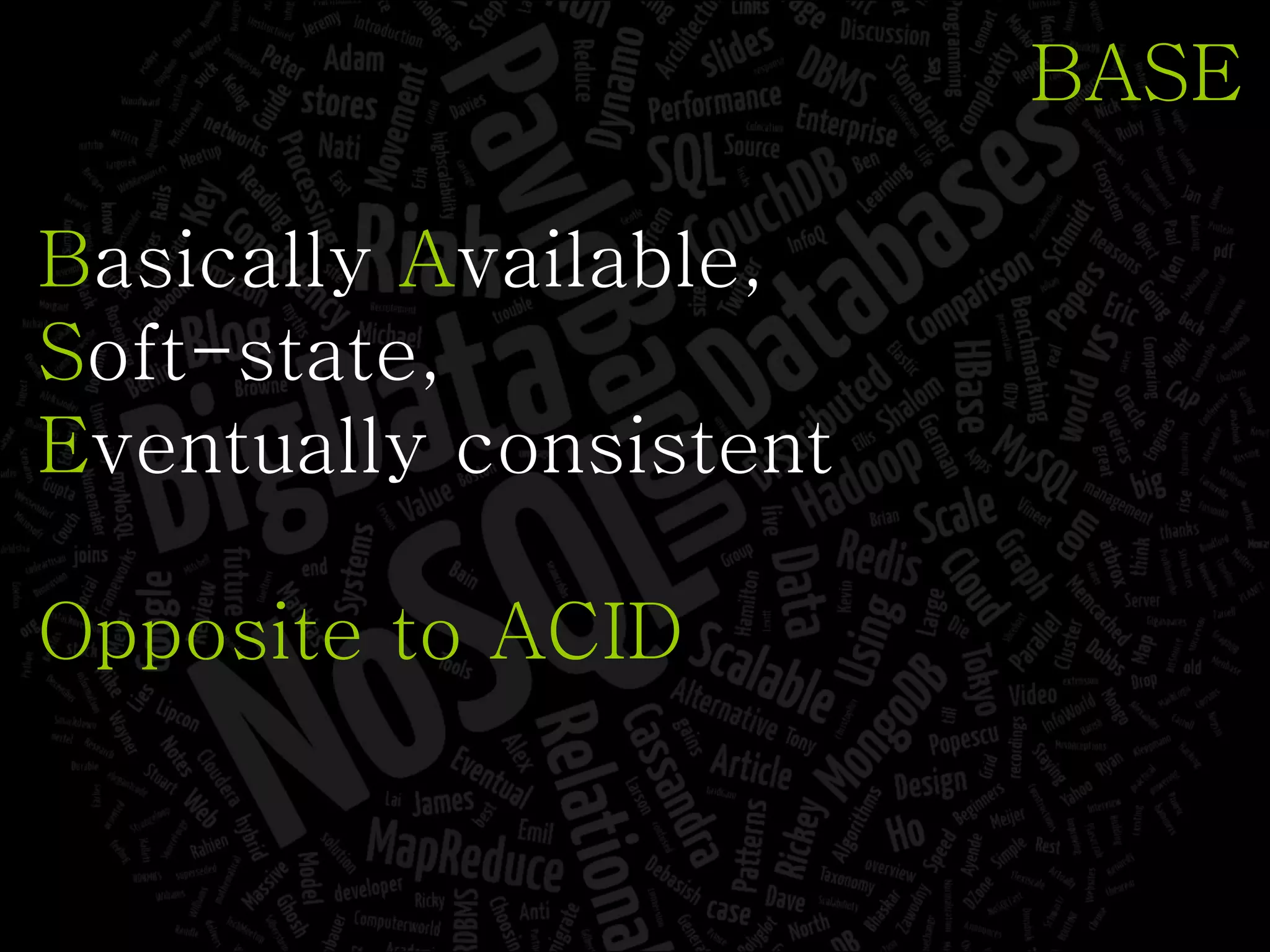

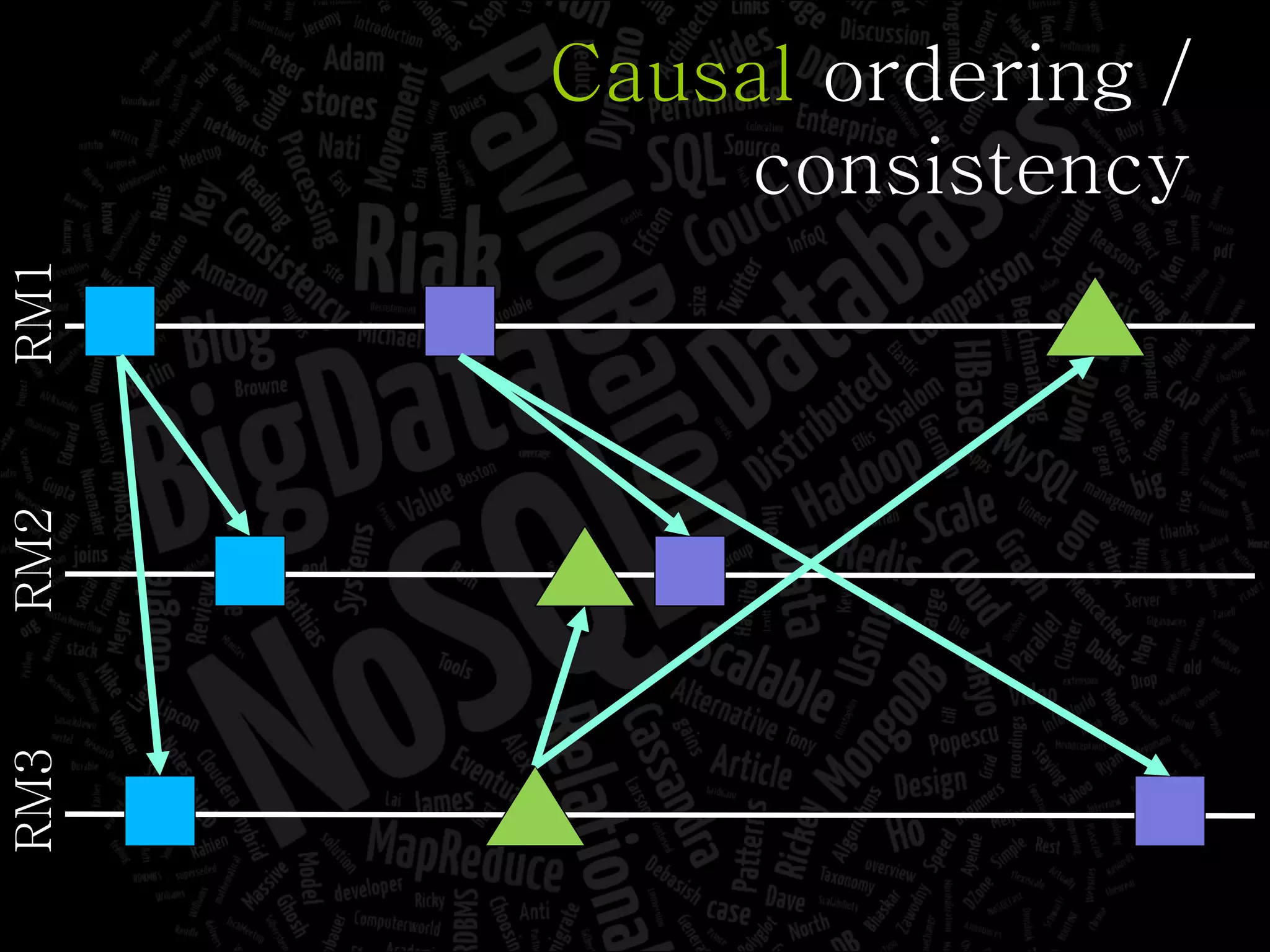

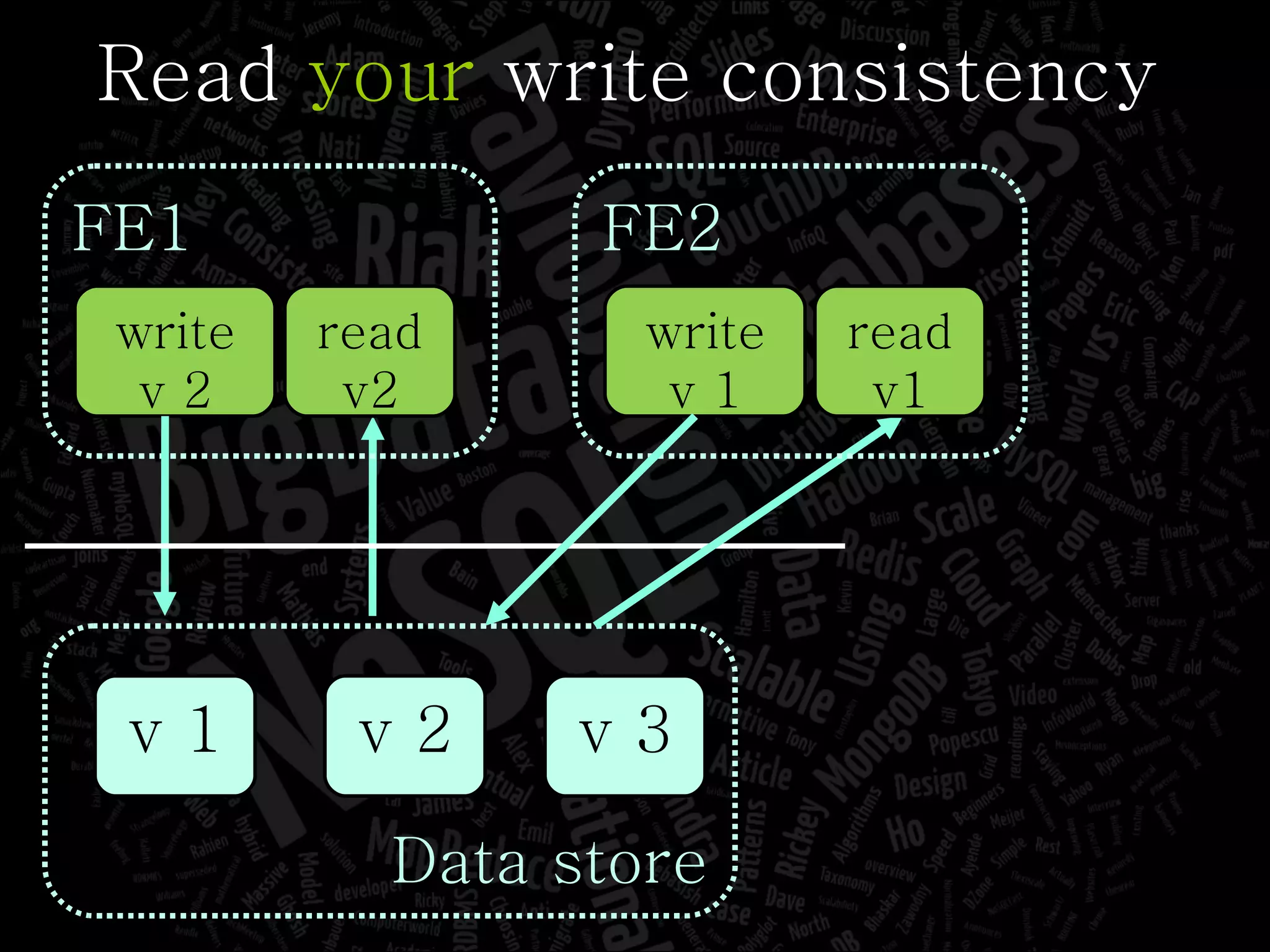

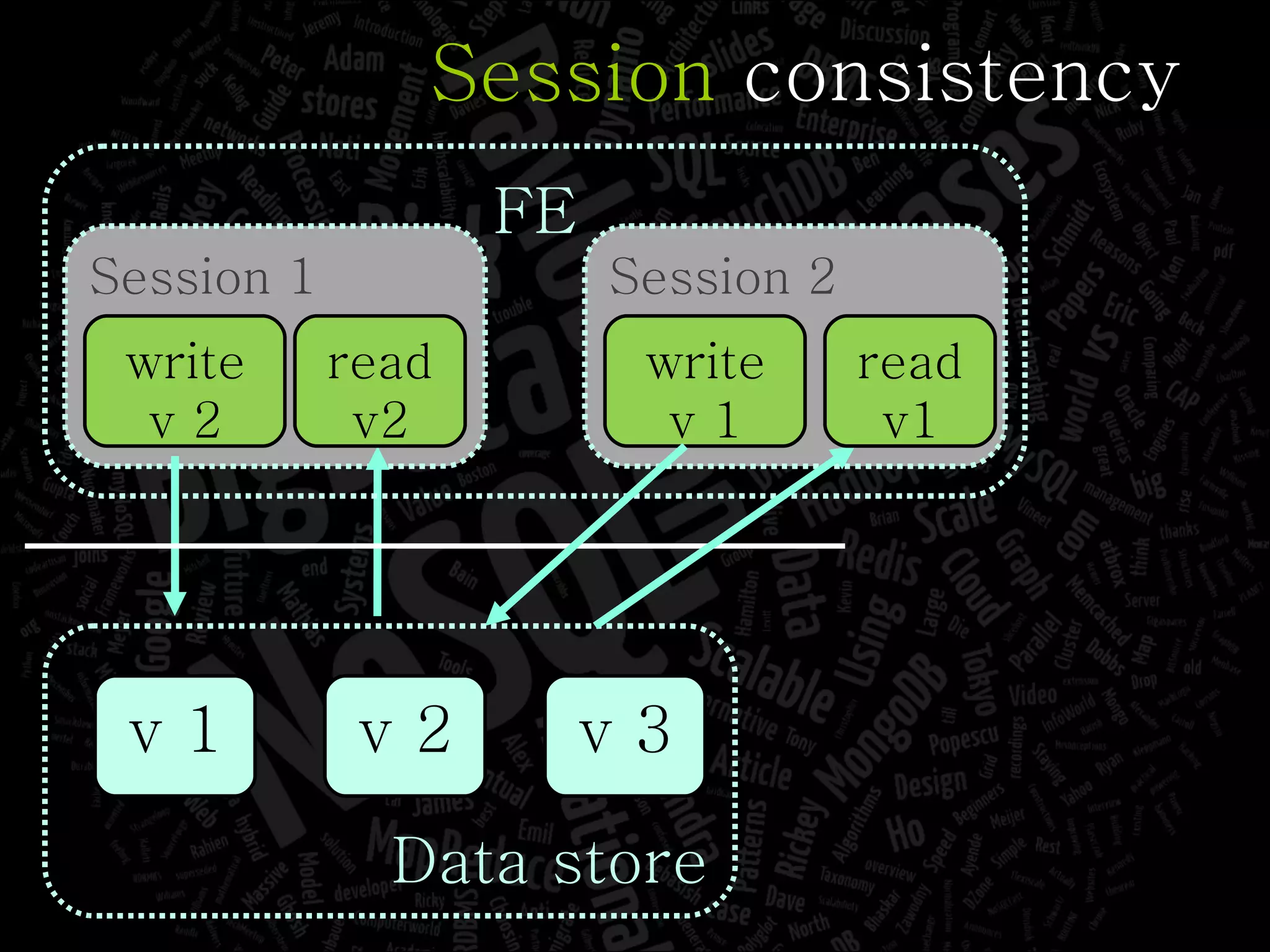

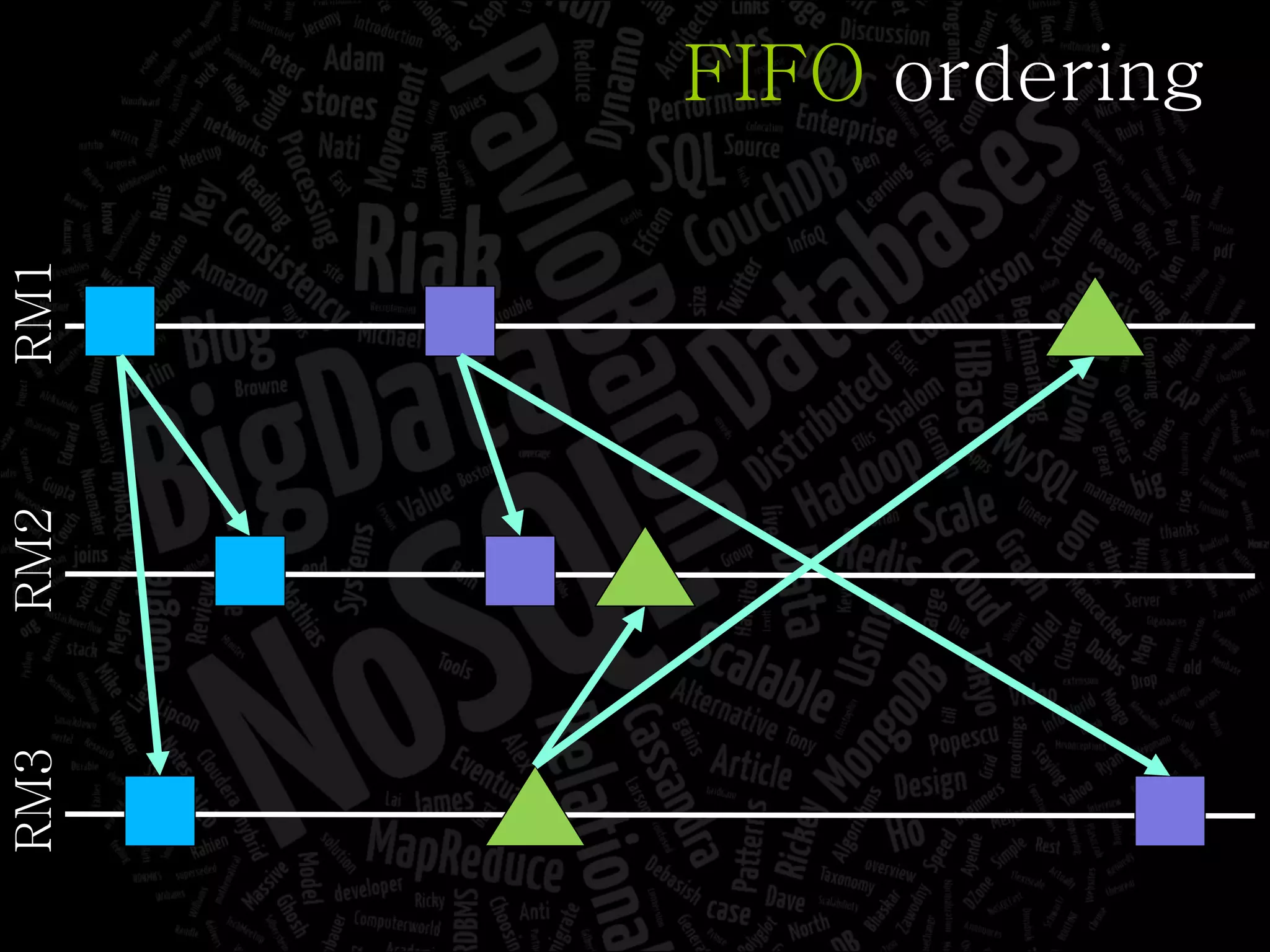

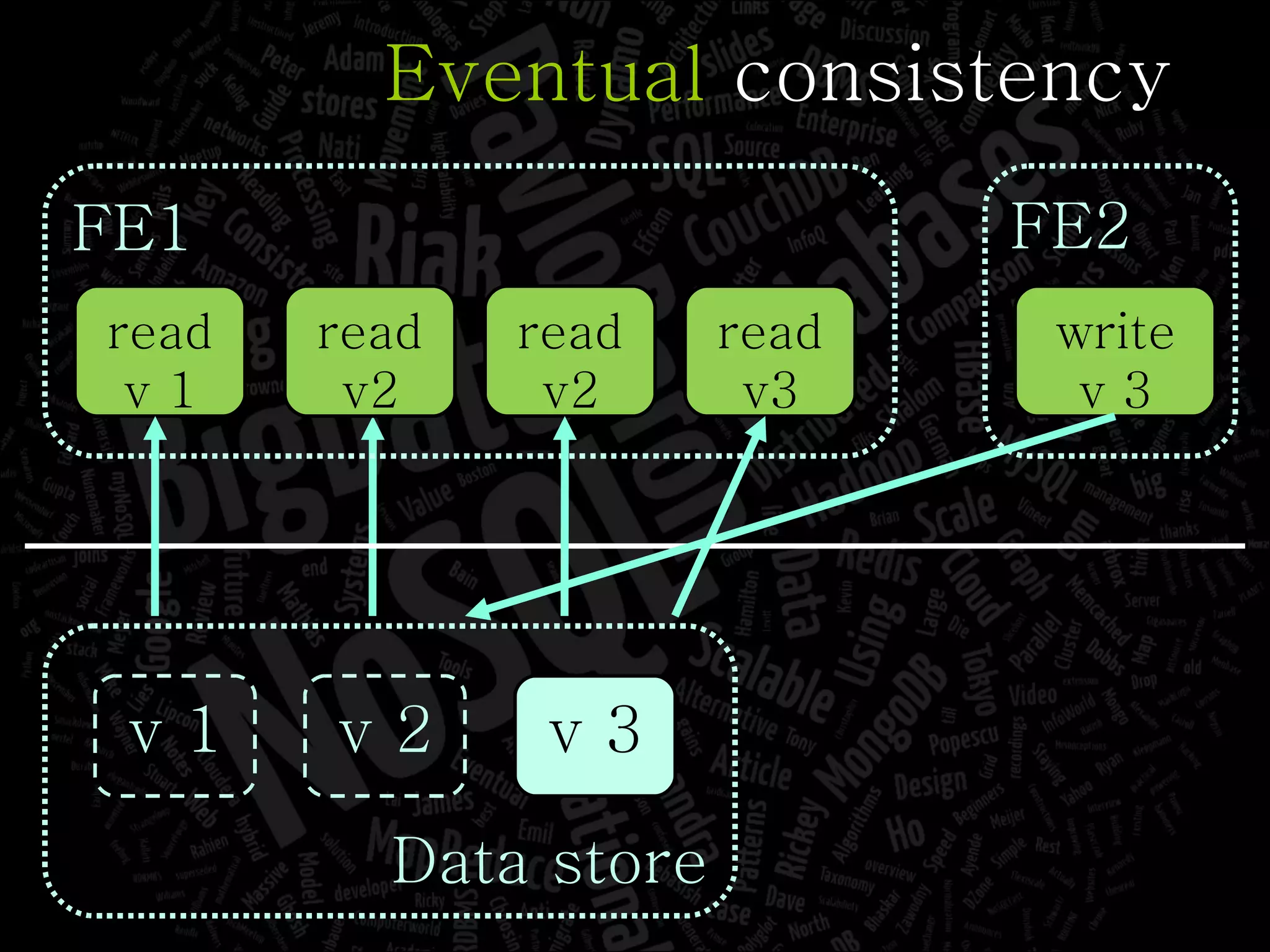

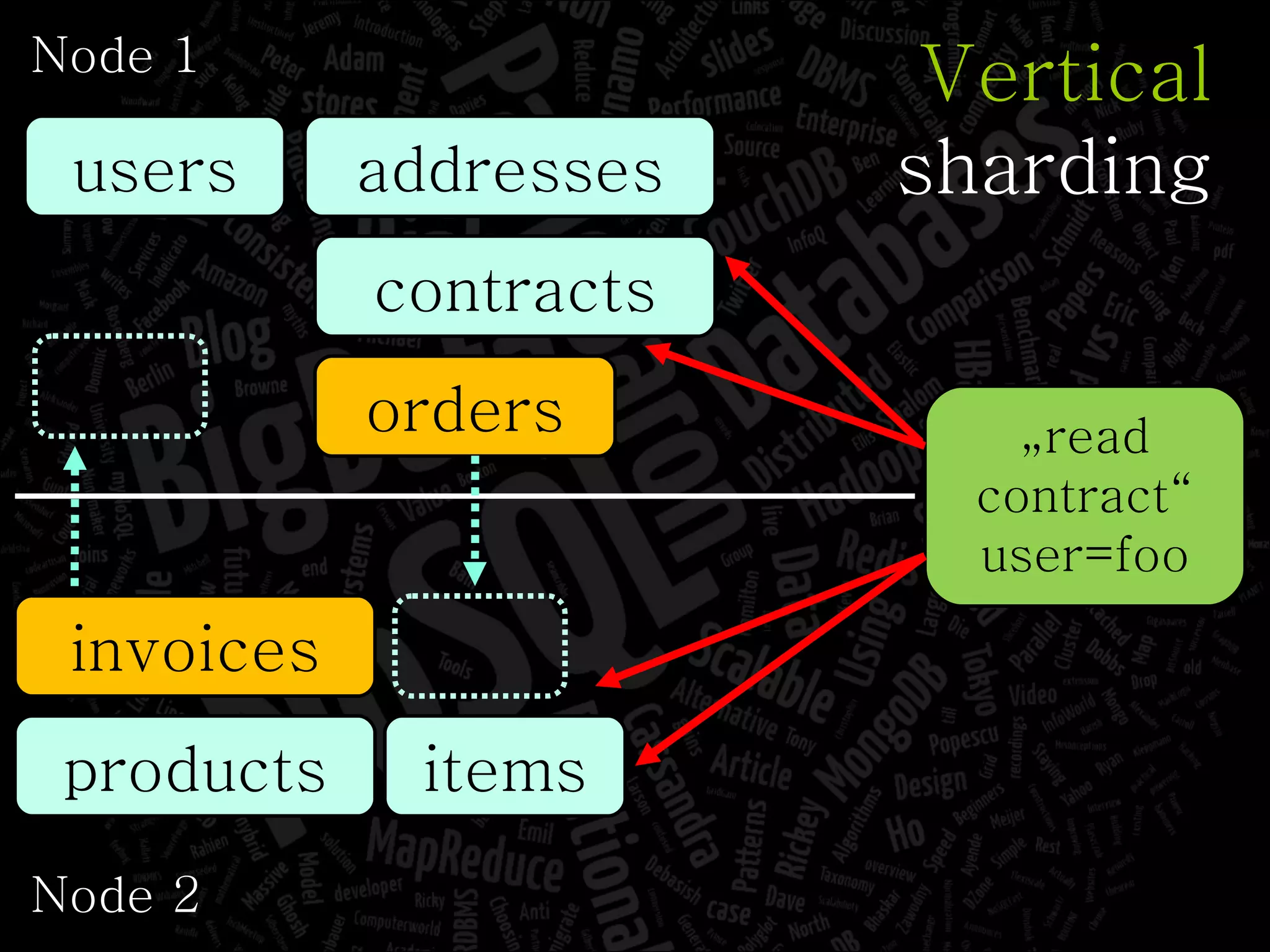

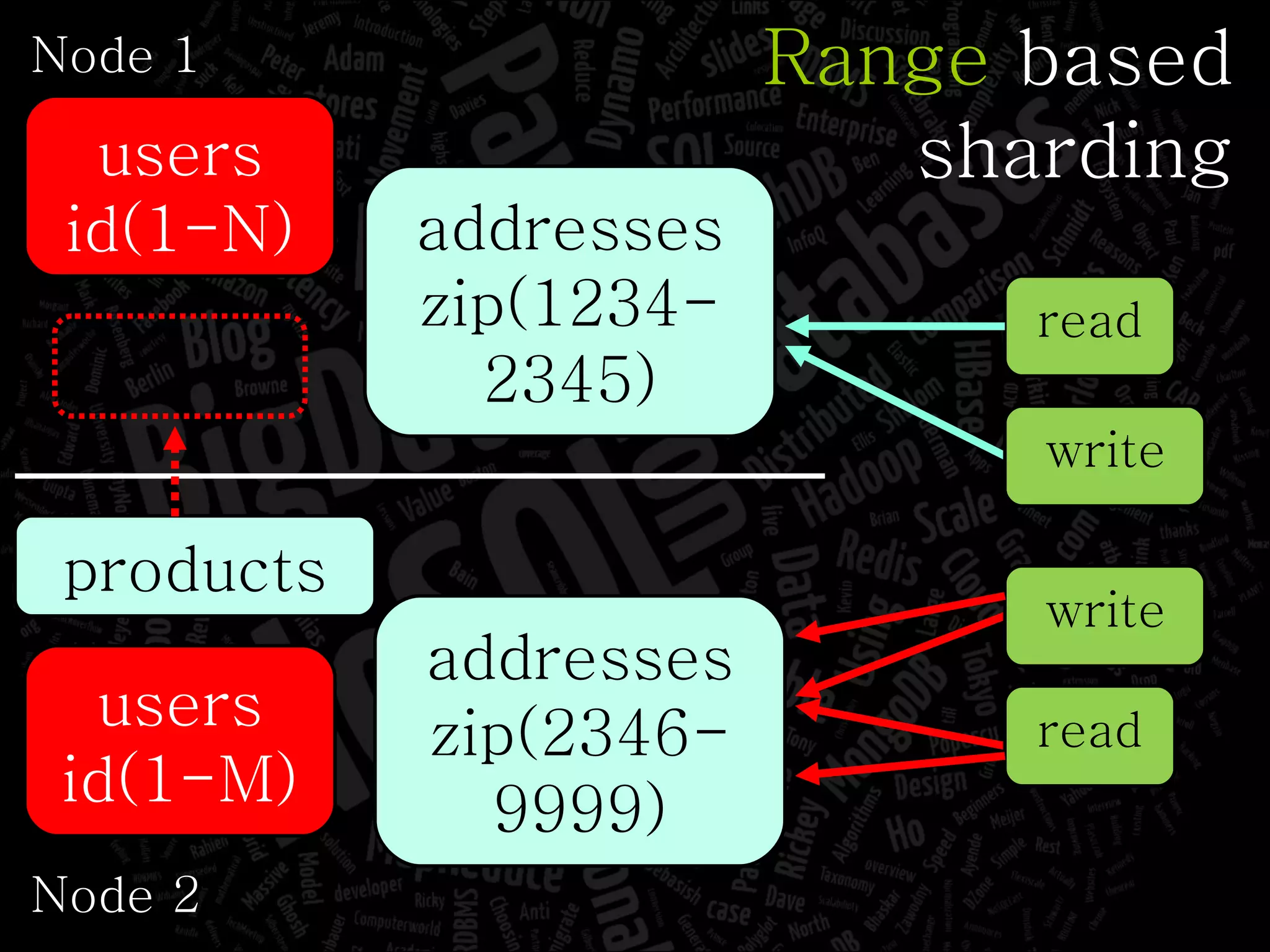

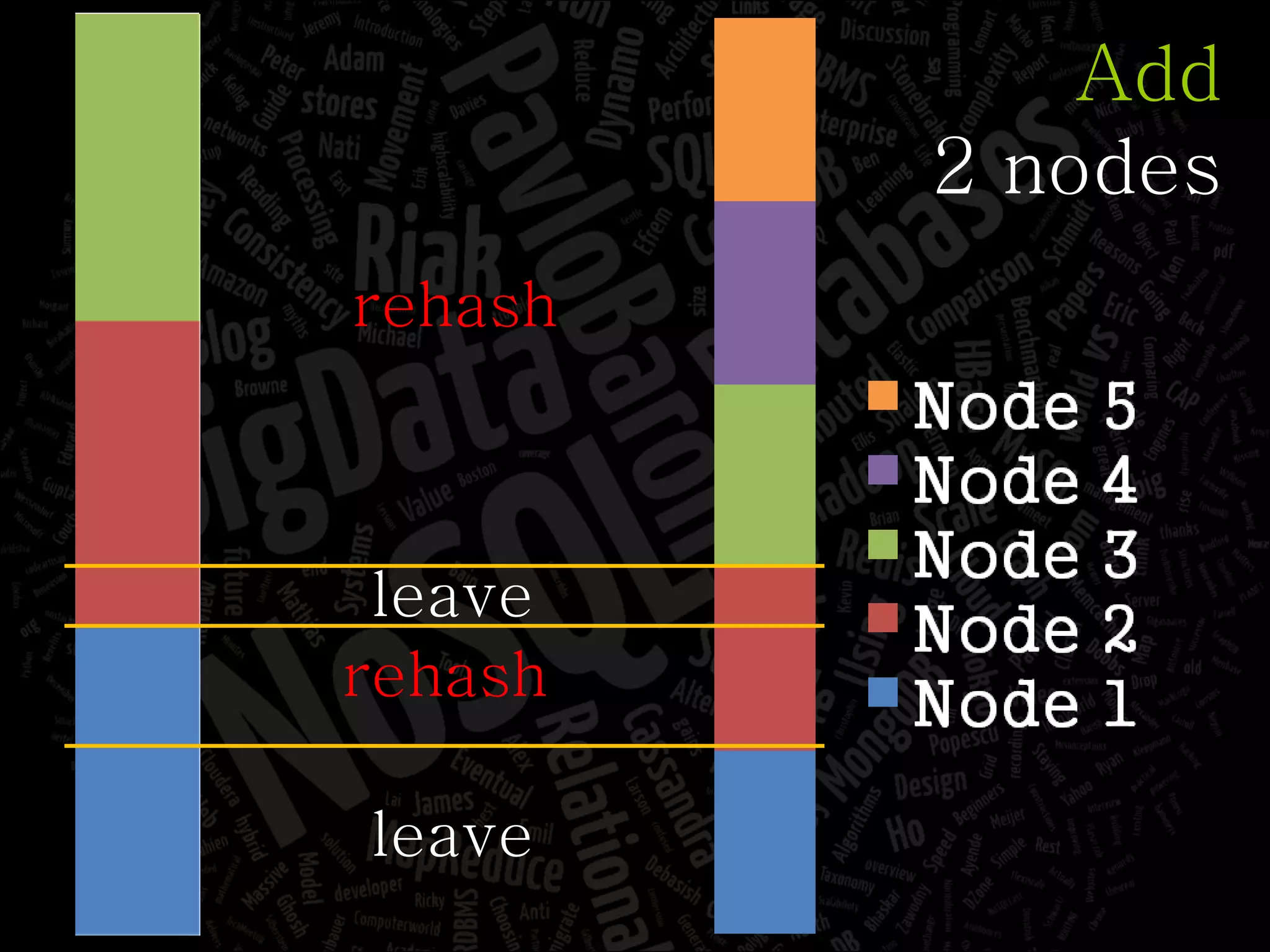

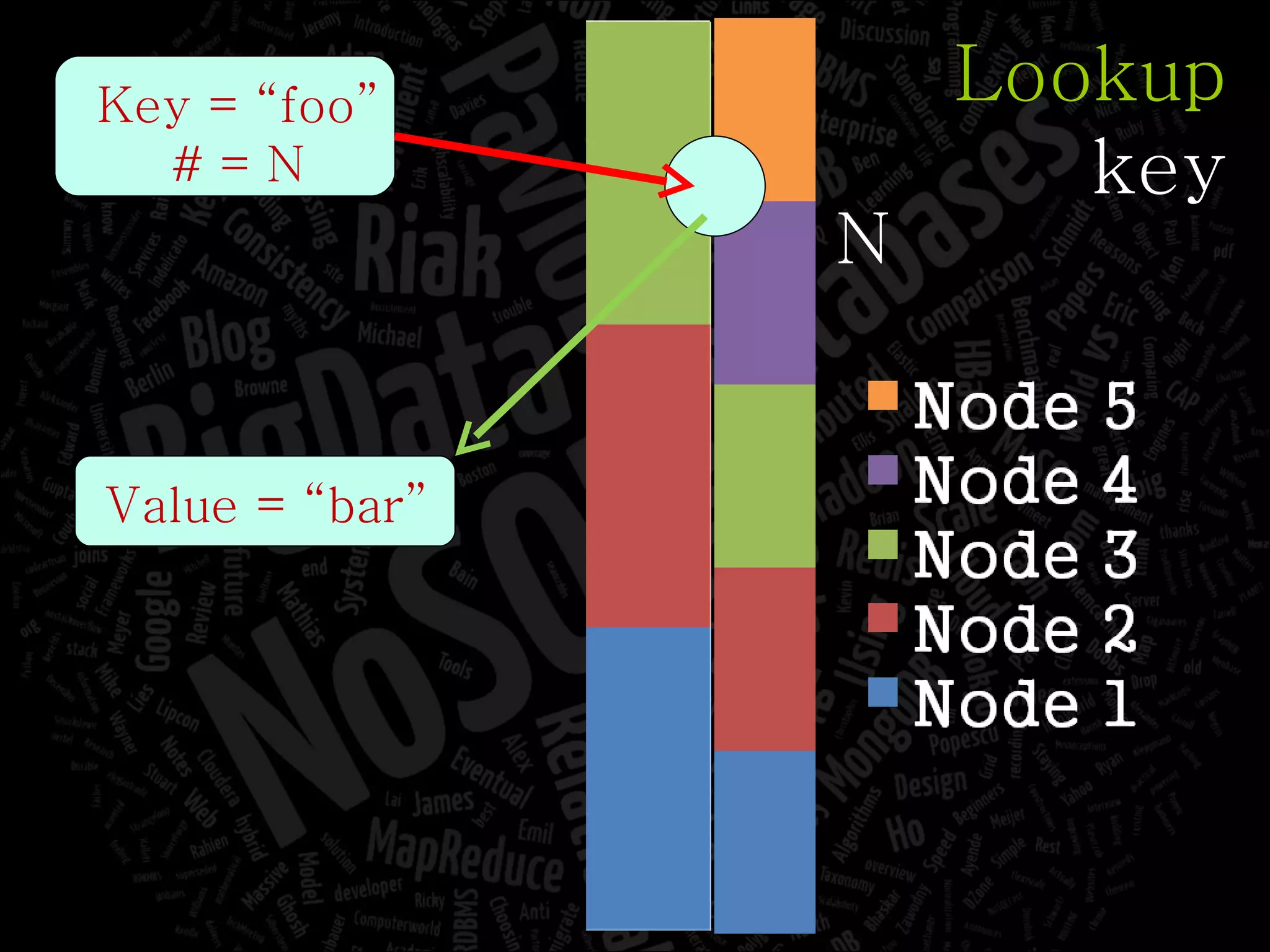

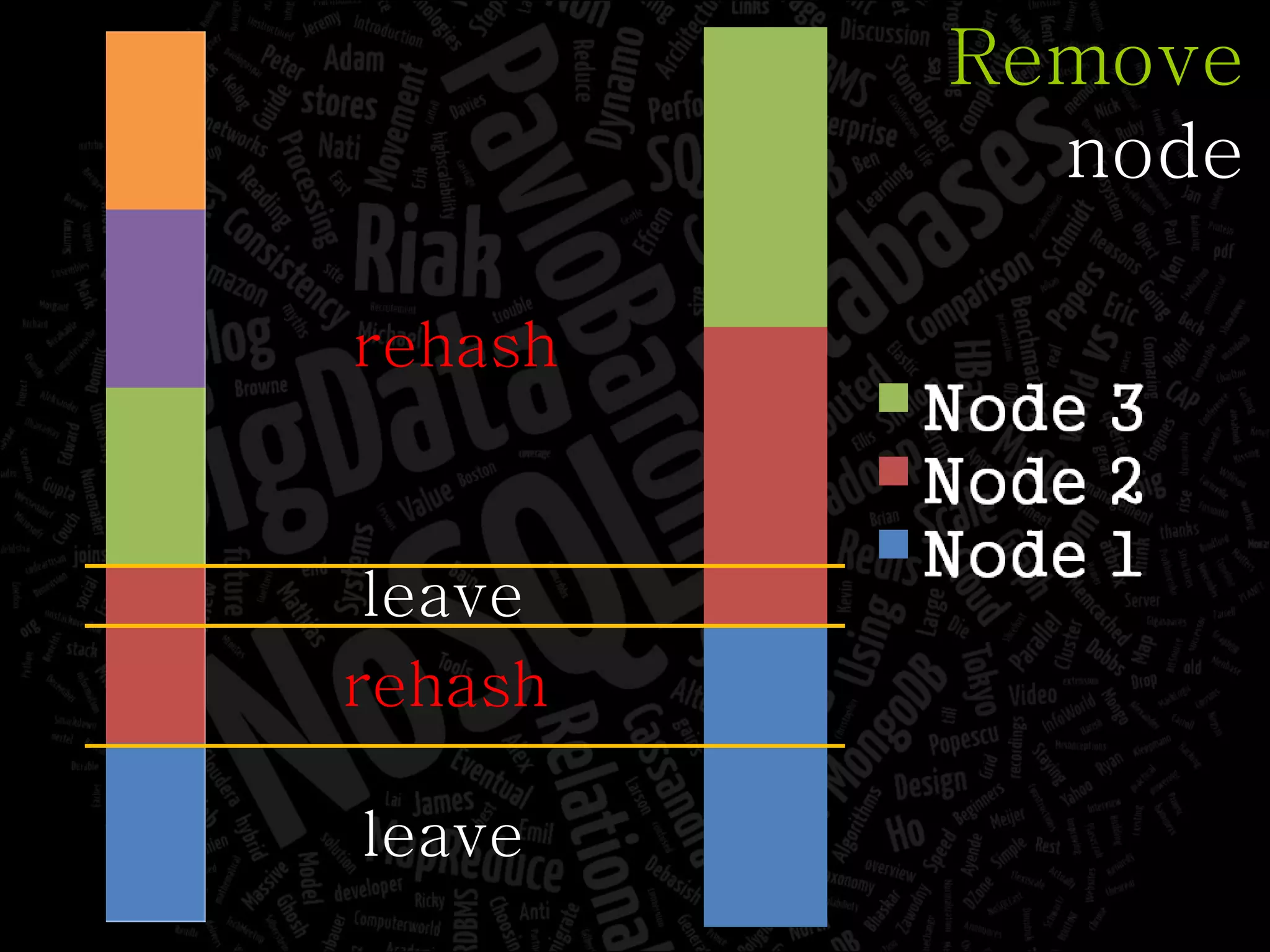

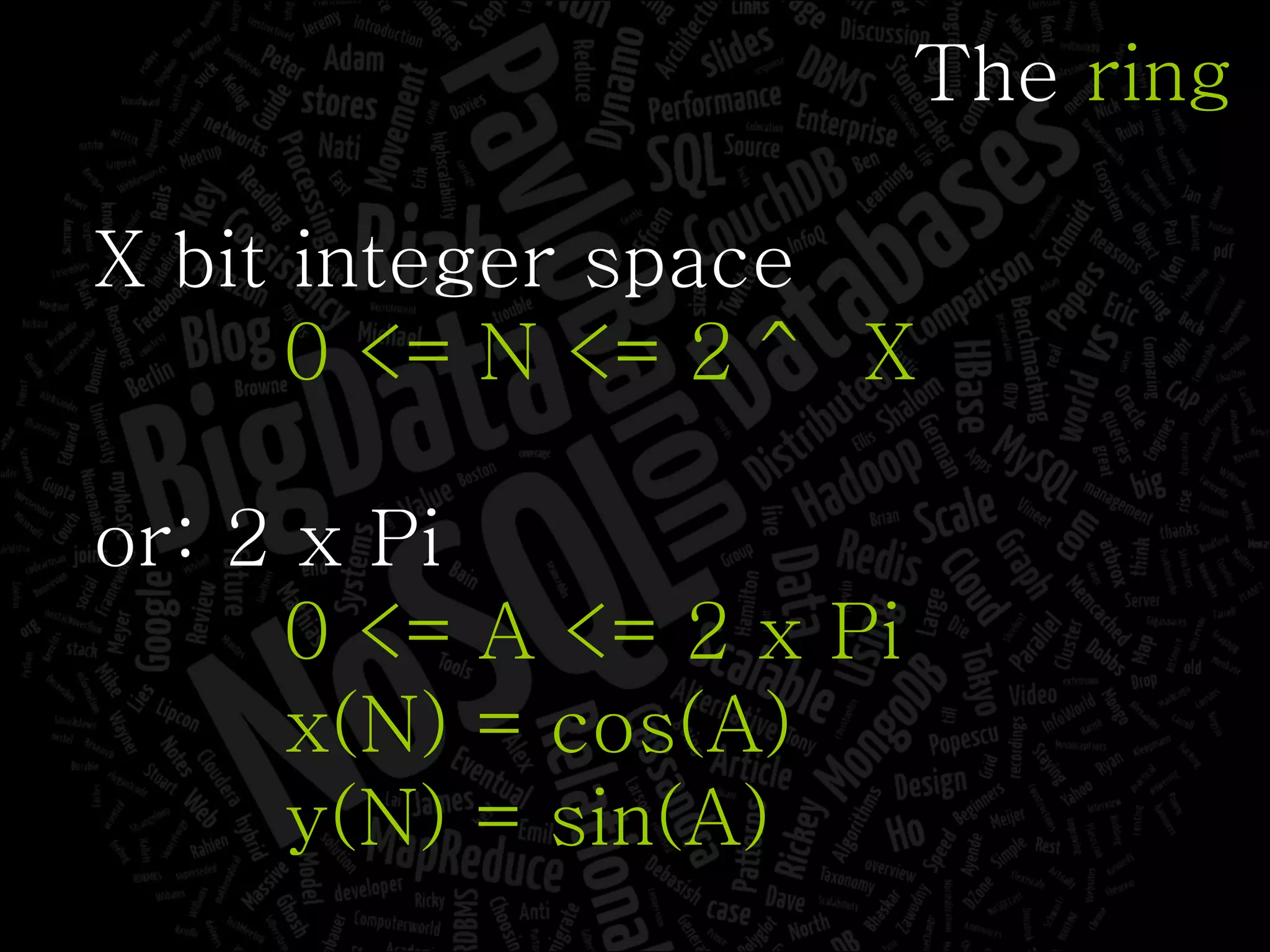

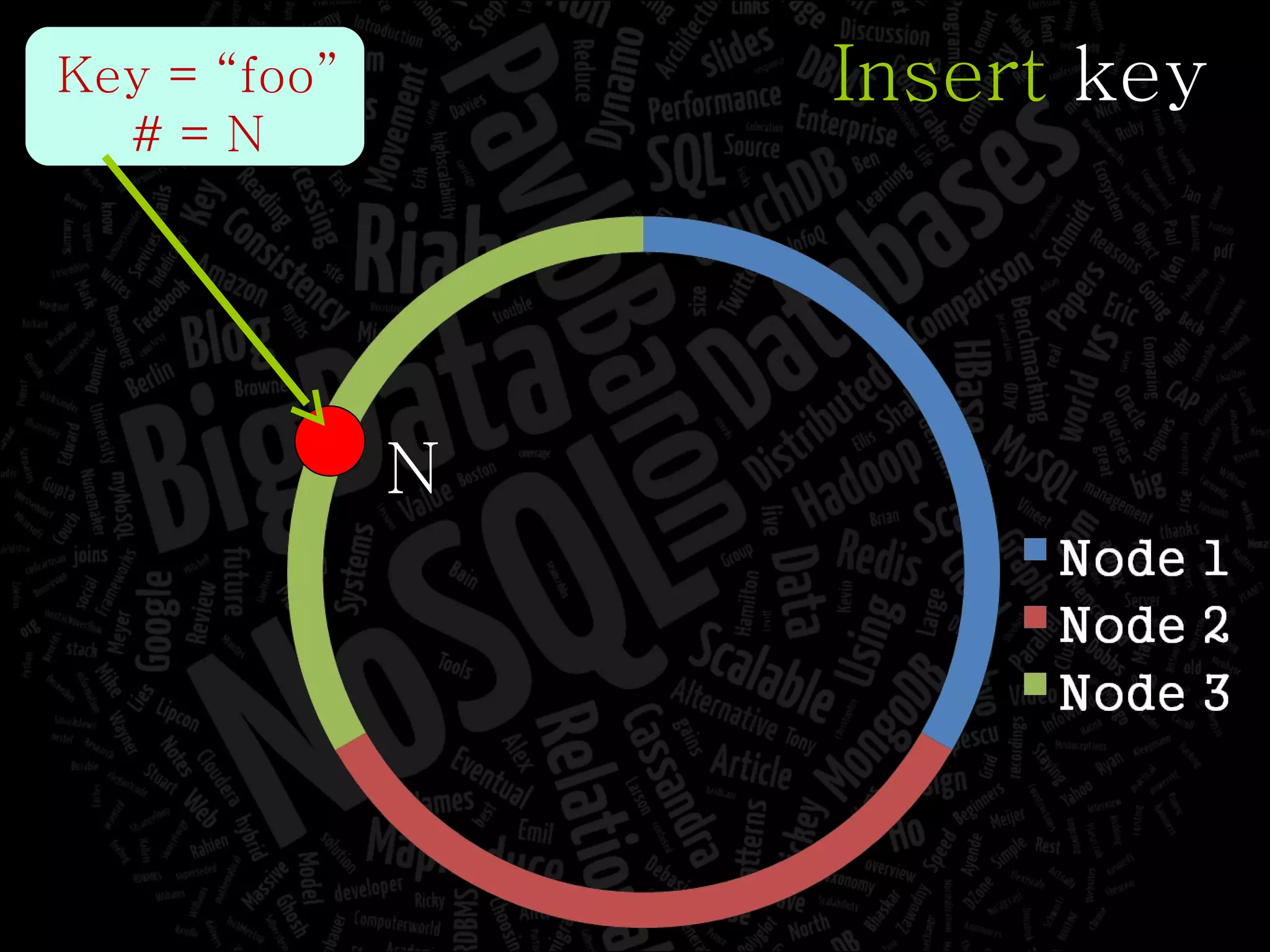

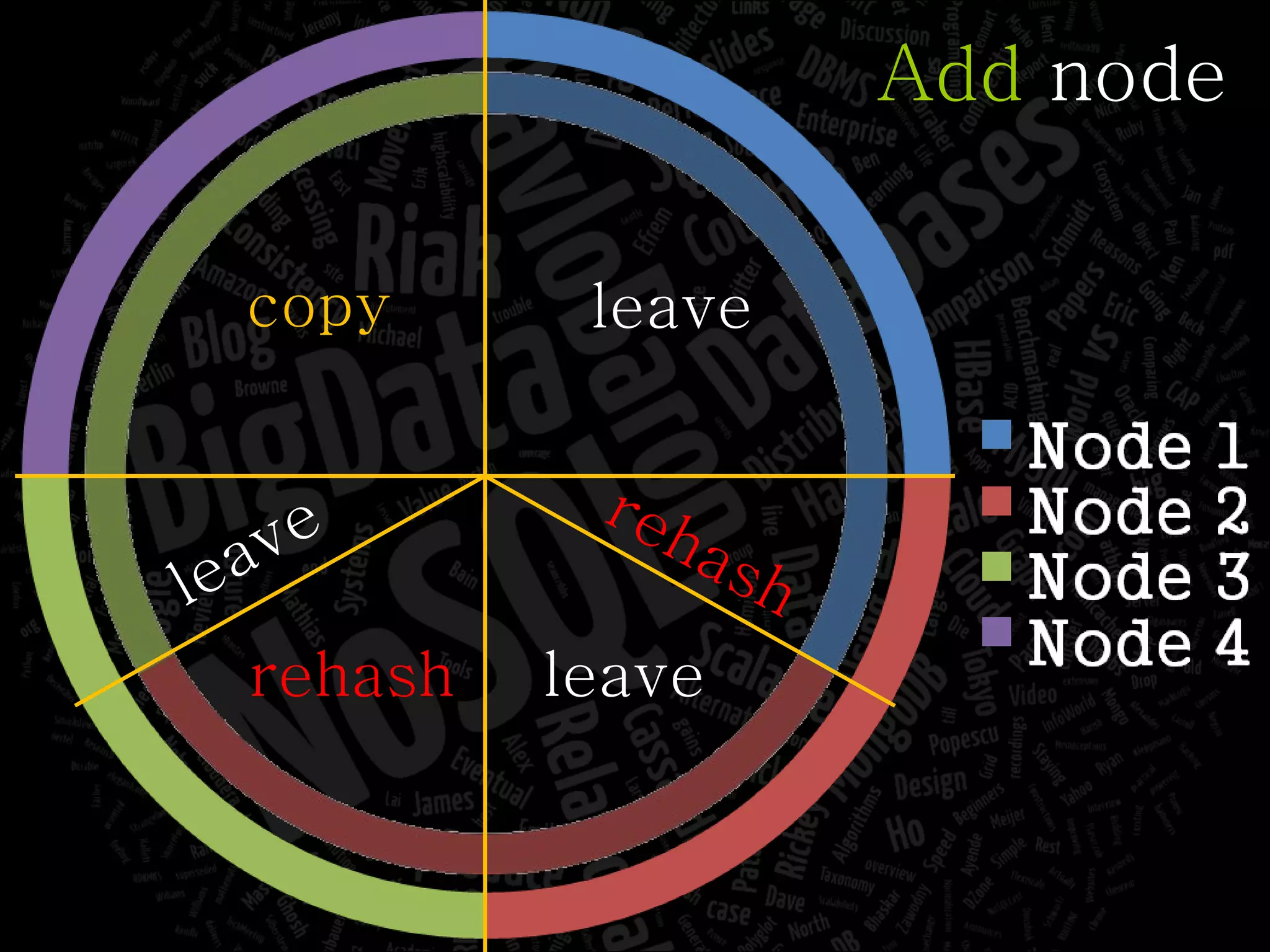

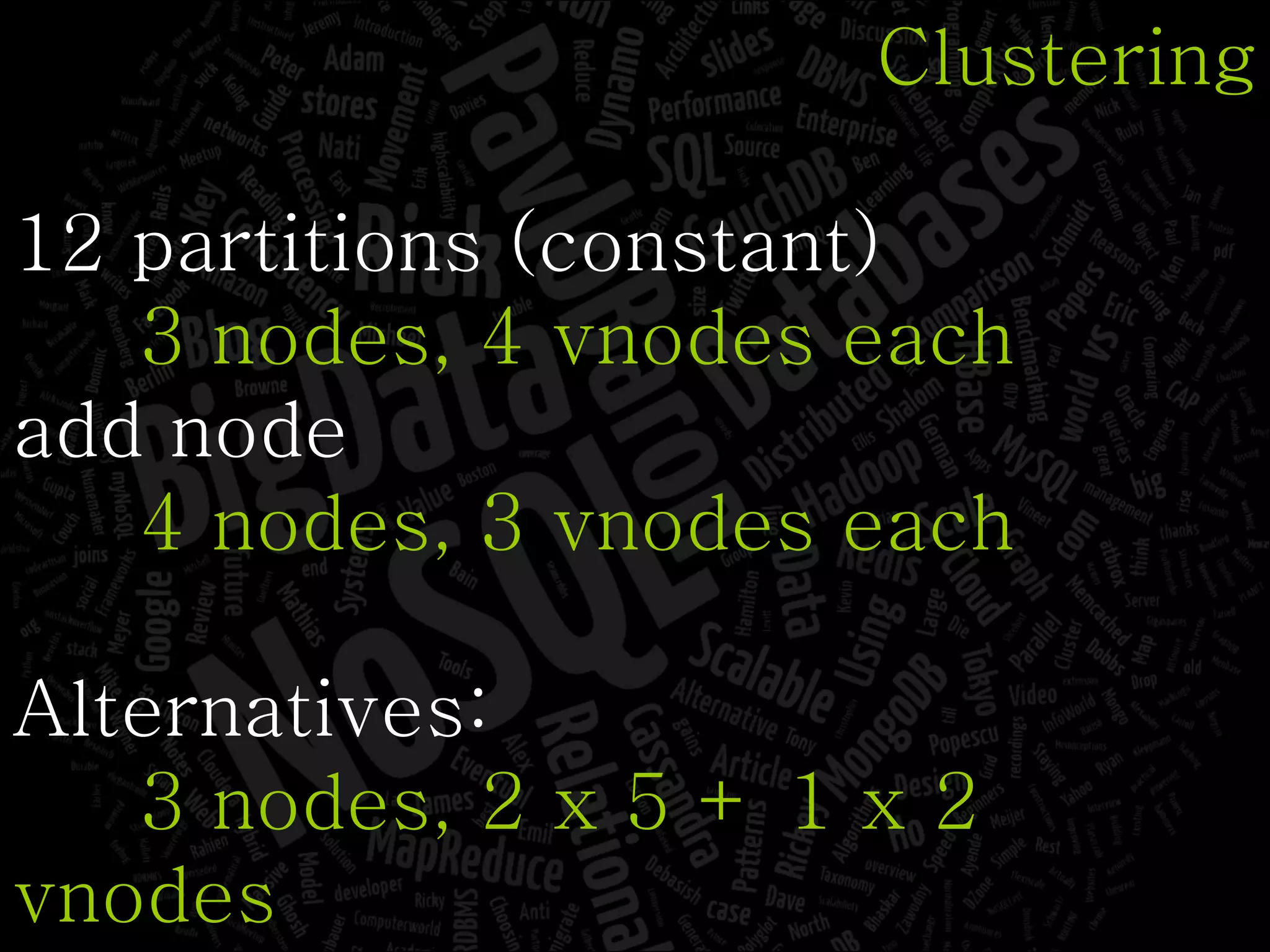

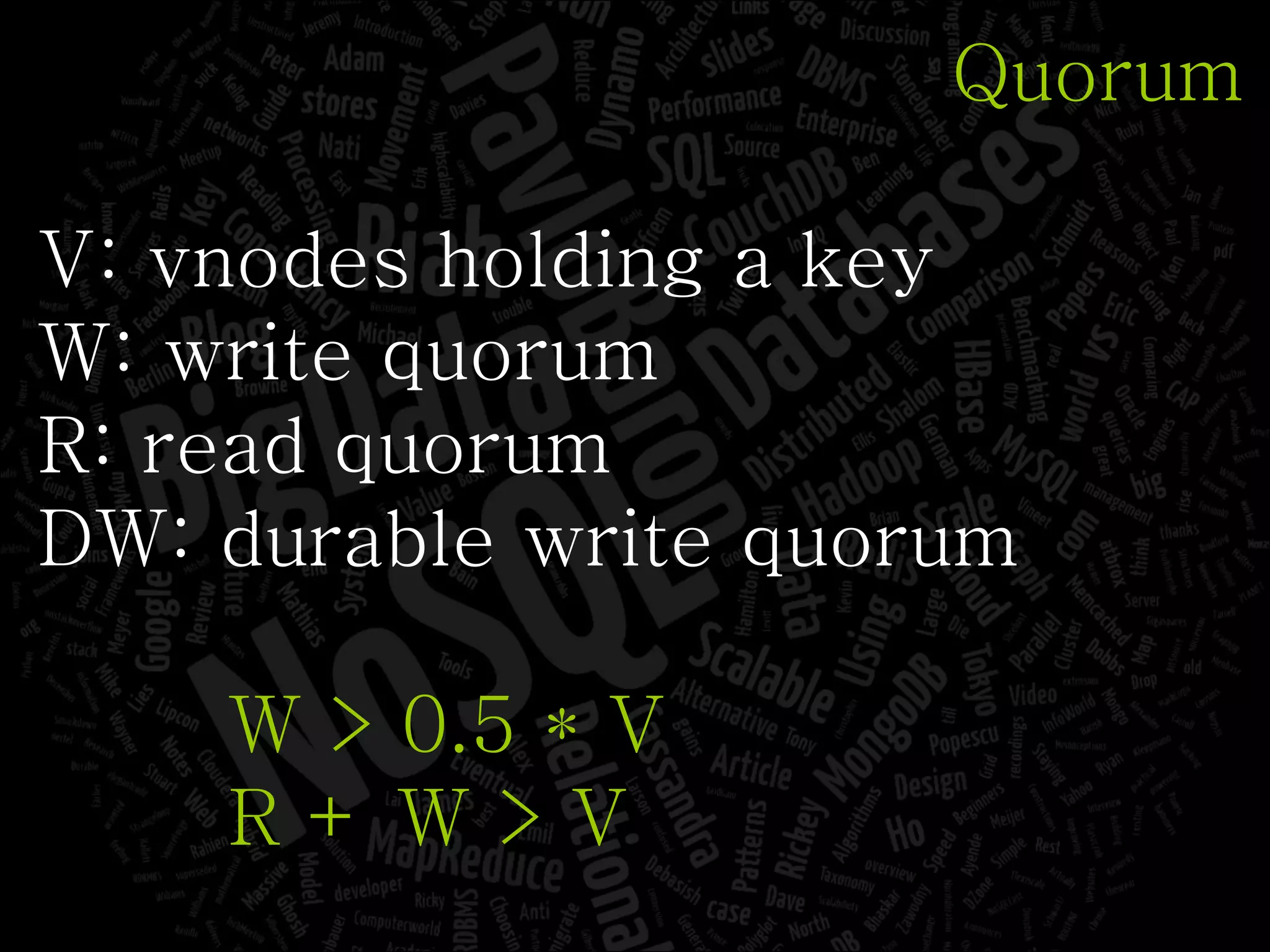

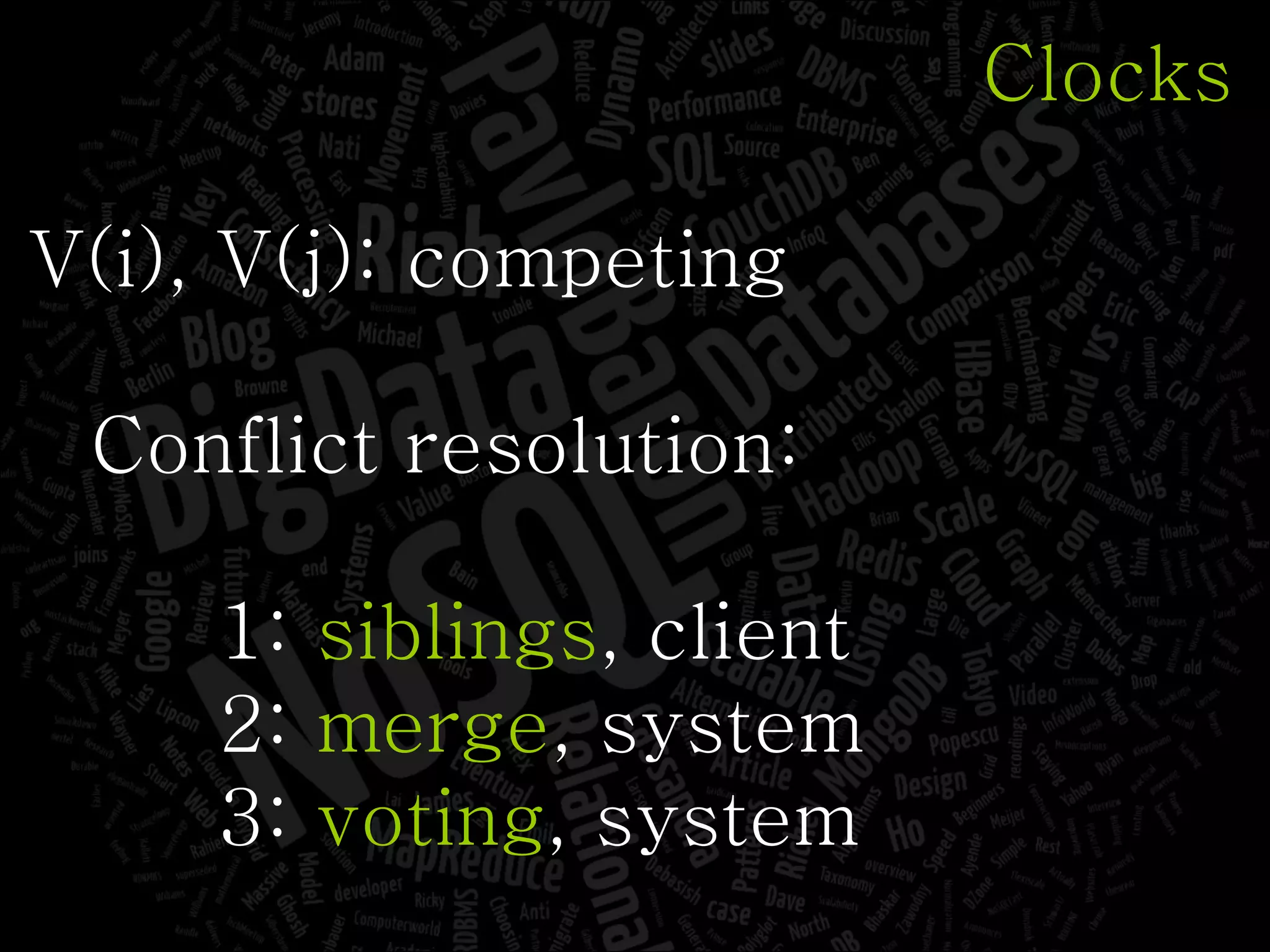

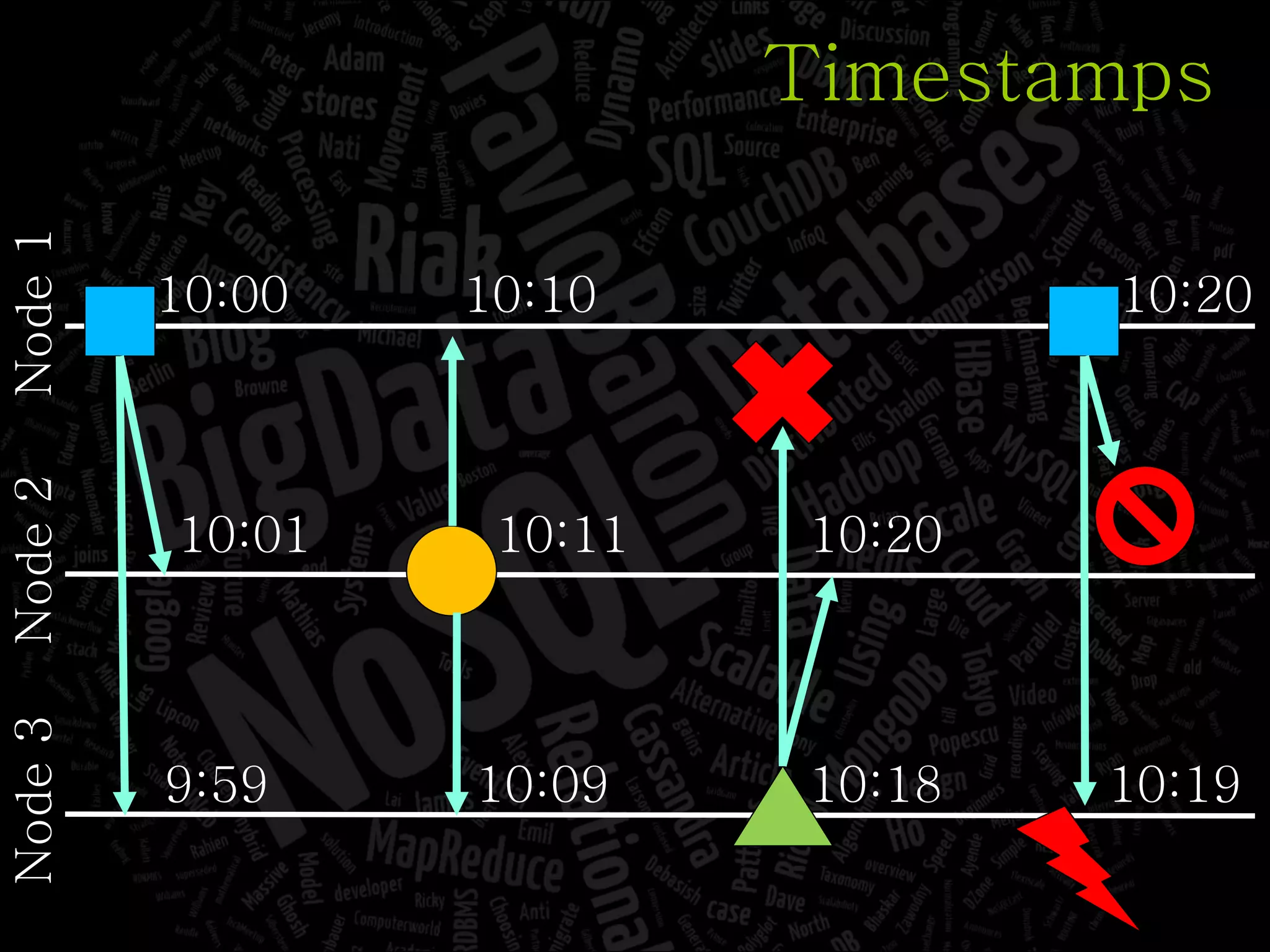

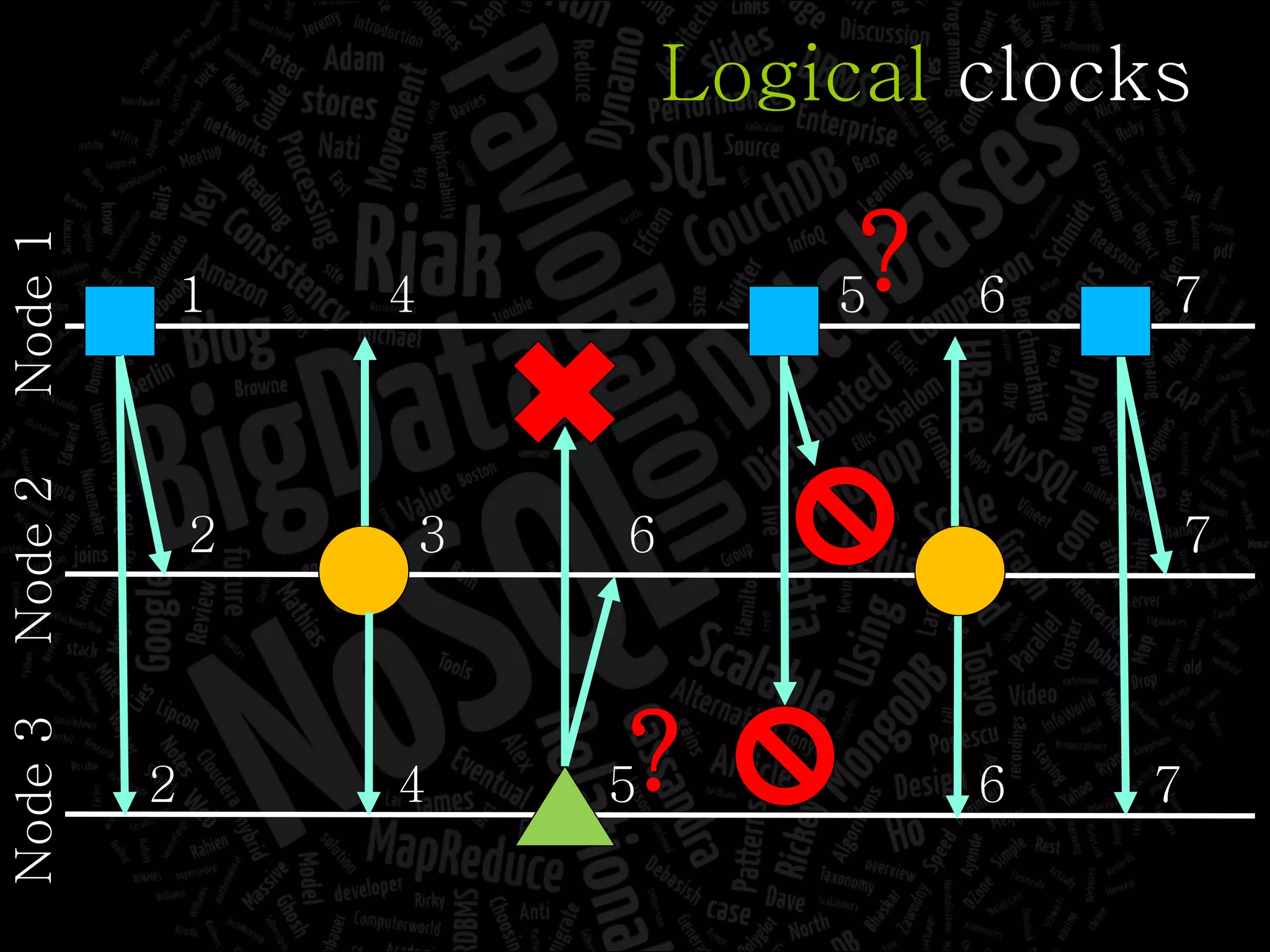

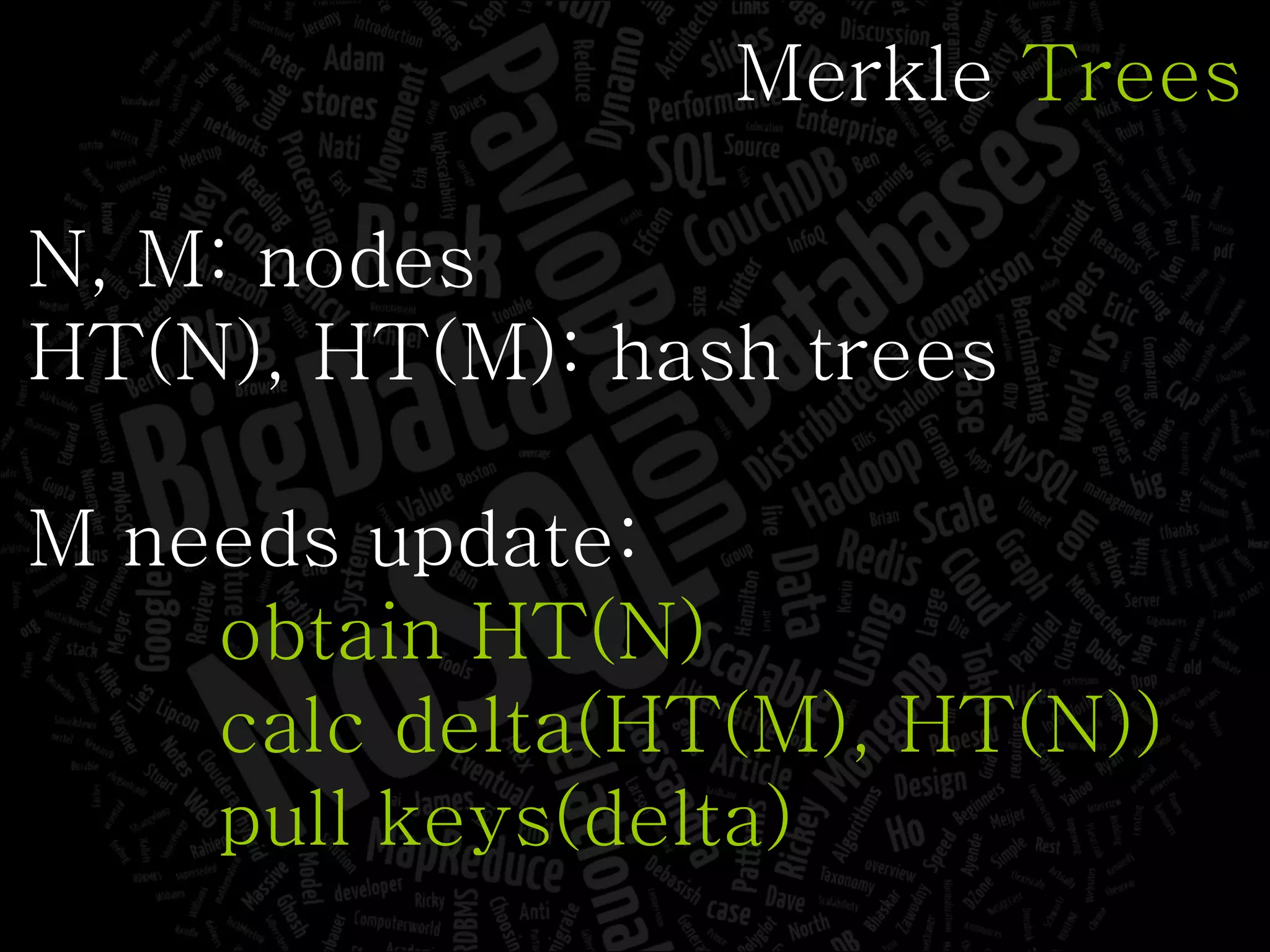

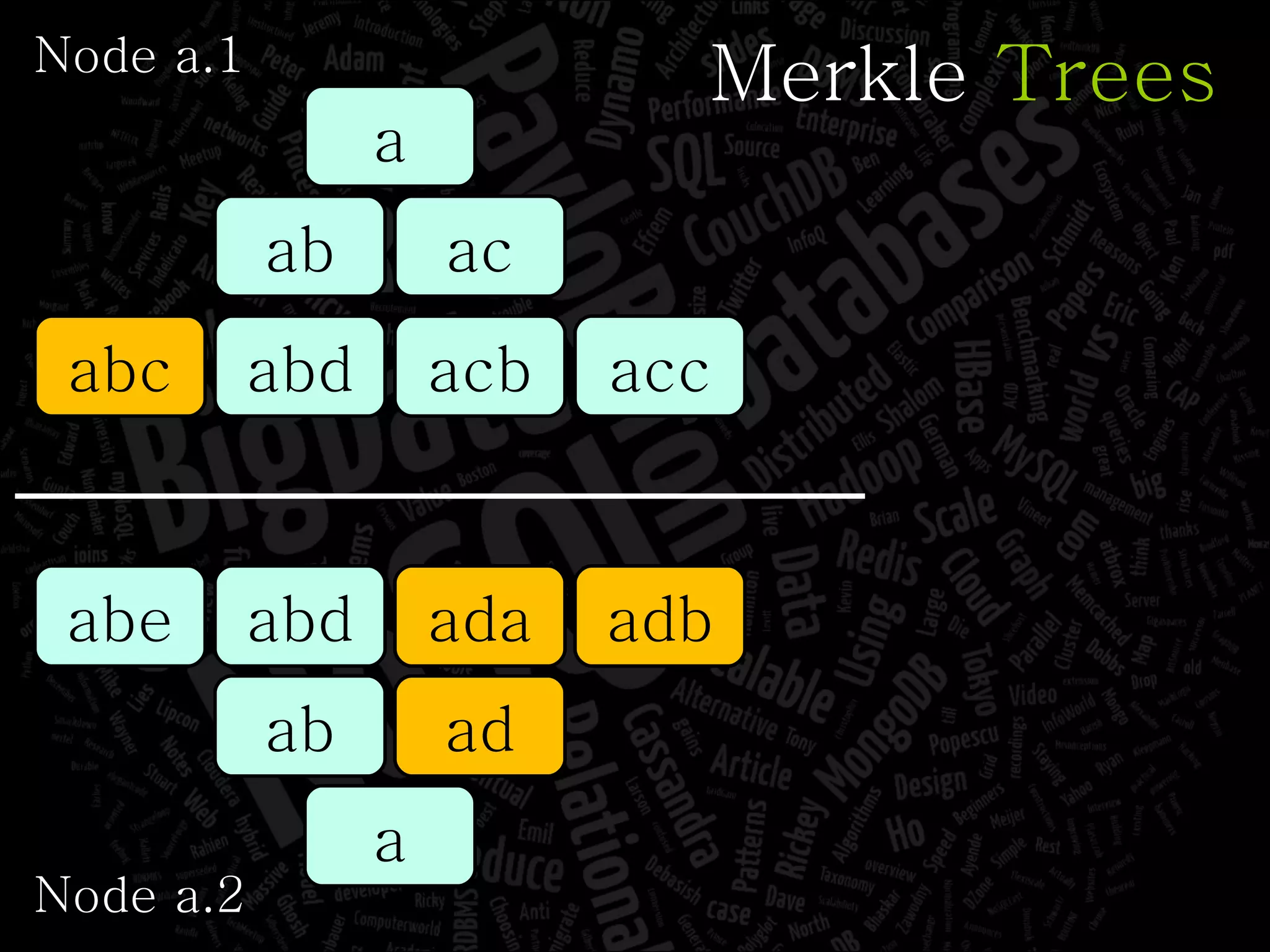

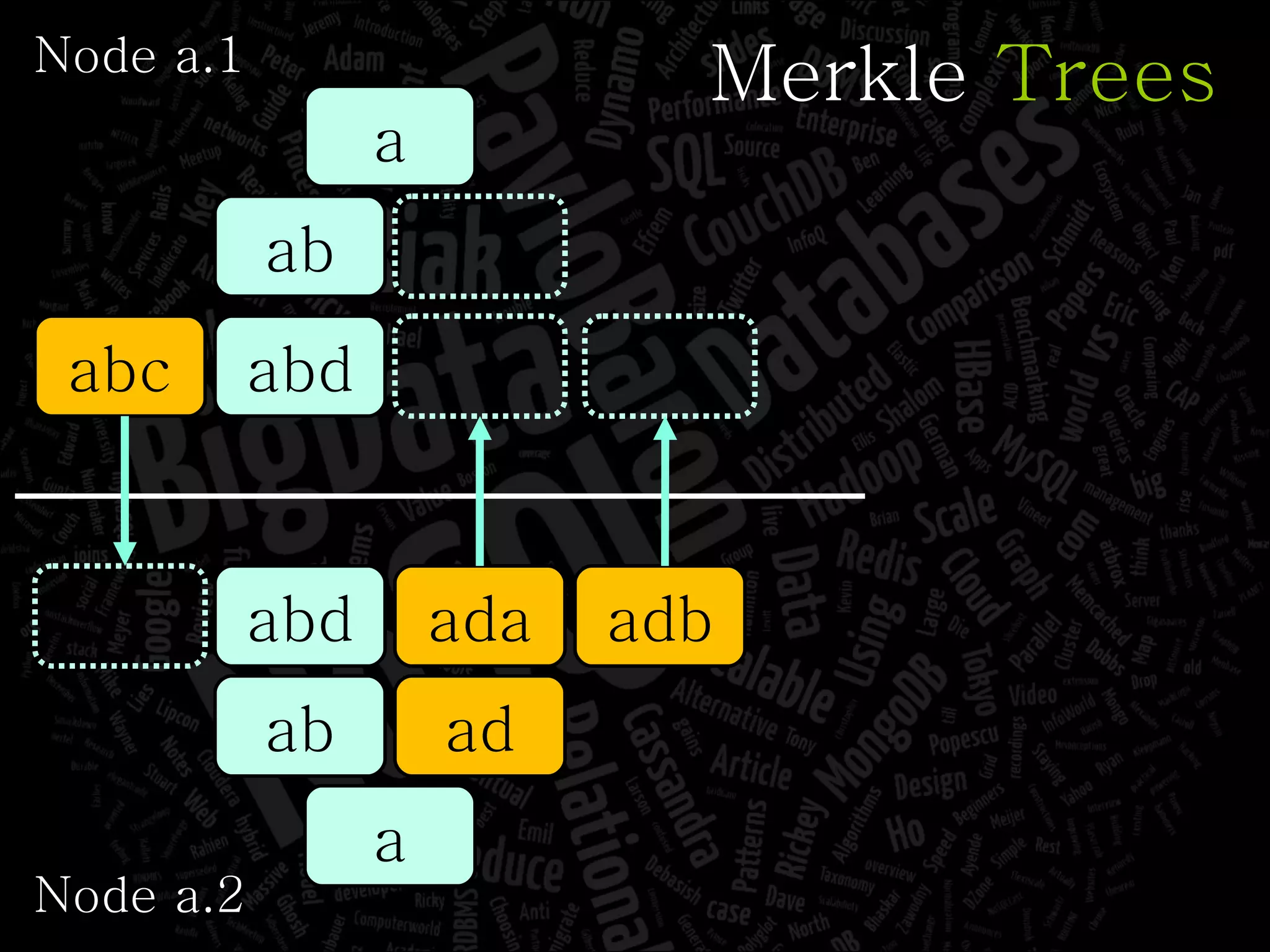

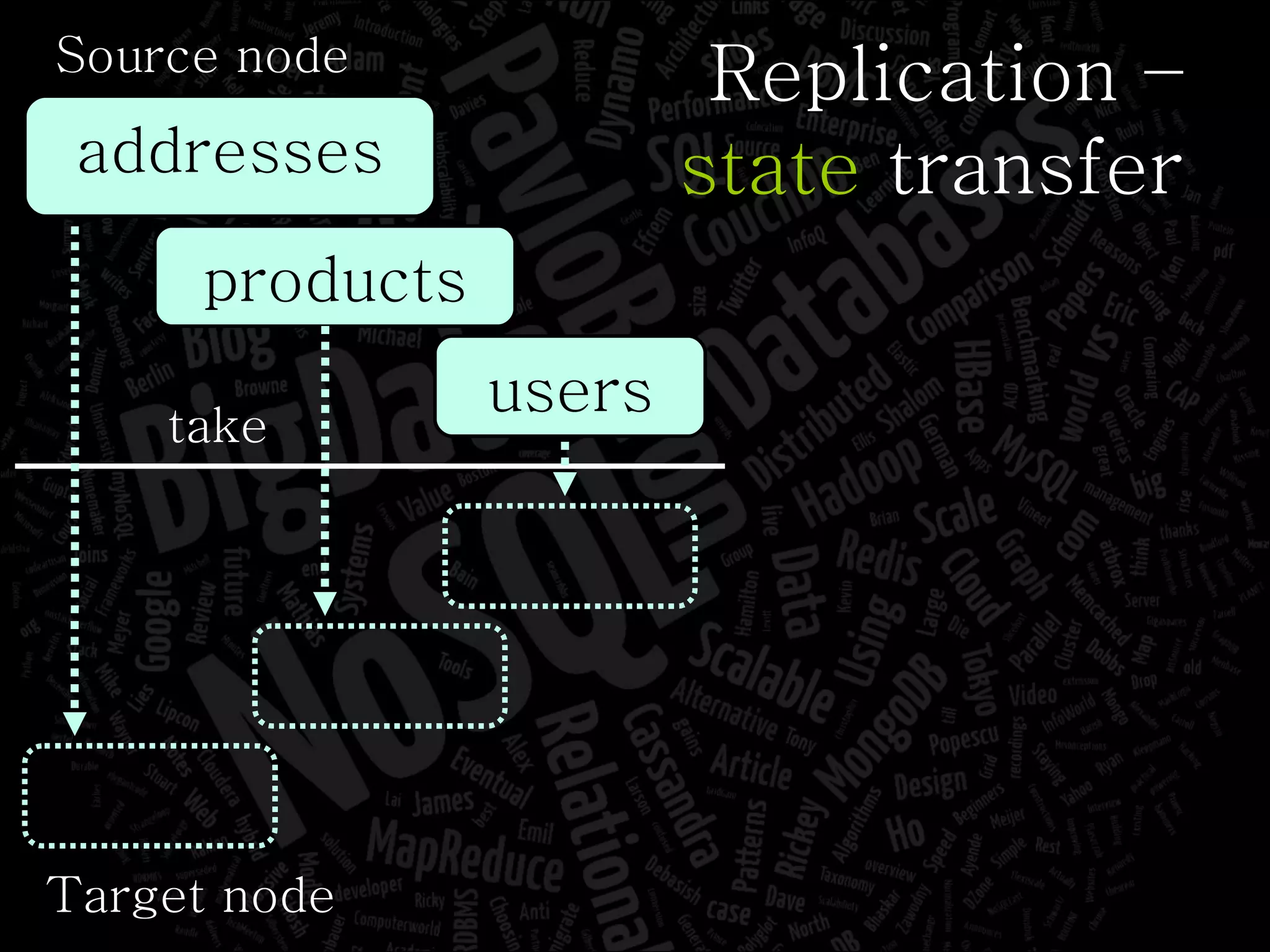

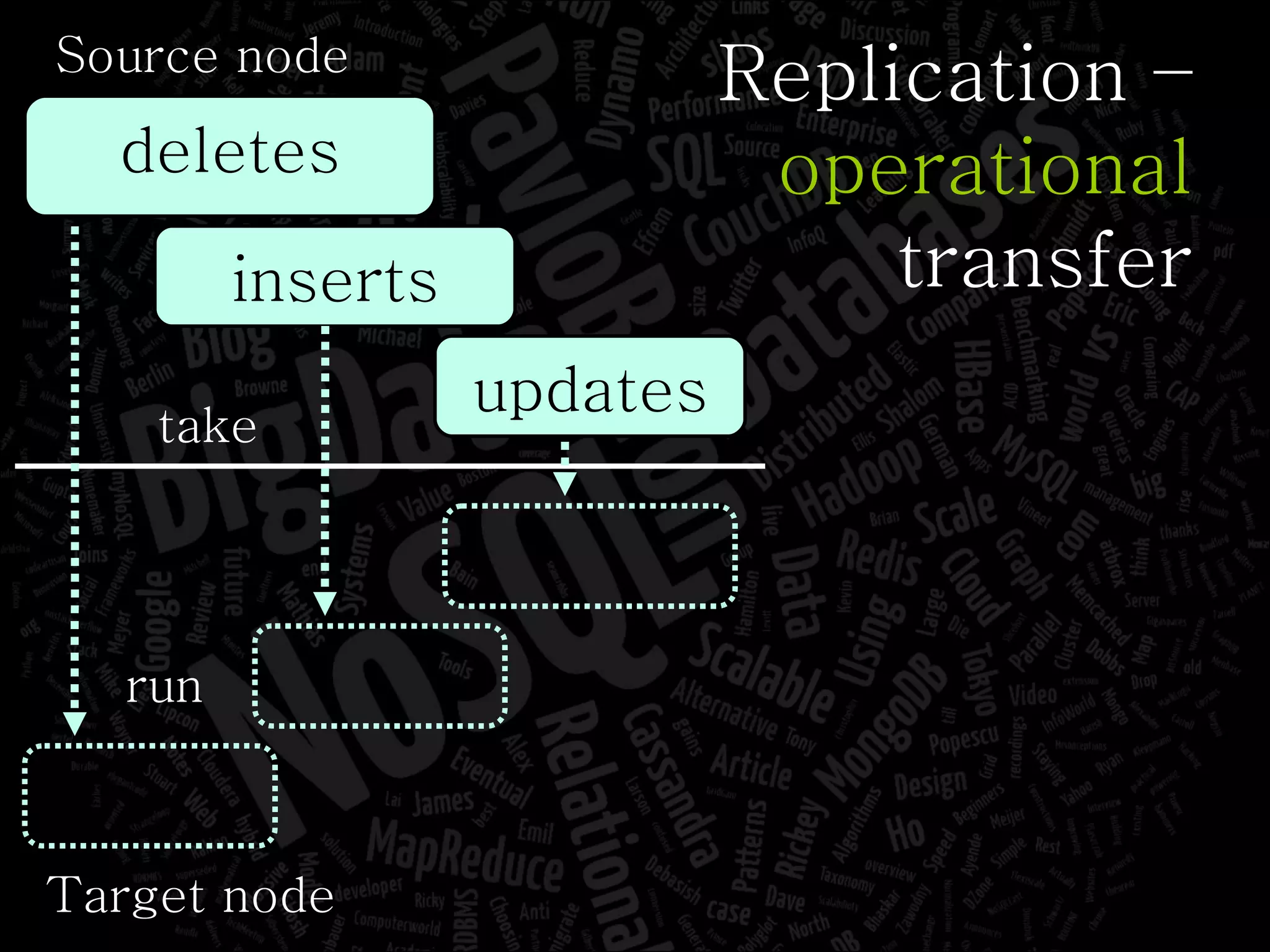

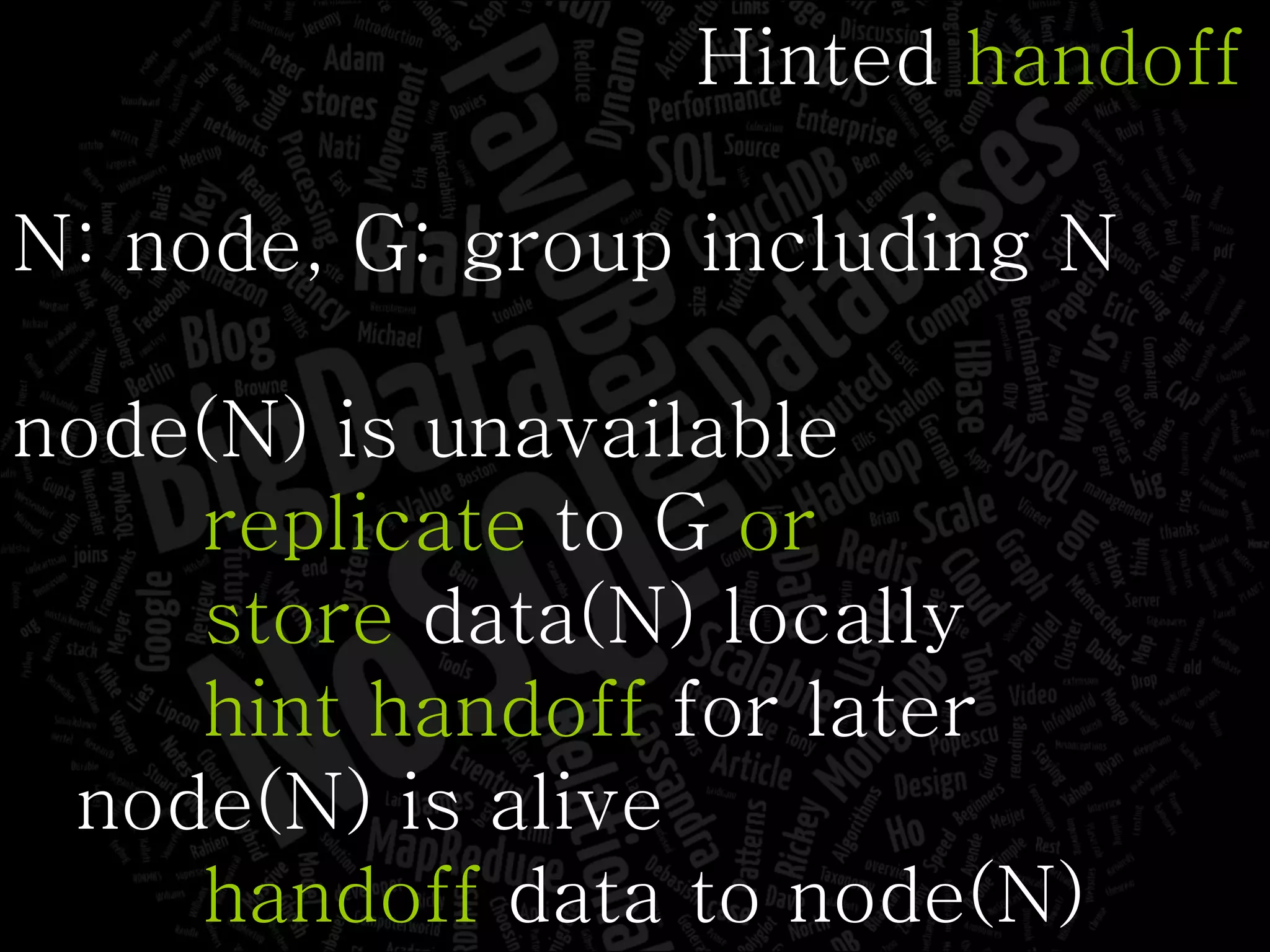

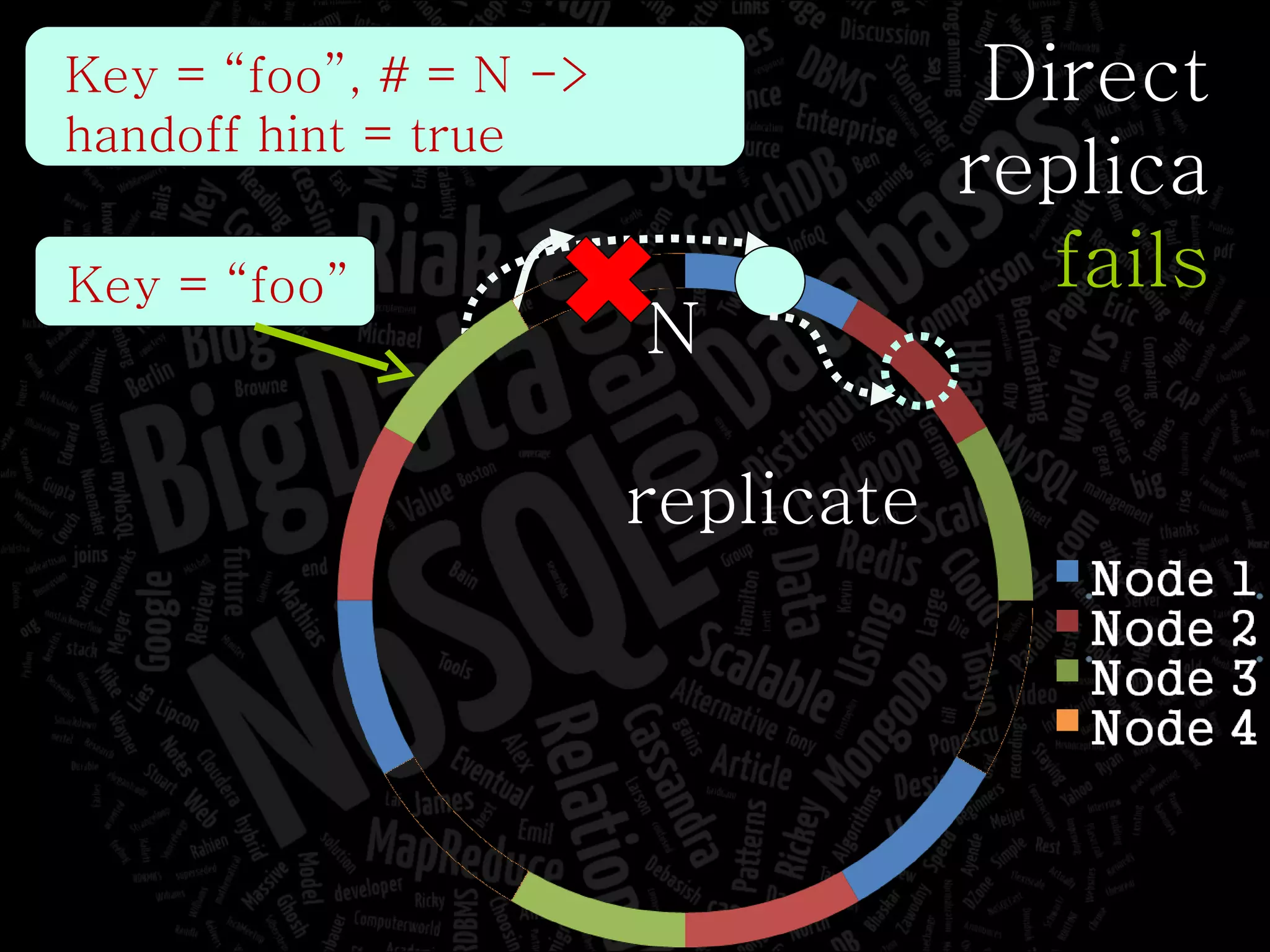

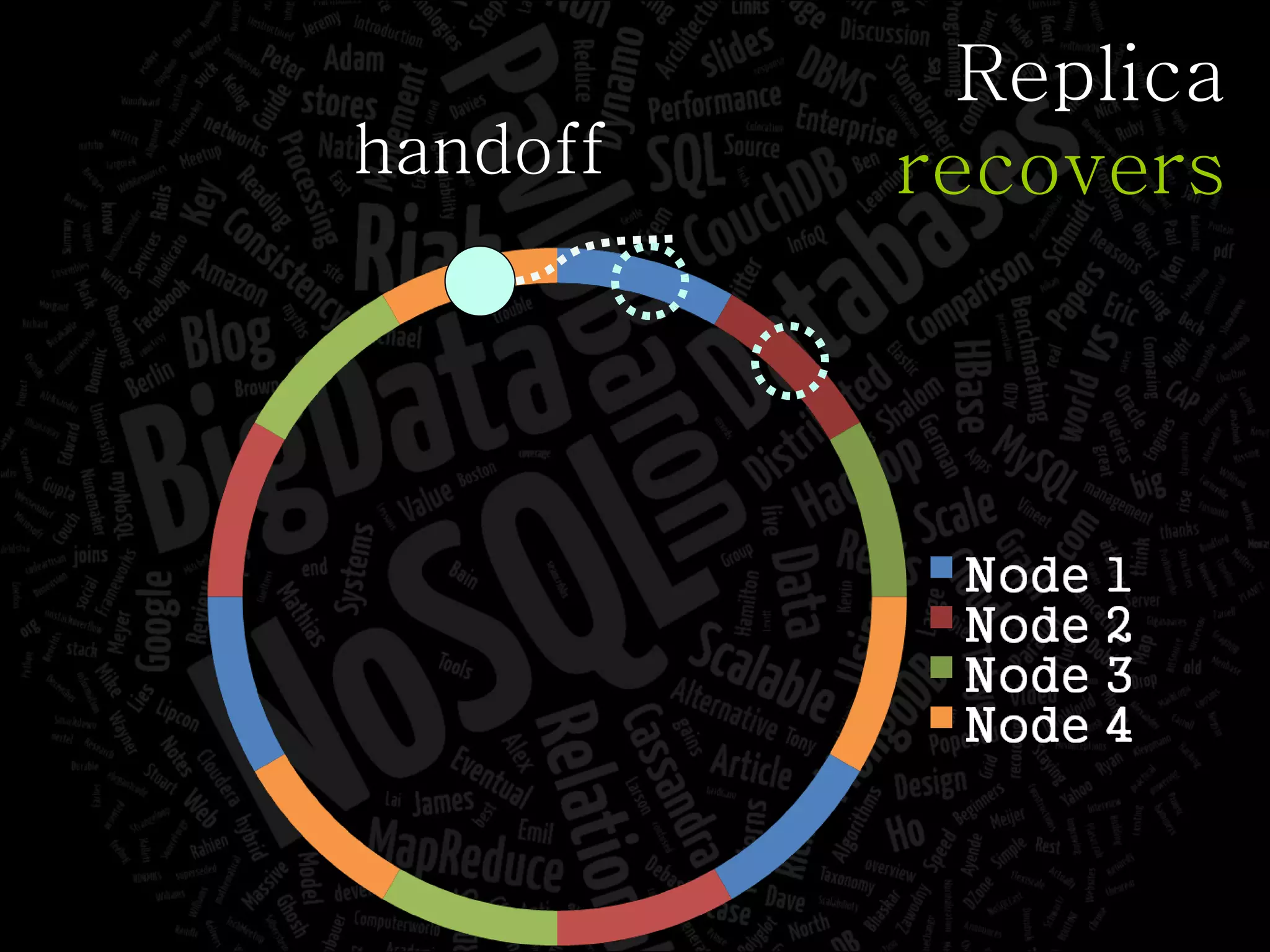

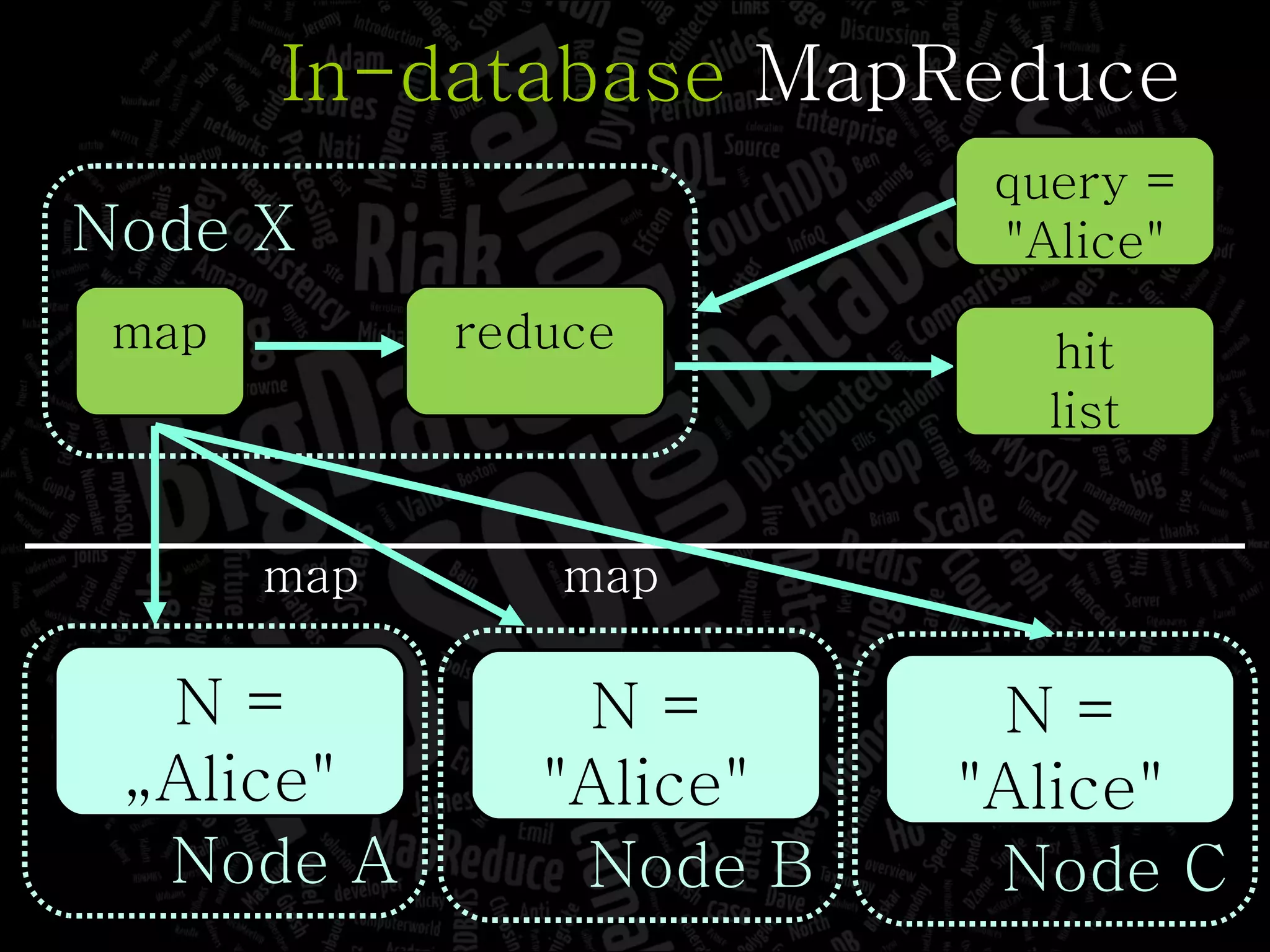

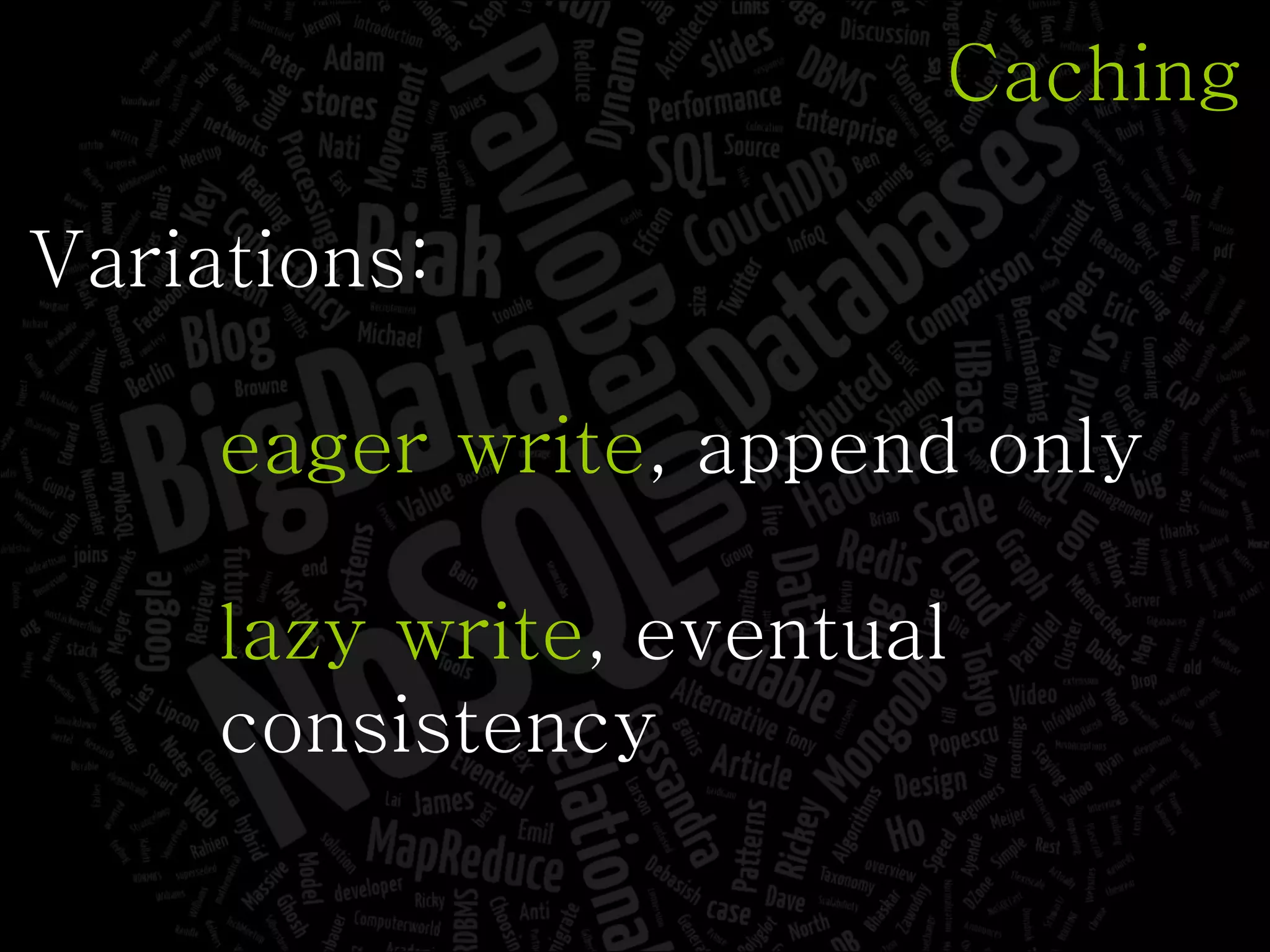

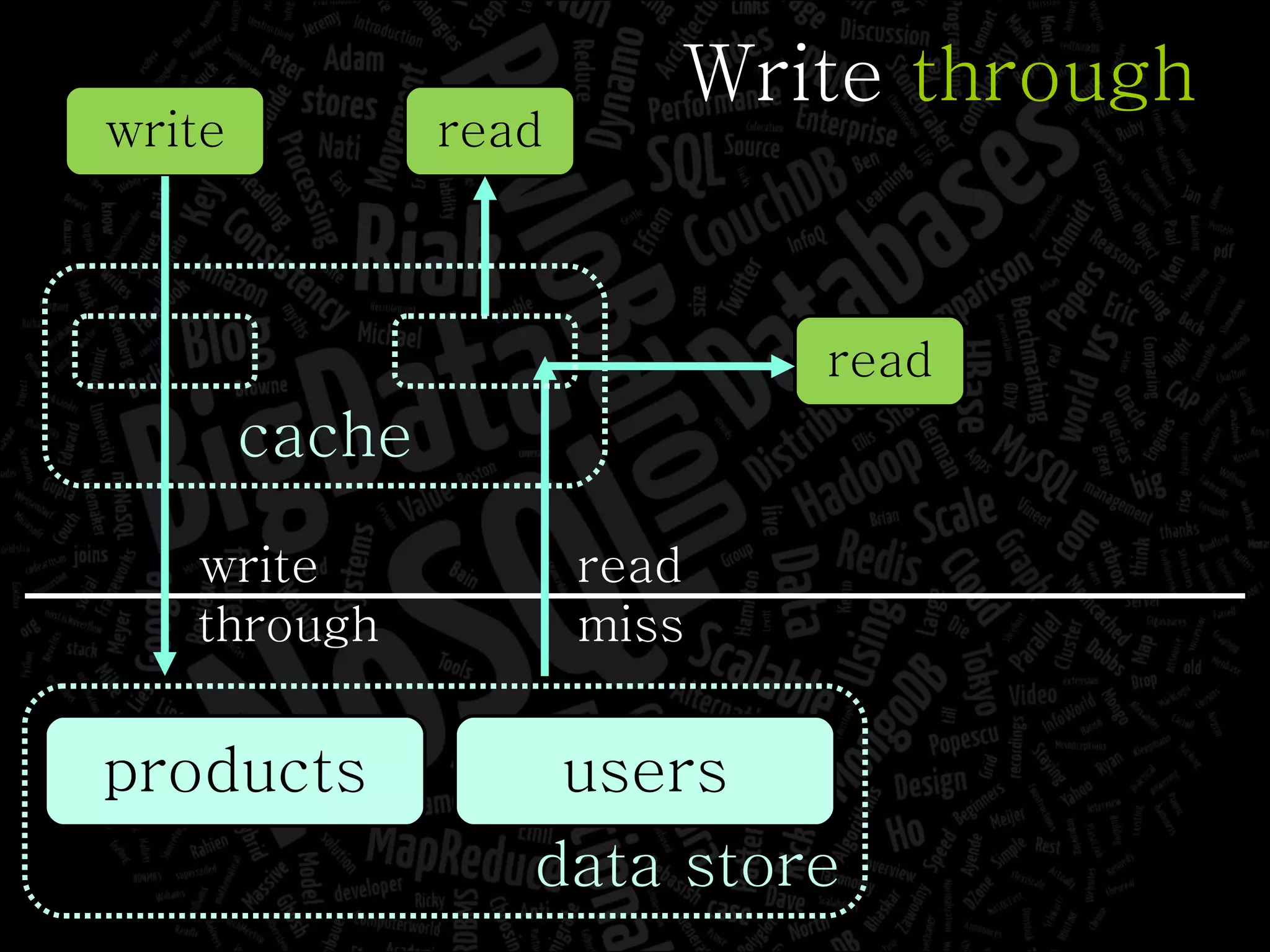

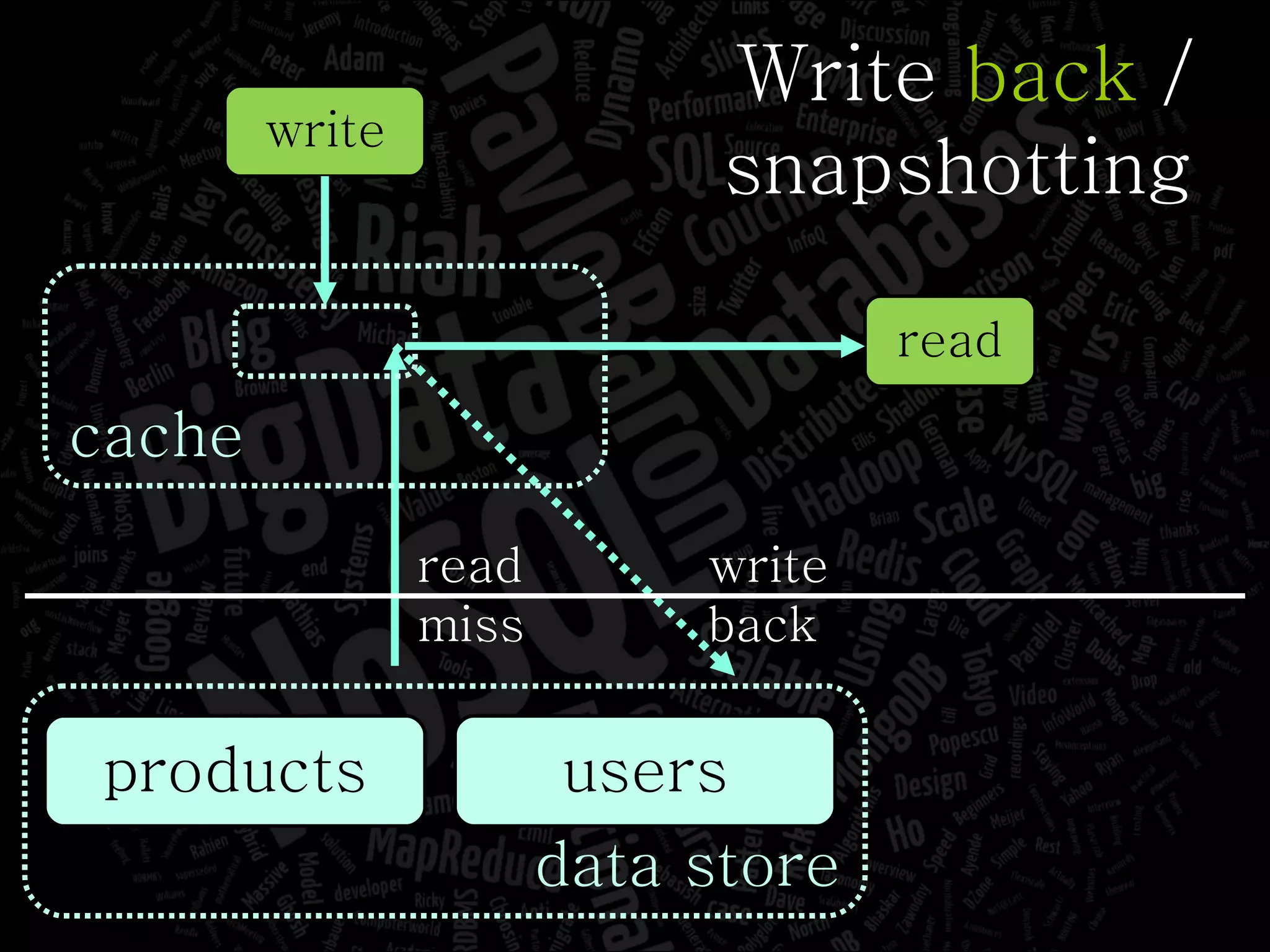

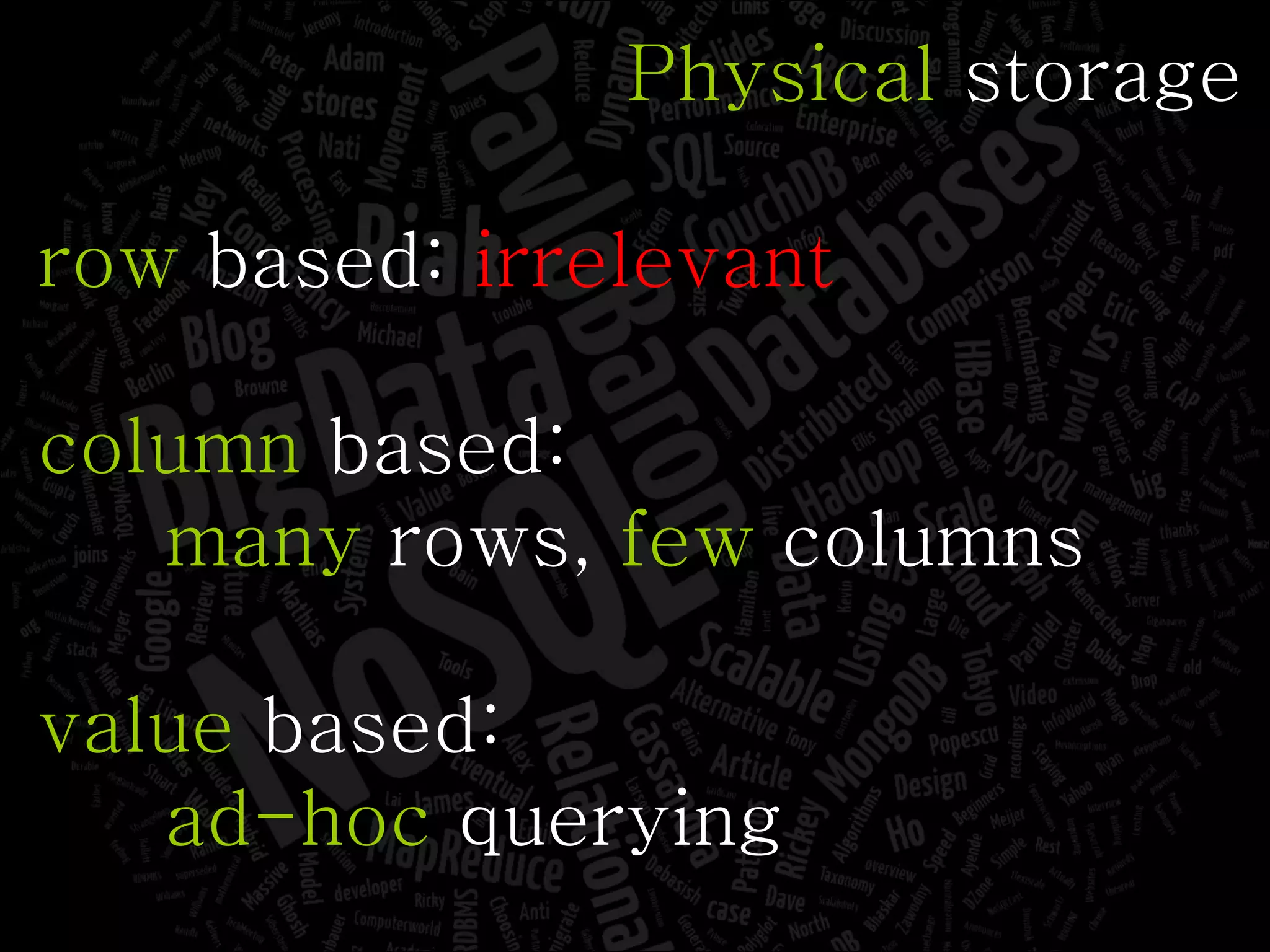

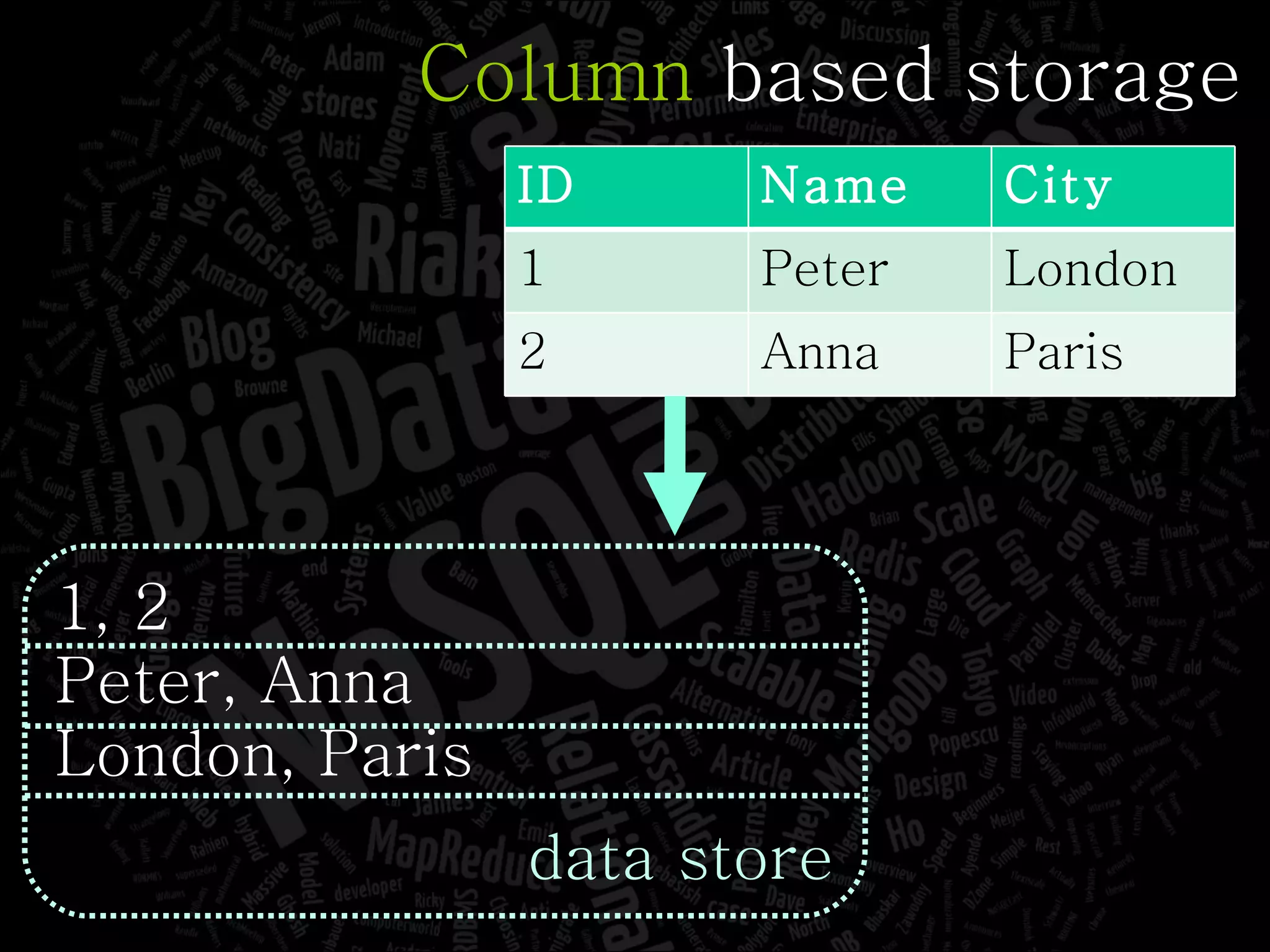

The presentation covers the challenges of big data and explains why choosing an appropriate data management strategy matters. It introduces concepts relevant to data systems, including NoSQL, the CAP theorem, and data replication techniques. Throughout, it stresses that data handling must stay flexible so systems can accommodate growth and keep data accessible. It closes with a look at different storage approaches and the design considerations involved in managing large datasets efficiently.

![Pavlo Baron http://www.pbit.org @pavlobaron](https://image.slidesharecdn.com/efs11baronbigdataandnosql2-110711132400-phpapp01/75/Big-Data-NoSQL-EFS-11-Pavlo-Baron-2-2048.jpg)

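![Value based storage](https://image.slidesharecdn.com/efs11baronbigdataandnosql2-110711132400-phpapp01/75/Big-Data-NoSQL-EFS-11-Pavlo-Baron-142-2048.jpg)

Value based storage: the table

| ID | Name  | City   |
|----|-------|--------|
| 1  | Peter | London |
| 2  | Anna  | Paris  |

is kept in the data store as individually addressed values, and each row is stored as a list of the addresses of its values:

| Address | Value     |
|---------|-----------|
| 1       | 1         |
| 2       | 2         |
| 3       | Peter     |
| 4       | Anna      |
| 5       | London    |
| 6       | Paris     |
| 7       | [1, 3, 5] |
| 8       | [2, 4, 6] |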
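The value based storage layout on the slide can be sketched in a few lines of Python. This is a minimal illustration, not the talk's implementation: it assumes a plain `dict` stands in for the data store and that addresses are handed out column by column, which is what the slide's numbering (IDs at 1 and 2, names at 3 and 4, cities at 5 and 6, rows at 7 and 8) suggests. The names `store_table` and `load_row` are hypothetical.

```python
from typing import Any


def store_table(rows: list[list[Any]]) -> tuple[dict[int, Any], list[int]]:
    """Hypothetical sketch: store every cell under its own integer address,
    then store each row as a list of its cells' addresses.

    Addresses are assigned column by column (assumption based on the slide),
    so the first column's values come first, then the second column's, etc.
    Returns the store and the addresses of the row entries.
    """
    store: dict[int, Any] = {}
    addr = 1
    row_refs: list[list[int]] = [[] for _ in rows]
    num_cols = len(rows[0]) if rows else 0

    # One address per cell, walking the table column-major.
    for col in range(num_cols):
        for r, row in enumerate(rows):
            store[addr] = row[col]
            row_refs[r].append(addr)
            addr += 1

    # Each row becomes a value of its own: the list of its cell addresses.
    row_addrs: list[int] = []
    for refs in row_refs:
        store[addr] = refs
        row_addrs.append(addr)
        addr += 1
    return store, row_addrs


def load_row(store: dict[int, Any], row_addr: int) -> list[Any]:
    """Rebuild a row by following its list of value addresses."""
    return [store[a] for a in store[row_addr]]


if __name__ == "__main__":
    store, row_addrs = store_table([[1, "Peter", "London"], [2, "Anna", "Paris"]])
    print(store)
    # {1: 1, 2: 2, 3: 'Peter', 4: 'Anna', 5: 'London', 6: 'Paris', 7: [1, 3, 5], 8: [2, 4, 6]}
    print(load_row(store, row_addrs[1]))  # [2, 'Anna', 'Paris']
```

Because a row holds only references, reading it back costs one lookup per value; in exchange, each value is an independently addressable item in the store. The sketch does not deduplicate equal values, since the slide does not show that.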