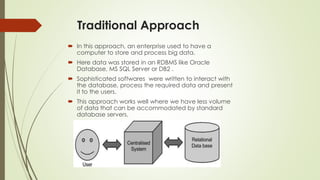

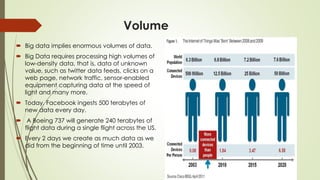

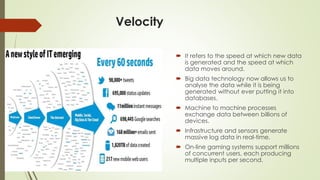

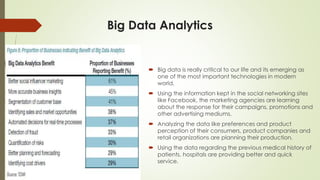

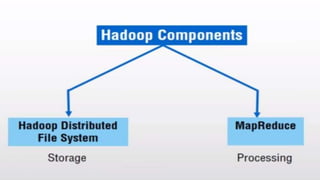

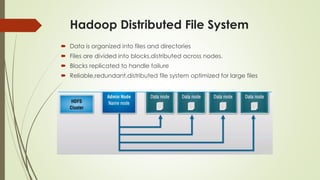

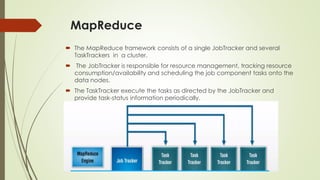

The presentation discusses the evolution and significance of big data, outlining its characteristics, the traditional approaches to handling data, and the role of technologies such as Hadoop. Big data refers to vast volumes of diverse data that traditional systems struggle to manage and that therefore require more advanced processing methods. The presentation emphasizes how big data analytics can improve organizational efficiency and decision-making across a range of sectors.