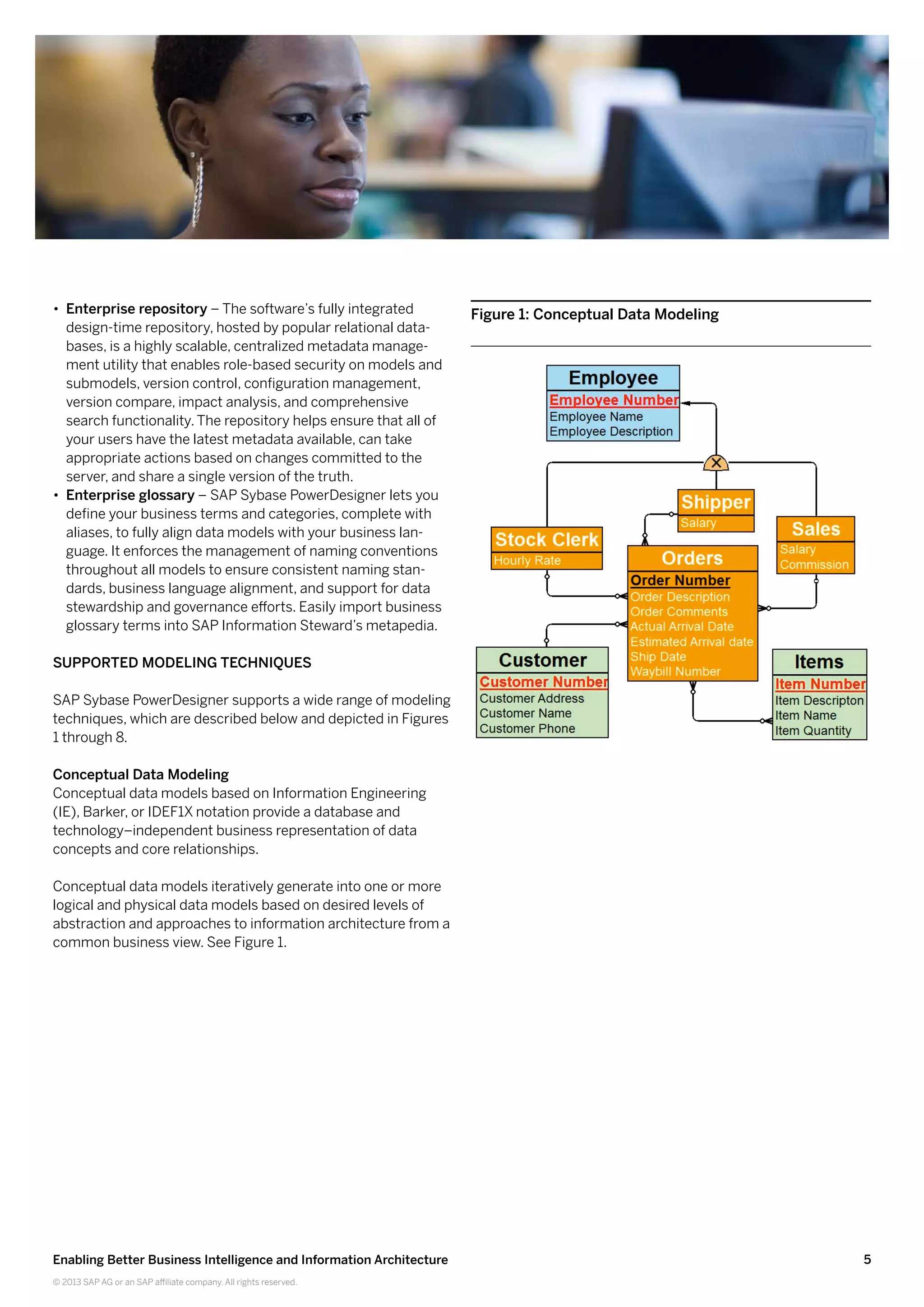

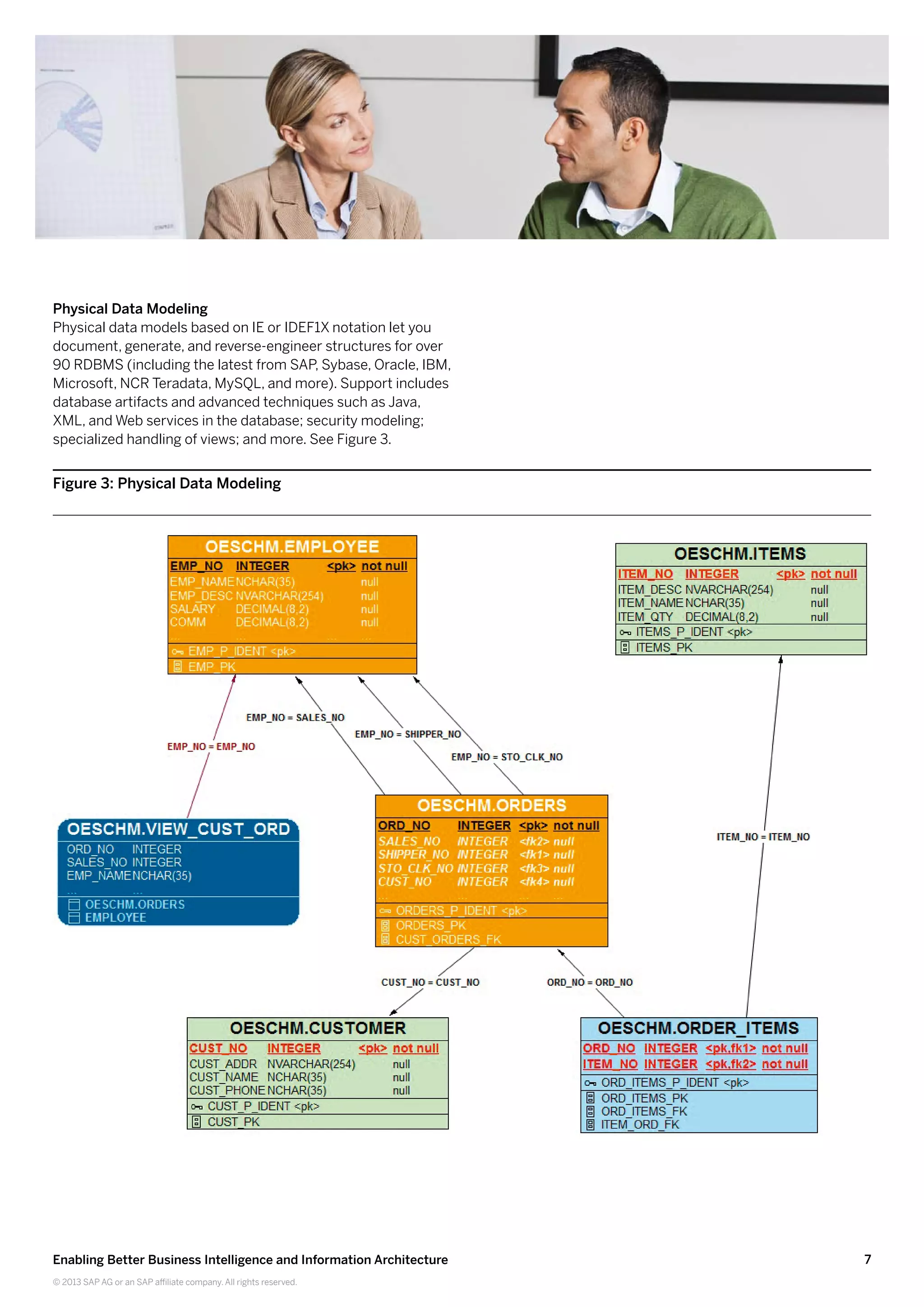

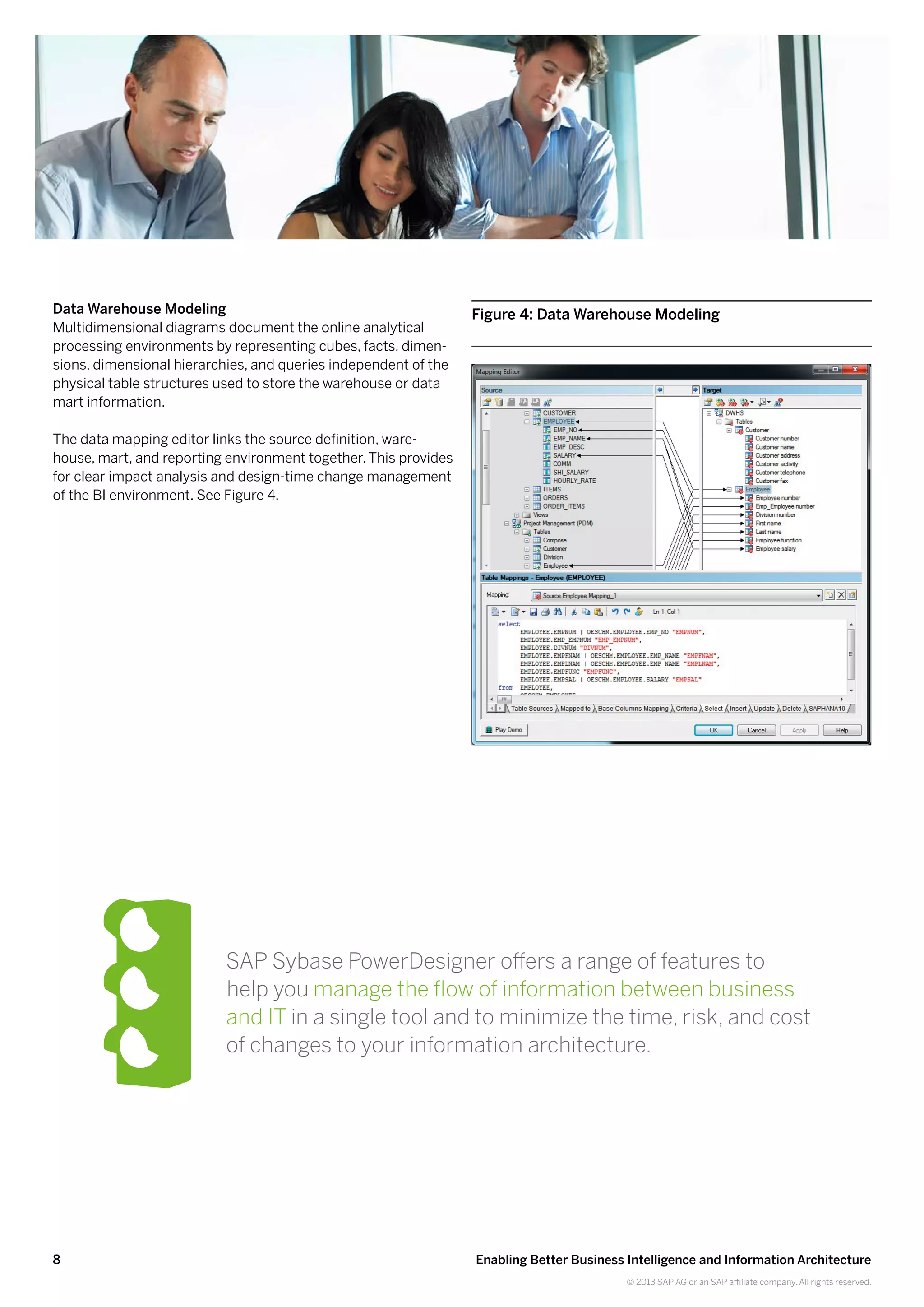

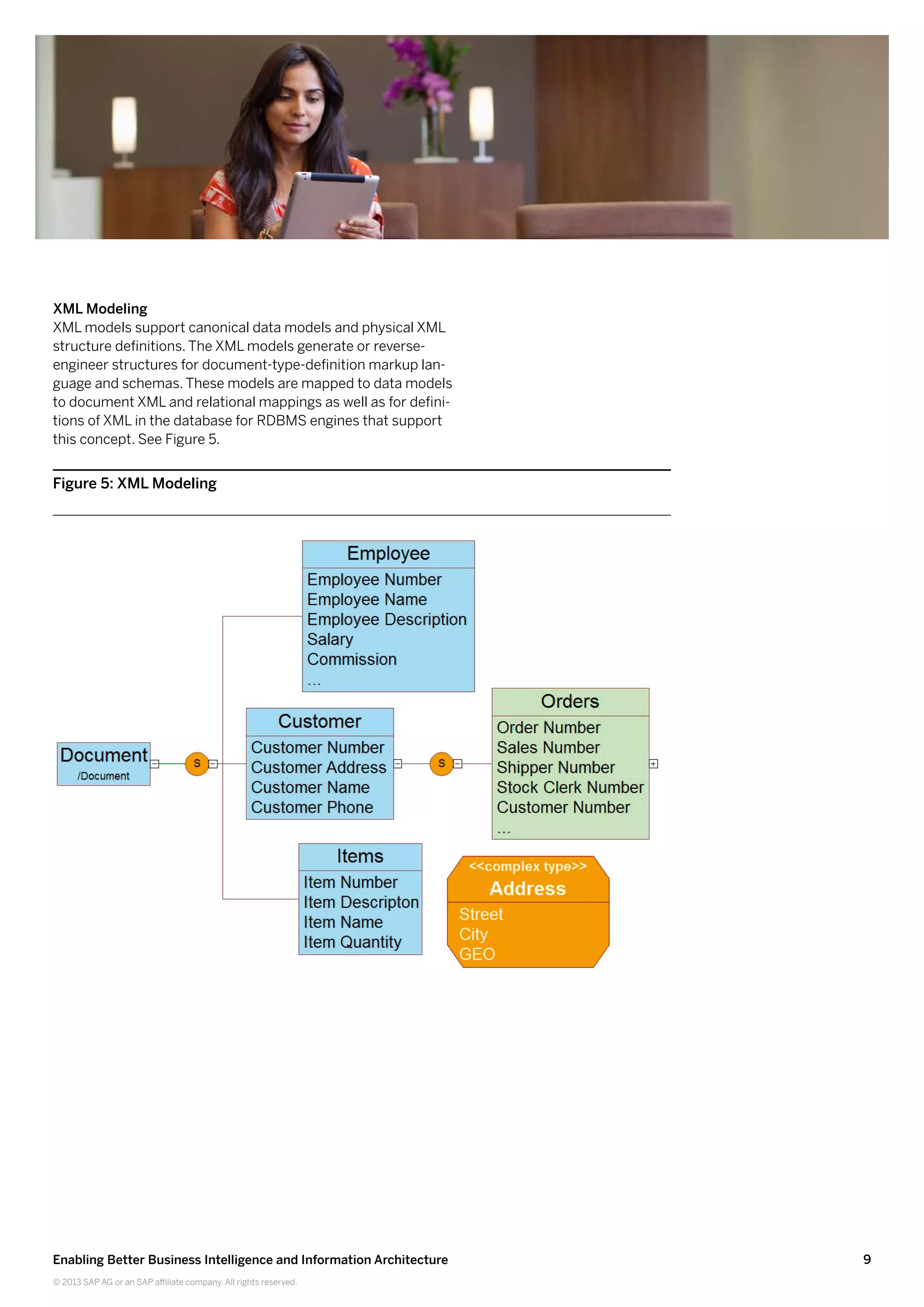

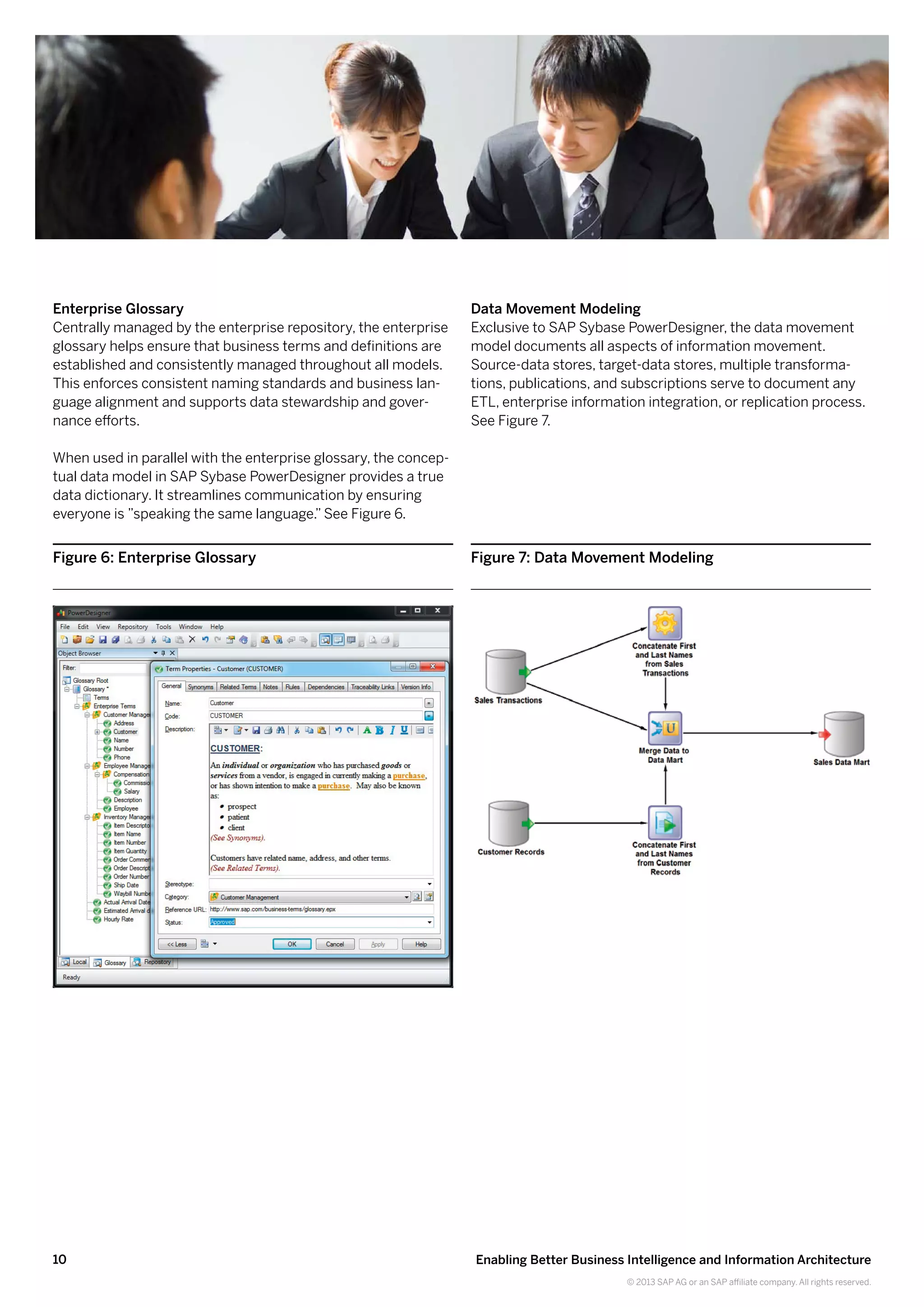

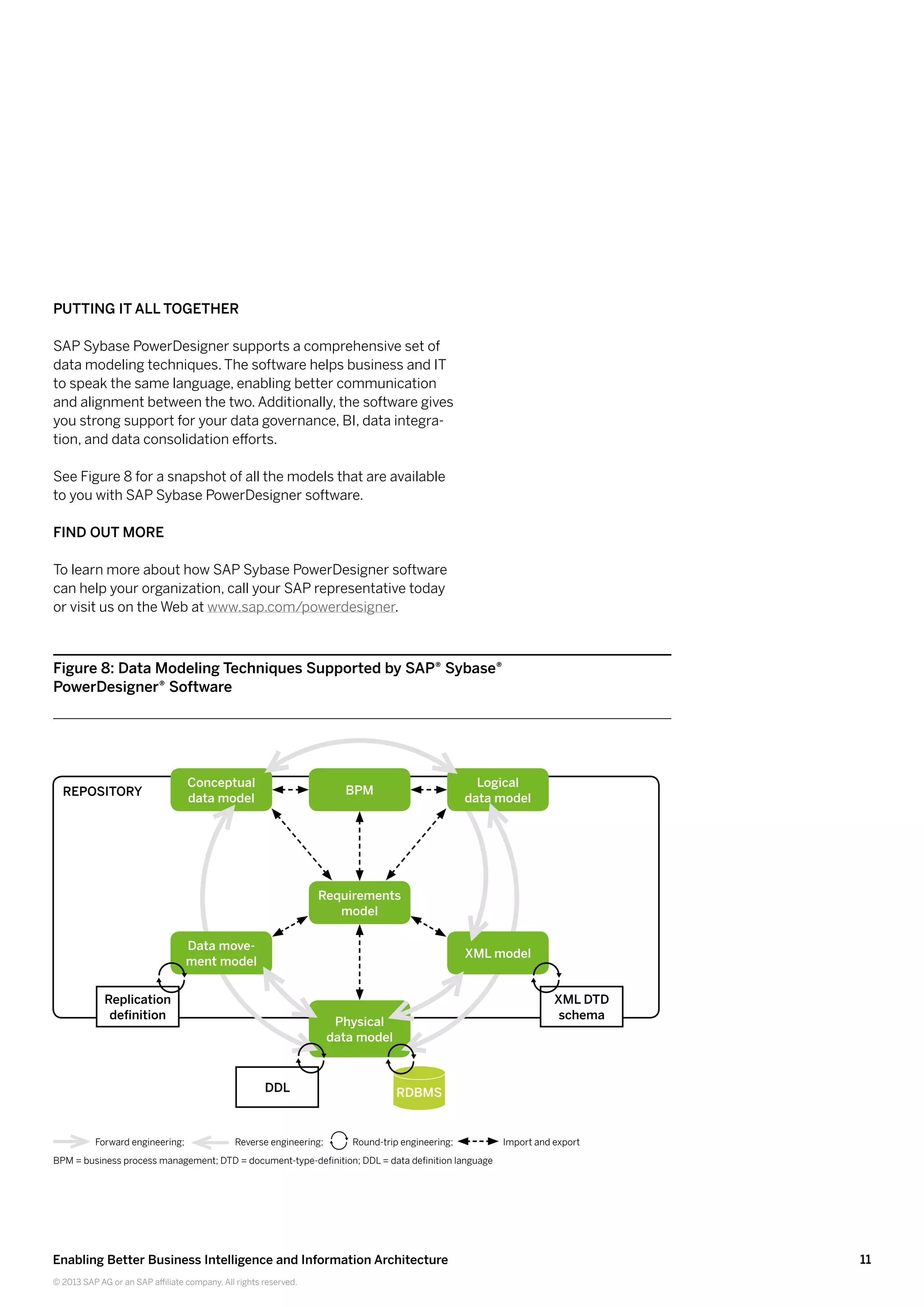

SAP Sybase PowerDesigner provides modeling tools that help improve business intelligence and information architecture. Through metadata management, it establishes a 360-degree view of key information assets, and its impact analysis functionality reduces the risks and costs of changes. The software supports several modeling techniques, including conceptual, logical, and physical data modeling. It also includes an enterprise glossary and a repository.