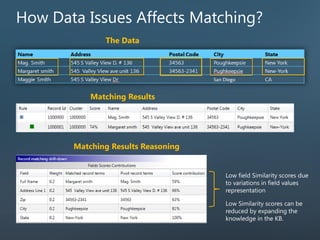

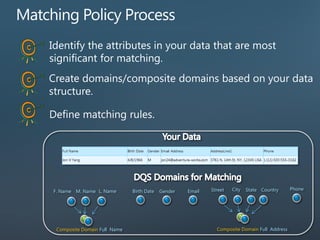

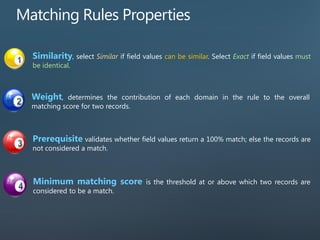

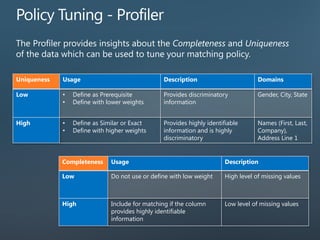

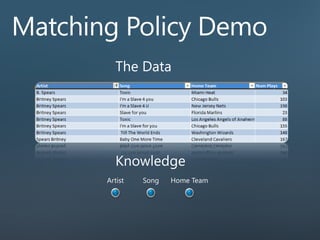

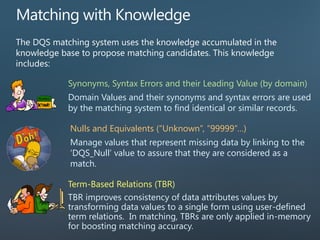

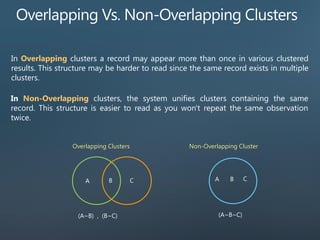

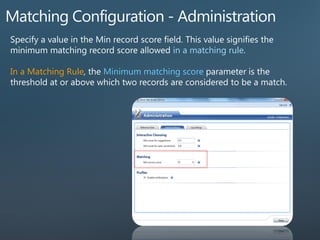

This document provides an overview of Data Quality Services (DQS) matching and Master Data Services (MDS). It covers record matching, the data issues that affect matching, the DQS matching process, and key components such as the matching policy and the knowledge base. It also introduces MDS and its configuration tools.