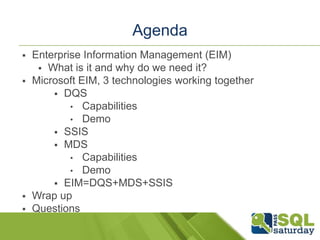

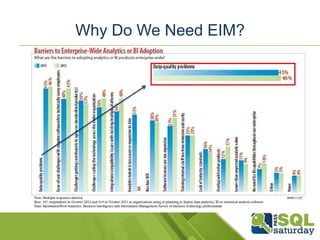

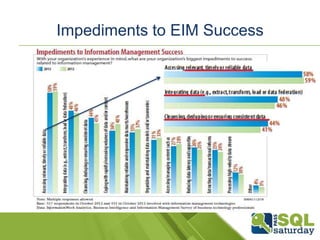

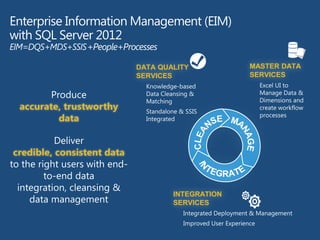

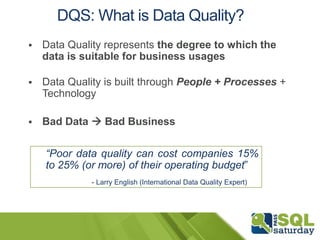

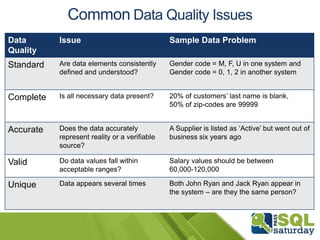

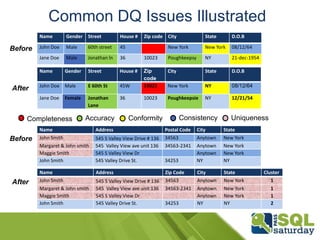

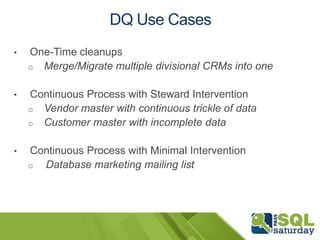

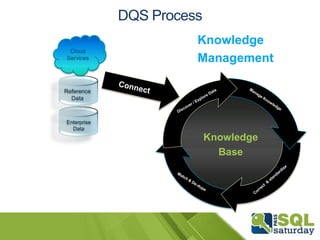

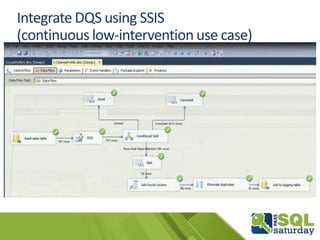

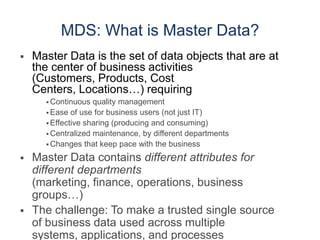

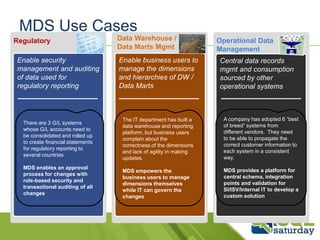

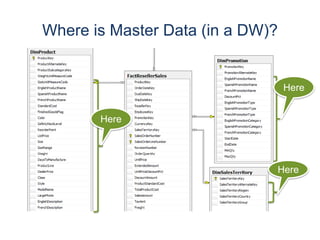

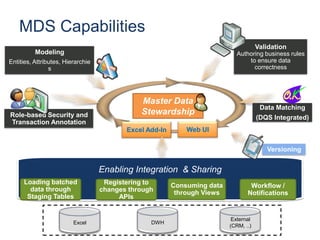

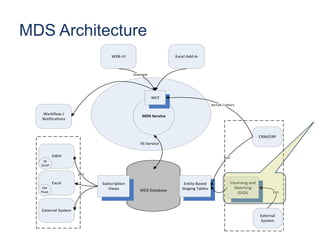

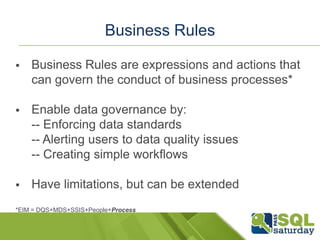

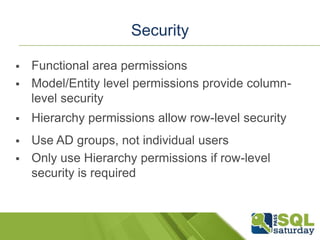

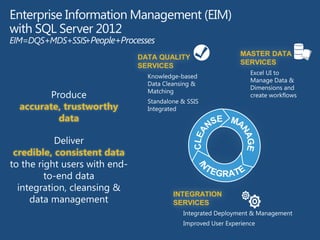

The document discusses Enterprise Information Management (EIM) in SQL Server 2012 and the need for data quality and master data management, which it addresses through Data Quality Services (DQS), Master Data Services (MDS), and SQL Server Integration Services (SSIS). It emphasizes how much business operations depend on data quality, describes common data issues, and summarizes the capabilities of each technology. It also outlines how effective EIM requires a combination of people, processes, and technology to make business intelligence efforts more successful.