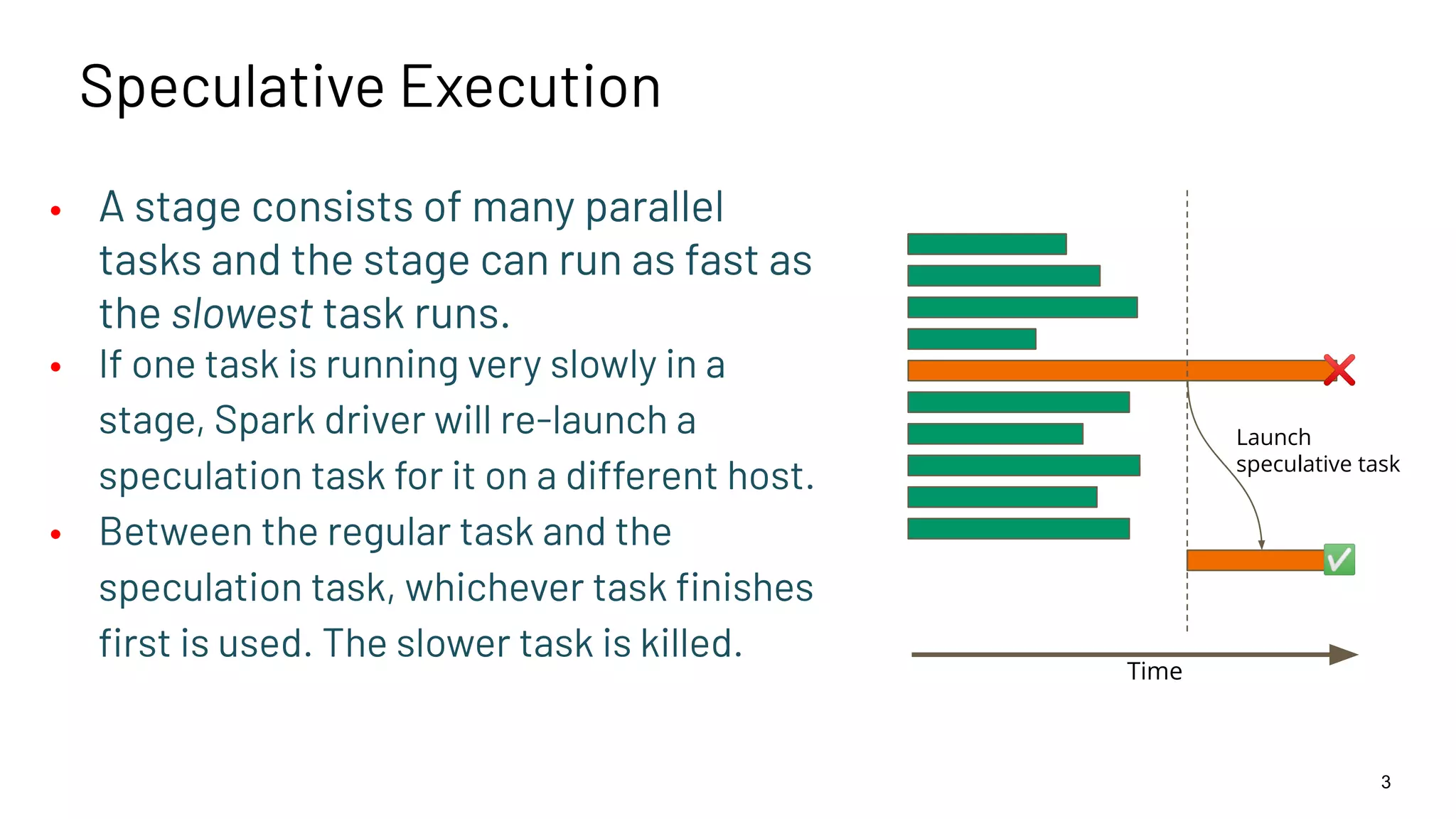

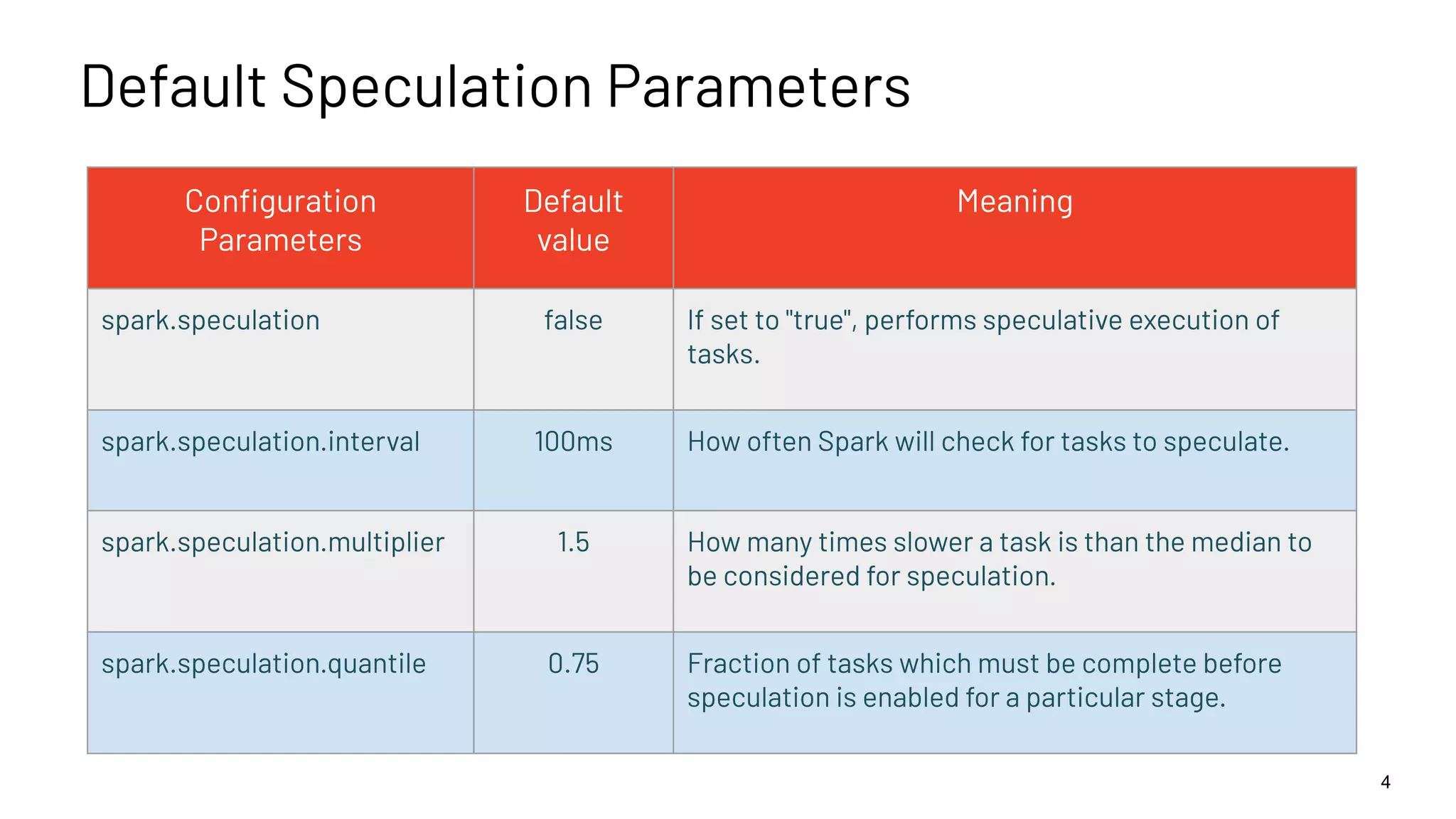

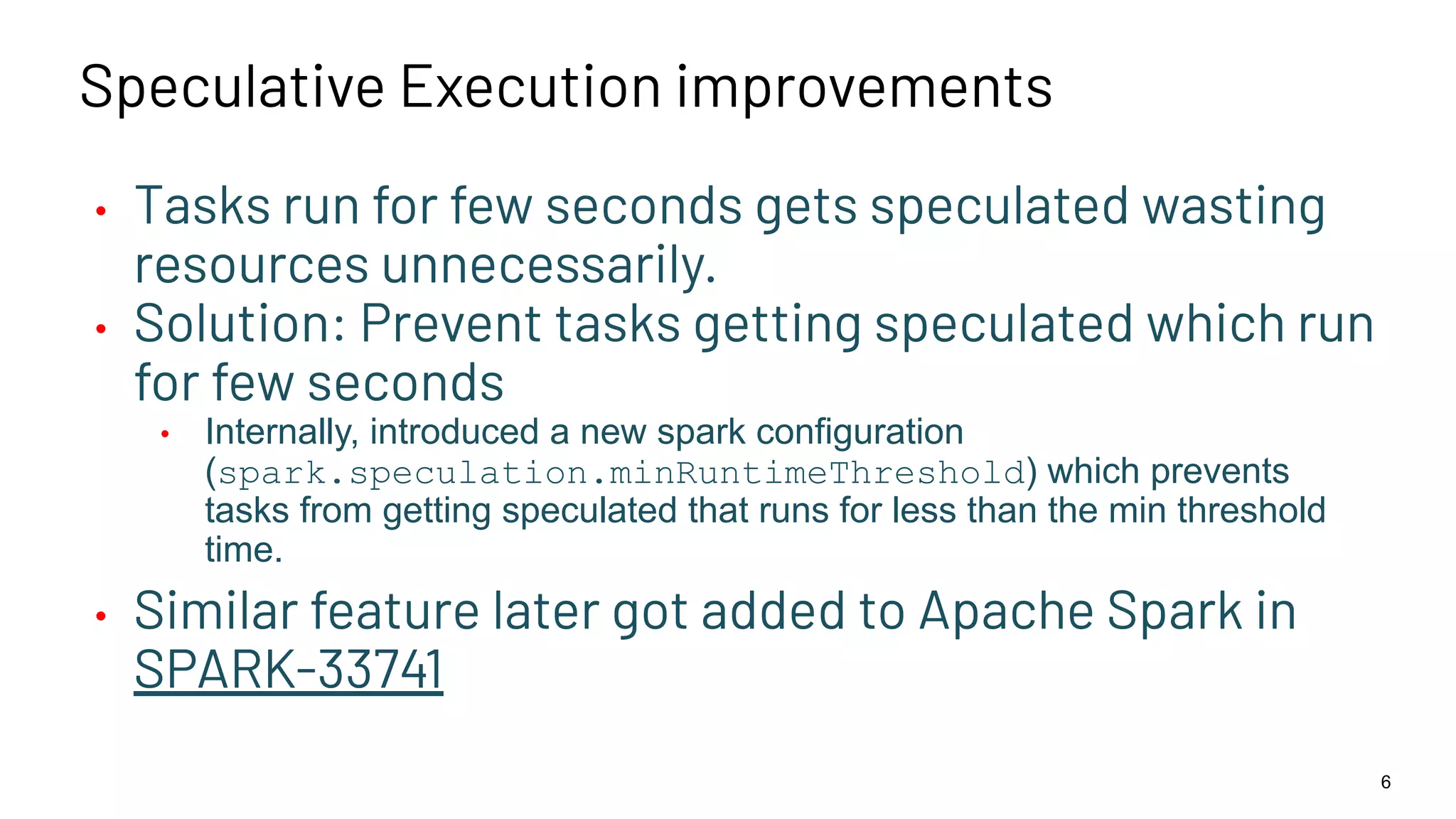

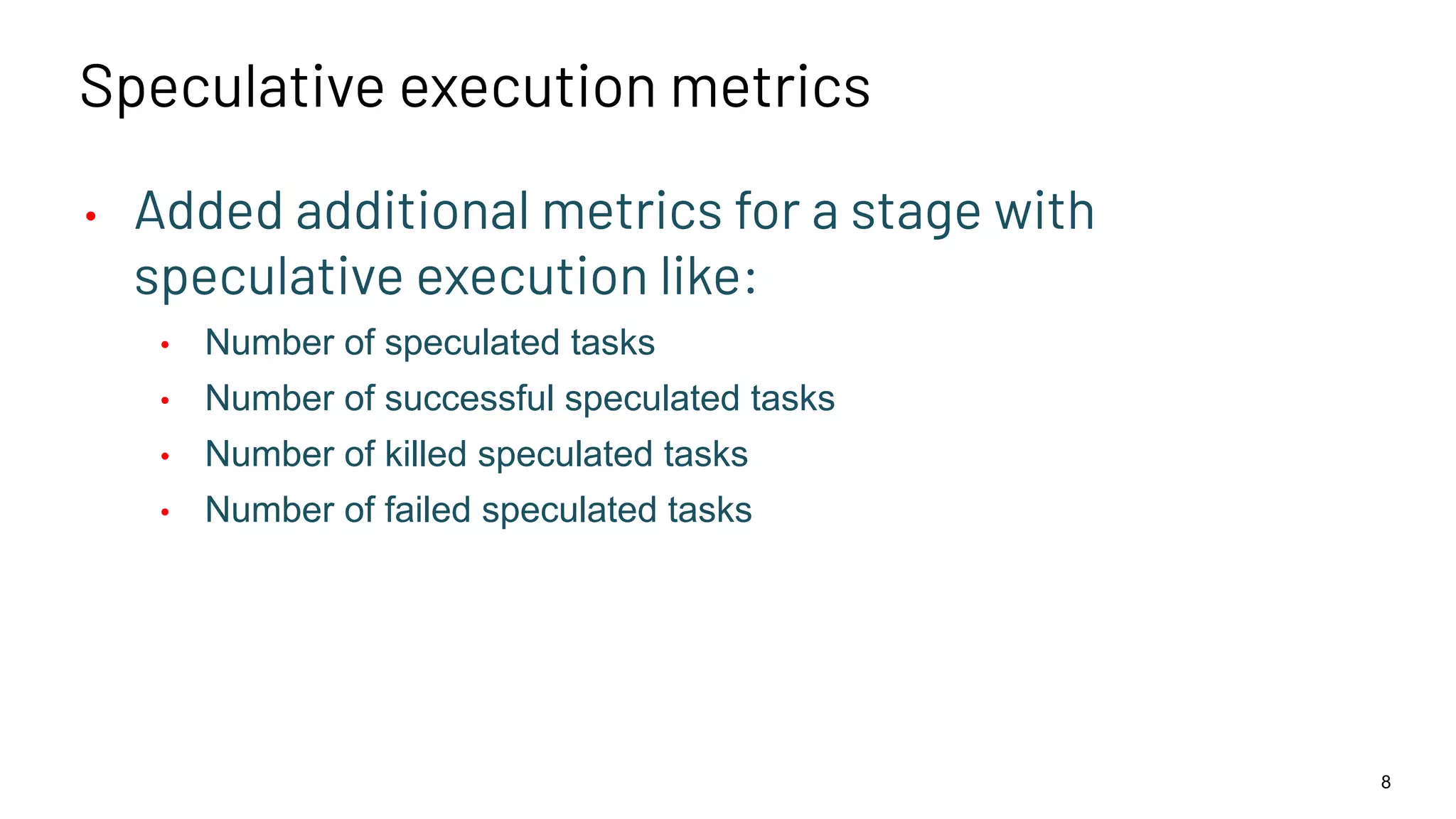

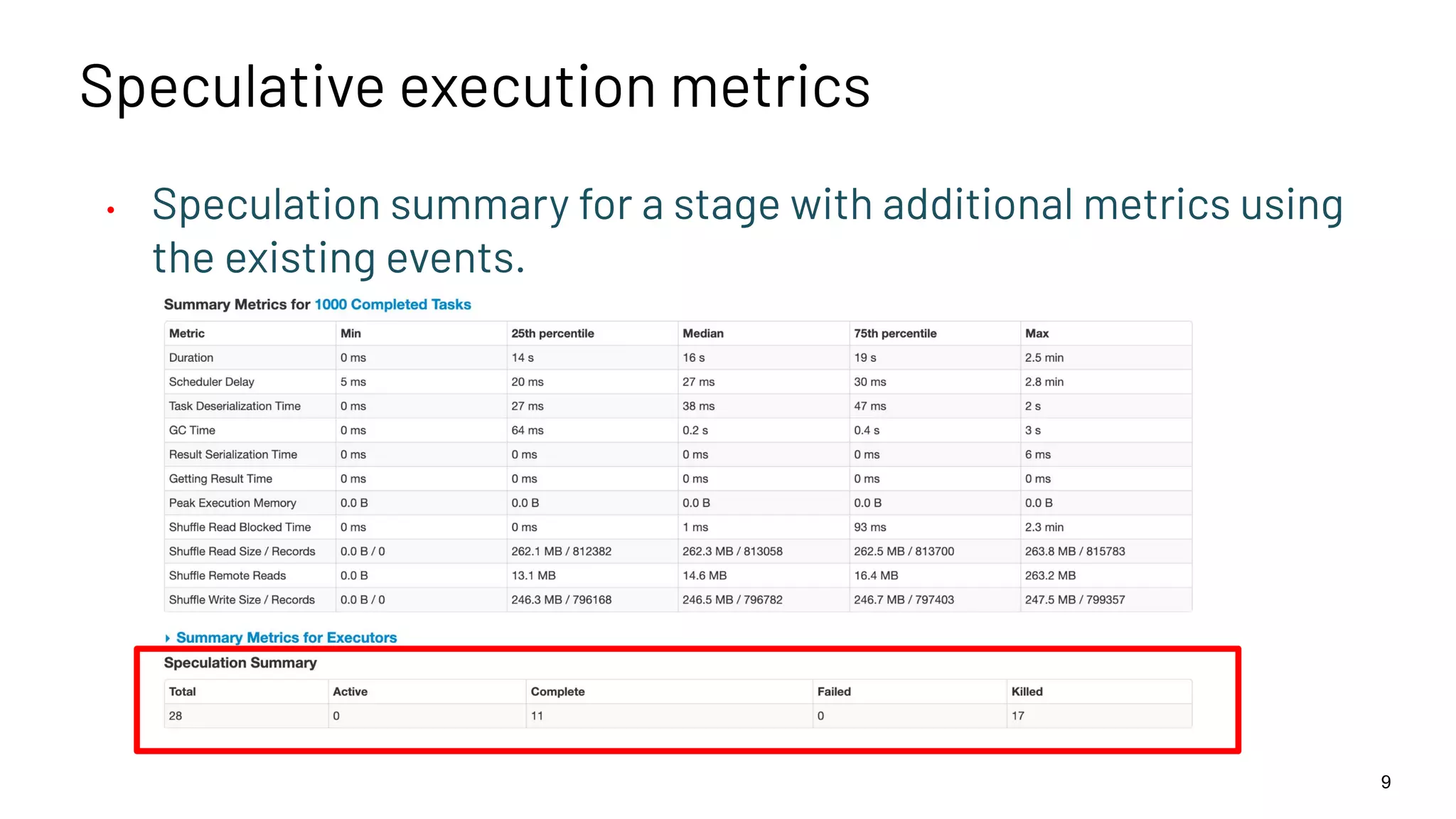

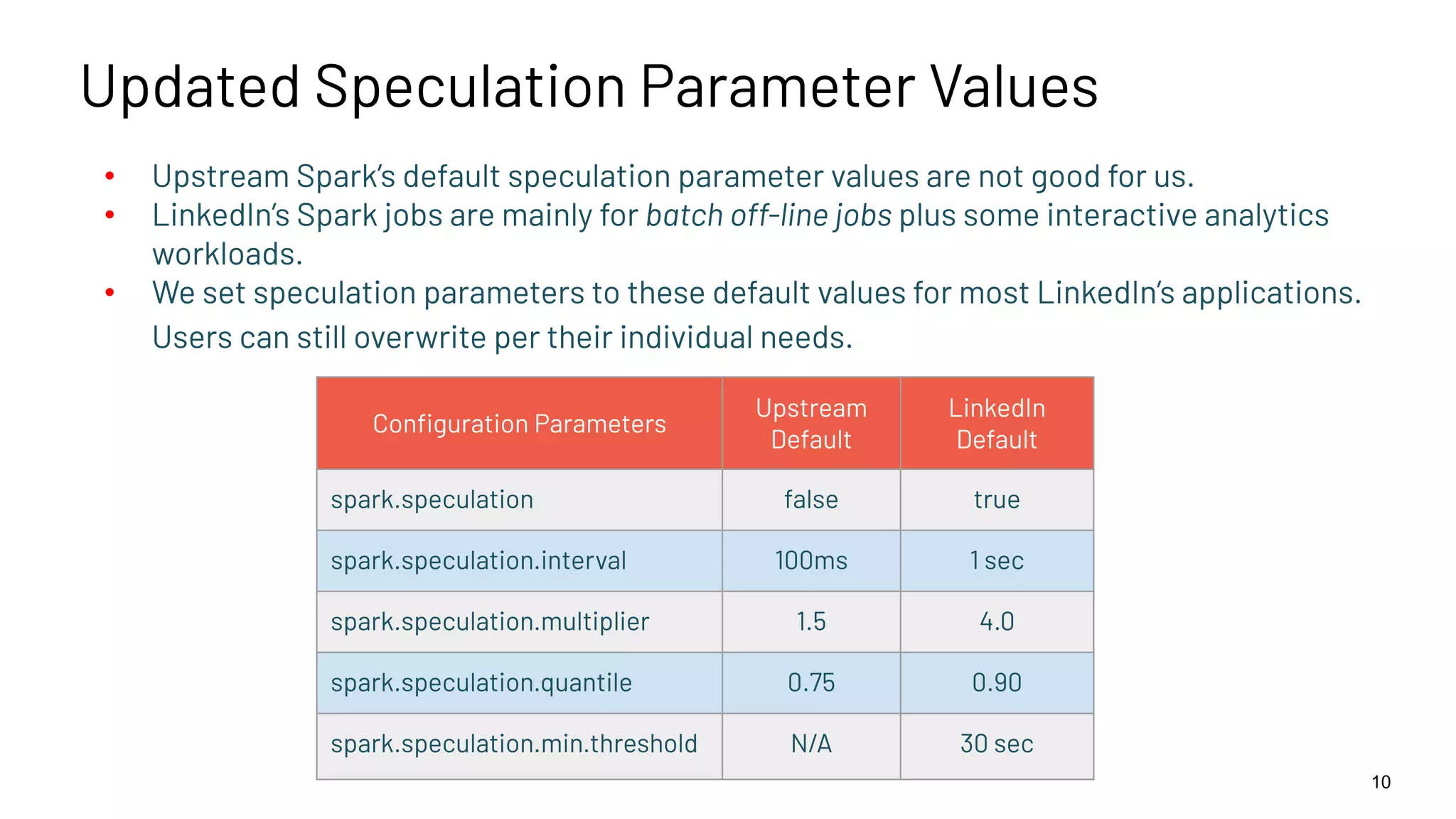

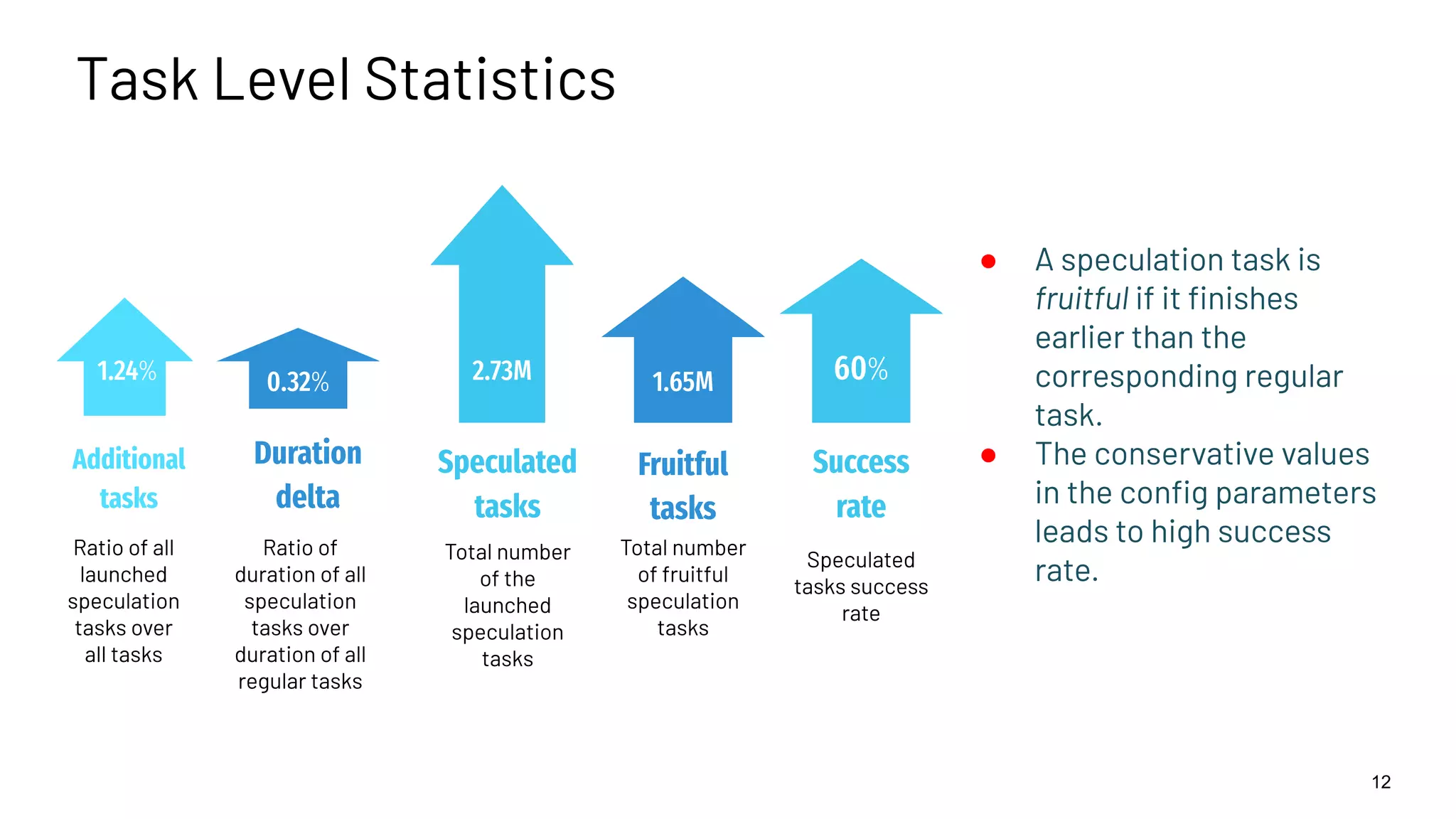

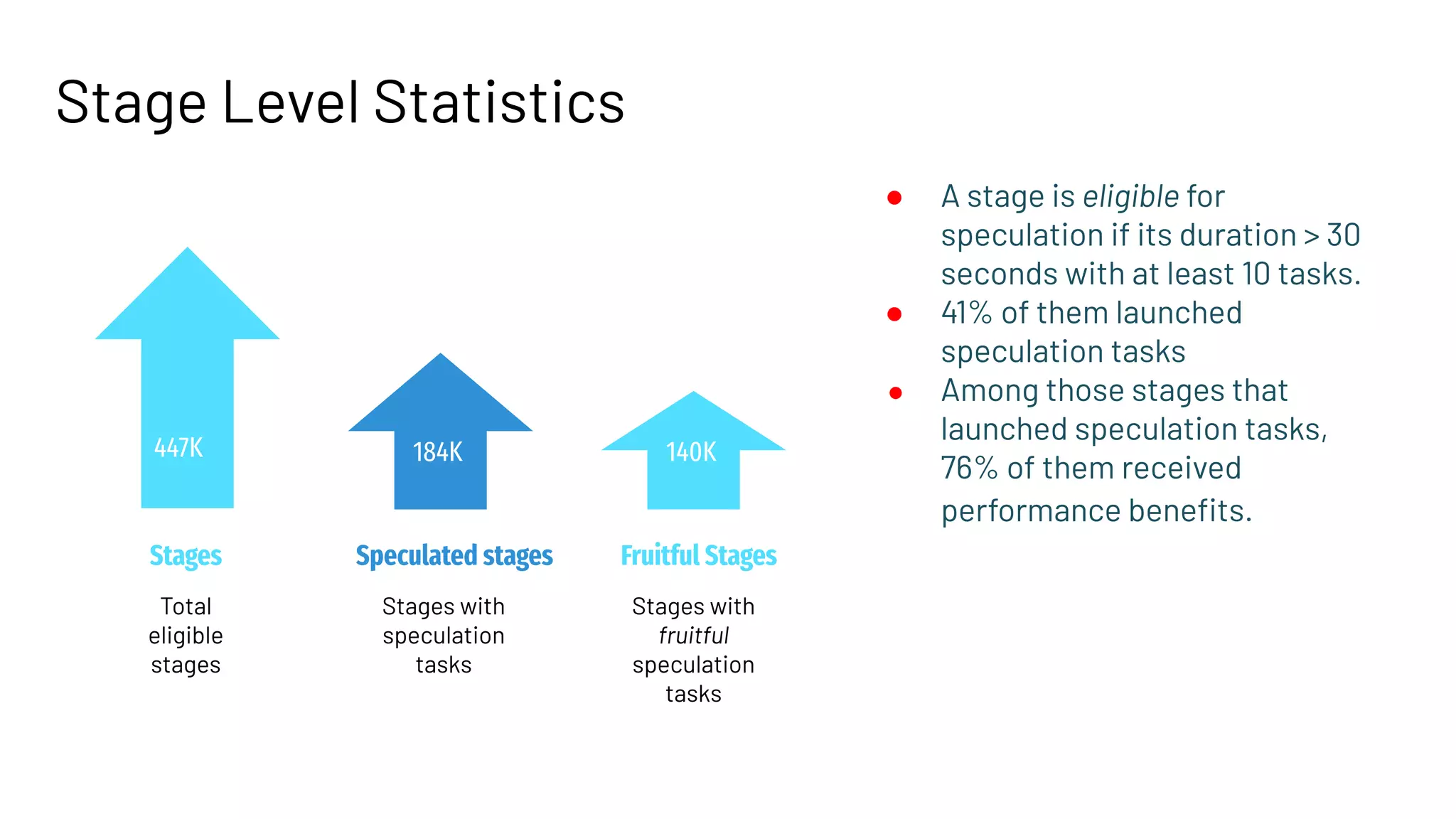

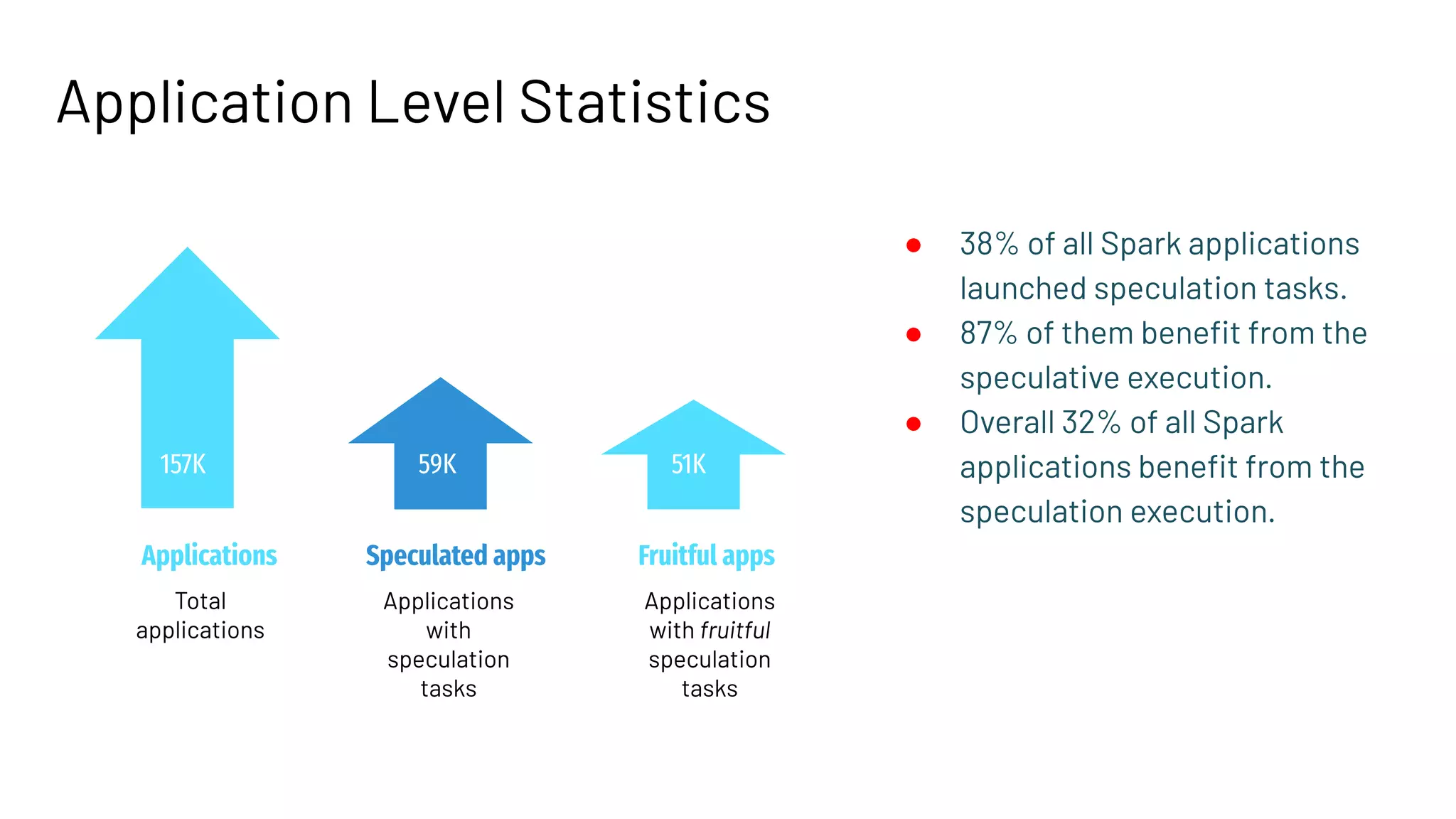

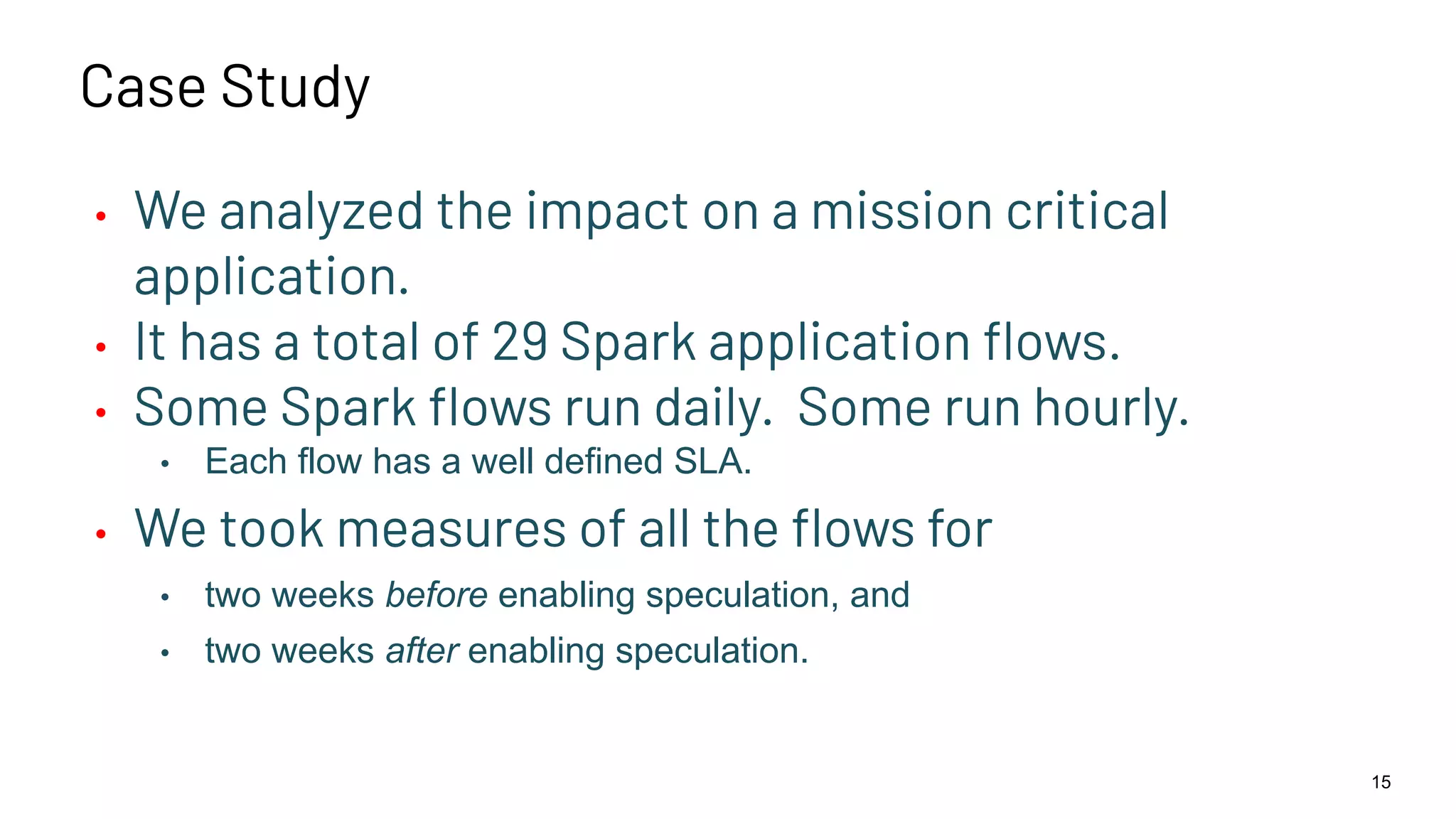

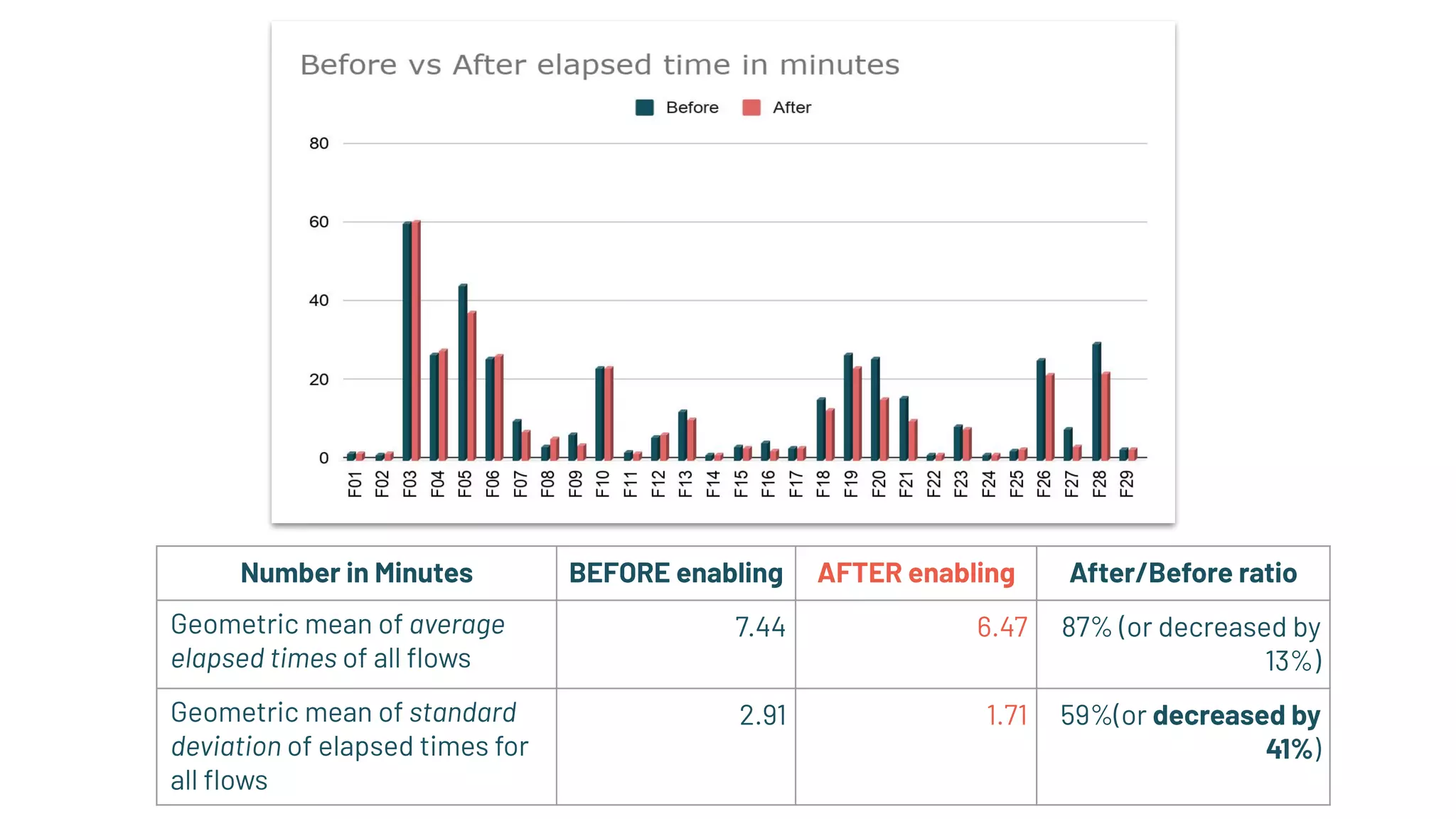

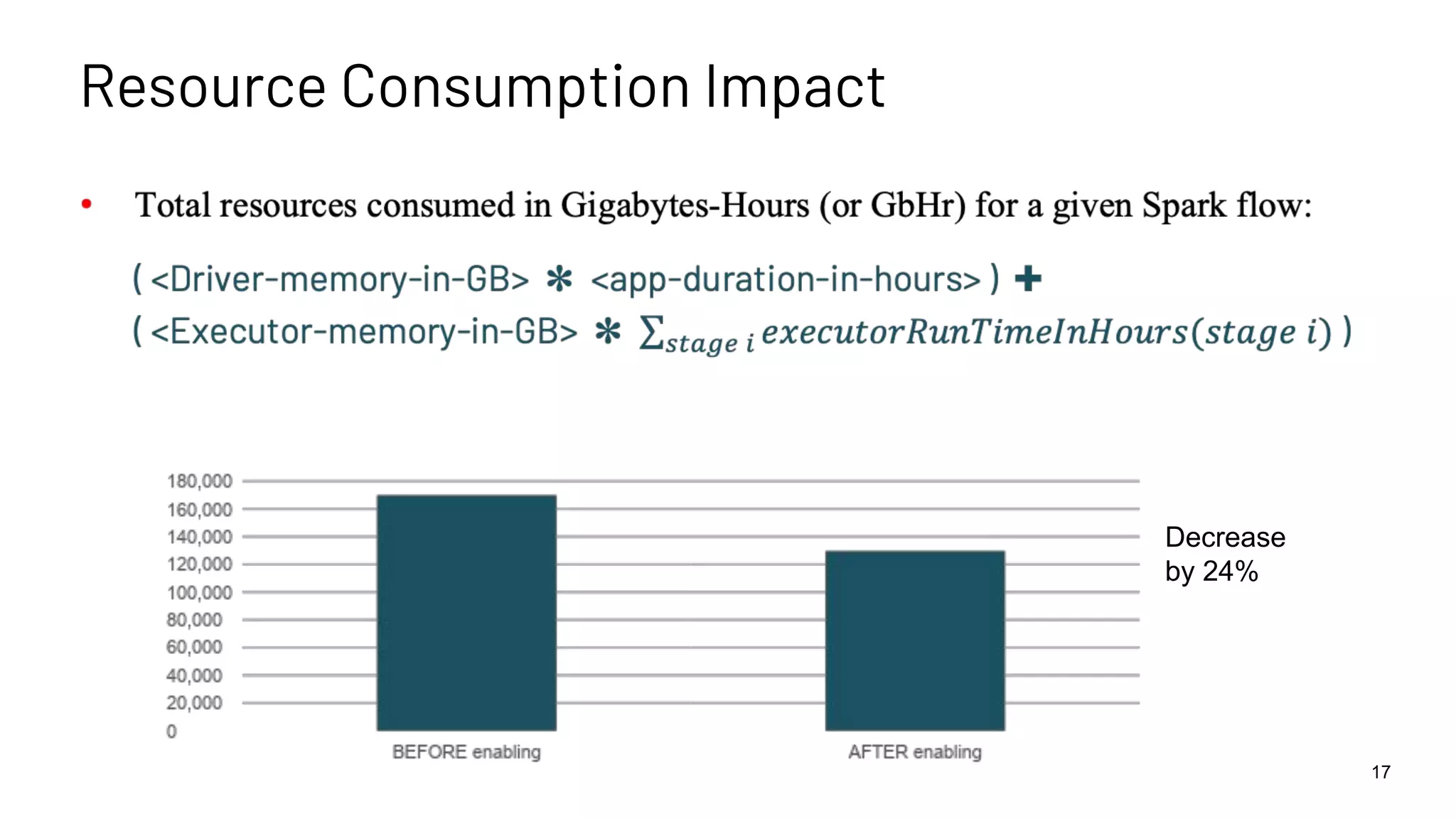

The document discusses best practices for enabling speculative execution on large-scale platforms, drawing on LinkedIn's experience with Apache Spark. It covers the relevant configuration parameters, the motivation for the improvements, and the metrics used to analyze how speculative execution affects task performance and resource utilization. The findings indicate that tuning speculative execution parameters to the workload can improve performance, shorten job completion times, and make system behavior more predictable.
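To show how the configuration parameters interact, here is a minimal Python sketch of the slow-task rule that Spark's scheduler applies on each `spark.speculation.interval` tick when `spark.speculation` is enabled. It is a simplified model under stated assumptions, not Spark's actual code and not the deck's method. It assumes the stock semantics of `spark.speculation.quantile` (default 0.75), `spark.speculation.multiplier` (default 1.5), and `spark.speculation.minTaskRuntime` (default 100 ms). The function `speculatable_tasks` and the `RunningTask` type are hypothetical names introduced for illustration.

```python
from dataclasses import dataclass
from math import floor
from statistics import median


@dataclass
class RunningTask:
    """Hypothetical view of one running task attempt (illustration only)."""
    task_id: int
    runtime_ms: float        # elapsed time since this attempt started
    copies_running: int = 1  # attempts of this task currently running


def speculatable_tasks(num_tasks, successful_durations_ms, running,
                       quantile=0.75, multiplier=1.5, min_task_runtime_ms=100.0):
    """Return ids of running tasks that this simplified rule would speculate.

    Mirrors (in simplified form) spark.speculation.quantile,
    spark.speculation.multiplier and spark.speculation.minTaskRuntime.
    """
    # Do nothing until at least `quantile` of the stage's tasks have succeeded.
    min_finished = max(floor(quantile * num_tasks), 1)
    if len(successful_durations_ms) < min_finished:
        return []
    # A task counts as slow once it has run longer than multiplier x the median
    # successful duration, but never below the minimum-runtime floor.
    threshold = max(multiplier * median(successful_durations_ms), min_task_runtime_ms)
    # Only tasks with a single running attempt get a speculative copy.
    return [t.task_id for t in running
            if t.runtime_ms > threshold and t.copies_running == 1]


if __name__ == "__main__":
    done = [1000.0] * 8  # 8 of 10 tasks finished in 1 s each
    stragglers = [RunningTask(8, 1200.0), RunningTask(9, 4000.0)]
    print(speculatable_tasks(10, done, stragglers))                  # defaults
    print(speculatable_tasks(10, done, stragglers, multiplier=4.0))  # stricter
```

With the defaults the threshold is 1.5 s, so only task 9 is flagged. With a stricter multiplier of 4 (a hypothetical tuned value, not one taken from the deck) the threshold rises to 4 s and nothing is launched. This illustrates how tightening these parameters trades fewer wasted speculative attempts against slower reaction to genuine stragglers.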